Q-Learning and Pontryagin's Minimum Principle

Transcript of Q-Learning and Pontryagin's Minimum Principle

Sean Meyn

Department of Electrical and Computer Engineering and the Coordinated Science Laboratory, University of Illinois

Joint work with Prashant Mehta

NSF support: ECS-0523620

Outline

Coarse models - what to do with them?

Q-learning for nonlinear state space models

Step 1: Recognize, Step 2: Find a stab..., Step 3: Optimality, Step 4: Adjoint, Step 5: Interpret

Example: Local approximation

Example: Decentralized control

Coarse Models: A rich collection of model reduction techniques

Many of today’s participants have contributed to this research. A biased list:

Fluid models: Law of Large Numbers scaling, most likely paths in large deviations

Clustering: spectral graph theory, Markov spectral theory

Large population limits: Interacting particle systems

Singular perturbations

Workload relaxation for networks, heavy-traffic limits

Workload Relaxations

An example from CTCN:

Workload at two stations evolves as a two-dimensional system. Cost is projected onto these coordinates:

w2w2

-20 -10 0 10 20 30 40 50

-20

-10

0

10

20

30

40

50

-20 -10 0 10 20 30 40 50

-20

-10

0

10

20

30

40

50

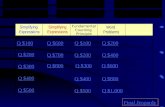

R∗RSTO RSTO R∗

w1w1

−(1− ρ)−(1− ρ)

Figure 7.1: Demand-driven model with routing, scheduling, and re-work.

Optimal policy for relaxation = hedging policy for full network

Figure 7.2: Optimal policies for two instances of the network shown in Figure 7.1. In each figure the optimal stochastic control region RSTO is compared with the optimal region R∗ obtained for the two dimensional fluid model.

Workload Relaxations and Simulation

An example from CTCN:

DP and simulations accelerated using fluid value function for workload relaxation

Decision making at stations 1 & 2, e.g., setting safety-stock levels

[Figure: average cost versus iteration (50 to 300) for VIA initialized with zero and with the fluid value function.]

[Figure: simulated mean with and without control variate, plotted against the safety-stock levels at Station 1 and Station 2.]

What To Do With a Coarse Model?

Setting: we have qualitative or partial quantitative insight regarding optimal control

The network examples relied on specific network structure

What about other models?

An answer lies in a new formulation of Q-learning

What is Q learning?

Watkins’ 1992 formulation applied to finite state space MDPs

Q-Learning. C. J. C. H. Watkins and P. Dayan. Machine Learning, 1992

Idea is similar to Mayne & Jacobson’s differential dynamic programming

Differential dynamic programming. D. H. Jacobson and D. Q. Mayne. American Elsevier Pub. Co., 1970

Deterministic formulation: Nonlinear system on Euclidean space,

$$\frac{d}{dt} x(t) = f(x(t), u(t)), \qquad t \ge 0$$

Infinite-horizon discounted cost criterion,

$$J^*(x) = \inf \int_0^\infty e^{-\gamma s}\, c(x(s), u(s))\, ds, \qquad x(0) = x$$

with c a non-negative cost function.

Differential generator: For any smooth function h,

$$\mathcal{D}_u h\,(x) := (\nabla h(x))^T f(x, u)$$

HJB equation:

$$\min_u \bigl( c(x,u) + \mathcal{D}_u J^*(x) \bigr) = \gamma J^*(x)$$

The Q-function of Q-learning is this function of two variables: the expression $c(x,u) + \mathcal{D}_u J^*(x)$ inside the minimum.
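The HJB equation and the Q-function can be checked by hand in the simplest case. The sketch below assumes a scalar linear model dx/dt = a x + b u with cost c(x,u) = q x²/2 + r u²/2, for which J*(x) = p x²/2; the model, the function names, and the numerical values are illustrative assumptions and do not come from the talk.

```python
import math

def solve_scalar_hjb(a, b, q, r, gamma):
    """Return p > 0 such that J*(x) = 0.5*p*x**2 solves the discounted HJB equation
    for dx/dt = a*x + b*u with cost c(x,u) = 0.5*q*x**2 + 0.5*r*u**2 (assumed model)."""
    # Substituting J* into the HJB equation gives (b^2/r) p^2 - (2a - gamma) p - q = 0.
    a2 = b * b / r
    a1 = -(2.0 * a - gamma)
    return (-a1 + math.sqrt(a1 * a1 + 4.0 * a2 * q)) / (2.0 * a2)

def q_function(x, u, a, b, q, r, p):
    """H*(x,u) = c(x,u) + D_u J*(x), where D_u J*(x) = J*'(x) * f(x,u) = p*x*(a*x + b*u)."""
    cost = 0.5 * q * x * x + 0.5 * r * u * u
    return cost + p * x * (a * x + b * u)

def hjb_residual(x, a, b, q, r, gamma, p):
    """min_u H*(x,u) - gamma*J*(x); the minimizer is u* = -(b*p/r)*x."""
    u_star = -(b * p / r) * x
    return q_function(x, u_star, a, b, q, r, p) - gamma * 0.5 * p * x * x

# Hypothetical numbers: stable plant a = -1, unit input gain and weights, discount 0.1.
p = solve_scalar_hjb(a=-1.0, b=1.0, q=1.0, r=1.0, gamma=0.1)
residuals = [hjb_residual(x, -1.0, 1.0, 1.0, 1.0, 0.1, p) for x in (-2.0, 0.5, 3.0)]
```

With these numbers the quadratic reduces to p² + 2.1 p − 1 = 0, so p = 0.4 up to rounding, and the residuals vanish to floating-point precision.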

Q learning - Steps towards an algorithm

Goal - seek the best approximation, within a parameterized class

Sequence of five steps:

Step 1: Recognize fixed point equation for the Q-function

Step 2: Find a stabilizing policy that is ergodic

Step 3: Optimality criterion - minimize Bellman error

Step 4: Adjoint operation

Step 5: Interpret and simulate!

Step 1: Recognize fixed point equation for the Q-function

Q-function:

$$H^*(x,u) = c(x,u) + \mathcal{D}_u J^*(x)$$

Its minimum:

$$\underline{H}^*(x) := \min_{u \in U} H^*(x,u) = \gamma J^*(x)$$

Fixed point equation:

$$\mathcal{D}_u \underline{H}^*(x) = -\gamma \bigl( c(x,u) - H^*(x,u) \bigr)$$

Key observation for learning: For any input-output pair,

$$\mathcal{D}_u \underline{H}^*(x) = \frac{d}{dt} \underline{H}^*(x(t)) \Big|_{x = x(t),\ u = u(t)}$$
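The fixed point equation follows in a few lines from the Q-function, its minimum, and the HJB equation. The derivation below is a sketch that uses only linearity of the generator; writing the minimum with an underline is an assumed notation, chosen to separate the one-variable minimum from the two-variable Q-function.

```latex
\begin{aligned}
\underline{H}^*(x) &= \gamma J^*(x)
  && \text{(HJB equation)} \\
\mathcal{D}_u \underline{H}^*(x) &= \gamma\, \mathcal{D}_u J^*(x)
  && \text{(linearity of } \mathcal{D}_u \text{)} \\
&= \gamma \bigl( H^*(x,u) - c(x,u) \bigr)
  && \text{(since } H^* = c + \mathcal{D}_u J^* \text{)} \\
&= -\gamma \bigl( c(x,u) - H^*(x,u) \bigr).
\end{aligned}
```

The right-hand side involves only the cost and the Q-function, which is why the equation can serve as a model-free consistency condition on a candidate Q-function.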

Q learning - LQR example

Linear model and quadratic cost,

Cost:

$$c(x,u) = \tfrac12 x^T Q x + \tfrac12 u^T R u$$

Q-function:

$$H^*(x,u) = c(x,u) + \mathcal{D}_u J^*(x) = c(x,u) + (Ax + Bu)^T P^* x, \qquad J^*(x) = \tfrac12 x^T P^* x$$

where $P^*$ solves the Riccati equation.

Q-function approx:

$$H^\theta(x,u) = c(x,u) + \tfrac12 \sum_{i=1}^{d_x} \theta^x_i\, x^T E^i x + \sum_{j=1}^{d_{xu}} \theta^{xu}_j\, x^T F^j u$$

Minimum:

$$\underline{H}^\theta(x) = \tfrac12 x^T \bigl( Q + E^\theta - F^{\theta T} R^{-1} F^\theta \bigr) x$$

Minimizer:

$$\phi^\theta(x) = u^\theta(x) = -R^{-1} F^\theta x$$
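The minimizer and minimum of a quadratic Q-function approximation can be verified numerically. The sketch below assumes the cross term is written as uᵀFx, so that the minimizer takes the form −R⁻¹Fx shown on the slide; the matrices E and F stand in for the θ-weighted sums of basis matrices, and all numerical values are hypothetical.

```python
import numpy as np

def h_theta(x, u, Q, R, E, F):
    """Quadratic Q-function approximation H(x,u) = c(x,u) + 0.5 x'Ex + u'Fx."""
    cost = 0.5 * x @ Q @ x + 0.5 * u @ R @ u
    return cost + 0.5 * x @ E @ x + u @ F @ x

def minimizer(x, R, F):
    """phi(x) = -R^{-1} F x, obtained from the first-order condition R u + F x = 0."""
    return -np.linalg.solve(R, F @ x)

def h_theta_min(x, Q, R, E, F):
    """Closed-form minimum 0.5 x'(Q + E - F' R^{-1} F) x."""
    M = Q + E - F.T @ np.linalg.solve(R, F)
    return 0.5 * x @ M @ x

# Hypothetical instance with two states and one input.
Q = np.eye(2)
R = np.array([[2.0]])
E = np.array([[0.3, 0.1], [0.1, -0.2]])
F = np.array([[0.5, -1.0]])
x = np.array([1.0, -2.0])

u_star = minimizer(x, R, F)
value_at_minimizer = h_theta(x, u_star, Q, R, E, F)
closed_form = h_theta_min(x, Q, R, E, F)
```

The two values agree because R is positive definite, so the quadratic in u has a unique minimizer; any perturbation d of the input adds dᵀRd/2 to the value.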

Q learning - Steps towards an algorithm

Step 2: Stationary policy that is ergodic?

Assume the LLN holds for continuous functions $F : \mathbb{R}^{\ell} \times \mathbb{R}^{\ell_u} \to \mathbb{R}$. As $T \to \infty$,

$$\frac{1}{T} \int_0^T F(x(t), u(t))\, dt \longrightarrow \int_{X \times U} F(x,u)\, \varpi(dx, du)$$

where $\varpi$ denotes the limiting (ergodic) measure on $X \times U$.

Suppose for example the input is scalar, and the system is stable [Bounded-input/Bounded-state]

Can try a linear combination of sinusoids:

$$u(t) = A \bigl( \sin(t) + \sin(\pi t) + \sin(e t) \bigr)$$
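The law-of-large-numbers property can be illustrated for the probing input alone. The sketch below approximates time averages of functions of u(t) by a Riemann sum; the function names, horizon, and step size are assumptions made for the illustration. Because the frequencies 1, π, and e are pairwise incommensurate, the time average of u tends to 0 and the time average of u² tends to 3A²/2.

```python
import math

def probing_input(t, amplitude=1.0):
    """u(t) = A (sin t + sin(pi t) + sin(e t))."""
    return amplitude * (math.sin(t) + math.sin(math.pi * t) + math.sin(math.e * t))

def time_average(func, horizon, dt=0.01, amplitude=1.0):
    """Left Riemann-sum approximation of (1/T) * integral_0^T func(u(t)) dt."""
    n = int(round(horizon / dt))
    total = 0.0
    for k in range(n):
        total += func(probing_input(k * dt, amplitude))
    return total / n

mean_u = time_average(lambda u: u, horizon=2000.0)
mean_square_u = time_average(lambda u: u * u, horizon=2000.0)
```

The same averaging applies to functions of the state once the input is passed through a bounded-input/bounded-state stable system, which is the setting of this step.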

Step 3: Bellman error

Based on observations, minimize the mean-square Bellman error:

$$\mathcal{E}(\theta) := \tfrac12 \|\mathcal{L}^\theta\|^2 = \tfrac12 \langle \mathcal{L}^\theta, \mathcal{L}^\theta \rangle, \qquad \mathcal{L}^\theta := \mathcal{D}_u \underline{H}^\theta + \gamma (c - H^\theta)$$

First order condition for optimality:

$$\langle \mathcal{L}^\theta,\ \mathcal{D}_u \underline{\psi}^\theta_i - \gamma \psi^\theta_i \rangle = 0, \qquad 1 \le i \le d,$$

with $\psi^\theta_i = \partial H^\theta / \partial \theta_i$ and $\underline{\psi}^\theta_i(x) = \psi^\theta_i(x, \phi^\theta(x))$.
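The first order condition can be recovered by differentiating the mean-square Bellman error with respect to each parameter. The sketch below assumes that the cost c does not depend on θ and that the minimizer φ^θ(x) is an interior, differentiable minimizer, so that an envelope argument applies; ψ with a subscript i denotes the partial derivative of H^θ with respect to θ_i.

```latex
\begin{aligned}
\frac{\partial}{\partial \theta_i} \tfrac12 \|\mathcal{L}^\theta\|^2
  &= \Bigl\langle \mathcal{L}^\theta,\ \frac{\partial \mathcal{L}^\theta}{\partial \theta_i} \Bigr\rangle, \\
\frac{\partial \mathcal{L}^\theta}{\partial \theta_i}
  &= \mathcal{D}_u \frac{\partial \underline{H}^\theta}{\partial \theta_i}
     - \gamma \frac{\partial H^\theta}{\partial \theta_i}
   = \mathcal{D}_u \underline{\psi}^\theta_i - \gamma\, \psi^\theta_i, \\
\frac{\partial \underline{H}^\theta}{\partial \theta_i}(x)
  &= \psi^\theta_i\bigl(x, \phi^\theta(x)\bigr) = \underline{\psi}^\theta_i(x)
  \quad \text{(envelope argument: } \partial_u H^\theta(x, \phi^\theta(x)) = 0 \text{)}.
\end{aligned}
```

Setting the first line to zero for each i gives the stated condition.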

Q learning - Convex Reformulation

Step 3: Bellman error

Based on observations, minimize the mean-square Bellman error $\langle \mathcal{L}^\theta, \mathcal{L}^\theta \rangle$, with the minimum $\underline{H}^\theta$ replaced by an approximation $G^\theta$ that is subject to the constraint

$$G^\theta(x) \le H^\theta(x,u), \qquad \text{all } x, u$$

Q learning - LQR example

Linear model and quadratic cost,

Cost:

$$c(x,u) = \tfrac12 x^T Q x + \tfrac12 u^T R u$$

Q-function:

$$H^*(x,u) = c(x,u) + (Ax + Bu)^T P^* x$$

where $P^*$ solves the Riccati equation.

Q-function approx:

$$H^\theta(x,u) = c(x,u) + \tfrac12 \sum_{i=1}^{d_x} \theta^x_i\, x^T E^i x + \sum_{j=1}^{d_{xu}} \theta^{xu}_j\, x^T F^j u$$

Approximation to minimum:

$$G^\theta(x) = \tfrac12 x^T G^\theta x$$

Minimizer:

$$\phi^\theta(x) = u^\theta(x) = -R^{-1} F^\theta x$$

Q learning - Steps towards an algorithm

Step 4: Causal smoothing to avoid differentiation

For any function of two variables, $g : \mathbb{R}^{\ell} \times \mathbb{R}^{\ell_w} \to \mathbb{R}$, the resolvent gives a new function,

$$R_\beta g\,(x,w) = \int_0^\infty e^{-\beta t} g(x(t), \xi(t))\, dt, \qquad \beta > 0,$$

controlled using the nominal policy

$$u(t) = \phi(x(t), \xi(t)), \qquad t \ge 0,$$

which is stabilizing & ergodic.

Resolvent equation:

$$R_\beta \mathcal{D}_u = \beta R_\beta - I$$

Smoothed Bellman error:

$$\mathcal{L}^{\theta,\beta} = R_\beta \mathcal{L}^\theta = [\beta R_\beta - I]\, \underline{H}^\theta + \gamma R_\beta (c - H^\theta)$$
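The identity behind causal smoothing, that the resolvent applied to a time derivative equals β times the resolvent minus the identity, can be checked numerically on a trajectory known in closed form. The sketch below assumes the hypothetical system dx/dt = −x with no probing signal, so x(t) = x0·e^(−t), and uses h(x) = x²; the integral is truncated at a finite horizon and approximated by the trapezoidal rule.

```python
import math

def resolvent(g_along_path, beta, horizon=40.0, dt=1e-3):
    """Trapezoidal approximation of integral_0^inf exp(-beta*t) * g(t) dt,
    where g_along_path(t) is the function g evaluated along the trajectory."""
    n = int(round(horizon / dt))
    total = 0.0
    for k in range(n + 1):
        t = k * dt
        weight = 0.5 if k in (0, n) else 1.0
        total += weight * math.exp(-beta * t) * g_along_path(t)
    return total * dt

x0, beta = 1.5, 0.5

def h_path(t):
    """h(x(t)) = x(t)**2 along x(t) = x0 * exp(-t)."""
    return (x0 * math.exp(-t)) ** 2

def dh_path(t):
    """d/dt h(x(t)) = 2 x(t) * dx/dt = -2 x(t)**2."""
    return -2.0 * (x0 * math.exp(-t)) ** 2

lhs = resolvent(dh_path, beta)                    # R_beta applied to the derivative
rhs = beta * resolvent(h_path, beta) - h_path(0)  # (beta R_beta - I) applied to h
```

Both sides equal −2·x0²/(β + 2) for this trajectory, so the derivative of the minimum never has to be computed from data: it is replaced by a filtered version of the function itself.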

Smoothed Bellman error:

$$\mathcal{E}_\beta(\theta) := \tfrac12 \|\mathcal{L}^{\theta,\beta}\|^2$$

$$\nabla \mathcal{E}_\beta(\theta) = \langle \mathcal{L}^{\theta,\beta},\ \nabla_\theta \mathcal{L}^{\theta,\beta} \rangle = \text{zero at an optimum}$$

Involves terms of the form $\langle R_\beta g, R_\beta h \rangle$

Adjoint operation:

$$R_\beta^\dagger R_\beta = \frac{1}{2\beta} \bigl( R_\beta^\dagger + R_\beta \bigr)$$

$$\langle R_\beta g, R_\beta h \rangle = \frac{1}{2\beta} \bigl( \langle g, R_\beta^\dagger h \rangle + \langle h, R_\beta^\dagger g \rangle \bigr)$$

Adjoint realization: time-reversal

$$R_\beta^\dagger g\,(x,w) = \int_0^\infty e^{-\beta t}\, \mathsf{E}_{x,w} \bigl[ g(x^\circ(-t), \xi^\circ(-t)) \bigr]\, dt$$

expectation conditional on $x^\circ(0) = x$, $\xi^\circ(0) = w$.
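The inner-product formula is a direct consequence of the operator identity for the adjoint. The short derivation below is a sketch that assumes a real inner product, so that ⟨g, R h⟩ = ⟨R†g, h⟩ = ⟨h, R†g⟩.

```latex
\begin{aligned}
\langle R_\beta g, R_\beta h \rangle
  &= \langle g, R_\beta^\dagger R_\beta h \rangle \\
  &= \frac{1}{2\beta} \bigl( \langle g, R_\beta^\dagger h \rangle + \langle g, R_\beta h \rangle \bigr) \\
  &= \frac{1}{2\beta} \bigl( \langle g, R_\beta^\dagger h \rangle + \langle h, R_\beta^\dagger g \rangle \bigr).
\end{aligned}
```

Each term on the last line needs only a single application of the adjoint, which is what the time-reversed realization provides.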

After Step 5: Not quite adaptive control:

Ergodic input applied

Based on observations minimize the mean-square Bellman error:

[Diagram: a complex system with inputs and outputs; desired behavior and measured behavior feed a "Compare and learn" block.]

$$\frac{d}{dt} h(x(t)) \Big|_{x = x(t),\ w = \xi(t)} = \mathcal{D}_u h\,(x)$$

Deterministic Stochastic Approximation

Gradient descent:

$$\frac{d}{dt} \theta = -\varepsilon\, \langle \mathcal{L}^\theta,\ \mathcal{D}_u \nabla_\theta \underline{H}^\theta - \gamma \nabla_\theta H^\theta \rangle$$

Converges* to the minimizer of the mean-square Bellman error.

* Convergence observed in experiments! For a convex re-formulation of the problem, see Mehta & Meyn 2009

$$\frac{d}{dt} h(x(t)) \Big|_{x = x(t),\ w = \xi(t)} = \mathcal{D}_u h\,(x)$$

Stochastic Approximation:

$$\frac{d}{dt} \theta = -\varepsilon_t\, \mathcal{L}^\theta_t \Bigl( \frac{d}{dt} \nabla_\theta \underline{H}^\theta(x^\circ(t)) - \gamma \nabla_\theta H^\theta(x^\circ(t), u^\circ(t)) \Bigr)$$

Bellman error along the sample path:

$$\mathcal{L}^\theta_t := \frac{d}{dt} \underline{H}^\theta(x^\circ(t)) + \gamma \bigl( c(x^\circ(t), u^\circ(t)) - H^\theta(x^\circ(t), u^\circ(t)) \bigr)$$
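The stochastic approximation recursion can be sketched for a scalar linear model. Everything specific below is an assumption made for illustration: the model dx/dt = a x + b u with a = −1 and b = 1, the cost c(x,u) = x²/2 + u²/2, the two-parameter family H(x,u) = c(x,u) + θ₁x² + θ₂xu, a constant gain, and an Euler discretization of the ODE. For this family the minimum over u is (1/2 + θ₁ − θ₂²/2)x², and the Bellman error vanishes identically at θ* = (a p, b p), where J*(x) = p x²/2.

```python
import math

A_SYS, B_SYS, GAMMA = -1.0, 1.0, 0.1  # hypothetical model and discount rate

def bellman_error(theta, x, u):
    """L = d/dt Hmin(x(t)) + gamma * (c - H) for H = c + th1*x^2 + th2*x*u."""
    th1, th2 = theta
    xdot = A_SYS * x + B_SYS * u
    slope = 1.0 + 2.0 * th1 - th2 * th2  # d/dx Hmin(x) = slope * x
    return slope * x * xdot - GAMMA * (th1 * x * x + th2 * x * u)

def bellman_error_grad(theta, x, u):
    """d/dt grad_theta Hmin(x(t)) - gamma * grad_theta H(x(t), u(t))."""
    _, th2 = theta
    xdot = A_SYS * x + B_SYS * u
    return (2.0 * x * xdot - GAMMA * x * x,
            -2.0 * th2 * x * xdot - GAMMA * x * u)

def run_q_learning(horizon=200.0, dt=0.01, gain=1.0, amplitude=0.3):
    """Euler discretization of d(theta)/dt = -gain * L_t * grad_t under the probing input."""
    theta = [0.0, 0.0]
    x = 0.0
    for k in range(int(round(horizon / dt))):
        t = k * dt
        u = amplitude * (math.sin(t) + math.sin(math.pi * t) + math.sin(math.e * t))
        err = bellman_error(theta, x, u)
        g1, g2 = bellman_error_grad(theta, x, u)
        theta[0] -= dt * gain * err * g1
        theta[1] -= dt * gain * err * g2
        x += dt * (A_SYS * x + B_SYS * u)  # open-loop state update
    return theta

theta_star = (A_SYS * 0.4, B_SYS * 0.4)  # p = 0.4 solves p^2 + 2.1 p - 1 = 0
```

The only property this sketch establishes is that the Bellman error is zero at θ* for every pair (x, u). Whether and how quickly the iterates returned by `run_q_learning` approach θ* depends on the gain, the input amplitude, and the horizon, none of which are tuned here.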

Q learning - Local Learning

Cubic nonlinearity:

$$\frac{d}{dt} x = -x^3 + u, \qquad c(x,u) = \tfrac12 x^2 + \tfrac12 u^2$$

HJB:

$$\min_u \bigl( \tfrac12 x^2 + \tfrac12 u^2 + (-x^3 + u) \nabla J^*(x) \bigr) = \gamma J^*(x)$$

Basis:

$$H^\theta(x,u) = c(x,u) + \theta^x x^2 + \theta^{xu} \frac{x}{1 + 2x^2}\, u$$

Input:

$$u(t) = A \bigl( \sin(t) + \sin(\pi t) + \sin(e t) \bigr)$$

[Figure: two panels, "Low amplitude input" and "High amplitude input", each drawn over the range −1 to 1 and marking the optimal policy.]
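Why the amplitude of the input matters for local learning can be seen by simulating the cubic system. The sketch below uses an Euler discretization and records the largest state magnitude visited; the amplitudes 0.1 and 2.0, the horizon, and the function names are illustrative assumptions, since the slide does not give the values used. It also evaluates the policy obtained by minimizing the basis expression over u.

```python
import math

def simulate_cubic(amplitude, horizon=50.0, dt=1e-3, x0=0.0):
    """Euler simulation of dx/dt = -x**3 + u(t); returns the largest |x| visited."""
    x = x0
    max_abs = abs(x0)
    for k in range(int(round(horizon / dt))):
        t = k * dt
        u = amplitude * (math.sin(t) + math.sin(math.pi * t) + math.sin(math.e * t))
        x += dt * (-x ** 3 + u)
        max_abs = max(max_abs, abs(x))
    return max_abs

def policy(x, theta_xu):
    """Minimizer over u of 0.5*u**2 + theta_xu * x / (1 + 2*x**2) * u."""
    return -theta_xu * x / (1.0 + 2.0 * x * x)

low_range = simulate_cubic(amplitude=0.1)   # explores a small neighborhood of the origin
high_range = simulate_cubic(amplitude=2.0)  # explores a wider range of states
```

Since |u(t)| ≤ 3A, a state started at the origin stays within the cube root of 3A, so a low-amplitude input only produces data near the origin and the fitted parameters describe the Q-function locally.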

Multi-agent model

M. Huang, P. E. Caines, and R. P. Malhame. Large-population cost-coupled LQG problems with nonuniform agents: Individual-mass behavior and decentralized ε-Nash equilibria. IEEE Trans. Auto. Control, 52(9):1560–1571, 2007.

Huang et al.: Local optimization for global coordination

Model: Linear autonomous models - global cost objective

HJB: Individual state + global average

Basis: Consistent with low dimensional LQG model

Results from five agent model:

Estimated state feedback gains

[Figure: gains for agent 4 versus time; Q-learning sample paths for the individual-state and ensemble-state gains, together with the gains predicted from the ∞-agent limit.]

Conclusions

Coarse models give tremendous insight

They are also tremendously useful for design in approximate dynamic programming algorithms

Q-learning is as fundamental as the Riccati equation - this should be included in our first-year graduate control courses

Current research:

Algorithm analysis and improvements

Applications in biology and economics

Analysis of game-theoretic issues in coupled systems

References

. PhD thesis, University of London, London, England, 1967.

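D. H. Jacobson and D. Q. Mayne. Differential dynamic programming. American Elsevier Pub. Co., New York, NY, 1970.

C. J. C. H. Watkins. Learning from Delayed Rewards. PhD thesis, King’s College, Cambridge, UK, 1989.

C. J. C. H. Watkins and P. Dayan. Q-learning. Machine Learning, 8(3-4):279–292, 1992.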

SIAM J. Control Optim., 38(2):447–469, 2000.

on policy iteration. Automatica, 45(2):477 – 484, 2009.

Submitted to the 48th IEEE Conference on Decision and Control, December 16-18 2009.

[9] C. Moallemi, S. Kumar, and B. Van Roy. Approximate and data-driven dynamic programming for queueing networks. Preprint available at http://moallemi.com/ciamac/research-interests.php, 2008.