Performance Analysis of CNN Frameworks for · PDF filemachine learning tasks such as visual...

Transcript of Performance Analysis of CNN Frameworks for · PDF filemachine learning tasks such as visual...

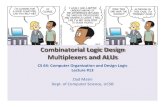

Performance Analysis of CNN Frameworks for GPUs

Heehoon Kim†, Hyoungwook Nam†, Wookeun Jung, and Jaejin Lee

Department of Computer Science and Engineering

Seoul National University, Korea

http://aces.snu.ac.kr

†The two authors contributed equally to this work as the first authors


Convolutional Neural Network

Deep Learning Framework

GPU Library


Motivation

Convolutional Neural Networks (CNN) have been successful in machine learning tasks such as visual recognition

Previous studies reveal performance differences among deep learning frameworks

However, those studies do not identify reasons for the differences

[Figure: execution time (ms) of Torch, Theano, TensorFlow, CNTK, and Caffe]

Goals

Analyze differences in the performance characteristics of the five deep learning frameworks in a single GPU context

Analyze scalability of the frameworks in the multiple GPU context

Analyze performance characteristics of different convolution algorithms for each layer

Outline

Convolutional Neural Network

Deep Learning Frameworks

Framework Comparison

Multi-GPU Comparison

Layer-wise Analysis of Convolution Algorithms

Conclusions


Convolutional Neural Network

[Figure: Inputs, then conv 1, conv 2, …, conv n (convolutional feature extractor), then fc 1, fc 2, …, fc n (fully-connected classifier), then softmax, then Outputs]

Computational Complexity of Convolution

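Operation count: $C \times HW \times RS \times K \times N \times 2$ (the factor of 2 counts one multiply and one add)

Example (Conv2 layer): $96 \times 27 \times 27 \times 5 \times 5 \times 256 \times 256 \times 2 \approx 229$ Gops

Conv2 layer

C = 96 (input channels)

[H, W] = [27, 27] (input dimension)

[R, S] = [5, 5] (kernel dimension)

K = 256 (output channels)

N = 256 (batch size)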
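The arithmetic in the Conv2 example can be checked with a few lines of Python. This is only a sketch of the formula above; the function name `conv_ops` is chosen here for illustration and does not come from the slides.

```python
def conv_ops(c, h, w, r, s, k, n):
    """Operation count of one convolution layer: C * HW * RS * K * N * 2.

    The trailing factor of 2 counts one multiply and one add per term.
    """
    return c * h * w * r * s * k * n * 2


# Values from the Conv2 example: 96 x 27 x 27 x 5 x 5 x 256 x 256 x 2
ops = conv_ops(c=96, h=27, w=27, r=5, s=5, k=256, n=256)
print(f"{ops / 1e9:.0f} Gops")  # prints "229 Gops"
```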

Convolution Algorithms for GPU

Direct Convolution

• Straightforward, but hard to optimize

GEMM Convolution

• Converts convolutions into matrix multiplications

• Easier to optimize

FFT Convolution

• Reduced computational complexity

• $O(KN)$ (direct convolution) → $O(N \log N)$ (FFT convolution)

Winograd Convolution

• Reduces the number of multiplications in a convolution, much as Strassen’s algorithm does for matrix multiplication

• Specific filtering algorithm is required for each kernel dimension


AlexNet Model


Winner of ILSVRC 2012 (ImageNet Challenge)

Commonly used CNN model for benchmarking

Includes various kinds of layers

• 3x3 convolution, 5x5 convolution, fully connected layers, etc.

Training a CNN

[Figure: three computations per layer. Forward: Input to Output. Backward Data: gradient data from the loss side to gradient data for the previous layer. Backward Gradient: gradient data to the weight gradient, which is used to update the parameters.]

1 forward computation and 2 backward computations

Forward and backward computations are symmetric and have the same computational cost
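The three computations can be sketched for a fully connected layer, used here as a stand-in because it keeps the code short (the slides do not give code, and all names below are illustrative). Each computation is one matrix product over the same three dimensions, which is one way to see why their costs match.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(m):
    return [list(col) for col in zip(*m)]


def forward(x, w):
    return matmul(x, w)                    # output = input x weights


def backward_data(grad_out, w):
    return matmul(grad_out, transpose(w))  # gradient passed to the previous layer


def backward_gradient(x, grad_out):
    return matmul(transpose(x), grad_out)  # gradient used to update the weights


x = [[1.0, 2.0], [3.0, 4.0]]         # batch of 2 inputs, 2 features each
w = [[0.5, -1.0], [1.5, 2.0]]        # 2x2 weight matrix
grad_out = [[1.0, 0.0], [0.0, 1.0]]  # gradient arriving from the next layer

y = forward(x, w)
grad_in = backward_data(grad_out, w)
grad_w = backward_gradient(x, grad_out)
```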


Five Deep Learning Frameworks

| Framework | User Interface | Data Parallelism | Model Parallelism |
|---|---|---|---|
| Caffe | protobuf, C++, Python | Yes | Limited |
| CNTK | BrainScript, C++, C# | Yes | No |
| TensorFlow | Python, C++ | Yes | Yes |
| Theano | Python | No | No |
| Torch | LuaJIT | Yes | Yes |

Popular frameworks chosen by GitHub stars

All five frameworks use cuDNN as backend

Theano only supports single GPU

cuDNN

Deep Neural Network library with NVIDIA CUDA

Provides DNN primitives

• Convolution, pooling, normalization, activation, …

State-of-the-art performance

All five frameworks support use of cuDNN as a backend

Unfortunately, not open-source (distributed in binaries)


System Setup

| Component | Specification |
|---|---|
| CPU | 2 x Intel Xeon E5 [email protected] |
| GPU | 4 x NVIDIA Titan X (Maxwell) |
| Main memory | 128GB DDR3 |
| GPU memory | 4 x 12GB GDDR5 |
| Operating system | CentOS 7.2.1511 (Linux 3.10.0-327) |


Execution Time Comparison (default setting)

Convolution layers take up more than 70% of training time

[Figure: execution time (ms) of Torch, Theano, TensorFlow, CNTK, and Caffe, broken down by layer into conv1 to conv5 and fc1 to fc3; f: forward computation, b: backward computation]

Options for Convolution Algorithms

| Framework | User Selectable | Heuristic-based | Profile-based | Default |
|---|---|---|---|---|
| Caffe | No | Yes | No | Heuristic-based |
| CNTK | No | No | Yes | Profile-based |
| TensorFlow | No | No | No | Heuristic-based† |
| Theano | Yes | Yes | Yes | GEMM |
| Torch | Yes | Yes | Yes | GEMM |

The cuDNN Get API chooses an algorithm with a heuristic

The cuDNN Find API chooses an algorithm by profiling the candidates

By default, Torch and Theano use GEMM convolution

†TensorFlow uses its own heuristic algorithm
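The difference between the two selection styles can be illustrated without cuDNN. The sketch below is not cuDNN's API or logic: the heuristic rule, the function names, and the fake workloads are all made up for illustration. It only shows that a heuristic decides from layer parameters alone, while a profile-based choice runs every candidate and keeps the fastest.

```python
import time


def pick_by_heuristic(kernel_size):
    """Heuristic choice: decide from layer parameters without running anything."""
    # Made-up rule, for illustration only.
    return "winograd" if kernel_size == 3 else "gemm"


def pick_by_profile(candidates, run_once):
    """Profile-based choice: time every candidate once and keep the fastest."""
    timings = {}
    for name in candidates:
        start = time.perf_counter()
        run_once(name)
        timings[name] = time.perf_counter() - start
    return min(timings, key=timings.get)


def fake_convolution(name):
    """Stand-in workload; the loop counts are arbitrary."""
    work = {"gemm": 200_000, "fft": 20_000, "winograd": 100_000}[name]
    total = 0
    for i in range(work):
        total += i
    return total


candidates = ["gemm", "fft", "winograd"]
best = pick_by_profile(candidates, fake_convolution)
```

Profiling costs extra time up front, but it measures the actual layer on the actual device instead of relying on a fixed rule.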

Options for Convolution Algorithms

Up to 2x speedup by providing algorithm options

[Figure: execution time (ms) of Torch, Torch (Profile), Theano, Theano (FFT), Theano (Heuristic), and Theano (Profile), split into Conv Forward, FC Forward, Conv Backward, and FC Backward]

Data Layout

[Figure: tensors in NHWC layout are transposed to NCHW layout before cuDNN and transposed back afterwards]

For example, cuDNN’s FFT convolution only supports NCHW

If the user chooses another layout, TensorFlow implicitly transposes the tensors

Changing the layout to NCHW leads to a 15% speedup in TensorFlow
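What a layout transpose involves can be shown with flat-array offsets. This is a minimal sketch in plain Python, not TensorFlow or cuDNN code, and the helper names are illustrative. Every element has to be copied to a new position, which is where the extra time comes from.

```python
def offset_nchw(n, c, h, w, C, H, W):
    """Flat index of element (n, c, h, w) when the width is innermost."""
    return ((n * C + c) * H + h) * W + w


def offset_nhwc(n, c, h, w, C, H, W):
    """Flat index of element (n, c, h, w) when the channel is innermost."""
    return ((n * H + h) * W + w) * C + c


def nhwc_to_nchw(data, N, C, H, W):
    """Copy every element from its NHWC position to its NCHW position."""
    out = [0] * len(data)
    for n in range(N):
        for c in range(C):
            for h in range(H):
                for w in range(W):
                    src = offset_nhwc(n, c, h, w, C, H, W)
                    out[offset_nchw(n, c, h, w, C, H, W)] = data[src]
    return out


N, C, H, W = 1, 2, 2, 2
nhwc = list(range(N * C * H * W))  # channels interleaved: c0, c1, c0, c1, ...
nchw = nhwc_to_nchw(nhwc, N, C, H, W)
print(nchw)  # prints [0, 2, 4, 6, 1, 3, 5, 7]
```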

[Figure: execution time (ms) of TensorFlow with its default layout and TensorFlow (NCHW)]

Unnecessary Backpropagation

[Figure: Input and Layers 0 to 3 with their Forward, Backward Data, and Backward Gradient computations; one Backward Data computation is marked Unnecessary]

‘Backward Data’ is unnecessary in the first layer.

Caffe, CNTK, Theano

• Automatically omitted.

Torch

• User option (layer0.gradInput = nil)

TensorFlow

• No options to users

Unnecessary Backpropagation

[Figure: execution time (ms) of Torch and Torch (w/o first)]

Speedup in the backward computation of the first layer

Optimized Results

Framework differences are not significant if carefully optimized

Remaining differences come from other operations, such as bias addition and ReLU activation

[Figure: execution time (ms) of Torch, Torch (Profile), Theano, Theano (Profile), TensorFlow, TensorFlow (NCHW), CNTK, and Caffe, split into Conv Forward, FC Forward, Conv Backward, and FC Backward]


Data-parallel SGD

[Figure: GPU0 to GPU3 each hold a copy of the CNN, process Batch 0 to Batch 3 respectively, and each apply an update]

Critical path: 2 log N transfers
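One way to arrive at a critical path of 2 log N transfers is a binary-tree reduction of the gradients followed by a binary-tree broadcast of the sum. The slides do not name the scheme, so the sketch below is an assumption made for illustration; it simulates the exchange on plain numbers and counts the sequential transfer steps.

```python
def tree_allreduce(grads):
    """Sum per-GPU gradients with a tree reduce, then broadcast the sum back.

    Returns (per-GPU results, number of sequential transfer steps).
    The number of GPUs is assumed to be a power of two.
    """
    n = len(grads)
    vals = list(grads)
    steps = 0

    stride = 1
    while stride < n:                      # reduce phase: log2(n) steps
        for i in range(0, n, 2 * stride):
            vals[i] += vals[i + stride]    # GPU i receives from GPU i + stride
        stride *= 2
        steps += 1

    stride = n // 2
    while stride >= 1:                     # broadcast phase: log2(n) steps
        for i in range(0, n, 2 * stride):
            vals[i + stride] = vals[i]     # GPU i sends to GPU i + stride
        stride //= 2
        steps += 1

    return vals, steps


result, steps = tree_allreduce([1.0, 2.0, 3.0, 4.0])
print(result, steps)  # prints [10.0, 10.0, 10.0, 10.0] 4
```

With 4 GPUs the count is 2 × log2(4) = 4 sequential steps.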

Multi-GPU Scalability

With small batches, multi-GPU training is slower than training on a single GPU

Even with large batches, the speedup with 4 GPUs is only around 1.5x

[Figure: speedup versus batch size (128, 256, 512) with 1 GPU, 2 GPUs, and 4 GPUs; one panel each for Caffe, Torch, TensorFlow, and CNTK]

Communication-Compute Overlapping

[Figure: timeline of the Forward and Backward computations with Transfer steps]

Transfer overhead is not negligible

Transfer as soon as gradients of each layer become available

TensorFlow is partly doing this

[Figure: timeline of Forward & Backward (~200 ms with a batch size of 256) followed by four Transfer steps (~45 ms for ~250 MB of gradients at ~5 GB/s); an annotation marks the point where the last layer’s gradients are computed]

Reducing Amount of Data Transfer


Quantization methods

• CNTK’s 1bit-SGD (1/32 transfer)

Avoid fully connected layers

• 90% of parameters reside in fully-connected layers

• Use 1x1 convolution layers instead of fully-connected layers (e.g. GoogLeNet)
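The idea behind 1-bit quantization can be sketched as sign quantization with error feedback: each 32-bit gradient value is sent as a single bit (hence 1/32 of the data), and the rounding error is carried over to the next step. This is a simplified illustration, not CNTK's implementation; the shared scale (mean absolute value) and all names are assumptions.

```python
def quantize_1bit(grad, residual):
    """Quantize each gradient value to one bit and carry the error forward."""
    # Add the error left over from the previous step before quantizing.
    corrected = [g + r for g, r in zip(grad, residual)]
    # One float shared by all values (assumed choice: mean absolute value).
    scale = sum(abs(v) for v in corrected) / len(corrected)
    # Only these sign bits, plus the scale, would be transferred.
    bits = [v >= 0 for v in corrected]
    decoded = [scale if b else -scale for b in bits]
    # What quantization lost is kept locally for the next step.
    new_residual = [v - d for v, d in zip(corrected, decoded)]
    return bits, scale, new_residual


grad = [0.5, -0.25, 0.125, -1.0]
bits, scale, residual = quantize_1bit(grad, [0.0] * len(grad))
```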

[Figure: CNTK 1bit-SGD speedup versus batch size (128, 256, 512) with 1 GPU, 2 GPUs, and 4 GPUs; one bar is labeled 2.62]


Direct Convolution Algorithm

Straightforward convolution algorithm

Not supported by cuDNN, thus we use cuda-convnet3 for testing

Easy to implement but hard to optimize

cuda-convnet requires CHWN tensor layout instead of NCHW

Computation times for forward and backward computations are not symmetric

GEMM Convolution Algorithm


Treat convolutions as vector dot products in matrix multiplication

Forward and backward computations are symmetric

Efficiently optimized, but tiling inserts unnecessary computations
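A common way to turn a convolution into a matrix product is to unroll every input patch into one row (often called im2col). The slides do not say which lowering is used, so treat the sketch below as one possible illustration for a single channel and a single filter, with stride 1 and no padding. Like CNN frameworks, it does not flip the kernel.

```python
def im2col(image, r, s):
    """Unroll every r x s patch of a 2D image into one row."""
    h, w = len(image), len(image[0])
    rows = []
    for i in range(h - r + 1):
        for j in range(w - s + 1):
            rows.append([image[i + di][j + dj] for di in range(r) for dj in range(s)])
    return rows


def conv_gemm(image, kernel):
    """Convolution as (patch matrix) x (flattened kernel)."""
    r, s = len(kernel), len(kernel[0])
    patches = im2col(image, r, s)                     # (out_h * out_w) x (r * s)
    flat_kernel = [v for row in kernel for v in row]  # r * s values
    # Each output value is one dot product between a patch row and the kernel.
    out = [sum(p * k for p, k in zip(patch, flat_kernel)) for patch in patches]
    out_w = len(image[0]) - s + 1
    return [out[i:i + out_w] for i in range(0, len(out), out_w)]


image = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
kernel = [[1, 0], [0, -1]]
print(conv_gemm(image, kernel))  # prints [[-4, -4], [-4, -4]]
```

With K filters, the flattened kernels form the columns of a second matrix, so the whole layer becomes a single matrix-matrix multiplication.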

FFT Convolution Algorithm

FFT → CGEMM → inverse FFT == Convolution

In 2D convolution, computational complexity reduces from $O(HWRS)$ to $O(HW \log HW)$

Computational cost does not depend on kernel dimension

cuDNN FFT convolution does not support strides
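The transform, multiply, inverse-transform pipeline can be demonstrated in one dimension with a small hand-written FFT. This is a sketch for a single channel, where the complex matrix multiplication reduces to a pointwise product; it is not how cuDNN implements the algorithm, and the function names are illustrative.

```python
import cmath


def fft(x, inverse=False):
    """Recursive radix-2 FFT; len(x) must be a power of two. Inverse is unscaled."""
    n = len(x)
    if n == 1:
        return list(x)
    even = fft(x[0::2], inverse)
    odd = fft(x[1::2], inverse)
    sign = 1 if inverse else -1
    out = [0j] * n
    for k in range(n // 2):
        t = cmath.exp(sign * 2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + t
        out[k + n // 2] = even[k] - t
    return out


def fft_convolve(signal, kernel):
    """Linear convolution: transform, multiply pointwise, transform back."""
    out_len = len(signal) + len(kernel) - 1
    size = 1
    while size < out_len:                 # zero-pad to a power of two
        size *= 2
    fa = fft(list(signal) + [0] * (size - len(signal)))
    fb = fft(list(kernel) + [0] * (size - len(kernel)))
    prod = [a * b for a, b in zip(fa, fb)]
    result = fft(prod, inverse=True)
    return [(v / size).real for v in result[:out_len]]


def direct_convolve(signal, kernel):
    """Reference result computed term by term."""
    out = [0.0] * (len(signal) + len(kernel) - 1)
    for i, a in enumerate(signal):
        for j, b in enumerate(kernel):
            out[i + j] += a * b
    return out


got = fft_convolve([1, 2, 3, 4], [1, 0, -1])
want = direct_convolve([1, 2, 3, 4], [1, 0, -1])
```

The kernel is padded to the transform size before the FFT, so the work after padding is the same for any kernel that fits in that size.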

[Figure: kernel operation counts (giga operations) for each convolution layer, conv1 to conv5: Direct, GEMM, FFT, Winograd, and Theoretical]

Winograd Convolution Algorithm

Based on GEMM convolution method

The minimal filtering algorithm for a 3x3 kernel and 4x4 tiling reduces 144 multiplications to 36 (a 4x difference).

Each kernel dimension requires its own minimal filtering algorithm.

cuDNN 5.1 supports the Winograd algorithm for 3x3 and 5x5 convolutions with no strides
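The smallest instance of minimal filtering is the one-dimensional case that produces 2 outputs of a 3-tap filter with 4 multiplications instead of 6. The sketch below shows that case as an illustration (the slides discuss the 2D 4x4 tile, not this 1D form), and then reproduces the 144 versus 36 count for a 4x4 output tile and a 3x3 kernel.

```python
def winograd_f23(d, g):
    """Two outputs of a 3-tap filter from 4 inputs, using 4 multiplications."""
    d0, d1, d2, d3 = d
    g0, g1, g2 = g
    # The filter-side terms can be precomputed once per filter.
    m1 = (d0 - d2) * g0
    m2 = (d1 + d2) * ((g0 + g1 + g2) / 2)
    m3 = (d2 - d1) * ((g0 - g1 + g2) / 2)
    m4 = (d1 - d3) * g2
    return [m1 + m2 + m3, m2 - m3 - m4]


def direct_f23(d, g):
    """The same two outputs computed directly, using 6 multiplications."""
    return [sum(d[i + j] * g[j] for j in range(3)) for i in range(2)]


d = [1.0, 2.0, 3.0, 4.0]
g = [1.0, 0.0, -1.0]
fast = winograd_f23(d, g)
slow = direct_f23(d, g)

# Multiplication counts for one 4x4 output tile with a 3x3 kernel.
m, r = 4, 3
direct_mults = m * m * r * r       # 144: every output needs 9 multiplications
winograd_mults = (m + r - 1) ** 2  # 36: one per element of the 6x6 input tile
```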


Computation Time Comparison

Direct algorithm shows poor performance on backward computations

FFT is the fastest algorithm most of the time

Winograd performs better with smaller batches and on 3x3 convolutions

Memory usage differences are not significant

[Figure: four panels plotted against batch size (32, 64, 128, 256) for Direct, GEMM, FFT, and Winograd: Forward Computation Time (ms), Backward Computation Time (ms), Conv3,4,5 Forward Computation Time (ms), and VRAM Usage (MB)]

Layer-wise Analysis of Convolution Layers

Operation count is the primary factor for execution time

Conv2 layer requires the most computations

Thus, FFT and Winograd are faster than Direct or GEMM

Direct convolution is slow because its backward computation in the first layer is inefficient

[Figure: three panels per convolution layer, conv1 to conv5, for Direct, GEMM, FFT, and Winograd: kernel operation counts (giga operations, with the Theoretical count), backward computation time (ms), and forward computation time (ms)]

Conclusions

Convolution layers take up most of the computation time while training CNN models

Performance differences among the frameworks are mainly due to convolution algorithms

Choosing optimal options can double the training speed of the AlexNet model

Tensor layout and unnecessary backpropagation might result in minor performance differences

FFT convolution is the fastest algorithm most of the time because of its reduced computational complexity

Winograd convolution can be faster than FFT in 3x3 convolution layers with small batch sizes

Data parallelism is inefficient in most frameworks because of the communication cost, but some techniques might improve multi-GPU scalability