GPU Superscalar (GPUSs) BSC

description

Transcript of GPU Superscalar (GPUSs) BSC

GPU Superscalar (GPUSs) BSC

Outline

• StarSs programming model

• StarSs syntax

• GPUSs compiler and runtime

• Examples and performance results

• Conclusions

StarSs Programming Model

• Programmability

– Standard sequential look and feel (C, Fortran)

– Incremental parallelization/restructure

– Abstract/separate algorithmic issues from resources

– Methodology/practices

• Block algorithms: modularity

• “No” side effects: local addressing

• Promote visibility of “Main” data

• Explicit synchronization variables

• Portability

– Runtime for each type of target platform.

• Matches computations to resources

• Achieves “decent” performance

– Even to sequential platform

– Single source for maintained version of a application

• Performance

– Runtime intelligence

SsCellSs

SMPSs GPUSs

GridSs

NestedSs

StarSs: a sequential program …

void vadd3 (float A[BS], float B[BS], float C[BS]); void scale_add (float sum, float A[BS], float B[BS]); void accum (float A[BS], float *sum);

for (i=0; i<N; i+=BS) // C=A+B vadd3 ( &A[i], &B[i], &C[i]);...for (i=0; i<N; i+=BS) // sum(C[i]) accum (&C[i], &sum);...for (i=0; i<N; i+=BS) // B=sum*A scale_add (sum, &E[i], &B[i]);...for (i=0; i<N; i+=BS) // A=C+D vadd3 (&C[i], &D[i], &A[i]);...for (i=0; i<N; i+=BS) // E=G+F vadd3 (&G[i], &F[i], &E[i]);

StarSs: … taskified …

#pragma css task input(A, B) output(C)void vadd3 (float A[BS], float B[BS], float C[BS]);#pragma css task input(sum, A) inout(B)void scale_add (float sum, float A[BS], float B[BS]);#pragma css task input(A) inout(sum)void accum (float A[BS], float *sum);

for (i=0; i<N; i+=BS) // C=A+B vadd3 ( &A[i], &B[i], &C[i]);...for (i=0; i<N; i+=BS) // sum(C[i]) accum (&C[i], &sum);...for (i=0; i<N; i+=BS) // B=sum*A scale_add (sum, &E[i], &B[i]);...for (i=0; i<N; i+=BS) // A=C+D vadd3 (&C[i], &D[i], &A[i]);...for (i=0; i<N; i+=BS) // E=G+F vadd3 (&G[i], &F[i], &E[i]);

1 2 3 4

13 14 15 16

5 6 87

17

9

18

10

19

11

20

12

Color/number: order of task instantiationSome antidependences covered by flow dependences not drawn

Compute dependences @ task instantiation time

WriteDecouplehow we write

formhow it is executed

StarSs: … and executed in a data-flow model

#pragma css task input(A, B) output(C)void vadd3 (float A[BS], float B[BS], float C[BS]);#pragma css task input(sum, A) inout(B)void scale_add (float sum, float A[BS], float B[BS]);#pragma css task input(A) inout(sum)void accum (float A[BS], float *sum);

1 1 1 2

2 2 2 3

2 3 54

7

6

8

6

7

6

8

7

for (i=0; i<N; i+=BS) // C=A+B vadd3 ( &A[i], &B[i], &C[i]);...for (i=0; i<N; i+=BS) // sum(C[i]) accum (&C[i], &sum);...for (i=0; i<N; i+=BS) // B=sum*A scale_add (sum, &E[i], &B[i]);...for (i=0; i<N; i+=BS) // A=C+D vadd3 (&C[i], &D[i], &A[i]);...for (i=0; i<N; i+=BS) // E=G+F vadd3 (&G[i], &F[i], &E[i]);

Execute

Color/number: a possible order of task execution

StarSs

• Flat global address space seen by programmer

• Flexibility to dynamically traverse dataflow graph “optimizing”

• Concurrency. Critical path

• Memory access: data transfers performed by run time

• Opportunities for

• Prefetch

• Reuse

• Eliminate antidependences (rename)

• Replication management

• Coherency/consistency handled by the runtime

StarSs: … reductions

#pragma css task input(A, B) output(C)void vadd3 (float A[BS], float B[BS], float C[BS]);#pragma css task input(sum, A) inout(B)void scale_add (float sum, float A[BS], float B[BS]);#pragma css task input(A) inout(sum) reduction(sum)void accum (float A[BS], float *sum);

1 1 1 2

2 2 2 3

2 3 3

5

4

6

4

5

4

6

5

for (i=0; i<N; i+=BS) // C=A+B vadd3 ( &A[i], &B[i], &C[i]);...for (i=0; i<N; i+=BS) // sum(C[i]) accum (&C[i], &sum);...for (i=0; i<N; i+=BS) // B=sum*A scale_add (sum, &E[i], &B[i]);...for (i=0; i<N; i+=BS) // A=C+D vadd3 (&C[i], &D[i], &A[i]);...for (i=0; i<N; i+=BS) // E=G+F vadd3 (&G[i], &F[i], &E[i]);

Color/number: possible order of task execution

2

StarSs & heterogeneity

#pragma css task input (T[TS][TS]) inout (B[TS][TS])void chol_strsm (float *T, float *B);

#pragma css target device (cuda) implements (chol_strsm) \copyin (T[TS][TS], B[TS][TS]) copyout (B[TS][TS])#pragma css task input (T[TS][TS]) inout (B[TS][TS])void chol_strsm_cuda (float *T, float *B);

#pragma css target device (cell) copyin (A[TS][TS], C[TS][TS]) \ copyout (C[TS][TS])#pragma css task input (A[TS][TS]) inout (C[TS][TS])void chol_ssyrk (float *A, float *C);

• A really heterogeneous system may have several hosts, and different types of accelerators or specific resources

• Different task implementations

• Default: every task should at least be runable on the host

• implementation for each specific accelerators (even alternative implementations)

#pragma css task inout (A[TS][TS])void chol_spotrf (float *A);

#pragma css target device (cell, cuda) copyin (T[TS][TS], B[TS][TS], C[TS][TS]) \ copyout (B[TS][TS])#pragma css task input (A[TS][TS], B[TS][TS}) inout (C[TS][TS])void chol_sgemm (float *A, float *B, float *C);

GPUSs: Compiler phase

Code translation

(mcc)

smpss-cc_app.c pack

C compiler(gcc, icc, ...)

app.tasks (tasks list)

app.c

smpss-cc_app.o

app.o

gpuss-cc kernel.cu

nvcc

kernel.o

smpss-cc-app.c

GPUSs: Linker phase

app.c

unpack

smpss-cc-app.c

app-adapters.c

execlibSMPSS.so

Linker

glue code generator

app.capp.o

app.tasks

exec-adapters.c

app-adapters.ccsmpss-cc_app.o

C compiler(gcc, icc,...)

exec-registration.c

exec-adapters.oexec-registration.o

gpuss-cc

kernel.okernel.o

GPUSs implementation

• Architecture implications

• Large local device storage O(GB) large task granularity Good

• Data transfers: Slow, non overlapped Bad

• Cache management

• Write-through

• Write-back

• Run time implementation

• Powerful main processor and multiple cores

• Dumb accelerator (not able to perform data transfers, implement software cache,…)

GPUSs implementation

Slave threads

FUFUFU

Helper thread

IFUREG

ISSIQRENDEC

RETMain thread

E. Ayguade, et al, “An Extension of the StarSs Programming Model for Platforms with Multiple GPUs” Europar2009

CPU

User main program

GPUSs lib

GPU0

Device Memory

Task code

Slave threads

Main thread

Memory

Userdata

Stage in/out data

kernel execution

Data dependence Data renaming

Scheduling

Renaming table

...

Stage in/out data

Kernel executionCachetable

Task Control Buffer

Helper thread

GPU1

Device Memory

Task code

__global__ void matmul_cuda ( float * A, float * B, float * C, int wA, int wB ){ int bx = blockIdx.x; int by = blockIdx.y; int tx = threadIdx.x; int ty = threadIdx.y;

int aBegin = wA * BLOCK_SIZE * by; int aEnd = aBegin + wA – 1; int aStep = BLOCK_SIZE; int bBegin = BLOCK_SIZE * bx; int bStep = BLOCK_SIZE * wB; float Csub = 0;

for( int a = aBegin, b = bBegin; a <= aEnd; a += aStep, b += bStep ){ __shared__ float As[ BLOCK_SIZE ][ BLOCK_SIZE ]; __shared__ float Bs[ BLOCK_SIZE ][ BLOCK_SIZE ]; As[ ty ][ tx ] = A[ a+wA * ty + tx ]; Bs[ ty ][ tx ] = B[ b+wB * ty + tx ]; __syncthreads( ); for( int k = 0;: k < BLOCK_SIZE; k++ ) Csub += As[ ty ][ k ] * Bs[ k ][ tx ]; __syncthreads( ); }}#pragma css task input(A[BS][BS], B[BS][BS]) inout( C[BS][BS] )#pragma css target device (CUDA)void matmul_tile (float *A, float *B, float *C ){ matmul_cuda <<<dimGrid, dimBlock>>>(A, B, C, BS, BS); cudaThreadSynchronize();}

GPUSs examples

Standard CUDA code for matrix-matrix multiplication

Main program:• No explicit data transfers or allocation• No explicit execution configuration• The same StarSs main program can be used

int main( void ){ ... for (i = 0; i < N; i++) for (j = 0; j < N; j++) for (k = 0; k < N; k++) matmul_tile (A[i][k], B[k][j], C[i][j]); ... }

GPUSs examples

Standard CUDA code using CUBLAS lib

int main( void ){ ... for (i = 0; i < N; i++) for (j = 0; j < N; j++) for (k = 0; k < N; k++) matmul_tile (A[i][k], B[k][j], C[i][j]); ... }

#pragma css task input(A[BS][BS], B[BS][BS]) inout( C[BS][BS] )#pragma css target device (CUDA)void matmul_tile (float *A, float *B, float *C) {unsigned char TR = 'T', NT = 'N';float DONE = 1.0, DMONE = -1.0;float *d_A, *d_B, *d_C; cublasStatus status;

cublasSgemm (NT, NT, BS, BS, BS, DONE, A, BS, B, BS,DONE, C, BS); status = cublasGetError();

if( status != CUBLAS_STATUS_SUCCESS ) printf( "CUBLAS EROOR\n" );

cudaThreadSynchronize();}

Main program:• No explicit data transfers or allocation• No explicit execution configuration• The same StarSs main program can be used

GPUSs results: MxM @ GPUSs using CUBLAS kernel

int main (int argc, char **argv) {int i, j, k;…

initialize(A, B, C);

for (i=0; i < NB; i++) for (j=0; j < NB; j++) for (k=0; k < NB; k++) mm_tile( C[i][j], A[i][k], B[k][j], BS); }

#pragma css task input(A[NB][NB], B[NB][NB], NB) \ inout(C[NB][NB]) target device(cuda)void mm_tile (float *A, float *B, float *C, int NB){ unsigned char TR = 'T', NT = 'N'; float DONE = 1.0, DMONE = -1.0; float *d_A, *d_B, *d_C;

cublasSgemm (NT, NT, NB, NB, NB, DMONE, A, NB, B, NB, DONE, C, NB);}

BS

BSNB

NB

BS

BS

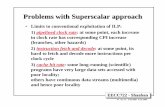

GPUSs results: MxM @ GPUSs using CUBLAS kernel

• Run time instrumentation

• Analysis: i.e.

• No overlap between communication and computation

• Some kind of self synchronization of data transfers

GPUSs results

GPUSs results: StarSs and Accelerators

• Same source “any” target

• Possibly optimized tasks.

• Transparent data transfer

• Prefetch, double buffer,cache,…

• Minimize bandwidth: locality aware scheduling

MxM @ 4 Cards

ClearSpeedSs

CellSs

0 500 1000 1500 2000 2500 3000 3500 4000 4500

0

20

40

60

80

100

120

140

160Cholesky perfomance (GFlops)

1 SPU

4 SPUs

8 SPUs

Matrix Size

GF

lop

s

GPUSsCholesky @ 1-4 GPUs

Conclusions

• StarSs is a programming model that aims to simplify the development of parallel applications, while achieving good performance

• Portability and access to accelerators is one of the main objectives

• GPUSs is the first prototype of the StarSs family towards the use of GPUs

• Distributed as open source (soon downloadable from www.bsc.es)