Getting Started with NoSQL Cloud Database Services on AWS


Transcript of Getting Started with NoSQL Cloud Database Services on AWS

© 2015, Amazon Web Services, Inc. or its Affiliates. All rights reserved.

蔣宗恩, Technical Account Manager, AWS Enterprise Support

2017/06

Getting Started with NoSQL Cloud Database Service on AWS

Agenda

1. What is NoSQL?

2. Relational (SQL) vs. non-relational?

3. What is DynamoDB?

4. DynamoDB Tables & Indexes

5. Scaling

6. Integration Capabilities

7. Demo

What is NoSQL?

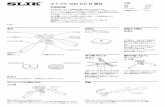

Data volume since 2010

• 90% of stored data generated in last 2 years

• 1 terabyte of data in 2010 equals 6.5 petabytes today

• Linear correlation between data pressure and technical innovation

• No reason these trends will not continue over time

Timeline of database technology

[Figure: timeline of database technology; axis label: Data Pressure]

What is NoSQL?

NoSQL is a term to describe data stores that trade full ACID compliance for high availability and scale.

• Atomicity: single row/single item only

• Consistency: eventual consistency

• Isolation: dirty reads

• Durability: data replication on commodity storage

Why NoSQL?

• Dirty Reads?

• Eventual Consistency?

• Single row transactions only?

• Why would anybody trade ACID compliance for this?

Relational (SQL) vs. non-relational?

Relational vs. non-relational databases

Scale Up vs Scale Out

[Figure: traditional SQL shows a primary and a secondary DB that scale up; NoSQL shows many DB nodes that scale out]

The CAP Theorem

Network partitions will happen in distributed systems.

[Figure: a cluster of DB nodes, and a triangle with corners Consistency (C), Availability (A), and Partition Tolerance (P) whose sides are labeled CA, AP, and CP]

SQL vs. NoSQL schema design

NoSQL design optimizes for compute instead of storage

Why NoSQL?

| SQL | NoSQL |
|---|---|
| Optimized for storage | Optimized for compute |
| Normalized/relational | Denormalized/hierarchical |
| Ad-hoc queries | Instantiated views |
| Scale vertically | Scale horizontally |
| Good for OLAP | Built for OLTP at scale |

What is DynamoDB?

Amazon’s Path to DynamoDB

[Figure: from RDBMS to DynamoDB]

Amazon DynamoDB

DynamoDB is a fully managed NoSQL document and key-value data store

Predictable Performance

Highly Available

Massively Scalable

Fully Managed

Low Cost

Consistently low latency at scale

PREDICTABLE PERFORMANCE!

High availability and durability

WRITES

• Replicated continuously to 3 Availability Zones

• Persisted to disk (custom SSD)

READS

• Strongly or eventually consistent

• No latency trade-off

Designed to support 99.99% availability

Built for high durability

DynamoDB automatically partitions data

• Partition key spreads data (and workload) across partitions

• Automatically partitions as data grows and throughput needs increase

High-scale app: large number of unique hash keys + uniform distribution of workload across hash keys

[Figure: data spread across Partition 1..N]

Fully managed service = automated operations

[Figure: DB hosted on premises vs. DynamoDB]

DynamoDB Tables & Indexes

DynamoDB table structure

Table, items, attributes

Partition key

• Mandatory

• Key-value access pattern

• Determines data distribution

Sort key

• Optional

• Model 1:N relationships

• Enables rich query capabilities:

- All items for key

- ==, <, >, >=, <=

- “begins with”

- “between”

- “contains”

- “in”

- sorted results

- counts

- top/bottom N values

Partition Keys

• Partition key uniquely identifies an item

• Partition key is used for building an unordered hash index

• Allows table to be partitioned for scale

Example (key space 00 to FF, split into the ranges 00 to 54, 55 to A9, and AA to FF):

- Id = 1, Name = Jim: Hash (1) = 7B

- Id = 2, Name = Andy, Dept = Eng: Hash (2) = 48

- Id = 3, Name = Kim, Dept = Ops: Hash (3) = CD
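The key-space diagram on this slide can be sketched in a few lines of Python. This is only an illustration, assuming a one-byte key space (00 to FF) split into three contiguous ranges as the diagram suggests; the boundary list and the helper name `partition_for_hash` are hypothetical, and DynamoDB's real hash function and partition boundaries are internal to the service.

```python
from bisect import bisect_left

# Inclusive upper bound of each partition's hash range (assumed boundaries
# for a one-byte key space; real DynamoDB boundaries are not exposed).
UPPER_BOUNDS = [0x54, 0xA9, 0xFF]


def partition_for_hash(hash_value: int) -> int:
    """Return the 1-based partition number whose range contains hash_value."""
    if not 0x00 <= hash_value <= 0xFF:
        raise ValueError("hash value must fit in one byte")
    return bisect_left(UPPER_BOUNDS, hash_value) + 1


# Hash values taken from the slide: Hash(1) = 7B, Hash(2) = 48, Hash(3) = CD.
slide_hashes = {1: 0x7B, 2: 0x48, 3: 0xCD}
placement = {item_id: partition_for_hash(h) for item_id, h in slide_hashes.items()}
print(placement)
```

Because each item lands wherever its hash falls, items with neighboring Ids end up on different partitions, which is what spreads the workload.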

Partition:Sort Key

• Partition:Sort Key uses two attributes together to uniquely identify an item

• Within the unordered hash index, data is arranged by the sort key

• No limit on the number of items (∞) per partition key, except if you have local secondary indexes

Example (key space 00:0 to FF:∞):

- Partition 1 (00:0 to 54:∞), Hash (2) = 48: Customer# = 2, Order# = 10, Item = Pen; Customer# = 2, Order# = 11, Item = Shoes

- Partition 2 (55:0 to A9:∞), Hash (1) = 7B: Customer# = 1, Order# = 10, Item = Toy; Customer# = 1, Order# = 11, Item = Boots

- Partition 3 (AA:0 to FF:∞), Hash (3) = CD: Customer# = 3, Order# = 10, Item = Book; Customer# = 3, Order# = 11, Item = Paper
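To make the partition:sort key idea concrete, here is a toy in-memory model, not DynamoDB itself. It assumes items are grouped by partition key and kept ordered by sort key within each group, which is what makes range conditions such as "between" cheap. The class `OrdersTable` and its methods are hypothetical names; the data is the customer/order example from the slide.

```python
from bisect import bisect_left, bisect_right
from collections import defaultdict


class OrdersTable:
    """Toy in-memory table keyed by (partition key, sort key)."""

    def __init__(self):
        # partition key -> list of (sort key, item), kept sorted by sort key
        self._partitions = defaultdict(list)

    def put(self, customer, order, item):
        rows = self._partitions[customer]
        keys = [k for k, _ in rows]
        pos = bisect_left(keys, order)
        if pos < len(rows) and rows[pos][0] == order:
            rows[pos] = (order, item)  # same full key: overwrite the item
        else:
            rows.insert(pos, (order, item))

    def query(self, customer, low=None, high=None):
        """Items for one partition key, optionally with low <= sort key <= high."""
        rows = self._partitions.get(customer, [])
        keys = [k for k, _ in rows]
        start = 0 if low is None else bisect_left(keys, low)
        end = len(rows) if high is None else bisect_right(keys, high)
        return [item for _, item in rows[start:end]]


table = OrdersTable()
for customer, order, item in [
    (2, 11, "Shoes"), (2, 10, "Pen"),
    (1, 10, "Toy"), (1, 11, "Boots"),
    (3, 10, "Book"), (3, 11, "Paper"),
]:
    table.put(customer, order, item)

print(table.query(2))                   # all orders for customer 2, sorted by Order#
print(table.query(1, low=11, high=11))  # a "between" condition on the sort key
```

Every query names exactly one partition key, so it touches one group of items; the sort key only narrows the range within that group.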

Partitions are three-way replicated

[Figure: the items (Id = 1, Name = Jim), (Id = 2, Name = Andy, Dept = Eng), and (Id = 3, Name = Kim, Dept = Ops) each appear in Replica 1, Replica 2, and Replica 3, across Partition 1, Partition 2, ... Partition N]

Local secondary index (LSI)

• Alternate sort key attribute

• Index is local to a partition key

Table: A1 (partition), A2 (sort), A3, A4, A5

LSIs:

- KEYS_ONLY: A1 (partition), A3 (sort), A2 (item key)

- INCLUDE A3: A1 (partition), A4 (sort), A2 (item key), A3 (projected)

- ALL: A1 (partition), A5 (sort), A2 (item key), A3 (projected), A4 (projected)

10 GB max per partition key, i.e. LSIs limit the # of range keys!
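The three projection types on this slide (KEYS_ONLY, INCLUDE, ALL) decide which attributes are copied into an index entry. The sketch below is a plain-Python illustration of that rule, not a DynamoDB API; the function name `project` and the sample item values are hypothetical.

```python
def project(item, key_attrs, projection_type, include=()):
    """Return the attributes an index entry would carry for `item`.

    key_attrs holds the index keys plus the table's own primary key.
    """
    if projection_type == "ALL":
        return dict(item)
    if projection_type == "KEYS_ONLY":
        wanted = set(key_attrs)
    elif projection_type == "INCLUDE":
        wanted = set(key_attrs) | set(include)
    else:
        raise ValueError(f"unknown projection type: {projection_type}")
    return {name: value for name, value in item.items() if name in wanted}


# One item from the slide's table: A1 (partition), A2 (sort), A3, A4, A5.
item = {"A1": "p", "A2": 1, "A3": "x", "A4": "y", "A5": "z"}

keys_only = project(item, ["A1", "A3", "A2"], "KEYS_ONLY")
include_a3 = project(item, ["A1", "A4", "A2"], "INCLUDE", include=["A3"])
everything = project(item, ["A1", "A5", "A2"], "ALL")
print(sorted(keys_only), sorted(include_a3), sorted(everything))
```

Smaller projections keep the index compact, at the price of a second lookup in the table when a query needs an attribute that was not projected.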

Global secondary index (GSI)

• Alternate partition and/or sort key

• Index is across all partition keys

• Use composite sort keys for compound indexes

Table: A1 (partition), A2, A3, A4, A5

GSIs:

- INCLUDE A3: A5 (partition), A4 (sort), A1 (item key), A3 (projected)

- ALL: A4 (partition), A5 (sort), A1 (item key), A2 (projected), A3 (projected)

- KEYS_ONLY: A2 (partition), A1 (item key)

RCUs/WCUs provisioned separately for GSIs

Online indexing
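As a rough illustration of "RCUs/WCUs provisioned separately for GSIs", the dictionary below follows the shape of the DynamoDB CreateTable request for the slide's first GSI (A5 as partition key, A4 as sort key, A3 projected). The table name, index name, attribute types, and capacity numbers are placeholders chosen for this sketch, and the small check function is a hypothetical helper, not part of any SDK.

```python
# Request parameters shaped like the DynamoDB CreateTable API.
# Table name, index name, types, and capacity values are hypothetical.
create_table_params = {
    "TableName": "ExampleTable",
    "AttributeDefinitions": [
        {"AttributeName": "A1", "AttributeType": "S"},
        {"AttributeName": "A4", "AttributeType": "S"},
        {"AttributeName": "A5", "AttributeType": "S"},
    ],
    "KeySchema": [{"AttributeName": "A1", "KeyType": "HASH"}],
    # Throughput for the table itself
    "ProvisionedThroughput": {"ReadCapacityUnits": 5, "WriteCapacityUnits": 5},
    "GlobalSecondaryIndexes": [
        {
            "IndexName": "A5-A4-index",
            "KeySchema": [
                {"AttributeName": "A5", "KeyType": "HASH"},
                {"AttributeName": "A4", "KeyType": "RANGE"},
            ],
            "Projection": {"ProjectionType": "INCLUDE", "NonKeyAttributes": ["A3"]},
            # Throughput for the index, provisioned separately from the table
            "ProvisionedThroughput": {"ReadCapacityUnits": 5, "WriteCapacityUnits": 5},
        }
    ],
}


def undefined_key_attributes(params):
    """Return key attributes that are missing from AttributeDefinitions."""
    defined = {d["AttributeName"] for d in params["AttributeDefinitions"]}
    used = {k["AttributeName"] for k in params["KeySchema"]}
    for index in params.get("GlobalSecondaryIndexes", []):
        used |= {k["AttributeName"] for k in index["KeySchema"]}
    return used - defined


print(undefined_key_attributes(create_table_params))
```

With boto3 installed and credentials configured, parameters like these could be passed as `boto3.client("dynamodb").create_table(**create_table_params)`; the sketch itself makes no network call.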

How do GSI updates work?

[Figure: a client writes to the table's primary table partitions; step 2 is an asynchronous update (in progress) to the global secondary index]

If GSIs don’t have enough write capacity, table writes will be throttled!

LSI or GSI?

LSI can be modeled as a GSI

If data size in an item collection > 10 GB, use GSI

If eventual consistency is okay for your scenario, use GSI!

Scaling

Scaling

Throughput

Provision any amount of throughput to a table

Size

Add any number of items to a table

- Max item size is 400 KB

- LSIs limit the number of range keys due to 10 GB limit

Scaling is achieved through partitioning

Throughput

Provisioned at the table level

Write capacity units (WCUs) are measured in 1 KB per second

Read capacity units (RCUs) are measured in 4 KB per second

- RCUs measure strongly consistent reads

- Eventually consistent reads cost 1/2 of strongly consistent reads

Read and write throughput limits are independent
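The capacity-unit rules on this slide reduce to simple arithmetic. The sketch below assumes item sizes are rounded up to the next 1 KB for writes and the next 4 KB for reads, and that eventually consistent reads cost half as much; the function names and the sample workload are hypothetical.

```python
import math


def write_capacity_units(item_size_kb, writes_per_second):
    """WCUs needed: one unit per 1 KB (rounded up) per write per second."""
    return math.ceil(item_size_kb / 1) * writes_per_second


def read_capacity_units(item_size_kb, reads_per_second, strongly_consistent=True):
    """RCUs needed: one unit per 4 KB (rounded up) per strongly consistent read."""
    units = math.ceil(item_size_kb / 4) * reads_per_second
    # Eventually consistent reads cost half of strongly consistent reads.
    return units if strongly_consistent else math.ceil(units / 2)


# Hypothetical workload: 3 KB items, 10 writes/s and 80 reads/s.
print(write_capacity_units(3, 10))
print(read_capacity_units(3, 80))
print(read_capacity_units(3, 80, strongly_consistent=False))
```

Note the rounding: a 6 KB item costs two read units per strongly consistent read, the same as an 8 KB item.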

Partitioning Math

In the future, these details might change…

Number of Partitions

| Basis | Formula |
|---|---|
| By Capacity | (Total RCU / 3000) + (Total WCU / 1000) |
| By Size | Total Size / 10 GB |
| Total Partitions | CEILING(MAX (Capacity, Size)) |

Partitioning Example

Table size = 8 GB, RCUs = 5000, WCUs = 500

Number of Partitions

| Basis | Calculation |
|---|---|
| By Capacity | (5000 / 3000) + (500 / 1000) = 2.17 |
| By Size | 8 / 10 = 0.8 |
| Total Partitions | CEILING(MAX (2.17, 0.8)) = 3 |

RCUs and WCUs are uniformly spread across partitions

RCUs per partition = 5000/3 = 1666.67

WCUs per partition = 500/3 = 166.67

Data/partition = 8/3 = 2.67 GB
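The partitioning math can be checked with a short script. It uses the constants stated on the slides (3000 RCUs, 1000 WCUs, and 10 GB per partition), which the deck itself warns might change; the function name `partition_count` is hypothetical.

```python
import math


def partition_count(total_rcu, total_wcu, size_gb):
    """Estimate the number of partitions from the slide's formula."""
    by_capacity = total_rcu / 3000 + total_wcu / 1000
    by_size = size_gb / 10
    return math.ceil(max(by_capacity, by_size))


# Values from the example: 8 GB table, 5000 RCUs, 500 WCUs.
partitions = partition_count(5000, 500, 8)
rcu_per_partition = round(5000 / partitions, 2)
wcu_per_partition = round(500 / partitions, 2)
print(partitions, rcu_per_partition, wcu_per_partition)
```

Here capacity, not size, drives the partition count: the table would fit in one partition by size alone, but the provisioned throughput forces three.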

What causes throttling?

If sustained throughput goes beyond provisioned throughput per partition

Non-uniform workloads

• Hot keys/hot partitions

• Very large bursts

Mixing hot data with cold data

• Use a table per time period

From the example before:

Table created with 5000 RCUs, 500 WCUs

RCUs per partition = 1666.67

WCUs per partition = 166.67

If sustained throughput > (1666 RCUs or 166 WCUs) per key or partition, DynamoDB may throttle requests

- Solution: Increase provisioned throughput

To learn more, please attend:

Deep Dive on Amazon DynamoDB 3:55 p.m.– 4:35 p.m.

Integration Capabilities

DynamoDB Streams

Stream of table updates

Asynchronous

Exactly once

Strictly ordered

24-hr lifetime per item

Integration Capabilities

DynamoDB Triggers

Implemented as AWS Lambda functions

Your code scales automatically

Java, Node.js and Python
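A trigger is a Lambda function that receives batches of stream records. The handler below is a minimal sketch in Python, assuming the usual DynamoDB Streams event layout (`Records`, `eventName`, and a `dynamodb` section with `Keys` and, depending on the stream view type, `NewImage`); the sample event is fabricated for illustration and the counting logic is only an example of what a trigger might do.

```python
def handler(event, context):
    """Count the kinds of changes in one batch of DynamoDB Streams records."""
    counts = {"INSERT": 0, "MODIFY": 0, "REMOVE": 0}
    for record in event.get("Records", []):
        event_name = record.get("eventName")
        if event_name in counts:
            counts[event_name] += 1
        # NewImage depends on the stream view type and is absent for REMOVE.
        new_image = record.get("dynamodb", {}).get("NewImage")
        if new_image is not None:
            print(event_name, record["dynamodb"].get("Keys"), new_image)
    return counts


# Fabricated sample batch, using DynamoDB's attribute-value notation.
sample_event = {
    "Records": [
        {
            "eventName": "INSERT",
            "dynamodb": {
                "Keys": {"Id": {"N": "1"}},
                "NewImage": {"Id": {"N": "1"}, "Name": {"S": "Jim"}},
            },
        },
        {"eventName": "REMOVE", "dynamodb": {"Keys": {"Id": {"N": "2"}}}},
    ]
}

result = handler(sample_event, None)
print(result)
```

Calling the handler locally with a sample event, as above, is a convenient way to test trigger logic before deploying it.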

IAM

Fine-grained access control via AWS IAM

Table-, item-, and attribute-level access control
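Item- and attribute-level control is expressed through IAM condition keys. The policy below is a sketch built as a Python dictionary, assuming the `dynamodb:LeadingKeys` and `dynamodb:Attributes` condition keys and a caller authenticated through Amazon Cognito; the account ID, region, table name, and attribute names are all placeholders.

```python
import json

# Hypothetical policy: each user may read only items whose partition key
# equals their own identity, and only the listed attributes.
policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Action": ["dynamodb:GetItem", "dynamodb:Query"],
            "Resource": "arn:aws:dynamodb:us-east-1:123456789012:table/ExampleTable",
            "Condition": {
                "ForAllValues:StringEquals": {
                    # Item-level: partition key must match the caller's identity
                    "dynamodb:LeadingKeys": ["${cognito-identity.amazonaws.com:sub}"],
                    # Attribute-level: only these attributes may be requested
                    "dynamodb:Attributes": ["UserId", "Name", "Dept"],
                },
                "StringEqualsIfExists": {"dynamodb:Select": "SPECIFIC_ATTRIBUTES"},
            },
        }
    ],
}

policy_json = json.dumps(policy, indent=2)
print(policy_json)
```

Table-level control needs no condition at all: the `Resource` ARN alone limits the statement to one table.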

Integration Capabilities

Elasticsearch integration

• Full-text queries

• Add search to mobile app

• Monitor IoT sensor status code

• App telemetry pattern discovery using regular expressions

Reference Architecture

Demo

Architecture of a simple serverless web application

[Figure components: users, internet, JavaScript, Amazon S3 bucket, API Gateway, Lambda, DynamoDB, AWS Identity & Access Management]

bit.ly/NoSQLDesignPatterns