Visual Recognition of Hand Motion - the UWA Profiles and … · sensing data as gestures. The...

Transcript of Visual Recognition of Hand Motion - the UWA Profiles and … · sensing data as gestures. The...

i

Visual Recognition of Hand Motion

THIS THESIS IS

PRESENTED TO THE

DEPARTMENT OF COMPUTER SCIENCE

FOR THE DEGREE OF

DOCTOR OF PHILOSOPHY

OF THE

UNIVERSITY OF WESTERN AUSTRALIA

By

Eun-Jung Holden

January 1997

iii

Abstract

Hand gesture recognition is an active area of research in recent years, being

used in various applications from deaf sign recognition systems to human-

machine interaction applications. The gesture recognition process, in general,

may be divided into two stages: the motion sensing, which extracts useful data

from hand motion; and the classification process, which classifies the motion

sensing data as gestures. The existing vision-based gesture recognition

systems extract 2-D shape and trajectory descriptors from the visual input, and

classify them using various classification techniques from maximum likelihood

estimation to neural networks, finite state machines, Fuzzy Associative

Memory (FAM) or Hidden Markov Models (HMMs). This thesis presents the

framework of the vision-based Hand Motion Understanding (HMU) system

that recognises static and dynamic Australian Sign Language (Auslan) signs

by extracting and classifying 3-D hand configuration data from the visual

input. The HMU system is a pioneer gesture recognition system that uses a

combination of a 3-D hand tracker for motion sensing, and an adaptive fuzzy

expert system for classification.

The HMU 3-D hand tracker extracts 3-D hand configuration data that consists

of the 21 degrees-of-freedom parameters of the hand from the visual input of a

single viewpoint, with an aid of a colour coded glove. The tracker uses a

model-based motion tracking algorithm that makes incremental corrections to

the 3-D model parameters to re-configure the model to fit the hand posture

appearing in the images through the use of a Newton style optimisation

iv

technique. Finger occlusions are handled to a certain extent by recovering the

missing hand features in the images through the use of a prediction algorithm.

The HMU classifier, then, recognises the sequence of 3-D hand configuration

data as a sign by using an adaptive fuzzy expert system where the sign

knowledge are used as inference rules. The classification is performed in two

stages. Firstly, for each image, the classifier recognises Auslan basic hand

postures that categorise the Auslan signs like the alphabet in English.

Secondly, the sequence of Auslan basic hand postures that appear in the image

sequence is analysed and recognised as a sign. Both the posture and sign

recognition are performed by the same adaptive fuzzy inference engine.

The HMU rule base stores 22 Auslan basic hand postures, and 22 signs. For

evaluation, 44 motion sequences (2 for each of the 22 signs) are recorded.

Among them, 22 randomly chosen sequences (1 for each of the 22 signs) are

used for testing and the rest are used for training. The evaluation shows that

before training the HMU system correctly recognised 20 out of 22 signs. After

training, with the same test set, the HMU system recognised 21 signs correctly.

All of the failed cases did not produce any output. The evaluation has

successfully demonstrated the functionality of the combined use of a 3-D hand

tracker and an adaptive fuzzy expert for a vision-based sign language

recognition.

v

Preface

An attempt to build an automated sign language translator began with the

translation of English text into sign language using computer graphics. This

work has been published as a Master's thesis at the University of Western

Australia (Holden 1991), in the proceedings o f the 1992 ACM/SIGAPP

Symposium on Applied Computing (Holden & Roy 1992A), and in the Computer

Graphics Forum (Holden & Roy 1992B).

The research presented in this thesis deals with the reverse problem that

translates hand motion images into signs. Preliminary investigation on the

vision-based recognition of hand motion has been reported in the Department

of Computer Science Technical Report Series (Holden 1993).

The work on the adaptive fuzzy expert system that classifies 3-D hand motion

data into a sign has been published previously. The classifier was initially

tested with the data that was generated by a Power Glove and the result has

been published in the proceedings of the 1994 Western Australian Computer

Science Symposium (Holden et al. 1994). The classifier was also tested with

synthetic motion data. This experiment and the results have been published in

the proceedings of the IEEE International Conference on Neural Networks (Holden

et al. 1995). An extended paper on the work has been accepted in the

International Journal of Expert Systems (Holden et al. 1997).

vi

The research work on the 3-D hand tracker and the classification results using

real motion data is yet to be published.

The work described in these publications, and this thesis, is solely my own.

vii

Acknowledgments

The course of this thesis has turned out to be quite eventful due to the birth of

my first child. It has been a struggle to complete this thesis that had to be

achieved concurrently with the more important duties of a mother and a wife.

Thus this was only possible with the help and support of many people.

Firstly, I would like thank my supervisors, Associate Professor Robyn Owens,

and Professor Geoffrey Roy for their academic and moral support during the

course of this study. I thank Robyn especially for her incredible skills and

patience that went into the proof reading of this thesis, as well as her empathy

and understanding in having to juggle the roles of student, mother and wife.

Geoff has been my supervisor throughout my entire postgraduate studies. I

am always thankful for his enthusiasm for my work, and I have greatly

benefited from his positive encouragement at times of difficulty.

Secondly, in developing a hand tracker, I appreciated help from various

people who communicated with me through email. Brigitte Dorner from

Simon Fraser University in Canada was very helpful in providing me with her

tracker programs and thesis, and answering my questions. My tracker has

turned out to be quite different from hers, but her enthusiastic help has

provided an excellent start to the tracker development. Dr. David Lowe from

British Columbia University in Canada has also provided me with his general

tracking source code, and the module that solves normal equations has been

viii

used in my hand tracker. James Regh from Carnegie Mellon University,

U.S.A. was also helpful answering questions about his hand tracker.

Thirdly, I thank colleagues who provided support for this research. Jason

Birch deserves much thanks for sharing his in-depth knowledge of fuzzy logic

with me in many discussions. Bruce Mills has always made himself available

to explain various mathematical problems. Dr. Dorota Kieronska provided

valuable friendship and support throughout the study and I appreciated her

proof–reading a major part of this thesis. Lifang Gu shared invaluable

discussions on 3-D model-based tracking. Macintosh gurus, Jon Quinn and

Marcus Jager provided excellent technical support at the earlier part of this

project, and Shay Telfer for the later part. I thank the colleagues at the

Robotics and Vision lab who made working towards the completion of this

thesis so pleasant, and especially Rameri Salama for the occasional proof

reading.

Fourthly, I would like to sincerely thank Dr Chris Sauer who taught me the

ways of research with tremendous patience and graciousness, during my

honours undergraduate year. His encouragement has lead me to postgraduate

studies.

Lastly, I would like to thank my parents who taught me the value of learning,

my brother Jai-Seung for his loving support, and my husband David and

daughter Jacqui, for allowing their lives to be shared with a computer. I thank

God for his goodness.

ix

To my parents,

Major General and Mrs Chang, Keun-Hwan,

who sacrificed everything for my education.

x

Contents

Abstract....................................................................................................................... iii

Preface........................................................................................................................... v

Acknowledgments................................................................................................... vii

Contents........................................................................................................................ x

Abbreviations ........................................................................................................... xiv

Chapter 1: Introduction ............................................................................................ 1

1.1 Background ................................................................................................................................ 1

1.2 Recognition of Gestures ........................................................................................................... 3

1.3 The Approach ............................................................................................................................ 6

1.3.1 Motion Sensing Through a 3-D Hand Tracker..................................................... 9

1.3.2 Classification of a 3-D motion sequence ............................................................... 9

1.3.3 Platform.................................................................................................................... 10

1.4 Contributions ........................................................................................................................... 11

1.5 Layout of the Thesis ................................................................................................................ 12

Chapter 2: Literature Review................................................................................. 14

2.1 Chapter Overview................................................................................................................... 15

2.2 Human Perception of Biological Motion ............................................................................. 16

2.3 Hand Shape Recognition........................................................................................................ 18

2.4 Motion Understanding Using Two-Dimensional Information ........................................ 20

xi

2.5 Three-Dimensional Motion Understanding Using VR Technology ................................ 25

2.6 Three-Dimensional Motion Sensing Techniques................................................................ 26

2.6.1 Three-Dimensional Model-Based Hand Tracking............................................. 28

2.7 Summary .................................................................................................................................. 30

2.8 Introduction to the HMU System ......................................................................................... 31

Chapter 3: A Vision-Based Three-Dimensional Hand Tracker ...................... 34

3.1 Chapter Overview................................................................................................................... 36

3.2 Assumptions ............................................................................................................................ 39

3.2.1 The signing speed................................................................................................... 39

3.2.2 Features and Occlusions ........................................................................................ 40

3.3 The Hand Model ..................................................................................................................... 40

3.4 Colour Glove ............................................................................................................................ 45

3.5 Feature Measurement ............................................................................................................. 49

3.5.1 Colour segmentation .............................................................................................. 49

3.5.2 Marker Detection .................................................................................................... 52

3.5.3. Imposter or Missing Markers .............................................................................. 55

3.5.3.1 Prediction algorithm.............................................................................. 57

3.5.4. Finger Joint Correspondence ............................................................................... 60

3.6 The State Estimation ............................................................................................................... 62

3.6.1 Projection of the 3-D Model onto a 2-D Image................................................... 63

3.6.2 Definitions ............................................................................................................... 64

3.6.3 Newton's Method ................................................................................................... 67

3.6.4 Minimisation ........................................................................................................... 68

3.6.5 Lowe's Stabilisation and Convergence Forcing Technique .............................. 71

3.6.6 Calculating the Jacobian Matrix ........................................................................... 74

3.6.7 Dealing with Noise in Image Processing ............................................................ 75

3.6.8 Constraints: Joint Angle Change Limit from Frame to Frame......................... 77

xii

3.6.9 The State Estimation Algorithm ........................................................................... 77

3.7 Summary .................................................................................................................................. 78

Chapter 4: Hand Motion Data Classification..................................................... 81

4.1 Overview of the Chapter........................................................................................................ 82

4.2 Introduction to the HMU Classifier...................................................................................... 83

4.2.1 Sign Knowledge Representation .......................................................................... 83

4.2.2 Problems in the Direct Use of Movement Data.................................................. 86

4.2.3 User-Adaptability ................................................................................................... 88

4.2.4 Comparison to other Classifiers ........................................................................... 88

4.3. Fuzzy Knowledge Representation....................................................................................... 90

4.3.1 Posture Representation .......................................................................................... 90

4.3.2 Motion Representation .......................................................................................... 95

4.3.3 Sign Representation................................................................................................ 98

4.4 Inference Rules for Auslan Hand Postures and Signs ..................................................... 100

4.4.1 Posture Rule Base ................................................................................................. 100

4.4.2 Sign Knowledge Base........................................................................................... 102

4.5 The Classification Process .................................................................................................... 103

4.5.1. Fuzzy Inference Engine ...................................................................................... 104

4.5.2. Classification Process at Work........................................................................... 106

4.5.2.1 Posture Recognition............................................................................. 106

4.5.2.2 Analysis of the Posture Sequence ...................................................... 108

4.5.2.3 Sign Classification ................................................................................ 110

4.6 Adaptive Engine.................................................................................................................... 112

4.7 Summary ................................................................................................................................ 114

Chapter 5: Experimental Results ........................................................................ 116

5.1 Chapter Overview................................................................................................................. 117

5.2 Experimental Details ............................................................................................................ 117

xiii

5.2.1 Assumptions.......................................................................................................... 117

5.2.2 Data Collection...................................................................................................... 118

5.2.3 Selection of Training Data ................................................................................... 119

5.2.4 Experiment Methodology.................................................................................... 122

5.3 Results..................................................................................................................................... 122

5.3.1 Recognition Process.............................................................................................. 125

5.3.2 Impact of Training ................................................................................................ 127

5.3.2.1 The Lower Rule Activation Levels (RALs) After Training ............ 128

5.3.2.2 The Examples of Improved Recognition Through Training ......... 133

5.3.2.3 The Failed Case After Training .......................................................... 136

5.4 Limitations ............................................................................................................................. 138

5.4.1 Palm Rotation ........................................................................................................ 138

5.4.2 Motion .................................................................................................................... 140

5.5 Summary ................................................................................................................................ 140

Chapter 6: Conclusion .......................................................................................... 142

6.1 Summary ................................................................................................................................ 142

6.2 Contributions ......................................................................................................................... 144

6.3 Further Development ........................................................................................................... 145

Appendix A.............................................................................................................. 148

Appendix B .............................................................................................................. 149

Appendix C .............................................................................................................. 151

Bibliography............................................................................................................ 169

xiv

Abbreviations

ASL American Sign Language

Auslan AUstralian Sign LANguage

CMC CarpoMetaCarpal

DH Denavit-Hartenberg

DIP Distal InterPhalangeal

FAM Fuzzy Associative Memory

HMM Hidden Markov Model

HMU Hand Motion Understanding

HST Hand Sign Translator

IP InterPhalangeal

MCP MetaCarpoPhalangeal

PIP Proximal InterPhalangeal

RAL Rule Activation Level

VR Virtual Reality

1

Chapter 1

Introduction

1.1 Background

Deaf people in Australia communicate with one another by using a sign

language called Auslan. Signers use a combination of hand movements that

change in shape and location relative to the upper body, and facial

expressions. Auslan is different from American Sign Language (ASL) or any

other, though it is related to British Sign Language. As is the case in other

countries, Auslan has rules of context and grammar that are separable from

the spoken language of the community, in this case, English. For example,

one-to-one mappings of English words and signs are not always possible, and

the rules for sentence formation are also different (MacDougall 1988). In order

to give deaf children access to the "grammar" of English, deaf educators in

Australia have developed a standardised Sign System, called Signed English

(Jeanes et al. 1989) which represents a manual interpretation of English by

using the exact syntactic and semantic correspondence between English words

and signs. Signs used in Signed English are adapted mostly from Auslan, as

well as from other sign languages such as Gestuno, which is an international

2

sign system developed under the auspices of the United Nations, ASL and

British sign language (Jeanes et al. 1981).

Despite the development of sign languages and the effort to educate the deaf

community to master the written form of spoken language, there is still a vast

communication barrier between the deaf and aurally unaffected people, the

majority of whom do not know sign language. Thus, there is a need for a

communication bridge and a means whereby unaffected people can efficiently

learn sign language.

An automated communication system, or an automated sign language

learning device for unaffected people, may be an ideal solution to benefit both

deaf and unaffected people of the community. Whilst automated

communication systems may not perform all aspects of translation, such as the

semantic interpretation of the signs, some mapping between signs and

letters/words/sentences could provide an adequate translation for certain

formal interactions (for example, legal proceedings, or conferences) and

informal ones (for example, restaurants). It could also be useful in emergency

situations such as at hospitals or in police stations, where urgent information

could be conveyed without having to wait for a human interpreter, and where

written communication is either too slow or otherwise inappropriate.

A prototype of the Hand Sign Translator (HST) has been previously developed

(Holden & Roy 1991; Holden & Roy 1992A; Holden & Roy 1992B). The HST

system translates English sentences into Signed English by animating a two-

handed movement using computer graphics. It uses a human movement

3

animation technique where the hand shapes and their motion are generated by

the computer. The prototype has a tutorial interface where an unaffected

person can learn Signed English. The interface provides a user with the skills

for translating English into Signed English, and allows the user to enter

English sentences and request the system to demonstrate the signs. It also

provides a limited means to test a user's reverse translation ability, where the

user is requested to answer multiple choice questions. As a learning device,

however, it fails to observe the progress of the learner's signing skills.

Moreover, as a communication tool, the HST only provides one-way

communication and fails to give the recognition of feedback.

To achieve two-way communication, a system that translates signs into

English needs to be developed. A complete sign language recognition system

would inevitably require an ability to recognise the motion of the whole upper

body, as well as the facial expression, both of which form an integral part in

understanding Auslan. However, the objective of this current research is to

provide an initial step towards this goal by researching and developing a

framework for the Hand Motion Understanding (HMU) system, a visual hand

motion recognition system that understands one-handed Auslan signs.

1.2 Recognition of Gestures

Sign language signs are a subset of gestures where either a static hand posture

or a dynamic hand motion imply a meaning. A sign has a specific semantic

meaning, whilst a gesture may represent just a configuration. Throughout this

thesis, a hand posture refers to a 3-D hand configuration, whereas a hand

shape is the projected silhouette of the hand posture onto an image plane.

4

Therefore, a static gesture may be recognised by a hand posture only, while a

dynamic gesture is recognised by 3-D hand motion that consists of the

changes of hand postures and 3-D hand locations during the gesture.

In recent years, automatic recognition of static and dynamic hand gestures has

been an active area of research in various fields from sign language

recognition (Tamura & Kawasaki 1988; Murakami & Takuchi 1991; Starner &

Pentland 1995; Uras & Verri 1995) to human-computer interaction applications

where a set of specific gestures are used as a tool for users to communicate

with the computer (Hunter et al. 1995; Freeman & Roth 1995; Darrell &

Pentland 1993).

There are two technologies on which the gesture recognition systems are

based: Virtual Reality (VR) technology, and computer vision technology.

VR glove-based gesture recognition systems require a user to wear a VR glove

(Eglowstein 1990) to perform gestures. The glove produces a 3-D hand motion

sequence that is a sequence of 3-D hand configuration sets each containing

finger orientation angles. VR glove-based systems (Murakami & Taguchi 1991;

Fels & Hinton 1993; Vamplew & Adams 1995) use various structures of neural

networks to recognise 3-D motion data as gestures.

On the other hand, vision-based systems use hand images that are captured by

using a single or multiple cameras. They extract some 2-D characteristic

descriptors of hand shapes or motion (that represent the changes of hand

shapes as well as hand trajectory), which are then matched with stored hand

5

gestures. A classical classification technique such as the κ -nearest neighbour

rule is used to recognise both the static gestures (Uras & Verri 1995; Hunter et

al. 1995) and dynamic gestures (Tamura & Kawasaki 1988). Alternatively,

Wilson and Anspach (1993) use neural networks to classify the characteristic

shape descriptors as static gestures. Among the systems that recognise a

sequence of gestures, Davis and Shah (1994) use a finite state machine to

segment a sequence of 2-D characteristic hand shape descriptors that are

extracted from an image sequence in their vision-based gesture recognition

system. The ending of each gesture is indicated by a specific hand motion.

The system developed by Starner and Pentland (1995), however, recognises a

sequence of ASL signs without any indicator that separates signs. They

segment a sequence of coarse 2-D characteristic motion descriptors appearing

in the image by using Hidden Markov Models (HMMs).

The existing vision-based systems extract and classify 2-D hand shape

information, usually from images from a single viewpoint, in order to

recognise gestures. The representation of 3-D hand postures or 3-D motion by

using 2-D characteristic descriptors from a single viewpoint, has its inherent

limitations. As the hand posture rotates in 3-D, the hand shape appearing in

the image from the same viewpoint may change significantly. The sign

recognition systems that relies only on the 2-D shape information makes an

assumption that each posture must face the camera at a certain angle. This

assumption is unrealistic in sign motion recognition because the angle that is

presented to the camera may vary amongst signers and depend on the

preceding and following movement.

6

Even though the use of a VR glove does not presume the unrealistic

assumption mentioned above and avoids computationally expensive image

processing, a reliable VR glove is costly. VR glove users also report that the

wires which are placed on the glove to detect the hand configuration are very

sensitive to even a slight pressure. This may cause problems when performing

signs that involve movements such as crossing fingers, or one finger touching

others.

The idea of a vision-based sign recognition system that uses 3-D hand

configuration data was previously suggested by Dorner (1994A). She has

developed a general hand tracker that extracts 26 degrees-of-freedom of a

single hand configuration from the visual input as a first step towards an ASL

sign recognition system. It is generally accepted that the physiologically

possible hand movement uses 26 degrees-of-freedom, being 6 degrees-of-

freedom for translations and rotations of the wrist, plus 4 degrees-of-freedom

for rotations of each finger and thumb. Regh & Kanada (1995) have also

developed a hand tracker that extracts 27 degrees-of-freedom (an additional

degree-of-freedom is added to the thumb) of a single hand configuration.

These trackers, however, do not allow for occlusion, and thus the tracking

capability of a meaningful gesture sequence has not been tested.

1.3 The Approach

This thesis presents the Hand Motion Understanding (HMU) system, a vision-

based sign recognition system that extracts and classifies 3-D hand

configuration data from images taken from a single viewpoint, in order to

understand static and dynamic hand signs. The system recognises "fine grain"

7

hand motion, such as configuration changes of fingers, by using a combination

of a robust 3-D hand tracker and a fuzzy expert classifier.

A signer wears a colour-coded glove and performs the sign commencing from

a specified hand posture, then proceeds to a static or dynamic sign. An

example where the hand performs a dynamic sign by starting from the

specified hand posture is shown in Figure 1.

posture_flat0Specified initial hand posture Dynamic sign

sign_good_animal

Figure 1: Specified initial hand posture followed by a dynamic sign.

A colour image sequence that is captured through a single video camera is

used as input. The system is able to determine 3-D hand postures from the

input and recognise them as a sign. The HMU system consists of two main

components:

• The 3-D model-based hand tracker that extracts a 3-D hand motion

sequence (each frame containing a set of hand kinematic configuration

data) from the visual input;

• The classification module that recognises the 3-D motion sequence as a

sign by using an adaptive fuzzy expert system.

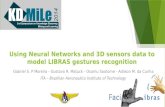

The structure of the HMU system is shown in Figure 2.

8

3D MODEL BASED TRACKER

3D Model

CLASSIFIER

AUSLANhand posturerule base

Hand Posture Classifierusing a Fuzzy Expert System

High Level Motion Analyser

Sign rule base

Hand Sign Classifierusing a Fuzzy Expert System

SIGN with a decision confidence

sign knowledge representationstarting posture - motion- ending posture

. . .

modelstateestimate

posture "spread"

Figure 2: Structure of the HMU system.

9

1.3.1 Motion Sensing Through a 3-D Hand Tracker

The HMU hand tracker determines 3-D hand configurations appearing in the

visual input, by using a 3-D model-based object tracking technique. The

tracker employs a hand model that consists of 21 degrees-of-freedom

parameters, being 6 translation and rotation parameters of the wrist, plus 3

rotation parameters for each finger and the thumb (one less parameter for each

of the five fingers than the full 26 degrees-of-freedom hand model).

Throughout the tracking process, these 21 model parameters are incrementally

corrected to fit the postures captured in the images.

3-D model-based tracking has been previously used by other 3-D hand

trackers in recovering full degrees-of-freedom of the hand (Dorner 1994A;

Regh and Kanada 1995). Given a 3-D model and its initial configuration, the

2-D image features and the 2-D projection of the model are compared in order

to re-configure the 3-D model to fit the posture captured in the image. The

HMU tracker uses a robust and efficient model fitting algorithm previously

developed by Lowe (1991). Lowe's general algorithm is especially designed

for 3-D model-based tracking, and has not been previously adapted for

tracking hand movement with an extensive number of degrees-of-freedom.

The HMU tracker allows for occlusions to a certain degree by employing a

prediction algorithm.

1.3.2 Classification of a 3-D motion sequence

The classification of the 3-D motion data is performed by using a novel

classification technique that uses an adaptive fuzzy expert system. The 3-D

10

motion sequence is classified as a sign by firstly recognising key postures,

namely Auslan basic hand postures for each frame; secondly by analysing the

changes of postures to determine the starting and ending postures of the

sequence as well as the motion that occurred in between; and thirdly, by

recognising them as a sign.

Both the posture and sign recognition use the same fuzzy inference engine.

Fuzzy set theory allows the system to express the sign and posture knowledge

in natural and imprecise descriptions. It also caters for the slight errors caused

by the tracker or the slight variations exhibited amongst the signers. The

performance of fuzzy inference is further improved by employing an adaptive

engine that enables the defined fuzzy sets to be adaptive to the tracker errors

and motion variations occurring in real 3-D motion sequence produced by the

tracker.

1.3.3 Platform

The HMU system has been developed on a Macintosh Quadra800 using the C

programming language with the CodeWarrior compiler. A Raster Ops video

card was installed in the Macintosh, allowing a movie sequence of one-handed

movement to be captured by a single camera (Sony Hi8 video camera) under

normal office lighting.

The prototype of the HMU system is aimed to demonstrate a framework of

gesture recognition, and with the given hardware, it was not possible to

achieve real-time performance. The system uses a sequence of images, which

is a QuickTime movie previously captured by the video camera.

11

1.4 Contributions

The thesis has made the following contributions:

• I have developed a robust and effective hand tracker that recovers the

21 degrees-of-freedom of the hand by adapting a general 3-D model

based tracking algorithm which was developed by Lowe (1991). Even

though the hand tracker is developed for sign motion understanding, it is

a general hand tracker.

• The tracker allows for some degree of occlusion of fingers by

employing a prediction algorithm. To my knowledge, solving the

problem of occlusions in a hand modelled with extensive degrees-of-

freedom had not been previously attempted by any other 3-D hand

trackers.

• I have developed a novel classification technique to classify a 3-D hand

motion sequence into a sign. This technique uses a fuzzy expert system

that has not been previously used in sign language recognition.

• The fuzzy expert system employs an adaptive engine in order to

improve its recognition performance according to the accuracy of

tracking results and the movement variations among the participating

signers.

The system has been evaluated with 22 static and dynamic signs, and

successfully recognised 20 signs before training, and 21 signs after training.

The results show that the tracker computes the movement data with an

accuracy that is sufficient for effective classification. The HMU system is a

pioneer sign recognition system that uses the combination of 3-D hand

tracking and an adaptive fuzzy expert system.

12

1.5 Layout of the Thesis

This thesis consists of the following chapters:

Chapter 2 reviews the related literature of gesture recognition by examining

various motion sensing and classification techniques. These techniques are

referred to throughout the thesis, and those that have been seminal in the

development of this thesis are identified.

Chapter 3 illustrates the 3-D hand tracker. It consists of

• discussions on the techniques used for hand tracking, as well as the

comparisons between the HMU tracker and the other 3-D hand trackers

previously introduced in Chapter 2;

• a description of the hand model, the features that represent the model

in the images, and how the state of the hand model is updated to closely

fit the features appearing in the image; and

• a summary of the implemented tracking process.

Chapter 4 describes the classification process through a fuzzy expert system.

It consists of

• discussions on the techniques used in the classification process, and

how this process compares with other classification techniques

previously explained in Chapter 2;

• an explanation of the knowledge representations of postures and signs

that are used as inference rules for the classification of the postures and

signs;

• a detailed explanation of the classification process; and

• a summary of the classification technique.

13

Chapter 5 explains the experimental results including the evaluation details,

discussions on the results, the limitations that are found during the

experiment.

Chapter 6 concludes the thesis with contributions and discussions on the

future development.

14

Chapter 2

Literature Review

In the physiological sense, the hand is probably the most complex mechanism

in the human body, consisting of many small bones jointed to perform high

dexterity movement. While humans can recognise gestures in a seemingly

effortless fashion, the machine recognition requires two distinct tasks: motion

sensing that produces the information that represents the motion, and

classification that classifies the motion sensing information into a gesture. The

choice of motion sensing and classification techniques used in existing gesture

recognition systems depends on the complexity of the gestures a system aims

to recognise. Motion sensing processes vary from extracting some 2-D shape

invariants of the hand posture appearing in the image (Uras & Verri 1995;

Hunter et al. 1995; Wilson & Anspach 1993), or 2-D motion information that

describes the hand shape changes and the trajectory appearing the images

(Tamura & Kawasaki 1988) to extracting full degrees-of-freedom 3-D hand

configurations (Murakami & Taguchi 1991; Fels & Hinton 1993). For the

classification of 2-D hand shapes, a classical nearest neighbour classification

algorithm (Hunter et al. 1995; Uras & Verri 1995) seems to be adequate. The

motion classification, however, uses various techniques such as neural

networks (Murakami & Taguchi 1991), a finite state machine (Davis & Shah

15

1994), Fuzzy Associate Memory (FAM) (Ushida et al. 1994) or Hidden Markov

Models (HMMs) (Starner & Pentland 1995).

In this chapter, the related literature on existing gesture recognition systems is

described in detail. Firstly, research on the human perception of biological

motion is discussed and then the existing hand gesture recognition systems are

categorised by complexity of the gestures they aim to recognise.

2.1 Chapter Overview

This chapter consists of the following sections:

• Section 2.2 reports the psychological study on human perception of

biological motion.

• Section 2.3 reviews vision-based recognition systems that recognise 2-D

hand shapes.

• Section 2.4 reviews vision-based recognition systems that recognise 2-D

hand motion.

• Section 2.5 reports on 3-D hand motion understanding systems based

on a VR technology.

• Section 2.6 introduces the 3-D hand motion sensing techniques that are

used in the existing hand trackers.

• Section 2.7 summarises the techniques used in the existing gesture

recognition systems.

• Finally, section 2.8 introduces the techniques used in the HMU system.

16

2.2 Human Perception of Biological Motion

Johansson (1973) has performed an experiment on the human perception of

biological motion, specifically the perception of human locomotion. He stated

that in everyday perception, visual information from biological motion and

from the corresponding figurative contour patterns, that is, the shape of the

body, are intermingled. The experiment was conducted to study the

information from the motion pattern without interference from the pictorial

information. Small patches of retro-reflective tape ("reflex patches") were

attached to the main joints (shoulders, elbows, wrists, hip, knees, and ankles)

of the assistant actor. The actor was flooded by the light from search lights

(1000-4000W) that were mounted very close to the lens of the TV camera. The

movements of the actor were recorded, and when the recording was displayed

by using the brightness control on TV, only the reflex patches were shown on

the display. The result shows that the display of those joint positions evoke a

compelling impression of human walking. Johansson also adds that when the

figure remains stationary, the set of joint positions are never interpreted as

representing a human body. The experimental outcome that 10 joint points

moving simultaneously on a screen in a rather irregular way give such a vivid

and definite impression of human walking, raises an interesting question: Is

the perceptual grouping of a human Gestalt determined by a recognition of the

walking pattern, or is this recognition dependent on a spontaneous grouping

in consequence of some general principles for grouping in visual motion

perception? Johansson believes that definite grouping is determined by

general perceptual principles, but that the vividness of the percept is a

consequence of prior learning.

17

Johansson's technique of using point-light display of joint movement as a tool

for isolating information in motion patterns from information in form patterns

has been used in many other experiments. Kozlowski and Cutting (1977), for

example, used the technique to recognise the gender of a walker, and with

more relevance to the current research, Poizner et al. (1981) used it in the study

of American Sign Language (ASL) perception. Poizner et al. use the placement

of nine point lights (namely head, left and right shoulders, the index fingers of

the left and right hand, and wrists and elbows of the left and right arm) on a

signer in a darkened room, and taped the movement on video. Following this,

other signers were asked whether they could recognise the signs on the video

tape. The results show that they could accurately match lexical and

inflectional movement presented in dynamic point-light displays presented in

the video tape. Furthermore, the signers could identify signs of a constant

hand configuration and ASL inflections presented in the point-light display.

The experimental outcome that the signs were identified almost as well when

presented in two-dimensional images as when presented in three, reflects, in

part, the information that moving dots carry about depth. Their investigation

on the information-carrying components within this point-light display found

that the more distal the joint, the more information its movement carries for

sign identification. Therefore, the movement of the fingertips is found to be

necessary for sign identification.

Results from the above experiments show the importance of motion patterns

of the physical joints in human perception of biological motion. An

independently moving a set of points is recognised as a particular figure

(especially in Poizner's experiment) as well as its movement being recognised.

This means that humans are not only able to group set of points as form

18

information by connecting points as a figure, but also to recognise the motion

using prior experiences (Johansson 1973).

In machine recognition of hand gestures, various methods of recognition

processes have been investigated by various researchers. Some recognition

systems are designed just to recognise hand postures, and others are extended

to recognise the hand motion. These systems vary in the types of information

that are extracted from the movement, and also in their classification

techniques.

2.3 Hand Shape Recognition

There are gesture recognition systems that are designed specifically to

recognise hand shapes. The appearance of a hand posture in an image

changes as the hand rotates, and various techniques are used to describe the

shape of the hand in order to enhance the recognition performance.

Uras and Verri (1995) have developed a system that recognises 25 hand shapes

that represent the ASL alphabet (excluding "Z"). Their system uses size

functions that encode the topological and geometric information of the hand

shape, for example the distance between the centre of mass of the hand

contour points and some important contour points. Each shape is represented

by a description vector that is based on size functions. Uras and Verri extract

description vectors from the images that capture hand postures. A training set

of description vectors is built from real images and the κ -nearest neighbour

rule is employed for the classification. Their evaluation shows that if the

training and the test sets refer to the same subject, the recognition rate is about

19

80%. With the implementation of the rejection rule (an input description

vector is classified only if the three nearest neighbours identify the same sign),

they achieve nearly 99% accuracy with a 20% rejection rate.

A rather limited case of a hand shape recognition system has been developed

specifically for a human-computer interface application by Hunter et al. (1995).

This system extracts descriptors, based on Zernike moments (that is, a rotation

invariant descriptor), from the hand images, and these descriptors are then

classified using a nearest class-mean classifier. Their technique is suitable for

the small, distinct 6 hand shape vocabulary which they use. After training

with 720 images, they achieve a 95% recognition rate from 738 test images.

There also exist other systems that use various shape estimation descriptors,

which are suitable for recognising a set of postures specific to their

applications. For example, Freeman and Roth (1995) extracted descriptors

based on the centre of mass and circularity of hand shape, from colour images,

in order to recognise 6 hand shapes for their real-time man machine interaction

system.

An alternative to explicit pattern matching is to use a neural network, where

the matching is done implicitly. Wilson and Anspach (1993) classified video

images of hand shapes into their linguistic counterpart in ASL. The video

images were preprocessed to yield Fourier descriptors which encode the shape

of the hand silhouette. These descriptors were then used as input into the

neural network that classifies signs. Classification is performed for 36 hand

20

shapes and it achieves 78% accuracy. This shape recognition process is

developed as a potential algorithm for their sign motion recognition system.

As it is important to recognise hand shapes, an understanding of hand motion

is an integral part of gesture recognition.

2.4 Motion Understanding Using Two-Dimensional Information

An early image processing system by Tamura and Kawasaki (1988)

demonstrates the recognition of Japanese sign language signs based on

matching sets of information, called cheremes, which consist of hand shape,

movement and location. They used as input video image sequences in which a

signer commences each sign from a neutral start position and returns to the

neutral position after finishing the sign. From the images, the system extracts

the skin area of the right hand, as well as the face, which is used as a reference

point to determine the hand location and its movement direction. The system

finds the number of still frames (with such frames representing the pausing of

the hand movement) in the sequence. If one still is found, the sign is

determined to be a static sign, otherwise, if two or more stills are found, it is a

dynamic sign. For a static sign, the system extracted from the still frame the

shape of the right hand that is described by using a polygonal approximation

of the contour lines, and its location in reference to the upper body. But for a

dynamic sign, the system extracted the static hand sign information from both

of two still frames, one as the initial pose and the other as the final pose, as

well as the movement direction. Those shape and motion descriptors were

used to match stored signs in the dictionary. The experiment was made for 20

words and they achieved a correct recognition rate of 45%; in the remaining

21

55% of cases, the system found two matching words, one of which was the

correct one.

A similar technique was used by Charayaphan and Marble (1992, cited by

Dorner 1994A) in their sign recognition system. In order to recognise a sign,

the system used the initial and the final hand locations, and if necessary, the

shape of the hand trajectory which was calculated by tracking the hand in real

time.

More recently, Ushida et al. (1994) have developed a human motion

recognition system that uses a colour image tracking device to locate the

position of the hand, face and other parts of the human body, every 0.016

second. The system obtains the angle under the right arm appearing in each

image by using the position of the right hand and the right shoulder. The

change of this angle over time, allows the system to find the appearance of

three characteristic states: a stable state where the angle remains constant; a

mountain state where the angle increases then decreases; and a valley state

where the angle first decreases, then increases. These characteristic states in

the sequence were extracted and were directly used for classification. The

classification was performed by FAM where the specific transition patterns of

the characteristic states were used as rules. FAM is a kind of associative

memory network, consisting of several bidirectional associative memories.

Their fuzzy associative inference was driven by node activation propagation in

the associative memory. They represented a gesture fuzzy rule by using 3

layers where the input layer contains the nodes representing membership

functions of the condition (that is, the IF-part), the output layer contains the

conclusion (that is, the THEN-part), and the middle layer describes the

22

relationships between conditions and conclusions. In their real-time

experiment, the system recognised three basic tennis motions (forehand stroke,

backhand stroke, and smash) for unspecified people who were not involved in

training, with an average success rate of 84%. This proves that the technique is

independent of the person being measured and the speed of the motion.

The above-mentioned systems deal with a single sign which may contain

motion. When attempting to recognise a sequence of signs, however, the

difficulty lies with the segmentation problem, which needs to distinguish the

keyframes that provide clues for recognising the gesture, from the

intermediate frames that exist between the keyframes. This is similar to the

segmentation problem in natural language processing.

In approaching the segmentation problem, Starner and Pentland (1995) used

HMMs, which had previously been used successfully in speech recognition,

for the recognition of ASL sentences. They used a coarse description of hand

shape, orientation and trajectory, which was tracked in real time from input

images from a single colour camera. Their system is designed to recognise

sentences of the grammatical form "personal pronoun, verb, noun, adjective,

personal pronoun". Six personal pronouns, nine verbs, twenty nouns, and five

adjectives were included, making a total lexicon consisting of forty words.

They selected 494 sentences (using the chosen lexicon), and used 395 training

sentences as a training set and 99 independent sentences as a test set. When

they provided the recognisor with the rules of their grammar, that is the

known form of legitimate sentences, they achieved a 99.2% recognition rate.

Without the grammar, the recognition rate was 91.3%.

23

A finite state machine was employed by Davis and Shah (1994) to deal with

segmenting a motion sequence. They have developed a real-time system that

recognises a sequence of multiple gestures, where the signer is required to

wear a glove with markers on each finger tip. The system analysed a sequence

of binary images that represents a series of 7 signs, where each sign consists of

movement that starts from a specified initial hand posture (same for all signs)

and moves to a posture representing a static gesture. The system used a

tracking algorithm to find the motion trajectories of the finger tips, which were

then used by a finite state machine that guides the flow and recognition of

gestures. A finite state machine is designed by using four phases of generic

gesture: firstly keeping still in the starting posture; secondly, smoothly moving

fingers to a gesture position; thirdly, keeping the hand in the gesture position;

then fourthly, smoothly moving the fingers back to reach the starting posture.

Because of the nature of the finite state machine, the system does not need a

fixed number of frames which constitute the motion of a gesture, and the path

of the finger tips to the gesture position is irrelevant in recognition. Thus the

system does not require the time warping of the image sequence to match the

model. Gestures are represented as a list of vectors which indicate the

movement of finger tips from the initial posture to the gesture position, and

these are used to match the stored gesture vector models using table lookup

based on vector displacements. Ten sequences of over 200 frames (digitised at

4 Hz) were used for the evaluation. The result shows that for 8 sequences all

of 7 signs were recognised successfully; for one sequence it failed to recognise

one of the 7 signs; and for the other sequence, the system found errors in 3

signs.

24

The use of 2-D hand shape descriptors in dynamic hand gesture recognition

has its limitations. A hand posture may appear different amongst the gesture

images based on the hand rotation involved. Being aware of this limitation,

Darrell and Pentland (1993) represent a gesture with a set of view models.

Given a sequence of gesture images, a set of view models is automatically

constructed by tracking the hand using a normalized correlation score for each

image. Gestures are modelled as a set of view correlation scores over time,

and the input sequences are matched with the stored gestures by using

dynamic time warping. This method offers real-time performance by using

special hardware. The system was trained to recognise two gestures (waving

"hello" and waving "good-bye") for a particular user, and was tested for the

ability to recognise "hello" gesture from different users who performed the

gesture interleaved with three other gestures. The result shows the

recognition rate of 96%. The system was later extended (Darrell & Pentland

1995) in order to apply it to a video-based unconstrained interaction with

virtual environments. A view-based facial recognition process is implemented

in order to identify the user, which is used to find an index into the best set of

view templates to use for gesture recognition when multiple users are present.

Detailed gesture recognition performance after employing this extension was

not discussed in the paper.

While the vision-based gesture recognition systems described so far, rely on

2-D information captured in the images to recognise gestures, VR glove-based

gesture recognition systems use 3-D kinematic configuration data to recognise

motion gestures.

25

2.5 Three-Dimensional Motion Understanding Using VR

Technology

The most accessible way to extract the 3-D hand configuration data may be

through a VR glove that mechanically senses the hand configuration and then

directly transmits the data to the computer (Eglowstein 1990).

The VR glove has been used in many gesture systems in recent years

(Murakami & Taguchi 1991; Fels & Hinton 1993; Vaanaanen & Bohm 1994;

Vamplew & Adams 1995). The researchers extract sequences of kinematic

hand configuration data (such as finger joint angles, and wrist rotations) from

a VR glove, and classify the movement sequence as discrete hand signs using

various designs of neural networks.

The system devised by Fels and Hinton is restricted to static hand signs, with

forward or backwards movement in one of six directions indicating the word

ending. The word segmentation is obtained by monitoring hand accelerations.

Their system classified 66 words with up to 6 different endings each, giving a

total vocabulary of 203 words. They returned errors in 1% of cases and failed

to return any word in a further 5% of cases.

Murakami and Taguchi (1991) have developed a gesture recognition system

that extracts hand configuration data from a Data Glove, and uses recurrent

neural networks in order to dynamically process the hand motion. The system

was tested with 10 motion signs from the Japanese sign language, with the

objective to recognise both hand shape and motion in the signs. During the

evaluation, they achieved an accuracy rate of 96%.

26

Recurrent neural networks were also used by Vamplew and Adams (1995) in

their gesture recognition system. They used a CyberGlove equipped with a

Polhemus sensor for measuring the location and orientation of the hand.

Three different users participated in data collection, where each user executed

several example signs, each of sixteen different motions. 560 data sets were

used to train the recurrent neural network and the remaining 320 sets were

used to test the system. The system achieved near 99% accuracy. Their paper

also proposed the possible usefulness of a thresholding technique for

segmentation.

An alternative method to the use of VR technology for extracting 3-D hand

configuration is offered by computer vision technology.

2.6 Three-Dimensional Motion Sensing Techniques

An attempt to recover 3-D information from 2-D images dates back to the

work of Roberts (1965, cited by Lowe 1991). Roberts' work concentrated on

segmentation, object recognition and the mathematical analysis required to

determine an object's three-dimensional position. Although Roberts' solution

methods to solve three-dimensional parameters were specialised to certain

classes of objects, such as rectangular blocks, his work emphasised the

importance of quantitative parameter determination for making vision robust

against missing and noisy data.

27

Since then, most work in the analysis of image sequences of moving objects

has been directed to the analysis of the two-dimensional movement of objects

(Martin & Aggarwal 1978). Finally, in 1980, an attempt to recover 3-D

information in object tracking was made. Roach and Aggarwal (1980)

experimented on finding the three dimensional model of points on an object's

surface as well as its movement (up to a scale factor) from a sequence of

images from multiple views. A technique for solving for viewpoint and model

parameters was independently developed by Lowe (1980, cited by Lowe 1991).

Later, Lowe (1991) presented an efficient and robust method for solving

projection and model parameters that best fit models with arbitrary curved

surfaces and any number of internal parameters to matched image features.

The model-based recognition used prior knowledge of the shape and

appearance of specific objects during the process of visual interpretation. This

link between perception and prior knowledge of the component of the scene

allowed the system to make inferences about the scene that went beyond what

was explicitly available from the image.

A summary of Lowe's approach is as follows: Given a 3-D model to be

tracked, the system extracts relevant features from the image frames. The

system then performs an optimisation loop that consists of calculating the

model's 2-D projection, and comparing the model's projection and image

features in order to calculate a correction of the model's 3-D pose. The

locations of projected model features in an image are a nonlinear function of

the viewpoint and model parameters (translational and rotational). Therefore

the solution is based on Newton's method of linearization and iteration to

perform a least-squares minimisation.

28

The Newton style nonlinear minimisation technique often used in general

motion tracking techniques (Gennery 1992; Kumar et al. 1989), has been

applied to the field of hand tracking by a number of researchers, as detailed

below.

2.6.1 Three-Dimensional Model-Based Hand Tracking

Vaillant and Darmon (1995) have developed a system that tracks hand

movement using a 3-D hand model with 4 degrees-of-freedom, being one

rotation parameter for each of the thumb, index, fourth and last fingers. The

simplified hand model almost treats the hand as a rigid object, by assuming

the user keeps the hand open and the fingers straight. The system analyses an

image sequence from a single camera, and the user is not requested to wear

any glove. Thus the bare hand is segmented from the image and points of

interests (that is, the points of the contour which are extrema of the curvature

such as finger tips) are extracted. The Kalman-filter-based tracking method

was used to trace the changes of feature locations. They demonstrated how 4

degrees-of-freedom parameters are estimated using a Newton-style iterative

model fitting algorithm.

Dorner (1993; 1994A) used the 3-D model-based tracking approach to recover

all 26 degrees-of-freedom hand parameters. The user was required to wear a

colour-coded glove, where finger joints and tips were marked with distinct 3-

ring markers. The system extracted the joint positions by detecting the

markers of relevant colour combinations from the images. This system follows

Lowe's work in using an optimisation approach, partially in his choice of

mathematical algorithm, which is an extension of Newton's algorithm.

Specifically, Dorner used a Quasi Newton algorithm (a NAG library routine)

29

for solving the nonlinear least-squares minimisation problem. The tracking

process also used a prediction of the hand model state, which was made by

analysing the movement over three previous frames. This system was

developed as a vision module for their ASL understanding system, but it does

not handle occlusions. They also suggested a parser that can be used for ASL

understanding (Dorner & Hagen 1994B), but it is yet to be implemented.

Regh and Kanade (1995), on the other hand, have developed a hand tracker

called DigitEyes that recovers the state of 27 degrees-of-freedom hand model

(one more degree-of-freedom is added to Dorner's thumb model) by using line

and point features extracted from images of unmarked, unadorned hands, and

taken from one or more viewpoints. The grey scale images are grabbed at

speeds of up to 10Hz. Their image features consists of finger link feature

vectors that represent the central axis of each finger segment (that are links

between one joint to another adjacent joint), and points representing finger

tips. Once the image features are extracted from images, the system calculates

the feature residuals (that are the Euclidean distances between the features and

the corresponding projected model points) for each line and tip in the model.

Then the state correction of the model is obtained by a modified Gauss-

Newton algorithm that minimises the feature residuals. This system however,

is limited to scenes without occlusion of fingers, or complicated backgrounds.

When image features are extracted, the projection of the previous estimated

model is used to hypothesise that the closest available feature is the correct

match. DigitEyes was applied to a 3-D mouse interface problem and

successfully demonstrated its functionality even though the gestures were

very limited, since occlusions had to be avoided in the movement.

30

2.7 Summary

This chapter introduced the existing gesture recognition systems, explaining

their motion sensing techniques, classification techniques, and recognition

performances.

The vision-based gesture recognition systems obviously prefer to use a 2-D

motion sensing technique that extracts the hand shape and trajectory

information, rather than a 3-D motion sensing technique that recovers the

changes of 3-D hand postures. This is because not only that 3-D motion

sensing is a complex and computationally expensive process, but also that the

difficulties in allowing finger occlusions in 3-D motion sensing lead to an

incapability to include a reasonable range of movement in a gesture

recognition system.

The 2-D motion sensing data are classified by using a variety of techniques. A

classical maximum likelihood classification method is used to recognise hand

shapes (Uras & Verri 1995; Hunter et al. 1995), or hand shapes as well as

trajectory in an earlier sign recognition system (Tamura & Kawasaki 1998).

Wilson and Anspach (1993), on the other hand, use neural networks to

recognise hand shapes.

There are three other interesting classification techniques published in recent

years. A FAM is used to classify 2-D human arm motion data into one of three

tennis strokes (Ushida et al. 1994). A finite state machine is used to recognise a

sequence of static signs with specified starting and ending postures (Davis and

Shah 1994). And HMMs are used to recognise a sequence of signs that are

31

performed in an order of the specified grammar in the ASL recognition system

developed by Starner and Pentland (1995). All of these systems achieve above

80% recognition rate.

VR glove-based systems extract 3-D motion data (that is a sequence of 3-D

hand configuration data) from a VR glove, which are then classified as

gestures using various types of neural networks. (Fels & Hinton 1993;

Murakami & Taguchi 1991; Vamplew & Adams 1995). These systems achieve

a very high recognition rate of above 90 %.

In the area of visual 3-D hand motion sensing, there exist hand trackers that

extract 3-D motion data. Dorner (1994A), and independently, Regh and

Kanade (1995) have developed hand trackers that recover full degrees-of-

freedom hand configuration parameters (26 parameters are used in Dorner's

tracker, and 27 parameters are used in DigitEyes) from the visual input. They

use a model-based motion tracking approach where the differences between

the hand image features and the projected features of the 3-D model state are

used to find parameter corrections for the hand model to fit the hand posture

appearing in the image.

2.8 Introduction to the HMU System

Previously in the field of gesture recognition, an attempt both to extract and

classify an extensive number of 3-D degrees-of-freedom of the hand from the

visual input has not been made, even though the technique for 3-D hand

tracking exists.

32

The tracking techniques used in the existing 3-D hand trackers (Dorner 1994A;

Regh & Kanada 1995) do not deal with occlusions from a single viewpoint,

greatly limiting the hand movement allowed in the system. In a sign language

system, it would be impossible to avoid occlusions in hand movement since

even basic hand movements such as closing and opening, pointing, etc. cause

the occlusion of fingers. Thus a hand tracker that is capable of handling

occlusions and robustly extracting 3-D data needs to be devised for sign

recognition. The HMU tracker achieves this goal, by using a robust and

efficient general tracking algorithm that was previously developed by Lowe

(1991), and by employing a prediction algorithm in order to handle limited

occlusions. The tracker uses similar but a slightly different optimisation

algorithm from those used by Dorner, or Regh and Kanada. The task of being

able to understand the complex finger movement that includes occlusions

occurring in the unmarked hand images from a single view is beyond the

scope of this project. The HMU tracker employs colour-coded glove that

enables a robust feature extraction.

For the classification of the 3-D motion sequence into signs, neural networks

are generally favoured over classical classification techniques such as

maximum likelihood estimation among the existing gesture recognition

systems. This is because the classical methods are restricted to the

morphology of the clusters to be separated; and in order to improve results,

they require preprocessing, such as cluster analysis. Neural networks, on the

other hand, can avoid expensive preprocessing because they are able to cluster

arbitrary density functions in feature space, simply by altering the number of

layers and neurons (Vaanaanen & Bohm 1994).

33

Hand signs are very well-defined gestures, where the motion of each sign is

explicitly understood by both the signer and the informed viewer. Neural

networks fail to capture this explicit information, encoding the classification

knowledge implicitly as a function of network behaviour. For this reason, the

classification of hand signs seems to be well-suited to the expert system

domain, where explicit sign knowledge can be formulated and represented. In

addition, such a setting makes it easy to modify existing sign knowledge or to

add new signs to the knowledge base. This is achieved by using an adaptive

fuzzy system. The closest gesture classification reported so far is the FAM

used by Ushida et al. (1994) in their use of fuzzy logic and inference rules to

represent the gestures. Both systems however, deal with different sizes and

complexities of inputs and possible output dimensions. Ushida’s classifier

uses 3 possible characteristic descriptions to represent 3 tennis strokes, and

classifies the changes of one 2-D angle in a sequence of images as a stroke. The

HMU classifier, on the other hand, uses 22 Auslan basic hand postures and

motion variables in order to represent 22 static and dynamic hand signs, and

classifies the changes of 21 3-D hand joint angles that are extracted from an

image sequence as a sign.

34

Chapter 3

A Vision-Based Three-DimensionalHand Tracker

Visual hand tracking is a sequential estimation problem where the time-

varying state of the hand is recovered by processing a sequence of images.

The HMU tracker consists of three basic components:

• the hand model that specifies a mapping from a hand state space, which

characterises all possible spatial configurations of the hand, to a feature

space that represent the hand in an image;

• feature measurement that extracts the necessary features from images;

and

• state estimation that calculates, by inverting the model, the state vector

of the model that best fits the measured features.

The aim of the tracking is to use the 2-D differences between the projected

features of the 3-D hand model and the measured features from the image to

calculate 3-D parameter corrections to the hand model in order to re-configure

the model to fit the posture that is captured in the image.

35

A hand exercises many degrees-of-freedom, which makes the tracking of hand

movement a difficult and complex task. In the HMU tracker, the following

considerations are made to ensure robust and efficient tracking:

• The simplified hand model

Estimating many parameters is computationally expensive. While a full

physiological capability of the hand makes use of 26 degrees-of-freedom that

consist of 6 rotation and translation parameters for the palm, and 4 rotation

parameters for each of the five fingers, the hand model used in the HMU system

has been reduced to 21 degrees-of-freedom (one parameter for each of the five

fingers is reduced from the 26 degrees-of-freedom model) without

compromising the information required to recognise the signs.

• Reliable and robust feature measurement

The HMU tracker uses the joint positions as features to represent the hand,

following many other human-motion tracking systems (Davis 1988; Long and

Yang 1991; Dorner 1994). As many degrees-of-freedom are exercised, the

hand's appearance is complicated, which causes some difficulty in locating the

features. The HMU tracker employs a colour-coded glove with joint markers

for a robust extraction of the features. Occlusion of the fingers or shadows are

generally the major causes of difficulty in finding features in the image. These

problems are dealt partially by using a prediction algorithm.

• Efficient and robust state estimation

Many degrees-of-freedom used in the hand model may introduce kinematic

singularities which arise when a change in a given state has no effect on the

image features. They cause a common inverse kinematic problem in Robotics

36

(Yoshikawa 1990, pp. 67-70), and thus require an effective stabilisation

technique in the state estimation process. Lowe’s state estimation algorithm is

adapted in the HMU tracker to deal with this problem by using stabilisation

and forcing convergence techniques (Lowe 1991).

3.1 Chapter Overview

The HMU tracker uses a hand model which represents a kinematic chain of

3-D hand configuration. The hand state encodes the orientation of the palm

(three rotation and three translation parameters) and the joint angles of fingers

(three rotation parameters for each finger and the thumb). On each image, the

hand state is mapped to a set of features that consists of the locations of the

wrist, and three joints for each of the five fingers.

Given the initial hand model state, tracking is achieved by making incremental

corrections to the model state throughout the sequence of images. Thus one

cycle of the corrections to the model is referred to as the state estimation and