The Epistemology of Measurement: A Model-Based Account

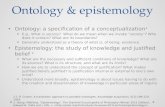

The Epistemology of Measurement:

A Model-Based Account

by

Eran Tal

A thesis submitted in conformity with the requirements

for the degree of Doctor of Philosophy

Graduate Department of Philosophy

University of Toronto

© Copyright by Eran Tal 2012

The Epistemology of Measurement: A Model-Based Account

Eran Tal, Doctor of Philosophy

Department of Philosophy, University of Toronto, 2012

Thesis abstract

Measurement is an indispensable part of physical science as well as of commerce,

industry, and daily life. Measuring activities appear unproblematic when performed with

familiar instruments such as thermometers and clocks, but a closer examination reveals a

host of epistemological questions, including:

1. How is it possible to tell whether an instrument measures the quantity it is

intended to?

2. What do claims to measurement accuracy amount to, and how might such

claims be justified?

3. When is disagreement among instruments a sign of error, and when does it

imply that instruments measure different quantities?

Currently, these questions are almost completely ignored by philosophers of science,

who view them as methodological concerns to be settled by scientists. This dissertation

shows that these questions are not only philosophically worthy, but that their exploration

has the potential to challenge fundamental assumptions in philosophy of science, including

the distinction between measurement and prediction.

The thesis outlines a model-based epistemology of physical measurement and uses it to

address the questions above. To measure, I argue, is to estimate the value of a parameter in

an idealized model of a physical process. Such estimation involves inference from the final

state (‘indication’) of a process to the value range of a parameter (‘outcome’) in light of

theoretical and statistical assumptions. Idealizations are necessary preconditions for the

possibility of justifying such inferences. Similarly, claims to accuracy, error and quantity

individuation can only be adjudicated against the background of an idealized representation

of the measurement process.

Chapters 1-3 develop this framework and use it to analyze the inferential structure of

standardization procedures performed by contemporary standardization bureaus.

Standardizing time, for example, is a matter of constructing idealized models of multiple

atomic clocks in a way that allows consistent estimates of duration to be inferred from clock

indications. Chapter 4 shows that calibration is a special sort of modeling activity, i.e. the

activity of constructing and testing models of measurement processes. Contrary to

contemporary philosophical views, the accuracy of measurement outcomes is properly

evaluated by comparing model predictions to each other, rather than by comparing

observations.

Acknowledgements

In the course of writing this dissertation I have benefited time and again from the

knowledge, advice and support of teachers, colleagues and friends. I am deeply indebted to

Margie Morrison for being everything a supervisor should be and more: generous with her

time and precise in her feedback, unfailingly responsive and relentlessly committed to my

success. I thank Ian Hacking for his constant encouragement, for never ceasing to challenge

me, and for teaching me to respect the science and scientists of whom I write. I owe many

thanks to Anjan Chakravartty, who commented on several early proposals and many sketchy

drafts; this thesis owes its clarity to his meticulous feedback. My teaching mentor, Jim

Brown, has been a constant source of friendly advice on all academic matters since my very

first day in Toronto, for which I am very grateful.

In addition to my formal advisors, I have been fortunate enough to meet faculty members in

other institutions who have taken an active interest in my work. I am grateful to Stephan

Hartmann for the three wonderful months I spent as a visiting researcher at Tilburg

University; to Allan Franklin for ongoing feedback and assistance during my visit to the

University of Colorado; to Paul Teller for insightful and detailed comments on virtually the

entire dissertation; and to Marcel Boumans, Wendy Parker, Léna Soler, Alfred Nordmann

and Leah McClimans for informal mentorship and fruitful research collaborations.

Many other colleagues and friends provided useful comments on this thesis at various stages

of writing, of whom I can only mention a few. I owe thanks to Giora Hon, Paul Humphreys,

Michela Massimi, Luca Mari, Carlo Martini, Ave Mets, Boaz Miller, Mary Morgan, Thomas

Müller, John Norton, Isaac Record, Jan Sprenger, Jacob Stegenga, Jonathan Weisberg,

Michael Weisberg, Eric Winsberg, and Jim Woodward, among many others.

I am especially thankful to Hasok Chang for writing a thoughtful and detailed appraisal of

this dissertation, and to Joseph Berkovitz and Denis Walsh for serving on my examination

committee.

The work presented here depended on numerous physicists who were kind enough to meet

with me, show me around their labs and answer my often naive questions. I am grateful to

members of the Time and Frequency Division at the US National Institute of Standards and

Technology (NIST) and JILA labs in Boulder, Colorado for their helpful cooperation. The

long hours I spent in conversation with Judah Levine introduced me to the fascinating world

of atomic clocks and ultimately gave rise to the central case studies reported in this thesis.

David Wineland’s invitation to visit the laboratories of the Ion Storage Group at NIST in

summer 2009 resulted in a wealth of materials for this dissertation. I am also indebted to

Eric Cornell, Till Rosenband, Scott Diddams, Tom Parker and Tom Heavner for their time

and patience in answering my questions. Special thanks go to Chris Ellenor and Rockson

Chang, who, as graduate students in Aephraim Steinberg’s laboratory in Toronto, spent

countless hours explaining to me the technicalities of Bose-Einstein Condensation.

My research for this dissertation was supported by several grants, including three Ontario

Graduate Scholarships, a Chancellor Jackman Graduate Fellowship in the Humanities, a

School of Graduate Studies Travel Grant (the latter two from the University of Toronto),

and a Junior Visiting Fellowship at Tilburg University.

I am indebted to Gideon Freudenthal, my MA thesis supervisor, whose enthusiasm for

teaching and attention to detail inspired me to pursue a career in philosophy.

My mother, Ruth Tal, has been extremely supportive and encouraging throughout my

graduate studies. I deeply thank her for enduring my infrequent visits home and the

occasional cold Toronto winter.

Finally, to my partner, Cheryl Dipede, for suffering through my long hours of study with

only support and love, and for obligingly jumping into the unknown with me, thanks for

being you.

Table of Contents

Introduction
  1. Measurement and knowledge
  2. The epistemology of measurement
  3. Three epistemological problems
    The problem of coordination
    The problem of accuracy
    The problem of quantity individuation
    Epistemic entanglement
  4. The challenge from practice
  5. The model-based account
  6. Methodology
  7. Plan of thesis

1. How Accurate is the Standard Second?
  1.1. Introduction
  1.2. Five notions of measurement accuracy
  1.3. The multiple realizability of unit definitions
  1.4. Uncertainty and de-idealization
  1.5. A robustness condition for accuracy
  1.6. Future definitions of the second
  1.7. Implications and conclusions

2. Systematic Error and the Problem of Quantity Individuation
  2.1. Introduction
  2.2. The problem of quantity individuation
    2.2.1. Agreement and error
    2.2.2. The model-relativity of systematic error
    2.2.3. Establishing agreement: a threefold condition
    2.2.4. Underdetermination
    2.2.5. Conceptual vs. practical consequences
  2.3. The shortcomings of foundationalism
    2.3.1. Bridgman’s operationalism
    2.3.2. Ellis’ conventionalism
    2.3.3. Representational Theory of Measurement
  2.4. A model-based account of measurement
    2.4.1. General outline
    2.4.2. Conceptual quantity individuation
    2.4.3. Practical quantity individuation
  2.5. Conclusion: error as a conceptual tool

3. Making Time: A Study in the Epistemology of Standardization
  3.1. Introduction
  3.2. Making time universal
    3.2.1. Stability and accuracy
    3.2.2. A plethora of clocks
    3.2.3. Bootstrapping reliability
    3.2.4. Divergent standards
    3.2.5. The leap second
  3.3. The two faces of stability
    3.3.1. An explanatory challenge
    3.3.2. Conventionalist explanations
    3.3.3. Constructivist explanations
  3.4. Models and coordination
    3.4.1. A third alternative
    3.4.2. Mediation, legislation, and models
    3.4.3. Coordinative freedom
  3.5. Conclusions

4. Calibration: Modeling the Measurement Process
  4.1. Introduction
  4.2. The products of calibration
    4.2.1. Metrological definition
    4.2.2. Indications vs. outcomes
    4.2.3. Forward and backward calibration functions
  4.3. Black-box calibration
  4.4. White-box calibration
    4.4.1. Model construction
    4.4.2. Uncertainty estimation
    4.4.3. Projection
    4.4.4. Predictability, not just correlation
  4.5. The role of standards in calibration
    4.5.1. Why standards?
    4.5.2. Two-way white-box calibration
    4.5.3. Calibration without metrological standards
    4.5.4. A global perspective
  4.6. From predictive uncertainty to measurement accuracy
  4.7. Conclusions

Epilogue

Bibliography

List of Tables

Table 1.1: Comparison of uncertainty budgets of aluminum (Al) and mercury (Hg) optical atomic clocks

Table 3.1: Excerpt from Circular-T

Table 4.1: Uncertainty budget for a torsion pendulum measurement of G, the Newtonian gravitational constant

Table 4.2: Type-B uncertainty budget for NIST-F1, the US primary frequency standard

List of Figures

Figure 3.1: A simplified hierarchy of approximations among model parameters in contemporary timekeeping

Figure 4.1: Modules and parameters involved in a white-box calibration of a simple caliper

Figure 4.2: A simplified diagram of a round-robin calibration scheme

The Epistemology of Measurement: A Model-Based Account

Introduction

I often say that when you can measure what you are speaking about and express

it in numbers you know something about it; but when you cannot measure it,

when you cannot express it in numbers, your knowledge is of a meagre and

unsatisfactory kind […].

– William Thomson, Lord Kelvin (1891, 80)

1. Measurement and knowledge

Measurement is commonly seen as a privileged source of scientific knowledge. Unlike

qualitative observation, measurement enables the expression of empirical claims in

mathematical form and hence makes possible an exact description of nature. Lord Kelvin’s

famous remark expresses high esteem for measurement for this same reason. Today, in an

age when thermometers and ammeters produce stable measurement outcomes on familiar

scales, Kelvin’s remark may seem superfluous. How else could one gain reliable knowledge

of temperature and electric current other than through measurement? But the quantities

called ‘temperature’ and ‘current’ as well as the instruments that measure them have long

histories during which it was far from clear what was being measured and how – histories in

which Kelvin himself played important roles¹.

These early struggles to find principled relations between the indications of material

instruments and values of abstract quantities illustrate the dual nature of measurement. On

the one hand, measurement involves the design, execution and observation of a concrete

physical process. On the other hand, the outcome of a measurement is a knowledge claim

formulated in terms of some abstract and universal concept – e.g. mass, current, length or

duration. How, and under what conditions, are such knowledge claims warranted on the

basis of material operations?

Answering this last question is crucial to understanding how measurement produces

knowledge. And yet contemporary philosophy of measurement offers little by way of an

answer. Epistemological concerns about measurement were briefly popular in the 1920s

(Campbell 1920, Bridgman 1927, Reichenbach [1927] 1958) and again in the 1960s (Carnap

[1966] 1995, Ellis 1966), but have otherwise remained in the background of philosophical

discussion. Until less than a decade ago, the philosophical literature on measurement focused

on either the metaphysics of quantities (Swoyer 1987, Michell 1994) or the mathematical

structure of measurement scales. The Representational Theory of Measurement (Krantz et al.

1971), for example, confined itself to a discussion of structural mappings between empirical

and quantitative domains and neglected the possibility of telling what, and how accurately,

such mappings measure. It is only in the last several years that a new wave of philosophical

writings about the epistemology of measurement has appeared (most notably Chang 2004,

Boumans 2006, 2007 and van Fraassen 2008, Ch. 5-7). Partly drawing on these recent

achievements, this thesis will offer a novel systematic account of the ways in which

measurement produces knowledge.

¹ See Chang (2004, 173-186) and Gooday (2004, 2-9).

2. The epistemology of measurement

The epistemology of measurement, as envisioned in this dissertation, is a subfield of

philosophy concerned with the relationships between measurement and knowledge. Central

topics that fall under its purview are the conditions under which measurement produces

knowledge; the content, scope, justification and limits of such knowledge; the reasons why

particular methodologies of measurement and standardization succeed or fail in supporting

particular knowledge claims; and the relationships between measurement and other

knowledge-producing activities such as observation, theorizing, experimentation, modeling

and calculation. The pursuit of research into these topics is motivated not only by the need

to clarify the epistemic functions of measurement, but also by the prospects of contributing

to other areas of philosophical discussion concerning e.g. reliability, evidence, causality,

objectivity, representation and information.

As measurement is not exclusively a scientific activity – it plays vital roles in

engineering, medicine, commerce, public policy and everyday life – the epistemology of

measurement is not simply a specialized branch of philosophy of science. Instead, the

epistemology of measurement is a subfield of philosophy that draws on the tools and

concepts of traditional epistemology, philosophy of science, philosophy of language,

philosophy of technology and philosophy of mind, among other subfields. It is also a

multi-disciplinary subfield, ultimately engaging with measurement techniques from a variety of

disciplines as well as with the histories and sociologies of those disciplines.

The goal of providing a comprehensive epistemological theory of measurement is

beyond the scope of a single doctoral dissertation. This thesis is cautiously titled ‘account’

rather than ‘theory’ in order to signal a more modest intention: to argue for the plausibility

of a particular approach to the epistemology of measurement by demonstrating its strengths

in a specific domain. I call my approach ‘model-based’ because it tackles epistemological

challenges by appealing to abstract and idealized models of measurement processes. As I will

explain below, this thesis constitutes the first systematic attempt to bring insights from the

burgeoning literature on the philosophy of scientific modeling to bear on traditional

problems in the philosophy of measurement. The specific domain I will focus on is physical

metrology, officially defined as “the science of measurement and its application”². Metrologists

are the physicists and engineers who design and standardize measuring instruments for use

in scientific and commercial applications, and often work at standardization bureaus or

specially accredited laboratories.

The immediate aim of this dissertation, then, is to show that a model-based approach

to measurement successfully solves certain epistemological challenges in the domain of

physical metrology. By achieving this aim, a more far-reaching goal will also be

accomplished, namely, a demonstration of the importance of research into the epistemology

of measurement and of the promise held by model-based approaches for further research in

this area.

² JCGM 2008, 2.2.

The epistemological challenges addressed in this thesis may be divided into two kinds.

The first kind consists of abstract and general epistemological problems that pertain to any

sort of measurement, whether physical or nonphysical (e.g. of social or mental quantities). I

will address three such problems: the problem of coordination, the problem of accuracy, and

the problem of quantity individuation. These problems will be introduced in the next

section. The second kind of epistemological challenge consists of problems that are specific

to physical metrology. These problems arise from the need to explain the efficacy of

metrological methods for solving problems of the first sort – for example, the efficacy of

metrological uncertainty evaluations in overcoming the problem of accuracy. After

discussing these ‘challenges from practice’, I will introduce the model-based account,

explicate my methodology and outline the plan of this thesis.

3. Three epistemological problems

This thesis will address three general epistemological problems related to

measurement, which arise when one attempts to answer the following three questions:

1. Given a procedure P and a quantity Q, how is it possible to tell whether P

measures Q?

2. Assuming that procedure P measures quantity Q, how is it possible to tell how

accurately P measures Q?

3. Assuming that P and P′ are two measuring procedures, how is it possible to

tell whether P and P′ measure the same quantity?

Each of these three questions pertains to the possibility of obtaining knowledge of

some sort about the relationship between measuring procedures and the quantities they

measure. The sort of possibility I am interested in is not a general metaphysical or epistemic

one – I do not consider the existence of the world or the veridical character of perception as

relevant answers to the questions above. Rather, I will be interested in possibility in the

practical, technological sense. What is technologically possible is what humans can do with

the limited cognitive and material resources they have at their disposal and within reasonable

time³. Hence to qualify as an adequate answer to the questions above, a condition of

possibility must be cognitively accessible through one or more empirical tests that humans may

reasonably be expected to perform. For example, an adequate answer to the first question

would specify the sort of evidence scientists are required to collect in order to test whether

an instrument is a thermometer – i.e. whether or not it measures temperature – as well as

general considerations that apply to the analysis of this evidence.

An obvious worry is that such conditions are too specific and can only be supplied on

a case-by-case basis. This worry would no doubt be justified if one were to seek particular

test specifications or ‘experimental recipes’ in response to the questions above. No single

test, nor even a small set of tests, exist that can be applied universally to any measuring

procedure and any quantity to yield satisfactory answers to the questions above. But this

worry is founded on an overly narrow interpretation of the questions’ scope. The conditions

of possibility sought by the questions above are not empirical test specifications but only

general formal constraints on such specifications. These formal constraints, as we shall see,

pertain to the structure of inferences involved in such tests and to general representational

preconditions for performing them. Of course, it is not guaranteed in advance that even

general constraints of this sort exist. If they do not, knowledge claims about measurement,

accuracy and quantity individuation would have no unifying grounds. Yet at least in the case

of physical quantities, I will show that a shared inferential and representational structure

indeed underlies the possibility of knowing what, and how accurately, one is measuring.

³ For an elaboration of the notion of technological possibility see Record (2011, Ch. 2).

Another, sceptical sort of worry is that the questions above may have no answer at all,

because it may in fact be impossible to know whether and how accurately any given

procedure measures any quantity. I take this worry to be indicative of a failure in

philosophical methodology rather than an expression of a cautious approach to the

limitations of human knowledge. The terms “measurement”, “quantity” and “accuracy”

already have stable (though not necessarily unique) meanings set by their usage in scientific

practice. Claims to measurement, accuracy and quantity individuation are commonly made in

the sciences based on these stable meanings. The job of epistemologists of measurement, as

envisioned in this thesis, is to clarify these meanings and make sense of scientific claims

made in light of such meanings. In some cases the epistemologist may conclude that a

particular scientific claim is unfounded or that a particular scientific method is unreliable.

But the conclusion that all claims to measurement are unfounded is only possible if

philosophers create perverse new meanings for these terms. For example, the idea that

measurement accuracy is unknowable in principle cannot be seriously entertained unless the

meaning of “accuracy” is detached from the way practicing metrologists use this term, as will

be shown in Chapter 1. I will elaborate further on the interplay between descriptive and

normative aspects of the epistemology of measurement when I discuss my methodology

below.

As mentioned, the attempt to answer the three questions above gives rise to three

epistemological problems: the problem of coordination, the problem of accuracy and the

problem of quantity individuation, respectively. The next three subsections will introduce

these problems, and the fourth subsection will discuss their mutual entanglement.

The problem of coordination

How can one tell whether a given empirical procedure measures a given quantity? For

example, how can one tell that an instrument is a thermometer, i.e. that the procedure of its

use results in estimates of temperature? The answer is clear enough if one is allowed to

presuppose, as scientists do today, an accepted theory of temperature along with accepted

standards for measuring temperature. The epistemological conundrum arises when one

attempts to explain the possibility of establishing such theories and standards in the first

place. To establish a theory of temperature one has to be able to test its predictions

empirically, a task which requires a reliable method of measuring temperature; but

establishing such method requires prior knowledge of how temperature is related to other

quantities, e.g. volume or pressure, and this can only be settled by an empirically tested

theory of temperature. It appears to be impossible to coordinate the abstract notion of

temperature to any concrete method of measuring temperature without begging the

question.

The problem of coordination was discussed by Mach ([1896] 1966) in his analysis of

temperature measurement and by Poincaré ([1898] 1958) in relation to the measurement of

space and time. Both authors took the view that the choice of coordinative principles is

arbitrary and motivated by considerations of simplicity. Which substance is taken to expand

uniformly with temperature, and which kind of clock is taken to ‘tick’ at equal time intervals,

are choices based on convenience rather than observation. The conventionalist solution was

later generalized by Reichenbach ([1927] 1958), Carnap ([1966] 1995) and Ellis (1966), who

understood such coordinative principles (or ‘correspondence rules’) as a priori definitions

that are in no need of empirical verification. Rather than statements of fact, such principles

of coordination were viewed as semantic preconditions for the possibility of measurement.

However, conventionalists maintained that, unlike ‘ordinary’ conceptual definitions,

coordinative definitions do not fully determine the meaning of a quantity concept but only

regulate its use. For example, what counts as an accurate measurement of time depends on

which type of clock is chosen to regulate the application of the notion of temporal

uniformity. But the extension of the notion of uniformity is not limited to that particular

type of clock. Other types of clock may be used to measure time, and their accuracy is

evaluated by empirical comparison to the conventionally chosen standard⁴.

Another approach to the problem of coordination, closely aligned with but distinct

from conventionalism, was defended by Bridgman (1927). Bridgman’s initial proposal was to

define a quantity concept directly by the operation of its measurement, so that strictly

speaking two different types of operation necessarily measure different quantities. The

operationalist solution is more radical than conventionalism, as it reduces the meaning of a

quantity concept to its operational definition. Bridgman motivated this approach by the need

to exercise caution when applying what appears to be the same quantity concept across

different domains. Bridgman later modified his view in response to various criticisms and no

longer viewed operationalism as a comprehensive theory of meaning (Bridgman 1959,

Chang 2009, 2.1).

⁴ See, for example, Carnap on the periodicity of clocks ([1966] 1995, 84). For a discussion of the differences between operationalism and conventionalism see Chang and Cartwright (2008, 368).

A new strand of writing on the problem of coordination has emerged in the last

decade, consisting most notably of the works of Chang (2004) and van Fraassen (2008, Ch.

5). These works take a historical-contextual and coherentist approach to the problem. Rather

than attempt a solution from first principles, these writers appeal to considerations of

coherence and consistency among different elements of scientific practice. The process of

theory-construction and standardization is seen as mutual and iterative, with each iteration

respecting existing traditions while at the same time correcting them. At each such iteration

the quantity concept is re-coordinated to a more robust set of standards, which in turn

allows theoretical predictions to be tested more accurately, etc. The challenge for these

writers is not to find a vantage point from which coordination is deemed rational a priori,

but to trace the inferential and material apparatuses responsible for the mutual refinement of

theory and measurement in any specific case. Hence they reject the traditional question:

‘what is the general solution to the problem of coordination?’ in favour of historically

situated, local investigations.

As will become clear, my approach to the problem of coordination continues the

historical-contextual and coherentist trend in recent scholarship, but at the same time seeks

to specify general formal features common to successful solutions to this problem. Rather

than abandon traditional approaches to the problem altogether, my aim will be to shed new

light on, and ultimately improve upon, conventionalist and operationalist attempts to solve

the problem of coordination. To this end I will provide a novel account of what it means to

coordinate quantity concepts to physical operations – an account in which coordination is

understood as a process rather than a static definition – and clarify the conventional and

empirical aspects of this process.

The problem of accuracy

Even if one can safely assume that a given procedure measures the quantity it is

intended to, a second problem arises when one tries to evaluate the accuracy of that

procedure. Quantities such as length, duration and temperature, insofar as they are

represented by non-integer (e.g. rational or real) numbers, cannot be measured with

complete accuracy. Even measurements of integer-valued quantities, such as the number of

alpha-particles discharged in a radioactive reaction, often involve uncertainties. The accuracy

of measurements of such quantities cannot, therefore, be evaluated by reference to exact

values but only by comparing uncertain estimates to each other. Such comparisons by their

very nature cannot determine the extent of error associated with any single estimate but only

overall mutual compatibility among estimates. Hence multiple ways of distributing errors

among estimates are possible that are all consistent with the evidence gathered through

comparisons. It seems that claims to accuracy are intrinsically underdetermined by any

possible evidence5.
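
The underdetermination described above can be made concrete with a small numerical sketch. The clock names and readings below are hypothetical and are not drawn from any case discussed in this thesis; the sketch only illustrates that pairwise comparisons fix the differences between errors, not the errors themselves.

```python
# Hypothetical readings (in seconds) of one and the same interval by three clocks.
readings = {"A": 10.002, "B": 9.998, "C": 10.001}

def pairwise_differences(values):
    """Return the difference (first minus second) for every pair of names."""
    names = sorted(values)
    return {
        (p, q): round(values[p] - values[q], 9)
        for i, p in enumerate(names)
        for q in names[i + 1:]
    }

def errors_given_true_value(values, true_value):
    """Errors each clock would have IF the interval had the assumed true value."""
    return {name: round(v - true_value, 9) for name, v in values.items()}

# The only evidence available: how the clocks compare to each other.
observed = pairwise_differences(readings)

# Two incompatible assumptions about the unknown duration of the interval.
errors_1 = errors_given_true_value(readings, 10.000)
errors_2 = errors_given_true_value(readings, 10.002)

# Both ways of distributing errors reproduce exactly the same comparisons.
assert pairwise_differences(errors_1) == observed
assert pairwise_differences(errors_2) == observed
assert errors_1 != errors_2
```

Under the first assumption clocks A and B err by equal and opposite amounts; under the second, clock A is error-free and the other two run short. The comparisons alone cannot decide between the two.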

Many of the authors who have discussed the problem of coordination appear to have

also identified the problem of accuracy, although they have not always distinguished the two

very clearly. Often, as in the cases of Mach, Ellis and Carnap, they naively believed that

fixing a measurement standard in an arbitrary manner is sufficient to solve both problems at

once. However, measurement standards are physical instruments whose construction,

maintenance, operation and comparison suffer from uncertainties just like those of other

instruments. As I will show, the absolute accuracy of measurement standards is nothing but

a myth that obscures the complexity behind the problem of accuracy. Indeed, I will argue

that the role played by standards in the evaluation of measurement accuracy has so far been

grossly misunderstood by philosophers. Once the epistemic role of standards is clarified,

new and important insights emerge not only with respect to the proper solution to the

problem of accuracy but also with respect to the other two problems.

The problem of quantity individuation

When discussing the previous two problems I implicitly assumed that it is possible to

tell whether multiple measuring procedures, compared to each other either synchronically or

diachronically, measure the same quantity. But this assumption quickly leads to another

underdetermination problem, which I call the ‘problem of quantity individuation.’ Even

5 See also Kyburg (1984, 183).

when two different procedures are thought to measure the same quantity, their outcomes

rarely exactly coincide under similar conditions. Therefore when the outcomes of two

procedures appear to disagree with each other two kinds of explanation are open to

scientists: either one (or both) of the procedures is inaccurate, or the two procedures

measure different quantities6. But any empirical test that may be brought to bear on this

dilemma necessarily presupposes additional facts about agreement or disagreement among

measurement outcomes and merely duplicates the problem. Much like claims about

accuracy, claims about quantity individuation are underdetermined by any possible evidence.

As Chapter 2 will make clear, existing philosophical accounts of quantity individuation

do not fully acknowledge the import of the problem. Bridgman and Ellis, for example, both

acknowledge that claims to quantity individuation are underdetermined by facts about

agreement and disagreement among measuring instruments. And yet they fail to notice that

facts about agreement and disagreement among measuring instruments are themselves

underdetermined by the indications of those instruments. Once this additional level of

underdetermination is properly appreciated, Bridgman’s and Ellis’s proposed criteria of

quantity individuation are exposed as question-begging. A proper solution to the problem of

quantity individuation, I will argue, is possible only if one takes into account its

entanglement with the first two problems.

6 This second option may be further subdivided into sub-options. The two procedures may be measuring different quantity tokens of the same type, e.g. lengths of different objects, or two different types of quantity altogether, e.g. length and area.

Epistemic entanglement

I will argue that the three problems just mentioned, though conceptually distinct, are

epistemically entangled, i.e. that they cannot be solved independently of one another.

Specifically, I will show that (i) it is impossible to test whether a given procedure P measures

a given quantity Q without at the same time testing how accurately procedure P would

measure quantity Q; (ii) it is impossible to test how accurately procedure P would measure

quantity Q without comparing it to some other procedure P′ that is supposed to measure Q;

and (iii) it is impossible to test whether P and P′ measure the same quantity without at the

same time testing whether they measure some given quantity, e.g. Q. Note that these

‘impossibility theses’ are epistemic rather than logical. For example, it is logically possible to

know that two procedures measure the same quantity without knowing which quantity they

measure7. Nevertheless, it is epistemically impossible to test whether two procedures

measure the same quantity without making substantive assumptions about the quantity they

are supposed to measure.

The extent and consequences of this epistemic entanglement have hitherto remained

unrecognized by philosophers, despite the fact that some of the problems themselves have

been widely acknowledged for a long time. The model-based account presented here is the

first epistemology of measurement to clarify how it is possible in general to solve all three

problems simultaneously without getting caught in a vicious circle.

7 The opposite is not the case, of course: one cannot (logically speaking) know which quantities two procedures measure without knowing whether they measure the same quantity. Questions 1 and 3 are therefore logically related, but not logically equivalent.

4. The challenge from practice

Apart from solving abstract and general problems like those discussed in the previous

section, a central challenge for the epistemology of measurement is to make sense of specific

measurement methods employed in particular disciplines. Indeed, it would be of little value

to suggest a solution to the abstract problems that has no bearing on scientific practice, as

such a solution would not be able to clarify whether and how accepted measurement methods

actually overcome these problems. The ‘challenge from practice’, then, is to shed light on the

epistemic efficacy of concrete methodologies of measurement and standardization. How do

such methods overcome the three general epistemological problems discussed above? As

already mentioned, this thesis will focus on the standardization of physical measuring

instruments. Physical metrology involves a variety of methods for instrument comparison,

error detection and correction, uncertainty evaluation and calibration. These methods

employ theoretical and statistical tools as well as techniques of experimental manipulation

and control. A central desideratum for the plausibility of the model-based account will be its

ability to explain how, and under what conditions, these methods support knowledge claims

about measurement, accuracy and quantity individuation.

As my focus will be on physical metrology, I will pay special attention to the

methodological guidelines developed by practitioners in that field. In particular, I will

frequently refer to two documents published in 2008 by the Joint Committee for Guides in

Metrology (JCGM), a committee that represents eight leading international standardization

bodies8. The first document is the International Vocabulary of Metrology – Basic and General

Concepts and Associated Terms (VIM), 3rd edition (JCGM 2008)9. This document contains

definitions and clarificatory remarks for dozens of key concepts in metrology such as

calibration, measurement accuracy, measurement precision and measurement standard.

These definitions shed light on the way practitioners understand these concepts and on their

underlying (and sometimes conflicting) epistemic and metaphysical commitments. The

second document is titled Evaluation of Measurement Data — Guide to the Expression of

Uncertainty in Measurement (GUM), 1st edition (JCGM 2008a). This document provides

detailed guidelines for evaluating measurement uncertainties and for comparing the results

of different measurements. Together these two documents portray a methodological picture

of metrology in which abstract and idealized representations of measurement processes play

a central role. However, being geared towards regulating practice, these documents do not

explicitly analyze the presuppositions underlying this methodological picture or its efficacy

for overcoming general epistemological conundrums that are of interest to philosophers. It

is this gap between methodology and epistemology that the model-based account of

measurement is intended to fill.
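
To give a rough sense of the kind of evaluation these documents regulate, the following sketch computes a Type A standard uncertainty (the experimental standard deviation of the mean) for two sets of repeated readings, and then checks whether the two results are metrologically compatible in the VIM's sense. The readings, the function names and the multiple k = 2 are illustrative assumptions of mine. The sketch ignores Type B components and correlations, so it should not be read as a full implementation of the GUM.

```python
import math
import statistics

def type_a_evaluation(readings):
    """Return (mean, standard uncertainty of the mean) for repeated readings."""
    mean = statistics.mean(readings)
    # Experimental standard deviation of the mean: s / sqrt(n).
    u = statistics.stdev(readings) / math.sqrt(len(readings))
    return mean, u

def compatible(x1, u1, x2, u2, k=2.0):
    """Check |x1 - x2| <= k * u(x1 - x2), assuming uncorrelated results."""
    u_diff = math.sqrt(u1 ** 2 + u2 ** 2)
    return abs(x1 - x2) <= k * u_diff

# Hypothetical repeated readings of the same length (in mm) from two instruments.
instrument_1 = [20.01, 20.03, 19.99, 20.02, 20.00]
instrument_2 = [20.04, 20.02, 20.05, 20.03, 20.06]

x1, u1 = type_a_evaluation(instrument_1)
x2, u2 = type_a_evaluation(instrument_2)
print(f"instrument 1: {x1:.3f} mm, u = {u1:.3f} mm")
print(f"instrument 2: {x2:.3f} mm, u = {u2:.3f} mm")
print("compatible at k = 2:", compatible(x1, u1, x2, u2))
```

A check of this kind reports whether two results disagree relative to their stated uncertainties. It does not say whether the disagreement stems from error or from a difference in the quantity measured, which is the epistemological question left open by the guidelines.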

8 The JCGM is composed of representatives from the International Bureau of Weights and Measures (BIPM), the International Electrotechnical Commission (IEC), the International Federation of Clinical Chemistry and Laboratory Medicine (IFCC), the International Laboratory Accreditation Cooperation (ILAC), the International Organization for Standardization (ISO), the International Union of Pure and Applied Chemistry (IUPAC), the International Union of Pure and Applied Physics (IUPAP) and the International Organization of Legal Metrology (OIML).

9 A new version of the 3rd edition of the VIM with minor changes was published in early 2012. My discussion in this thesis applies equally to this new version.

5. The model-based account

According to the model-based account, a necessary precondition for the possibility of

measuring is the specification of an abstract and idealized model of the measurement process. To

measure a physical quantity is to make coherent and consistent inferences from the final

state(s) of a physical process to value(s) of a parameter in the model. Prior to the

subsumption of a process under some idealized assumptions, it is impossible to ground such

inferences and hence impossible to obtain a measurement outcome. Rather than be given by

observation, measurement outcomes are sensitive to the assumptions with which a

measurement process is modelled and may change when these assumptions change. The

same holds true for estimates of measurement uncertainty, accuracy and error, as well as for

judgements about agreement and disagreement among measurement outcomes – all are

relative to the assumptions under which the relevant measurement processes are modelled.

My conception of the nature and functions of models follows closely the views

expressed in Morrison and Morgan (1999), Morrison (1999), Cartwright et al. (1995) and

Cartwright (1999). I take a scientific model to be an abstract representation of some local

phenomenon, a representation that is used to predict and explain aspects of that

phenomenon. A model is constructed out of assumptions about the ‘target’ phenomenon

being represented. These assumptions may include laws and principles from one or more

theories, empirical generalizations from available data, statistical assumptions about the data,

and other local (and sometimes ad hoc) simplifying assumptions about the phenomenon of

interest. The specialized character of models allows them to function autonomously from

the theories that contributed to their construction, and to mediate between the highly

abstract assumptions of theory and concrete phenomena. I view models as instruments that

are more or less useful for purposes of prediction, explanation, experimental design and

intervention, rather than as descriptions that are true or false.

Though not committed to any particular view on how models represent the world, the

model-based account does not require models to mirror the structure of their target systems

in order to be successful representational instruments. My framework therefore differs from

the ‘semantic’ view, which takes models to be set-theoretical relational structures that are

isomorphic to relations among objects in the target domain (Suppes 1960, van Fraassen

1980, 41-6). The model-based account is also permissive with respect to the ontology of

models, and apart from assuming that models are abstract constructs I do not presuppose

any particular view concerning their nature (e.g. abstract entities, mathematical objects,

fictions). I do, however, take models to be non-linguistic entities and hence different from

the equations used to express their assumptions and consequences10.

The epistemic functions of models have received far less attention in the context of

measurement than in other contexts where models are used to produce knowledge, e.g.

theory construction, prediction, explanation, experimentation and simulation. An exception

to this general neglect is the use of models for measurement in economics, a topic about

which philosophers have gained valuable insights in recent years (Boumans 2005, 2006,

2007; Morgan 2007). The Representational Theory of Measurement (Krantz et al. 1971)

appeals to models in the set-theoretical sense to elucidate the adequacy of different types of

scales, but completely neglects epistemic questions concerning coordination, accuracy and

quantity individuation. This thesis will focus on the epistemic functions of models in

10 On this last point my terminology is at odds with that of the VIM, which defines a measurement model as a set of equations. Cf. JCGM 2008, 2.48 “Measurement Model”, p. 32.

physical measurement, a topic on which relatively little has been written and for which no

systematic account has yet been offered11.

The models I will discuss represent measurement processes. Such processes have physical

and symbolic aspects. The physical aspect of a measurement process, broadly construed,

includes interactions between a measuring instrument, one or more measured samples, the

environment and human operators. The symbolic aspect includes data processing operations

such as averaging, data reduction and error correction. The primary function of models of

measurement processes is to represent the final states – or ‘indications’ – of the process in

terms of values of the measured quantity. For example, the primary function of a model of a

cesium fountain clock is to represent the output frequency of the clock (the frequency of its

‘ticks’) in terms of the ideal frequency associated with a specific hyperfine transition in

cesium-133. To do this, the model of the clock must incorporate theoretical and statistical

assumptions about the working of the clock and its interactions with the cesium sample and

the environment, as well as about the processing of the output frequency signal.

A measurement procedure is a measurement process as represented under a particular set

of modeling assumptions. Hence multiple procedures may be instantiated on the basis of the

same measurement process when the latter is represented with different models12. For

example, the same interactions among various parts of a cesium fountain clock and its

11 But see important contributions to this topic by Morrison (2009) and Frigerio, Giordani and Mari (2010).

12 Here too I have chosen to slightly deviate from the terminology of the VIM, which defines a measurement procedure as a description of a measurement process that is based on a measurement model (JCGM 2008, 2.6, p. 18). I use the term, by contrast, to denote a measurement process as represented by a measurement model. The difference is that in the VIM definition a procedure does not itself measure but only provides instructions on how to measure, whereas in my definition a procedure measures.

environment may instantiate different procedures for measuring time when modelled with

different assumptions.
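
The distinction between a process and a procedure can be illustrated with a toy sketch. The indication, the named corrections and their magnitudes below are invented for illustration and do not describe any actual clock; the class and method names are likewise hypothetical. The sketch only shows how one and the same indication yields different outcomes under different modeling assumptions.

```python
from dataclasses import dataclass

@dataclass
class Model:
    """A toy measurement model: assumed fractional frequency corrections."""
    name: str
    corrections: dict  # effect name -> (fractional correction, standard uncertainty)

    def outcome(self, indicated_frequency_hz):
        """Infer (estimate, standard uncertainty) in Hz from an indication."""
        total_correction = sum(c for c, _ in self.corrections.values())
        # Uncertainties of the corrections are combined in quadrature.
        total_u = sum(u ** 2 for _, u in self.corrections.values()) ** 0.5
        estimate = indicated_frequency_hz * (1 + total_correction)
        return estimate, indicated_frequency_hz * total_u

# One and the same indication (hypothetical output frequency, in Hz).
indication = 9_192_631_770.005

# Two sets of idealizing assumptions about the same process (all values invented).
crude = Model("crude", {})
refined = Model("refined", {
    "gravitational shift": (-2.0e-13, 1.0e-15),
    "thermal radiation shift": (-3.0e-13, 2.0e-15),
})

for model in (crude, refined):
    estimate, u = model.outcome(indication)
    print(f"{model.name}: {estimate:.4f} Hz (u = {u:.1e} Hz)")
```

The physical process is held fixed throughout; only the assumptions under which it is represented change, and with them both the outcome and its associated uncertainty.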

According to the model-based account, knowledge claims about coordination,

accuracy and quantity individuation are properly ascribable to measurement procedures rather

than to measurement processes. That is, such knowledge claims presuppose that the

measurement process in question is already subsumed under specific idealized assumptions,

and may therefore be judged as true or false only relative to those assumptions. The central

reason for this model-relativity is that prior to the subsumption of a measurement process

under a model it is impossible to warrant objective claims about the outcomes of

measurement, that is, claims that reasonably ascribe the outcome to the object being measured

rather than to idiosyncrasies of the procedure. This will be explained in detail in Chapter 2.

As I will argue, the model-based account meets both the abstract and practice-based

challenges I have discussed. Once the inferential grounds of measurement claims are

relativized to a representational context, it becomes clear how all three epistemic problems

mentioned above may be solved simultaneously. Moreover, it becomes clear how

contemporary metrological methods of standardization, calibration and uncertainty

evaluation are able to solve these problems, and what practical considerations and trade-offs

are involved in the application of such methods. Finally, it becomes clear why measurement

outcomes retain their objective validity outside the representational context in which they are

obtained, thereby avoiding problems of incommensurability across different measuring

procedures.

In providing a model-based epistemology of measurement, I intend to offer neither a

critique nor an endorsement of metaphysical realism with respect to measurable quantities.

My account remains agnostic with respect to metaphysics and pertains to measurement

solely as an epistemic activity, i.e. to the inferences and assumptions that make it possible to

warrant knowledge claims by operating measuring instruments. For example, nothing in my

account depends on whether or not ratios of mass (or length, or duration) exist mind-

independently. Indeed, in Chapters 1 and 4 I show that the problem of accuracy is solved in

exactly the same way regardless of whether one interprets measurement uncertainties as

deviations from true quantity values or as estimates of the degree of mutual consistency

among the consequences of different models. The question of realism with respect to

measurable quantities is therefore independent of the epistemology of measurement and

underdetermined by any evidence one can gather from the practice of measuring.

6. Methodology

As I have mentioned, the model-based account is designed to meet both general

epistemological challenges and challenges from practice. These two sorts of challenge may

be distinguished along the lines of a normative-descriptive divide and formulated as two

questions:

1. Normative question: what are some of the formal desiderata for an adequate

solution to the problems of coordination, accuracy and quantity

individuation?

2. Descriptive question: do the methods employed in physical metrology satisfy

these desiderata?

It is tempting to try to answer these questions separately – first by analyzing the

abstract problems and arriving at formal desiderata for their solution, and then by surveying

metrological methods for compatibility with these desiderata. But on a closer look it

becomes clear that these two questions cannot be answered completely independently of

each other. Much like the first-order problems, these questions are entangled. On the one

hand, overly strict normative desiderata would lead to the absurdity that no method can

resolve the problems (why this is an absurdity was discussed above). An example of an

overly strict desideratum is the requirement that measurement processes be perfectly

repeatable, a demand that is unattainable in practice. On the other hand, overly lenient

desiderata would run the risk of vindicating methods that practitioners regard as flawed.

While not necessarily absurd, if such cases abounded they would eventually raise the worry

that one’s normative account fails to capture the problems that practitioners are trying to

solve. To avoid these two extremes, the epistemologist must be able to learn from practice what

counts as a good solution to an epistemological problem, yet do so without relinquishing the

normativity of her account.

These seemingly conflicting needs are fulfilled by a method I call ‘normative analysis of

exemplary cases’. I provide original and detailed case studies of metrological methods that

practitioners consider exemplary solutions to the general epistemological problems posed

above. Being exemplary solutions, they must also come out as successful solutions in my own

epistemological account, for otherwise I have failed to capture the problems that

metrologists are trying to solve. Note that this is not a license to believe everything

practitioners say, but merely a reasonable starting point for a normative analysis of practice.

In other words, this method reflects a commitment to learn from practitioners what their

problems are and assess their success in solving these problems rather than the preconceived

problems of philosophers.

For my main case studies I have chosen to concentrate on the standardization of time

and frequency, the most accurately and stably realized physical quantities in contemporary

metrology. In addition to a study of the metrological literature, I spent several weeks at the

laboratories of the Time and Frequency Division at the US National Institute of Standards

and Technology (NIST) in Boulder, Colorado. I conducted interviews with ten of the

Division’s scientists as well as with several other specialists at the University of Colorado’s

JILA labs. In these interviews I invited metrologists to reflect on the reasons why they make

certain knowledge claims about atomic clocks (e.g. about their accuracy, errors and

agreement), on the methods they use to validate such claims, and on problems or limitations

they encounter in applying these methods.

These materials then served as the basis for abstracting common presuppositions and

inference patterns that characterize metrological methods more generally. At the same time,

my superficial ‘enculturation’ into metrological life allowed me to reconceptualise the general

epistemological problems and assess their relevance to the concrete challenges of the

laboratory. These ongoing iterations of abstraction and concretization eventually led to a

stable set of desiderata that fit the exemplars and at the same time were general enough to

extend beyond them.

7. Plan of thesis

This dissertation consists of four autonomous essays, each dedicated to a different

aspect of the epistemic and methodological challenges mentioned above. Rather than

advance a single argument, the essays offer self-standing arguments in favour of the

model-based account from different but interlocking perspectives.

Chapter 1 is dedicated to primary measurement standards, and debunks the myth

according to which such standards are perfectly accurate. I clarify how the uncertainty

associated with primary standards is evaluated and how the subsumption of standards under

idealized models justifies inferences from uncertainty to accuracy.

Chapter 2 introduces the problem of quantity individuation, and shows that this

problem cannot be solved independently of the problems of coordination and accuracy. The

model-based account is then presented and shown to dissolve all three problems at once.

Chapter 3 expands on the problem of coordination through a discussion of the

construction and maintenance of Coordinated Universal Time (UTC). As I argue, abstract

quantity concepts such as terrestrial time are not coordinated directly to any concrete clock,

but only indirectly through a hierarchy of models. This mediation explains how seemingly ad

hoc error corrections can stabilize the way an abstract quantity concept is applied to

particulars.

Finally, Chapter 4 extends the scope of discussion from standards to measurement

procedures in general by focusing on calibration. I show that calibration is a special sort of

modeling activity, and that measurement uncertainty is a special sort of predictive

uncertainty associated with this activity. The role of standards in calibration is clarified and a

general solution to the problem of accuracy is provided in terms of a robustness test among

predictions of multiple models.

The Epistemology of Measurement: A Model-Based Account

1. How Accurate is the Standard Second?

Abstract: Contrary to the claim that measurement standards are absolutely accurate by definition, I argue that unit definitions do not completely fix the referents of unit terms. Instead, idealized models play a crucial semantic role in coordinating the theoretical definition of a unit with its multiple concrete realizations. The accuracy of realizations is evaluated by comparing them to each other in light of their respective models. The epistemic credentials of this method are examined and illustrated through an analysis of the contemporary standardization of time. I distinguish among five senses of ‘measurement accuracy’ and clarify how idealizations enable the assessment of accuracy in each sense.13

1.1. Introduction

A common philosophical myth states that the meter bar in Paris is exactly one meter

long – that is, if any determinate length can be ascribed to it in the metric system. One

variant of the myth comes from Wittgenstein, who tells us that the meter bar is the one

thing “of which one can say neither that it is one metre long, nor that it is not one metre

long” (1953, §50). Kripke famously disagrees, but develops a variant of the same myth by

13 This chapter was published with minor modifications as Tal (2011).

stating that the length of the bar at a specified time is rigidly designated by the phrase ‘one

meter’ (1980, 56). Neither of these pronouncements is easily reconciled with the 1960

declaration of the General Conference on Weights and Measures, according to which “the

international Prototype does not define the metre with an accuracy adequate for the present

needs of metrology” and is therefore replaced by an atomic standard (CGPM 1961). There

is, of course, nothing problematic with replacing one definition with another. But how can

the accuracy of the meter bar be evaluated against anything other than itself, let alone be

found lacking?

Wittgenstein and Kripke almost certainly did not subscribe to the myth they helped

disseminate. There are good reasons to believe that their examples were meant merely as

hypothetical illustrations of their views on meaning and reference14. This chapter does not

take issue with their accounts of language, but with the myth of the absolute accuracy of

measurement standards, which has remained unchallenged by philosophers of science. The

meter is not the only case where the myth clashes with scientific practice. The second and

the kilogram, which are currently used to define all other units in the International System

(i.e. the ‘metric’ system), are associated with primary standards that undergo routine accuracy

evaluations and are occasionally improved or replaced with more accurate ones. In the case

of the second, for example, the accuracy of primary standards has increased more than a

thousand-fold over the past four decades (Lombardi et al. 2007).

This chapter will analyze the methodology of these evaluations, and argue that they

indeed provide estimates of accuracy in the same senses of ‘accuracy’ normally presupposed

14 Wittgenstein mentions the meter bar only in passing as an analogy to color language-games. Kripke carefully notes that the uniqueness of the meter bar’s role in standardizing length is no more than a hypothetical supposition (1980, 55, fn. 20).

by scientific and philosophical discussions of measurement. My main examples will come

from the standardization of time. I will focus on the methods by which time and frequency

standards are evaluated and improved at the US National Institute of Standards and

Technology (NIST). These tasks are carried out by metrologists, experts in highly reliable

measurement. The methods and tools of metrology – a live discipline with its own journals

and controversies – have received little attention from philosophers15. Recent philosophical

literature on measurement has mostly been concerned either with the metaphysics of

quantity and number (Swoyer 1987, Michell 1994) or with the mathematical structures

underlying measurement scales (Krantz et al. 1971). These ‘abstract’ approaches treat the

topics of uncertainty, accuracy and error as extrinsic to the theory of measurement and as

arising merely from imperfections in its application. Though they do not deny that

measurement operations involve interactions with imperfect instruments in noisy

environments, authors in this tradition analyze measurement operations as if these

imperfections have already been corrected or controlled for.

By contrast, the current study is meant as a step towards a practice-oriented

epistemology of physical measurement. The management of uncertainty and error will be

viewed as intrinsic to measurement and as a precondition for the possibility of gaining

knowledge from the operation of measuring instruments. At the heart of this view lies the

recognition that a theory of measurement cannot be neatly separated into fundamental and

applied parts. The methods employed in practice to correct errors and evaluate uncertainties

crucially influence which answers are given to so-called ‘fundamental’ questions about

15 Notable exceptions are Chang (2004) and Boumans (2007). Metrology has been studied by historians and sociologists of science, e.g. Latour (1987, ch. 6), Schaffer (1992), Galison (2003) and Gooday (2004).

quantity individuation and the appropriateness of measurement scales. This will be argued in

detail in Chapter 2.

In this chapter I will use insights into metrological practices to outline a novel account

of the underexplored relationship between uncertainty and accuracy. Scientists often include

uncertainty estimates in their reports of measurement results, but whether such estimates

warrant claims about the accuracy of results is an epistemological question that philosophers

have overlooked. Based on an analysis of time standardization, I will argue that inferences

from uncertainty to accuracy are justified when a doubly robust fit – among instruments as

well as among idealized models of these instruments – is demonstrated. My account will

shed light on metrologists’ claims that the accuracy of standards is being continually

improved and on the role played by idealized models in these improvements.

1.2. Five notions of measurement accuracy

Accuracy is often ascribed to scientific theories, instruments, models, calculations and

data, although the meaning of the term varies greatly with context. Even within the limited

context of physical measurement the term carries multiple senses. For the sake of the

current discussion I offer a preliminary distinction among five notions of measurement

accuracy. These are intended to capture different senses of the term as it is used by

physicists and engineers as well as by philosophers of science. The five notions are neither

co-extensive nor mutually exclusive but instead partially overlap in their extensions. As I will

argue below, the sort of robustness test metrologists employ to evaluate the uncertainty of

measurement standards provides sufficient evidence for the accuracy of those standards

under all five senses of ‘measurement accuracy’.

1. Metaphysical measurement accuracy: closeness of agreement between a

measured value of a quantity and its true value16

(correlate concept: truth)

2. Epistemic measurement accuracy: closeness of agreement among values

reasonably attributed to a quantity based on its measurement17

(correlate concept: uncertainty)

3. Operational measurement accuracy: closeness of agreement between a

measured value of a quantity and a value of that quantity obtained by

reference to a measurement standard

(correlate concept: standardization)

4. Comparative measurement accuracy: closeness of agreement among

measured values of a quantity obtained by using different measuring systems,

or by varying extraneous conditions in a controlled manner

(correlate concept: reproducibility)

16 cf. “Measurement Accuracy” in the International Vocabulary of Metrology (VIM) (JCGM 2008, 2.13). My own definitions for measurement-related terms are inspired by, but in some cases diverge from, those of the VIM.

17 cf. JCGM 2008, 2.13, Note 3.

5. Pragmatic measurement accuracy (‘accuracy for’): measurement accuracy

(in any of the above four senses) sufficient for meeting the requirements of a

specified application.

Let us briefly clarify each of these five notions. First, the metaphysical notion takes

truth to be the standard of accuracy. For example, a thermometer is metaphysically accurate

if its outcomes are close to true ratios between measured temperature intervals and the

chosen unit interval. If one assumes a traditional understanding of truth as correspondence

with a mind-independent reality, the notion of metaphysical accuracy presupposes some

form of realism about quantities. The argument advanced in this chapter is nevertheless

independent of such realist assumptions, as it neither endorses nor rejects metaphysical

conceptions of measurement accuracy.

Second, a thermometer is epistemically accurate if its design and use warrant the

attribution of a narrow range of temperature values to objects. The dispersion of reasonably

attributed values is called measurement uncertainty and is commonly expressed as a value

range18. Epistemic accuracy should not be confused with precision, which constitutes only one

aspect of epistemic accuracy. Measurement precision is the closeness of agreement among

measured values obtained by repeated measurements of the same (or relevantly similar)

18 cf. “Measurement Uncertainty” (JCGM 2008, 2.26). Note that this term does not refer to a degree of confidence or belief but to a dispersion of values whose attribution to a quantity reasonably satisfies a specified degree of confidence or belief.

objects using the same measuring system19. Imprecision is therefore caused by uncontrolled

variations to the equipment, operation or environment when measurements are repeated.

This sort of variation is a ubiquitous but not exclusive source of measurement uncertainty.

As will be explained below, some measurement uncertainty stems from other sources,

including imperfect corrections to systematic errors. The notion of epistemic accuracy is

therefore broader than that of precision.
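
The difference between precision and epistemic accuracy can be illustrated with a minimal numerical sketch. All readings and the residual uncertainty of the systematic correction below are hypothetical, and the root-sum-of-squares combination assumes that the two uncertainty components are uncorrelated.

```python
import math
import statistics

# Hypothetical repeated thermometer readings of the same sample (degrees Celsius).
readings = [20.1, 19.9, 20.0, 20.2, 19.8]

# Precision-related component: standard deviation of the mean of the repeated readings.
u_repeat = statistics.stdev(readings) / math.sqrt(len(readings))

# Hypothetical standard uncertainty remaining after a systematic error has been
# corrected (for example, an imperfectly known calibration offset).
u_correction = 0.15

# Combined standard uncertainty, assuming the two components are uncorrelated.
u_combined = math.sqrt(u_repeat**2 + u_correction**2)

print(f"repeatability component: {u_repeat:.3f}")
print(f"combined uncertainty:    {u_combined:.3f}")
```

In this sketch the combined uncertainty exceeds the repeatability component, so a highly precise instrument may still warrant only a comparatively wide range of attributed values.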

Third, operational measurement accuracy is determined relative to an established

measurement standard. For example, a thermometer is operationally accurate if its outcomes are

close to those of a standard thermometer when the two measure relevantly similar samples.

The most common way of evaluating operational accuracy is by calibration, i.e. by modeling

an instrument in a manner that establishes a relation between its indications and standard

quantity values20.

Fourth, comparative accuracy is the closeness of agreement among measurement

outcomes when the same quantity is measured in different ways. The notion of comparative

accuracy is closely linked with that of reproducibility. To say that a measurement outcome is

comparatively accurate is to say that it is closely reproducible under controlled variations to

measurement conditions and methods.21 For example, thermometers in a given set are

comparatively accurate if their outcomes closely agree with one another when applied to

relevantly similar samples.
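
A small sketch may help to see why operational and comparative accuracy are not co-extensive. The readings below are hypothetical, and the use of the largest paired difference as a measure of "closeness of agreement" is an assumption made only for illustration.

```python
# Hypothetical readings (degrees Celsius) of three relevantly similar samples.
standard = [0.0, 50.0, 100.0]        # readings of a standard thermometer
thermometer_a = [0.5, 50.5, 100.5]
thermometer_b = [0.6, 50.4, 100.5]


def max_disagreement(xs, ys):
    """Largest absolute difference between paired readings."""
    return max(abs(x - y) for x, y in zip(xs, ys))


# Operational accuracy: agreement of each thermometer with the standard.
operational_a = max_disagreement(thermometer_a, standard)
operational_b = max_disagreement(thermometer_b, standard)

# Comparative accuracy: agreement of the two thermometers with each other.
comparative_ab = max_disagreement(thermometer_a, thermometer_b)

print(operational_a, operational_b, comparative_ab)
```

Here the two thermometers agree with each other more closely than either agrees with the standard, so a set of instruments can be comparatively accurate to a higher degree than it is operationally accurate.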

19 cf. “Measurement Precision” (JCGM 2008, 2.15) and “Measurement Repeatability” (ibid, 2.21). My concept of precision is narrower than that of the VIM (see also fn. 21).

20 cf. “Calibration” (JCGM 2008, 2.39).

21 Unlike precision, reproducibility concerns controlled variations to measurement conditions. I deviate slightly from the VIM on this point to reflect general scientific usage of these terms (cf. “Measurement Reproducibility”, JCGM 2008, 2.25).

Finally, pragmatic measurement accuracy is accuracy sufficient for a specific use, such

as a solution to an engineering problem. There are four sub-senses of pragmatic accuracy,

corresponding to the first four senses of measurement accuracy. For example, a

thermometer is pragmatically accurate in an epistemic sense if the overall uncertainty of its

outcomes is low enough to reliably achieve a specified goal, e.g. keeping an engine from

overheating. Of course, whether or not a measuring system (or a measured value) is

pragmatically accurate depends on its intended use22.

In the physical sciences quantitative expressions of measurement accuracy are typically

cast in epistemic terms, namely in terms of uncertainty. This does not mean that scientific

estimates of accuracy are always and only estimates of epistemic accuracy. What matters to

the classification of accuracy is not the form of its expression, but the kind of evidence on

which estimates of accuracy are based. As I will argue below, metrological evaluations

provide evidence of the right sort for estimating accuracy under all five notions. Before

delving into the argument, the next section will provide some background on the concepts,

methods and problems involved in the standardization of time.

1.3. The multiple realizability of unit definitions

A key distinction in the standardization of physical units is that between definition

and realization. Since 1967 the second has been defined as the duration of exactly

22 Pragmatic accuracy may be understood as a threshold (pass/fail) concept. Alternatively, pragmatic accuracy may be represented continuously, for example as the likelihood of achieving the specified goal. Both analyses of the concept are compatible with the argument presented here.

9,192,631,770 periods of the radiation corresponding to a hyperfine transition of cesium-133

in the ground state (BIPM 2006). This definition pertains to an unperturbed cesium atom at

a temperature of absolute zero. Because the definition is an idealized description of a kind of atomic system, no

actual cesium atom ever satisfies it. Hence a question arises as to how the

reference of ‘second’ is fixed. The traditional philosophical approach would be to propose

some ‘semantic machinery’ through which the definition succeeds in picking out a definite

duration, e.g. a possible-world semantics of counterfactuals. However, this sort of approach

is hard pressed to explain how metrologists are able to experimentally access the extension

of ‘second’ given the fact that it is physically impossible to instantiate the conditions

specified by the definition. Consequently, it becomes unclear how metrologists are able to

tell whether the actual durations they label ‘second’ satisfy the definition. By contrast, the

approach adopted in this chapter takes the definition to fix a reference only indirectly and

approximately by virtue of its role in guiding the construction of atomic clocks. Rather than

picking out any definite duration on its own, the definition functions as an ideal specification

for a class of atomic clocks. These clocks approximately satisfy – or in the metrological jargon,

‘realize’ – the conditions specified by the definition23. The activities of constructing and

modeling cesium clocks are therefore taken to fulfill a semantic function, i.e. that of

approximately fixing the reference of ‘second’, rather than simply measuring an already

linguistically fixed time interval.

The construction of an accurate primary realization of the second – a ‘meter stick’ of

time – must make highly sophisticated use of theory, apparatus and data analysis in order to

23 The verb ‘realize’ has various meanings in philosophical discussions. Here I follow the metrological use of this term and take it to be synonymous with ‘approximately satisfy’ (pertaining to a definition).

approximate as much as possible the ideal conditions specified by the definition. But

multiple kinds of physical processes can be constructed that would realize the second, each

departing from the ideal definition in different respects and degrees. In other words,

different clock designs and environments correspond to different ways of de-idealizing the

definition. As of 2009, thirteen atomic clocks around the globe are used as primary

realizations of the second. There are also hundreds of official secondary realizations of the

second, i.e. atomic clocks that are traced to primary realizations. Like any collection of

physical instruments, different realizations of the second disagree with one another, i.e. ‘tick’

at slightly different rates. The definition of the second is thus multiply realizable in the sense

that multiple real durations approximately satisfy the definition, and no method can

completely rid us of the approximations.
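
To give a sense of scale, the following sketch works out how far two realizations of the second would drift apart if they ‘ticked’ at slightly different rates. The fractional frequency offsets are hypothetical values chosen for illustration, not figures for any actual clock, and the calculation is a first-order approximation in those offsets.

```python
PERIODS_PER_SECOND = 9_192_631_770  # number of cesium periods in the definition
SECONDS_PER_DAY = 86_400


def accumulated_difference(offset_a, offset_b, elapsed_seconds):
    """Time difference (in seconds) built up between two clocks,
    to first order in their fractional frequency offsets."""
    return (offset_a - offset_b) * elapsed_seconds


# Hypothetical fractional frequency offsets of two realizations, as they
# might be estimated from models of each clock.
offset_a = 1e-15
offset_b = -2e-15

per_day = accumulated_difference(offset_a, offset_b, SECONDS_PER_DAY)
per_year = accumulated_difference(offset_a, offset_b, 365 * SECONDS_PER_DAY)

# The same daily disagreement expressed as a number of cesium periods.
periods_per_day = per_day * PERIODS_PER_SECOND

print(f"difference after one day:  {per_day:.3e} s")
print(f"difference after one year: {per_year:.3e} s")
print(f"cesium periods per day:    {periods_per_day:.2f}")
```

Under these assumed offsets the two clocks disagree by a few cesium periods per day, which is small but nonzero, in line with the point that different realizations approximately satisfy the same definition.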

That the definition of the second is multiply realizable does not mean that there are