
Date posted: 20-Jan-2016


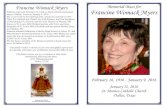

The AppLeS Project: Harvesting the Grid

Francine Berman

U. C. San Diego

Computing Today

[Figure: computing resources today: data archives, networks, visualization, instruments, MPPs, clusters, PCs, workstations, wireless]

The Computational Grid

• The Computational Grid

– ensemble of heterogeneous, distributed resources

– emerging platform for high-performance computing

• The Grid is the ultimate “supercomputer”

– Grids aggregate an enormous number of resources

– Grids support applications that cannot be executed at any single site

Moreover,

– Grids provide increased access to each kind of resource (MPPs, clusters, etc.)

– The Grid can be accessed at any time

Computational Grids

• Computational Grids you know and love:

– Your computer lab

– The Internet

– The PACI partnership resources

– Your home computer, CS and all the resources you have access to

• You are already using the Computational Grid …

Programming the Grid I

• Basics

– Need a way to log in, authenticate in different domains, transfer files, coordinate execution, etc.

[Figure: layered diagram with labels Application Development Environment; Grid Infrastructure (Globus, Legion, Condor, NetSolve, PVM); Resources]

Programming the Grid II

• Performance-oriented programming

– Need way to develop and execute performance-efficient programs

– Program must achieve performance in context of

• heterogeneity

• dynamic behavior

• multiple administrative domains

This can be extremely challenging.

– What approaches are most effective to achieve Grid application execution performance?

Performance-oriented Execution

• Careful scheduling required to achieve application performance in parallel and distributed environments

• For the Grid, adaptivity is the right approach to leverage deliverable resource capacities

[Figure: file transfer time to SC’99 in Portland with NWS prediction (Alan Su); sites labeled UCSD, UTK, OGI]

• Fundamental components:

– Application-centric performance model

• Provides quantifiable measure of Grid resources in terms of their potential impact on the application

– Prediction of deliverable resource performance at execution time

– User’s preferences and performance criteria

These components form the basis for AppLeS.

Adaptive Application Scheduling

What is AppLeS?

• AppLeS = Application Level Scheduler

– Joint project with Rich Wolski (U. of Tenn.)

• AppLeS is a methodology

– Project has investigated adaptive application scheduling using dynamic information, application-specific performance models, user preferences.

– AppLeS approach based on real-world scheduling.

• AppLeS is software

– Have developed multiple AppLeS-enabled applications which demonstrate the importance and usefulness of adaptive scheduling on the Grid.

How Does AppLeS Work?

• Select resources

• For each feasible resource set, plan a schedule

– For each schedule, predict application performance at execution time

– consider both the prediction and its qualitative attributes

• Deploy the “best” of the schedules wrt user’s performance criteria

– execution time

– convergence

– turnaround time
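The select / plan / predict / deploy cycle can be sketched as a small search loop. This is a minimal illustration in Python, not the AppLeS code: the resource dictionaries (`name`, `speed`, `startup`), the proportional planner, and the performance model are all assumptions made up for the example, and the user's criterion is taken to be minimum predicted execution time.

```python
from itertools import combinations

def candidate_sets(resources, max_size):
    """Enumerate candidate resource sets: all non-empty subsets up to max_size."""
    for k in range(1, max_size + 1):
        yield from combinations(resources, k)

def plan_schedule(resource_set, work_units):
    """Hypothetical planner: split work in proportion to predicted speed."""
    total_speed = sum(r["speed"] for r in resource_set)
    return [(r["name"], work_units * r["speed"] / total_speed) for r in resource_set]

def predict_time(resource_set, schedule):
    """Hypothetical model: finish time of the slowest resource, including startup cost."""
    speed = {r["name"]: r["speed"] for r in resource_set}
    startup = {r["name"]: r["startup"] for r in resource_set}
    return max(startup[name] + work / speed[name] for name, work in schedule)

def best_schedule(resources, work_units, max_size=3):
    """Plan one schedule per candidate set; keep the lowest predicted time."""
    best = None
    for rset in candidate_sets(resources, max_size):
        sched = plan_schedule(rset, work_units)
        t = predict_time(rset, sched)
        if best is None or t < best[0]:
            best = (t, sched)
    return best  # (predicted_time, schedule) that would be deployed
```

In this toy setup a fast machine alone can beat the fast machine plus a slow one with a high startup cost, which is the kind of decision the resource-selection step exists to make.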

[Figure: AppLeS + application (Resource selection, Schedule Planning, Deployment), NWS, Grid Infrastructure, Resources]

Network Weather Service (Wolski, U. Tenn.)

• The NWS provides dynamic resource information for AppLeS

• NWS is a stand-alone system

• NWS

– monitors current system state

– provides best forecast of resource load from multiple models
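One way to read "best forecast from multiple models" is: run several predictors over the measurement history and report the one that has been most accurate so far. The sketch below assumes that reading. The three predictors, the absolute-error scoring, and the function name `best_forecast` are illustrative choices, not the NWS interface.

```python
from statistics import mean, median

# Three simple predictors over a measurement history (illustrative choices).
MODELS = {
    "last_value": lambda h: h[-1],
    "running_mean": lambda h: mean(h),
    "window_median": lambda h: median(h[-5:]),
}

def best_forecast(history):
    """Replay the history, score each model by cumulative absolute error,
    and return (model_name, forecast) from the model with the lowest error."""
    errors = {name: 0.0 for name in MODELS}
    for i in range(1, len(history)):
        past, actual = history[:i], history[i]
        for name, model in MODELS.items():
            errors[name] += abs(model(past) - actual)
    winner = min(errors, key=errors.get)
    return winner, MODELS[winner](history)

# CPU availability that jumps from 0.5 to 0.9 and stays there.
print(best_forecast([0.5, 0.5, 0.5, 0.9, 0.9, 0.9, 0.9]))
```

After a step change like the one in the usage line, the last-value predictor recovers fastest, so it accumulates the least error and its forecast is the one reported.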

[Figure: NWS components: Sensor Interface, Reporting Interface, Forecaster (Model 1, Model 2, Model 3)]

AppLeS Example: Jacobi2D

• Jacobi2D is a regular, iterative SPMD application

• Local communication using 5-point stencil

• AppLeS written in KeLP/MPI by Wolski

• Partitioning approach = strip decomposition

• Goal is to minimize execution time
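For readers unfamiliar with the kernel, here is a minimal sequential sketch of one Jacobi sweep and of a strip decomposition by rows. It is plain Python rather than KeLP/MPI, and the function names and the choice to hold boundary values fixed are assumptions for illustration.

```python
def jacobi_sweep(grid):
    """One Jacobi iteration: each interior point becomes the average of its
    four neighbors (the 5-point stencil). Boundary values are held fixed."""
    rows, cols = len(grid), len(grid[0])
    new = [row[:] for row in grid]
    for i in range(1, rows - 1):
        for j in range(1, cols - 1):
            new[i][j] = 0.25 * (grid[i - 1][j] + grid[i + 1][j]
                                + grid[i][j - 1] + grid[i][j + 1])
    return new

def strips(n_rows, row_counts):
    """Strip decomposition: contiguous (start, end) row ranges, one per processor."""
    if sum(row_counts) != n_rows:
        raise ValueError("row counts must cover the grid exactly")
    bounds, start = [], 0
    for count in row_counts:
        bounds.append((start, start + count))
        start += count
    return bounds
```

In a parallel run each processor would own one strip and exchange only its edge rows with its neighbors each iteration, which is why the communication is local.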

Jacobi2D AppLeS Resource Selector

• Feasible resources determined according to application-specific “desirability” metrics

– memory

– application-specific benchmark

• Desirability metric used to sort resources

• Feasible resource sets formed from initial subset of sorted desirability list

– Next step is to plan a schedule for each feasible resource set

– Scheduler will choose schedule with best predicted execution time
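The selection step can be sketched as filter, sort, then take prefixes. In the sketch below the field names `memory_mb` and `benchmark_s` and the use of benchmark time alone as the desirability metric are assumptions; the slides only say that memory and an application-specific benchmark are involved.

```python
def feasible_resource_sets(resources, min_memory_mb):
    """Drop machines with too little memory, sort the rest by benchmark time
    (lower is more desirable), and return every prefix of the sorted list."""
    eligible = [r for r in resources if r["memory_mb"] >= min_memory_mb]
    ranked = sorted(eligible, key=lambda r: r["benchmark_s"])
    return [ranked[:k] for k in range(1, len(ranked) + 1)]
```

Using prefixes keeps the number of candidate sets linear in the number of machines, so planning a schedule for each one stays cheap.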

Jacobi2D Performance Model and Schedule Planning

Execution time for ith strip

$$T_i = \mathrm{Area}_i \times \mathrm{Comp}_i + \mathrm{Comm}_i, \qquad \mathrm{Comp}_i = \frac{\mathrm{Oper}_i\,(\text{unloaded, per pt})}{\mathrm{load}_i}$$

where load = predicted percentage of CPU time available (NWS)

comm = time to send and receive messages factored by predicted BW (NWS)

AppLeS uses time-balancing to determine best partition on a given set of resources

Solve $T_1 = T_2 = \dots = T_p$ for $\{\mathrm{Area}_i\}$

[Figure: strips assigned to processors P1, P2, P3]
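Time balancing has a closed-form solution if the per-strip time is assumed to be linear in the strip area, T_i = Area_i × Comp_i + Comm_i, with the areas summing to the whole grid. The sketch below works under that assumption. The name `time_balance` and the argument layout are hypothetical, and `comp[i]` stands for the predicted time per point on processor i after dividing by its predicted CPU availability.

```python
def time_balance(total_area, comp, comm):
    """Solve T_1 = ... = T_p where T_i = area_i * comp[i] + comm[i]
    and sum(area_i) = total_area. Returns (common time T, list of areas)."""
    inv = [1.0 / c for c in comp]
    # Summing area_i = (T - comm[i]) / comp[i] over i and setting it to total_area gives T.
    t = (total_area + sum(m * v for m, v in zip(comm, inv))) / sum(inv)
    areas = [(t - m) * v for m, v in zip(comm, inv)]
    return t, areas

# A processor twice as slow per point gets half as much area.
print(time_balance(300, [1.0, 2.0], [0.0, 0.0]))
```

A negative area in the result means that processor's communication cost alone exceeds the balanced time, which is a signal to drop it from the resource set rather than to use the partition as is.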

Jacobi2D Experiments

• Experiments compare

– Compile-time block [HPF] partitioning

– Compile-time irregular strip partitioning [no NWS, no resource selection]

– Run-time strip AppLeS partitioning

• Runs for different partitioning methods performed back-to-back on production systems

• Average execution time recorded

• Distributed UCSD/SDSC platform: Sparcs, RS6000, Alpha Farm, SP-2

Jacobi2D AppLeS Experiments

• Representative Jacobi 2D AppLeS experiment

• Adaptive scheduling leverages deliverable performance of contended system

• Spike occurs when a gateway between PCL and SDSC goes down

• Subsequent AppLeS experiments avoid slow link

[Figure: Comparison of Execution Times. Execution time (seconds, 0 to 7) versus problem size (1000 to 2000) for Compile-time Blocked, Compile-time Irregular Strip, and Runtime partitioning]

Work-in-Progress: AppLeS Templates

• AppLeS-enabled applications perform well in multi-user environments.

• Methodology is right on target but …

– AppLeS must be integrated with application: labor-intensive and time-intensive

– You generally can’t just take an AppLeS and plug in a new application

Templates

• Current thrust is to develop AppLeS templates which

– target structurally similar classes of applications

– can be instantiated in a user-friendly timeframe

– provide good application performance

AppLeS Template

[Figure: AppLeS template with Application Module, Performance Module, Scheduling Module, and Deployment Module, connected through APIs, and the Network Weather Service]

Case Study: Parameter Sweep Template

• Parameter Sweeps = class of applications which are structured as multiple instances of an “experiment” with distinct parameter sets

• Independent experiments may share input files

• Examples:

– MCell

– INS2D

Application Model

Example Parameter Sweep Application: MCell

• MCell = General simulator for cellular microphysiology

• Uses Monte Carlo diffusion and chemical reaction algorithm in 3D to simulate complex biochemical interactions of molecules

– Molecular environment represented as 3D space in which trajectories of ligands against cell membranes tracked

• Researchers plan huge runs which will make it possible to model entire cells at molecular level.

– 100,000s of tasks

– 10s of Gbytes of output data

– Would like to perform execution-time computational steering, data analysis and visualization

PST AppLeS

• Template being developed by Henri Casanova and Graziano Obertelli

• Resource Selection:

– For small parameter sweeps, can dynamically select a performance-efficient number of target processors [Gary Shao]

– For large parameter sweeps, can assume that all resources may be used

Platform Model

[Figure: platform model showing the user’s host and storage, network links, storage, a cluster, and an MPP]

• Contingency Scheduling: Allocation developed by dynamically generating a Gantt chart for scheduling unassigned tasks

• Basic skeleton

1. Compute the next scheduling event

2. Create a Gantt Chart G

3. For each computation and file transfer currently underway, compute an estimate of its completion time and fill in the corresponding slots in G

4. Select a subset T of the tasks that have not started execution

5. Until each host has been assigned enough work, heuristically assign tasks to hosts, filling in slots in G

6. Implement schedule
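The skeleton above can be sketched as a single function that builds the Gantt chart for one scheduling event. Everything concrete here is an assumption: the argument shapes, the choice to consider all pending tasks in step 4, and the earliest-free-host rule in step 5. File transfers are left out to keep the example short.

```python
def schedule_event(now, next_event, hosts, running, pending, est_time):
    """One pass of the skeleton. Assumed inputs:
    running: {host: finish time of the task it is executing now}
    pending: list of task ids that have not started
    est_time(task, host): predicted run time
    Returns the Gantt chart G as {host: [(start, end, label), ...]}."""
    gantt = {h: [] for h in hosts}                     # step 2: empty chart
    free_at = {}
    for h in hosts:                                    # step 3: work already underway
        end = running.get(h, now)
        if end > now:
            gantt[h].append((now, end, "running"))
        free_at[h] = max(end, now)
    todo = list(pending)                               # step 4: here, every pending task
    # Step 5: stop once every host is busy through the next scheduling event.
    while todo and min(free_at.values()) < next_event:
        host = min(hosts, key=lambda h: free_at[h])    # placeholder heuristic
        task = todo.pop(0)
        end = free_at[host] + est_time(task, host)
        gantt[host].append((free_at[host], end, task))
        free_at[host] = end
    return gantt                                       # step 6 would dispatch these slots
```

Tasks left unassigned are simply reconsidered at the next scheduling event, using whatever updated predictions are available by then.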

Scheduling Parameter Sweeps

[Figure: Gantt chart G. Resources (network links, hosts of Cluster 1, hosts of Cluster 2) along one axis and time along the other; computation slots are filled in between successive scheduling events]

Parameter Sweep Heuristics

• Currently studying scheduling heuristics useful for parameter sweeps in Grid environments

• HCW 2000 paper compares several heuristics

– Min-Min [task/resource that can complete the earliest is assigned first]

– Max-Min [longest of task/earliest resource times assigned first]

– Sufferage [task that would “suffer” most if given a poor schedule assigned first, as computed by second-best minus best completion time]

– Extended Sufferage [minimal completion times computed for task on each cluster, sufferage heuristic applied to these]

– Workqueue [randomly chosen task assigned first]

• Criteria for evaluation:

– How sensitive are heuristics to location of shared input files and cost of data transmission?

– How sensitive are heuristics to inaccurate performance information?
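Three of the heuristics differ only in which task they pick next, so they can share one loop. The sketch below is a simplified list scheduler, not the code from the HCW 2000 study. `etc[t][h]` is a hypothetical table of estimated run times, file-transfer costs are ignored, and sufferage is taken to be the gap between a task's best and second-best completion times.

```python
def schedule(etc, heuristic="min-min"):
    """etc[t][h] = estimated run time of task t on host h.
    Returns ({task: host}, makespan)."""
    n_hosts = len(etc[0])
    ready = [0.0] * n_hosts              # time at which each host becomes free
    unassigned = set(range(len(etc)))
    assignment = {}
    while unassigned:
        best_key, choice = None, None
        for t in sorted(unassigned):
            # Completion time of task t on every host, earliest first.
            cts = sorted((ready[h] + etc[t][h], h) for h in range(n_hosts))
            best_ct, best_h = cts[0]
            if heuristic == "min-min":
                key = -best_ct           # earliest finisher goes first
            elif heuristic == "max-min":
                key = best_ct            # latest of the earliest finishers goes first
            elif heuristic == "sufferage":
                key = cts[1][0] - best_ct if n_hosts > 1 else 0.0
            else:
                raise ValueError(heuristic)
            if best_key is None or key > best_key:
                best_key, choice = key, (t, best_h, best_ct)
        t, h, ct = choice
        assignment[t] = h                # chosen task runs on its best host
        ready[h] = ct
        unassigned.remove(t)
    return assignment, max(ready)
```

Workqueue would replace the key with a random choice, and the extended variant would compute completion times per cluster so that a shared input file already present on a cluster counts in that cluster's favor.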

Preliminary PST/MCell Results

• Comparison of the performance of scheduling heuristics when it is up to 40 times more expensive to send a shared file across the network than it is to compute a task

• “Extended sufferage” scheduling heuristic takes advantage of file sharing to achieve good application performance

[Figure: results for Max-min, Workqueue, XSufferage, Sufferage, and Min-min]

Preliminary PST/MCell Results with “Quality of Information”

AppLeS in Context

• Application Scheduling

– Mars, Prophet/Gallop, MSHN, etc.

• Grid Programming Environments

– GrADS, Programmers’ Playground, VDCE, Nile, etc.

• Scheduling Services

– Globus GRAM, Legion Scheduler/Collection/Enactor

• Resource and High-Throughput Schedulers

– PBS, LSF, Maui Scheduler, Condor, etc.

• PSEs

– Nimrod, NEOS, NetSolve, Ninf

• Performance Monitoring, Prediction and Steering

– Autopilot, SciRun, Network Weather Service, Remos/Remulac, Cumulus

• AppLeS project contributes careful and useful study of adaptive scheduling for Grid environments

New Directions

• Quality of Information

– Adaptive Scheduling

– AppLePilot / GrADS

• Resource Economies

– Bushel of AppLeS

– UCSD Active Web

• Application Flexibility

– Computational Steering

– Co-allocation

– Target-less computing

Quality of Information

• How can we deal with imperfect or imprecise predictive information?

• Quantitative measures of qualitative performance attributes can improve scheduling and execution

– lifetime

– cost, overhead

– accuracy

– penalty

[Figure: A, B, and C shown against a time axis]

Using Quality of Information

• Stochastic Scheduling: Information about the variability of the target resources can be used by the scheduler to determine allocation

– Resources with more performance variability assigned slightly less work

– Preliminary experiments show that resulting schedule performs well and can be more predictable
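One simple way to give more variable resources slightly less work is to plan against a conservative time estimate instead of the mean. The sketch below assumes the estimate is mean plus a tunable multiple of the standard deviation. The function name, the `caution` parameter, and the proportional split are illustrative, not the method used in the SOR experiment.

```python
def stochastic_shares(total_work, mean_unit_time, stdev_unit_time, caution=1.0):
    """Split work inversely to a conservative per-unit time estimate,
    mean + caution * stdev, so that noisier resources receive less."""
    conservative = [m + caution * s for m, s in zip(mean_unit_time, stdev_unit_time)]
    weights = [1.0 / c for c in conservative]
    total = sum(weights)
    return [total_work * w / total for w in weights]

# Two machines with the same mean speed; the second is far less predictable.
print(stochastic_shares(100, [1.0, 1.0], [0.0, 0.5]))
```

Setting `caution` to zero recovers a split based on mean performance alone, so the parameter controls how much predictability is traded against expected speed.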

SOR Experiment [Jenny Schopf]

Current “Quality of Information” Projects

• “AppLepilot”

– Combines AppLeS adaptive scheduling methodology with “fuzzy logic” decision making mechanism from Autopilot

– Provides a framework in which to negotiate Grid services and promote application performance

– Collaboration with Reed, Aydt, Wolski

– Builds on the software being developed for GrADS

GrADS: Building an Adaptive Grid Application Development and Execution Environment

• System being developed as performance economy

– Performance contracts used to exchange information, bind resources, renegotiate execution environment

• Joint project with large team of researchers

Ken Kennedy, Andrew Chien, Rich Wolski, Ian Foster, Carl Kesselman

Jack Dongarra, Dennis Gannon, Dan Reed, Lennart Johnsson, Fran Berman

Grid Application Development System

[Figure: Grid Application Development System. Labeled components: PSE, source application, libraries, software components, whole-program compiler, Config. object program, Scheduler/Service Negotiator, negotiation, Grid runtime system (Globus), dynamic optimizer, real-time perf monitor, perf problem, performance feedback]

In Summary ...

• Development of AppLeS methodology, applications, templates, and models provides a careful investigation of adaptivity for emerging Grid environments

• Goal of current projects is to use real-world strategies to promote performance

– dynamic scheduling

– qualitative and quantitative modeling

– multi-agent environments

– resource economies

• AppLeS Corps:

– Fran Berman, UCSD

– Rich Wolski, U. Tenn

– Jeff Brown

– Henri Casanova

– Walfredo Cirne

– Holly Dail

– Marcio Faerman

– Jim Hayes

– Graziano Obertelli

– Gary Shao

– Otto Sievert

– Shava Smallen

– Alan Su

• Thanks to NSF, NASA, NPACI, DARPA, DoD

• AppLeS Home Page: http://apples.ucsd.edu