Tackling an Unknown Number of Features with Sketching · 4/7/15 2...


©Emily Fox 2015 1

Case Study 1: Estimating Click Probabilities

Tackling an Unknown Number of Features with Sketching

Machine Learning for Big Data CSE547/STAT548, University of Washington

Emily Fox April 9th, 2015

Problem 1: Complexity of LR Updates

• Logistic regression update:

$$w_i^{(t+1)} \leftarrow w_i^{(t)} + \eta_t \left\{ -\lambda w_i^{(t)} + x_i^{(t)} \left[ y^{(t)} - P(Y = 1 \mid x^{(t)}, w^{(t)}) \right] \right\}$$

• Complexity of updates:
  – Constant in number of data points
  – In number of features?

• Problem both in terms of computational complexity and sample complexity

• What can we do with very high dimensional feature spaces?
  – Kernels not always appropriate, or scalable
  – What else?
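The logistic regression update can be sketched in a few lines of Python. This is a minimal illustration, not code from the lecture: the names `lr_sgd_step`, `eta`, and `lam` are assumptions standing in for the update rule, the step size η_t, and the regularization weight λ. It shows that one step never looks at the other data points, but that the naive form touches every one of the d weights.

```python
import math

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def lr_sgd_step(w, x, y, eta, lam):
    """One regularized SGD step. w is a dense list, x a sparse dict {index: value}."""
    p = sigmoid(sum(w[i] * v for i, v in x.items()))  # P(Y = 1 | x, w)
    residual = y - p
    # The -lam * w_i term shrinks every coordinate, so this naive step costs O(d).
    return [w_i + eta * (-lam * w_i + x.get(i, 0.0) * residual)
            for i, w_i in enumerate(w)]

w = lr_sgd_step([0.0, 0.0, 0.0], {0: 1.0, 2: 2.0}, y=1, eta=0.1, lam=0.01)
```

Starting from zero weights the predicted probability is 0.5, so only the coordinates with non-zero features move in this first step.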


Problem 2: Unknown Number of Features

• For example, bag-of-words features for text data:
  – “Mary had a little lamb, little lamb…”

• What’s the dimensionality of x?
• What if we see a new word that was not in our vocabulary?
  – Obamacare
  – Theoretically, just keep going in your learning, and initialize $w_{\text{Obamacare}} = 0$
  – In practice, need to re-allocate memory, fix indices,… A big problem for Big Data

Count-Min Sketch: general case

• Keep p by m Count matrix

• p hash functions:
  – Just like in Bloom Filter, decrease errors with multiple hashes
  – Every time see string i:

$$\forall j \in \{1, \ldots, p\} : \mathrm{Count}[j, h_j(i)] \leftarrow \mathrm{Count}[j, h_j(i)] + 1$$


Querying the Count-Min Sketch

• Query Q(i)?
  – What is in Count[j,k]?
  – Thus:
  – Return:

$$\forall j \in \{1, \ldots, p\} : \mathrm{Count}[j, h_j(i)] \leftarrow \mathrm{Count}[j, h_j(i)] + 1$$
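A small Python sketch of the Count-Min update and query for positive counts may help here. It is written under stated assumptions: the class name `CountMinSketch` and the salted BLAKE2b hash family are illustrative choices, not something the slides specify. The query returns the minimum over the p rows, which is the usual Count-Min estimator: every cell holds the true count plus whatever collided with it, so each cell is an upper bound and the smallest one is the tightest.

```python
import hashlib

class CountMinSketch:
    """p-by-m count matrix with one hash function per row."""

    def __init__(self, p, m):
        self.p, self.m = p, m
        self.count = [[0] * m for _ in range(p)]

    def _h(self, j, item):
        # Assumed hash family: a BLAKE2b digest salted with the row index j.
        digest = hashlib.blake2b(item.encode("utf-8"), digest_size=8,
                                 salt=j.to_bytes(8, "little")).digest()
        return int.from_bytes(digest, "little") % self.m

    def update(self, item):
        # For all j: Count[j, h_j(i)] <- Count[j, h_j(i)] + 1
        for j in range(self.p):
            self.count[j][self._h(j, item)] += 1

    def query(self, item):
        # Collisions can only add to a cell, so take the smallest cell.
        return min(self.count[j][self._h(j, item)] for j in range(self.p))

stream = "mary had a little lamb little lamb".split()
sketch = CountMinSketch(p=4, m=16)
for word in stream:
    sketch.update(word)
```

With this construction `sketch.query(word)` is never below the true count of `word`, and memory stays at p × m cells no matter how many distinct strings arrive.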

But updates may be positive or negative

$$w_i^{(t+1)} \leftarrow w_i^{(t)} + \eta_t \left\{ -\lambda w_i^{(t)} + x_i^{(t)} \left[ y^{(t)} - P(Y = 1 \mid x^{(t)}, w^{(t)}) \right] \right\}$$

• Count-Min sketch for positive & negative case
  – $a_i$ no longer necessarily positive

• Update the same: Observe change $\Delta_i$ to element i:

$$\forall j \in \{1, \ldots, p\} : \mathrm{Count}[j, h_j(i)] \leftarrow \mathrm{Count}[j, h_j(i)] + \Delta_i$$

  – Each Count[j, h_j(i)] no longer an upper bound on $a_i$

• How do we make a prediction?

• Bound:
  – With probability at least $1 - \delta^{1/4}$, where $\|a\|_1 = \sum_i |a_i|$:

$$|a_i - \hat{a}_i| \le 3 \epsilon \|a\|_1$$
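The signed-update case can be sketched as follows. The slide leaves the prediction rule open; this example assumes the median over the p rows, which is the estimator usually paired with this kind of bound in the Count-Min literature. The names `SignedSketch` and `row_hash` are hypothetical.

```python
import hashlib
import statistics

def row_hash(j, item, m):
    # Assumed hash family: BLAKE2b salted with the row index j.
    digest = hashlib.blake2b(item.encode("utf-8"), digest_size=8,
                             salt=j.to_bytes(8, "little")).digest()
    return int.from_bytes(digest, "little") % m

class SignedSketch:
    def __init__(self, p, m):
        self.p, self.m = p, m
        self.count = [[0.0] * m for _ in range(p)]

    def update(self, item, delta):
        # Same update as before, but delta may be negative.
        for j in range(self.p):
            self.count[j][row_hash(j, item, self.m)] += delta

    def query(self, item):
        # Cells can now over- or under-shoot, so the minimum is no longer
        # meaningful; the median over rows is used instead (an assumption here).
        return statistics.median(
            self.count[j][row_hash(j, item, self.m)] for j in range(self.p))

sketch = SignedSketch(p=5, m=32)
sketch.update("obamacare", 3.0)
sketch.update("obamacare", -5.0)
estimate = sketch.query("obamacare")
```

With a single item in the sketch there are no collisions, so the estimate equals the running sum of its updates.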


Finally, Sketching for LR

$$w_i^{(t+1)} \leftarrow w_i^{(t)} + \eta_t \left\{ -\lambda w_i^{(t)} + x_i^{(t)} \left[ y^{(t)} - P(Y = 1 \mid x^{(t)}, w^{(t)}) \right] \right\}$$

• Never need to know size of vocabulary!
• At every iteration, update Count-Min matrix:

• Making a prediction:

• Scales to huge problems, great practical implications…

Hash Kernels

• Count-Min sketch not designed for negative updates
• Biased estimates of dot products

• Hash Kernels: Very simple, but powerful idea to remove bias
• Pick 2 hash functions:
  – h : Just like in Count-Min hashing
  – ξ : Sign hash function
    • Removes the bias found in Count-Min hashing (see homework)

• Define a “kernel”, a projection ϕ for x:

$$\phi_i(x) = \sum_{j : h(j) = i} \xi(j) \, x_j$$

Hash Kernels Preserve Dot Products

• Hash kernels provide unbiased estimate of dot-products!

• Variance decreases as O(1/m)

• Choosing m? For ε>0, if

$$m = O\left( \frac{\log \frac{N}{\delta}}{\epsilon^2} \right)$$

  – Under certain conditions…
  – Then, with probability at least 1-δ:

$$(1 - \epsilon) \|x - x'\|_2^2 \le \|\phi(x) - \phi(x')\|_2^2 \le (1 + \epsilon) \|x - x'\|_2^2$$
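The projection ϕ can be written directly from its definition. The sketch below is illustrative only: it assumes string feature names, derives both h and ξ from a single BLAKE2b digest (the sign from the least significant bit, the bucket from the remaining bits), and uses the hypothetical helper names `_digest`, `h`, `xi`, and `phi`.

```python
import hashlib

def _digest(key):
    return int.from_bytes(
        hashlib.blake2b(key.encode("utf-8"), digest_size=8).digest(), "little")

def h(key, m):
    # Bucket hash: drop the sign bit, then reduce to m buckets.
    return (_digest(key) >> 1) % m

def xi(key):
    # Sign hash: least significant bit mapped to +1 / -1.
    return 1 if _digest(key) & 1 else -1

def phi(x, m):
    """phi_i(x) = sum of xi(j) * x_j over all features j with h(j) = i."""
    out = [0.0] * m
    for key, value in x.items():
        out[h(key, m)] += xi(key) * value
    return out

x = {"mary": 1.0, "little": 2.0, "lamb": 2.0}
phi_x = phi(x, m=8)
```

Because colliding features enter a bucket with random signs, their cross terms cancel in expectation, which is where the unbiasedness of the dot-product estimate comes from. The projection is also linear in x, so it never needs to know the vocabulary in advance.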

Learning With Hash Kernels

• Given hash kernel of dimension m, specified by h and ξ
  – Learn m dimensional weight vector
• Observe data point x
  – Dimension does not need to be specified a priori!

• Compute ϕ(x):
  – Initialize ϕ(x)
  – For non-zero entries j of $x_j$:

• Use normal update as if observation were ϕ(x), e.g., for LR using SGD:

$$w_i^{(t+1)} \leftarrow w_i^{(t)} + \eta_t \left\{ -\lambda w_i^{(t)} + \phi_i(x^{(t)}) \left[ y^{(t)} - P(Y = 1 \mid \phi(x^{(t)}), w^{(t)}) \right] \right\}$$
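Putting the pieces together, learning with a hash kernel is ordinary logistic regression SGD on ϕ(x). The following is a minimal sketch under assumptions: the hashing scheme (one BLAKE2b digest split into a sign bit and a bucket), the function names, and the values of `eta`, `lam`, and `m` are all illustrative.

```python
import hashlib
import math

def _digest(key):
    return int.from_bytes(
        hashlib.blake2b(key.encode("utf-8"), digest_size=8).digest(), "little")

def phi(x, m):
    """Sparse hashed features {bucket: value}; x maps token -> value."""
    out = {}
    for key, value in x.items():
        d = _digest(key)
        sign = 1.0 if d & 1 else -1.0      # xi(j)
        bucket = (d >> 1) % m              # h(j)
        out[bucket] = out.get(bucket, 0.0) + sign * value
    return out

def predict(w, features):
    # Plain logistic function; fine for the small scores in this example.
    z = sum(w[i] * v for i, v in features.items())
    return 1.0 / (1.0 + math.exp(-z))

def sgd_step(w, x, y, eta=0.5, lam=0.0):
    # Normal LR update, treating phi(x) as the observation. Updates w in place.
    features = phi(x, len(w))
    residual = y - predict(w, features)
    for i in range(len(w)):
        w[i] += eta * (-lam * w[i] + features.get(i, 0.0) * residual)

m = 64
w = [0.0] * m                 # weight vector lives in the m-dimensional hashed space
doc = {"obamacare": 1.0}      # a token never seen before needs no re-indexing
before = predict(w, phi(doc, m))
sgd_step(w, doc, y=1)
after = predict(w, phi(doc, m))
```

The weight vector has fixed length m, so a new word only changes which buckets receive a contribution.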


Interesting Application of Hash Kernels: Multi-Task Learning

• Personalized click estimation for many users:
  – One global click prediction vector w:
    • But…
  – A click prediction vector $w_u$ per user u:
    • But…

• Multi-task learning: Simultaneously solve multiple related learning problems:
  – Use information from one learning problem to inform the others

• In our simple example, learn both a global w and one $w_u$ per user:
  – Prediction for user u:
  – If we know little about user u:
  – After a lot of data from user u:

Problems with Simple Multi-Task Learning

• Dealing with new user is annoying, just like dealing with new words in vocabulary

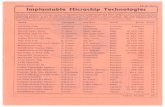

• Dimensionality of joint parameter space is HUGE, e.g. personalized email spam classification from Weinberger et al.:
  – 3.2M emails
  – 40M unique tokens in vocabulary
  – 430K users
  – 16T parameters needed for personalized classification!


Hash Kernels for Multi-Task Learning

• Simple, pretty solution with hash kernels:
  – View multi-task learning as (sparse) learning problem with (huge) joint data point z for point x and user u:

• Estimating click probability as desired:

• Address huge dimensionality, new words, and new users using hash kernels:

Simple Trick for Forming Projection ϕ(x,u)

• Observe data point x for user u
  – Dimension does not need to be specified a priori and user can be new!

• Compute ϕ(x,u):
  – Initialize ϕ(x,u)
  – For non-zero entries j of $x_j$:
    • E.g., j=‘Obamacare’
    • Need two contributions to ϕ:
      – Global contribution
      – Personalized contribution
    • Simply:

• Learn as usual using ϕ(x,u) instead of ϕ(x) in update function
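One way to realize the two contributions is to hash each token twice: once under its own name (global) and once under a user-prefixed name (personalized). The sketch below assumes that key scheme, in the spirit of Weinberger et al.; the `user_token` key format and the function names are hypothetical choices for illustration.

```python
import hashlib

def _digest(key):
    return int.from_bytes(
        hashlib.blake2b(key.encode("utf-8"), digest_size=8).digest(), "little")

def add_hashed(out, key, value):
    # out[h(key)] += xi(key) * value, with sign and bucket taken from one digest.
    d = _digest(key)
    out[(d >> 1) % len(out)] += (1.0 if d & 1 else -1.0) * value

def phi_multitask(x, user, m):
    """Hashed projection of the joint data point for features x and user u."""
    out = [0.0] * m
    for token, value in x.items():
        add_hashed(out, token, value)               # global contribution
        add_hashed(out, f"{user}_{token}", value)   # personalized contribution
    return out

features = phi_multitask({"obamacare": 1.0}, user="alice", m=32)
```

A new user or a new word simply produces keys that have not been hashed before, so nothing has to be re-allocated. A user-prefixed key could in principle coincide with a real token string; a production scheme would need a separator that cannot occur in tokens.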


Results from Weinberger et al. on Spam Classification: Effect of m

Results from Weinberger et al. on Spam Classification: Multi-Task Effect


What you need to know

• Hash functions
• Bloom filter
  – Test membership with some false positives, but very small number of bits per element
• Count-Min sketch
  – Positive counts: upper bound with nice rates of convergence
  – General case
• Application to logistic regression
• Hash kernels:
  – Sparse representation for feature vectors
  – Very simple, use two hash functions (can use one hash function…take least significant bit to define ξ)
  – Quickly generate projection ϕ(x)
  – Learn in projected space
• Multi-task learning:
  – Solve many related learning problems simultaneously
  – Very easy to implement with hash kernels
  – Significantly improve accuracy in some problems (if there is enough data from individual users)

Case Study 2: Document Retrieval

Task Description: Finding Similar Documents


Document Retrieval

• Goal: Retrieve documents of interest
• Challenges:
  – Tons of articles out there
  – How should we measure similarity?

Task 1: Find Similar Documents

• To begin…
  – Input: Query article
  – Output: Set of k similar articles


Document Representation

• Bag of words model

1-Nearest Neighbor

• Articles
• Query:
• 1-NN
  – Goal:
  – Formulation:


k-Nearest Neighbor

• Articles

$$X = \{x^1, \ldots, x^N\}, \quad x^i \in \mathbb{R}^d$$

• Query:

$$x \in \mathbb{R}^d$$

• k-NN
  – Goal:
  – Formulation:
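A brute-force k-NN query over the articles X and a query x can be sketched in a few lines of Python. This is an illustrative baseline, assuming Euclidean distance and small in-memory tuples; the function names are not from the slides.

```python
import heapq
import math

def euclidean(u, v):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))

def knn(X, query, k):
    """Indices of the k points in X closest to query, nearest first."""
    # nsmallest keeps a heap of size k, so a query costs O(N log k) comparisons.
    return heapq.nsmallest(k, range(len(X)),
                           key=lambda i: euclidean(X[i], query))

X = [(0.00, 0.00), (1.00, 4.31), (0.13, 2.85)]
nearest_one = knn(X, (0.2, 2.5), k=1)
nearest_two = knn(X, (0.2, 2.5), k=2)
```

Setting k = 1 recovers 1-NN. Every query scans all N points, which is the cost that tree-based search tries to avoid.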

Distance Metrics – Euclidean

$$d(u, v) = \sqrt{\sum_{i=1}^{d} (u_i - v_i)^2}$$

Or, more generally,

$$d(u, v) = \sqrt{\sum_{i=1}^{d} \sigma_i^2 (u_i - v_i)^2}$$

Equivalently,

$$d(u, v) = \sqrt{(u - v)' \Sigma (u - v)}$$

where

$$\Sigma = \begin{bmatrix} \sigma_1^2 & 0 & \cdots & 0 \\ 0 & \sigma_2^2 & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & \sigma_d^2 \end{bmatrix}$$

Other Metrics…
• Mahalanobis, Rank-based, Correlation-based, cosine similarity…
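The equivalence between the weighted sum and the quadratic form can be checked numerically. The snippet below is a small illustration with made-up vectors and weights; `sigma_sq` holds the diagonal entries σ_i² and `Sigma` is the corresponding matrix as nested lists.

```python
import math

def scaled_euclidean(u, v, sigma_sq):
    # sqrt( sum_i sigma_i^2 * (u_i - v_i)^2 )
    return math.sqrt(sum(s * (a - b) ** 2 for s, a, b in zip(sigma_sq, u, v)))

def quadratic_form_distance(u, v, Sigma):
    # sqrt( (u - v)' Sigma (u - v) ) for a general square matrix Sigma
    diff = [a - b for a, b in zip(u, v)]
    Sd = [sum(row[k] * diff[k] for k in range(len(diff))) for row in Sigma]
    return math.sqrt(sum(d * s for d, s in zip(diff, Sd)))

u, v = (3.0, 4.0), (0.0, 0.0)
plain = scaled_euclidean(u, v, (1.0, 1.0))        # ordinary Euclidean distance
weighted = scaled_euclidean(u, v, (4.0, 0.0))     # second feature ignored
matrix = quadratic_form_distance(u, v, [[4.0, 0.0], [0.0, 0.0]])
```

Setting a weight to zero removes a feature from the distance entirely, which is one way to see how strongly the choice of metric shapes the neighbors returned.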


Notable Distance Metrics (and their level sets)

L1 norm (absolute)

L∞ (max) norm

Scaled Euclidean (L2)

Mahalanobis (Σ is general sym pos def matrix, on previous slide = diagonal)

Euclidean Distance + Document Retrieval

• Recall distance metric

$$d(u, v) = \sqrt{\sum_{i=1}^{d} (u_i - v_i)^2}$$

• What if each document were α times longer?
  – Scale word count vectors
  – What happens to measure of similarity?

• Good to normalize vectors
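A quick numerical check makes the scaling question concrete. In this sketch the vectors, the factor `alpha`, and the choice of L2 normalization are illustrative assumptions; the slides only say that normalizing is a good idea.

```python
import math

def euclidean(u, v):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))

def scale(u, alpha):
    return [alpha * a for a in u]

def normalize(u):
    # Divide by the L2 norm so that document length no longer matters.
    norm = math.sqrt(sum(a * a for a in u))
    return [a / norm for a in u]

u, v = [1.0, 2.0, 0.0], [2.0, 0.0, 1.0]   # toy word-count vectors
alpha = 10.0

raw = euclidean(u, v)
raw_scaled = euclidean(scale(u, alpha), scale(v, alpha))
unit = euclidean(normalize(u), normalize(v))
unit_scaled = euclidean(normalize(scale(u, alpha)), normalize(scale(v, alpha)))
```

The raw distance grows by the factor alpha even though the documents have the same word proportions, while the distance between normalized vectors stays the same.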


Issues with Document Representation

• Word counts are bad for standard similarity metrics

• Term Frequency – Inverse Document Frequency (tf-idf)
  – Increase importance of rare words

TF-IDF

• Term frequency:

tf(t, d) =

  – Could also use {0, 1}, 1 + log f(t, d), …

• Inverse document frequency:

idf(t, D) =

• tf-idf:

tfidf(t, d, D) =

  – High for document d with high frequency of term t (high “term frequency”) and few documents containing term t in the corpus (high “inverse doc frequency”)
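Since the right-hand sides are left open on the slide, the sketch below commits to one common set of definitions and labels them as assumptions: tf is the raw count f(t, d), idf is log(|D| / (1 + number of documents containing t)), and tf-idf is their product. Other variants (binary tf, 1 + log f(t, d), unsmoothed idf) would slot into the same structure.

```python
import math

def tf(term, doc):
    # Assumed definition: raw count of the term in the document.
    return doc.count(term)

def idf(term, corpus):
    # Assumed definition: log(|D| / (1 + #docs containing the term)).
    containing = sum(1 for doc in corpus if term in doc)
    return math.log(len(corpus) / (1 + containing))

def tfidf(term, doc, corpus):
    return tf(term, doc) * idf(term, corpus)

corpus = [
    "mary had a little lamb little lamb".split(),
    "a lamb".split(),
    "a dog".split(),
]
rare = tfidf("little", corpus[0], corpus)   # frequent here, rare in the corpus
common = tfidf("a", corpus[0], corpus)      # appears in every document
```

Under these definitions a word that appears in every document gets a non-positive weight, while a word concentrated in one document is weighted up.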


Issues with Search Techniques

• Naïve approach: Brute force search
  – Given a query point $x$
  – Scan through each point $x^i$
  – O(N) distance computations per 1-NN query!
  – O(N log k) per k-NN query!

[Figure: 33 Distance Computations]

• What if N is huge??? (and many queries)

KD-Trees

• Smarter approach: kd-trees
  – Structured organization of documents
    • Recursively partitions points into axis aligned boxes.
  – Enables more efficient pruning of search space
    • Examine nearby points first.
    • Ignore any points that are further than the nearest point found so far.

• kd-trees work “well” in “low-medium” dimensions
  – We’ll get back to this…


KD-Tree Construction

| Pt | X    | Y    |
|----|------|------|
| 1  | 0.00 | 0.00 |
| 2  | 1.00 | 4.31 |
| 3  | 0.13 | 2.85 |
| …  | …    | …    |

• Start with a list of d-dimensional points.

KD-Tree Construction

• Split the points into 2 groups by:
  – Choosing dimension $d_j$ and value V (methods to be discussed…)
  – Separating the points into $x^i_{d_j} > V$ and $x^i_{d_j} \le V$.

X > .5?
  – NO: Pt 1 (0.00, 0.00), Pt 3 (0.13, 2.85), …
  – YES: Pt 2 (1.00, 4.31), …

KD-Tree Construction

• Consider each group separately and possibly split again (along same/different dimension).
  – Stopping criterion to be discussed…

X > .5?
  – YES: Pt 2 (1.00, 4.31), …
  – NO: Y > .1?
    – NO: Pt 1 (0.00, 0.00), …
    – YES: Pt 3 (0.13, 2.85), …


KD-Tree Construction

• Continue splitting points in each set
  – creates a binary tree structure
• Each leaf node contains a list of points

KD-Tree Construction

• Keep one additional piece of information at each node:
  – The (tight) bounds of the points at or below this node.

KD-Tree Construction

• Use heuristics to make splitting decisions:
  – Which dimension do we split along?
  – Which value do we split at?
  – When do we stop?

Many heuristics…

• median heuristic
• center-of-range heuristic
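The two named heuristics for choosing the split value are easy to compare side by side. In the sketch below, picking the widest dimension to split along is an assumption (a common choice, not something the slides state), and the function names are illustrative.

```python
import statistics

def widest_dimension(points):
    # Assumed rule for "which dimension": the one with the largest spread.
    d = len(points[0])
    return max(range(d),
               key=lambda j: max(p[j] for p in points) - min(p[j] for p in points))

def median_split(points, dim):
    # Median heuristic: half the points fall on each side.
    return statistics.median(p[dim] for p in points)

def center_of_range_split(points, dim):
    # Center-of-range heuristic: midpoint between the smallest and largest value.
    values = [p[dim] for p in points]
    return (min(values) + max(values)) / 2

points = [(0.00, 0.00), (1.00, 4.31), (0.13, 2.85)]
dim = widest_dimension(points)
x_median = median_split(points, 0)
x_center = center_of_range_split(points, 0)
```

The median keeps the tree balanced regardless of how the data are distributed, while the center of the range keeps the boxes evenly sized but can produce lopsided subtrees.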


Nearest Neighbor with KD Trees

• Traverse the tree looking for the nearest neighbor of the query point.
• Examine nearby points first:
  – Explore branch of tree closest to the query point first.
• When we reach a leaf node:
  – Compute the distance to each point in the node.
• Then backtrack and try the other branch at each node visited
• Each time a new closest node is found, update the distance bound
• Using the distance bound and bounding box of each node:
  – Prune parts of the tree that could NOT include the nearest neighbor
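The search procedure can be sketched end to end in Python. This is one possible realization under stated assumptions rather than the lecture's reference implementation: it splits the widest dimension at the median, stops at a hypothetical `leaf_size` of 2, and stores a tight bounding box per node. The last two points in the example are made up to give the tree something to split.

```python
import math

class Node:
    def __init__(self, points):
        d = len(points[0])
        # Tight bounding box of the points at or below this node.
        self.lo = [min(p[j] for p in points) for j in range(d)]
        self.hi = [max(p[j] for p in points) for j in range(d)]
        self.points = points            # kept only at leaves
        self.left = self.right = None

def build(points, leaf_size=2):
    node = Node(points)
    if len(points) > leaf_size:
        # Split the widest dimension at the median (assumed heuristics).
        dim = max(range(len(node.lo)), key=lambda j: node.hi[j] - node.lo[j])
        pts = sorted(points, key=lambda p: p[dim])
        mid = len(pts) // 2
        node.left = build(pts[:mid], leaf_size)
        node.right = build(pts[mid:], leaf_size)
        node.points = None
    return node

def box_distance(node, q):
    # Distance from q to the node's bounding box (0 if q lies inside it).
    return math.sqrt(sum(max(lo - x, 0.0, x - hi) ** 2
                         for lo, hi, x in zip(node.lo, node.hi, q)))

def nearest(node, q, best=(math.inf, None)):
    """Return (distance, point) of the nearest neighbor of q."""
    if box_distance(node, q) >= best[0]:
        return best                     # prune: nothing here can be closer
    if node.points is not None:         # leaf: check every point
        for p in node.points:
            dist = math.dist(p, q)
            if dist < best[0]:
                best = (dist, p)        # tighten the distance bound
        return best
    # Explore the closer branch first, then backtrack to the other one.
    for child in sorted((node.left, node.right),
                        key=lambda c: box_distance(c, q)):
        best = nearest(child, q, best)
    return best

points = [(0.00, 0.00), (1.00, 4.31), (0.13, 2.85), (0.80, 0.30), (0.40, 3.90)]
tree = build(points)
nearest_dist, nearest_point = nearest(tree, (0.2, 2.5))
```

The bounding-box distance is a lower bound on the distance to any point in the subtree, which is what makes pruning safe for exact search.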


Complexity

• For (nearly) balanced, binary trees...
• Construction
  – Size:
  – Depth:
  – Median + send points left right:
  – Construction time:
• 1-NN query
  – Traverse down tree to starting point:
  – Maximum backtrack and traverse:
  – Complexity range:
• Under some assumptions on distribution of points, we get O(log N) but exponential in d (see citations in reading)

Complexity


Complexity for N Queries

• Ask for nearest neighbor to each document
• Brute force 1-NN:
• kd-trees:

Inspections vs. N and d

[Figure: number of inspections plotted against N (0 to 10000) and against d (1 to 15)]

K-NN with KD Trees

• Exactly the same algorithm, but maintain distance as distance to furthest of current k nearest neighbors
• Complexity is:

Approximate K-NN with KD Trees

• Before: Prune when distance to bounding box > r
• Now: Prune when distance to bounding box > r/α
• Will prune more than allowed, but can guarantee that if we return a neighbor at distance r, then there is no neighbor closer than r/α.
• In practice this bound is loose…Can be closer to optimal.
• Saves lots of search time at little cost in quality of nearest neighbor.
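The change to the pruning rule is a one-line difference, sketched below. The function name `should_prune` and the numbers are illustrative; `box_distance` stands for the distance from the query to a node's bounding box and `r` for the distance to the best neighbor found so far.

```python
def should_prune(box_distance, r, alpha=1.0):
    """alpha = 1 gives exact search; alpha > 1 prunes more aggressively."""
    return box_distance > r / alpha

# A subtree whose box is 0.6 away, with a current best neighbor at distance 1.0:
exact = should_prune(0.6, r=1.0)                # must still be searched
approx = should_prune(0.6, r=1.0, alpha=2.0)    # skipped, since 0.6 > 1.0 / 2.0
```

Every skipped subtree was farther than r/α at the time it was pruned, and r only shrinks afterwards, which is why the returned neighbor at distance r is within a factor α of the true nearest distance.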


Wrapping Up – Important Points

kd-trees
• Tons of variants
  – On construction of trees (heuristics for splitting, stopping, representing branches…)
  – Other representational data structures for fast NN search (e.g., ball trees,…)

Nearest Neighbor Search
• Distance metric and data representation are crucial to answer returned

For both…
• High dimensional spaces are hard!
  – Number of kd-tree searches can be exponential in dimension
    • Rule of thumb… $N \gg 2^d$… Typically useless.
  – Distances are sensitive to irrelevant features
    • Most dimensions are just noise → Everything equidistant (i.e., everything is far away)
    • Need technique to learn what features are important for your task

What you need to know

• Document retrieval task
  – Document representation (bag of words)
  – tf-idf
• Nearest neighbor search
  – Formulation
  – Different distance metrics and sensitivity to choice
  – Challenges with large N
• kd-trees for nearest neighbor search
  – Construction of tree
  – NN search algorithm using tree
  – Complexity of construction and query
  – Challenges with large d


Acknowledgment

• This lecture contains some material from Andrew Moore’s excellent collection of ML tutorials:
  – http://www.cs.cmu.edu/~awm/tutorials
• In particular, see:
  – http://grist.caltech.edu/sc4devo/.../files/sc4devo_scalable_datamining.ppt