Sherman’s Theorem

AGI www.agi.com

Sherman’s Theorem: Fundamental Technology for ODTK

Jim Wright

Why?

Satisfaction of Sherman's Theorem guarantees that the mean-squared state estimate error on each state estimate component is minimized

Sherman Probability Density

[Figure: Sherman probability density function. Horizontal axis from -5 to 5; vertical axis from 0 to 0.3.]

Sherman Probability Distribution

[Figure: Sherman probability distribution function. Horizontal axis from -5 to 5; vertical axis from 0 to 1.]

Notational Convention Here

• Bold symbols denote known quantities (e.g., the optimal state estimate ΔX_{k+1|k+1}, obtained after processing the measurement residual Δy_{k+1|k})

• Non-bold symbols denote true unknown quantities (e.g., the error ΔX_{k+1|k} in the propagated state estimate X_{k+1|k})

Admissible Loss Function L

• L = L(ΔX_{k+1|k}), a scalar-valued function of state

• L(ΔX_{k+1|k}) ≥ 0; L(0) = 0

• L(ΔX_{k+1|k}) is a non-decreasing function of distance from the origin: lim_{ΔX → 0} L(ΔX) = 0

• L(-ΔX_{k+1|k}) = L(ΔX_{k+1|k})

Example of interest (squared state error):

L(ΔX_{k+1|k}) = (ΔX_{k+1|k})^T (ΔX_{k+1|k})
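The admissibility conditions above can be spot-checked numerically. The following is a minimal sketch only: the helper name `is_admissible_on_samples` and the sample vectors are assumptions made for illustration, and passing the check on a handful of points does not prove that a loss function is admissible.

```python
def squared_error_loss(dx):
    """Squared state error L(dx) = dx^T dx for a state-error vector dx."""
    return sum(c * c for c in dx)

def is_admissible_on_samples(loss, samples, tol=1e-12):
    """Spot-check the admissibility conditions on a finite set of sample vectors."""
    zero = [0.0] * len(samples[0])
    if abs(loss(zero)) > tol:                      # L(0) = 0
        return False
    for dx in samples:
        if loss(dx) < 0.0:                         # L(dx) >= 0
            return False
        neg = [-c for c in dx]
        if abs(loss(neg) - loss(dx)) > tol:        # L(-dx) = L(dx)
            return False
        # Non-decreasing with distance from the origin,
        # checked only along the ray through dx (half-way point vs. dx).
        if loss([0.5 * c for c in dx]) > loss(dx) + tol:
            return False
    return True

# Hypothetical sample state errors used only for this check.
samples = [[1.0, -2.0], [0.3, 0.4], [-5.0, 0.0]]
print(is_admissible_on_samples(squared_error_loss, samples))
```

A loss that is not symmetric, such as the first error component taken on its own, fails the same check.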

Performance Function J(ΔX_{k+1|k})

J(ΔX_{k+1|k}) = E{L(ΔX_{k+1|k})}

Goal: Minimize J(ΔX_{k+1|k}), the mean value of the loss on the unknown state error ΔX_{k+1|k} in the propagated state estimate X_{k+1|k}.

Example (mean-squared state error):

J(ΔX_{k+1|k}) = E{(ΔX_{k+1|k})^T (ΔX_{k+1|k})}

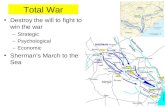

Aurora Response to CME

Minimize Mean-Squared State Error

[Figure: Atmospheric density overlay Δρ/ρ, simulated and smoothed (simDATA). Satellite: V3333. Processes: Smoother, Simulator. Horizontal axis: minutes after midnight, 01 Sep 2003 00:00:00.00 (6000 to 14000). Vertical axis: atmospheric density (-0.18 to 0.39). Time of first data point: 01 Sep 2003 00:00:00.00.]

Sherman’s Theorem

Given any admissible loss function L(ΔX_{k+1|k}) and any Sherman conditional probability distribution function F(ξ | Δy_{k+1|k}), the optimal estimate ΔX_{k+1|k+1} of ΔX_{k+1|k} is the conditional mean:

ΔX_{k+1|k+1} = E{ΔX_{k+1|k} | Δy_{k+1|k}}
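The statement can be illustrated numerically for a scalar error. The sketch below assumes a hypothetical symmetric, unimodal (triangular) discrete distribution standing in for the Sherman conditional distribution, and three admissible losses chosen for illustration; none of these specifics come from the slides. For each loss, a grid search over trial estimates finds the one with the smallest expected loss.

```python
import math

# Hypothetical symmetric, unimodal (triangular) conditional distribution centered on MEAN.
MEAN = 1.0
support = [MEAN + 0.5 * i for i in range(-6, 7)]
weights = [7 - abs(i) for i in range(-6, 7)]
probs = [w / sum(weights) for w in weights]

# Three admissible losses of the scalar estimation error e (illustrative choices).
losses = {
    "squared": lambda e: e * e,
    "absolute": lambda e: abs(e),
    "bounded": lambda e: 1.0 - math.exp(-e * e),
}

def expected_loss(loss, estimate):
    """Mean loss when `estimate` is used for the unknown error."""
    return sum(p * loss(x - estimate) for x, p in zip(support, probs))

# Trial estimates on a grid around the mean; pick the minimizer for each loss.
candidates = [MEAN + 0.25 * j for j in range(-12, 13)]
best = {
    name: min(candidates, key=lambda a, f=loss: expected_loss(f, a))
    for name, loss in losses.items()
}
conditional_mean = sum(p * x for x, p in zip(support, probs))
print(conditional_mean, best)
```

In this example every loss selects the same trial estimate, the mean of the distribution, which is the behavior the theorem describes.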

Doob’s First Theorem: Mean-Square State Error

If L(ΔX_{k+1|k}) = (ΔX_{k+1|k})^T (ΔX_{k+1|k})

Then the optimal estimate ΔX_{k+1|k+1} of ΔX_{k+1|k} is the conditional mean:

ΔX_{k+1|k+1} = E{ΔX_{k+1|k} | Δy_{k+1|k}}

The conditional distribution function need not be Sherman; that is, it need not be symmetric or convex.
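A small numerical illustration, using a deliberately skewed distribution whose values and probabilities are hypothetical: under squared loss the best trial estimate is still the mean, whereas a different admissible loss (absolute error) picks the median instead.

```python
# Hypothetical skewed (asymmetric) conditional distribution of a scalar state error.
values = [0.0, 1.0, 2.0, 10.0]
probs = [0.4, 0.3, 0.2, 0.1]

def expected_loss(loss, estimate):
    """Mean loss when `estimate` is used for the unknown error."""
    return sum(p * loss(x - estimate) for x, p in zip(values, probs))

candidates = [0.1 * j for j in range(101)]   # trial estimates 0.0, 0.1, ..., 10.0
best_squared = min(candidates, key=lambda a: expected_loss(lambda e: e * e, a))
best_absolute = min(candidates, key=lambda a: expected_loss(abs, a))
conditional_mean = sum(p * x for x, p in zip(values, probs))
print(conditional_mean, best_squared, best_absolute)
```

Here the squared-loss minimizer lands on the mean (1.7), while the absolute-loss minimizer lands on the median (1.0). The conditional mean is guaranteed for squared loss regardless of the distribution's shape, but for other losses the symmetry and convexity conditions matter.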

Doob’s Second Theorem: Gaussian ΔX_{k+1|k} and Δy_{k+1|k}

If:

ΔX_{k+1|k} and Δy_{k+1|k} have Gaussian probability distribution functions

Then the optimal estimate ΔX_{k+1|k+1} of ΔX_{k+1|k} is the conditional mean:

ΔX_{k+1|k+1} = E{ΔX_{k+1|k} | Δy_{k+1|k}}

Sherman’s Papers

• Sherman proved Sherman’s Theorem in his 1955 paper.

• Sherman demonstrated, in his 1958 paper, that the conditional mean gives equivalent optimal performance in all three cases.

Kalman

• Kalman’s filter measurement update algorithm is derived from the Gaussian probability distribution function

• Explicit filter measurement update algorithm not possible from Sherman probability distribution function
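For the Gaussian case the conditional mean has a closed form, which is what makes an explicit update possible. The sketch below assumes the simplest scalar model, Δy = ΔX + v, with ΔX ~ N(0, P) and v ~ N(0, R) independent, so that E{ΔX | Δy} = K Δy with K = P / (P + R). The function names and numbers are hypothetical, and this is not ODTK's implementation. A Monte Carlo run compares the mean-squared error of the Gaussian gain with two detuned gains.

```python
import random

def scalar_gain(P, R):
    """Kalman gain for the scalar model dy = dx + v, with var(dx) = P and var(v) = R."""
    return P / (P + R)

def mean_squared_error(gain, P, R, n=50_000, seed=0):
    """Monte Carlo mean-squared error of the linear estimate gain * dy."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n):
        dx = rng.gauss(0.0, P ** 0.5)        # true (unknown) state error
        dy = dx + rng.gauss(0.0, R ** 0.5)   # measurement residual
        total += (dx - gain * dy) ** 2
    return total / n

P, R = 4.0, 1.0                              # assumed variances for illustration
K = scalar_gain(P, R)                        # 0.8
for gain in (0.5 * K, K, 1.25 * K):
    print(gain, mean_squared_error(gain, P, R))
```

In this model the mean-squared error for any gain g is (1 - g)^2 P + g^2 R, which is smallest at g = K, so both the smaller and the larger gain should show a higher sampled error.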

Gaussian Hypothesis is Correct

• Don’t waste your time looking for a Sherman measurement update algorithm

• Post-filtered measurement residuals are zero mean Gaussian white noise

• Post-filtered state estimate errors are zero mean Gaussian white noise (due to Kalman’s linear map)

Measurement System Calibration

• Definition from Gaussian probability density function

• Radar range spacecraft tracking system example

Gaussian Probability Density N(μ, R^2) = N(0, 1/4)

[Figure: Gaussian N(0, 1/4) probability density function. Horizontal axis from -5 to 5; vertical axis from 0 to 0.6.]

Gaussian Probability Distribution N(μ, R^2) = N(0, 1/4)

[Figure: Gaussian N(0, 1/4) probability distribution function. Horizontal axis from -5 to 5; vertical axis from 0 to 1.]

Calibration (1)

N(μ, R^2) = N(0, [σ/σ_input]^2)

N(μ, R^2) = N(0, 1) ↔ σ_input = σ

σ_input > σ:

• Histogram peaked relative to N(0,1)

• Filter gain too small (measurement noise is overestimated)

• Estimate correction too small

• Mean-squared state error not minimized

Calibration (2)

σ_input < σ:

• Histogram flattened relative to N(0,1)

• Filter gain too large (measurement noise is underestimated)

• Estimate correction too large

• Residual editor discards good measurements – information lost

• Mean-squared state error not minimized
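The relation N(0, [σ/σ_input]^2) for the residual ratios can be sketched with simulated data. The example below assumes, for simplicity, that the residuals are zero-mean Gaussian with a standard deviation σ set entirely by measurement noise. The function names, the sample size, and the values σ = 2 and σ_input = 4 are hypothetical and chosen so that the ratios follow N(0, 1/4). The rescaling step is one simple way to recalibrate, not necessarily the procedure used to produce the histograms in this presentation.

```python
import random
import statistics

def residual_ratios(residuals, sigma_input):
    """Residuals divided by the sigma supplied to the filter."""
    return [r / sigma_input for r in residuals]

def recalibrated_sigma(residuals, sigma_input):
    """Scale sigma_input so the residual ratios have unit sample standard deviation."""
    return sigma_input * statistics.pstdev(residual_ratios(residuals, sigma_input))

rng = random.Random(1)
true_sigma = 2.0       # assumed for the simulation; unknown in practice
sigma_input = 4.0      # deliberately too large, so ratios follow N(0, 1/4)
residuals = [rng.gauss(0.0, true_sigma) for _ in range(4000)]

before = statistics.pstdev(residual_ratios(residuals, sigma_input))
sigma_new = recalibrated_sigma(residuals, sigma_input)
after = statistics.pstdev(residual_ratios(residuals, sigma_new))
print(before, sigma_new, after)
```

With σ_input too large the ratio spread comes out near 0.5 (a peaked histogram relative to N(0,1)); after rescaling, σ_input is close to the simulated σ and the ratio spread is 1 by construction.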

Before Calibration

[Figure: Range residual ratios distribution, percentage found compared with the normal distribution. Horizontal axis: number of sigmas (-4 to 4). Vertical axis: percentage found (0 to 160). Ground station: BOSS-A. Sample size: 4039. Satellite: V3333. Caption: Gaussian, non-N(0,1), peaked histogram of real range residual ratios before calibration.]

After Calibration

[Figure: Range residual ratios distribution, percentage found compared with the normal distribution. Horizontal axis: number of sigmas (-4 to 4). Vertical axis: percentage found (0 to 40). Ground station: BOSS-A. Sample size: 3988. Satellite: V3333. Caption: Gaussian N(0,1) histogram of real range residual ratios after calibration.]

Nonlinear Real-Time Multidimensional Estimation

• Requirements

- Validation

• Conclusions

- Operations

Requirements (1 of 2)

• Adopt Kalman’s linear map from measurement residuals to state estimate errors

• Measurement residuals must be calibrated: Identify and model constant mean biases and variances

• Estimate and remove time-varying measurement residual biases in real time

• Process measurements sequentially with time

• Apply Sherman's Theorem anew at each measurement time

Requirements (2 of 2)

• Specify a complete state estimate structure

• Propagate the state estimate with a rigorous nonlinear propagator

• Apply all known physics appropriately to state estimate propagation and to associated forcing function modeling error covariance

• Apply all sensor-dependent stochastic measurement sequence components to the measurement covariance model

Necessary & Sufficient Validation Requirements

• Satisfy rigorous necessary conditions for real data validation

• Satisfy rigorous sufficient conditions for realistic simulated data validation

Conclusions (1 of 2)

• Measurement residuals produced by optimal estimators are Gaussian white residuals with zero mean

• Gaussian white residuals with zero mean imply Gaussian white state estimate errors with zero mean (due to linear map)

• Sherman's Theorem is satisfied with unbiased Gaussian white residuals and Gaussian white state estimate errors

Conclusions (2 of 2)

• Sherman's Theorem maps measurement residuals to optimal state estimate error corrections via Kalman's linear measurement update operation

• Sherman's Theorem guarantees that the mean-squared state estimate error on each state estimate component is minimized

• Sherman's Theorem applies to all real-time estimation problems that have nonlinear measurement representations and nonlinear state estimate propagations

Operational Capabilities

• Calculate realistic state estimate error covariance functions (real-time filter and all smoothers)

• Calculate realistic state estimate accuracy performance assessment (real-time filter and all smoothers)

• Perform autonomous data editing (real-time filter, near-real-time fixed-lag smoother)