Sharding @ Instagram
SFPUG April 2012

Mike Krieger
Instagram

Monday April 23 12

me

- Co-founder, Instagram

- Previously: UX & Front-end @ Meebo

- Stanford HCI BS/MS

- @mikeyk on everything

pug

communicating and sharing in the real world

30+ million users in less than 2 years

at its heart, Postgres-driven

a glimpse at how a startup with a small eng team scaled with PG

a brief tangent

the beginning

2 product guys

no real back-end experience

(you should have seen my first time finding my way around psql)

analytics & python @ meebo

CouchDB

CrimeDesk SF

early mix: PG, Redis, Memcached

but were hosted on a single machine somewhere in LA

less powerful than my MacBook Pro

okay, we launched. now what?

25k signups in the first day

everything is on fire

best & worst day of our lives so far

load was through the roof

friday rolls around

not slowing down

let's move to EC2

PG upgrade to 9.0

scaling = replacing all components of a car while driving it at 100mph

this is the story of how our usage of PG has evolved

Phase 1: All ORM, all the time

why pg? at first, postgis.

manage.py syncdb

ORM made it too easy to not really think through primary keys

pretty good for getting off the ground

Media.objects.get(pk = 4)

first version of our feed (pre-launch)

friends = Relationships.objects.filter(source_user = user)

recent_photos = Media.objects.filter(user_id__in = friends).order_by('-pk')[0:20]

main feed at launch

Redis:
user 33 posts
friends = SMEMBERS followers:33
for user in friends:
    LPUSH feed:<user_id> <media_id>

for reading:
LRANGE feed:4 0 20
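
The fan-out-on-write feed above can be sketched in Python. This is a minimal illustration, not the production code: plain dicts and lists stand in for Redis so it runs without a server, and `FakeRedis`, `fan_out_post`, and `read_feed` are hypothetical names. Only the SMEMBERS / LPUSH / LRANGE semantics come from the slide.

```python
from collections import defaultdict


class FakeRedis:
    """In-memory stand-in for the Redis commands used on the slide."""

    def __init__(self):
        self.sets = defaultdict(set)
        self.lists = defaultdict(list)

    def sadd(self, key, member):
        self.sets[key].add(member)

    def smembers(self, key):
        return set(self.sets[key])

    def lpush(self, key, value):
        # LPUSH prepends, so the newest item is always at index 0.
        self.lists[key].insert(0, value)

    def lrange(self, key, start, stop):
        # Redis LRANGE treats `stop` as inclusive.
        # Negative indexes are not handled in this sketch.
        return self.lists[key][start:stop + 1]


def fan_out_post(r, author_id, media_id):
    """Push a new media ID onto the feed of every follower of the author."""
    for follower_id in r.smembers(f"followers:{author_id}"):
        r.lpush(f"feed:{follower_id}", media_id)


def read_feed(r, user_id, count=20):
    """Return the newest `count` media IDs in a user's feed."""
    return r.lrange(f"feed:{user_id}", 0, count - 1)


r = FakeRedis()
r.sadd("followers:33", 4)
r.sadd("followers:33", 7)
fan_out_post(r, 33, 1001)
fan_out_post(r, 33, 1002)
print(read_feed(r, 4))  # newest first
```

The write path does one LPUSH per follower, which is what makes reads a single cheap LRANGE. Note that because LRANGE is inclusive on both ends, `LRANGE feed:4 0 20` returns up to 21 items.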

canonical data: PG
feeds/lists/sets: Redis
object cache: memcache

post-launch

moved db to its own machine

at time, largest table: photo metadata

ran master-slave from the beginning with streaming replication

backups: stop the replica, xfs_freeze drives, and take EBS snapshot

AWS tradeoff

3 early problems we hit with PG

1. oh, that setting was the problem

work_mem

shared_buffers

cost_delay

2. Django-specific: <idle in transaction>

3. connection pooling

(we use PGBouncer)

somewhere in this crazy couple of months, Christophe to the rescue

photos kept growing and growing

and only 68GB of RAM on biggest machine in EC2

so what now?

Phase 2: Vertical Partitioning

django db routers make it pretty easy

def db_for_read(self, model, **hints):
    if model._meta.app_label == 'photos':
        return 'photodb'
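
A fuller router might look like the sketch below. It assumes the `photos` app label and `photodb` alias from the slide; the class name `PhotoRouter` and the `fake_model` helper are hypothetical, and the helper exists only so the example runs without a Django project. In a real project the class would be listed in the `DATABASE_ROUTERS` setting.

```python
from types import SimpleNamespace


class PhotoRouter:
    """Route every model in the `photos` app to its own database alias."""

    PHOTO_APP = "photos"   # app label from the slide
    PHOTO_DB = "photodb"   # database alias from the slide

    def db_for_read(self, model, **hints):
        if model._meta.app_label == self.PHOTO_APP:
            return self.PHOTO_DB
        return None  # no opinion: Django tries the next router, then the default

    def db_for_write(self, model, **hints):
        return self.db_for_read(model, **hints)

    def allow_relation(self, obj1, obj2, **hints):
        # Allow relations only when both objects sit on the same side of the
        # split. This is where tangled foreign keys start to hurt.
        in_photos_1 = obj1._meta.app_label == self.PHOTO_APP
        in_photos_2 = obj2._meta.app_label == self.PHOTO_APP
        return in_photos_1 == in_photos_2


def fake_model(app_label):
    """Hypothetical stand-in exposing only `_meta.app_label`."""
    return SimpleNamespace(_meta=SimpleNamespace(app_label=app_label))


router = PhotoRouter()
print(router.db_for_read(fake_model("photos")))
print(router.db_for_read(fake_model("users")))
```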

once you untangle all your foreign key relationships

(all of those user/user_id interchangeable calls bite you now)

plenty of time spent in PGFouine

read slaves (using streaming replicas) where we need to reduce contention

a few months later

photosdb > 60GB

precipitated by being on cloud hardware, but likely to have hit limit eventually either way

what now?

horizontal partitioning

Monday April 23 12

Phase 3 sharding

Monday April 23 12

ldquosurely wersquoll have hired someone experienced before we actually need

to shardrdquo

Monday April 23 12

never true about scaling

Monday April 23 12

1 choosing a method2 adapting the application

3 expanding capacity

Monday April 23 12

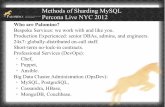

evaluated solutions

at the time, none were up to task of being our primary DB

NoSQL alternatives

Skype's sharding proxy

Range/date-based partitioning

did in Postgres itself

requirements

1. low operational & code complexity

2. easy expanding of capacity

3. low performance impact on application

schema-based logical sharding

many many many (thousands) of logical shards

that map to fewer physical ones

8 logical shards on 2 machines

user_id % 8 = logical shard

logical shards -> physical shard map

{ 0: A, 1: A, 2: A, 3: A, 4: B, 5: B, 6: B, 7: B }

8 logical shards on 2 -> 4 machines

user_id % 8 = logical shard

logical shards -> physical shard map

{ 0: A, 1: A, 2: C, 3: C, 4: B, 5: B, 6: D, 7: D }
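
The two maps above can be expressed directly in Python. A minimal sketch: the maps are copied from the slides, while the function names are hypothetical. The point it illustrates is that a user's logical shard never changes, only the machine that logical shard lives on.

```python
NUM_LOGICAL_SHARDS = 8

# Logical shard -> physical machine, as on the two slides.
MAP_TWO_MACHINES = {0: "A", 1: "A", 2: "A", 3: "A", 4: "B", 5: "B", 6: "B", 7: "B"}
MAP_FOUR_MACHINES = {0: "A", 1: "A", 2: "C", 3: "C", 4: "B", 5: "B", 6: "D", 7: "D"}


def logical_shard(user_id):
    """The logical shard is fixed for the lifetime of the user."""
    return user_id % NUM_LOGICAL_SHARDS


def machine_for(user_id, shard_map):
    """Look up which physical machine currently hosts the user's shard."""
    return shard_map[logical_shard(user_id)]


def moved_shards(old_map, new_map):
    """Logical shards whose physical home changes between two maps."""
    return sorted(s for s in old_map if old_map[s] != new_map[s])


user_id = 1234
print(logical_shard(user_id))
print(machine_for(user_id, MAP_TWO_MACHINES), machine_for(user_id, MAP_FOUR_MACHINES))
print(moved_shards(MAP_TWO_MACHINES, MAP_FOUR_MACHINES))
```

Going from two machines to four moves only half of the logical shards, and no row ever needs to be re-hashed.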

¡schemas!

all that 'public' stuff I'd been glossing over for 2 years

- database
  - schema
    - table
      - columns

spun up set of machines

using fabric, created thousands of schemas

machineA: shard0.photos_by_user, shard1.photos_by_user, shard2.photos_by_user, shard3.photos_by_user

machineB: shard4.photos_by_user, shard5.photos_by_user, shard6.photos_by_user, shard7.photos_by_user

(fabric or similar parallel task executor is essential)

application-side logic

SHARD_TO_DB = {}

SHARD_TO_DB[0] = 0
SHARD_TO_DB[1] = 0
SHARD_TO_DB[2] = 0
SHARD_TO_DB[3] = 0
SHARD_TO_DB[4] = 1
SHARD_TO_DB[5] = 1
SHARD_TO_DB[6] = 1
SHARD_TO_DB[7] = 1

instead of Django ORM, wrote really simple db abstraction layer

select/update/insert/delete

select(fields, table_name, shard_key, where_statement, where_parameters)

shard_key % num_logical_shards = shard_id
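
A minimal sketch of what such a `select` helper could do, assuming each logical shard is a schema named `shardN` as in the machineA/machineB slide. The function name `build_select` and the `id` / `user_id` columns are hypothetical, and the sketch only builds the query rather than executing it. `%s` is the placeholder style used by psycopg2.

```python
NUM_LOGICAL_SHARDS = 8
SHARD_TO_DB = {0: 0, 1: 0, 2: 0, 3: 0, 4: 1, 5: 1, 6: 1, 7: 1}


def build_select(fields, table_name, shard_key, where_statement, where_parameters):
    """Return (db_index, sql, params) for a query against one logical shard.

    `fields` and `table_name` are assumed to be trusted constants from
    application code; only `where_parameters` carries user data.
    """
    shard_id = shard_key % NUM_LOGICAL_SHARDS
    db_index = SHARD_TO_DB[shard_id]
    # Each logical shard is a Postgres schema, so the table is schema-qualified.
    sql = "SELECT {} FROM shard{}.{} WHERE {}".format(
        ", ".join(fields), shard_id, table_name, where_statement
    )
    return db_index, sql, tuple(where_parameters)


db_index, sql, params = build_select(
    ["id", "user_id"], "photos_by_user", 13, "user_id = %s", [13]
)
print(db_index)
print(sql)
print(params)
```

The caller would then run `sql` with `params` on a connection to database `db_index`. Keeping the shard lookup inside one helper means the rest of the application never needs to know where a user lives.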

in most cases, user_id for us

custom Django test runner to create/tear-down sharded DBs

most queries involve visiting handful of shards over one or two machines

if mapping across shards on single DB, UNION ALL to aggregate

clients to library pass in: ((shard_key, id), (shard_key, id)), etc.

library maps sub-selects to each shard and each machine
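
The grouping step can be sketched as follows. This is an illustration under assumptions, not the actual library: `build_multi_select` is a hypothetical name, the lookup is assumed to be by an `id` column, and schemas are assumed to be named `shardN`. It produces one UNION ALL query per machine, which could then be sent to the machines in parallel.

```python
from collections import defaultdict

NUM_LOGICAL_SHARDS = 8
SHARD_TO_DB = {0: 0, 1: 0, 2: 0, 3: 0, 4: 1, 5: 1, 6: 1, 7: 1}


def build_multi_select(fields, table_name, keys_and_ids):
    """Group (shard_key, id) pairs by machine and build one query per machine."""
    # machine -> logical shard -> list of ids
    by_db = defaultdict(lambda: defaultdict(list))
    for shard_key, row_id in keys_and_ids:
        shard_id = shard_key % NUM_LOGICAL_SHARDS
        by_db[SHARD_TO_DB[shard_id]][shard_id].append(row_id)

    queries = {}
    for db_index, shards in by_db.items():
        sub_selects, params = [], []
        for shard_id in sorted(shards):
            ids = shards[shard_id]
            placeholders = ", ".join(["%s"] * len(ids))
            # One sub-select per logical shard (schema) on this machine.
            sub_selects.append(
                "(SELECT {} FROM shard{}.{} WHERE id IN ({}))".format(
                    ", ".join(fields), shard_id, table_name, placeholders
                )
            )
            params.extend(ids)
        queries[db_index] = (" UNION ALL ".join(sub_selects), tuple(params))
    return queries


queries = build_multi_select(
    ["id"], "photos_by_user", [(1, 101), (9, 102), (2, 201), (5, 501)]
)
for db_index in sorted(queries):
    print(db_index, queries[db_index])
```

Shard keys 1 and 9 land in the same logical shard, so they share a sub-select; shards 1 and 2 share a machine, so they share a query.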

parallel execution (per-machine, at least)

-> Append (cost=0.00..973.72 rows=100 width=12) (actual time=0.290..160.035 rows=30 loops=1)
   -> Limit (cost=0.00..806.24 rows=30 width=12) (actual time=0.288..159.913 rows=14 loops=1)
      -> Index Scan Backward using index on table (cost=0.00..18651.04 rows=694 width=12) (actual time=0.286..159.885 rows=14 loops=1)
   -> Limit (cost=0.00..71.15 rows=30 width=12) (actual time=0.015..0.018 rows=1 loops=1)
      -> Index Scan using index on table (cost=0.00..101.99 rows=43 width=12) (actual time=0.013..0.014 rows=1 loops=1)
   (etc)

eventually would be nice to parallelize across machines

next challenge: unique IDs

requirements

1. should be time sortable without requiring a lookup

2. should be 64-bit

3. low operational complexity

surveyed the options

ticket servers

UUID

twitter snowflake

application-level IDs ala Mongo

hey, the db is already pretty good about incrementing sequences

[ 41 bits of time in millis ][ 13 bits for shard ID ][ 10 bits sequence ID ]


CREATE OR REPLACE FUNCTION insta5.next_id(OUT result bigint) AS $$
DECLARE
    our_epoch bigint := 1314220021721;
    seq_id bigint;
    now_millis bigint;
    shard_id int := 5;
BEGIN
    SELECT nextval('insta5.table_id_seq') % 1024 INTO seq_id;

    SELECT FLOOR(EXTRACT(EPOCH FROM clock_timestamp()) * 1000) INTO now_millis;
    result := (now_millis - our_epoch) << 23;
    result := result | (shard_id << 10);
    result := result | (seq_id);
END;
$$ LANGUAGE PLPGSQL;

pulling shard ID from ID

shard_id = (id ^ ((id >> 23) << 23)) >> 10
timestamp = EPOCH + (id >> 23)
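
The bit layout can be checked with a short Python round trip. This is a sketch that mirrors the 41/13/10 split and the epoch constant from the slides; `make_id` and `split_id` are hypothetical names, and the shard ID is assumed to be below 2**13. Note that XOR-ing away the timestamp bits leaves the low 23 bits (shard and sequence together), so a further right shift by 10 is needed to isolate the shard ID.

```python
OUR_EPOCH_MS = 1314220021721   # custom epoch from the PL/pgSQL function
SHARD_BITS = 13
SEQ_BITS = 10


def make_id(now_ms, shard_id, seq):
    """Pack time, shard and sequence into one integer that fits in 64 bits."""
    result = (now_ms - OUR_EPOCH_MS) << (SHARD_BITS + SEQ_BITS)
    result |= shard_id << SEQ_BITS
    result |= seq % (1 << SEQ_BITS)   # same as `nextval(...) % 1024`
    return result


def split_id(id_):
    """Recover (timestamp_ms, shard_id, seq) from a packed ID."""
    timestamp_ms = OUR_EPOCH_MS + (id_ >> (SHARD_BITS + SEQ_BITS))
    shard_id = (id_ >> SEQ_BITS) & ((1 << SHARD_BITS) - 1)
    seq = id_ & ((1 << SEQ_BITS) - 1)
    return timestamp_ms, shard_id, seq


now_ms = OUR_EPOCH_MS + 1000          # one second after the custom epoch
new_id = make_id(now_ms, shard_id=5, seq=5001)
print(new_id)
print(split_id(new_id))
```

Because the timestamp occupies the high bits, sorting by ID sorts by creation time to millisecond resolution, regardless of which shard produced the ID.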

pros: guaranteed unique in 64-bits, not much of a CPU overhead

cons: large IDs from the get-go

hundreds of millions of IDs generated with this scheme, no issues

well, what about "re-sharding"?

first recourse: pg_reorg

rewrites tables in index order

only requires brief locks for atomic table renames

20+GB savings on some of our dbs

especially useful on EC2

but sometimes you just have to reshard

streaming replication to the rescue

(btw, repmgr is awesome)

repmgr standby clone <master>

machineA: shard0.photos_by_user, shard1.photos_by_user, shard2.photos_by_user, shard3.photos_by_user

machineA': shard0.photos_by_user, shard1.photos_by_user, shard2.photos_by_user, shard3.photos_by_user

machineA: shard0.photos_by_user, shard1.photos_by_user, shard2.photos_by_user, shard3.photos_by_user

machineC: shard0.photos_by_user, shard1.photos_by_user, shard2.photos_by_user, shard3.photos_by_user

PGBouncer abstracts moving DBs from the app logic

can do this as long as you have more logical shards than physical ones
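
The map update that follows such a split can be sketched in Python. This assumes the new machine started life as a full streaming replica of the old one, so it already holds every schema; the function names and the choice to move the upper half of the shards are illustrative assumptions, not details from the talk.

```python
def split_machine(shard_map, source, target):
    """Return (new_map, moving): the upper half of `source`'s shards go to `target`.

    Assumes `target` was cloned from `source`, so it already has a copy of
    every schema being reassigned.
    """
    owned = sorted(s for s, m in shard_map.items() if m == source)
    if len(owned) < 2:
        raise ValueError("need at least two logical shards on a machine to split it")
    moving = owned[len(owned) // 2:]
    new_map = dict(shard_map)
    for shard in moving:
        new_map[shard] = target
    return new_map, moving


def schemas_to_drop(old_map, new_map, source, target):
    """Schemas that are dead weight on each machine once the new map is live."""
    moved = [s for s in old_map if old_map[s] == source and new_map[s] == target]
    kept = [s for s in old_map if old_map[s] == source and new_map[s] == source]
    return {
        source: [f"shard{s}" for s in sorted(moved)],
        target: [f"shard{s}" for s in sorted(kept)],
    }


old_map = {0: "A", 1: "A", 2: "A", 3: "A", 4: "B", 5: "B", 6: "B", 7: "B"}
new_map, moving = split_machine(old_map, "A", "C")
print(moving)
print(new_map)
print(schemas_to_drop(old_map, new_map, "A", "C"))
```

The guard in `split_machine` is the code form of the constraint on the slide: a machine holding a single logical shard cannot be split this way.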

beauty of schemas is that they are physically different files

(no IO hit when deleting, no 'swiss cheese')

downside: requires ~30 seconds of maintenance to roll out new schema mapping

(could be solved by having concept of "read-only" mode for some DBs)

not great for range-scans that would span across shards

latest project: follow graph

v1: simple DB table (source_id, target_id, status)

who do I follow? who follows me?
do I follow X? does X follow me?

DB was busy, so we started storing parallel version in Redis

follow_all(300 item list)

inconsistency

extra logic

so much extra logic

exposing your support team to the idea of cache invalidation

redesign took a page from twitter's book

PG can handle tens of thousands of requests, very light memcached caching

next steps

isolating services to minimize open conns

investigate physical hardware, etc., to reduce need to re-shard

Wrap up

you don't need to give up PG's durability & features to shard

continue to let the DB do what the DB is great at

"don't shard until you have to"

(but don't over-estimate how hard it will be, either)

scaled within constraints of the cloud

PG success story

(we're really excited about 9.2)

thanks! any qs?


pug

Monday April 23 12

communicating and sharing in the real world

Monday April 23 12

Monday April 23 12

Monday April 23 12

Monday April 23 12

30+ million users in less than 2 years

Monday April 23 12

at its heart Postgres-driven

Monday April 23 12

a glimpse at how a startup with a small eng team scaled with PG

Monday April 23 12

a brief tangent

Monday April 23 12

the beginning

Monday April 23 12

Text

Monday April 23 12

2 product guys

Monday April 23 12

no real back-end experience

Monday April 23 12

(you should have seen my first time finding my

way around psql)

Monday April 23 12

analytics amp python meebo

Monday April 23 12

CouchDB

Monday April 23 12

CrimeDesk SF

Monday April 23 12

Monday April 23 12

early mix PG Redis Memcached

Monday April 23 12

but were hosted on a single machine

somewhere in LA

Monday April 23 12

Monday April 23 12

less powerful than my MacBook Pro

Monday April 23 12

okay we launchednow what

Monday April 23 12

25k signups in the first day

Monday April 23 12

everything is on fire

Monday April 23 12

best amp worst day of our lives so far

Monday April 23 12

load was through the roof

Monday April 23 12

friday rolls around

Monday April 23 12

not slowing down

Monday April 23 12

letrsquos move to EC2

Monday April 23 12

Monday April 23 12

PG upgrade to 90

Monday April 23 12

Monday April 23 12

scaling = replacing all components of a car

while driving it at 100mph

Monday April 23 12

this is the story of how our usage of PG has

evolved

Monday April 23 12

Phase 1 All ORM all the time

Monday April 23 12

why pg at first postgis

Monday April 23 12

managepy syncdb

Monday April 23 12

ORM made it too easy to not really think through

primary keys

Monday April 23 12

pretty good for getting off the ground

Monday April 23 12

Mediaobjectsget(pk = 4)

Monday April 23 12

first version of our feed (pre-launch)

Monday April 23 12

friends = Relationshipsobjectsfilter(source_user = user)

recent_photos = Mediaobjectsfilter(user_id__in = friends)order_by(lsquo-pkrsquo)[020]

Monday April 23 12

main feed at launch

Monday April 23 12

Redis user 33 postsfriends = SMEMBERS followers33for user in friends LPUSH feedltuser_idgt ltmedia_idgt

for readingLRANGE feed4 0 20

Monday April 23 12

canonical data PGfeedslistssets Redis

object cache memcache

Monday April 23 12

post-launch

Monday April 23 12

moved db to its own machine

Monday April 23 12

at time largest table photo metadata

Monday April 23 12

ran master-slave from the beginning with

streaming replication

Monday April 23 12

backups stop the replica xfs_freeze drives and take EBS snapshot

Monday April 23 12

AWS tradeoff

Monday April 23 12

3 early problems we hit with PG

Monday April 23 12

1 oh that setting was the problem

Monday April 23 12

work_mem

Monday April 23 12

shared_buffers

Monday April 23 12

cost_delay

Monday April 23 12

2 Django-specific ltidle in transactiongt

Monday April 23 12

3 connection pooling

Monday April 23 12

(we use PGBouncer)

Monday April 23 12

somewhere in this crazy couple of months

Christophe to the rescue

Monday April 23 12

photos kept growing and growing

Monday April 23 12

and only 68GB of RAM on biggest machine in EC2

Monday April 23 12

so what now

Monday April 23 12

Phase 2 Vertical Partitioning

Monday April 23 12

django db routers make it pretty easy

Monday April 23 12

def db_for_read(self model) if app_label == photos return photodb

Monday April 23 12

once you untangle all your foreign key

relationships

Monday April 23 12

(all of those useruser_id interchangeable calls bite

you now)

Monday April 23 12

plenty of time spent in PGFouine

Monday April 23 12

read slaves (using streaming replicas) where

we need to reduce contention

Monday April 23 12

a few months later

Monday April 23 12

photosdb gt 60GB

Monday April 23 12

precipitated by being on cloud hardware but likely to have hit limit eventually

either way

Monday April 23 12

what now

Monday April 23 12

horizontal partitioning

Monday April 23 12

Phase 3 sharding

Monday April 23 12

ldquosurely wersquoll have hired someone experienced before we actually need

to shardrdquo

Monday April 23 12

never true about scaling

Monday April 23 12

1 choosing a method2 adapting the application

3 expanding capacity

Monday April 23 12

evaluated solutions

Monday April 23 12

at the time none were up to task of being our

primary DB

Monday April 23 12

NoSQL alternatives

Monday April 23 12

Skypersquos sharding proxy

Monday April 23 12

Rangedate-based partitioning

Monday April 23 12

did in Postgres itself

Monday April 23 12

requirements

Monday April 23 12

1 low operational amp code complexity

Monday April 23 12

2 easy expanding of capacity

Monday April 23 12

3 low performance impact on application

Monday April 23 12

schema-based logical sharding

Monday April 23 12

many many many (thousands) of logical

shards

Monday April 23 12

that map to fewer physical ones

Monday April 23 12

8 logical shards on 2 machines

user_id 8 = logical shard

logical shards -gt physical shard map

0 A 1 A 2 A 3 A 4 B 5 B 6 B 7 B

Monday April 23 12

8 logical shards on 2 4 machines

user_id 8 = logical shard

logical shards -gt physical shard map

0 A 1 A 2 C 3 C 4 B 5 B 6 D 7 D

Monday April 23 12

iexclschemas

Monday April 23 12

all that lsquopublicrsquo stuff Irsquod been glossing over for 2

years

Monday April 23 12

- database - schema - table - columns

Monday April 23 12

spun up set of machines

Monday April 23 12

using fabric created thousands of schemas

Monday April 23 12

machineA shard0 photos_by_user shard1 photos_by_user shard2 photos_by_user shard3 photos_by_user

machineB shard4 photos_by_user shard5 photos_by_user shard6 photos_by_user shard7 photos_by_user

Monday April 23 12

(fabric or similar parallel task executor is

essential)

Monday April 23 12

application-side logic

Monday April 23 12

SHARD_TO_DB =

SHARD_TO_DB[0] = 0SHARD_TO_DB[1] = 0SHARD_TO_DB[2] = 0SHARD_TO_DB[3] = 0SHARD_TO_DB[4] = 1SHARD_TO_DB[5] = 1SHARD_TO_DB[6] = 1SHARD_TO_DB[7] = 1

Monday April 23 12

instead of Django ORM wrote really simple db

abstraction layer

Monday April 23 12

selectupdateinsertdelete

Monday April 23 12

select(fields table_name shard_key where_statement where_parameters)

Monday April 23 12

select(fields table_name shard_key where_statement where_parameters)

shard_key num_logical_shards = shard_id

Monday April 23 12

in most cases user_id for us

Monday April 23 12

custom Django test runner to createtear-down sharded DBs

Monday April 23 12

most queries involve visiting handful of shards over one or two machines

if mapping across shards on single DB, UNION ALL to aggregate

clients to library pass in: ((shard_key, id), (shard_key, id)) etc

library maps sub-selects to each shard and each machine

parallel execution (per-machine, at least)

-> Append (cost=0.00..973.72 rows=100 width=12) (actual time=0.290..160.035 rows=30 loops=1)
   -> Limit (cost=0.00..806.24 rows=30 width=12) (actual time=0.288..159.913 rows=14 loops=1)
      -> Index Scan Backward using index on table (cost=0.00..18651.04 rows=694 width=12) (actual time=0.286..159.885 rows=14 loops=1)
   -> Limit (cost=0.00..71.15 rows=30 width=12) (actual time=0.015..0.018 rows=1 loops=1)
      -> Index Scan using index on table (cost=0.00..101.99 rows=43 width=12) (actual time=0.013..0.014 rows=1 loops=1)
   (etc)

eventually would be nice to parallelize across machines
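One way the library side could group `(shard_key, id)` pairs, sketched under assumptions: `plan_multi_get`, the `shardN` schema naming and the `id IN (...)` lookup are all hypothetical, and the sketch stops at building one UNION ALL statement per machine rather than executing anything.

```python
from collections import defaultdict

NUM_LOGICAL_SHARDS = 8
SHARD_TO_DB = {0: 0, 1: 0, 2: 0, 3: 0, 4: 1, 5: 1, 6: 1, 7: 1}


def plan_multi_get(pairs, table_name="photos_by_user"):
    """Group (shard_key, id) pairs by machine: one UNION ALL query per machine."""
    ids_by_shard = defaultdict(list)
    for shard_key, row_id in pairs:
        ids_by_shard[shard_key % NUM_LOGICAL_SHARDS].append(row_id)

    selects_by_db = defaultdict(list)
    params_by_db = defaultdict(list)
    for shard_id in sorted(ids_by_shard):
        db_index = SHARD_TO_DB[shard_id]
        ids = ids_by_shard[shard_id]
        placeholders = ", ".join(["%s"] * len(ids))
        # One sub-select per logical shard (schema) on that machine.
        selects_by_db[db_index].append(
            f"(SELECT * FROM shard{shard_id}.{table_name} WHERE id IN ({placeholders}))"
        )
        params_by_db[db_index].extend(ids)

    return {
        db_index: (" UNION ALL ".join(selects), tuple(params_by_db[db_index]))
        for db_index, selects in selects_by_db.items()
    }


if __name__ == "__main__":
    for db_index, (sql, params) in plan_multi_get([(1, 101), (9, 102), (2, 201), (5, 501)]).items():
        print(db_index, sql, params)
```

Each machine gets a single statement, which is the point where per-machine parallel execution could be added.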

next challenge: unique IDs

requirements

1. should be time sortable without requiring a lookup

2. should be 64-bit

3. low operational complexity

surveyed the options

ticket servers

UUID

twitter snowflake

application-level IDs à la Mongo

hey, the db is already pretty good about incrementing sequences

[ 41 bits of time in millis ][ 13 bits for shard ID ][ 10 bits sequence ID ]

CREATE OR REPLACE FUNCTION insta5.next_id(OUT result bigint) AS $$
DECLARE
    our_epoch bigint := 1314220021721;  -- custom epoch, in milliseconds
    seq_id bigint;
    now_millis bigint;
    shard_id int := 5;                  -- one function per schema / logical shard
BEGIN
    -- low 10 bits: per-shard sequence, wrapped at 1024
    SELECT nextval('insta5.table_id_seq') % 1024 INTO seq_id;

    SELECT FLOOR(EXTRACT(EPOCH FROM clock_timestamp()) * 1000) INTO now_millis;
    result := (now_millis - our_epoch) << 23;  -- time above the 23 low bits
    result := result | (shard_id << 10);       -- shard ID above the sequence
    result := result | (seq_id);
END;
$$ LANGUAGE PLPGSQL;

pulling shard ID from ID

shard_id = (id ^ ((id >> 23) << 23)) >> 10
timestamp = EPOCH + (id >> 23)
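The bit layout can be checked with a small Python sketch. It mirrors the 41/13/10 split and the epoch constant from the slides; the function names `make_id` and `split_id` are made up here, and masking the shard ID to 13 bits is an addition of this sketch, not something the PL/pgSQL function does.

```python
OUR_EPOCH_MS = 1314220021721  # custom epoch used by the slide's function

TIME_SHIFT = 23   # 13 shard bits + 10 sequence bits sit below the timestamp
SHARD_SHIFT = 10  # 10 sequence bits sit below the shard ID
SHARD_MASK = (1 << 13) - 1
SEQ_MASK = (1 << 10) - 1


def make_id(now_millis, shard_id, sequence_value):
    """Pack time, shard and sequence in the same order as the slide."""
    result = (now_millis - OUR_EPOCH_MS) << TIME_SHIFT
    result |= (shard_id & SHARD_MASK) << SHARD_SHIFT  # mask is this sketch's addition
    result |= sequence_value % 1024
    return result


def split_id(id_value):
    """Recover (timestamp in ms, shard ID, sequence bits) from an ID."""
    timestamp_ms = OUR_EPOCH_MS + (id_value >> TIME_SHIFT)
    shard_id = (id_value >> SHARD_SHIFT) & SHARD_MASK
    sequence_bits = id_value & SEQ_MASK
    return timestamp_ms, shard_id, sequence_bits


if __name__ == "__main__":
    new_id = make_id(1387263000000, 1341, 5001)
    print(new_id, split_id(new_id))
```

Because the timestamp occupies the highest bits, an ID created one millisecond later always compares greater, whatever shard or sequence value it carries.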

pros: guaranteed unique in 64 bits, not much of a CPU overhead

cons: large IDs from the get-go

hundreds of millions of IDs generated with this scheme, no issues

well, what about "re-sharding"?

first recourse: pg_reorg

rewrites tables in index order

only requires brief locks for atomic table renames

20+ GB savings on some of our dbs

especially useful on EC2

but sometimes you just have to reshard

streaming replication to the rescue

(btw, repmgr is awesome)

repmgr standby clone <master>

machineA:
    shard0: photos_by_user
    shard1: photos_by_user
    shard2: photos_by_user
    shard3: photos_by_user

machineA':
    shard0: photos_by_user
    shard1: photos_by_user
    shard2: photos_by_user
    shard3: photos_by_user

machineA:
    shard0: photos_by_user
    shard1: photos_by_user
    shard2: photos_by_user
    shard3: photos_by_user

machineC:
    shard0: photos_by_user
    shard1: photos_by_user
    shard2: photos_by_user
    shard3: photos_by_user

PGBouncer abstracts moving DBs from the app logic

can do this as long as you have more logical shards than physical ones
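The bookkeeping after cloning a machine can be sketched as follows. This is an assumption-laden illustration: `split_machine` is a hypothetical helper, and the choice to move the upper half of the shards is arbitrary. It shows why a machine holding a single logical shard cannot be split this way.

```python
def split_machine(shard_map, old, new):
    """Move the upper half of `old`'s logical shards to its clone `new`.

    Returns (new_map, schemas_to_drop); schemas_to_drop lists, per machine,
    the cloned schemas that machine no longer serves.
    """
    shards = sorted(s for s, machine in shard_map.items() if machine == old)
    if len(shards) < 2:
        raise ValueError(f"{old} holds a single logical shard and cannot be split")
    half = len(shards) // 2
    staying, moving = shards[:half], shards[half:]

    new_map = dict(shard_map)  # leave the caller's map untouched
    for shard in moving:
        new_map[shard] = new

    schemas_to_drop = {
        old: [f"shard{s}" for s in moving],
        new: [f"shard{s}" for s in staying],
    }
    return new_map, schemas_to_drop


if __name__ == "__main__":
    two_machines = {0: "A", 1: "A", 2: "A", 3: "A", 4: "B", 5: "B", 6: "B", 7: "B"}
    after_a, drops = split_machine(two_machines, "A", "C")
    print(after_a, drops)
```

Once the new map is rolled out, each machine still has a full copy of the other's schemas, and those leftovers are what `schemas_to_drop` lists.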

beauty of schemas is that they are physically different files

(no IO hit when deleting, no 'swiss cheese')

downside: requires ~30 seconds of maintenance to roll out new schema mapping

(could be solved by having concept of "read-only" mode for some DBs)

not great for range-scans that would span across shards

latest project: follow graph

v1: simple DB table (source_id, target_id, status)

who do I follow? who follows me?

do I follow X? does X follow me?
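The four questions map onto one three-column table. The sketch below uses Python's built-in `sqlite3` as a stand-in for Postgres so that it runs anywhere; the table name `follows`, the index, the `'active'` status value and the helper names are all assumptions, since the slides only give the column names.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # stand-in for the real Postgres database
conn.execute(
    "CREATE TABLE follows ("
    " source_id INTEGER NOT NULL,"
    " target_id INTEGER NOT NULL,"
    " status TEXT NOT NULL,"
    " PRIMARY KEY (source_id, target_id))"
)
# Second index so "who follows me?" does not scan the whole table.
conn.execute("CREATE INDEX follows_by_target ON follows (target_id, source_id)")
conn.executemany(
    "INSERT INTO follows VALUES (?, ?, 'active')",
    [(1, 2), (1, 3), (2, 1), (4, 1)],
)


def following(user_id):
    """Who do I follow?"""
    rows = conn.execute(
        "SELECT target_id FROM follows"
        " WHERE source_id = ? AND status = 'active' ORDER BY target_id",
        (user_id,),
    )
    return [row[0] for row in rows]


def followers(user_id):
    """Who follows me?"""
    rows = conn.execute(
        "SELECT source_id FROM follows"
        " WHERE target_id = ? AND status = 'active' ORDER BY source_id",
        (user_id,),
    )
    return [row[0] for row in rows]


def follows(source_id, target_id):
    """Do I follow X? Swap the arguments for: does X follow me?"""
    row = conn.execute(
        "SELECT 1 FROM follows"
        " WHERE source_id = ? AND target_id = ? AND status = 'active'",
        (source_id, target_id),
    ).fetchone()
    return row is not None
```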

DB was busy, so we started storing parallel version in Redis

follow_all(300 item list)

inconsistency

extra logic

so much extra logic

exposing your support team to the idea of cache invalidation

redesign took a page from twitter's book

PG can handle tens of thousands of requests, very light memcached caching

next steps

isolating services to minimize open conns

investigate physical hardware etc. to reduce need to re-shard

Wrap up

you don't need to give up PG's durability & features to shard

continue to let the DB do what the DB is great at

"don't shard until you have to"

(but don't over-estimate how hard it will be, either)

scaled within constraints of the cloud

PG success story

(we're really excited about 9.2)

thanks! any qs?





Monday April 23 12

Monday April 23 12

30+ million users in less than 2 years

Monday April 23 12

at its heart Postgres-driven

Monday April 23 12

a glimpse at how a startup with a small eng team scaled with PG

Monday April 23 12

a brief tangent

Monday April 23 12

the beginning

Monday April 23 12

Text

Monday April 23 12

2 product guys

Monday April 23 12

no real back-end experience

Monday April 23 12

(you should have seen my first time finding my

way around psql)

Monday April 23 12

analytics amp python meebo

Monday April 23 12

CouchDB

Monday April 23 12

CrimeDesk SF

Monday April 23 12

Monday April 23 12

early mix PG Redis Memcached

Monday April 23 12

but were hosted on a single machine

somewhere in LA

Monday April 23 12

Monday April 23 12

less powerful than my MacBook Pro

Monday April 23 12

okay we launchednow what

Monday April 23 12

25k signups in the first day

Monday April 23 12

everything is on fire

Monday April 23 12

best amp worst day of our lives so far

Monday April 23 12

load was through the roof

Monday April 23 12

friday rolls around

Monday April 23 12

not slowing down

Monday April 23 12

letrsquos move to EC2

Monday April 23 12

Monday April 23 12

PG upgrade to 90

Monday April 23 12

Monday April 23 12

scaling = replacing all components of a car

while driving it at 100mph

Monday April 23 12

this is the story of how our usage of PG has

evolved

Monday April 23 12

Phase 1 All ORM all the time

Monday April 23 12

why pg at first postgis

Monday April 23 12

managepy syncdb

Monday April 23 12

ORM made it too easy to not really think through

primary keys

Monday April 23 12

pretty good for getting off the ground

Monday April 23 12

Mediaobjectsget(pk = 4)

Monday April 23 12

first version of our feed (pre-launch)

Monday April 23 12

friends = Relationshipsobjectsfilter(source_user = user)

recent_photos = Mediaobjectsfilter(user_id__in = friends)order_by(lsquo-pkrsquo)[020]

main feed at launch

Redis: user 33 posts
friends = SMEMBERS followers:33
for user in friends: LPUSH feed:<user_id> <media_id>

for reading: LRANGE feed:4 0 20
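
The fan-out-on-write pattern on this slide can be sketched in a few lines. This is only an illustration: it uses in-memory Python containers as stand-ins for the Redis set and lists, and the function names (`fan_out_post`, `read_feed`) are hypothetical, not taken from the talk.

```python
from collections import defaultdict

# In-memory stand-ins for the Redis structures (assumption: production code
# would issue SMEMBERS / LPUSH / LRANGE through a Redis client instead).
followers = defaultdict(set)   # "followers:<user_id>" -> set of follower ids
feeds = defaultdict(list)      # "feed:<user_id>" -> media ids, newest first


def fan_out_post(author_id, media_id):
    """On write: push the new media id onto the head of each follower's feed."""
    for follower_id in followers[author_id]:
        feeds[follower_id].insert(0, media_id)  # same effect as LPUSH


def read_feed(user_id, start=0, stop=20):
    """On read: return entries start..stop inclusive, matching LRANGE semantics."""
    return feeds[user_id][start:stop + 1]
```

The work happens at post time, so reading a feed is a single list slice; the cost is one write per follower for every post.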

canonical data: PG
feeds/lists/sets: Redis
object cache: memcache

post-launch

moved db to its own machine

at time, largest table: photo metadata

ran master-slave from the beginning with streaming replication

backups: stop the replica, xfs_freeze drives, and take EBS snapshot

AWS tradeoff

3 early problems we hit with PG

1. oh, that setting was the problem

work_mem

shared_buffers

cost_delay

2. Django-specific: <idle in transaction>

3. connection pooling

(we use PGBouncer)

somewhere in this crazy couple of months, Christophe to the rescue

photos kept growing and growing

and only 68GB of RAM on biggest machine in EC2

so what now?

Phase 2: Vertical Partitioning

django db routers make it pretty easy

def db_for_read(self, model):
    if model._meta.app_label == 'photos':
        return 'photodb'
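
A slightly fuller router might look like the sketch below. It assumes an app labelled `photos` and a database alias `photodb` defined in `settings.DATABASES`, as on the slide; the class name `PhotoRouter` is made up for illustration. Django calls `db_for_read` and `db_for_write` with the model class and reads the app label from `model._meta.app_label`.

```python
class PhotoRouter:
    """Send reads and writes for the photos app to its own database."""

    PHOTO_APPS = {"photos"}   # app labels to route (assumed from the slide)
    PHOTO_DB = "photodb"      # alias expected in settings.DATABASES (assumed)

    def db_for_read(self, model, **hints):
        if model._meta.app_label in self.PHOTO_APPS:
            return self.PHOTO_DB
        return None  # no opinion: Django tries the next router, then 'default'

    def db_for_write(self, model, **hints):
        # Keep writes on the same database as reads for these models.
        return self.db_for_read(model, **hints)
```

A router like this is enabled by listing its dotted path in the `DATABASE_ROUTERS` setting.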

once you untangle all your foreign key relationships

(all of those user/user_id interchangeable calls bite you now)

plenty of time spent in pgFouine

read slaves (using streaming replicas) where we need to reduce contention

a few months later

photosdb > 60GB

precipitated by being on cloud hardware, but likely to have hit limit eventually either way

what now?

horizontal partitioning

Phase 3: sharding

"surely we'll have hired someone experienced before we actually need to shard"

never true about scaling

1. choosing a method
2. adapting the application
3. expanding capacity

evaluated solutions

at the time, none were up to task of being our primary DB

NoSQL alternatives

Skype's sharding proxy

Range/date-based partitioning

did in Postgres itself

requirements

1. low operational & code complexity

2. easy expanding of capacity

3. low performance impact on application

schema-based logical sharding

many many many (thousands) of logical shards

that map to fewer physical ones

8 logical shards on 2 machines

user_id % 8 = logical shard

logical shards -> physical shard map

{ 0: A, 1: A, 2: A, 3: A, 4: B, 5: B, 6: B, 7: B }

8 logical shards on 2 -> 4 machines

user_id % 8 = logical shard

logical shards -> physical shard map

{ 0: A, 1: A, 2: C, 3: C, 4: B, 5: B, 6: D, 7: D }
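
The two maps above can be turned into a small lookup sketch. It assumes the slide's rule is `user_id` modulo 8; the machine letters come from the slides, while the function names are hypothetical.

```python
NUM_LOGICAL_SHARDS = 8

# Logical shard -> physical machine, copied from the two slides.
MAP_TWO_MACHINES = {0: "A", 1: "A", 2: "A", 3: "A", 4: "B", 5: "B", 6: "B", 7: "B"}
MAP_FOUR_MACHINES = {0: "A", 1: "A", 2: "C", 3: "C", 4: "B", 5: "B", 6: "D", 7: "D"}


def logical_shard(user_id):
    """A user's logical shard never changes, however many machines exist."""
    return user_id % NUM_LOGICAL_SHARDS


def physical_shard(user_id, shard_map):
    """Resolve the machine currently holding the user's logical shard."""
    return shard_map[logical_shard(user_id)]


def moved_shards(old_map, new_map):
    """List the logical shards that change machines between two maps."""
    return sorted(s for s in old_map if old_map[s] != new_map[s])
```

Because only the map changes, expanding from 2 to 4 machines relocates whole logical shards (here 2, 3, 6 and 7) rather than re-bucketing individual users.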

¡schemas!

all that 'public' stuff I'd been glossing over for 2 years

- database
  - schema
    - table
      - columns
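
One way to picture schema-based logical sharding with this hierarchy: give each logical shard its own Postgres schema and address tables by schema-qualified name. The sketch below only builds names and SQL strings; the `shardN` naming scheme and the `photos` table are assumptions for illustration, not details confirmed by the slides.

```python
def schema_for_shard(logical_shard_id):
    """Name of the schema holding one logical shard (hypothetical scheme)."""
    return f"shard{logical_shard_id}"


def qualified_table(logical_shard_id, table):
    """Schema-qualified table name, e.g. 'shard5.photos'."""
    return f"{schema_for_shard(logical_shard_id)}.{table}"


def create_schema_statements(num_logical_shards):
    """CREATE SCHEMA statements for every logical shard."""
    return [
        f"CREATE SCHEMA {schema_for_shard(i)};"
        for i in range(num_logical_shards)
    ]
```

Since a schema is just a namespace inside a database, many logical shards can share one physical server and still be addressed independently.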

spun up set of machines