Recurrent Neural Networks: The most widely used sequential model

Hao Dong

2019, Peking University


• Motivation

• Before We Begin: Word Representation

• Sequential Data

• Vanilla Recurrent Neural Network

• Long Short-Term Memory

• Time-series Applications


Recurrent Neural Networks

Motivation


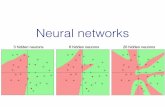

Motivation

• Both the Multi-layer Perceptron and Convolutional Neural Networks take one data sample as the input and output one result. They are categorised as feed-forward neural networks (FNNs) because they only pass data layer by layer and obtain one output for one input (e.g., an image as the input with a class label as the output).

• Many time-series data sets, such as language, video, and biosignals, do not fit into this framework.

• The recurrent neural network (RNN) is a deep learning architecture designed for processing time-series data.


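[Figure: a feed-forward neural network outputs one label for one input, e.g., “Cat” with probability 0.97. Sequence example: “one minus two plus three” → “the answer is two”.]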

$$p(y, x_1, x_2, x_3) = p(y)\, p(x_1 \mid y)\, p(x_2 \mid y, x_1)\, p(x_3 \mid y, x_1, x_2)$$

Motivation


• Language translation

• Chatbot

• Image captioning

• Anti-spam

• Signal analysis

• Video analysis

• Sentence generation

Before We Begin: Word Representation


Before We Begin: Word Representation

• One-hot Vector

• A recurrent neural network receives words one by one, but first let us see how a word is represented in a computer.

• Simple method 1: One-hot vector

“Deep Learning is very useful”

One-hot vectors, one per word: 00001, 00010, 00100, 01000, 10000

Disadvantages:

• A large vocabulary leads to the “curse of dimensionality”.

• All word representations are independent of each other, and the vectors are too sparse.

Word representation
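A minimal sketch of one-hot encoding for the example sentence, assuming NumPy and assuming that word IDs follow the order in which words first appear (the slide does not say which word gets which position). The names `vocab`, `one_hot`, and `vectors` are illustrative only.

```python
import numpy as np

sentence = "Deep Learning is very useful"
words = sentence.split()

# Assumption: IDs are assigned in order of first appearance.
vocab = {word: idx for idx, word in enumerate(dict.fromkeys(words))}

def one_hot(word_id, vocab_size):
    """Return a vector of zeros with a single 1 at position word_id."""
    vec = np.zeros(vocab_size, dtype=np.int64)
    vec[word_id] = 1
    return vec

# One row per word; each row has length equal to the vocabulary size.
vectors = np.stack([one_hot(vocab[w], len(vocab)) for w in words])

# Dot products between different words are all zero, so the encoding
# carries no notion of similarity between words.
similarity = vectors @ vectors.T
```

The vector length grows with the vocabulary, and `similarity` is the identity matrix, which is the sparsity and independence problem listed above.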

Before We Begin: Word Representation

• Bag of Words

• Simple method 2: Bag of Words (BoW) uses the word frequencies to represent the sentence.

Disadvantages:

• A large vocabulary leads to the “curse of dimensionality”.

• The information about word locations is lost.

“we like TensorLayer, do we?”

Word Frequency

we 2

like 1

TensorLayer 1

do 1

[2, 1, 1, 1]

Sentence (text) representation
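The word counts above can be reproduced with a short sketch. The tokenizer (lower-casing and keeping only alphabetic runs) and the function name `bag_of_words` are assumptions made for this illustration.

```python
import re
from collections import Counter

def bag_of_words(sentence, vocabulary):
    """Count how often each vocabulary word occurs in the sentence."""
    # Assumption: a token is a run of letters, compared case-insensitively.
    tokens = re.findall(r"[A-Za-z]+", sentence.lower())
    counts = Counter(tokens)
    return [counts[word.lower()] for word in vocabulary]

vocabulary = ["we", "like", "TensorLayer", "do"]
vector = bag_of_words("we like TensorLayer, do we?", vocabulary)

# A reordered sentence gives the same vector: word locations are lost.
reordered = bag_of_words("do we like TensorLayer, we?", vocabulary)
```

Both calls return `[2, 1, 1, 1]`, which shows why the representation cannot distinguish sentences that differ only in word order.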

Before We Begin: Word Representation

• Word Embedding


• Represent a word using a vector of floating-point numbers.

[Figure: word representation space with the groups {hello, hi}, {she, he, it}, {one, two, three}, {beijing, hangzhou, london}]

Word embedding vector: “hello” → [0.32, 0.98, 0.12, 0.54 ….]

Similar words are grouped together

Before We Begin: Word Representation

• Word Embedding

• Given a vocabulary of 5 words, we can have a word embedding table in which each word has 3 feature values.

[Figure: one-hot vector × word embedding table = word embedding vector]

In practice, we do not multiply the one-hot vector by the word embedding table. To save time, we directly use the word ID as the row index to fetch the embedding vector from the table.

Word ID (Row Index)

--- 0

deep 1

learning 2

is 3

popular 4

Each word has a unique ID

We need to find a “good” table!
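The equivalence between the matrix product and the row lookup can be checked with a small sketch. The table values here are random placeholders (a trained table would hold learned values), and the variable names are illustrative.

```python
import numpy as np

# Word IDs as listed in the table above.
vocab = {"---": 0, "deep": 1, "learning": 2, "is": 3, "popular": 4}

# Placeholder 5 x 3 embedding table filled with random numbers.
rng = np.random.default_rng(0)
embedding_table = rng.normal(size=(len(vocab), 3))

word_id = vocab["learning"]

# Route 1: multiply the one-hot vector by the table (5 x 3 multiply-adds).
one_hot = np.zeros(len(vocab))
one_hot[word_id] = 1.0
by_matmul = one_hot @ embedding_table

# Route 2: use the word ID directly as the row index (no arithmetic).
by_lookup = embedding_table[word_id]
```

Both routes give the same 3-value vector, so the lookup is preferred because it skips the multiplication.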

Before We Begin: Word Representation

• Ideal Word Embedding

• Low dimension == high-level features to represent the words

• Contains semantic information of the words

Similar words, such as “cat”-“tiger” and “water”-“liquid”, should have similar word representations.

Semantic information allows semantic operations:

King − Man + Woman = Queen

Paris − France + Italy = Rome

The features in the word embedding contain information such as “gender” and “location”.
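The semantic operation can be illustrated with a toy sketch. The 2-D vectors below are made up by hand for this example (feature 0 stands in for "royalty", feature 1 for "gender"); they are not learned embeddings, and the helper names `cosine` and `analogy` are hypothetical.

```python
import numpy as np

# Hypothetical hand-made embeddings, NOT trained values.
embeddings = {
    "king":  np.array([0.9, 0.8]),
    "queen": np.array([0.9, -0.8]),
    "man":   np.array([0.1, 0.9]),
    "woman": np.array([0.1, -0.9]),
    "apple": np.array([-1.0, 0.1]),
}

def cosine(a, b):
    """Cosine similarity between two vectors."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

def analogy(a, b, c):
    """Return the word closest to emb[a] - emb[b] + emb[c], excluding a, b, c."""
    query = embeddings[a] - embeddings[b] + embeddings[c]
    candidates = [w for w in embeddings if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(query, embeddings[w]))

result = analogy("king", "man", "woman")
```

With these toy vectors `result` is `"queen"`, because subtracting "man" and adding "woman" flips the gender feature while keeping the royalty feature.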

Before We Begin: Word Representation

• Learn Word Embedding

• Use the text document as the training data without any labels.

• Find similar words by comparing their contexts.

• Existing algorithms learn the word embedding table by reading a large text document to find the patterns, which is one type of self-supervised learning.

• This is a blue bird
• This is a yellow bird
• This is a red bird
• This is a green bird

As the “color” words are located in similar positions in the sentences, we can group the “color” words together.

Before We Begin: Word Representation

• Word2Vec


• Google 2013

• Word2Vec = Skip-Gram/CBOW + Negative Sampling

Before We Begin: Word Representation

• Word2Vec

• Continuous Bag-of-Words (CBOW): predicts the middle word using the context.

• In “a blue bird on the tree”, the context of “bird” is [“a”, “blue”, “on”, “the”, “tree”]

[Figure: CBOW on “this is a blue bird on the tree”. The context words “a”, “blue”, “on”, “the” are the inputs, and the middle word “bird” is the output.]

CBOW: maximize the probability of the middle word

In this example, we only consider 4 context words, 2 on the left and 2 on the right, i.e., the window size is 2, so “tree” is left out.

Note: there is only one word embedding table here; different inputs reuse the same table.
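A minimal sketch of how CBOW training examples could be built from a sentence with a window size of 2. The function name `cbow_pairs` and the whitespace tokenizer are assumptions for illustration; real implementations differ in details such as subsampling.

```python
def cbow_pairs(tokens, window_size=2):
    """Build (context words, middle word) pairs for every position."""
    pairs = []
    for i, target in enumerate(tokens):
        left = tokens[max(0, i - window_size):i]        # up to window_size words before
        right = tokens[i + 1:i + 1 + window_size]       # up to window_size words after
        pairs.append((left + right, target))
    return pairs

tokens = "this is a blue bird on the tree".split()
pairs = cbow_pairs(tokens, window_size=2)

# The example for the middle word "bird".
context, target = pairs[tokens.index("bird")]
```

For “bird” the context is `["a", "blue", "on", "the"]`. Words near the sentence boundary simply get a shorter context.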

Before We Begin: Word Representation

• Word2Vec

• Skip-Gram (SG) is the opposite of CBOW, but serves the same purpose.

• CBOW predicts the middle word using the context, while SG predicts the context using the middle word. In “a blue bird on the tree”, the input of SG is “bird”, and the outputs are [“a”, “blue”, “on”, “the”, “tree”]

[Figure: Skip-Gram on “this is a blue bird on the tree”. The middle word “bird” is the input, and the context words “a”, “blue”, “on”, “the” are the outputs.]

SG: maximize the probability of the context

Before We Begin: Word Representation

• Word2Vec

[Figure: Skip-Gram on “this is a blue bird on the tree” with the input “bird”. Each candidate output word (“blue”, “on”, “a”, “the”, “tree”, and other words) gets its own Sigmoid probability; the values shown are 0.99, 0.00, 0.98, 0.99, 0.97, 0.01.]

Before We Begin: Word Representation

• Word2Vec

• Noise-Contrastive Estimation (NCE)

• Skip-Gram has multiple target outputs, so we use Sigmoid instead of Softmax. Each word in the vocabulary is treated as either a positive or a negative sample, and we classify each word independently.

• A large vocabulary leads to a large computational cost. We use Negative Sampling to speed up the computation of the loss function by randomly sampling N negative samples from the vocabulary.

• This method is called Noise-Contrastive Estimation:

$$E = -\left( \sum_{i \in \text{pos}} \log y_i + \sum_{j \in \text{neg}} \log (1 - y_j) \right)$$

Here $\text{pos}$ is the set of positive samples and $\text{neg}$ is the set of $N$ randomly selected negative samples.
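A minimal sketch of this loss in NumPy, assuming each Sigmoid output is the dot product of the middle-word vector with an output-word vector, and assuming negatives are drawn uniformly from the vocabulary (Word2Vec itself draws them from a smoothed unigram distribution). The negative term uses log(1 − y), since y is a Sigmoid output in (0, 1). All names and sizes are illustrative.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def negative_sampling_loss(center_vec, output_table, positive_ids, negative_ids):
    """E = -(sum_pos log y + sum_neg log(1 - y)), with y = sigmoid(score)."""
    y_pos = sigmoid(output_table[positive_ids] @ center_vec)
    y_neg = sigmoid(output_table[negative_ids] @ center_vec)
    return -(np.sum(np.log(y_pos)) + np.sum(np.log(1.0 - y_neg)))

rng = np.random.default_rng(0)
vocab_size, dim, num_negative = 1000, 8, 5           # illustrative sizes
center_vec = rng.normal(scale=0.1, size=dim)         # vector of the middle word
output_table = rng.normal(scale=0.1, size=(vocab_size, dim))

positive_ids = np.array([2, 3, 5, 6])                # IDs of the context words
# Assumption: uniform sampling of N negatives that are not positives.
candidates = np.setdiff1d(np.arange(vocab_size), positive_ids)
negative_ids = rng.choice(candidates, size=num_negative, replace=False)

loss = negative_sampling_loss(center_vec, output_table, positive_ids, negative_ids)
```

Only 4 + 5 rows of the output table are touched per example, instead of all 1000, which is where the speed-up comes from.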

Sequential Data


Sequential Data

Feed-forward neural networks: non-time-series problems such as image classification and object detection

Input

Hidden state

Output

Sequential Data

One data input and many data outputs in order

Input

Hidden state

Output

Image captioning: input an image to generate a sentence

Sequential Data

Multiple data inputs and a single data output

Input

Hidden state

Output

Sentence sentiment classification: input a sentence in order and output the probability of happiness.

Sequential Data

Multiple data inputs and multiple data outputs

Input

Hidden state

Output

Language translation: input the entire sentence to the model before starting to generate the translated sentence

Asynchronous (Seq2Seq)

Sequential Data

Multiple data inputs and multiple data outputs

Synchronous (simultaneous)

Input

Hidden state

Output

Weather prediction: input the information to the model at every time-step and output the predicted weather condition.

Sequential Data

Recurrent Neural Nets

Store and process the temporal information

Input

Hidden state

Output

Vanilla Recurrent Neural Network


Vanilla Recurrent Neural Network

• Hidden Vector (State)

$X_t$: the input at time $t$

$h_t$: the hidden vector at time $t$

The hidden vector at time $t$ is an input at time $t + 1$

The only difference between an RNN and an FNN: the RNN feeds the information of the previous time-step to the next step.

[Figure: the RNN unrolled over time]

Vanilla Recurrent Neural Network

• Processing Temporal Information

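“I am from China, so I speak ________” (answer: “Chinese”); the words are fed in as the inputs $X_0, X_1, \dots, X_7$.

$$h_t = \sigma_h(W_h x_t + U_h h_{t-1} + b_h)$$

$$y_t = \sigma_y(W_y h_t + b_y)$$

• $W$, $U$, $b$: weight matrices and bias

• $\sigma_h$, $\sigma_y$: activation functions; $\sigma_h$ is tanh

• $y_t$: output at time $t$

The initial hidden vector is a zero vector; $h_{t-1}$ contains the information of the previous time-step.

Pass information to the future

[Figure: the RNN unrolled over time]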
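The two update equations can be sketched as a plain NumPy loop. The output activation is assumed to be a Sigmoid here (the slide only fixes the hidden activation as tanh), and the weights are random placeholders with illustrative sizes.

```python
import numpy as np

def rnn_forward(xs, W_h, U_h, b_h, W_y, b_y):
    """Run a vanilla RNN over a sequence and return all hidden states and outputs."""
    h = np.zeros(U_h.shape[0])                       # initial hidden vector is zero
    hidden_states, outputs = [], []
    for x in xs:
        # h_t = tanh(W_h x_t + U_h h_{t-1} + b_h)
        h = np.tanh(W_h @ x + U_h @ h + b_h)
        # y_t = sigma_y(W_y h_t + b_y); sigma_y assumed to be a Sigmoid
        y = 1.0 / (1.0 + np.exp(-(W_y @ h + b_y)))
        hidden_states.append(h)
        outputs.append(y)
    return np.stack(hidden_states), np.stack(outputs)

rng = np.random.default_rng(0)
input_dim, hidden_dim, output_dim, steps = 4, 3, 2, 8   # illustrative sizes
W_h = rng.normal(scale=0.5, size=(hidden_dim, input_dim))
U_h = rng.normal(scale=0.5, size=(hidden_dim, hidden_dim))
b_h = np.zeros(hidden_dim)
W_y = rng.normal(scale=0.5, size=(output_dim, hidden_dim))
b_y = np.zeros(output_dim)

xs = rng.normal(size=(steps, input_dim))             # stand-in for 8 word vectors
hidden_states, outputs = rnn_forward(xs, W_h, U_h, b_h, W_y, b_y)
```

The same weights are reused at every time-step; only the hidden vector `h` changes and carries information forward.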

Vanilla Recurrent Neural Network

• Limitation: Long-Term Dependency Problem

Difficult to maintain information for a long term.

“I am from China, and I have lived in the UK and the US for 10 years; my mother language is ________?”

Long Short-Term Memory


Long Short-Term Memory

• For solving the Long-Term Dependency Problem

[Figure: Vanilla RNN and LSTM]

Long Short-Term Memory

• Gate Function

Input Vector ⨀ Gate Vector = Output Vector

Input Vector   Gate Vector   Output Vector
 0.53          0.01           0.0053
-0.32          0.99          -0.3168
 0.72          0.98           0.7056
 0.45          0.04           0.0180
 1.23          0.98           1.2054
-0.24          0.02          -0.0048

• An RNN has a hidden vector; an LSTM has both hidden and cell vectors.

• The values in the gate vector vary from 0 to 1: 0 means “closed”, 1 means “open”.

• “Filter” the information by multiplying the input vector and the gate vector element-wise.
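The filtering step is a single element-wise product, sketched here with the input and gate values from the example above.

```python
import numpy as np

input_vector = np.array([0.53, -0.32, 0.72, 0.45, 1.23, -0.24])
gate_vector = np.array([0.01, 0.99, 0.98, 0.04, 0.98, 0.02])

# Element-wise (Hadamard) product: gate values near 1 pass the input through,
# gate values near 0 suppress it.
output_vector = input_vector * gate_vector
```

Entries with a gate value near 1 (the second, third, and fifth) come through almost unchanged, while the others shrink towards zero; the fourth product, 0.45 × 0.04, comes out as 0.018.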

Long Short-Term Memory

• Forget Gate

Cell vector

• Compute the forget gate vector:

$$f_t = \text{sigmoid}([h_{t-1}, x_t] W_f + b_f)$$

$[h_{t-1}, x_t]$: concatenate the two vectors

Long Short-Term Memory

• Input Gate

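• Compute the input gate vector:

$$i_t = \text{sigmoid}([h_{t-1}, x_t] W_i + b_i)$$

• Compute the information vector:

$$\tilde{C}_t = \tanh([h_{t-1}, x_t] W_C + b_C)$$

• Compute the new cell vector:

$$C_t = f_t \odot C_{t-1} + i_t \odot \tilde{C}_t$$

$f_t \odot C_{t-1}$: forget previous information; $i_t \odot \tilde{C}_t$: input new information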

Long Short-Term Memory

• Output Gate

• Compute the output gate vector:

$$o_t = \text{sigmoid}([h_{t-1}, x_t] W_o + b_o)$$

• Compute the new hidden vector:

$$h_t = o_t \odot \tanh(C_t)$$
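The forget, input, and output gate equations can be combined into one LSTM step. This is a sketch in NumPy with random placeholder weights; the `params` dictionary layout and the sizes are assumptions for illustration, not a library API.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(x_t, h_prev, c_prev, params):
    """One LSTM time-step following the gate equations above."""
    concat = np.concatenate([h_prev, x_t])                        # [h_{t-1}, x_t]
    f_t = sigmoid(concat @ params["W_f"] + params["b_f"])         # forget gate
    i_t = sigmoid(concat @ params["W_i"] + params["b_i"])         # input gate
    c_tilde = np.tanh(concat @ params["W_C"] + params["b_C"])     # information vector
    c_t = f_t * c_prev + i_t * c_tilde                            # new cell vector
    o_t = sigmoid(concat @ params["W_o"] + params["b_o"])         # output gate
    h_t = o_t * np.tanh(c_t)                                      # new hidden vector
    return h_t, c_t

rng = np.random.default_rng(0)
input_dim, hidden_dim = 4, 3                                      # illustrative sizes
params = {}
for name in ("f", "i", "C", "o"):
    params[f"W_{name}"] = rng.normal(scale=0.1, size=(hidden_dim + input_dim, hidden_dim))
    params[f"b_{name}"] = np.zeros(hidden_dim)

h = np.zeros(hidden_dim)
c = np.zeros(hidden_dim)
for x_t in rng.normal(size=(5, input_dim)):                       # 5 time-steps of dummy input
    h, c = lstm_step(x_t, h, c, params)
```

The cell vector `c` is only changed by element-wise gating and addition, which is what lets information survive over many steps.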

Long Short-Term Memory

• Questions

• Could we use ReLU to replace Sigmoid in the gate function?

• Why do we use tanh rather than Sigmoid when inputting information to a vector?

Long Short-Term Memory

• Variants of LSTM

• LSTM was invented in 1997, and several variants exist, including the Gated Recurrent Unit (GRU). However, Greff et al. [1] analyzed eight LSTM variants on three representative tasks (speech recognition, handwriting recognition, and polyphonic music modeling) and summarised the results of 5,400 experimental runs (representing 15 years of CPU time). Their review suggests that none of the LSTM variants provides a significant improvement over the standard LSTM.

• The Gated Recurrent Unit (GRU) does not have a cell state, which reduces the computational cost and memory usage compared with LSTM.

[1] K. Greff, R. K. Srivastava, J. Koutník, B. R. Steunebrink, and J. Schmidhuber, “LSTM: A search space odyssey,” IEEE TNNLS, 2017.

Time-series Applications


Time-series Applications

• Many-to-one: Sentence Sentiment Classification

• Use the last output to compute the loss.

• Stack a fully connected layer with Softmax on top of the hidden vector.

[Figure: the input “I Feel Bad” gives the output probabilities 0.12 (positive) and 0.88 (negative).]
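A minimal sketch of the many-to-one head: a fully connected layer with Softmax on the last hidden vector, followed by a cross-entropy loss. The hidden vector and weights below are random stand-ins, and the class order (index 0 = positive, index 1 = negative) is an assumption.

```python
import numpy as np

def softmax(z):
    z = z - np.max(z)                 # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum()

def classify_last_hidden(h_last, W_out, b_out):
    """Fully connected layer + Softmax applied to the last hidden vector only."""
    return softmax(W_out @ h_last + b_out)

def cross_entropy(probs, label):
    """Loss computed from the single, final output."""
    return -np.log(probs[label])

rng = np.random.default_rng(0)
hidden_dim, num_classes = 3, 2                      # illustrative sizes
h_last = rng.normal(size=hidden_dim)                # stand-in for the RNN's last hidden vector
W_out = rng.normal(size=(num_classes, hidden_dim))
b_out = np.zeros(num_classes)

probs = classify_last_hidden(h_last, W_out, b_out)  # [p(positive), p(negative)]
loss = cross_entropy(probs, label=1)                # assumed label: negative
```

The hidden vectors of earlier time-steps are not used directly; they influence the loss only through the last hidden vector.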

Time-series Applications

• One-to-many: Image Captioning

[Figure: generating the caption “This is a bird <EOS>” word by word]

• Use the output of every time-step as the input of its next step.

• Terminate the process when the output is a special token for end of sentence (EOS).

• Use all outputs to compute the loss, e.g., averaged cross-entropy of all outputs.

Time-series Applications

• Synchronous Many-to-Many: Traffic Counting

• During training, a pre-defined sequence length is required to compute the loss.

• During testing, input the data samples one by one.

[Figure: outputs 5, 6, 7, 6 for a sequence length of 4]

Time-series Applications

• Synchronous Many-to-Many: Text Generation/Language Modelling

Input: this is a bird

Target output: is a bird <EOS>

During testing, input “this” and output the entire sentence.

• The output of each step is the input of its next step.
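The shifted training targets and the test-time feedback loop can be sketched as follows. The lookup table `NEXT_WORD` is a hypothetical stand-in for a trained model (a real RNN would also carry its hidden state from step to step), and `generate` is an illustrative helper.

```python
# Training: the target sequence is the input shifted by one word, plus <EOS>.
tokens = "this is a bird".split()
targets = tokens[1:] + ["<EOS>"]

# Hypothetical stand-in for a trained model: current word -> predicted next word.
NEXT_WORD = {"this": "is", "is": "a", "a": "bird", "bird": "<EOS>"}

def toy_step(word):
    return NEXT_WORD[word]

def generate(first_word, step_fn, max_len=10):
    """Feed each output back in as the next input until <EOS> or max_len words."""
    words = [first_word]
    while len(words) < max_len:
        next_word = step_fn(words[-1])
        if next_word == "<EOS>":
            break
        words.append(next_word)
    return words

sentence = generate("this", toy_step)
```

The `max_len` cap is a safeguard in case the model never emits `<EOS>`.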

Time-series Applications

• Asynchronous Many-to-Many (Seq2Seq): Chatbot

Encode: How are you

Decode: I’m fine <EOS>

• Encode the input sequential data before starting to output the sequential result.

Time-series Applications

• Asynchronous Many-to-Many: Chatbot

Encoder input: How are you

Decoder input: <START> I’m fine

Target output: I’m fine <EOS>

• Encode the sequential input data before starting to output the result.

• During training, add an EOS token to the target output and add a START token to the decoder input.
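A minimal sketch of preparing one Seq2Seq training example with the START and EOS tokens. The function name `make_seq2seq_example` and the whitespace tokenizer are assumptions for illustration.

```python
START, EOS = "<START>", "<EOS>"

def make_seq2seq_example(source, reply):
    """Split one (source, reply) pair into encoder input, decoder input, and target."""
    encoder_input = source.split()
    reply_tokens = reply.split()
    decoder_input = [START] + reply_tokens      # what the decoder reads at each step
    target_output = reply_tokens + [EOS]        # what the decoder should emit at each step
    return encoder_input, decoder_input, target_output

encoder_input, decoder_input, target_output = make_seq2seq_example("How are you", "I'm fine")
```

The decoder input and the target have the same length and are offset by one position, so at every step the decoder is trained to predict the word that follows its current input.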

Summary

• Motivation
  • Time-series data

• Before We Begin: Word Representation
  • One-hot vector, BOW, word embedding, Word2Vec, CBOW, Skip-Gram, negative sampling, NCE

• Sequential Data
  • One-to-many, many-to-one, asynchronous many-to-many, synchronous many-to-many

• Vanilla Recurrent Neural Network
  • Hidden vector (state), long-term dependency problem

• Long Short-Term Memory
  • Cell vector (state), forget gate, input gate, output gate

• Time-series Applications
  • One-to-many, many-to-one, asynchronous many-to-many, synchronous many-to-many
  • Details of training and testing (inference)



Thanks
