Random data matrices - Duke Universitypdh10/Teaching/832/Notes/rmatrix.pdfSummary: Mean-variance...

Transcript of Random data matrices - Duke Universitypdh10/Teaching/832/Notes/rmatrix.pdfSummary: Mean-variance...

Random data matrices

Peter Hoff

October 1, 2020

Contents

1 From samples to populations 3

2 Separating the mean from the variance 6

3 Multivariate normal distributions 12

4 Decompositions of a normal matrix 20

4.1 SVD of a normal matrix . . . . . . . . . . . . . . . . . . . . . 21

4.2 Polar decomposition of a normal matrix . . . . . . . . . . . . 25

5 Decompositions of a Wishart matrix 26

1

5.1 Eigendecomposition of Wishart matrix . . . . . . . . . . . . . 29

5.2 Asymptotic distribution of the eigendecomposition . . . . . . . 31

5.3 Evaluating partial isotropy . . . . . . . . . . . . . . . . . . . . 36

6 Shrinkage estimators of covariance 47

6.1 Overdispersion of sample eigenvalues . . . . . . . . . . . . . . 47

6.2 Equivariant covariance estimation . . . . . . . . . . . . . . . . 48

6.3 Orthogonally equivariant estimators . . . . . . . . . . . . . . . 53

Abstract

We show how matrix decompositions can be related to estimation

of the mean and variance of a random matrix. We introduce the

multivariate normal distribution, and describe the distributions of the

components of the decomposition a random normal matrix, including

those of the sample mean, sample variance, principal components and

principal axes. A reference for some of this material can be found

in chapters 3 and 8 of Mardia et al. [1979], chapter 5 of Hardle and

Simar [2015] and chapter 3.3 of Izenman [2008]. More material on

distributions on the Stiefel manifold and the orthogonal group can be

found in Chikuse [2003], Hoff [2009] and James [1954].

2

1 From samples to populations

Much of our discussion so far has focused on decomposing our data matrix

Y as follows:

Y = 1y> + UDV>,

where

• y = Y>1/n and

• UDV> is the SVD of Y − 1y> = CY.

In particular

• the columns of V are the principal axes;

• the columns of U are the (scaled) principal components;

• 1y> + UrDrV>r provides the best r-dimensional affine approximation

to the rows of the data matrix.

This suggests a decomposition in terms of the sample mean and variance:

Y = 1y> + UDV>

= 1y> + (√n− 1UV>)VDV>/

√n− 1

≡ 1µ> + ZΣ1/2

where µ = y, and Σ1/2 = VDV>/√n− 1. Then we have

Σ = Σ1/2Σ1/2 = VD2V>/(n− 1)

= VDU>UDV>/(n− 1)

= Y>CCY>/(n− 1)

= Y>CY/(n− 1) = S/(n− 1),

3

so Σ1/2 is the symmetric matrix square root of S/(n−1), and Σ is the sample

covariance matrix. So we have written

Y = 1µ> + ZΣ1/2

where µ and Σ are the sample mean and covariance matrix, respectively.

What are the properties of Z?

Z>Z = (n− 1)VU>UV>

Z>Z/(n− 1) = VV> = Ip

where the latter equality is assuming n ≥ p. If n > p, then ZZ>/(n − 1) =

UU> but this is not the identity (it is an n× n matrix of rank p or less - it

is an idempotent projection matrix). To summarize:

Sample moment representation:

• Y = 1µ> + ZΣ1/2;

• µ and Σ are the sample mean and sample covariance;

• Z satisfies Z>Z/(n− 1) = I, that is var(Z) = I.

In what follows, we will use “var” to denote the sample covariance of the

rows of a matrix, that is, what you would get if you applied the var function

to a matrix in R. This is to distinguish the sample variance of a collection

of numbers from the variance of a random object, which we will denote as

“Var”.

Now consider an analogous representation in terms of population moments:

Population moment representation:

4

• Y = 1µ> + ZΣ1/2;

• Z is a random matrix with rows z1, . . . , zn that satisfy

E[zi] = 0

E[ziz>i ] = Ip

E[ziz>i′ ] = 0p×p.

Under these assumptions on Y, you should be able to show that E[yi] = µ,

Var[yi] = Σ and Cov[yi,yi′ ] = 0 for i 6= i′.

Exercise 1. Show the above conditions on Z are equivalent to the following:

• the elements of Z are mean zero, variance one and uncorrelated.

• for column vectors zj, zj′, E[zj] = 0n×1, E[zjz>j ] = In, E[zjz

>j′ ] = 0n×n.

Also show that this implies

• E[Z>Z/n] = Ip

• E[ZZ>/p] = In

So we see a correspondence between the sample and population moment

representations. Furthermore, the sample moments provide estimates of the

population moments:

Theorem 1. If Y = 1µ> + ZΣ1/2 where the elements of Z are mean-zero,

variance-one and uncorrelated, then

E[µ] = µ;

E[Σ] = Σ.

5

There are several ways to show E[Σ] = Σ.

(n− 1)Σ = Y>CY

= Σ1/2Z>CZΣ1/2

(n− 1)E[Σ] = Σ1/2E[Z>CZ]Σ1/2

So we need to show E[Z>CZ] = (n − 1)Ip. Consider entry (j, j′) of this

matrix

E[z>j Czj′ ] = E[tr(z>j Czj′)]

= E[tr(Czj′z>j )]

= tr(E[Czj′z>j ])

= tr(CE[zj′z>j ]).

If j 6= j′ then the expectation is 0n×n by Exercise 1. If j = j′ then the

expectation is In and the matrix entry is tr(C) = n − 1. There are many

calculations like this in multivariate statistical theory, so make sure you un-

derstand this one.

Exercise 2. Show that tr(C) = n−1, where C is the n×n centering matrix.

2 Separating the mean from the variance

Covariance estimation is different from mean estimation, and we will often

want to discuss covariance estimation without having to bother with the

mean. For this reason, it is often helpful to decompose the data matrix

into two orthogonal parts, one for mean estimation and one for covariance

estimation. We’ve used these decompositions before - now we study their

distributional properties.

Mean-variance decomposition

6

Let Y = 1µ> + ZΣ1/2 where the elements of Z are mean-zero, variance one

and uncorrelated.

Decompose Y as follows

• Y = (I− 11>/n+ 11>/n)Y

• Y = 11>Y/n = 1y>

• E = (I− 11>/n)Y = CY.

Clearly, we have

• E[Y] = 1µ>

• E[E] = 0n×p

Geometrically, we know that the columns of Y are orthogonal to those of E,

E>Y = Y>C(11>/n)Y = 0p×p.

The rows of E are not in general orthogonal to those of Y (y).

EY> 6= 0n×n.

However, we do have this result on average:

Theorem 2. The elements of y and E are uncorrelated.

Showing this can be done with our existing techniques by “brute force,” but

it involves a lot of messy index notation. However, it can be made easy with

the use of Kronecker products and a simple identify.

7

Vectorization and Kronecker products

Right now we are studying data matrices, and later we will study data ten-

sors of varying shapes and sizes. Linear and multilinear operations on these

objects can be performed using a variety of notational tools, but the most

universal approach is to just vectorize our data object.

Definition 1. For Y ∈ Rn×p,

vec(Y) = vec

(y1 · · · yp

| |

)=

y1

...

yp

∈ Rnp

Vectorization allows us to perform linear operations on matrices by using the

notation of linear algebra. For example, suppose we want to express CY in

terms of a vector:

vec(CY) =

Cy1

...

Cyp

=

C 0 · · · 0

0 C · · · 0...

...

0 · · · C

y1

...

yp

= (Ip ⊗C)vec(Y).

Here, “⊗” is the Kronecker product, defined by

A⊗B =

a11B · · · a1qB

......

ap1B · · · apqB

so A⊗B ∈ Rpm×qn for A ∈ Rp×q,B ∈ Rm×n.

8

As another example, let Z ∈ Rn×p and suppose we want to study ZΣ1/2.

With some work, you can show

vec(ZΣ1/2) = (Σ1/2 ⊗ In)vec(Z).

In particular, we have the following:

Exercise 3. Suppose the elements of Z are mean zero, variance one, uncor-

related random variables, and let Y = 1µ> + ZΣ1/2. Then

Var[vec(Y)] = Σ⊗ I.

More generally, we have the following vec-Kronecker identity:

vec(AXB>) = (B⊗A)vec(X) = (B⊗A)x. (1)

Remember, the matrix on the right goes first, and gets transposed. Here are

some results that follow from the definition and this identity:

• (B + C)⊗A = B⊗A + C⊗A.

• (B⊗A)(D⊗C) = (BD)⊗ (AC)

• (B⊗A)> = (B> ⊗A>)

Exercise 4. Derive the above three identities. One approach is to use the

vec-Kronecker identity.

Theorem 3. Let Z be a matrix with uncorrelated entries, each with the same

variance. Then X = AZB> and Y = CZD> are uncorrelated iff AC> = 0

or BD> = 0.

Exercise 5. Prove the theorem.

9

Now let’s use this tool to prove that the residuals are uncorrelated with the

sample mean. Let Y = 1µ>+ ZΣ1/2 where the entries of Z are uncorrelated

with zero mean and unit variance. We want to show that y and E = CY

are uncorrelated, meaning that every entry of y is uncorrelated with every

entry of E.

Let’s first compute the vectorization of E = CY in terms of Z and the

parameters:

e = vec(E) = vec(C(1µ> + ZΣ1/2))

= vec(CZΣ1/2)

= (Σ1/2 ⊗C)z

Now let’s vectorize y. Isn’t y already a vector? Well yes, but to compute

the correlation we want to write it in terms of the vectorization of Z.

ny> = 1>Y = 1>1µ> + 1>ZΣ1/2

= nµ> + 1>ZΣ1/2.

Thinking of y> as a 1× p matrix, we have y = vec(y>), giving

ny = nµ + (Σ1/2 ⊗ 1>)z.

The covariance between e and y is then the covariance between (Σ1/2⊗C)z

and (Σ1/2 ⊗ 1>)z/n, and so

Cov[e, ny] = E[ez>(Σ1/2 ⊗ 1)]

= E[(Σ1/2 ⊗C)zz>(Σ1/2 ⊗ 1)]

= (Σ1/2 ⊗C)E[zz>](Σ1/2 ⊗ 1)

= (Σ1/2 ⊗C)I(Σ1/2 ⊗ 1)

= (Σ1/2 ⊗C)(Σ1/2 ⊗ 1)

= Σ⊗ (C1n)

= Σ⊗ 0 = 0.

10

Summary: Mean-variance decomposition of a data matrix

Let

Y = 1µ> + ZΣ1/2

where the elements of Z are mean zero, variance one and uncorrelated. From

Exercise 3, we know that Var[vec(Y)] = Σ⊗ I. For this reason, we write

Y ∼Mn×p(1µ>,Σ⊗ I).

The first argument is the matrix mean (expectation) of Y. The second

argument is the variance of (vec) Y.

We can write Y as

Y =(11>/n+ (I− 11>/n)

)Y

= 1y> + CY

= 1y> + E.

We have shown that if Y ∼Mn×p(1µ>,Σ⊗ I), then

• E[y] = µ, Var[y] = Σ/n.

• E[E>E/(n− 1)] = Σ.

• E and y are uncorrelated.

So we have decomposed the data matrix Y into uncorrelated parts y and E

that we use to estimate the mean µ and variance Σ, respectively.

11

3 Multivariate normal distributions

To do much more we will need to make additional distributional assumptions

on Z. The most studied assumption is that the elements of Z are i.i.d.

standard normal random variables. Before proceeding, we review a few basic

definitions and facts about vectors of normal random variables (see Mardia,

Kent and Bibby 3.1)

Definition 2.

• z ∼ Np(0, Ip) if z1, . . . , zp ∼ i.i.d. N(0, 1).

• y ∼ Np(µ,Σ) if yd= µ + Σ1/2z where z ∼ Np(0, Ip).

This particular definition highlights the fact that the family of all p-variate

normal distributions is closed under shifts and linear transformations, i.e.

affine transformations.

Results concerning linear transformations of random variables are most easily

dealt with using characteristic functions or MGFs.

Theorem 4.

Mz(s) ≡ E[exp(s>z)] = exp(s>s/2)

My(s) = exp(s>µ + s>Σs/2)

Some important corollaries follow:

Corollary 1.

1. y ∈ Rp is multivariate normal iff a>y is normal for all a ∈ Rp.

12

2. Two subvectors ya and yb of a multivariate normal vector are indepen-

dent iff they are uncorrelated.

Exercise 6. Prove the corollary.

Theorem 5.

1. If y ∼ Np(µ,Σ) and x = Ay + b then x ∼ Nq(Aµ + b,AΣA>).

2. If y ∼ Np(µ,Σ) and Σ−1 exists then z = Σ−1/2(y − µ) ∼ Np(0, I).

3. From 2, z>z = (y − µ)>Σ−1(y − µ) ∼ χ2p.

4. If y ∼ Np(µ, σ2I) and G ∈ Rp×q satisfies G>G = Iq, then

G>y ∼ Nq(G>µ, σ2I).

The first item can be shown with the basic change of variables formula or

MGFs. It implies, in particular, that linear transformations of normal ran-

dom variables are also normal. Items 2 and 4 are corollaries of 1. Item 3

follows from the change of variables formula, using MGFs or just the defini-

tion of a χ2 random variable, depending on your perspective.

Now let’s extend the assumptions about the moments of Y to assumptions

about its distribution:

Matrix moment assumptions: Y ∼Mn×p(1µ>,Σ⊗ In) if

• Y = 1µ> + ZΣ1/2

• E[Z] = 0, Var[vec(Z)] = Inp.

Matrix normal assumptions: Y ∼ Nn×p(1µ>,Σ⊗ In) if

13

• Y = 1µ> + ZΣ1/2

• E[Z] = 0, Var[vec(Z)] = Inp.

• The elements of Z are jointly normally distributed.

The last two items are the same as zi,j ∼ i.i.d. N(0, 1).

Just reading off the row vectors of Y, we see that these distributional as-

sumptions are equivalent to y1, . . . ,yn ∼ i.i.d. Np(µ,Σ), where yi is a row

vector of Y. So the “In” in “Σ ⊗ In” refers to the across-row independence

(within-column independence) of the data.

Theorem 6. If Y ∼ Nn×p(1µ>,Σ⊗ I) then

YA> + 1b> ∼ Nn×q(1(Aµ + b)>,AΣA> ⊗ I).

Exercise 7. Prove the theorem.

Recall our decomposition

Y = (11>/n+ C)Y

= 1y> + CY

= 1y> + E.

Under the normal distribution the elements of Z are mean zero, variance one

and uncorrelated, so all of our previous results about the matrix moments

hold:

1. E[y] = µ, Var[y] = Σ/n.

2. E[S/(n− 1)] = E[E>E/(n− 1)] = Σ.

14

3. y and E are uncorrelated.

What additional results can we obtain? A few standard results that you

should have seen before are the following:

1. y ∼ Np(µ,Σ/n).

2. y and E are independent, so y and S = E>E are independent.

3. S ∼ Wn−1(Σ)

Items 1 and 2 follow from things we’ve just discussed. Item 3 is less clear.

We will “derive” this result from an easy starting point:

Definition 3. Suppose Y = ZΣ1/2 where Z ∈ Rn×p with Z ∼ Nn×p(0, Ip ⊗In). Then Y>Y =

∑i yiy

>i ∼ Wn(Σ).

Corollary 2. If W ∼ Wn(Ψ) then

• E[W/n] = Ψ.

• Var[wij/n] = (ψijψij + ψiiψjj)/n

• W/n→ Σ a.s. as n→∞

We will now derive the result that S = E>E ∼ Wn−1(Σ). First note that

E>E =n∑i=1

eie>i

ei ∼ Np(0,n−1n

Σ),

and so E>E is the sum of the outer products of n multivariate normal vectors.

So why is E>E ∼ Wn−1(Σ) rather than E>E ∼ Wn(n−1n

Σ)?

15

Exercise 8. Show that Var[E] = Σ⊗C.

The problem is that, as can be seen from the variance of E, the rows of E are

not statistically independent. In fact, they will be linearly dependent with

probability 1, since each row of E may be expressed as a linear combination

of the other rows (as the rows sum to zero). Another way to see this is

that the “row covariance” C of E is singular and not positive definite - it is

rank n − 1 and not n. A random normal matrix or vector with a singular

covariance matrix is called a “singular normal distribution”.

To derive the distribution of E>E, we will use a trick that converts a singular

normal matrix or vector into a non-singular one of lower dimension. To do

this, we need to know a bit more about the centering matrix C. Recall

• C = I− 11>/n

• C is an n× n idempotent projection matrix

• C projects onto the null space of the vector 1/√n.

• Since 1 is 1-dimensional, its null space is (n− 1)-dimensional.

• C has one eigenvector with an eigenvalue of zero.

• Any eigenvector with a non-zero eigenvalue has an eigenvalue of 1.

Theorem 7. Let P be a symmetric idempotent matrix. Then the eigenvalues

of P are either zero or one.

Corollary 3. The centering matrix C = I− 11>/n has eigendecomposition

C = GIG> = GG>,

where G ∈ Rn×(n−1) satisfies G>G = In−1.

16

Exercise 9. Prove the theorem and corollary.

Now let R = G>Y, so R is a random (n− 1)× p matrix. Then

E>E = Y>CY

= Y>GG>Y

= R>R.

What is the distribution of R?

R = G>Y

= G>(1µ> + ZΣ1/2)

= G>ZΣ1/2

R ∼ N(n−1)×p(0,Σ⊗G>G)

= N(n−1)×p(0,Σ⊗ In−1).

So by the definition of the Wishart distribution,

E>E = R>R ∼ Wn−1(Σ).

What we have done is express the sum of squared variation of a centered n×pmatrix as the sum of squared variation of an uncentered (n− 1)× p matrix.

This and related dimension-reducing techniques are useful for separating the

problem of mean estimation from that of variance estimation.

Theorem 8. Let C = GG> with G>G = In−1.

• If z ∼ Nn(0, I) then G>z ∼ Nn−1(0, I).

• If Z ∼ Nn×p(0, Ip ⊗ In) then G>Z ∼ Nn−1×p(0, Ip ⊗ In−1)

• If Z ∼ Nn×p(0,Σ⊗ In) then G>Z ∼ Nn−1×p(0,Σ⊗ In−1)

17

• If Y ∼ Nn×p(1µ>,Σ⊗ In) then G>Y ∼ Nn−1×p(0,Σ⊗ In−1)

These follow from our basic facts about multivariate normal distributions.

Exercise 10. Prove the results.

To summarize,

• E = CY is mean-zero normal but with n linearly dependent rows.

• R = G>Y is mean-zero normal with n− 1 i.i.d. rows.

• E>E = R>R ∼ Wn−1(Σ).

Here is a more general version of what we have just done, which will come

in useful later when we study regression:

Theorem 9. If Y ∼ N(M,Σ⊗ I) and P ∈ Rn×n is a symmetric idempotent

matrix with rank r such that PM = 0, then Y>PY ∼ Wr(Σ).

This is more or less known as Cochran’s theorem.

Exercise 11. Prove the theorem.

Stochastic representation:

We now have the following representation of the Nn×p(1µ>,Σ ⊗ In) distri-

bution:

Y ∼ Nn×p(1µ>,Σ⊗ In) ⇔ Y

d= 1y> + GR, where

• y ∼ Np(µ,Σ/n),

18

• R ∼ Nn−1×p(0,Σ⊗ In−1),

• y and R are independent.

This representation is important - it allows us to separate the problems of

mean estimation and variance estimation. In particular, this result means

that inference for Σ based on

Y ∼ Nn×p(1µ>,Σ⊗ In)

can be accomplished based on

R = G>Y ∼ Nn−1×p(0,Σ⊗ In−1).

In other words, without (much) loss of generality, it is sufficient to consider

the case that µ = 0 when developing inferential tools for the variance of

multivariate normal populations. So for the remainder of this set of notes we

will assume Y ∼ Nn×p(0,Σ⊗ I), i.e. we replace R by Y and n− 1 by n for

notational simplicity.

Exercise 12. Consider a random effects model of the form

yi = ai1p + b + Wzi

a1, . . . , an ∼ i.i.d. N(0, σ2a)

z1 . . . , zn ∼ i.i.d. Np(0, Ip).

where the ai’s and zi’s are independent, and b, W and σ2a are unknown

parameters.

1. Write out a stochastic representation of the resulting n× p data matrix

Y, in matrix form.

2. Identify the matrix normal distribution of Y (i.e., find the mean and

variance of Y given b, W and σ2a).

19

3. Find an unbiased estimate of b. Discuss how estimating σ2a and WW>

might be challenging.

4. Suppose you fit an AMMI model to the data matrix Y. How would you

interpret the results? How would the estimates of the parameters in the

AMMI model relate to b, σ2a and W?

4 Decompositions of a normal matrix

Suppose we are going to observe a data matrix Y ∈ Rn×p for which E[Y] = 0

and E[Y>Y] = nΣ, and then

• do a PCA of Y,

• study the SVD of Y,

• use Y to estimate Σ.

It is useful to know the statistical properties of the above procedures, that

is, the behaviors of these procedures on average across datasets that we are

likely to see. For now, we define “on average” and “likely to see” via the

distributional assumption that Y ∼ Nn×p(0,Σ⊗ I) for some positive definite

Σ ∈ Rp×p.

We’ll start somewhat naively and intuitively, and then provide some more

formal results. First some notation:

Vr,m = X ∈ Rm×r : X>X = Ir, the r-dimensional Stiefel manifold in Rm.

Or = X ∈ Rr×r : X>X = Ir = XX> = Vr,r, the orthogonal group.

20

So for example, if Y = UDV> is the SVD of Y and n ≥ p, then

• U ∈ Vp,n

• V ∈ Op.

4.1 SVD of a normal matrix

Suppose you want to find the joint distribution of U, D and V. These

quantities are functions of Y, so one way to proceed is to do a change of

variables on the density of Y and see what we get. First, we need the density

of Y. Recall, under Y ∼ N(0,Σ⊗ I), the rows of Y are i.i.d. N(0,Σ).

p(Y|Σ) =n∏i=1

|2πΣ|−1/2 exp(−y>i Σ−1yi/2)

= |2πΣ|−n/2 exp(−∑

itr(yiy>i Σ−1)/2)

= |2πΣ|−n/2 exp(−tr(Y>Y>Σ−1)/2)

= (2π)−np/2|Σ|−n/2etr(−Y>YΣ−1/2).

Make sure you understand the steps involving the trace.

It would seem that the joint density of U,D,V is then given by

p(U,D,V|Σ) ∝ etr(−VDU>UDV>Σ−1/2)× |J|

= etr(−VD2V>Σ−1/2)× |J|

= etr(−D2V>Σ−1V/2)× |J|,

where J is some sort of Jacobian matrix, along the lines of J = dY/d(U,D,V).

From this we might make the following conjectures:

21

Conjectured distribution of U:

• p(Y|Σ) doesn’t depend on U.

• If J doesn’t depend on U, then the joint density of (U,D,V) is constant

in U.

• The random variable U is then

– uniformly distributed on Vp,n;

– independent of D and V.

Conjectured distribution of V: If J doesn’t depend on V, then

p(V|D) ∝ p(V,D) ∝ etr(D2V>AV),

where A = −Σ−1/2. This distribution has a name - the (generalized) Bing-

ham distribution. Distributions of this type are used in spatial statistics and

shape analysis, where the data might be axes or planes.

Exercise 13. Show that the Bingham distribution has antipodal symmetry:

if V ∼ Bingham(D2,A), with D2 a diagonal matrix, then Vd= VS , where

S is any fixed diagonal matrix with elements of -1 or +1.

Conjectured distribution of D: It is too much to hope for that |J| would

not depend on D. Therefore, the (marginal) distribution of the singular

values should be given by something like

p(D) ∝ |J|∫Op

etr(−D2V>Σ−1V/2)µ(dV).

These conjectures are more or less correct, but there are some technical

difficulties. The first one is that the Jacobian isn’t fully defined because the

SVD is not in fact unique:

22

Let S = diag(s1, . . . , sp), sj ∈ −1,+1. Then

UDV> = USD(VS)>.

This should make intuitive sense to you: The singular vectors (and eigenvec-

tors of Y>Y) describe axes of variation, not directions. Instead, we have the

following result:

Theorem 10. Let Y ∼ Nn×p(0,Σ⊗ I). Then Yd= UDV> where

• U ∼ uniform(Vp,n) is independent of (D,V);

• V|D ∼ Bingham(D2,−Σ−1/2);

• d21, . . . , d

2p are equal in distribution to the eigenvalues of a Wn(Σ) ran-

dom matrix.

Keep in mind, if we simulate U,D and V as described, then Y = UDV> is

an SVD of Y, not the SVD.

Properties of the Bingham distribution: The Bingham(L,Ψ) distribu-

tion on Op has density with respect to the uniform distribution given by

p(V|L,Ψ) = c(Ψ)etr(LV>ΨV),

so evidently this is a quadratic exponential family model.

What values of V do we expect from such a distribution?

Exercise 14. Show that E[V] = 0.

Exercise 15. Show that the Bingham(L,Ψ) distribution is the same as the

Bingham(L + aI,Ψ + bI) distribution.

23

So this distribution isn’t well described by its mean. Instead, let’s consider

the mode. A value of V is highly probable if tr(LV>ΨV) is large. Let

Ψ = EΓE> be the eigendecomposition of Ψ (note that if Ψ = −Σ−1/2, then

Ψ = E[−Λ−1/2]E> if Σ = EΛE>). Then

tr(LV>ΨV) = tr(LV>EΛE>V)

=

p∑j=1

p∑k=1

ljγk(v>j ek)

2.

So we see that this will be large if the columns of V are aligned with those

of E. Since the diagonal elements of L and Γ are decreasing, the “best”

alignment is to align v1 with e1, v2 with e2, etc.

Theorem 11. The modes of the Bingham(L,Ψ) distribution are E and ES :

S = diag(s), s ∈ −1, 1p.

Additionally, the concentration of V around its modes is increasing in the

gaps of diag(L), and the gaps of diag(Γ).

For example,

l1 ≈ l2 ⇒ v1d≈ v2.

If γ1 is substantially bigger than γ2, then v1 and v2 will span the space

defined by e1 and e2 with high probability, but will be roughly uniform on

this subspace.

Returning to our case where L = D2, the squared singular values of Y, and

Ψ = −Σ−1/2, with a bit of algebra (and Exercise 15) we can write the log

density of V as

tr(LV>E[−Λ−1/2]E>V) =∑j

∑k

ljδk(v>j ek)

2 + c

24

where ek are the eigenvectors of Σ = EΛE>, and δk = −1/(2λk) + 1/(2λ1)

is the difference between the minus half the kth and first eigenvalue of Σ

(which is positive).

This means that, for example, the axes defined by V will align with those

defined by E, particularly if the eigengaps of L (from the sample covariance

matrix), and those of Λ (from the population covariance matrix) are large.

When will these be large? We can’t control Σ, but remember

L = eval(Y>Y)

= eval(∑i

yiy>i )

So gaps should be getting bigger as n increases, and the eigenvectors/right

singular vectors V should concentrate more and more around those of Σ. We

already knew this though, by the properties of the Wishart distribution.

4.2 Polar decomposition of a normal matrix

A related decomposition of a matrix is the unique polar decomposition:

Y = Y(Y>Y)−1/2(Y>Y)1/2

= (Y(Y>Y)−1/2)(Y>Y)1/2

= HS1/2,

where you can show that H ∈ Vp,n and S is a positive (semi)definite matrix.

Here, H determines the p-dimensional subspace of Rn that the observations

are in, and HH> is the projection matrix onto this space. S stretches the

points in this space.

Theorem 12. Let Y = HS1/2 be the polar decomposition of Y. Then Y ∼Nn×p(0,Σ⊗ I) iff

25

• H ∼ uniform(Vp,n);

• S ∼ Wn(Σ) ;

• H and S are independent.

This should seem reasonable. We already knew that S = Y>Y ∼ Wn(Σ).

Also, the SVD of Y is

Y = UDV>

= UV>VDV>

= (UV>)(VDV>).

Exercise 16. Show that S1/2 = VDV>, H = UV> and that H ∈ Vp,n.

The theorem on the polar decomposition implies two interesting things:

• UV> ∼ uniform(Vp,n), regardless of “which” SVD is obtained.

• UV> is independent of S1/2 = VDV>, and hence also of S.

5 Decompositions of a Wishart matrix

Our decompositions so far have included

• SVD: Yd= UDV> , U is uniform, independent of D,V;

• Polar: Y = UDV>, H = UV> uniform, independent of S1/2 =

VDV>.

26

The uniformity of U (and UV>) results from the independence of the rows

of Y. In either decomposition, what remains are D and U, which of course

make up the covariance matrix/sum of squares matrix:

S = Y>Y

= VD2V>

≡ VLV> ∼ Wn(Σ).

Now recall from our discussion of PCA that the columns of V are our prin-

cipal axes, and the values l1, . . . , lp quantify the amount of data variation

along each axis. How do these things relate to Σ?

MLE of Σ: Let S ∼ Wn(Σ) where Σ is any positive definite matrix. The

density of S is given by

f(S) = pWn(S|Σ) = (2np/2Γp(n/2)|Σ|n/2)−1|S|(n−p−1)/2etr(−SΣ−1/2)

As a function of Σ, the log likelihood is given by

−2`(Σ : S) = n log |Σ|+ tr(Σ−1S).

The MLE Σ is the minimizer of this quantity.

Theorem 13. The MLE is Σ = S/n.

Proof. We can prove this without resorting to matrix calculus. Let’s repa-

rameterize as Σ = S1/2Ψ−1S1/2, or Ψ = S1/2Σ−1S1/2. Since this is a bijection

we have

minΣ>0

n log |Σ|+ tr(Σ−1S) = minΨ>0

n log |ΣΨ|+ tr(Σ−1Ψ S)

where ΣΨ = S1/2Ψ−1S1/2. The objective function on the right is

n log |S1/2Ψ−1S1/2|+ tr(S−1/2ΨS−1/2S) = n log |S| − n log |Ψ|+ tr(Ψ)

27

Now let Ψ = EΩE> be the eigendecomposition of Ψ, and recall that

|Ψ| = |EΩE>| =p∏1

ωj

tr(Ψ) = tr(EΩE>) =

p∑1

ωj.

Therefore the objective function is

n log |S|+∑

ωj − n∑

logωj

n log |S|+∑

(ωj − n logωj).

The function ωj − n logωj is strictly convex in ωj and uniquely minimized

by ωj = n, for each j. This means that

Ω = E(nI)E> = nI.

The MLE of Σ is then

Σ = S1/2(I/n)S1/2 = S/n.

(derivation due to Michael Perlman).

Now let Σ = ΓΛΓ> be the eigendecomposition of the population covariance

matrix Σ, and S = VLV>. That the MLE is Σ = S/n means that

• Γ = V;

• Λ = L/n.

So the sample principal axes are the MLEs of the population principal axes.

28

5.1 Eigendecomposition of Wishart matrix

We have already discussed the distribution of V. Now we need to obtain the

distribution of D, or equivalently L. We begin with useful general result:

Theorem 14 (Muirhead [1982]). If S is a random p × p positive definite

matrix with density f(S) then the joint density of its eigenvalues l1, . . . , lp is

given by

p(l1, . . . , lp) =πp

2/2

Γp(p/2)

∏j<j′

(lj − l′j)∫Op

f(VLV>)µ(dV),

where µ is the uniform probability measure on Op.

We’ll go through the basic idea of the proof, leaving the details to the more

interested reader.

Let S = VLV> be the eigendecomposition of S. We will proceed by obtaining

the joint density of V and L and then integrating out L:

p(V,L) dVdL = p(S(V,L)) dS

p(V,L) = p(S(V,L)) dSd(V,L)

Using some theory of matrix differentials, you can show that

dS =2pπp

2/2

Γp(p/2)

(∏j<j′

(lj − l′j)

) (p∏j=1

dlj

)dV,

where Γp is the multivariate gamma function.

29

This is the differential of S in the neighborhood of some value of V and L,

so

p(V,L) =

(2pπp

2/2

Γp(p/2)

∏j<j′

(lj − l′j)

)f(VLV>)

To obtain the density of diag(L), is seems we would just integrate this over

V with respect to the uniform measure. However, as we discussed before,

the eigendecomposition is not unique because we can multiply any column

of V by −1 and retain the representation. Therefore, there are 2p possible

representations. To make a 1-1 transformation between Y and V,L we

need to impose a restriction, such as that the first row of V is positive. This

restricts V to 1/2p of the space Op. Since the integrand doesn’t depend on

which of these 2p representations is being used, we can just integrate over all

of Op and divide the result by 2p, giving the answer:

p(l1, . . . , lp) =1

2p

∫Op

p(V,L)µ(dV)

=πp

2/2

Γp(p/2)

∏j<j′

(lj − l′j)∫Op

f(VLV>)µ(dV).

Now we can consider the special case of a Wishart density,

f(S) = pWn(S|Σ) = (2np/2Γp(n/2)|Σ|n/2)−1|S|(n−p−1)/2etr(−SΣ−1/2)

Plugging things in is straightforward:

p(l1, . . . , lp|Σ) = c(n, p)|Σ|−n/2p∏j=1

l(n−p−1)/2j

∏j<j′

(lj−lj′)∫Op

etr(−Σ−1VLV>/2)µ(dV).

Due to its complexity, this density is difficult to interpret, and even calculate.

However, some special cases can give us some insight: For example, in the

30

case Σ = Iσ2, we have

p(l1, . . . , lp|I) = c(n, p)|σ2I|−n/2p∏j=1

l(n−p−1)/2j

∏j<j′

(lj − lj′)∫Op

etr(−IVLV>/[2σ2])µ(dV)

= c(n, p)σ−npp∏j=1

l(n−p−1)/2j

∏j<j′

(lj − lj′)∫Op

etr(−L/[2σ2])µ(dV)

= c(n, p)σ−npp∏j=1

l(n−p−1)/2j

∏j<j′

(lj − lj′) exp(−∑

lj/[2σ2]).

The second partial derivatives of the log density with respect to lj and lj′ ,

with j < j′ (so lj > lj′) are

d log p(l)

dlj dlj′= − 1

(lj − lj′)2,

which indicates a negative correlation between the eigenvalues. This makes

sense, as the sum of the eigenvalues will be concentrated around ntr(Σ). If

one lj is too small, then another will pick up the slack.

Exercise 17. Show that E[∑

j lj] = ntr(Σ).

However, this covariance must be going to zero as n → ∞ since the magni-

tude of the lj’s is increasing linearly in n (since S is the sum of n rank one

matrices).

5.2 Asymptotic distribution of the eigendecomposition

The following asymptotic results for the sample covariance matrix may be

more useful: Let S/n = Y>Y/n have the eigendecomposition VLV (note

that now l1, . . . , lp are the eigenvalues of S/n and not S).

31

Theorem 15. Let S/n = VLV> and let Σ = ΓΛΓ>, with the elements of Λ

being distinct and ordered. Then

√n(l− λ)

d→ Np(0, 2× diag(λ λ)),

where l = diag(L) and λ = diag(Λ). Also, letting gj =√n(vj − γj),

• g1, . . . ,gp are asymptotically jointly normal and mean zero

(vj is consistent for γj);

• Var[gj]→∑

j 6=j′λjλj′

(λj−λj′ )2γjγ

>j′

• Var[gj,gj′ ]→ −λjλj′

(λj−λj′ )2γjγ

>j′.

The last correlation being negative is due to the vj’s being orthogonal.

In particular, this result gives an asymptotic normal distribution for the

sample principal axes (the vj’s) in terms of the population principal axes

(the ej’s) and the population eigenvalues.

Discuss: When will the asymptotic variance of vj about ej be

• small?

• big?

• not go to zero?

Exercise 18. Show that if S ∼ Wn(Σ), then Γ>SΓ ∼ Wn(Λ).

Proof of consistency:

32

First lets consider the case that Σ = Λ, a diagonal matrix. Then

nX ≡ S ∼ Wn(Λ)

Xd=∑

eie>i /n,

where e1, . . . , en ∼ i.i.d. N(0,Λ). Now note that

• The diagonal elements of X are independent;

• xj,j ∼ λjnχ2n;

• E[xj,j] = λj ; Var[xj,j] = (λ2j/n

2)× 2n = 2λ2j/n;

• E[xj,k] = 0 ; Var[xj,k] = λjλk/n;

By the CLT, we have

√n(diag(X)− λ)

d→ Np(0, 2Λ2)√nxj,k

d→ N(0, λjλk),

So in particular

• xjj = λj +Op(n−1/2)

• xjk = Op(n−1/2)

However, what we want to show are that the eigenvalues of X converge to

Λ, not the diagonal elements of X. However,

• the off-diagonal elements of X are approaching zero, so

• X is converging to a diagonal matrix, and

33

• the eigenvalues of a diagonal matrix are the (ordered) diagonal entries.

The intuition is then that

X ≈ diag(X) ≈ Λ

eval(X) ≈ diag(X) ≈ Λ.

We have already described formally the approximation diag(X) ≈ Λ, now we

have to be precise about eval(X) ≈ diag(X).

Recall an eigenvalue l of X satisfies |X− lI| = 0. Now we use an expansion

for determinants of the form

|A| =∏j

ajj + stuff,

where the stuff involves sums over terms that involve factors like ajkaj′k′

(times some other stuff). This means that

|X− lI| =∏j

(xjj − l) + stuff

involves sums of products over elements of X. the products always involve

at least two non-diagonal elements, and so

stuff = Op(n−1/2)×Op(n

−1/2) = Op(n−1).

In particular,

• n× stuff is bounded in probability;

•√n× stuff

p→ 0.

34

For example, if X ∈ R2×2, we have

|X− lI| = (x11 − l)(x22 − l)− x212.

Now at an eigenvalue, we have

|X− lI| = 0 =∏j

(xjj − l) +Op(n−1)

so ∏j

(xjj − l) = Op(n−1).

Now we know each xjjp→ λj, and the λj’s are distinct, so the xjj’s are

converging to distinct things. This means that the terms on the product can

be shrinking for at most one j. In other words,

xj = lj +Op(n−1).

or if you prefer,

xj = lj + z/n,

where z is some random variable (bounded in probability).

Putting things together, we have

√n(lj − λj) =

√n(lj − xj + xj − λj)

=√n(xj − λj) + z/

√n

d→ N(0, 2λ2j).

Finally, consider the case that Σ ∼ Wn(Σ), with Σ = ΓΛΓ>. Letting nX =

Γ>SΓ, we have

• nX ∼ Wn(Λ);

35

• eval(X) = eval(S).

So the limiting distribution of eval(X) where X ∼ Wn(Λ) is the limiting

distribution of eval(S) where X ∼ Wn(Σ). [Mardia et al., 1979, chapter 8].

Application: Population variance explained.

Recall that the sample variance explained by the first q principal axes is

pq = (l1 + · · ·+ lq)/(l1 + · · ·+ lp).

Under the normal model, we know that this is converging to the population

version

ψq = (λ1 + · · ·+ λq)/(λ1 + · · ·+ λp).

Our results above can give us tests that ψq takes on a particular value, or

alternatively, confidence intervals for this population quantity.

Exercise 19. Using the delta method, show that

√n(pq − ψq)

d→ N(0, v)

where

v =2tr(Σ2)

tr(Σ)2(ψ2 − 2αψ + α)

and α = (λ21 + · · ·+ λ2

q)/(λ21 + · · ·λ2

p).

5.3 Evaluating partial isotropy

Suppose we observe signals from p different sensors, which measure the out-

put q different sources plus ambient noise. A model for such signal data

is

y = Az + σe

where

36

• z ∈ Rq is a Gaussian source vector;

• e ∈ Rp is a Gaussian noise vector;

• A ∈ Rp×q,

with aj,k representing how well source k is measured by sensor j.

Clearly, y ∼ Np(0,AA> + σ2I). Now suppose we have n independent mea-

surements from this process. The model for the data matrix Y is then

Y ∼ Nn×p(0, (AA> + σ2I)⊗ I),

and so Y>Y ∼ Wn(AA> + σ2I).

Suppose we don’t know q, the number of sources, and would like to infer this

from the data. A simple ad-hoc approach would be to perform a series of

tests against H : q = q0.

Let Σ = AAT + σ2I. What are the eigenvalues of Σ? Note that A is p× q,so if p ≥ q it has an SVD of the form A = UDV> with U ∈ Vq,p. This gives

AA> + σ2I = UD2U> + σ2I

= [UU⊥]D2[UU⊥]> + σ2[UU⊥][UU⊥]>

= [UU⊥](D2 + σ2I)[UU⊥]>

where

D2 =

(D2 0

0 0

)and U⊥ ∈ Vp−q,p is any orthonormal basis for the null space of U, so that

[UU⊥] ∈ Op. The eigenvalues of Σ are therefore given by the diagonal

elements of D2 + σ2I, which are

diag(D2 + σ2I) = (d21 + σ2, . . . , d2

q + σ2, σ2, . . . , σ2) ∈ Rp.

37

Such a covariance matrix is said to have partial isotropy [Mardia et al., 1979].

Isotropic means equal in every direction. If Σ = σ2I, then we would say the

probability distribution was (fully) isotropic. Under partial isotropy, the

distribution is isotropic only on a subspace. More recently, this type of

covariance matrix has been referred to as a “spiked covariance model.”

[Draw a picture]

Exercise 20. Find a p − q dimensional subspace such that the distribution

of the projection of y onto the subspace is isotropic.

Let’s return to our testing problem. First let’s test H : q = p− 2, or in other

words, H : λp = λp−1.

First attempt: Let’s build a simple ad-hoc test from our existing results.

Recall our theorem that says

√n(l− λ)

d→ Np(0, 2Λ2).

This suggests that under H : λp = λp−1 for large n, we should have

√n(lp − lp−1)

d≈ N(0, 2(λ2

p + λ2p−1)).

or that√n

lp − lp−1√2(λ2

p + λ2p−1)

d≈ N(0, 1).

So maybe we could reject H when |z(l)| > 1.96 and hope that controls the

type I error of rejecting λp = λp−1 (and falsely claiming q > p− 2).

Unfortunately this approach does not work. Recall that one of the conditions

of the theorem regarding the asymptotic distribution of the lj’s was that the

population eigenvalues, the λj’s, were all distinct. However, for our test we

38

need the null distribution of the lj’s under the condition that some of the

eigenvalues are equal. To see what goes wrong in this case, examine the

simulated results below:

1 2 3 4 5

−1.

0−

0.5

0.0

0.5

1.0

1.5

2.0

log

lam

bda

1.3 1.4 1.5 1.6 1.7 1.8 1.9

0.40

0.45

0.50

0.55

0.60

l4

l 5 X0

1 2 3 4 5

0.0

0.5

1.0

1.5

2.0

log

lam

bda

0.90 0.95 1.00 1.05 1.10 1.15 1.20 1.25

0.75

0.80

0.85

0.90

0.95

1.00

1.05

1.10

l4

l 5

X

0

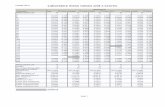

The first row of plots give a description of the sample eigenvalue distribution

under λ = (7, 5, 3, 1.5, .5), a situation where all the values are distinct. Note

that the marginal distributions of the lj’s are centered around the λj’s, and

the joint distribution of l4 and l5 looks normal and uncorrelated.

39

The second row of plots give a description of the sample eigenvalue distri-

bution under λ = (7, 5, 3, 1, 1), a situation where all the last two population

eigenvalues are equal. Note that the marginal distributions of l4 and l5 are

not centered around λ4 = λ5 = 1. The joint distribution of l4 and l5 is also

not approximately normal - we have l4 > l5 by design. Additionally, as esti-

mators of λ4 and λ5 the values of l4 and l5 have a bias that goes away slower

than n1/2.

Therefore, under any sort of partial isotropy model, the asymptotic normal

distribution we derived earlier is not valid. As an alternative means of testing

Hq : λq+1 = · · · = λ1 we consider a likelihood ratio test. To perform such a

test, we need to obtain the MLE of Σ under the partial isotropy model.

Recall that the partial isotropy model with q “spikes” is that

eval(Σ) = (d21 + σ2, . . . , d2

q + σ2, σ2, . . . , σ2)

or equivalently

eval(Σ) = (λ1, . . . , λq, λ, . . . , λ).

If S ∼ Wn(Σ) then the -2 log-likelihood, as a function of Σ alone, is given by

−2 log(|Σ|−n/2etr(−SΣ−1/2) = n log |Σ|+ tr(SΣ−1).

Now let S = nVLV> so that L are the eigenvalues of the sample covariance

matrix, and let Σ = ΓΛΓ>. Then the MLEs of (Γ,Λ) are the minimizers of

log |Σ|+ tr(LV>ΓΛ−1Γ>V)

Now for each Λ, a (non-unique) minimizer in Γ of the trace term is given by

Γ>V = I or Γ = V. This follows from our discussion of the modes of the

Bingham distribution. Plugging in this value of Γ means that the MLE for

Λ is the minimizer ofq∑1

log λq + (p− q) log λ+ tr(LΛ−1) =

q∑1

log λj + (p− q) log λ+

q∑1

lj/λj +

p∑q+1

lj/λ.

40

The MLE of λj is the minimizer of

log λj + lj/λj,

which is λj = lj. The MLE of λ is the minimizer of

(p− q) log λ+

p∑q+1

lj/λ,

which is λ =∑p

q+1 lj/(p− q), the average of the sample eigenvalues beyond

the first q.

The likelihood ratio test involves the maximized likelihoods under competing

models. The maximized -2 log-likelihood for Σ based on the normal data

matrix is (without the 2π’s )

−2 log p(Y|Σ) = n log |Σ|+ tr(SΣ−1).

Under the spiked covariance model, this is

n

(q∑1

log lj + (p− q)(log lp−q) + q + (p− q)lp−q/lp−q

)=

n

(q∑1

log lj + (p− q) log lp−q

)+ np.

Under the unrestricted model, the maximized -2 log likelihood is n∑

logp1 lj+

np, and so the difference is

n× (−p∑q+1

log lj + (p− q) log lp−q).

Now recognize the sum of the log eigenvalues in terms of the geometric mean:

log lp−q = log

(p∏q+1

lj

)1/(p−q)

(p− q) log lp−q =

p∑q+1

log lj.

41

So the likelihood ratio statistic is given by

−2 log(p(Y|Σq)/p(Y|Σ)) = n× (p− q) log(lp−q/lp−q),

in words, the statistic is n times p − q times the log ratio of the arithmetic

mean and geometric mean of the q+1st through pth eigenvalues. This should

make sense intuitively: If the sample eigenvalues are highly skewed, then the

arithmetic mean will be much larger than the geometric, and we will reject.

If these sample eigenvalues are nearly equal, then the ratio of the two means

will be close to one, and we will accept.

Exercise 21. Obtain the LR test statistic for testing H : λq > λq+1 = · · · =λp versus K : λq > λq +1 > λq+2 = · · · = λp, that is, q “sources” versus q+1

sources. Also obtain the asymptotic null distribution. Compare this test to

that of H versus an alternative of p distinct eigenvalues. Discuss situations

where you think one or the other may have more power.

Null distribution: To perform a test of H : rank(A) = q versus K :

rank(A) = p, we need to know the null distribution of the LRT statistic,

t(Y) = n× (p− q) log(l/l).

Standard results on likelihood ratio tests tell us that, asymptotically,

t(Y) ∼ χ2δ

where δ is the difference in the number of parameters in the full model as

compared to the null model. It seems like the difference in the number of

parameters is p − (q + 1), as under H we restrict λq+1 = · · · = λp = λ, and

only allow λ1, . . . , λq, λ to vary independently.

The correct change in the number of parameters can be most easily obtained

from the representation of Σ as

Σ = AA> + σ2I,

42

where A ∈ Rp×q. The number of parameters here might seem to be p×q+1,

but this is not correct. Note that for any orthogonal matrix R ∈ Oq,

ARR>A + σ2I = AA> + σ2I = Σ.

The correct degrees number of parameters is obtained by quotienting out this

invariance of the model to orthogonal rotations of A on the right. Alterna-

tively, we can consider a identifiable parameterization: Letting A = WCXT

be the SVD of A, we have

AA> = WC2W.

The number of parameters here is q for C2 and

1. p− 1 for w1 (a normal vector in Rp);

2. p− 2 for w2 (a normal vector in Rp, orthogonal to w1);

...

3. p− q for wq.

This gives a total of q + pq −(q+1

2

)parameters for AA>, plus 1 for σ2.

The full model places no restrictions on Σ, which has(p+1

2

)free parameters.

The difference is then

(p+ 1)p/2− [1 + (p+ 1)q − (q + 1)q/2] = (p− q + 2)(p− q − 1)/2.

Let’s confirm (or not reject) this claim empirically:

43

n<-500 ; p<-10 ; q<-5

lambda<- c( p:(p-q+1), rep(1,p-q) )

llrs<-NULL

for(s in 1:5000)

Y<-sweep( matrix(rnorm(n*p),n,p),2,sqrt(lambda),"*")

l<-eigen(crossprod(Y))$val/n

l<-l[-(1:q)]

llrs<- c(llrs,n*(p-q)*( log(mean(l)) - sum(log(l))/(p-q) ) )

x2<-rchisq(length(llrs),(p-q+2)*(p-q-1)/2 )

plot(sort(llrs), sort(x2)) ; abline(0,1)

10 20 30

1020

3040

sort(llrs)

sort

(x2)

An alternative approach to obtaining a null distribution would be to note that

44

the non-asymptotic distribution of the test statistic is scale invariant, and

that for large n it won’t depend on the actual values of λ1, . . . , λq under the

null. Therefore, one could simulate a null distribution from any distribution

in the null.

piso_test<-function(Y,q)

n<-nrow(Y) ; p<-ncol(Y)

l<-eigen(crossprod(Y))$val/n

l<-l[-(1:q)]

1-pchisq(n*(p-q)*(log(mean(l)) - sum(log(l))/(p-q)) , (p-q+2)*(p-q-1)/2)

n<-500 ; p<-10 ; q<-5

lambda<- c( p:(p-q+1), rep(1,p-q) )

PVAL<-NULL

for(s in 1:5000)

Y<-sweep( matrix(rnorm(n*p),n,p),2,sqrt(lambda),"*")

pval<-NULL

for(q in 1:(p-2)) pval<-c(pval, piso_test(Y,q) ) PVAL<-rbind(PVAL,pval)

boxplot(PVAL,col="lightblue")

45

1 2 3 4 5 6 7 8

0.0

0.2

0.4

0.6

0.8

1.0

Discuss:

• Suppose you do a sequential test at level α starting with q = 1 and

stopping when you accept. Describe the asymptotic probability of ob-

taining the correct q.

• How could you adjust the this procedure to get an asymptotically con-

sistent estimate of q?

46

6 Shrinkage estimators of covariance

6.1 Overdispersion of sample eigenvalues

Recall that the MLE of Σ for the model Y ∼ N(0,Σ⊗ I) is Σ = S/n, where

S = Y>Y. Letting Σ = ΓΛΓ>, and S = VLV> be the eigendecompositions

of Σ and S respectively, the precision of Σ depends on the quality of the

approximation

V(L/n)V> ≈ ΓΛΓ>.

So in some sense, the precision of the estimator depends on how good L/n,

the eigenvalues of Σ, is as an estimate of Λ, the eigenvalues of Σ.

The figure below displays the marginal distributions of the sample eigenvaluesof Σ = Y>Y/n, where Y ∼ N30×10(0,Λ ⊗ I) for three different diagonalmatrices Λ whose elements sum to one (the horizontal dashed lines are thediagonal elements of Λ).

1 2 3 4 5 6 7 8 9 10

0.0

0.1

0.2

0.3

0.4

0.5

1 2 3 4 5 6 7 8 9 10

0.0

0.1

0.2

0.3

0.4

0.5

1 2 3 4 5 6 7 8 9 10

0.0

0.1

0.2

0.3

0.4

0.5

So we see that in this case where n is not very large compared to p, the

sample eigenvalues are not particularly close to their population counterparts.

In each case, the largest sample eigenvalues are too big, and the smallest

47

ones too small. Possibly an estimator with lower risk than the MLE could

be obtained by “shrinking” the extreme eigenvalues towards each other by

some pre-specified amount. Alternatively, maybe the amount of shrinkage

could be determined adaptively from the data. In particular, if our sample

eigenvalues looked like those on the left-hand plot, we might consider some

sort of partial isotropy model (which would shrink the smallest eigenvalues

towards each other). Alternatively, if the sample eigenvalues looked like those

on the right-hand plot, we might consider shrinking all eigenvalues towards

each other. More generally, we might consider estimators of the form

Σ(S) = VΛ(L)V>

where Λ() maps the sample eigenvalues to some shrunken version of them.

Such an estimator Σ is called orthogonally equivariant. It turns out that

there exist orthogonally equivariant estimators that uniformly dominate the

MLE, depending on the risk function.

6.2 Equivariant covariance estimation

Suppose you are going to collect some data from a multivariate normal pop-

ulation,

Y ∼ Nn×p(0,Σ⊗ I),

where Σ could be any positive definite matrix. You’ve decided that some

estimator f(Y) is pretty good, and so will use it to estimate Σ. Now suppose

that for some reason, the data you get are not Y, but Y = YG> for some

known, invertible matrix G ∈ Rp×p. For example, maybe the variables were

• recorded in a different order than intended

(so G is a permutation matrix);

48

• recorded in a different coordinate system

(so G is an orthogonal matrix);

• recorded in terms of some linear combination of the original variables

(so G is a non-singular matrix).

What should your estimate of Σ be?

Method 1: Since G is invertible, we can easily enough transform the ob-

served data Y to obtain the data matrix Y = YG−> that we would’ve

obtained, had we gathered the data as planned. In this case, we would use

the estimate

Σ1 = f(Y) = f(YG−>).

Method 2: If the true covariance of Y is Σ, then the true covariance of

Y is Σ = GΣG>, which can be any positive definite matrix if Σ can be

anything. Therefore, the model for Y is the same as that of Y. Since you

were perfectly happy to use f(Y) as an estimate of Σ, you should be just as

happy to estimate Σ asˆΣ = f(Y).

But note that if Σ = GΣG>, then Σ = G−1ΣG−>, which suggests estimating

Σ with

Σ2 = G−1f(Y)G−>.

Invariant loss: To further justify Method 2, consider estimating Σ (or Σ)

using a loss function L(Σ,∆) that is invariant, in that

L(Σ,∆) = L(GΣG>,G∆G>).

49

What is the risk of estimating Σ with Σ2 under such a loss?

R(Σ, Σ2) = EY∼Σ[L(Σ,G−1f(Y)G−>)]

= EY∼Σ[L(GΣG>, f(Y))]

= EY∼Σ[L(Σ, f(Y))] = R(Σ, f).

So the “risk profile” of estimating Σ with Σ2 is

R(Σ, Σ2) : Σ ∈ S+p = R(Σ, f) : Σ ∈ S+

p ,

since as Σ varies over S+p so does Σ. But this is the same as the risk profile

of estimating Σ with Σ1:

R(Σ, Σ1) : Σ ∈ S+p = R(Σ, f) : Σ ∈ S+

p .

To summarize,

• if you are happy to use f(Y) to estimate Σ from Y ∼ Nn×p(0,Σ⊗ I),

regardless of what Σ is,

• you should be just as happy (in terms of risk) to use G−1f(YG>)G−>.

Principle of equivariance: Many statisticians have argued that, if the

model is invariant to the transformation Y 7→ YG>, and the loss function is

also invariant (so that L(Σ,∆) = L(GΣG>,G∆G>)), then both methods of

estimation are equally valid, and so you should use an estimator f for which

the two methods give the same result. For such an estimator, we have

G−1f(Y)G−> = f(Y)

f(Y) = Gf(Y)G>

f(YG>) = Gf(Y)G>.

50

An estimator that satisfies the above equation is said to be equivariant with

respect to the transformation G.

For simplicity, for the rest of this section we will only consider estimators

that are functions of the complete sufficient statistic S = Y>Y. In this case,

equivariance of an estimator f with respect to G implies that

Σ(GSG>) = GΣ(S)G>.

Equivariance with respect to a group: Let G be a set of p×p matrices

that form a group under matrix multiplication, so that

• I ∈ G;

• if G is in G then G is invertible and G−1 ∈ G;

• if G1 and G2 are in G, then G1G2 is in G.

If an estimator is equivariant with respect to every member G of a group Gof transformations, then it is said to be equivariant with respect to G.

Example: Consider an estimator of Σ of the form f(S) = cS for some

c > 0. Then

f(GSG>) = cGSG>

= Gf(S)G>

for all matrices G. The set of all p× p matrices doesn’t form a group under

matrix multiplication (as some matrices are not invertible), but the set of

non-singular matrices is a group, called the general linear group, or GL. As

we’ve shown, estimators of the form cS are equivariant with respect to this

group.

51

Exercise 22. Show that every GL-equivariant estimator can be written as

Σ(S) = cS for some scalar c > 0.

Risk of equivariant estimators If the loss is invariant then the risk of

an equivariant estimator is constant on orbits of the parameter space under

the group:

Theorem 16. Let Σ(S) and L(Σ,∆) be equivariant and invariant with re-

spect to a group G, respectively. Then R(Σ, Σ) = R(GΣG>, Σ).

Proof.

R(Σ, Σ) = E[L(Σ, Σ(S))|Σ]

= E[L(GΣG>, Σ(GSG>))|Σ]

= E[L(GΣG>, Σ(S))|GΣG>]

= R(GΣG>, Σ)

We say a group of matrices G is is transitive over S+p if you can get from

one element of S+p to any other element by transformation by an element of

G. Symbolically, this means that for every Σ1, Σ2 there exists a G ∈ G such

that Σ2 = GΣ1G>.

Corollary 4. If G is transitive then R(Σ, Σ) is constant in Σ.

For example, GL is transitive, so the risk of any GL-equivariant estimator

under a GL-invariant loss is constant.

In general, if G is transitive, then the risk function of each equivariant estima-

tor reduces to a single number. This typically means that there will exist an

52

equivariant estimator that uniformly minimizes the risk (among equivariant

estimators).

Exercise 23. Find the uniformly minimum risk GL-equivariant estimator

under the loss function LQ(Σ,∆) = tr([∆Σ−1 − I]2).

Exercise 24. Find the uniformly minimum risk GL-equivariant estimator

under the loss function LS(Σ,∆) = tr(∆Σ−1)− log |∆Σ−1| − p.

6.3 Orthogonally equivariant estimators

At the beginning of this section we entertained the idea of “shrinking” the

eigenvalues of a sample covariance matrix because we know that the sam-

ple eigenvalues are biased as estimates of the population eigenvalues. More

generally, consider estimators of Σ of the form

Σ(S) = VΛ(L)V>

where S = VLV> is the eigendecomposition of S and Λ() maps diagonal

matrices with ordered elements to the same set. Such an estimator is called

orthogonally equivariant, because it is invariant with respect to the orthog-

onal group: For every R ∈ Op,

Σ(RSR>) = Σ([RV]L[RV]>)

= [RV]Λ(L)[RV]>

= R[VΛ(L)V>]R> = RΣ(S)R>.

In fact, all orthogonally equivariant estimators can be written this way:

Theorem 17. An estimator of Σ ∈ S+p is orthogonally equivariant if and only

if it can be written as VΛ(L)V>, where S = VLV> is the eigendecomposition

of S and Λ(L) is a diagonal matrix.

53

Exercise 25. Prove the theorem.

Now we need to consider what the eigenvalue shrinkage should be. The

appropriate amount of shrinkage depends on what the loss function is. We

will focus on the LQ loss given in Exercise 23. Importantly, this loss is

invariant, which means that the risk function simplifies considerably:

Theorem 18. If Σ is orthogonally equivariant, then its risk function R(Σ, Σ) =

E[L(Σ, Σ)|Σ] does not depend on the eigenvectors of Σ, so if the eigenvalues

of Σ are Λ, we have

R(Σ, Σ) = R(Λ, Σ)

if Σ = ΓΛΓ>.

Exercise 26. Prove the theorem.

As you might expect, the “worst case” for the MLE is when the eigenvalues

are all the same so Λ = λI and n is not much bigger than p. In this case,

Wigner’s semicircle law says that as n→∞ and p→∞ with p/n→ r, then

l1/n→ λ(1 +√r)2

lp/n→ λ(1−√r)2.

This led Stein (1975) to argue for the shrinkage estimator ΣS(S) = VΛ(L)V>

where

λk(L) = lk/(n+ p+ 1− 2k).

Note that ck is less than 1/n for the top half of the eigenvalues (which need

to be shrunk) and and larger than 1/n for the bottom half (which need to be

expanded). The resulting estimator is minimax, and also dominates the MLE

S/n - it has lower risk than the MLE regardless of Σ [Dey and Srinivasan,

1985].

54

This is the best estimator of the form λk(L) = cklk in this worst case that

Σ = λI. However, things are rarely as bad as they could be. If we look at

the sample eigenvalues, we might decide that Σ = λI is unlikely, and decide

to shrink somewhat differently. One approach is Stein’s (1975) adaptive

eigenvalue shrinkage estimator, given by

λk(L) = lk/

(n− p+ 1 + 2lk

∑k′ 6=k

(lk − lk′)−1

)+

However, sometimes the diagonal values of Λ(L) are not in decreasing order,

suggesting that they be monotonized before being used to construct Σ. Lin

and Perlman [1985] showed empirically that that Stein’s estimator is better

than the MLE and the non-adaptive minimax estimator given above, over a

wide range of Λ values.

Stein’s adaptive shrinkage estimator is a bit messy. As an alternative, Haff

[1980] studied an adaptive empirical Bayes estimator that is minimax and

therefore dominates the MLE in terms of risk. Haff’s estimator may be

derived as follows:

• Prior: Σ−1 ∼ Wν(Ψ−1/a);

• Model: S ∼ Wn(Σ);

• Posterior: Σ−1|S ∼ Wn+ν((S + aΨ)−1);

• Posterior mean: (S + aΨ)/(ν + n− p− 1).

Haff’s idea was essentially to allow allow a to be estimated from the data

55

and the marginal distribution of S. For example,

S−1 ≈ E[S−1] = E[Σ−1]/(n− p− 1)

= νa−1Ψ−1

trS−1Ψ ≈ a−1pν/(n− p− 1)

a ≈ c/tr(S−1Ψ)

where c depends on n, p and ν. This suggests estimators of the form

ΣH = (S + ctr(S−1Ψ)

Ψ)/(ν + n− p− 1).

In particular, Haff showed that with ν = 2(p+1) and c = (p−1)/(n−p+3),

the estimator

ΣH = 1n+p+1

(S + p−1(n−p−3)tr(S−1Ψ)

Ψ)

has uniformly lower risk than the MLE S/n. Further theoretical work by

Haff, and empirical work by Lin and Perlman [1985], suggest that Stein’s

shrinkage estimator is an approximation to Haff’s empirical Bayes estimator

(or vice-versa).

Exercise 27. For what values of Ψ will Haff’s estimator be orthogonally

equivariant? For such a value of Ψ, write out ΛH(L), the eigenvalue shrink-

age function for ΣH .

Exercise 28. Perfom a simulation study comparing the LQ risks of ΣMLE,ΣS and an orthogonally equivariant version of ΣH . Do this for p = 10,n ∈ 20, 40, 80 and the values of Λ generated from the following R-code:

lambda<-1+4*qbeta((1:p)/(p+1),a,a)

lambda<-lambda/sum(lambda)

for a ∈ 0.01, 1, 100.

56

References

Yasuko Chikuse. Statistics on special manifolds, volume 174 of Lecture

Notes in Statistics. Springer-Verlag, New York, 2003. ISBN 0-387-00160-

3. doi: 10.1007/978-0-387-21540-2. URL http://dx.doi.org/10.1007/

978-0-387-21540-2.

Dipak K. Dey and C. Srinivasan. Estimation of a covariance matrix un-

der Stein’s loss. Ann. Statist., 13(4):1581–1591, 1985. ISSN 0090-5364.

doi: 10.1214/aos/1176349756. URL https://doi.org/10.1214/aos/

1176349756.

L. R. Haff. Empirical Bayes estimation of the multivariate normal covariance

matrix. Ann. Statist., 8(3):586–597, 1980. ISSN 0090-5364.

Wolfgang Karl Hardle and Leopold Simar. Applied multivariate statistical

analysis. Springer, Heidelberg, fourth edition, 2015. ISBN 978-3-662-

45170-0; 978-3-662-45171-7. doi: 10.1007/978-3-662-45171-7. URL http:

//dx.doi.org/10.1007/978-3-662-45171-7.

Peter D. Hoff. A hierarchical eigenmodel for pooled covariance estimation.

J. R. Stat. Soc. Ser. B Stat. Methodol., 71(5):971–992, 2009. ISSN 1369-

7412. doi: 10.1111/j.1467-9868.2009.00716.x. URL http://dx.doi.org/

10.1111/j.1467-9868.2009.00716.x.

Alan Julian Izenman. Modern multivariate statistical techniques. Springer

Texts in Statistics. Springer, New York, 2008. ISBN 978-0-387-78188-

4. doi: 10.1007/978-0-387-78189-1. URL http://dx.doi.org/10.1007/

978-0-387-78189-1. Regression, classification, and manifold learning.

A. T. James. Normal multivariate analysis and the orthogonal group. Ann.

Math. Statistics, 25:40–75, 1954. ISSN 0003-4851.

57

Shang P. Lin and Michael D. Perlman. A Monte Carlo comparison of four

estimators of a covariance matrix. In Multivariate analysis VI (Pittsburgh,

Pa., 1983), pages 411–429. North-Holland, Amsterdam, 1985.

Kantilal Varichand Mardia, John T. Kent, and John M. Bibby. Multivariate

analysis. Academic Press [Harcourt Brace Jovanovich Publishers], London,

1979. ISBN 0-12-471250-9. Probability and Mathematical Statistics: A

Series of Monographs and Textbooks.

Robb J. Muirhead. Aspects of multivariate statistical theory. John Wiley &

Sons, Inc., New York, 1982. ISBN 0-471-09442-0. Wiley Series in Proba-

bility and Mathematical Statistics.

58