Predictive Analytics: It's The Intervention That Matters

-

Upload

health-catalyst -

Category

Health & Medicine

-

view

639 -

download

1

description

Transcript of Predictive Analytics: It's The Intervention That Matters

© 2013 Health Catalyst

It’s The Intervention That Matters

ww©w.2h0e1a3lthHceaatalt

lhysCt.acotamlyst www.

healthcatalyst.com

Predictive Analytics:

David CrockettDale Sanders, Sep 2013

An Email Yesterday

© 2013 Health Catalyst www.healthcatalyst.com

From a CMIO about today’s

webinar Dale,

“One thing that I think would be helpful, generally speaking, is helping executives and operational leaders get a really concrete idea of what value true analytics brings to a healthcare organization. These days the terms ‘predictive analytics’ and ‘big data’are thrown around so frequently and so casually, that for many they have become devoid of actual meaning.”

Audience Poll

© 2013 Health Catalyst www.healthcatalyst.com

Benefits of predictive analytics tohealthcare over the next three years?

Overview

© 2013 Health Catalyst www.healthcatalyst.com

Dale Sanders

• Human interest and color commentary• A selection of stories, concepts and lessons learned,

such as…

• The parallels between “treating” terrorists and treatingpatients using predictive analytics

David Crockett

• A graduate-level crash course• Machine learning, algorithms, feature selection,

classification, tools, etc.

An Oddly Relevant Career Path

US Air Force CIO

•Nuclear warfare operations

TRW

• NSA• Nuclear Command &

Control Counter Threat Program

• Credit risk scoring, traffic routing & optimization

• Strategic Execution Decision Aid © 2013 Health Catalyst

www.healthcatalyst.com

5

Key Messages & Themes

© 2013 Health Catalyst www.healthcatalyst.com

6

1. We are fixated on predictions and interventions of readmission●

●

We should be predicting and intervening on

ADMISSIONS Aim higher…!

2. Predictions without interventions are useless-- and potentiallyworse than useless

3. Correlation does not imply causation

4. Missing data = Poor predictions● Patient outcomes, familial, genomics

5. Some of the most important predictions don’t need a computer

algorithm● Nurses and physicians can tell you

6. When it comes to analytics, take care of the basics, first ● The time will come for predictive analytics

Most Common Causes for Readmission

Robert Wood Johnson Foundation, Feb 2013

1. Patients have no family or other caregiver at home

2. Patients did not receive accurate discharge instructions

3. Patients did not understand discharge instructions

4. Patients discharged too soon

5. Patients referred to outpatient physicians and clinics notaffiliated with the hospital

© 2013 Health Catalyst www.healthcatalyst.com

7

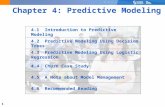

Healthcare Analytics Adoption Model

© 2013 Health Catalyst www.healthcatalyst.com

Level 8 Cost per Unit of Health Payment &Prescriptive Analytics

Contracting for & managing health. Tailoringpatient care based on population outcomes.

Level 7 Cost per Capita Payment & Predictive Analytics

Diagnosis-based financial reimbursement & managing risk proactively

Level 6 Cost per Case Payment& The Triple Aim

Procedure-based financial risk and applying“closed loop” analytics at the point of care

Level 5 Clinical Effectiveness & Accountable Care Measuring & managing evidence based care

Level 4 Automated External Reporting Efficient, consistent production & agility

Level 3 Automated Internal Reporting Efficient, consistent production

Level 2 Standardized Vocabulary & Patient Registries

Relating and organizing the core data

Level 1 Integrated, Enterprise Data Warehouse Foundation of data and technology

Level 0 Fragmented Point Solutions Inefficient, inconsistent versions of the truth

Healthcare Analytics Adoption Model: The Details for Organizational Self-Inspection

Level 8 Cost per Unit of Health Payment & Prescriptive Analytics: Providers Analytic motive expands to wellness management and mass customization of care. Physicians, hospitals, employers, payers and members/patients collaborate to share risk and reward (e.g., financial reward to patients for

healthy behavior). Analytics expands to include NLP of text, prescriptive analytics, and interventional © cision support. Prescriptive analytics are available at the

depoint of care to improve patient specific outcomes based upon population outcomes. Data content expands to include genomic and familial information. The EDW is updated within a few minutes of changes in the source systems.

Level 7 Cost per Capita Payment & Predictive Analytics: Analytic motive expands to address diagnosis-based, fixed-fee per capita reimbursement models. Focus expands from management of cases to collaboration with clinician and payer partners to manage episodes of care, using predictive modeling, forecasting, and risk stratification to support outreach, triage, escalation and referrals. Patients are flagged in registries who are unable or will not participate in care protocols. Data content expands to include external pharmacy data and protocol-specific patient reported outcomes. On average, the EDW is updated within one hour or less of source system changes.

Level 6 Cost per Case Payment & The Triple Aim: The “accountable care organization” shares in the financial risk and reward that is tied to clinical outcomes. At least 50% of acute care cases are managed under bundled payments. Analytics are available at the point of care to support the Triple Aim of maximizing the quality of individual patient care, population management, and the economics of care. Data content expands to include bedside devices and detailed activity based costing. Data governance plays a major role in the accuracy of metrics supporting quality-based compensation plans for clinicians and executives. On average, the EDW is updated within one day of source system changes. The EDW reports organizationally to a C-level executive who is accountable for balancing cost of care and quality of care.

Level 5 Clinical Effectiveness & Accountable Care: Analytic motive is focused on measuring clinical effectiveness that maximizes quality and minimizes waste and variability. Data governance expands to support care management teams that are focused on improving the health of patient populations. Permanent multidisciplinary teams are in-place that continuously monitor opportunities to improve quality, and reduce risk and cost, across acute care processes, chronic diseases, patient safety scenarios, and internal workflows. Precision of registries is improved by including data from lab, pharmacy, and clinical observations in the definition of the patient cohorts. EDW content is organized into evidence-based, standardized data marts that combine clinical and cost data associated with patient registries. Data content expands to include insurance claims. On average, the EDW is updated within one week of source system changes.

Level 4 Automated External Reporting: Analytic motive is focused on consistent, efficient production of reports required for regulatory and accreditation requirements (e.g. CMS, Joint Commission, tumor registry, communicable diseases); payer incentives (e.g. MU, PQRS, VBP, readmission reduction); and specialty society databases (e.g. STS,NRMI, Vermont-Oxford). Adherence to industry-standard vocabularies is required. Clinical text data content is available for simple key word searches. Centralized data governance exists for review and approval of externally released data.

Level 3 Automated Internal Reporting: Analytic motive is focused on consistent, efficient production of reports supporting basic management and operation of the healthcare organization. Key performance indicators are easily accessible from the executive level to the front-line manager. Corporate and business unit data analysts meet regularly to collaborate and steer the EDW. Data governance expands to raise the data literacy of the organization and develop a data acquisition strategy for Levels 4 and above.

Level 2 Standardized Vocabulary & Patient Registries: Master vocabulary and reference data identified and standardized across disparate source system content in the data warehouse. Naming, definition, and data types are consistent with local standards. Patient registries are defined solely on ICD billing data. Data governance forms around the definition and evolution of patient registries and master data management.

Level 1 Integrated, Enterprise Data Warehouse: At a minimum, the following data are co-located in a single data warehouse, locally or hosted: HIMSS EMR Stage 3 data, Revenue Cycle, Financial, Costing, Supply Chain, and Patient Experience. Searchable metadata repository is available across the enterprise. Data content includes insurance claims, if possible. Data warehouse is updated within one month of changes in the source system. Data governance is forming around the data quality of source systems. The EDW reports organizationally to the CIO.

Level 0 Fragmented Point Solutions: Vendor-based and internally developed applications are used to address specific analytic needs as they arise. The fragmented Point Solutions are neither co-located in a data warehouse nor otherwise architecturally integrated with one another. Overlapping data content leads to multiple versions of analytic truth. Reports are labor intensive and inconsistent. Data governance is non-existent.

Audience Poll

© 2013 Health Catalyst www.healthcatalyst.com

10

Analytics Adoption Model:At what level does your organization consistently and

reliably function?

Challenge of PredictingAnything Human

11

Sampling Rate vs. Predictability

The sampling rate and volume of data in an experiment is directly proportional to the predictability

of the next experiment

© 2013 Health Catalyst www.healthcatalyst.com

12

Can We Learn From Nuclear WarfareDecision Making?

“Clinical” observations

• Satellites and radar indicate an enemy launch

Predictive “diagnosis”

• Are we under attack or not?

Decision making timeframe

• <4 minutes to first impact when enemy subs launch from the east coast of the US

“Treatment” & intervention

• Launch on warning or not?

© 2013 Health Catalyst www.healthcatalyst.com

13

Desired “Outcomes”

1. Retain US society as described in the Constitution

2. Retain the ability to govern & command US forces

3. Minimize loss of US lives

4. Minimize destruction of US infrastructure

5. Achieve all of this as quickly as possible with minimal

expenditure of US military resources

© 2013 Health Catalyst www.healthcatalyst.com

14

Where And How Can A Computer Help?

© 2013 Health Catalyst www.healthcatalyst.com

15

Reduce variability in decision making & improve outcomes

16

Lessons For Healthcare

© 2013 Health Catalyst www.healthcatalyst.com

17

• Humans didn’t trust predictive models when the decision making timeframe was compressed and the consequences of a bad decision were extreme

• At present in healthcare, predictive analytics are easier to apply in slowly changing situations, e.g., chronic condition management, elective procedures, ventilator weaning, glucose management in the ER, antibiotic protocols

• Subjective human issues were not well-modeled•

•

The “Rogue Commander” scenario

We need to at least try to quantify the “difficult patient”

• Without outcomes data, it’s all guesswork• Thankfully, we don’t have much outcomes data related to

nuclearwarfare

That shouldn’t be the case in healthcare•

Quantifying the Atypical Patient

© 2013 Health Catalyst www.healthcatalyst.com

18

Not all patients can participate in a protocol

At Northwestern, we found that 30% of patients fell intoone or more of these categories

1. Cognitive inability

2. Economic inability

3. Physical inability

4. Geographic inability

5. Religious beliefs

6. Contraindications to the protocol

7. Voluntarily non-compliant

Accounting For These Patients

© 2013 Health Catalyst www.healthcatalyst.com

19

30% of your patients will have to be treated and/orreached in a unique way

• Your predictive algorithms must be adjusted these attributes, especially for readmission

• These patients are a unique numerator in the overalldenominator of patients under accountable care

• You need a data collection & governance strategy for these patient attributes

• You need a different interventional strategy for each ofthe 7 categories

• Your physician compensation model must be adjusted for these patient types

Sortie Turnaround TimesThe Goal: Predictable, fast turnaround of aircraft to a successful battle

© 2013 Health Catalyst www.healthcatalyst.com

20

Patient Fight Path Profiler

21

The Goal: Predictable, fast turnaround of patients to a good life

Healthcare As a Battle Field…??The Order of Battle and the Order of Care Demand

forecasting: What do we need and when?

22

NSA, Terrorists, and PatientsThe Odd Parallels of Terrorist Registries and Patient Registries

© 2013 Health Catalyst www.healthcatalyst.com

23

Predicting Terrorist Risk

Risk = P(A) × P(S|A) × C

• Probability of Attack

• Probability of Success if Attack occurs

• Consequences of Attack (dollars, lives, national psyche, etc.)

• What are the costs of intervention and mitigation?• Do they significantly outweigh the Risk?

© 2013 Health Catalyst www.healthcatalyst.com

24

Predicting Patient Risk

© 2013 Health Catalyst www.healthcatalyst.com

25

We Know the Probabilities

What are the consequences?What are the strategies and costs to intervene?

26

Lessons For Healthcare

© 2013 Health Catalyst www.healthcatalyst.com

27

•

•

Multiple predictive models that “vote” are more accurate than single models

In the absence of data, and until more data is available, multiple expert opinion isbetter than nothing for predicting outcomes and managing risk

• “Wisdom of crowds”

• Backward chaining predictive models (supervised learning) are the most accurate, but are also inflexible and fragile

• How did this person become a terrorist? What was their pre-terrorist data profile?

Friends, family, and what you read are MAJOR predictors of terror risks•

• We don’t collect familial data in the course of care

If you associate with more than one terrorist group, even greater predictor

Parallels to a comorbidity

•

•

• Even when we can predict accurately, the cultural willingness and ability to interveneare incredibly difficult and can be very controversial

• Armed drone “assassinations”, TSA profiling

BRCA genes and prophylactic mastectomies•

More Reading

© 2013 Health Catalyst www.healthcatalyst.com

28

1. Eliciting Probabilities from Experts. Advances in Decision Analysis. Cambridge, UK: Cambridge University Press, 2007. Hora S. ,Edwards W, Miles R, Jr., von Winterfeldt D (eds).

2. Estimating Terrorism Risk. RAND Center for Terrorism Risk Management Policy, 2003. Willis H, Morral A, Kelly T, Medby J.

3. Probabilistic Risk Analysis and Terrorism Risk. Risk Analysis, Vol. 30, No. 4, 2010. Barry Charles Ezell, Steven P. Bennett, Detlof von Winterfeldt, John Sokolowski, and Andrew J. Collins.

Suggestive Analytics©

© 2013 Health Catalyst www.healthcatalyst.com

Surround the decision making environment withsuggestions, based on analytic data

● Much easier than predicting● Leverages “Wisdom of Crowds” data

Worth reading● “Nudge: Improving Decisions About Health, Wealth, and

Happiness”

© 2013 Health Catalyst www.healthcatalyst.com

30

Closed Loop Analytics:The Triple Aim

31

The Antibiotic Assistant

© 2013 Health Catalyst www.healthcatalyst.com

32

• Predicting the efficacy and costs of antibioticprotocols for inpatients

Dave Claussen, Scott Evans

AntibioticProtocol

Dosage Route Interval PredictedEfficacy

AverageCost/Patient

Option 1 500mg IV Q12 98% $7,256

Option 2 300mg IV Q24 96% $1,236

Option 3 40mg IV Q6 90% $1,759

The Antibiotic Assistant Impact

© 2013 Health Catalyst www.healthcatalyst.com

33

Complications declined 50%

Avg # doses declined from 19 -> 5.3

The replicable and bigger story● Antibiotic cost per treated patient: $123 -

> $52

● By simply displaying the cost to physicians

Stories of Correlation vs. Causation

© 2013 Health Catalyst www.healthcatalyst.com

34

• The production of butter in Bangladesh and the S&P 500

• David Leinweber, UC Berkeley

• TRW Credit Reporting (Experian)• NSA-developed predictive algorithms indicated Black (African

American) borrowers were higher risk

• Sociologists on staff explained the bigger picture

• Women, Hormone Replacement Therapy, and Cardiovascular Disease

• Women with HRT had lower CVD• Women with HRT were from higher income levels

and couldafford to exercise

Audience Poll

© 2013 Health Catalyst www.healthcatalyst.com

35

How confident are you that your organization is prepared to combine the technology of predictive

analytics with the processes of intervention?

Wrap-Up

© 2013 Health Catalyst www.healthcatalyst.com

36

• Vendors are in the EXTREME hype cycle ofpredictive analytics

• Without outcomes data, we are largely stuck with predicting the obvious

• Take care of the basics of analytics, first

• The human “mathematical model” is years away

• Suggestive analytics is easier

• Intervening to reduce risk is the hard part• Predicting is the easy part• Predicting without intervening is ripe for lawyers

Many thanks

© 2013 Health Catalyst www.healthcatalyst.com

37

• Contact information• [email protected]

• @drsanders

• www.linkedin.com/in/dalersanders

© 2013 Health Catalyst

ww©w.2h0e1a3lthHceaataltlhy

sCt.acotamlys3t 8

www.healthcatalyst.com

David Crockett:

The Graduate Crash Course

Objectives

Topics we’ll cover today include:

Machine Learning Overview

Software Examples

(Open Source, Commercial)

Prediction Modeling

Demo

4 Insights toImplementatio

n

© 2013 Health Catalyst www.healthcatalyst.com

Machine Learning 101

A scientific discipline concerned with algorithm design and development that allows computers to learn based on data.

A major focus of machine learning research is to automatically learn to recognize complex patterns and make intelligent decisions based on data.

© 2013 Health Catalyst www.healthcatalyst.com

Machine Learning 102

Extracting useful information from large machine-readable data sets is a problem faced by people in nearly every area of commerce, manufacturing, government, academic discipline and science

Machine learning has a wide range of applications:

© 2013 Health Catalyst www.healthcatalyst.com

Machine learning algorithms are organized into a taxonomy, based on the desired outcome of the algorithm.

Supervised Learning Unsupervised Learning

Generates a function that mapsinputs to desired outputs

Models a set of inputs: labeledexamples are not available

Reinforcement Learning

Learns how to act given an observation of the world

TransductionTries to predict new outputs

based on training inputs, training outputs, and test inputs

Algorithms

© 2013 Health Catalyst www.healthcatalyst.com

The Modeling Process

Step 1

DefineProblem

GatherData

Run/EvaluateModels

INITIALDATASET

Step 2

SelectModel

TestModel

VALIDATIONDATASET

Step 3

Apply ModelRun

Prediction

TESTDATASET

© 2013 Health Catalyst www.healthcatalyst.com

A Few Definitions…

OUTPUT

• Outcome• Prediction• Forecast• Trend

© 2013 Health Catalyst www.healthcatalyst.com

INPUT

• Features• Attributes• Variables• Class• Labels

FEATURE SELECTION

Selecting a subset of relevant variables (features)for use in construction a model

CLASSIFICATION

Use an object's characteristics (features) to identify which class (or group) it belongs to

Feature Selection

PCA● principal components analysis

ReliefFAttributeEval● recursive feature elimination

CfsSubsetEval● correlation-based feature subset

selection

ChiSquaredAttributeEval● chi-squared statistic with respect to class

© 2013 Health Catalyst www.healthcatalyst.com

Know Your Data…

It is crucial to understand the data set being used

© 2013 Health Catalyst www.healthcatalyst.com

Insight #1

Don’t confuse more datawith more insight.

© 2013 Health Catalyst www.healthcatalyst.com

Specific Improves Accuracy

In our hands:

Generic ReadmissionsPredictor ~ 79% PPV

Heart FailureReadmission Predictor~ 91% PPV

© 2013 Health Catalyst www.healthcatalyst.com

Classification

Rules

Linear (Regression)

Trees

Neural Networks

Support Vector

Machine Bayes

© 2013 Health Catalyst www.healthcatalyst.com

Classification – Rules Based

Rulesgender=male AND age>40: getting a little thin on top ~ 65%

gender=male AND age>40 AND bald grandpa OR uncle ~ 82%

Linear (Regression)

Trees

Neural Networks

Support Vector

Machine Bayes

© 2013 Health Catalyst www.healthcatalyst.com

Classification – Regression

Rules

Linear (Regression)male(1)x(41.8) + age(44)x(0.607): getting thin on top ~ 68%

male(1)x(41.8)+age(44)x(0.607)+uncle(19.2): going bald ~ 87%

Trees

Neural Networks

Support Vector

Machine Bayes

© 2013 Health Catalyst www.healthcatalyst.com

Classification – Tree Based

Rules

Linear (Regression)

Trees

Neural Networks

Support Vector

Machine Bayes

© 2013 Health Catalyst www.healthcatalyst.com

Insight #2

Don’t confuseinsight with value.

© 2013 Health Catalyst www.healthcatalyst.com

The Cost of Readmissions

© 2013 Health Catalyst www.healthcatalyst.com

Medicare rehospitalization within 30 days after discharge: $17.4 billion

Jencks, NEJM 2009

87%

Prediction In Context

© 2013 Health Catalyst www.healthcatalyst.com

Data Warehouse Synergy

Prediction can be more powerful “in context.”

Rothman Index, an early wellness metric.

© 2013 Health Catalyst www.healthcatalyst.com

System architecture and data flow

In a data warehouseenvironment…

• Incorporate additionalsite specific data

• Filter across multipledata sources

• Available to any userand any application

• Show when and whereneeded

Evaluating Performance

sensitivity or true positive rate (TPR, hit rate, recall)

TPR = TP / P = TP / (TP + FN)

specificity (SPC or True Negative Rate)SPC = TN / N = TN / (FP + TN) = 1 − FPR

positive predictive value (PPV, precision)

PPV = TP / (TP + FP)

true positive (TP, hit)

true negative (TN, correct rejection)

false positive (FP, false alarm, Type I error)

false negative (FN, miss, Type II error)

Sensitivity measures the proportion of actual positives which are correctly identified

Specificity measures the proportion of negatives which are correctly identified

© 2013 Health Catalyst www.healthcatalyst.com

Always a trade off…

However, there is always a trade-off between sensitivityand specificity…

For example, airport security scanners can be set to trigger on low-risk items like belt buckles and keys…

(very sensitive, but not too specific)

This trade-off is often represented graphically as a

receiver operating characteristic (ROC) curve.© 2013 Health Catalyst

www.healthcatalyst.com

ROC Curves

The trade-off is between: Sensitivity vs. Specificity…

© 2013 Health Catalyst www.healthcatalyst.com

Area Under the Curve (AUC)

Machine learning often uses this AUC statistic for model comparison. (~ c statistic)

PPV (precision) is a common metric that’s also used.

PPV = TP / (TP + FP)

Whiting et al. BMC Med Res Methodol. 2008 Apr 11;8:20

Insight #3

Don’t overestimate the ability to interpret the data.

© 2013 Health Catalyst www.healthcatalyst.com

Open Source Tools

Many open source tools exist...

Waffles

http://jmlr.org/mloss/

© 2013 Health Catalyst www.healthcatalyst.com

Open Source Example

Various implementations of a given approach can be found.For example, random forest classification (circa 2001):

Original implementation by Leo Breiman and Adele Cutler (Fortran)

ALGLIB (library, C++, C#, Pascal, Visual Basic,

Python) Orange (toolkit, C++, with Python interface)

fast-random-forest

(Java) RandomForest

(Weka) Milk (toolkit,

Python) treelearn

(Python)http://oz.berkeley.edu/users/breiman/randomforest2001.pdf

© 2013 Health Catalyst www.healthcatalyst.com

Open Source Standards

Predictive Model Markup Language (PMML), is an industry standard used to represent numerous predictive modeling techniques.

Such as:

• Association Rules

• Cluster Models

• Neural Networks

• Decision Trees

© 2013 Health Catalyst www.healthcatalyst.com

Commercial Tools

Many commercial tools also exist...

IBM SPSS

GE PredictivityThe Forrester Wave™: Predictive Analytics Solutions, Q1

2013© 2013 Health Catalyst

www.healthcatalyst.com

Enhancing Commercial Tools

Leveraging the enterprise warehouse…

IBM SPSS

GE Predictivity

Late-BindingTM Data Bus Data Acquisition and

Storage Metadata Engine© 2013 Health Catalyst

www.healthcatalyst.com

Insight #4

Don’t underestimate thechallenge of implementation.

© 2013 Health Catalyst www.healthcatalyst.com

Predictive Modeling Demo

Supervised learning with a known class label (outcome)

• Simple example: Pima Indian diabetes

Several standard ML techniques have been built into a software "workbench" called Waikato Environment for Knowledge Analysis (WEKA).

© 2013 Health Catalyst www.healthcatalyst.com

Weka File Explorer

1

2

3

© 2013 Health Catalyst www.healthcatalyst.com

Weka Classify – Zero Rules

1

2

3

© 2013 Health Catalyst www.healthcatalyst.com

Weka Classify – One Rule

Test mode:10-fold cross-validation=== Classifier model (full training set)===

plas:< 114.5 -> tested_negative< 115.5 -> tested_positive< 133.5 -> tested_negative< 135.5 -> tested_positive< 144.5 -> tested_negative< 152.5 -> tested_positive< 154.5 -> tested_negative>= 154.5 ->

tested_positive(585/768 instances correct)

Time taken to build model: 0.05 s

1

© 2013 Health Catalyst www.healthcatalyst.com

Weka Classify – JRIP

Test mode:10-fold cross-validation=== Classifier model (full training set)===JRIP rules:===========(plas >= 132) and (mass >= 30) => class=tested_positive (182.0/48.0) (age >= 29) and (insu >= 125) and (preg <= 3) => class=tested_positive (19.0/4.0)(age >= 31) and (pedi >= 0.529) and (preg >= 8) and (mass >= 25.9) => class=tested_positive (22.0/5.0)=> class=tested_negative(545.0/102.0)

Number of Rules : 4

Time taken to build model: 0.06 s

1

© 2013 Health Catalyst www.healthcatalyst.com

Weka Classify – Regression

Test mode:=== Classif===Logistic Re parameter o Odds Ratio Variable========preg plas pres skin insu mass pedi age

Time taken

10-fold cross-validationier model (full training set)

gression with ridgef 1.0E-8

s...tested_negative

==================== 0.88410.96541.01340.99941.00120.91420.38860.9852

to build model: 0.06 s

1

© 2013 Health Catalyst www.healthcatalyst.com

Machine Learning Models

Step 1

DefineProblem

GatherData

Run/EvaluateModels

INITIALDATASET

Step 2

SelectModel

TestModel

VALIDATIONDATASET

Step 3

Apply ModelRun

Prediction

TESTDATASET

© 2013 Health Catalyst www.healthcatalyst.com

Lessons Learned:

● Lesson #1:Don’t confuse more data with more insight

● Lesson #2:Don’t confuse insight with value

● Lesson #3:Don’t overestimate the ability to interpret the data

● Lesson #4:Don’t underestimate the challenge of implementation

Predictive Analytics: Insights toImplementation/Intervention

© 2013 Health Catalyst www.healthcatalyst.com

From David Shaywitz, Forbes.com

Thank you!

© 2013 Health Catalyst www.healthcatalyst.com

Questions and Answers

Next WebinarsHealthcare Reform: Implications for Your Health SystemBrian Ahier, Healthcare Evangelist

Date: October 8, 2013 from 1:00 PM - 2:00 PM ET

Widely recognized as one of healthcare's most knowledgeable speakers on healthcare policy, Brian Ahier will provide an in-depth look at current healthcare reform and more specifically the implications of President Barack Obama's 2010 legislative efforts, namely the Patient Protection and Affordable Care Act, also referred to as 'Obamacare’.

Population Health FundamentalsDr. David A. Burton, MD, Executive Chairman

Date: October 9, 2013 from 1:00 PM - 2:00 PM ET

Dr. Burton, former Intermountain Executive and current Executive Chairman of the Board of Health Catalyst, will lead a webinar Oct. 9 on the paradigm shift in healthcare analytics from a focus on inpatient (acute care) to a focus on the continuum of care. The shift is being driven by the linked imperatives of Population Health Management and Accountable Care Organizations and other shared accountability arrangements.

© 2013 Health Catalyst www.healthcatalyst.com