P-values and their limitations & Type I and Type II errors

Stats Club 5, Marnie Brennan

What do you know about P-values?

References

• Petrie and Sabin – Medical Statistics at a Glance: Chapters 17 & 18 (good)

• Petrie and Watson – Statistics for Veterinary and Animal Science: Chapter 6 (good)

• Kirkwood and Sterne – Essential Medical Statistics: Chapters 8 & 35

• Dohoo, Martin and Stryhn – Veterinary Epidemiologic Research: Chapters 2 & 6

Interesting reads!

• Sterne, JAC and Davey Smith, G (2001) Sifting the evidence: what's wrong with significance tests? British Medical Journal, Vol. 322, 226-231. (good)

• Altman, DG and Bland, JM (1995) Absence of evidence is not evidence of absence. British Medical Journal, Vol. 311, 485.

• Nakagawa, S and Cuthill, IC (2007) Effect size, confidence interval and statistical significance: a practical guide for biologists. Biological Reviews, Vol. 82, 591-605. (I've not read this, but it has been recommended)

Differences between groups

• There are many different tests to measure the difference between two or more groups of subjects/animals/patients
– We will cover these individually in subsequent weeks

• How do we know whether the groups are truly different from each other?
– i.e. is there truly a difference between the groups or not?

Hypothesis (significance) testing

• You have a scientific question you want to answer

• You construct a hypothesis to test your question

• You have to have an alternative hypothesis to test it against

Null and alternative hypotheses

• Null hypothesis – No difference between groups/no association between variables
– Sometimes written as H0

• Alternative hypothesis – There is a difference between groups/an association between variables
– Sometimes written as H1

• These hypotheses relate to the population of interest, not your sample of the population

What is a P-value?

• We do our study, run our statistical tests, and come up with a P-value (P for probability)

• ‘The P-value is the probability of obtaining our results or something more extreme, if the null hypothesis is true’ (Petrie and Sabin)

• ‘The probability of getting a difference at least as big as that observed if the null hypothesis is true’ (Kirkwood and Sterne)

• ‘The chance of getting the observed effect (or one more extreme) if the null hypothesis is true’ (Petrie and Watson)
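To make the Kirkwood and Sterne definition concrete, here is a minimal sketch of an exact two-sided permutation test in Python. The permutation test is just one way of getting a P-value (it is not the method used in the books above), and the function name and data are hypothetical. The idea: if the null hypothesis is true, the group labels are interchangeable, so we count how many relabellings of the animals give a difference in means at least as big as the one observed.

```python
from itertools import combinations
from statistics import mean


def permutation_p_value(group_a, group_b):
    """Exact two-sided permutation test for a difference in means (hypothetical helper)."""
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    observed = abs(mean(group_a) - mean(group_b))
    at_least_as_extreme = 0
    total = 0
    # Try every way of labelling n_a of the pooled animals as "group A"
    for idx in combinations(range(len(pooled)), n_a):
        chosen = set(idx)
        a = [pooled[i] for i in idx]
        b = [pooled[i] for i in range(len(pooled)) if i not in chosen]
        # Small tolerance so floating-point rounding cannot hide a tie
        if abs(mean(a) - mean(b)) >= observed - 1e-12:
            at_least_as_extreme += 1
        total += 1
    return at_least_as_extreme / total


# Hypothetical measurements from two small groups
treated = [12.1, 14.3, 13.8, 15.0, 14.6]
control = [11.2, 12.0, 10.9, 12.5, 11.8]
print(permutation_p_value(treated, control))
```

With these made-up numbers, 4 of the 252 possible relabellings give a difference at least as large as the observed one, so P = 4/252 (about 0.016): a difference this big would be fairly unusual if the null hypothesis were true.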

What does this mean??!!

• Basically, the probability of getting results like those from your study if the null hypothesis is true!
– If the difference between our groups is large (relative to the variability in the data)
• The P-value would be small: results like ours would be unlikely if the null hypothesis were true, so there is evidence against it (and you usually reject the null hypothesis)
– If the difference between our groups is small
• The P-value would be large: results like ours could easily arise even if the null hypothesis were true, so there is not enough evidence to reject the null hypothesis

• It is bad practice to say you accept the null hypothesis!
– ‘Absence of evidence is not evidence of absence’ (Altman and Bland)

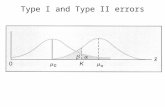

A value of the test statistic which gives P>0.05

A value of the test statistic which gives P<0.05

Significant at the 5% level

Not significant at the 5% level
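As a rough illustration, assume the test statistic follows a standard normal distribution when the null hypothesis is true (as it does for a z-test). The sketch below turns such a statistic into a two-sided P-value and checks it against a chosen significance level; the function names are hypothetical. For this kind of statistic, values beyond about ±1.96 give P<0.05.

```python
import math


def two_sided_p_from_z(z):
    """Two-sided P-value for a statistic assumed to be N(0, 1) under H0.

    Equals 2 * (1 - Phi(|z|)), written with erfc for accuracy at small P.
    """
    return math.erfc(abs(z) / math.sqrt(2))


def significant_at(p, alpha=0.05):
    """True if P is below the pre-chosen significance level."""
    return p < alpha


for z in (0.5, 1.0, 1.5, 2.5, 3.0):
    p = two_sided_p_from_z(z)
    print(f"z = {z:.1f}  P = {p:.3f}  significant at the 5% level: {significant_at(p)}")
```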

Using P-values

• Usually you set your ‘significance’ level before you collect your data, and this should be stated in the methods
– e.g. ‘We set the significance level at 0.01 for our analysis’ (i.e. P<0.01 was regarded as significant)

• P<0.05 is a fairly arbitrary level (one man's ponderings: R.A. Fisher's!)
– Read the article by Sterne and Davey Smith
– Bottom line: the smaller the P-value, the more evidence against the null hypothesis

A sliding scale...

How does this fit with what you do or have seen/experienced?

P-value etiquette!

• Always quote the exact P-value
– e.g. P=0.032, not P<0.05

• Display P-values to two significant figures
– e.g. P=0.032 or P=0.17

• When P-values become very small, it is acceptable to display them as P<0.001
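The etiquette above can be turned into a small formatting helper. This is a minimal sketch with a hypothetical function name; it assumes the 0.001 cut-off from the slide and uses Python's `#.2g` format so that trailing zeros are kept (0.2 is shown as 0.20, i.e. two significant figures).

```python
def format_p_value(p):
    """Format a P-value: exact to two significant figures, or P<0.001 if very small."""
    if not 0 <= p <= 1:
        raise ValueError("a P-value must lie between 0 and 1")
    if p < 0.001:
        return "P<0.001"
    # '#' keeps trailing zeros, so 0.2 becomes '0.20' rather than '0.2'
    return f"P={p:#.2g}"


for p in (0.03214, 0.1712, 0.2, 0.0004):
    print(format_p_value(p))
```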

Limitations of using just P-values

• By just using P-values, you lose a lot of information
– A P-value doesn't tell you about the magnitude of the effect observed

• Often researchers only talk about P-values, and nothing else
– I am certainly guilty of this!

• It is also important to determine whether your result is biologically or clinically important (not only that it is ‘significant’); if you just use a number to interpret outcomes, it may not ‘mean’ anything

• You can use confidence intervals to quantify the effect of interest
– They give you a range of plausible values for the difference between your groups
– This is our next Stats Club session
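To see why a P-value alone says nothing about magnitude, here is a minimal sketch comparing two hypothetical studies. It assumes a z-test for a difference in means with a known common SD and equal group sizes (a simplification; the function name and numbers are made up for illustration), and reports an approximate 95% confidence interval alongside P.

```python
import math


def z_test_two_means(diff, sd, n_per_group):
    """Two-sided z-test and approximate 95% CI for a difference in means.

    Assumes a known common SD and equal group sizes (illustration only).
    """
    se = sd * math.sqrt(2 / n_per_group)
    z = diff / se
    p = math.erfc(abs(z) / math.sqrt(2))
    ci = (diff - 1.96 * se, diff + 1.96 * se)
    return p, ci


# Hypothetical: a tiny difference in a huge study vs a large difference in a small one
for diff, n in ((0.5, 10_000), (5.0, 10)):
    p, (low, high) = z_test_two_means(diff, sd=10.0, n_per_group=n)
    print(f"difference = {diff}, n per group = {n}: P = {p:.2g}, 95% CI {low:.2f} to {high:.2f}")
```

The first study is highly ‘significant’ (P about 0.0004) even though the difference may be too small to matter biologically; the second is not significant (P about 0.26), yet its wide interval is compatible with both no difference and a large one.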

Interpretation of research

Errors in hypothesis testing

• Our decision to reject the null hypothesis or not can be wrong sometimes

• Petrie and Watson

Type I error

• When we reject the null hypothesis and it is actually true
– Affected by:

• Significance level chosen (this becomes the maximum chance of making a Type I error)
– e.g. if the significance level chosen is 0.05 and the null hypothesis is true, there is a 1 in 20 chance that a test will be significant by chance; if 0.01 is chosen, the chance is 1 in 100

• Number of comparisons – the greater the number of comparisons carried out, the more likely you are to get a ‘positive’ result that is spurious
– Comes back to whether the result is biologically or clinically important
– Can adjust for this in post-hoc analyses, e.g. with a Bonferroni correction
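Both points can be checked numerically. The sketch below (hypothetical function names, simulated data) first draws both groups from the same population, so the null hypothesis is true and every ‘significant’ z-test is a Type I error; the rate comes out close to the chosen significance level. It then shows the chance of at least one Type I error across k comparisons, assuming the tests are independent, and what a Bonferroni correction (testing each comparison at alpha/k) does to it.

```python
import math
import random


def simulate_type_i_rate(alpha=0.05, n_per_group=20, n_sims=10_000, seed=1):
    """Proportion of z-tests that are 'significant' when H0 is true.

    Both groups come from the same N(0, 1) population, so every
    significant result is a Type I error.
    """
    rng = random.Random(seed)
    se = math.sqrt(2 / n_per_group)  # SD of 1 in each group, assumed known
    false_positives = 0
    for _ in range(n_sims):
        a = [rng.gauss(0, 1) for _ in range(n_per_group)]
        b = [rng.gauss(0, 1) for _ in range(n_per_group)]
        z = (sum(a) / n_per_group - sum(b) / n_per_group) / se
        if math.erfc(abs(z) / math.sqrt(2)) < alpha:
            false_positives += 1
    return false_positives / n_sims


def familywise_error(alpha, k):
    """Chance of at least one Type I error across k independent tests."""
    return 1 - (1 - alpha) ** k


def bonferroni_alpha(alpha, k):
    """Per-test significance level after a Bonferroni correction."""
    return alpha / k


print(simulate_type_i_rate())  # close to 0.05
print(familywise_error(0.05, 20))  # about 0.64 for 20 comparisons
print(familywise_error(bonferroni_alpha(0.05, 20), 20))  # just under 0.05
```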

Type II error

• We don't reject the null hypothesis when it is actually false
– Affected by:

• Small sample sizes – more chance of getting Type II errors

• Precision of the measurements – if measurements are precise, there is less chance of getting Type II errors

• Effect of interest – the larger the difference between the groups, the less likely that a Type II error will occur
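The three factors above can be seen in an approximate calculation of the Type II error rate (often called beta). This is a minimal sketch, assuming a two-sided z-test comparing two means with a known common SD and equal group sizes; measurement precision is represented by the SD (less precise measurements mean a larger SD), and the helper name and numbers are hypothetical.

```python
import math
from statistics import NormalDist


def type_ii_error(diff, sd, n_per_group, alpha=0.05):
    """Approximate chance of a Type II error for a two-sided z-test of two means.

    Assumes a known common SD and equal group sizes; the tiny chance of
    rejecting in the 'wrong' tail is ignored.
    """
    se = sd * math.sqrt(2 / n_per_group)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    power = NormalDist().cdf(abs(diff) / se - z_crit)
    return 1 - power


# Hypothetical scenarios: vary one factor at a time
print("small vs larger sample:", type_ii_error(5, 10, 10), type_ii_error(5, 10, 50))
print("less vs more precise:  ", type_ii_error(5, 15, 20), type_ii_error(5, 10, 20))
print("small vs large effect: ", type_ii_error(4, 10, 20), type_ii_error(8, 10, 20))
```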

Type I and Type II error - relationship

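• These two errors are related: for a given sample size, as the chance of one decreases, the chance of the other generally increases (e.g. a stricter significance level means fewer Type I errors but more Type II errors)

• Bottom line – if your study design is correct, you have carried out a sample size calculation and you have recruited the right number of subjects, then your study will have sufficient power, so the chance of a Type II error will be acceptably low while the chance of a Type I error stays at the significance level you chose
– Sample size calculations and power will be discussed in later Stats Club sessions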
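Here is a minimal sketch of that trade-off, under the same simplifying assumptions as a two-sided z-test with a known common SD and equal group sizes (the helper name and study numbers are hypothetical). For a fixed sample size, tightening the significance level (fewer Type I errors) raises the Type II error rate; recruiting more animals lowers the Type II error rate at any chosen level.

```python
import math
from statistics import NormalDist


def type_ii_error(diff, sd, n_per_group, alpha):
    """Approximate Type II error rate for a two-sided z-test of two means."""
    se = sd * math.sqrt(2 / n_per_group)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    return 1 - NormalDist().cdf(abs(diff) / se - z_crit)


# Hypothetical study: true difference of 5 units, SD of 10 units
for n in (30, 100):
    for alpha in (0.10, 0.05, 0.01, 0.001):
        beta = type_ii_error(5.0, 10.0, n, alpha)
        print(f"n per group = {n:3d}  alpha = {alpha:<5}  beta = {beta:.2f}")
```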

Summary

• Set your study up right to decrease the chances of Type I and Type II errors

• Use P-values but also CIs (confidence intervals) to get an idea of the magnitude of the difference between groups

• Set your significance level BEFORE you start your data collection, and don’t just go automatically for P<0.05!

• Display your P-values correctly!

Next month

• Confidence intervals... beware!