OPERATING SYSTEMS

IO SYSTEMS

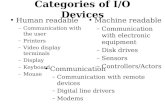

Categories of I/O Devices

• Human readable
  – Used to communicate with the user
  – Printers
  – Video display terminals
    • Display
    • Keyboard
    • Mouse

Categories of I/O Devices

• Machine readable
  – Used to communicate with electronic equipment
  – Disk and tape drives
  – Sensors
  – Controllers
  – Actuators

Categories of I/O Devices

• Communication
  – Used to communicate with remote devices
  – Digital line drivers
  – Modems

A Typical PC Bus Structure

Secondary storage

• Secondary storage typically:
  – is anything that is outside of “primary memory”
• Characteristics:
  – it’s large: 750-4000 GB
  – it’s persistent: data survives power loss
  – it’s slow: milliseconds to access
    • why is this slow??
  – it does fail, if rarely
    • big failures (drive dies; MTBF ~3 years)
      – if you have 100K drives and MTBF is 3 years, that’s 1 “big failure” every 15 minutes!
    • little failures (read/write errors, one byte in 10^13)
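The "one big failure every 15 minutes" figure can be checked with a few lines of arithmetic. This is only a sketch: it assumes drives fail independently at a constant rate of one failure per MTBF, and the function name is made up for illustration.

```python
MINUTES_PER_YEAR = 365 * 24 * 60

def minutes_between_failures(num_drives, mtbf_years):
    """Expected gap between drive failures across a whole fleet.

    Assumes independent drives, each failing on average once per MTBF.
    """
    failures_per_year = num_drives / mtbf_years
    return MINUTES_PER_YEAR / failures_per_year

gap = minutes_between_failures(100_000, 3)
print(f"{gap:.1f} minutes between failures")  # about 15.8
```

The result is close to a quarter of an hour, which is where the slide's rounded figure comes from.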

Memory hierarchy

• Each level acts as a cache of lower levels
• Typical capacity and access time at each level:
  – CPU registers: 100 bytes, < 1 ns
  – L1 cache: 32 KB, 1 ns
  – L2 cache: 256 KB, 4 ns
  – Primary Memory: 1 GB, 60 ns
  – Secondary Storage: 1 TB, 10 ms
  – Tertiary Storage: 1 PB, 1 s-1 hr

Memory hierarchy: distance analogy

• CPU registers: “My head” (seconds)
• L1 cache: “This room” (1 minute)
• L2 cache: “This building” (10 minutes)
• Primary Memory: Olympia (1.5 hours)
• Secondary Storage: Pluto (2 years)
• Tertiary Storage: Andromeda (2,000 years)

Mass-Storage Structure

• Storage is accessed through I/O ports talking to I/O buses
• SCSI itself is a bus, with up to 16 devices on one cable; the SCSI initiator requests an operation and SCSI targets perform the tasks
• Fibre Channel (FC) is a high-speed serial architecture
  – Can be a switched fabric with a 24-bit address space, the basis of storage area networks (SANs) in which many hosts attach to many storage units
• Serial ATA (SATA): current standard for home and medium-sized servers
  – Advantage is low cost with “reasonable” performance

SSD performance: reads

• Reads
  – unit of read is a page, typically 4 KB
  – today’s SSDs can typically handle 10,000 – 100,000 reads/s
    • 0.01 – 0.1 ms read latency (50-1000x better than disk seeks)
    • 40-400 MB/s read throughput (1-3x better than disk sequential throughput)
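The latency and throughput ranges above follow from the read rate and the page size. The sketch below assumes a 4096-byte page and one read in flight at a time (so latency is simply the inverse of the rate); real SSDs overlap many reads, so treat this as a consistency check rather than a device model.

```python
PAGE_BYTES = 4096  # assumed size of a 4 KB page

def read_latency_ms(reads_per_second):
    """Per-read latency if reads are issued strictly one after another."""
    return 1000.0 / reads_per_second

def read_throughput_mb_s(reads_per_second, page_bytes=PAGE_BYTES):
    """Throughput in MB/s (1 MB = 10**6 bytes) when every read returns one page."""
    return reads_per_second * page_bytes / 1e6

for rate in (10_000, 100_000):
    print(rate, "reads/s:", read_latency_ms(rate), "ms,",
          read_throughput_mb_s(rate), "MB/s")
```

At 10,000 and 100,000 reads/s this gives roughly 0.1 ms and 0.01 ms per read, and roughly 40 and 400 MB/s.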

SSD performance: writes

• Writes
  – flash media must be erased before it can be written to
  – unit of erase is a block, typically 64-256 pages long
    • usually takes 1-2 ms to erase a block
    • blocks can only be erased a certain number of times before they become unusable, typically 10,000 – 1,000,000 times
  – unit of write is a page
    • writing a page can be 2-10x slower than reading a page
• Writing to an SSD is complicated
  – random write to existing block: read block, erase block, write back modified block
    • leads to hard-drive like performance (300 random writes/s)
  – sequential writes to erased blocks: fast!
    • SSD-read like performance (100-200 MB/s)
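The read, erase, write-back cycle for a random write can be illustrated with a toy model of a single erase block. The `FlashBlock` class and its method names are hypothetical; real SSD firmware remaps pages rather than rewriting in place, so this only shows why an in-place overwrite is expensive.

```python
class FlashBlock:
    """Toy model of one flash erase block (illustration only)."""

    def __init__(self, pages_per_block=64):
        self.pages = [None] * pages_per_block  # None marks an erased page
        self.erase_count = 0                   # blocks wear out after many erases

    def erase(self):
        self.pages = [None] * len(self.pages)
        self.erase_count += 1

    def program(self, index, data):
        # A page can only be written while it is in the erased state.
        if self.pages[index] is not None:
            raise ValueError("page must be erased before it is written")
        self.pages[index] = data

    def overwrite(self, index, data):
        # Random write to an existing block:
        # read block, erase block, write back the modified block.
        snapshot = list(self.pages)
        snapshot[index] = data
        self.erase()
        for i, page in enumerate(snapshot):
            if page is not None:
                self.program(i, page)

block = FlashBlock(pages_per_block=4)
block.program(0, b"old")
block.program(1, b"keep")
block.overwrite(0, b"new")   # costs one full erase plus two page writes
```

Writing to pages that are already erased needs only `program`, which is why sequential writes to erased blocks avoid the erase penalty.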


Disks and the OS

• Job of OS is to hide this mess from higher-level software (disk hardware increasingly helps with this)
  – low-level device drivers (initiate a disk read, etc.)
  – higher-level abstractions (files, databases, etc.)
• OS may provide different levels of disk access to different clients
  – physical disk block (surface, cylinder, sector)
  – disk logical block (disk block #)
  – file logical (filename, block or record or byte #)
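To make the difference between a physical address and a logical block number concrete, here is a sketch of the conventional cylinder/head/sector to logical-block mapping. The slides do not give a formula; the one below is the common CHS convention (sectors numbered from 1), and the geometry values are assumptions chosen for the example.

```python
def chs_to_lba(cylinder, head, sector, heads_per_cylinder, sectors_per_track):
    """Map a physical (cylinder, head, sector) address to a logical block number.

    Sectors are numbered from 1, cylinders and heads from 0.
    """
    return (cylinder * heads_per_cylinder + head) * sectors_per_track + (sector - 1)

def lba_to_chs(lba, heads_per_cylinder, sectors_per_track):
    """Inverse mapping: logical block number back to (cylinder, head, sector)."""
    cylinder, rest = divmod(lba, heads_per_cylinder * sectors_per_track)
    head, sector_index = divmod(rest, sectors_per_track)
    return cylinder, head, sector_index + 1

HEADS, SECTORS = 8, 63          # assumed geometry for the example
first_block_of_cylinder_1 = chs_to_lba(1, 0, 1, HEADS, SECTORS)
```

Modern drives expose only the logical block number and keep the physical layout private, which is part of the "disk hardware increasingly helps" point above.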

Differences in I/O Devices

• Complexity of control

• Unit of transfer
  – Data may be transferred as a stream of bytes for a terminal or in larger blocks for a disk
• Data representation
  – Encoding schemes
• Error conditions
  – Devices respond to errors differently

Techniques for Performing I/O

• Programmed I/O
  – Process is busy-waiting for the operation to complete
• Interrupt-driven I/O
  – I/O command is issued
  – Processor continues executing instructions
  – I/O module sends an interrupt when done
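The contrast between the two techniques can be mimicked in user space. In this sketch a thread stands in for the I/O module and a callback stands in for the interrupt handler; `ToyDevice` and both functions are hypothetical names, and nothing here touches real hardware.

```python
import threading
import time

class ToyDevice:
    """Hypothetical device that finishes an operation after a short delay."""

    def __init__(self, delay_s=0.01):
        self.delay_s = delay_s
        self.done = threading.Event()      # plays the role of a status register

    def start(self, on_complete=None):
        def run():
            time.sleep(self.delay_s)       # the device is "working"
            self.done.set()                # status now reads "ready"
            if on_complete is not None:
                on_complete()              # stands in for raising an interrupt
        worker = threading.Thread(target=run)
        worker.start()
        return worker

def programmed_io(device):
    """Busy-wait: the caller keeps checking the status flag until it is set."""
    worker = device.start()
    polls = 0
    while not device.done.is_set():
        polls += 1                         # cycles spent doing nothing useful
    worker.join()
    return polls

def interrupt_driven_io(device):
    """Issue the command, keep computing, let the handler record completion."""
    events = []
    worker = device.start(on_complete=lambda: events.append("interrupt"))
    useful_work = sum(range(1000))         # processor continues executing
    worker.join()
    return useful_work, events

polls = programmed_io(ToyDevice())
work, events = interrupt_driven_io(ToyDevice())
```

The poll count shows how much processor time the busy-wait burns, while the interrupt-driven version gets other work done during the same delay.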

Techniques for Performing I/O

• Direct Memory Access (DMA)
  – DMA module controls exchange of data between main memory and the I/O device
  – Processor interrupted only after entire block has been transferred

Evolution of the I/O Function

• Controller or I/O module with interrupts
  – Processor does not spend time waiting for an I/O operation to be performed
• Direct Memory Access
  – Blocks of data are moved into memory without involving the processor
  – Processor involved at beginning and end only

I/O Buffering

• Reasons for buffering
  – Processes must wait for I/O to complete before proceeding
  – Certain pages must remain in main memory during I/O

I/O Buffering

• Block-oriented
  – Information is stored in fixed-size blocks
  – Transfers are made a block at a time
  – Used for disks and tapes
• Stream-oriented
  – Transfer information as a stream of bytes
  – Used for terminals, printers, communication ports, mouse, and most other devices that are not secondary storage

Single Buffer

• Operating system assigns a buffer in main memory for an I/O request

• Block-oriented
  – Input transfers made to buffer
  – Block moved to user space when needed
  – Another block is moved into the buffer
    • Read ahead

Double Buffer

• Use two system buffers instead of one

• A process can transfer data to or from one buffer while the operating system empties or fills the other buffer
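Double buffering can be sketched with two reusable buffers passed back and forth between a filling thread and a consuming loop. This is a user-space illustration only: the thread plays the operating system, the main loop plays the process, and `double_buffered_copy` is a made-up name.

```python
import queue
import threading

def double_buffered_copy(blocks):
    """Copy blocks using exactly two buffers that are refilled while in use."""
    free = queue.Queue()    # buffers waiting to be filled
    full = queue.Queue()    # buffers waiting to be emptied
    for _ in range(2):      # two system buffers instead of one
        free.put(bytearray())

    def fill():             # plays the operating system
        for block in blocks:
            buf = free.get()        # wait for an empty buffer
            buf[:] = block          # "device input" lands in the buffer
            full.put(buf)
        full.put(None)              # end-of-input marker

    filler = threading.Thread(target=fill)
    filler.start()

    received = []
    while True:             # plays the user process
        buf = full.get()
        if buf is None:
            break
        received.append(bytes(buf)) # consume one buffer...
        free.put(buf)               # ...while the other one is being filled
    filler.join()
    return received

result = double_buffered_copy([b"a", b"bb", b"ccc"])
```

With a single buffer the filler would have to wait for every block to be consumed before starting the next transfer; the second buffer lets the two sides overlap.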


Physical disk structure

Figure labels: platter, surface, track, sector, cylinder, arm, head

Disk Formatting

An illustration of cylinder skew

Disk Performance Parameters

• To read or write, the disk head must be positioned at the desired track and at the beginning of the desired sector

• Seek time
  – time it takes to position the head at the desired track
• Rotational delay or rotational latency
  – time it takes for the beginning of the sector to reach the head

Timing of a Disk I/O Transfer

Disk Performance Parameters

• Access time
  – Sum of seek time and rotational delay
  – The time it takes to get in position to read or write
• Data transfer occurs as the sector moves under the head
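The access-time definition above can be turned into a small calculation. The sketch assumes the usual textbook estimate that the average rotational delay is half a revolution, which the slides do not state; the sample inputs (7200 RPM, 9 ms average seek, 300 MB/s) are the figures quoted for the example drive at the end of the deck, and a datasheet may quote slightly different latency values.

```python
def avg_rotational_delay_ms(rpm):
    """Average rotational delay, taken as half of one revolution, in ms."""
    ms_per_revolution = 60_000 / rpm
    return ms_per_revolution / 2

def access_time_ms(avg_seek_ms, rpm):
    """Access time = seek time + rotational delay."""
    return avg_seek_ms + avg_rotational_delay_ms(rpm)

def transfer_time_ms(num_bytes, bytes_per_second):
    """Time for the data itself to pass under the head."""
    return 1000 * num_bytes / bytes_per_second

positioning = access_time_ms(avg_seek_ms=9, rpm=7200)
moving_4kb = transfer_time_ms(4096, 300e6)
print(f"positioning {positioning:.2f} ms, transfer {moving_4kb:.4f} ms")
```

For a small random read, getting into position takes on the order of a thousand times longer than moving the data, which is why the scheduling policies that follow concentrate on seeks.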

Performance via disk scheduling

• Seeks are very expensive, so the OS attempts to schedule disk requests that are queued waiting for the disk
  – FCFS (do nothing)
    • reasonable when load is low
    • long waiting time for long request queues
  – SSTF (shortest seek time first)
    • minimize arm movement (seek time), maximize request rate
    • unfairly favors middle blocks
  – SCAN (elevator algorithm)
    • service requests in one direction until done, then reverse
    • skews wait times non-uniformly (why?)
  – C-SCAN
    • like SCAN, but only go in one direction (typewriter)
    • uniform wait times

Disk Scheduling Policies

• First-in, first-out (FIFO)
  – Process requests sequentially
  – Fair to all processes
  – Approaches random scheduling in performance if there are many processes

Disk Scheduling Policies

• Priority
  – Goal is not to optimize disk use but to meet other objectives
  – Short batch jobs may have higher priority
  – Provide good interactive response time

Disk Scheduling Policies

• Last-in, first-out
  – Good for transaction processing systems
    • The device is given to the most recent user so there should be little arm movement
  – Possibility of starvation since a job may never regain the head of the line

Disk Scheduling Policies

• Shortest Service Time First
  – Select the disk I/O request that requires the least movement of the disk arm from its current position
  – Always choose the minimum seek time

Disk Scheduling Policies

• SCAN
  – Arm moves in one direction only, satisfying all outstanding requests until it reaches the last track in that direction
  – Direction is reversed

Disk Scheduling Policies

• C-SCAN
  – Restricts scanning to one direction only
  – When the last track has been visited in one direction, the arm is returned to the opposite end of the disk and the scan begins again

Disk Scheduling Policies

• N-step-SCAN
  – Segments the disk request queue into subqueues of length N
  – Subqueues are processed one at a time, using SCAN
  – New requests are added to another queue while a queue is being processed
• FSCAN
  – Two queues
  – One queue is empty for new requests

Disk Scheduling Algorithms

Disk Scheduling

• Several algorithms exist to schedule the servicing of disk I/O requests.

• We illustrate them with a request queue (0-199).

98, 183, 37, 122, 14, 124, 65, 67

Head pointer 53

FCFS

Illustration shows total head movement of 640 cylinders.

SSTF (Cont.)

SCAN (Cont.)

C-SCAN (Cont.)
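The head-movement total for the request queue above can be reproduced with a short script. This is a sketch of FCFS and SSTF only; the function names are illustrative, and SSTF breaks ties by taking the request that was queued first, which is an assumption.

```python
def fcfs_order(head, requests):
    """First come, first served: service requests in arrival order."""
    return list(requests)

def sstf_order(head, requests):
    """Shortest seek time first: always pick the closest pending request."""
    pending = list(requests)
    order = []
    position = head
    while pending:
        nearest = min(pending, key=lambda cyl: abs(cyl - position))
        pending.remove(nearest)
        order.append(nearest)
        position = nearest
    return order

def total_head_movement(head, order):
    """Cylinders crossed while servicing the requests in the given order."""
    total = 0
    position = head
    for cyl in order:
        total += abs(cyl - position)
        position = cyl
    return total

queue_ = [98, 183, 37, 122, 14, 124, 65, 67]
head = 53
fcfs_total = total_head_movement(head, fcfs_order(head, queue_))
sstf_total = total_head_movement(head, sstf_order(head, queue_))
print("FCFS:", fcfs_total, "SSTF:", sstf_total)  # FCFS: 640 SSTF: 236
```

FCFS matches the 640 cylinders quoted for the illustration, and SSTF cuts the movement to 236 on the same queue.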

Example

• Given a disk with 200 tracks, and the following disk track requests: 27, 129, 110, 186, 147, 41, 10, 64, 120. Assume the disk head is at track 100 and is moving in the direction of decreasing track number. Calculate the access time for each request and the average seek time if the scheduling technique is:
  • FIFO: First In First Out
  • SSTF: Shortest Service Time First
  • SCAN: Back and forth over disk
  • C-SCAN: One way with fast return
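As a starting point for the two sweep-based policies in this exercise, the sketch below computes the service order and the number of tracks crossed per request. It rests on assumptions the exercise does not spell out: seek cost is measured in tracks traversed (no timing parameters are given), the arm reverses or returns at the last pending request rather than at the physical edge of the disk, and the C-SCAN return jump is counted as head movement.

```python
def scan_order(head, requests, direction="down"):
    """SCAN: sweep in the current direction, then reverse."""
    if direction == "down":
        first = sorted((t for t in requests if t <= head), reverse=True)
        second = sorted(t for t in requests if t > head)
    else:
        first = sorted(t for t in requests if t >= head)
        second = sorted((t for t in requests if t < head), reverse=True)
    return first + second

def c_scan_order(head, requests, direction="down"):
    """C-SCAN: sweep one way, jump back, sweep the same way again."""
    if direction == "down":
        first = sorted((t for t in requests if t <= head), reverse=True)
        second = sorted((t for t in requests if t > head), reverse=True)
    else:
        first = sorted(t for t in requests if t >= head)
        second = sorted(t for t in requests if t < head)
    return first + second

def seek_lengths(head, order):
    """Tracks crossed to reach each request, in service order."""
    lengths = []
    position = head
    for track in order:
        lengths.append(abs(track - position))
        position = track
    return lengths

requests = [27, 129, 110, 186, 147, 41, 10, 64, 120]
scan_seeks = seek_lengths(100, scan_order(100, requests))
c_scan_seeks = seek_lengths(100, c_scan_order(100, requests))
print("SCAN  ", scan_seeks, sum(scan_seeks) / len(scan_seeks))
print("C-SCAN", c_scan_seeks, sum(c_scan_seeks) / len(c_scan_seeks))
```

Under these assumptions SCAN crosses 266 tracks in total and C-SCAN 342; if the arm is required to travel to the edge of the disk before turning, the totals are larger.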

Performance via caching, pre-fetching

• Keep data or metadata in memory to reduce physical disk access
  – problem?
• If file access is sequential, fetch blocks into memory before requested

Disk Cache

• Buffer in main memory for disk sectors

• Contains a copy of some of the sectors on the disk

Least Recently Used

• The block that has been in the cache the longest with no reference to it is replaced
• The cache consists of a stack of blocks
• Most recently referenced block is on the top of the stack
• When a block is referenced or brought into the cache, it is placed on the top of the stack

Least Recently Used

• The block on the bottom of the stack is removed when a new block is brought in

• Blocks don’t actually move around in main memory

• A stack of pointers is used
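The stack described above maps naturally onto an ordered dictionary. The sketch below is one possible rendering, not a real OS disk cache: `LRUBlockCache` and the `read_block` callback are hypothetical, and the capacity is assumed to be at least 1.

```python
from collections import OrderedDict

class LRUBlockCache:
    """Least-recently-used cache of disk blocks (illustration only)."""

    def __init__(self, capacity, read_block):
        self.capacity = capacity
        self.read_block = read_block     # hypothetical: block number -> data
        self.blocks = OrderedDict()      # last entry = top of the stack

    def get(self, block_no):
        if block_no in self.blocks:
            self.blocks.move_to_end(block_no)   # referenced: move to the top
            return self.blocks[block_no]
        data = self.read_block(block_no)        # miss: go to the disk
        if len(self.blocks) >= self.capacity:
            self.blocks.popitem(last=False)     # evict the bottom of the stack
        self.blocks[block_no] = data            # new block goes on top
        return data

disk_reads = []

def fake_disk(block_no):
    disk_reads.append(block_no)
    return f"block {block_no}"

cache = LRUBlockCache(capacity=2, read_block=fake_disk)
for n in (1, 2, 1, 3):     # block 2 is least recently used when 3 arrives
    cache.get(n)
```

Only the ordering of keys changes on a hit, which mirrors the point that blocks do not actually move in memory.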

Least Frequently Used

• The block that has experienced the fewest references is replaced
• A counter is associated with each block
• Counter is incremented each time block accessed
• Block with smallest count is selected for replacement
• Some blocks may be referenced many times in a short period of time and then not needed any more
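A counter-based cache, and the weakness noted in the last bullet, can be shown in a few lines. As with any toy model, the names (`LFUBlockCache`, `read_block`) are hypothetical, ties are broken arbitrarily by insertion order, and the capacity is assumed to be at least 1.

```python
class LFUBlockCache:
    """Least-frequently-used cache of disk blocks (illustration only)."""

    def __init__(self, capacity, read_block):
        self.capacity = capacity
        self.read_block = read_block   # hypothetical: block number -> data
        self.blocks = {}
        self.counts = {}               # one reference counter per cached block

    def get(self, block_no):
        if block_no not in self.blocks:
            if len(self.blocks) >= self.capacity:
                # Replace the block with the smallest reference count.
                victim = min(self.counts, key=self.counts.get)
                del self.blocks[victim]
                del self.counts[victim]
            self.blocks[block_no] = self.read_block(block_no)
            self.counts[block_no] = 0
        self.counts[block_no] += 1     # incremented on every access
        return self.blocks[block_no]

lfu = LFUBlockCache(capacity=2, read_block=lambda n: f"block {n}")
for _ in range(5):
    lfu.get(1)          # a burst of references, then never used again
lfu.get(2)
lfu.get(3)              # evicts block 2 (count 1), not the stale block 1
lfu.get(2)              # evicts block 3; block 1 still occupies a slot
```

Block 1 keeps its place because of an old burst of references, even though the recent traffic is entirely on blocks 2 and 3.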

Seagate Barracuda 3.5” disk drive

• 1 terabyte of storage (1000 GB)

• $100

• 4 platters, 8 disk heads

• 63 sectors (512 bytes) per track

• 16,383 cylinders (tracks)

• 164 Gbits / inch-squared (!)

• 7200 RPM

• 300 MB/second transfer

• 9 ms avg. seek, 4.5 ms avg. rotational latency

• 1 ms track-to-track seek

• 32 MB cache