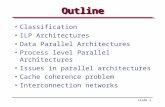

Multicores and Parallel Architectures


CDA 3101 Spring 2016

Introduction to Computer Organization

Multicore / Multiprocessor Architectures

7, 12 April 2016


Multicore Architectures Introduction – What are Multicores?

Why Multicores? Power and Performance Perspectives

Multiprocessor Architectures

Conclusion

CDA 3101 – Fall 2011 Copyright © 2011 Prabhat Mishra

How to Reduce Power Consumption: Multicore

One core with 2 GHz frequency vs. two cores with 1 GHz frequency (each)

Same performance, but the two 1 GHz cores require half the power/energy:

– $P \propto f^2$

– A 1 GHz core needs one-fourth the power of a 2 GHz core.

New challenges: Performance

How do we utilize the cores? It is difficult to find enough parallelism in programs to keep all these cores busy.
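
A minimal sketch of the slide's arithmetic, assuming the simplified model $P \propto f^2$ stated above with a hypothetical proportionality constant `k`, and assuming the work splits perfectly across the two slower cores. (Real dynamic power also depends on supply voltage, roughly $C V^2 f$, so actual savings vary.)

```python
# Simplified power model from the slide: P = k * f**2.
# k is a hypothetical constant; only ratios between configurations matter.

def core_power(freq_ghz: float, k: float = 1.0) -> float:
    """Relative power of one core running at freq_ghz."""
    return k * freq_ghz ** 2


def total_power(num_cores: int, freq_ghz: float) -> float:
    """Relative power of num_cores identical cores, all at freq_ghz."""
    return num_cores * core_power(freq_ghz)


one_fast = total_power(1, 2.0)   # one 2 GHz core
two_slow = total_power(2, 1.0)   # two 1 GHz cores: same total cycles per second

print(f"1 x 2 GHz: {one_fast}, 2 x 1 GHz: {two_slow}, ratio: {two_slow / one_fast}")
```

Each 1 GHz core draws a quarter of the 2 GHz core's power, so the pair draws half, which is the slide's claim under its stated model.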

Reducing Energy Consumption

[www.transmeta.com]

Pentium: max temp = 105.5 °C

Crusoe: max temp = 48.2 °C

Both processors are running the same multimedia application.

Infrared Cameras (FLIR) can be used to detect thermal distribution.


Introduction: Never-Ending Story…

Complex applications demand faster computation. How far did we go with uniprocessors?

Parallel processors now play a major role. The logical way to improve performance: connect multiple microprocessors.

Not much is left in ILP exploitation, and server and embedded software have parallelism.

Multiprocessor architectures will become increasingly attractive due to the slowdown in uniprocessor advances.


Level of Parallelism

Bit-level parallelism: 1970 to ~1985
- 4-bit, 8-bit, 16-bit, 32-bit microprocessors

Instruction-level parallelism: ~1985 to today
- Pipelining
- Superscalar
- VLIW
- Out-of-order execution / dynamic instruction scheduling

Process-level or thread-level parallelism
- Servers are parallel
- Desktop dual-processor PCs
- Multicore architectures (CPUs, GPUs)
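
To make bit-level parallelism concrete: an 8-bit microprocessor needs several add-with-carry steps for one 32-bit addition, while a 32-bit ALU does it in one. The sketch below emulates the 8-bit case; the function name `add32_with_8bit_alu` is hypothetical, and it models only the arithmetic, not the instruction timing of any real processor.

```python
def add32_with_8bit_alu(x: int, y: int) -> tuple[int, int]:
    """Add two 32-bit unsigned ints using only 8-bit adds with carry.

    Returns (sum modulo 2**32, number of 8-bit add steps used).
    """
    result, carry, steps = 0, 0, 0
    for byte in range(4):                  # least significant byte first
        shift = 8 * byte
        a = (x >> shift) & 0xFF
        b = (y >> shift) & 0xFF
        s = a + b + carry                  # one 8-bit add-with-carry step
        result |= (s & 0xFF) << shift      # keep the low 8 bits of this byte
        carry = s >> 8                     # carry into the next byte
        steps += 1
    return result, steps


print(add32_with_8bit_alu(0x000000FF, 0x00000001))
```

Widening the word from 8 to 32 bits collapses those four dependent steps into one instruction, which is the performance gain that era of bit-level parallelism delivered.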


Taxonomy of Parallel Architectures: Flynn Classification

SISD (Single Instruction Single Data)
- Uniprocessors

MISD (Multiple Instruction Single Data)
- Multiple processors on a single data stream
- No commercial prototypes. Can be thought of as successive refinement of a given set of data by multiple processors (units).

SIMD (Single Instruction Multiple Data)
- Examples: Illiac-IV, CM-2
- Simple programming model, low overhead, and flexibility
- All custom integrated circuits

MIMD (Multiple Instruction Multiple Data)
- Examples: Sun Enterprise 5000, Cray T3D, SGI Origin
- Flexible
- Difficult to program: no unifying model of parallelism
- Use off-the-shelf microprocessors
- MIMD in practice: designs with <= 128 processors


MIMD: Two Types
- Centralized shared-memory multiprocessors
- Distributed-memory multiprocessors

Exploits thread-level parallelism: the program should have at least n threads or processes for a MIMD machine with n processors.

Threads can be of different types:
- Independent programs
- Parallel iterations of a loop (extracted by compiler)
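
A minimal sketch of "parallel iterations of a loop", assuming a hypothetical loop body (summing squares). It uses Python's standard `concurrent.futures.ThreadPoolExecutor` to run one contiguous chunk of iterations per worker, matching the rule of at least n threads for n processors. In CPython the global interpreter lock keeps pure-Python threads from executing bytecode in parallel, so this shows the decomposition rather than a real speedup; `ProcessPoolExecutor` is the usual choice for CPU-bound work.

```python
from concurrent.futures import ThreadPoolExecutor


def chunk_bounds(n_items: int, n_workers: int) -> list[tuple[int, int]]:
    """Split range(n_items) into n_workers contiguous [start, stop) chunks."""
    base, extra = divmod(n_items, n_workers)
    bounds, start = [], 0
    for w in range(n_workers):
        stop = start + base + (1 if w < extra else 0)  # spread the remainder
        bounds.append((start, stop))
        start = stop
    return bounds


def partial_sum_of_squares(bounds: tuple[int, int]) -> int:
    """Work done by one thread: its share of the loop iterations."""
    start, stop = bounds
    return sum(i * i for i in range(start, stop))


def parallel_sum_of_squares(n_items: int, n_workers: int) -> int:
    """One chunk per worker, then combine the partial results."""
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        return sum(pool.map(partial_sum_of_squares, chunk_bounds(n_items, n_workers)))


print(parallel_sum_of_squares(1_000_000, 4))
```

Because the iterations are independent, the partial sums can be computed in any order and added at the end; a loop with cross-iteration dependences could not be split this way.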


[Figure: centralized shared-memory multiprocessor organization]

Centralized Shared-Memory Multiprocessor

A small number of processors share a centralized memory:
- Use multiple buses or switches
- Multiple memory banks

Main memory has a symmetric relationship to all processors and uniform access time from any processor:
- SMP: symmetric shared-memory multiprocessors
- UMA: uniform memory access architectures

Increases in processor performance and memory bandwidth requirements make the centralized memory paradigm less attractive.


[Figure: distributed-memory multiprocessor organization]

Distributed-Memory Multiprocessors

Distributing memory has two benefits:
- A cost-effective way to scale memory bandwidth
- Reduced local memory access time

Drawback: communicating data between processors is complex and has higher latency.

Two approaches for data communication:
- Shared address space (not centralized memory): the same physical address refers to the same memory location
  - DSM: distributed shared-memory architectures
  - NUMA: non-uniform memory access, since the access time depends on the location of the data
- Logically disjoint address spaces: multicomputers
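
The NUMA point can be made quantitative with a weighted average: if a fraction $f$ of accesses go to local memory, the average access time is $f \cdot T_{local} + (1 - f) \cdot T_{remote}$. The sketch assumes hypothetical latencies of 100 ns (local) and 300 ns (remote); real values depend on the machine. In a UMA machine every access has the same latency, so there is nothing to average.

```python
def numa_avg_latency(local_fraction: float, t_local_ns: float, t_remote_ns: float) -> float:
    """Average access time when local_fraction of accesses hit local memory."""
    if not 0.0 <= local_fraction <= 1.0:
        raise ValueError("local_fraction must be in [0, 1]")
    return local_fraction * t_local_ns + (1.0 - local_fraction) * t_remote_ns


# Hypothetical latencies, for illustration only.
T_LOCAL, T_REMOTE = 100.0, 300.0
for f in (1.0, 0.9, 0.5):
    print(f"{f:.0%} local -> {numa_avg_latency(f, T_LOCAL, T_REMOTE):.0f} ns")
```

The model shows why data placement matters on NUMA machines: every percentage point of accesses that goes remote adds to the average latency.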


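Small-Scale Shared Memory

Caches serve to:
- Increase bandwidth versus bus/memory
- Reduce latency of access

Caches are valuable for both private data and shared data. But what about cache consistency?

Cache coherence problem for a single memory location X (memory is updated at time 3, as with a write-through cache):

| Time | Event | Cache A | Cache B | X (memory) |
|---|---|---|---|---|
| 0 | | | | 1 |
| 1 | CPU A reads X | 1 | | 1 |
| 2 | CPU B reads X | 1 | 1 | 1 |
| 3 | CPU A stores 0 into X | 0 | 1 | 0 |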
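
The table can be replayed with a tiny simulation. The sketch assumes a hypothetical `WriteThroughCache` class: each CPU has a private cache that writes through to memory but, with no coherence protocol, never invalidates or updates the other CPU's copy.

```python
class WriteThroughCache:
    """Private per-CPU cache with write-through and no coherence protocol."""

    def __init__(self, memory: dict):
        self.memory = memory   # shared main memory
        self.lines = {}        # this CPU's cached copies

    def read(self, addr: str) -> int:
        if addr not in self.lines:               # miss: fetch from memory
            self.lines[addr] = self.memory[addr]
        return self.lines[addr]                  # hit: may return a stale copy

    def write(self, addr: str, value: int) -> None:
        self.lines[addr] = value                 # update own copy
        self.memory[addr] = value                # write through to memory
        # Nothing tells other caches that their copy is now out of date.


memory = {"X": 1}                                # time 0
cache_a, cache_b = WriteThroughCache(memory), WriteThroughCache(memory)
cache_a.read("X")                                # time 1: CPU A reads X
cache_b.read("X")                                # time 2: CPU B reads X
cache_a.write("X", 0)                            # time 3: CPU A stores 0 into X
print(cache_a.read("X"), cache_b.read("X"), memory["X"])
```

CPU B keeps reading 1 even though memory and CPU A hold 0, which is exactly the last row of the table.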


Example: Cache Coherence Problem

[Figure: processors P1, P2, and P3, each with a private cache, share memory and I/O devices. Memory initially holds u:5; events 1 and 2 read u:5 into caches, event 3 writes u = 7, and events 4 and 5 then read u = ?]

Processors see different values for u after event 3.

With write-back caches, the value written back to memory depends on which cache flushes or writes back its value, so processes accessing main memory may see a very stale value.

This is unacceptable for programming, and it is frequent!

4 C’s: Sources of Cache Misses
- Compulsory misses (aka cold-start misses): the first access to a block
- Capacity misses: due to finite cache size, a replaced block is later accessed again
- Conflict misses (aka collision misses): in a non-fully-associative cache, due to competition for entries in a set; they would not occur in a fully associative cache of the same total size
- Coherence misses: in a multiprocessor, a block is invalidated because another processor wrote to it, so the next access misses
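
The first three C's can be separated by simulation: a miss is compulsory if the block was never referenced before, capacity if it would also miss in a fully associative LRU cache of the same total size, and conflict otherwise. The sketch applies this to a hypothetical direct-mapped cache with one-block lines (trace entries are block addresses, not byte addresses). Coherence misses need multiple processors and are not modeled here.

```python
from collections import OrderedDict


def classify_misses(trace: list[int], num_blocks: int) -> dict[str, int]:
    """Classify each access of a direct-mapped cache using the 3C model.

    trace: block addresses in access order; num_blocks: one-block cache lines.
    """
    direct_mapped = [None] * num_blocks   # block currently held by each line
    fully_assoc = OrderedDict()           # reference LRU cache, same total size
    seen = set()                          # blocks referenced at least once
    counts = {"hit": 0, "compulsory": 0, "capacity": 0, "conflict": 0}

    for block in trace:
        # Update the reference fully associative LRU cache.
        fa_hit = block in fully_assoc
        if fa_hit:
            fully_assoc.move_to_end(block)         # mark most recently used
        else:
            if len(fully_assoc) >= num_blocks:
                fully_assoc.popitem(last=False)    # evict least recently used
            fully_assoc[block] = None

        # Classify the access in the direct-mapped cache.
        index = block % num_blocks
        if direct_mapped[index] == block:
            counts["hit"] += 1
        elif block not in seen:
            counts["compulsory"] += 1
        elif not fa_hit:
            counts["capacity"] += 1
        else:
            counts["conflict"] += 1

        direct_mapped[index] = block
        seen.add(block)
    return counts


# Blocks 0 and 2 map to the same line of a 2-line cache and evict each other.
print(classify_misses([0, 2, 0, 2], num_blocks=2))
```

In the example, a 2-block fully associative cache would hold both blocks, so the repeated misses are conflicts; a trace touching three distinct blocks in a 2-block cache would instead produce capacity misses.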

Graphics Processing Units (GPUs)
- Moore’s Law will come to an end
- Many complicated solutions
- Simple solution: SPATIAL PARALLELISM
- SIMD model (single instruction, multiple data streams)
- GPUs have a SIMD grid with a local and shared memory model


GPUs – Nvidia CUDA Hierarchy


- Map process to thread
- Group threads in blocks
- Group blocks in grids for efficient memory access
- Also, memory coalescing operations for faster data transfer
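
Memory coalescing can be illustrated by counting how many aligned memory segments one group of threads touches in a single load: when consecutive threads read consecutive words, a few transactions serve them all; with a large stride, each thread needs its own. The sketch assumes a simplified, hypothetical model with 32-thread warps, 4-byte elements, and 128-byte segments; real transaction rules vary across GPU generations.

```python
def segments_touched(warp_size: int, stride: int, elem_bytes: int = 4,
                     segment_bytes: int = 128) -> int:
    """Count distinct aligned segments hit when thread t loads element t * stride."""
    addresses = [tid * stride * elem_bytes for tid in range(warp_size)]
    return len({addr // segment_bytes for addr in addresses})


for stride in (1, 2, 4, 32):
    print(f"stride {stride:2d}: {segments_touched(32, stride)} segment(s)")
```

Under this model unit-stride access needs one transaction, while a stride of 32 elements needs 32, so the same amount of useful data costs 32 times the memory traffic.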

Graphics Processing Units (GPUs)

Nvidia Fermi GPU – 3GB DRAM, 512 cores


CUDA architecture

- Thread
- Thread Block
- Grid of Thread Blocks
- Intelligent CUDA Compiler
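
The thread / block / grid hierarchy is typically used by giving every thread a global index `blockIdx.x * blockDim.x + threadIdx.x` (CUDA's built-in variables). The sketch below emulates sequentially, in Python, what a 1-D CUDA vector-add launch would do; the function names are hypothetical, and on a GPU the two nested loops would instead run as parallel threads.

```python
def grid_size(n: int, block_dim: int) -> int:
    """Number of blocks needed to cover n elements (ceiling division)."""
    return (n + block_dim - 1) // block_dim


def vector_add_kernel(block_idx, thread_idx, block_dim, a, b, c):
    """Body one CUDA thread would run; args stand in for blockIdx.x, threadIdx.x, blockDim.x."""
    i = block_idx * block_dim + thread_idx   # global thread index
    if i < len(c):                           # guard: the last block may be partly idle
        c[i] = a[i] + b[i]


def launch(a, b, block_dim):
    """Sequentially emulate a 1-D grid launch of vector_add_kernel."""
    c = [0] * len(a)
    for block_idx in range(grid_size(len(a), block_dim)):   # blocks in the grid
        for thread_idx in range(block_dim):                 # threads in a block
            vector_add_kernel(block_idx, thread_idx, block_dim, a, b, c)
    return c


print(launch([1, 2, 3, 4, 5], [10, 20, 30, 40, 50], block_dim=2))
```

With 5 elements and 2 threads per block, the grid has 3 blocks and the last thread of the last block fails the bounds check and does nothing, which is why real kernels carry the same guard.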

Nvidia Tesla 20xx GPU Board


Graphics Processing Units (GPUs)

Nvidia Maxwell GM100 – 8GB + 6,144 cores


CUDA architecture

- Threads can be spawned internally
- 32 cores per streaming multiprocessor
- 128KB L1 and 2MB L2 cache
- v.5.2+ CUDA Compiler

GPU Problems and Solutions
- GPUs are designed for graphics rendering
- GPUs are not designed for general-purpose computing! (no unifying model of parallelism)

Memory hierarchy:
- Local memory: fast, small (MBs)
- Shared memory: slower, larger
- Global memory: slow, gigabytes

How to circumvent data movement cost?
- Clever hand coding: costly, application-specific
- Automatic coding: sub-optimal, requires software support


Advantages and Disadvantages
- GPUs provide fast parallel computing
- GPUs work best for parallel solutions
- Sequential programs can actually run slower

Amdahl’s Law describes speedup:

$$\text{Speedup} = \frac{1}{S + \frac{P}{N}}$$

where P is the fraction of the program that is parallel, S = 1 - P is the fraction of the program that is sequential, and N is the number of processors.
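
A minimal sketch of Amdahl's Law as written above, with S = 1 - P. The example fraction P = 0.9 is hypothetical.

```python
def amdahl_speedup(parallel_fraction: float, n_processors: int) -> float:
    """Speedup = 1 / (S + P / N), where S = 1 - P."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be in [0, 1]")
    if n_processors < 1:
        raise ValueError("n_processors must be >= 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / n_processors)


for n in (2, 16, 512):
    print(n, round(amdahl_speedup(0.9, n), 2))
```

With 10% sequential code, the speedup can never exceed 1 / S = 10 no matter how many cores are added (512 processors give about 9.8), which is why inherently sequential programs gain little from GPUs or multicores.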


Multicore CPUs

Intel Nehalem:
- Servers, HPC arrays
- 45nm circuit technology

Intel Xeon:
- 2001 to present
- 2 to >60 cores
- Workstations

Multiple cores:
- Laptops
- Heat dissipation?


Intel Multicore CPU Performance

[Figure: performance comparison, dual Nehalem vs. single core]

Conclusions
- Parallel machines call for parallel solutions; inherently sequential programs don’t benefit much from parallelism
- 2 main types of parallel architectures:
  - SIMD: single-instruction, multiple-data stream
  - MIMD: multiple-instruction, multiple-data stream
- Modern parallel architectures (multicores):
  - GPUs: exploit SIMD parallelism for general-purpose parallel computing solutions
  - CPUs: multicore CPUs are more amenable to MIMD parallel applications