Mixed Signals: Speech Activity Detection and Crosstalk in the Meetings Domain


June 14th, 2005 Speech Group Lunch Talk

Kofi A. Boakye

International Computer Science Institute


Overview
• Motivation
• Techniques
• Meetings Domain
• Crosstalk compensation
• Initial Results and Modifications
• Subsequent results
  – Development
  – Evaluation
• Conclusions

Motivation
Audio signal contains isolated non-speech phenomena:
I. Externally produced
   Ex’s: car honking, door slamming, telephone ringing
II. Speaker produced
   Ex’s: breathing, laughing, coughing
III. Non-production
   Ex’s: pause, silence

Motivation
• Some of these can be dealt with by the recognizer
  – Explicit modeling
  – “Junk” model
• Many cannot
  – The class of non-speaker-produced phenomena is too large, and its members too rare, for good modeling
• Desire: prevent non-speech regions from being processed by the recognizer
  → Speech Activity Detection (SAD)

Techniques
Two main approaches:
I. Threshold based
   - Decision performed according to one or more (possibly adaptive) thresholds
   - Method very sensitive to variations
II. Classifier based
   - Ex’s: Viterbi decoder, ANN, GMM
   - Method relies on general statistics rather than local information
   - Requires fairly intensive training

Techniques
Both threshold and classifier approaches typically make use of certain acoustic features:
I. Energy
   - Fundamental component of many SADs
   - Generally lacks robustness to noise and impulsive interference
II. Zero-crossing rate
   - Successful as a correction term in energy-based systems
III. Harmonicity (e.g., via autocorrelation)
   - Relates to voicing
   - Performs poorly in unvoiced speech regions
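The three features above can be computed per frame roughly as follows. This is a minimal NumPy sketch, not the talk's implementation: it assumes 16 kHz audio, 25 ms frames with a 10 ms hop, and a 60 to 400 Hz pitch search range for the autocorrelation peak, and all function names and parameter values are illustrative choices.

```python
import numpy as np

def frame_signal(x, frame_len=400, hop=160):
    """Split a 1-D signal into overlapping frames (25 ms / 10 ms at an assumed 16 kHz)."""
    if len(x) < frame_len:  # pad short inputs so at least one full frame exists
        x = np.pad(x, (0, frame_len - len(x)))
    n_frames = 1 + (len(x) - frame_len) // hop
    idx = hop * np.arange(n_frames)[:, None] + np.arange(frame_len)[None, :]
    return x[idx]

def short_time_energy_db(frames):
    """Log energy per frame; the small constant avoids log(0) on digital silence."""
    return 10.0 * np.log10(np.sum(frames ** 2, axis=1) + 1e-10)

def zero_crossing_rate(frames):
    """Fraction of adjacent sample pairs whose signs differ."""
    signs = np.signbit(frames)
    return np.mean(signs[:, 1:] != signs[:, :-1], axis=1)

def harmonicity(frames, sr=16000, fmin=60.0, fmax=400.0):
    """Peak normalized autocorrelation over the lags corresponding to fmin..fmax."""
    frames = frames - frames.mean(axis=1, keepdims=True)
    n = frames.shape[1]
    spec = np.fft.rfft(frames, n=2 * n, axis=1)       # zero-pad so the autocorrelation is linear
    ac = np.fft.irfft(np.abs(spec) ** 2, axis=1)[:, :n]
    ac = ac / (ac[:, :1] + 1e-10)                     # normalize so lag 0 equals 1
    lo, hi = int(sr / fmax), min(int(sr / fmin), n - 1)
    return ac[:, lo:hi + 1].max(axis=1)

sr = 16000
t = np.arange(sr) / sr
tone = 0.5 * np.sin(2 * np.pi * 200 * t)                    # stand-in for voiced speech
noise = 0.5 * np.random.default_rng(0).standard_normal(sr)  # stand-in for unvoiced/noise
ft, fn = frame_signal(tone), frame_signal(noise)
print(harmonicity(ft).mean(), harmonicity(fn).mean())
print(zero_crossing_rate(ft).mean(), zero_crossing_rate(fn).mean())
```

The periodic tone scores high on harmonicity and low on zero-crossing rate, while the noise does the opposite. This mirrors why harmonicity alone struggles in unvoiced speech, which is acoustically closer to the noise case.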


Meetings Domain
• With initiatives such as M4, AMI, and our own ICSI meeting recorder project, ASR in meetings is of strong interest
• Objective: Determine who said what, when, using information from multiple sensors (mics)

Meetings Domain
• Sensors of interest: personal mics
  – Come as either headset or lapel units
  – Should be able to obtain fairly high-quality transcripts from these channels
• Domain has certain complexities that make the task challenging, namely variability in
  1) Number of speakers
  2) Number, type, and location of sensors
  3) Acoustic conditions

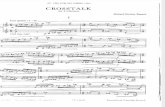

target speech

crosstalk

Crosstalk• As a preprocessing step to ASR, SAD is also

affected by these to varying levels• Key culprit in poor SAD performance: crosstalk• Example

June 14th, 2005 Speech Group Lunch Talk

Crosstalk compensation
• Generate energy signals for each audio channel and subtract the minimum energy from each
  – Minimum energy serves as a “noise floor”
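A minimal NumPy sketch of this first step, assuming the per-channel energy signals are frame-level log energies in dB stacked into a (channels, frames) array. The slides do not specify the energy scale, and `remove_noise_floor` is a hypothetical name.

```python
import numpy as np

def remove_noise_floor(energy_db):
    """Subtract each channel's minimum frame energy (its "noise floor").

    energy_db: array of shape (n_channels, n_frames) with log energies in dB (assumed scale).
    """
    energy_db = np.asarray(energy_db, dtype=float)
    return energy_db - energy_db.min(axis=1, keepdims=True)

# Two hypothetical channels, three frames each
E = np.array([[40.0, 55.0, 42.0],
              [30.0, 31.0, 60.0]])
print(remove_noise_floor(E))
```

Under the dB assumption there is a side benefit: a fixed gain difference between two mics shifts every frame of a channel by the same amount, so it cancels after the subtraction.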


Crosstalk compensation
• Compute mean energy of non-target channels

Crosstalk compensation
• Subtract mean from target channel
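The two steps "average the non-target channels" and "subtract that average from the target" can be applied to every channel at once, treating each in turn as the target. A sketch, assuming the same (channels, frames) array of noise-floor-normalized energies; `crosstalk_compensate` is a hypothetical name.

```python
import numpy as np

def crosstalk_compensate(energy):
    """For each channel, subtract the mean energy of all *other* channels, frame by frame.

    energy: array of shape (n_channels, n_frames), already normalized by each
    channel's noise floor (assumed). Needs at least two channels.
    """
    energy = np.asarray(energy, dtype=float)
    n_ch = energy.shape[0]
    if n_ch < 2:
        raise ValueError("need at least two channels to estimate crosstalk")
    # (sum over all channels - this channel) / (n - 1) = mean of the non-target channels
    others_mean = (energy.sum(axis=0, keepdims=True) - energy) / (n_ch - 1)
    return energy - others_mean

# One hypothetical frame: the channel-0 speaker talks and leaks into channels 1 and 2
E = np.array([[20.0], [8.0], [6.0]])
print(crosstalk_compensate(E))
```

In this example the true speaker's channel stays clearly positive (13), while the two channels that only carry crosstalk become negative (-5 and -8), because the other mics are louder during those frames.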


Crosstalk compensation
• Apply thresholds using a Schmitt trigger
• Merge segments whose inter-segment pauses are shorter than a set minimum
• Suppress segments shorter than a set minimum duration
• Apply head and tail collars to avoid “clipping” segments
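The four post-processing steps might look like the following sketch. It works on one channel's compensated energy in frame units. The talk gives no values, so `on_thresh`, `off_thresh`, `min_pause`, `min_dur`, and `collar` are hypothetical parameters. A Schmitt trigger uses two thresholds: a segment starts only when the signal rises above the upper one and continues until it falls below the lower one. This keeps brief dips from splitting a segment.

```python
def schmitt_trigger(x, on_thresh, off_thresh):
    """Hysteresis thresholding: switch on at x >= on_thresh, switch off at x < off_thresh."""
    active, state = [], False
    for v in x:
        if not state and v >= on_thresh:
            state = True
        elif state and v < off_thresh:
            state = False
        active.append(state)
    return active


def to_segments(active):
    """Convert a per-frame boolean mask into half-open (start, end) frame ranges."""
    segs, start = [], None
    for i, a in enumerate(active):
        if a and start is None:
            start = i
        elif not a and start is not None:
            segs.append((start, i))
            start = None
    if start is not None:
        segs.append((start, len(active)))
    return segs


def postprocess(segs, min_pause, min_dur, collar, n_frames):
    """Merge short pauses, drop short segments, then pad with head/tail collars."""
    merged = []
    for s, e in segs:
        if merged and s - merged[-1][1] < min_pause:
            merged[-1] = (merged[-1][0], e)   # pause too short: join with previous segment
        else:
            merged.append((s, e))
    kept = [(s, e) for s, e in merged if e - s >= min_dur]
    padded = []
    for s, e in kept:
        s, e = max(0, s - collar), min(n_frames, e + collar)
        if padded and s <= padded[-1][1]:     # collars made two segments touch: re-merge
            padded[-1] = (padded[-1][0], max(padded[-1][1], e))
        else:
            padded.append((s, e))
    return padded


# Hypothetical compensated energy for one channel (arbitrary units, one value per frame)
x = [0, 5, 3, 3, 0.5, 0, 0, 5, 5, 5, 0, 0, 0, 0, 0, 0, 5, 0, 0, 0]
raw = to_segments(schmitt_trigger(x, on_thresh=4, off_thresh=1))
final = postprocess(raw, min_pause=4, min_dur=2, collar=1, n_frames=len(x))
print(raw, final)
```

With a single threshold at 4, frames 2 and 3 would be dropped. The lower release threshold keeps them in the first segment. The isolated one-frame spike at frame 16 is then suppressed by the minimum-duration rule.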


Initial Results
• Performance was examined on RT-04 Meetings development data
• 10-minute excerpts from 8 meetings, 2 from each of
  1) ICSI
  2) CMU
  3) LDC
  4) NIST
Note: CMU and LDC data obtained from lapel mics

Initial Results

SUM/AVG           # Sent  # Words  Corr  Sub   Del   Ins  WER   Sent. Err
SRI Baseline      2002    18264    70    18.1  11.9  8.5  38.5  70.4
My SAD            2006    18264    60.2  16.2  23.5  2.5  42.3  72.7

Verdict: Sad results

Possible reason: sensitivity of thresholds
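For reading these tables: WER is the sum of the substitution, deletion, and insertion rates, each a percentage of the reference words, and Corr + Sub + Del is 100%. Recomputing the two SUM/AVG rows (values copied from the Initial Results table; helper names are our own) shows where My SAD loses. Its insertions drop from 8.5 to 2.5, but its deletions roughly double, from 11.9 to 23.5.

```python
def wer_from_counts(n_sub, n_del, n_ins, n_ref_words):
    """Word error rate in percent: (S + D + I) / N * 100."""
    return 100.0 * (n_sub + n_del + n_ins) / n_ref_words

# SUM/AVG rows from the Initial Results slide (rates in %)
rows = {
    "SRI Baseline": {"corr": 70.0, "sub": 18.1, "del": 11.9, "ins": 8.5, "wer": 38.5},
    "My SAD": {"corr": 60.2, "sub": 16.2, "del": 23.5, "ins": 2.5, "wer": 42.3},
}

for name, r in rows.items():
    recomputed = r["sub"] + r["del"] + r["ins"]
    # Differences of about 0.1 come from rounding each rate to one decimal place.
    print(f"{name}: Sub+Del+Ins = {recomputed:.1f}, reported WER = {r['wer']}, "
          f"Corr+Sub+Del = {r['corr'] + r['sub'] + r['del']:.1f}")
```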


Modification: Segment Intersection
• Idea:
  Ideally, the system should generate segments from the target speaker only.
  By intersecting these segments with those of another SAD, we can filter out crosstalk and reduce insertion errors
• Modified SAD to have zero threshold
  – Sensitivity needed to address deletions
  – False alarms addressed by intersection
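Segment intersection keeps only the time spans that both detectors mark as speech. A minimal two-pointer sketch follows. It assumes each SAD output is a time-sorted list of non-overlapping (start, end) segments in seconds. The function name and the example times are hypothetical.

```python
def intersect_segments(a, b):
    """Return the time spans covered by both segment lists.

    a, b: lists of (start, end) tuples, sorted by start and non-overlapping within each list.
    """
    out, i, j = [], 0, 0
    while i < len(a) and j < len(b):
        start = max(a[i][0], b[j][0])
        end = min(a[i][1], b[j][1])
        if start < end:  # the two current segments overlap
            out.append((start, end))
        # Advance whichever segment ends first; it cannot overlap anything later in the other list.
        if a[i][1] < b[j][1]:
            i += 1
        else:
            j += 1
    return out

# Hypothetical output of the zero-threshold, crosstalk-compensated SAD
crosstalk_sad = [(0.0, 2.0), (3.0, 6.0)]
# Hypothetical output of a second SAD that also fires on crosstalk at 8.0-9.0 s
other_sad = [(0.5, 4.0), (5.0, 7.0), (8.0, 9.0)]
print(intersect_segments(crosstalk_sad, other_sad))
```

The 8.0 to 9.0 s region, flagged only by the second detector, disappears from the output. This is the mechanism by which intersection removes false alarms. Each output segment is also no longer than the shorter of the two inputs, which is why the first detector is made deliberately sensitive.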


Modification: Segment Intersection
• SRI SAD
  – Two-class HMM using GMMs for speech and non-speech
  – Regions merged and padded to satisfy constraints (min duration and min pause)
    • Constraints optimized for recognition accuracy
[Figure: speech (S) / non-speech (NS) model diagram]
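As a rough illustration of a two-class HMM segmenter, the following sketch Viterbi-decodes a two-state (non-speech / speech) HMM. It is not SRI's code. It assumes the per-frame log-likelihoods have already been computed (in the SRI SAD these would come from the speech and non-speech GMMs, which are not modeled here). The self-loop probability of 0.99 is a hypothetical value. The transition penalty is what turns isolated frame decisions into contiguous regions.

```python
import numpy as np

def viterbi_two_state(loglik, p_stay=0.99):
    """Most likely state sequence for a 2-state HMM.

    loglik: (T, 2) array of per-frame log-likelihoods; column 0 = non-speech, 1 = speech.
    p_stay: self-transition probability shared by both states (hypothetical value).
    Returns an int array of length T with 1 where the decoded state is speech.
    """
    loglik = np.asarray(loglik, dtype=float)
    T = loglik.shape[0]
    log_trans = np.log(np.array([[p_stay, 1 - p_stay],
                                 [1 - p_stay, p_stay]]))
    delta = np.log(0.5) + loglik[0]            # uniform initial state probabilities
    back = np.zeros((T, 2), dtype=int)
    for t in range(1, T):
        scores = delta[:, None] + log_trans    # scores[i, j]: state i at t-1 -> state j at t
        back[t] = scores.argmax(axis=0)
        delta = scores.max(axis=0) + loglik[t]
    path = np.zeros(T, dtype=int)
    path[-1] = delta.argmax()
    for t in range(T - 1, 0, -1):              # follow back-pointers
        path[t - 1] = back[t, path[t]]
    return path

# Hypothetical scores: speech favored in frames 5-14, except a weak dip at frame 9
loglik = np.tile([0.0, -2.0], (20, 1))
loglik[5:15] = [-2.0, 0.0]
loglik[9] = [0.0, -1.0]
path = viterbi_two_state(loglik)
print(path)
```

A frame-by-frame argmax would split the region at frame 9. The switching penalty keeps it whole. Minimum-duration and minimum-pause constraints like those on the slide would then be applied to the decoded regions.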


New Results

SUM/AVG           # Sent  # Words  Corr  Sub   Del   Ins  WER   Sent. Err
SRI Baseline      2002    18264    70    18.1  11.9  8.5  38.5  70.4
Intersection SAD  2002    18264    70.1  17.8  12.2  4.6  34.5  68.8

Verdict: Happy results


Note that improvement comes largely from reduced insertions
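The note can be checked directly against the SUM/AVG rows by splitting the change between the SRI baseline and the Intersection SAD into its three error components. A quick sketch (the helper name is ours):

```python
def wer_change_breakdown(before, after):
    """Per-component change in error rate (percentage points), rounded to table precision."""
    return {k: round(after[k] - before[k], 1) for k in before}

sri_baseline = {"Sub": 18.1, "Del": 11.9, "Ins": 8.5}
intersection = {"Sub": 17.8, "Del": 12.2, "Ins": 4.6}

delta = wer_change_breakdown(sri_baseline, intersection)
print(delta)
print("total:", round(sum(delta.values()), 1))
```

Substitutions and deletions move by 0.3 points in opposite directions and cancel. The entire 3.9-point drop in Sub + Del + Ins therefore comes from insertions. The reported WERs (38.5 and 34.5) differ by 4.0 because each rate is rounded separately.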


SUM/AVG           # Sent  # Words  Corr  Sub   Del   Ins  WER   Sent. Err
Hand segmentation 2002    18264    72.3  18.6  9.1   3.2  30.9  61.8

New Results
• Site-level breakdown:

WER (%)
                  All   ICSI  CMU   LDC   NIST
SRI SAD           38.5  21.4  52.7  50.4  29.8
Intersection SAD  34.5  19    47.9  40.9  30.9
Hand Segments     30.9  17.8  43.3  34.5  28.8

Insertions (%)
                  All   ICSI  CMU   LDC   NIST
SRI SAD           8.5   5     7.3   17.5  3.1
Intersection SAD  4.6   2.2   3.9   8.6   3.2
Hand Segments     3.2   2     3.2   4     3.7
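One way to read the site-level WER table is to compute two quantities for each site. The first is the relative WER reduction from the SRI SAD to the Intersection SAD. The second is the fraction of the SRI-to-hand-segmentation gap that intersection closes. A short sketch using the table's numbers (function names are ours):

```python
# Site-level WERs (%) from the development-data table
sites = ["All", "ICSI", "CMU", "LDC", "NIST"]
wer_sri = [38.5, 21.4, 52.7, 50.4, 29.8]
wer_inter = [34.5, 19.0, 47.9, 40.9, 30.9]
wer_hand = [30.9, 17.8, 43.3, 34.5, 28.8]

def relative_reduction(baseline, system):
    """Relative WER reduction in percent (negative means the system is worse)."""
    return 100.0 * (baseline - system) / baseline

def gap_closed(baseline, system, oracle):
    """Fraction of the baseline-to-hand-segmentation WER gap recovered by the system."""
    return (baseline - system) / (baseline - oracle)

rel = {}
for site, s, i, h in zip(sites, wer_sri, wer_inter, wer_hand):
    rel[site] = relative_reduction(s, i)
    print(f"{site:5s} relative reduction {rel[site]:5.1f}%   gap closed {gap_closed(s, i, h):5.2f}")
```

LDC, one of the two lapel-mic sites, shows the largest relative gain (about 18.8%). NIST gets slightly worse (about 3.7%). Overall, intersection closes roughly half of the gap to hand segmentation.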

Graphical Example
[Figure: segmentations from SRI SAD, My SAD, Intersection, and Hand Segs compared]

Results: Eval04
• Applied 2004 Eval system to Eval04 data
• 11-minute excerpts from 8 meetings, 2 from each of
  1) ICSI
  2) CMU
  3) LDC
  4) NIST
Note: No lapel mics (with the exception of 1 ICSI channel)

Results: Eval04

SUM/AVG           # Sent  # Words  Corr  Sub   Del   Ins  WER   Sent. Err
SRI Baseline      5897    20785    67.8  16.5  15.6  3.3  35.5  34.6
Intersection SAD  5813    20781    67.8  16.2  16.0  2.1  34.3  34.3
Hand segmentation 5897    20785    71.3  17.5  11.2  3.4  32.1  31.4

• Applied 2004 Eval system to Eval04 data


Results: AMI Dev Data

SUM/AVG           # Sent  # Words  Corr  Sub   Del   Ins  WER   Sent. Err
SRI Baseline      2887    40187    72.2  17.4  10.4  7.0  34.8  79.0
Intersection SAD  2887    40188    72.6  16.8  10.6  3.9  31.3  77.8
Hand segmentation 2887    40188    74.4  17.7  7.9   3.7  29.3  63.8

• Applied 2005 CTS (not meetings) system with AMI-adapted LM to AMI development data


Moment of Truth: Eval05
• ICSI System
  – SRI SAD
    • GMMs trained on 2004 training data for non-AMI meetings and 2005 AMI data for AMI meetings
  – Recognizer
    • Based on models from SRI’s RT-04F CTS system w/ Tandem/HATS MLP features
      – Adapted to meetings using ICSI, NIST, and AMI data
    • LMs trained on conversational speech, broadcast news, and web texts and adapted to meetings
    • Vocab consisted of 54K+ words, from CTS system and ICSI, CMU, NIST, and AMI training transcripts

Moment of Truth: Eval05

SUM/AVG           # Sent  # Words  Corr  Sub   Del   Ins  WER   Sent. Err
SRI Baseline      3329    25121    78.7  11.2  10.1  7.7  29.0  65.1
Intersection SAD  3340    25121    77.5  11.1  11.4  3.3  25.8  66.0
Hand segmentation 3333    25121    82    11.2  6.7   1.6  19.5  52.3

Cf. AMI entry: 30.6 WER

!!!


Moment of Truth: Eval05
• Site-level breakdown:

WER (%)
                  All   ICSI  CMU   AMI   NIST  VT
SRI SAD           29    20.6  23.3  22    44.8  35.3
Intersection SAD  25.8  24.5  23.3  23.3  34.1  23.4
Hand Segments     19.5  16.9  19.9  19.2  21.2  20.6

Insertions (%)
                  All   ICSI  CMU   AMI   NIST  VT
SRI SAD           7.7   1.1   2.8   1.4   20.7  13.5
Intersection SAD  3.3   0.9   2.6   1.5   1.3   1.6
Hand Segments     1.6   1     2.6   1.4   1.1   1.5

Moment of Truth: Eval05
• One culprit: 3 NIST channels with no speech
• Example (un-mic’d speaker?)
[Figure: segmentations from SRI SAD, My SAD, Intersection, and Hand Segs for a channel with no speech]

Conclusions
• Crosstalk compensation is successful at reducing insertions with only a small increase in deletions, resulting in lower WER
  – Demonstrates the power of combining information sources
• For the 2005 Meeting Eval, the gap between automatic and hand segmentation is quite large
  – Initial analysis identifies zero-speech channels
  – Further analysis necessary

Acknowledgments
• Andreas Stolcke
• Chuck Wooters
• Adam Janin

Fin