Matching Loss - University of California, Santa Cruz Grid 0 50 100 150 200 250 300 350 400 0 50 100...

-

Upload

phungkhanh -

Category

Documents

-

view

215 -

download

2

Transcript of Matching Loss - University of California, Santa Cruz Grid 0 50 100 150 200 250 300 350 400 0 50 100...

Matching Loss

Manfred K. WarmuthMaya Hristakeva

UC Santa Cruz

October 23, 2009

MW, MH (UCSC) Matching Loss October 23, 2009 1 / 24

Two setups

w

a = w · xa = h−1(y)

x

Post: loss between y and y

y = h(a)

y = h(a) h(z) Pre: loss between a and a

MW, MH (UCSC) Matching Loss October 23, 2009 2 / 24

Two setups continued

RegressionExamples are tuples (xt , at)

xt ∈ Rn: data point (example)at ∈ R: true concentration (activity)

Linear activation label estimate: at = w · xt

Loss between at and at

ClassificationExamples are tuples (xt , yt)

xt ∈ Rn: data point (example)yt ∈ [0, 1]: true probability (label)

Probability label estimate: yt = h(at)Loss between yt and yt

MW, MH (UCSC) Matching Loss October 23, 2009 3 / 24

Why are we doing this?

Want framework for designing non-symmetric loss functions

Loss functions should be steep in important areas and flat inunimportant areas

Need flexible method for designing loss functions

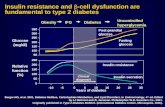

Tunning example: Clarke Grid for measureing Glucose

MW, MH (UCSC) Matching Loss October 23, 2009 4 / 24

Clarke Grid

0 50 100 150 200 250 300 350 4000

50

100

150

200

250

300

350

400

E

E

D D

C

C

B

B

A

A

Reference Values

Pre

dict

ions

Student Version of MATLAB

MW, MH (UCSC) Matching Loss October 23, 2009 5 / 24

Designing Loss for Clarke Grid

Goal: Accurately predict glucose levels of people with diabetes

Non-symmetric loss: Assymetry is needed ...

Low concentrations more important than high ones

Clarke Grid is standard loss

How do you optimize such a loss?

MW, MH (UCSC) Matching Loss October 23, 2009 6 / 24

Clarke Grid vs. square loss

0 50 100 150 200 250 300 350 4000

50

100

150

200

250

300

350

400

E

E

D D

C

C

B

B

A

A

Reference Values

Pre

dict

ions

Clarke Grid with Square Loss Level Curves

Student Version of MATLAB

MW, MH (UCSC) Matching Loss October 23, 2009 7 / 24

Clarke Grid versus our loss

0 50 100 150 200 250 300 350 4000

50

100

150

200

250

300

350

400

E

E

D D

C

C

B

B

A

A

Reference Values

Pre

dict

ions

Clarke Grid with Matching Loss Level Curves

Student Version of MATLAB

MW, MH (UCSC) Matching Loss October 23, 2009 8 / 24

Single neuron again

w

a = w · xa = h−1(y)

x

Post: ∆h−1(y , y)

y = h(a)

y = h(a) h(z) Pre: ∆h(a, a)

MW, MH (UCSC) Matching Loss October 23, 2009 9 / 24

Pre Matching Loss

a a

h(a)

h(a)

∆h(a, a) =

∫ a

a

(h(z)− h(a)) ∂z

h(z)

MW, MH (UCSC) Matching Loss October 23, 2009 10 / 24

Pre Matching Loss Examples

∆h(a, a) =

∫ a

a

(h(z)− h(a)) dz

Square Loss: h(z) = z

∆h(a, a) =1

2(a − a)2

Pre Logistic Loss: h(z) = ez

1+ez

∆h(a, a) = ln(1 + e a)− ln(1 + ea)− (a − a)ea

1 + ea︸ ︷︷ ︸y

MW, MH (UCSC) Matching Loss October 23, 2009 11 / 24

Post Matching Loss Examples

∆h−1(y , y) =

∫ y

y

(h−1(p)− h−1(y)

)dp

Square Loss: h(z) = h−1(z) = z

∆h−1(y , y) =1

2(y − y)2 =

1

2(a − a)2 = ∆h(a, a)

Logistic Loss: y = h(z) = ez

1+ez and h−1(p) = ln p1−p

∆h−1(y , y) = y lny

y+ (1− y) ln

1− y

1− y

MW, MH (UCSC) Matching Loss October 23, 2009 12 / 24

Dual View of Matching Loss

∆h(a, a) =

∫ a

a(h(z)− h(a)) dz Pre

h(z)=p h−1(p)=z

dz=(h−1(p))′dp

=

∫ h(a)

h(a)(p − h(a))) (h−1(p))

′dp

Integ. by parts=

∣∣∣h(a)h(a)(p − h(a))h−1(p) −

∫ h(a)

h(a)(h−1(p))dp

y=h(a) y=h(a)= (y − y)h−1(y)−

∫ y

y(h−1(p))dp

=

∫ y

y

(h−1(p)− h−1(y)

)dp

= ∆h−1(y , y) Post

MW, MH (UCSC) Matching Loss October 23, 2009 13 / 24

Two domains

Pre domain:

Examples: (x, a), for a ∈ RPrediction: a = x ·wLoss:

∆h(a, a) =

∫ a

a(h(z)− h(a)) dz

Post domain:

Examples: (x, y), for y ∈ [0, 1]Prediction: y = h(a)Loss:

∆h−1(y , y) =

∫ y

y

(h−1(p)− h−1(y)

)dp

MW, MH (UCSC) Matching Loss October 23, 2009 14 / 24

Why are we doing this?

Want to design good matching losses given a problem

Post Domain:

Shifting and scaling w results in use of different part of transferfunctionShifting and scaling transfer function can be undone by shiftingand scaling w

Pre Domain:

Shifting and scaling transfer function cannot be undone byshifting and scaling wLoss is “fixed” by choosing transfer functionAllows for design of “fancy” losses

MW, MH (UCSC) Matching Loss October 23, 2009 15 / 24

Scaling and Shifting the Sigmoid

Define the transfer function h(a) as

h(a) =eα(w·x+β)

1 + eα(w·x+β),

where α scales the sigmoid and β shifts it

For Clarke Grid use piece of sigmoid that is steep on the smallactivations and then flattens out

MW, MH (UCSC) Matching Loss October 23, 2009 16 / 24

Different Parts of Sigmoid

h(a)=s(a)h’(a)

Legend

0

0.2

0.4

0.6

0.8

1

–6 –4 –2 2 4 6a

h(a)=s(a/2–5)h’(a)

Legend

0

0.02

0.04

0.06

0.08

0.1

0.12

–6 –4 –2 2 4 6a

h(a)=s(a/2+2)h’(a)

Legend

0

0.2

0.4

0.6

0.8

1

–6 –4 –2 2 4 6a

loss(ah,–3)loss(ah,0)loss(ah,3)

Legend

0

1

2

3

4

5

–6 –4 –2 2 4 6ah

loss(ah,–3)loss(ah,0)loss(ah,3)

Legend

0

0.05

0.1

0.15

0.2

–6 –4 –2 2 4 6ah

loss(ah,–3)loss(ah,0)loss(ah,3)

Legend

0

0.5

1

1.5

2

2.5

–6 –4 –2 2 4 6ah

In the bottom row we plot the ∆(a, a) as a function of the estimate a for fixedactivities a = −3, 0, 3. Note that locally the losses are quadratic and the

steepness of the bowl is determined by h′(a).MW, MH (UCSC) Matching Loss October 23, 2009 17 / 24

3D View of the Loss

50 5

2

ah0

4

0 a

6

−5−5

5

50

ah

2

00

a

4

−5

6

−5

5

ah

0

−55

a0

−50

2

4

6

5.0

−2.5

ah

5−5.0

a

0

2.5

−5

0.0

5.0

2.5

0.0ah

−2.5

−5.0

5.02.50.0−2.5−5.0a

0.0

5.0

a5.0

ah

2.5

−5.0

−5.0 −2.5

0.0

−2.5

2.5

Regular Sigmoid, Left Piece of Sigmoid, Right Piece of SigmoidMW, MH (UCSC) Matching Loss October 23, 2009 18 / 24

Right Piece of Sigmoid Contours

0 50 100 150 200 250 300 350 4000

50

100

150

200

250

300

350

400

E

E

D D

C

C

B

B

A

A

Reference Values

Pre

dict

ions

Clarke Grid with Matching Loss Level Curves

Student Version of MATLAB

MW, MH (UCSC) Matching Loss October 23, 2009 19 / 24

Shift formulas

hα,β(a) := h(α(a + β)) (1)

hα,β(1

αa − β) = h(a) (2)

h−1α,β(y) =

1

αh−1(y)− β (3)

hα,β(h−1α,β(y))

(3)= hα,β(

1

αh−1(y)− β)

(2)= h(h−1(y)) = y

h−1α,β(hα,β(a))

(1)= h−1

α,β(h(α(a + β))(3)=

1

α(h−1(h(α(a + β)))− β = a

MW, MH (UCSC) Matching Loss October 23, 2009 20 / 24

Post loss unaffected by shifting and scaling of

transfer function

Can be undone by shifting and scaling linear activation

∆h−1α,β

(y ,

h(a)︷ ︸︸ ︷hα,β(

1

αa − β)) =

∫ y

h(a)

(h−1α,β(p)−Z

ZZh−1α,β(HHHhα,β(

1

αa − β))) dp

=

∫ y

h(a)

(1

αh−1(p)ZZ−β − (

1

αaZZ−β)) dp

=1

α

∫ y

h(a)

(h−1(p)− h−1(h(a))) dp

=1

α∆h−1(y , h(a))

MW, MH (UCSC) Matching Loss October 23, 2009 21 / 24

Summarize: why are we doing this?

Design good loss functions for given problem

What is best loss?

Square loss and logistic loss bad gold standards

MW, MH (UCSC) Matching Loss October 23, 2009 22 / 24

Gradient and Hessian of Preloss

Work in (a, a) domain

Loss

∆h(

a︷︸︸︷w · x, a) =

∫ a

a

(h(z)− h(a)) dz

1st derivatives

∂∆h(a, a)

∂w= (h(a)− h(a)) x

2nd derivatives∂2∆h(a, a)

(∂w)2= h′(a) x>x

MW, MH (UCSC) Matching Loss October 23, 2009 23 / 24

Weights updates

Pre Loss Gradient Descent (explicit)

wt = wt−1 − η∂∆h(at , at)

∂w︸ ︷︷ ︸old gradient

= wt−1 − η (h(at)− h(at)) xt , where at = wt−1 · xt

Post Loss Gradient Descent (explicit)

wt = wt−1 − η∂∆h−1(yt , h(at))

∂w︸ ︷︷ ︸old gradient

= wt−1 − η∂∆h(at , h

−1(yt))

∂w= wt−1 − η (h(at)− yt) xt

MW, MH (UCSC) Matching Loss October 23, 2009 24 / 24