Machine Learning in Practice Lecture 5 Carolyn Penstein Rosé Language Technologies Institute/...


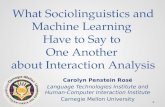

Machine Learning in Practice, Lecture 5

Carolyn Penstein Rosé

Language Technologies Institute / Human-Computer Interaction Institute

Plan for the Day

Announcements: Assignment 3, Project update

Quiz 2

Naïve Bayes

Naïve Bayes

Decision Tables vs Decision Trees

Decision Trees (Open World Assumption): Only examine some attributes in particular contexts. Use the majority class within a context to eliminate the closed world requirement. Divide and conquer approach.

Decision Tables (Closed World Assumption): Every case is enumerated. No generalization except by limiting the number of attributes. The 1R algorithm produces the simplest possible decision table.


http://aidb.cs.iitm.ernet.in/cs638/questionbank_files/image004.jpg


Weights Versus Probabilities: A Historical Perspective

Artificial intelligence is about separating declarative and procedural knowledge.

Algorithms can reason using knowledge in the form of rules, e.g., expert systems and some cognitive models.

This can be used for planning, diagnosing, inferring, etc.

Weights Versus Probabilities: A Historical Perspective

But what about reasoning under uncertainty? Incomplete knowledge, errors, knowledge with exceptions, a changing world.

Rules with Confidence Values

Will Carolyn eat the chocolate?

Positive evidence: Carolyn usually eats what she likes. (.85) Carolyn likes chocolate. (.98)

Negative evidence: Carolyn doesn’t normally eat more than one dessert per day. (.75) Carolyn already drank hot chocolate. (.95) Hot chocolate is sort of like a dessert. (.5)

How do you combine positive and negative evidence?

What is a probability?

You have a notion of an event: tossing a coin.

How many things can happen? Heads, tails.

How likely are you to get heads on a random toss? 50%

Probabilities give you a principled way of combining predictions. How likely are you to get heads twice in a row? .5 * .5 = .25

Statistical Modeling Basics

Rule and tree based methods use contingencies between patterns of attribute values as a basis for decision making.

Statistical models treat attributes as independent pieces of evidence that the decision should go one way or another.

Most of the time in real data sets the values of the different attributes are not independent of each other.

Statistical Modeling Pros and Cons

Statistical modeling people argue that statistical models are more elegant than other types of learned models because of their formal properties.

You can combine probabilities in a principled way.

You can also combine the “weights” that other approaches assign, but it is more ad hoc.

Statistical Modeling Pros and Cons

Statistical approach depends on assumptions that are not in general true

In practice statistical approaches don’t work better than “ad-hoc” methods

Statistical Modeling Basics

Even without features you can make a prediction about a class based on prior probabilities. You would always predict the majority class.

Statistical Modeling Basics

Statistical approaches balance evidence from features with prior probabilities.

Thousand-foot view: Can I beat performance based on priors with performance including evidence from features?

On very skewed data sets it can be hard to beat your priors (evaluation done based on percent correct).
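The prior-only baseline described here can be sketched in a few lines of Python. The label list is a hypothetical skewed data set invented for illustration, and `majority_baseline` is an assumed helper name, not something from the lecture.

```python
from collections import Counter

def majority_baseline(labels):
    """Return the majority class and the accuracy of always predicting it."""
    cls, count = Counter(labels).most_common(1)[0]
    return cls, count / len(labels)

# Hypothetical skewed data set: 95 negative and 5 positive instances.
labels = ["neg"] * 95 + ["pos"] * 5
print(majority_baseline(labels))  # ('neg', 0.95)
```

A feature-based model evaluated by percent correct would have to exceed 95% on this data to beat the priors.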


Basic Probability

If you roll a pair of dice, what is the probability that you will get a 4 and a 5? 1/18

How did you figure it out? How many ways can the dice land? How many of these satisfy our constraints? Divide the number of ways to satisfy the constraints by the number of things that can happen.


Basic Probability

What if you want the first die to be 5 and the second die to be 4?

What if you know the first die landed on 5?
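The counting recipe for the dice questions can be checked by enumerating all outcomes. This is a minimal sketch; the helper name `prob` and its `given` parameter are assumptions made for illustration.

```python
from fractions import Fraction
from itertools import product

# All 36 equally likely (first die, second die) outcomes.
outcomes = list(product(range(1, 7), repeat=2))

def prob(event, given=lambda o: True):
    """P(event | given), by counting outcomes in the restricted sample space."""
    space = [o for o in outcomes if given(o)]
    return Fraction(sum(1 for o in space if event(o)), len(space))

# A 4 and a 5 in either order: (4, 5) or (5, 4).
p_four_and_five = prob(lambda o: sorted(o) == [4, 5])
# First die 5 and second die 4: only (5, 4).
p_ordered = prob(lambda o: o == (5, 4))
# Second die is 4, given that the first die landed on 5.
p_given = prob(lambda o: o[1] == 4, given=lambda o: o[0] == 5)

print(p_four_and_five, p_ordered, p_given)  # 1/18 1/36 1/6
```

Knowing the first die shrinks the sample space from 36 outcomes to 6, which is why the conditional probability is larger than the joint one.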


Computing Conditional Probabilities

What is the probability of high humidity?

What is the probability of high humidity given that the temperature is cool?
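The data table for this slide did not survive in the transcript. The sketch below assumes it is the standard 14-instance nominal weather data set, which is consistent with the fractions quoted later in the lecture (for example 9/14 for play = yes). The helper `prob` is an assumed name.

```python
from fractions import Fraction

# ASSUMPTION: the standard 14-instance nominal weather data set
# (outlook, temperature, humidity, windy, play); the slide's own table is missing.
ROWS = """\
sunny,hot,high,FALSE,no
sunny,hot,high,TRUE,no
overcast,hot,high,FALSE,yes
rainy,mild,high,FALSE,yes
rainy,cool,normal,FALSE,yes
rainy,cool,normal,TRUE,no
overcast,cool,normal,TRUE,yes
sunny,mild,high,FALSE,no
sunny,cool,normal,FALSE,yes
rainy,mild,normal,FALSE,yes
sunny,mild,normal,TRUE,yes
overcast,mild,high,TRUE,yes
overcast,hot,normal,FALSE,yes
rainy,mild,high,TRUE,no
""".split()
COLS = ["outlook", "temperature", "humidity", "windy", "play"]
data = [dict(zip(COLS, r.split(","))) for r in ROWS]

def prob(attr, value, given=None):
    """P(attr = value), optionally conditioned on given = {attribute: value}."""
    rows = [r for r in data if all(r[k] == v for k, v in (given or {}).items())]
    return Fraction(sum(r[attr] == value for r in rows), len(rows))

print(prob("humidity", "high"))                                 # 1/2
print(prob("humidity", "high", given={"temperature": "cool"}))  # 0
```

Under this assumed data, none of the four cool days has high humidity, so conditioning on temperature changes the answer from 1/2 to 0.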


How do we train a model?

For every value of every feature, store a count. How many times do you see Outlook = rainy?

What is P(Outlook = rainy)?


How do we train a model?

We also need to know what evidence each value of every feature gives of each possible prediction (or how typical it would be for instances of that class). What is P(Outlook = rainy | Class = yes)?

Store counts on (class value, feature value) pairs. How many times is Outlook = rainy when class = yes?

Likelihood that play = yes if Outlook = rainy = Count(yes & rainy)/Count(yes) * Count(yes)/Count(yes or no)

How do we train a model?

Now try to compute the likelihood that play = yes for Outlook = overcast, Temperature = hot, Humidity = high, Windy = FALSE.
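One way to work this exercise is to store the counts explicitly and multiply. The sketch below again assumes the standard 14-instance nominal weather data, since the slide's table is missing from the transcript; `class_counts`, `pair_counts`, and `likelihood` are illustrative names.

```python
from collections import Counter
from fractions import Fraction

# ASSUMPTION: standard nominal weather data (outlook, temperature, humidity, windy, play).
ROWS = """\
sunny,hot,high,FALSE,no
sunny,hot,high,TRUE,no
overcast,hot,high,FALSE,yes
rainy,mild,high,FALSE,yes
rainy,cool,normal,FALSE,yes
rainy,cool,normal,TRUE,no
overcast,cool,normal,TRUE,yes
sunny,mild,high,FALSE,no
sunny,cool,normal,FALSE,yes
rainy,mild,normal,FALSE,yes
sunny,mild,normal,TRUE,yes
overcast,mild,high,TRUE,yes
overcast,hot,normal,FALSE,yes
rainy,mild,high,TRUE,no
""".split()
COLS = ["outlook", "temperature", "humidity", "windy"]
data = [r.split(",") for r in ROWS]

# Training: one count per class value, one per (class, attribute, value) triple.
class_counts = Counter(row[-1] for row in data)
pair_counts = Counter(
    (row[-1], col, val) for row in data for col, val in zip(COLS, row[:-1])
)

def likelihood(cls, instance):
    """Class prior times P(value | cls) for every attribute given (unnormalized)."""
    result = Fraction(class_counts[cls], len(data))
    for col, val in instance.items():
        result *= Fraction(pair_counts[(cls, col, val)], class_counts[cls])
    return result

query = {"outlook": "overcast", "temperature": "hot", "humidity": "high", "windy": "FALSE"}
for cls in ("yes", "no"):
    print(cls, likelihood(cls, query))  # yes 8/567, then no 0
```

With this data the likelihood of no is 0 because overcast never occurs with play = no, which previews the zero-count problem raised later in the lecture.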

Combinations of features?

E.g., P(play = yes | Outlook = rainy & Temperature = hot)

Multiply the conditional probabilities for each predictor and the prior probability of the predicted class together before you normalize.

Likelihood of yes = Count(yes & rainy)/Count(yes) * Count(yes & hot)/Count(yes) * Count(yes)/Count(yes or no)

After you compute the likelihood of yes and the likelihood of no, you will normalize to get the probability of yes and the probability of no.
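The normalization step can be illustrated for Outlook = rainy and Temperature = hot. The counts in this sketch are an assumption: they are taken from the standard 14-instance weather data (9 yes, 5 no), since the slide's table is not in the transcript.

```python
from fractions import Fraction as F

# ASSUMPTION: counts from the standard weather data.
# yes (9 instances): 3 rainy, 2 hot; no (5 instances): 2 rainy, 2 hot.
likelihood = {
    "yes": F(3, 9) * F(2, 9) * F(9, 14),
    "no": F(2, 5) * F(2, 5) * F(5, 14),
}

# Normalize: divide each likelihood by the sum so the results add up to 1.
total = sum(likelihood.values())
posterior = {cls: value / total for cls, value in likelihood.items()}

for cls, p in posterior.items():
    print(cls, p, round(float(p), 3))  # yes 5/11, no 6/11
```

The likelihoods themselves (1/21 and 2/35) are not probabilities; only after dividing by their sum do they become P(yes) and P(no) for this instance.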

Unknown Values

Not a problem for Naïve Bayes: probabilities are computed using only the specified values.

Likelihood that play = yes when Outlook = sunny, Temperature = cool, Humidity = high, Windy = true: 2/9 * 3/9 * 3/9 * 3/9 * 9/14

If Outlook is unknown: 3/9 * 3/9 * 3/9 * 9/14

Likelihoods will be higher when there are unknown values, but this is factored out during normalization.
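A small sketch of how an unknown value is simply left out of the product. The fractions for yes are the ones on the slide; the fractions for no are an assumption taken from the standard 14-instance weather data, and `posterior` is an illustrative helper name.

```python
from math import prod

# P(attribute value | class) for sunny, cool, high, windy = true.
# "yes" fractions are from the slide; ASSUMPTION: "no" fractions come from
# the standard weather data (5 "no" instances).
COND = {
    "yes": {"outlook": 2 / 9, "temperature": 3 / 9, "humidity": 3 / 9, "windy": 3 / 9},
    "no": {"outlook": 3 / 5, "temperature": 1 / 5, "humidity": 4 / 5, "windy": 3 / 5},
}
PRIOR = {"yes": 9 / 14, "no": 5 / 14}

def posterior(known):
    """Return (likelihoods, normalized probabilities) using only the known attributes."""
    like = {c: PRIOR[c] * prod(COND[c][a] for a in known) for c in PRIOR}
    total = sum(like.values())
    return like, {c: v / total for c, v in like.items()}

like_all, post_all = posterior(["outlook", "temperature", "humidity", "windy"])
like_missing, post_missing = posterior(["temperature", "humidity", "windy"])

print(like_all["yes"], like_missing["yes"])  # the second likelihood is larger
print(post_all, post_missing)                # both still sum to 1
```

Dropping a factor that is at most 1 can only raise each likelihood, but because every class loses the same attribute, dividing by the sum still yields valid probabilities.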

Numeric Values

List the values of the numeric feature for each class value. Values for play = yes: 83, 70, 68, 64, 69, 75, 75, 72, 81

Compute the mean and standard deviation.

Values for play = yes: 83, 70, 68, 64, 69, 75, 75, 72, 81; $\mu = 73$, $\sigma = 6.16$

Values for play = no: 85, 80, 65, 72, 71; $\mu = 74.6$, $\sigma = 7.89$

Compute likelihoods: $f(x) = \frac{1}{\sqrt{2\pi}\,\sigma} e^{-\frac{(x-\mu)^2}{2\sigma^2}}$

Normalize using the proportion of the predicted class as before.
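The mean, standard deviation, and density computation can be reproduced with the Python standard library. The values are the ones listed on the slide; the query value `x = 66` is a hypothetical example, and the sample standard deviation (dividing by n - 1) is used because it matches the 6.16 and 7.89 quoted above.

```python
import math
import statistics

# Numeric feature values per class, as listed on the slide.
values = {
    "yes": [83, 70, 68, 64, 69, 75, 75, 72, 81],
    "no": [85, 80, 65, 72, 71],
}

def gaussian_density(x, mean, std):
    """Normal probability density f(x) for the given mean and standard deviation."""
    return math.exp(-((x - mean) ** 2) / (2 * std ** 2)) / (math.sqrt(2 * math.pi) * std)

# statistics.stdev is the sample standard deviation (n - 1 in the denominator).
params = {c: (statistics.mean(v), statistics.stdev(v)) for c, v in values.items()}

x = 66  # hypothetical query value, not from the slides
for cls, (mean, std) in params.items():
    print(cls, round(mean, 2), round(std, 2), round(gaussian_density(x, mean, std), 4))
```

The density takes the place of the count-based conditional probability in the product, so it is still multiplied by the class prior before normalizing.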


Bayes Theorem

How would you compute the likelihood that a person was a bagpipe major given that they had red hair?

Could you compute the likelihood that a person has red hair given that they were a bagpipe major?
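The two questions are connected by Bayes' theorem. Writing $B$ for "is a bagpipe major" and $R$ for "has red hair" (symbols chosen here for illustration, not taken from the slides), the standard statement is:

```latex
P(B \mid R) = \frac{P(R \mid B)\, P(B)}{P(R)}
```

The quantity that is easy to count within a class, $P(R \mid B)$, is combined with the prior $P(B)$, and dividing by $P(R)$ plays the role of the normalization step used in the weather examples.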

Another Example Model

@relation is-yummy

@attribute ice-cream {chocolate, vanilla, coffee, rocky-road, strawberry}
@attribute cake {chocolate, vanilla}
@attribute yummy {yum,good,ok}

@data
chocolate,chocolate,yum
vanilla,chocolate,good
coffee,chocolate,yum
coffee,vanilla,ok
rocky-road,chocolate,yum
strawberry,vanilla,yum

Compute conditional probabilities for each attribute value/class pair: P(B|A) = Count(B&A)/Count(A)

P(coffee ice-cream | yum) = .25

P(vanilla ice-cream | yum) = 0

Another Example Model


What class would you assign to strawberry ice cream with chocolate cake?

Compute likelihoods and then normalize. Note: this model cannot take into account that the class might depend on how well the cake and ice cream “go together”.

What is the likelihood that the answer is yum? P(strawberry|yum) = .25, P(chocolate cake|yum) = .75, so .25 * .75 * .66 = .124

What is the likelihood that the answer is good? P(strawberry|good) = 0, P(chocolate cake|good) = 1, so 0 * 1 * .17 = 0

What is the likelihood that the answer is ok? P(strawberry|ok) = 0, P(chocolate cake|ok) = 0, so 0 * 0 * .17 = 0
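The hand computation can be reproduced with a short unsmoothed Naïve Bayes sketch over the six training instances. The function names are illustrative, and the exact likelihood for yum comes out as .125 because the prior is kept as 4/6 rather than rounded to .66.

```python
from collections import Counter

# The six training instances from the slide: (ice-cream, cake, yummy).
DATA = [
    ("chocolate", "chocolate", "yum"),
    ("vanilla", "chocolate", "good"),
    ("coffee", "chocolate", "yum"),
    ("coffee", "vanilla", "ok"),
    ("rocky-road", "chocolate", "yum"),
    ("strawberry", "vanilla", "yum"),
]
class_counts = Counter(row[2] for row in DATA)

def likelihood(ice_cream, cake, cls):
    """Unsmoothed P(ice_cream | cls) * P(cake | cls) * P(cls)."""
    n = class_counts[cls]
    p_ice = sum(1 for i, _, c in DATA if c == cls and i == ice_cream) / n
    p_cake = sum(1 for _, k, c in DATA if c == cls and k == cake) / n
    return p_ice * p_cake * n / len(DATA)

def classify(ice_cream, cake):
    """Return normalized class probabilities, or None when every likelihood is 0."""
    like = {cls: likelihood(ice_cream, cake, cls) for cls in class_counts}
    total = sum(like.values())
    return {cls: v / total for cls, v in like.items()} if total else None

print(classify("strawberry", "chocolate"))
```

Because the likelihoods for good and ok are both 0, normalization assigns all of the probability to yum.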

Another Example Model


What about vanilla ice cream and vanilla cake? Intuitively, there is more evidence that the selected category should be Good.

What is the likelihood that the answer is yum? P(vanilla|yum) = 0, P(vanilla cake|yum) = .25, so 0 * .25 * .66 = 0

What is the likelihood that the answer is good? P(vanilla|good) = 1, P(vanilla cake|good) = 0, so 1 * 0 * .17 = 0

What is the likelihood that the answer is ok? P(vanilla|ok) = 0, P(vanilla cake|ok) = 1, so 0 * 1 * .17 = 0

Statistical Modeling with Small Datasets

When you train your model, how many probabilities are you trying to estimate?

This statistical modeling approach has problems with small datasets where not every class is observed in combination with every attribute value.

What potential problem occurs when you never observe coffee ice-cream with class ok? When is this not a problem?

Smoothing

One way to compensate for 0 counts is to add 1 to every count. Then you never have 0 probabilities.

But what might be the problem you still have on small data sets?

Naïve Bayes with smoothing

Vanilla ice cream and vanilla cake, using the same is-yummy data with 1 added to every count:

What is the likelihood that the answer is yum? P(vanilla|yum) = .11, P(vanilla cake|yum) = .33, so .11 * .33 * .66 = .024

What is the likelihood that the answer is good? P(vanilla|good) = .33, P(vanilla cake|good) = .33, so .33 * .33 * .17 = .019

What is the likelihood that the answer is ok? P(vanilla|ok) = .17, P(vanilla cake|ok) = .66, so .17 * .66 * .17 = .019
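The smoothed probabilities can be sketched as follows. The code adds 1 to every (class, attribute value) count, which also adds the number of possible attribute values to each denominator; the class prior is left unsmoothed, which is an inference from the .66 and .17 used on the slide. The names `smoothed` and `likelihood` are illustrative.

```python
from collections import Counter
from fractions import Fraction

# The six training instances from the slide: (ice-cream, cake, yummy).
DATA = [
    ("chocolate", "chocolate", "yum"),
    ("vanilla", "chocolate", "good"),
    ("coffee", "chocolate", "yum"),
    ("coffee", "vanilla", "ok"),
    ("rocky-road", "chocolate", "yum"),
    ("strawberry", "vanilla", "yum"),
]
ICE_CREAMS = ["chocolate", "vanilla", "coffee", "rocky-road", "strawberry"]
CAKES = ["chocolate", "vanilla"]
class_counts = Counter(row[2] for row in DATA)

def smoothed(count, class_total, n_values):
    """Add 1 to the count and the number of possible values to the class total."""
    return Fraction(count + 1, class_total + n_values)

def likelihood(ice_cream, cake, cls):
    """Smoothed P(ice_cream | cls) * P(cake | cls) times the unsmoothed prior."""
    n = class_counts[cls]
    ice = sum(1 for i, _, c in DATA if (i, c) == (ice_cream, cls))
    ck = sum(1 for _, k, c in DATA if (k, c) == (cake, cls))
    prior = Fraction(n, len(DATA))
    return smoothed(ice, n, len(ICE_CREAMS)) * smoothed(ck, n, len(CAKES)) * prior

for cls in ("yum", "good", "ok"):
    value = likelihood("vanilla", "vanilla", cls)
    print(cls, value, round(float(value), 3))
```

No likelihood is 0 any more, but with only six instances the added counts carry about as much weight as the data, so the majority class yum still comes out slightly ahead.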

Take Home Message

Naïve Bayes is a simple form of statistical machine learning.

It’s naïve in that it assumes that all attributes are independent.

In the training process, counts are kept that indicate the connection between attribute values and predicted class values.

0 counts interfere with making predictions, but smoothing can help address this difficulty.