Machine Learning & Data MiningMachine Learning & Data Mining CS/CNS/EE 155 Lecture 3: SVM, Logistic...

Transcript of Machine Learning & Data MiningMachine Learning & Data Mining CS/CNS/EE 155 Lecture 3: SVM, Logistic...

MachineLearning&DataMiningCS/CNS/EE155

Lecture3:SVM,LogisticRegression,NeuralNets,

EvaluationMetrics

Announcements

• HW1DueTomorrow–Willbegradedinaboutaweek

• HW2ReleasedTonight/Tomorrow– DueJan23rd at9pm

• RecitationThursday– LinearAlgebra(&VectorCalculus)– Annenberg105

Recap: BasicRecipe

• TrainingData:

• ModelClass:

• LossFunction:

• LearningObjective:

S = (xi, yi ){ }i=1N

f (x |w,b) = wT x − b

L(a,b) = (a− b)2

LinearModels

SquaredLoss

x ∈ RD

y ∈ −1,+1{ }

argminw,b

L yi, f (xi |w,b)( )i=1

N

∑

OptimizationProblem

Recap:Bias-VarianceTrade-off

0 20 40 60 80 100−1

−0.5

0

0.5

1

1.5

0 20 40 60 80 1000

0.5

1

1.5

0 20 40 60 80 100−1

−0.5

0

0.5

1

1.5

0 20 40 60 80 1000

0.5

1

1.5

0 20 40 60 80 100−1

−0.5

0

0.5

1

1.5

0 20 40 60 80 1000

0.5

1

1.5VarianceBias VarianceBias VarianceBias

Recap:CompletePipeline

S = (xi, yi ){ }i=1N

TrainingData

f (x |w,b) = wT x − b

ModelClass(es)

L(a,b) = (a− b)2

LossFunction

argminw,b

L yi, f (xi |w,b)( )i=1

N

∑

CrossValidation&ModelSelection Profit!

SGD!

Today

• BeyondBasicLinearModels– SupportVectorMachines– LogisticRegression– Feed-forwardNeuralNetworks– Differentwaystointerpretmodels

• DifferentEvaluationMetrics

Today

• BeyondBasicLinearModels– SupportVectorMachines– LogisticRegression– Feed-forwardNeuralNetworks– Differentwaystointerpretmodels

• DifferentEvaluationMetrics

-2 -1.5 -1 -0.5 0 0.5 1 1.5 2 2.5 30

1

2

3

4

5

6

7

8

9

0/1Loss

SquaredLoss

f(x)

Loss

Targety

argminw,b

L yi, f (xi |w,b)( )i=1

N

∑

∂w L yi, f (xi |w,b)( )i=1

N

∑

Howtocomputegradientfor0/1Loss?

Recap:0/1LossisIntractable

• 0/1Lossisflatordiscontinuouseverywhere

• VERYdifficulttooptimizeusinggradientdescent

• Solution:OptimizesurrogateLoss– Today:HingeLoss(…eventually)

SupportVectorMachinesakaMax-MarginClassifiers

Source:http://en.wikipedia.org/wiki/Support_vector_machine

WhichLineistheBestClassifier?

Source:http://en.wikipedia.org/wiki/Support_vector_machine

WhichLineistheBestClassifier?

“Margin”

• Lineisa1D,Planeis2D• Hyperplane ismanyD– IncludesLineandPlane

• Definedby(w,b)

• Distance:

• SignedDistance:

Recall:Hyperplane Distance

wT x − bw

wT x − bw

w

un-normalizedsigneddistance!

LinearModel=

b/|w|

14

Recall: Margin

γ =maxwmin(x,y)

y(wT x)w

HowtoMaximizeMargin?(AssumeLinearlySeparable)

Choosewthatmaximizes:

argmaxw,b

min(x,y)

y wT x − b( )w

"

#$$

%

&''

Margin

HowtoMaximizeMargin?(AssumeLinearlySeparable)

argmaxw,b

min(x,y)

y wT x − b( )w

"

#$$

%

&''

≡ argmaxw,b: w =1

min(x,y)

y wT x − b( )#$%

&'(

Supposeweinsteadenforce:

min(x,y)

y wT x − b( ) =1

= argminw,b

w ≡ argminw,b

w 2

Then:

HoldDenominatorFixed

HoldNumeratorFixed

ImageSource:http://en.wikipedia.org/wiki/Support_vector_machine

MaxMarginClassifier(SupportVectorMachine)

“LinearlySeparable”

Bettergeneralizationtounseen testexamples(beyondscopeofcourse*)(only trainingdataonmarginmatter)

“Margin”

argminw,b

12wTw ≡ 1

2w 2

∀i : yi wT xi − b( ) ≥1

*http://olivier.chapelle.cc/pub/span_lmc.pdf

argminw,b,ξ

12wTw+ C

Nξi

i∑

∀i : yi wT xi − b( ) ≥1−ξi

∀i :ξi ≥ 0

Soft-MarginSupportVectorMachine

ξi

“Margin”

SizeofMarginvs

SizeofMarginViolations(Ccontrolstrade-off)

ImageSource:http://en.wikipedia.org/wiki/Support_vector_machine

“Slack”

-2 -1.5 -1 -0.5 0 0.5 1 1.5 2 2.5 30

0.5

1

1.5

2

2.5

3

f(x)

Loss

0/1Loss

HingeLossargmin

w,b,ξ

12wTw+ C

Nξi

i∑

∀i : yi wT xi − b( ) ≥1−ξi

∀i :ξi ≥ 0

HingeLoss

L(yi, f (xi )) =max(0,1− yi f (xi )) = ξi

Regularization

Targety

-2 -1.5 -1 -0.5 0 0.5 1 1.5 2 2.5 30

0.5

1

1.5

2

2.5

3

3.5

4

4.5

5

0/1Loss

SquaredLoss

f(x)

Loss

Targety

HingeLoss

HingeLossvs SquaredLoss

Recall:PerceptronLearningAlgorithm(LinearClassificationModel)

• w1 =0,b1 =0• Fort=1….– Receiveexample(x,y)– Iff(x|wt,bt)=y• [wt+1, bt+1]=[wt, bt]

– Else• wt+1=wt +yx• bt+1 =bt - y

21

S = (xi, yi ){ }i=1N

y ∈ +1,−1{ }

TrainingSet:

Gothroughtrainingsetinarbitraryorder(e.g.,randomly)

f (x |w) = sign(wT x − b)

-2 -1.5 -1 -0.5 0 0.5 1 1.5 2 2.5 30

0.5

1

1.5

2

2.5

3

ComparisonwithPerceptron“Loss”

22

max 0,−yi f (xi |w,b){ }

yf(x)

Loss

max 0,1− yi f (xi |w,b){ }

Perceptron SVM/Hinge

SupportVectorMachine

• 2Interpretations

• Geometric– Marginvs MarginViolations

• LossMinimization– Modelcomplexityvs HingeLoss– (Willdiscussindepthnextlecture)

• Equivalent!

argminw,b,ξ

12wTw+ C

Nξi

i∑

∀i : yi wT xi − b( ) ≥1−ξi

∀i :ξi ≥ 0

-2 -1.5 -1 -0.5 0 0.5 1 1.5 2 2.5 30

0.5

1

1.5

2

2.5

3

CommentonOptimization

• HingeLossisnotsmooth– Notdifferentiable

• Howtooptimize?

• Stochastic(Sub-)GradientDescentstillworks!– Sub-gradientsdiscussednextlecture

argminw,b,ξ

12wTw+ C

Nξi

i∑

∀i : yi wT xi − b( ) ≥1−ξi

∀i :ξi ≥ 0

https://en.wikipedia.org/wiki/Subgradient_method

-2 -1.5 -1 -0.5 0 0.5 1 1.5 2 2.5 30

0.5

1

1.5

2

2.5

3

LogisticRegressionaka“Log-Linear”Models

LogisticRegression

P(y | x,w,b) = e12y wT x−b( )

e12y wT x−b( )

+ e−12y wT x−b( )

P(y | x,w,b) = 11+ e−y(w

T x−b)

“Log-Linear”Model

-5 -4 -3 -2 -1 0 1 2 3 4 50

0.1

0.2

0.3

0.4

0.5

0.6

0.7

0.8

0.9

1

y*f(x)P(y|x)

Alsoknownassigmoidfunction: σ (a) = ea

1+ ea

y ∈ −1,+1{ }

• Trainingset:

• MaximumLikelihood:– (Why?)

• Each(x,y)inSsampledindependently!– DiscussedfurtherinProbablyRecitation

MaximumLikelihoodTraining

S = (xi, yi ){ }i=1N

argmaxw,b

P(yi | xi,w,b)i∏

x ∈ RD

y ∈ −1,+1{ }

• SVMsoftenbetteratclassification– Assumingmarginexists…

• CalibratedProbabilities?

• IncreaseinSVMscore….– ...similarincreaseinP(y=+1|x)?– Notwellcalibrated!

• LogisticRegression!

WhyUseLogisticRegression? 0

0.02 0.04 0.06 0.08 0.1

0.12 0.14 0.16

COV_TYPE ADULT LETTER.P1 LETTER.P2 MEDIS SLAC

0 0.02 0.04 0.06 0.08 0.1 0.12 0.14 0.16

HS

0

0.2

0.4

0.6

0.8

1

Fra

ctio

n o

f P

osi

tives

0

0.2

0.4

0.6

0.8

1

Fra

ctio

n o

f P

osi

tives

0

0.2

0.4

0.6

0.8

1

0 0.2 0.4 0.6 0.8 1

Fra

ctio

n o

f P

osi

tives

Mean Predicted Value 0 0.2 0.4 0.6 0.8 1

Mean Predicted Value 0 0.2 0.4 0.6 0.8 1

Mean Predicted Value 0 0.2 0.4 0.6 0.8 1

Mean Predicted Value 0 0.2 0.4 0.6 0.8 1

Mean Predicted Value 0 0.2 0.4 0.6 0.8 1

Mean Predicted Value 0 0.2 0.4 0.6 0.8 1

0

0.2

0.4

0.6

0.8

1

Fra

ctio

n o

f P

osi

tives

Mean Predicted Value

Figure 1. Histograms of predicted values and reliability diagrams for boosted decision trees.

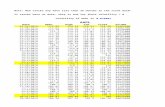

Table 4. Squared error and cross-entropy performance of learning algorithmsSQUARED ERROR CROSS-ENTROPY

ALGORITHM RAW PLATT ISOTONIC RAW PLATT ISOTONICBST-DT 0.3050 0.2650 0.2652 0.4810 0.3727 0.3745SVM 0.3303 0.2727 0.2719 0.5767 0.3988 0.3984BAG-DT 0.2818 0.2815 0.2799 0.4050 0.4082 0.3996ANN 0.2805 0.2821 0.2806 0.4143 0.4229 0.4120KNN 0.2861 0.2871 0.2839 0.4367 0.4300 0.4186BST-STMP 0.3659 0.3098 0.3096 0.6241 0.4713 0.4734DT 0.3211 0.3212 0.3145 0.5019 0.5091 0.4865

to have probability near 0.

The reliability plots in Figure 1 display roughly sigmoid-shaped reliability diagrams, motivating the use of a sig-moid to transform predictions into calibrated probabili-ties. The reliability plots in the middle row of the £gurealso show sigmoids £tted using Platt’s method. The reli-ability plots in the bottom of the £gure show the function£tted with Isotonic Regression.

To show how calibration transforms the predictions, weplot histograms and reliability diagrams for the sevenproblem for boosted trees after 1024 steps of boosting,after Platt Calibration (Figure 2) and after Isotonic Re-gression (Figure 3). The reliability diagrams for IsotonicRegression are very similar to the ones for Platt Scal-ing, so we omit them in the interest of space. The £guresshow that calibration undoes the shift in probability masscaused by boosting: after calibration many more caseshave predicted probabilities near 0 and 1. The reliabil-ity diagrams are closer to the diagonal, and the S shapecharacteristic of boosting’s predictions is gone. On each

problem, transforming the predictions using either PlattScaling or Isotonic Regression yields a signi£cant im-provement in the quality of the predicted probabilities,leading to much lower squared error and cross-entropy.The main difference between Isotonic Regression andPlatt Scaling for boosting can be seen when comparingthe histograms in the two £gures. Because Isotonic Re-gression generates a piecewise constant function, the his-tograms are quite coarse, while the histograms generatedby Platt Scaling are smooth and easier to interpret.

Table 4 compares the RMS and MXE performance of thelearning methods before and after calibration. Figure 4shows the squared error results from Table 4 graphically.

After calibration with Platt Scaling or Isotonic Regres-sion, boosted decision trees have better squared error andcross-entropy than the other learning methods. The nextbest methods are SVMs, bagged decision trees and neu-ral nets. While Platt Scaling and Isotonic Regression sig-ni£cantly improve the performance of the SVM models,they have little or no effect on the performance of bagged

ImageSource:http://machinelearning.org/proceedings/icml2005/papers/079_GoodProbabilities_NiculescuMizilCaruana.pdf

*FigureaboveisforBoostedDecisionTrees(SVMshavesimilareffect)

f(x)

P(y=+1)

LogLoss

P(y | x,w,b) = e12y wT x−b( )

e12y wT x−b( )

+ e−12y wT x−b( )

=e12yf (x|w,b)

e12yf (x|w,b)

+ e−12yf (x|w,b)

argmaxw,b

P(yi | xi,w,b)i∏ = argmin

w,b− lnP(yi | xi,w,b)

i∑

LogLoss

Solveusing(Stoch.)GradientDescent

L(y, f (x)) = − ln e12yf (x )

e12yf (x )

+ e−12yf (x )

"

#

$$$

%

&

'''

-2 -1.5 -1 -0.5 0 0.5 1 1.5 2 2.5 30

0.5

1

1.5

2

2.5

3

LogLossvs HingeLoss

L(y, f (x)) = − ln e12yf (x )

e12yf (x )

+ e−12yf (x )

"

#

$$$

%

&

'''

L(y, f (x)) =max(0,1− yf (x))

f(x)

Loss

0/1Loss

HingeLoss

LogLoss

Log-LossGradient(ForOneExample)

∂w − lnP(yi | xi ) = −∂w12yi f (xi |w,b)− ln e

12yi f (xi |w,b)

+ e−

12yi f (xi |w,b)#

$%

&

'(

#

$%%

&

'((

= − 12yixi +∂w ln e

12yi f (xi |w,b)

+ e−

12yi f (xi |w,b)#

$%

&

'(

= − 12yixi +

1

e12yi f (xi |w,b)

+ e−

12yi f (xi |w,b)

∂w e12yi f (xi |w,b)

+ e−

12yi f (xi |w,b)#

$%

&

'(

= −1+ 1

e12yi f (xi |w,b)

+ e−

12yi f (xi |w,b)

e12yi f (xi |w,b)

− e−

12yi f (xi |w,b)#

$%

&

'(

#

$

%%

&

'

((

12yixi

= −1+P(yi | xi )−P(−yi | xi )( ) 12yixi

= −P(−yi | xi )yixi = − 1−P(yi | xi )( ) yixiP(y | x,w,b) = e

12yf (x|w,b)

e12yf (x|w,b)

+ e−12yf (x|w,b)

LogisticRegression

• TwoInterpretations

• MaximizingLikelihood

• MinimizingLogLoss

• Equivalent!

Logis?c(Regression(

P(y | x,w,b) = ey wT x−b( )

ey wT x−b( ) + e

−y wT x−b( )

P(y | x,w,b)∝ ey wT x−b( ) ≡ ey* f (x|w,b)

“LogNLinear”&Model&

-5 -4 -3 -2 -1 0 1 2 3 4 50

0.1

0.2

0.3

0.4

0.5

0.6

0.7

0.8

0.9

1

y*f(x)(

P(y|x)(

-2 -1.5 -1 -0.5 0 0.5 1 1.5 2 2.5 30

0.5

1

1.5

2

2.5

3

Feed-ForwardNeuralNetworksakaNotQuiteDeepLearning

1LayerNeuralNetwork

• 1Neuron– Takesinputx– Outputsy

• ~LogisticRegression!– SolveviaGradientDescent

Σx y

“Neuron”

f(x|w,b)=wTx – b=w1*x1 +w2*x2 +w3*x3– b

y=σ(f(x))

-5 -4 -3 -2 -1 0 1 2 3 4 50

0.1

0.2

0.3

0.4

0.5

0.6

0.7

0.8

0.9

1

sigmoidtanhrectilinear

2LayerNeuralNetwork

• 2LayersofNeurons– 1st Layertakesinputx– 2nd Layertakesoutputof1st layer

• Canapproximatearbitraryfunctions– Providedhiddenlayerislargeenough– “fat”2-LayerNetwork

Σx y

ΣΣ

HiddenLayer

Non-Linear!

Aside:DeepNeuralNetworks

• WhypreferDeepovera“Fat”2-Layer?– Compactmodel

• (exponentiallylarge“fat”model)– Easiertotrain?– Discussedfurtherindeeplearninglectures

ImageSource:http://blog.peltarion.com/2014/06/22/deep-learning-and-deep-neural-networks-in-synapse/

TrainingNeuralNetworks

• GradientDescent!– EvenforDeepNetworks*

• Parameters:– (w11,b11,w12,b12,w2,b2)

Σx y

ΣΣ

*morecomplicated

∂w2 L yi,σ 2( )i=1

N

∑ = ∂w2L yi,σ 2( )i=1

N

∑ = ∂σ 2L yi,σ 2( )i=1

N

∑ ∂w2σ 2 = ∂σ 2L yi,σ 2( )i=1

N

∑ ∂ f2σ 2∂w2 f2

f(x|w,b)=wTx – b y=σ(f(x))

∂w1m L yi,σ 2( )i=1

N

∑ = ∂σ 2L yi,σ 2( )i=1

N

∑ ∂ f2σ 2∂w1 f2 = ∂σ 2L yi,σ 2( )

i=1

N

∑ ∂ f2σ 2∂σ1m f2∂ f1m

σ1m∂w1m f1m

Backpropagation =GradientDescent(lotsofchainrules)

StorySoFar

• DifferentLossFunctions– HingeLoss– LogLoss– Canbederivedfromdifferentinterpretations

• Non-LinearModelClasses– NeuralNets– Composablewithdifferentlossfunctions

• Noclosed-formsolutionfortraining– Mustusesomeformofgradientdescent

Today

• BeyondBasicLinearModels– SupportVectorMachines– LogisticRegression– Feed-forwardNeuralNetworks– Differentwaystointerpretmodels

• DifferentEvaluationMetrics

Evaluation

• 0/1Loss(Classification)

• SquaredLoss(Regression)

• AnythingElse?

Example:CancerPrediction

LossFunction HasCancer Doesn’t HaveCancer

Predicts Cancer Low MediumPredictsNo Cancer OMGPanic! LowM

odel

Patient

• ValuePositives&NegativesDifferently– Caremuchmoreaboutpositives

• “CostMatrix”– 0/1LossisSpecialCase

OptimizingforCost-SensitiveLoss

• Thereisnouniversallyacceptedway.

SimplestApproach(CostBalancing):

LossFunction HasCancer Doesn’t HaveCancer

Predicts Cancer 0 1PredictsNo Cancer 1000 0

argminw,b

1000 L yi, f (xi |w,b)( )i:yi=1∑ + L yi, f (xi |w,b)( )

i:yi=−1∑

#

$%%

&

'((

Precision&Recall

• Precision =TP/(TP+FP)• Recall =TP/(TP+FN)

• TP=TruePositive,TN=TrueNegative• FP=FalsePositive,FN=FalseNegative

Counts HasCancer Doesn’t HaveCancerPredicts Cancer 20(TP) 30(FP)PredictsNo Cancer 5(FN) 70(TN)M

odel

Patient

CareMoreAboutPositives!

F1 =2/(1/P+1/R)

ImageSource:http://pmtk3.googlecode.com/svn-history/r785/trunk/docs/demos/Decision_theory/PRhand.html

Example:SearchQuery

• Rankwebpagesbyrelevance

• PredictaRanking(ofwebpages)– Usersonlylookattop4– Sortbyf(x|w,b)

• Precision@4=1/2– Fractionoftop4relevant

• Recall@4=2/3– Fractionofrelevantintop4

• TopofRankingOnly!

RankingMeasures

ImageSource:http://pmtk3.googlecode.com/svn-history/r785/trunk/docs/demos/Decision_theory/PRhand.html

Top4

PairwisePreferences

2PairwiseDisagreements4PairwiseAgreements

ROC-Area

• ROC-Area– AreaunderROCCurve– Fractionpairwiseagreements

• Example:

ImageSource:http://www.medcalc.org/manual/roc-curves.php

ROC-Area:0.5#PairwisePreferences=6#Agreements=3

AveragePrecision

• AveragePrecision– AreaunderP-RCurve– P@Kforeachpositive

• Example:

ImageSource:http://pmtk3.googlecode.com/svn-history/r785/trunk/docs/demos/Decision_theory/PRhand.html

76.053

32

11

31

≈⎟⎠

⎞⎜⎝

⎛ ++⋅AP:

PrecisionatRankLocationofEachPositiveExample

ROC-AreaversusAveragePrecision

• ROC-AreaCaresabouteverypairwisepreferenceequally

• AveragePrecisioncaresmoreabouttopofranking

ROC-Area:0.5AveragePrecision:0.76

ROC-Area:0.5AveragePrecision:0.64

OtherChallenges

http://slazebni.cs.illinois.edu/publications/iccv15_active.pdf

Figure 6. Example sequences observed by the agent and the ac-tions selected to focus objects. Regions are warped in the sameway as they are fed to the CNN. Actions keep the object in thecenter of the box. More examples in the supplementary material.Last row: example Inhibition of Return marks placed during test.

ery single object without missing any instance. Notice thatmore candidates do not improve recall as much as othermethods do, so we hypothesize that fixing overall recall willimprove early recall even more.

To illustrate this result further, we plot the distribution ofcorrectly detected objects according to the number of stepsnecessary to localize them in Figure 5. The distribution hasa long tail, with 83% of detections requiring less than 50steps to be obtained, and an average of 25.6. A more robuststatistic for long-tailed distributions is the median, whichin our experiments is just 11 steps, indicating that most ofthe correct detections happen around that number of steps.Also, the agent is able to localize 11% of the objects imme-diately without processing more regions, because they arebig instances that occupy most of the image.

5.3. Qualitative Evaluation

We present a number of example sequences of regionsthat the agent attended to localize objects. Figure 7 showstwo example scenes with multiple objects, and presentsgreen boxes where a correct detection was explicitly markedby the agent. The plot to the left presents the evolutionof IoU as the agent transforms the bounding box. Theseplots show that correct detections are usually obtained witha small number of steps increasing IoU with the groundtruth rapidly. Points of the plots that oscillate below theminimum accepted threshold (0.5) indicate periods of thesearch process that were difficult or confusing to the agent.

Figure 6 shows sequences of attended regions as seen bythe agent, as well as the actions selected in each step. No-tice that the actions chosen attempt to keep the object in thecenter of the box, and also that the final object appears tohave normalized scale and aspect ratio. The top two exam-

Figure 7. Examples of multiple objects localized by the agent in asingle scene. Numbers in yellow indicate the order in which eachinstance was localized. Notice that IoU between the attended re-gion and ground truth increases quickly before the trigger is used.

4

1

Time (actions)

Inte

rsec

tion

Ove

r Uni

on

0.5

1.0

0.0 15 33 100 200

6 2

3 5

1 2 3 4 5 6

123 147

correct error missed

Figure 8. Examples of images with common mistakes that include:duplicated detections due to objects not fully covered by the IoRmark, and missed objects due to size or other difficult patterns.

ples also show the IoR mark that is placed in the environ-ment after the agent triggers a detection. The reader can findmore examples and videos in the supplementary material.

5.4. Error Modes

We also evaluate performance using the diagnostic toolproposed by Hoiem et al. [14]. In summary, object local-ization is the most frequent error of our system, and it issensitive to object size. Here we include the report of sensi-tivity to characteristics of objects in Figure 9, and compareto the R-CNN system. Our system is more sensitive to thesize of objects than any other characteristic, which is mainlyexplained by the difficulty of the agent to attend cluttered re-

• “Correct”ifoverlapislargeenough

• Howtodefinelargeenough?

• Duplicatedetections?

• Whatislearningobjective?

• Similarchallengesinvideos:• E.g.,temporalboundingboxaround“running”activity• Duplicatepredictions:breakintotwoseparaterunningactivities

• Otherexamples:heart-ratemonitoring

Summary:EvaluationMeasures

• DifferentEvaluationsMeasures– DifferentScenarios

• Largefocusongettingpositives– Largecostofmis-predictingcancer– Relevantwebpagesarerare• Aka“ClassImbalance”

• Otherchallenges:– localizationincontinuousdomain

NextLecture

• Regularization

• Lasso

• Thursday:– RecitationonMatrixLinearAlgebra(&Calculus)

![courses.cs.washington.edu · –mkdir hw1/{old,new,test} – hw1/old, hw1/new, hw1/test – ~bob – [abc] [a-c]](https://static.fdocuments.us/doc/165x107/60616dbea5b58226b1373df9/amkdir-hw1oldnewtest-a-hw1old-hw1new-hw1test-a-bob-a-abc-a-c.jpg)

![[Machine Learning] Kỹ thuật SVM](https://static.fdocuments.us/doc/165x107/55cf9905550346d0339b135d/machine-learning-ky-thuat-svm.jpg)