Lecture 3 -...

Lecture 3: Big-O notation, more recurrences!!

Announcements!

• HW1 is posted! (Due Friday)

• See Piazza for a list of HW clarifications

• First recitation section was this morning, there’s another tomorrow (same material). (These are optional, it’s a chance for TAs to go over more examples than we can get to in class).

FAQ

• How rigorous do I need to be on my homework?

• See our example HW solution online

• In general, we are shooting for: You should be able to give a friend your solution and they should be able to turn it into a rigorous proof without much thought.

• This is a delicate line to walk, and there’s no easy answer. Think of it more like writing a good essay than “correctly” solving a math problem.

• What’s with the array bounds in pseudocode?

• SORRY! I’m trying to match CLRS and this causes me to make mistakes sometimes. In this class, I’m trying to do:

• Arrays are 1-indexed

• A[1..n] is all entries between 1 and n, inclusive

• I will also use A[1:n] (Python-style notation) to mean the same thing (which is not what it means in Python).

• Please call me out when I mess up.
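For anyone translating the pseudocode into real Python, here is a minimal sketch of how the 1-indexed, inclusive convention maps onto Python's 0-indexed, end-exclusive slices. The helper name `pseudo_slice` is hypothetical and not part of the course materials.

```python
def pseudo_slice(A, i, j):
    """Return the entries A[i..j] of the pseudocode convention:
    1-indexed, with both endpoints included."""
    if i < 1 or j > len(A):
        raise IndexError("pseudocode indices must lie in 1..n")
    # Python slices are 0-indexed and exclude the right endpoint,
    # so shift the left endpoint down by one and keep the right one.
    return A[i - 1:j]


A = [10, 20, 30, 40, 50]
print(pseudo_slice(A, 1, len(A)))  # the whole array, A[1..n]
print(pseudo_slice(A, 2, 4))       # entries 2, 3 and 4
print(A[1:len(A)])                 # literal Python slice: drops the first entry
```

The last line shows why the two notations should not be confused: a literal Python `A[1:n]` skips the first element.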

Last time….

• Sorting: InsertionSort and MergeSort

• Analyzing correctness of iterative + recursive algs

• Via “loop invariant” and induction

• Analyzing running time of recursive algorithms

• By writing out a tree and adding up all the work done.

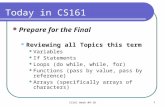

Today

• How do we measure the runtime of an algorithm?

• Worst-case analysis

• Asymptotic Analysis

• Recurrence relations:

• Integer Multiplication and MergeSort again

• The “Master Method” for solving recurrences.

Recall from last time…

• We analyzed INSERTIONSORT and MERGESORT.

• They were both correct!

• INSERTIONSORT took time about n^2

• MERGESORT took time about n log(n).

[Plot: n log(n) and n^2 for n from 0 to 1000; vertical axis from 0 to 1,000,000]

n log(n) is way better!!!

A few reasons to be grumpy

• Sorting 1 2 3 4 5 6 7 8 should take zero steps… why n log(n)??

• What’s with this T(MERGE) < 2 + 4n <= 6n?

Analysis

T(n) = T(n/2) + T(n/2) + T(MERGE) = 2T(n/2) + 6n

T(n) = time to run MERGESORT on a list of size n

Let’s say T(MERGE of size n/2) ≤ 6n operations

We will see later how to analyze recurrence relations like these automagically… but today we’ll do it from first principles.

T(MERGE two lists of size n/2) is the time to do:

• 3 variable assignments (counters ← 1)

• n comparisons

• n more assignments

• 2n counter increments

So that’s 2T(assign) + nT(compare) + nT(assign) + 2nT(increment), or 4n + 2 operations

Or 4n + 3…

[Characters on this slide: Plucky the pedantic penguin and Lucky the lackadaisical lemur]

This is called a recurrence relation: it describes the running time of a problem of size n in terms of the running time of smaller problems.
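To get a feel for what the recurrence T(n) = 2T(n/2) + 6n produces, it can simply be evaluated. This is a minimal sketch, not the in-class derivation: the base case T(1) = 1 is an assumption made here, and the closed form 6n log2(n) + n is what unrolling the recurrence suggests under that assumption.

```python
from functools import lru_cache
from math import log2


@lru_cache(maxsize=None)
def T(n):
    """Evaluate T(n) = 2*T(n/2) + 6n for n a power of two.

    The base case T(1) = 1 is an assumption made for this sketch.
    """
    if n == 1:
        return 1
    return 2 * T(n // 2) + 6 * n


for k in range(1, 11):
    n = 2 ** k
    closed_form = 6 * n * log2(n) + n  # guess obtained by unrolling the recurrence
    print(n, T(n), closed_form)
```

The two columns agree for every power of two tried, which is consistent with the "about n log(n)" running time claimed for MERGESORT.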

A few reasons to be grumpy

• Sorting 1 2 3 4 5 6 7 8 should take zero steps… why n log(n)??

• What’s with this T(MERGE) < 2 + 4n <= 6n?

• The “2 + 4n” operations thing doesn’t even make sense. Different operations take different amounts of time!

• We bounded 2 + 4n <= 6n. I guess that’s true, but that seems pretty dumb.

How we will deal with grumpiness

• Take a deep breath…

• Worst case analysis

• Asymptotic notation

Worst-case analysis

• In this class, we will focus on worst-case analysis

• Pros: very strong guarantee

• Cons: very strong guarantee

Sorting a sorted list (1 2 3 4 5 6 7 8) should be fast!!

[Figure: the algorithm designer says “Here is my algorithm!” and presents “Algorithm: Do the thing. Do the stuff. Return the answer.” The answer that comes back is “Here is an input!”]
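The gap between a friendly input and a worst-case input can be seen by counting comparisons. The sketch below uses a textbook insertion sort written for this illustration (not necessarily the exact pseudocode from class) and compares an already sorted list with a reverse-sorted one.

```python
def insertion_sort_comparisons(items):
    """Sort a copy of `items` with insertion sort and return the number
    of element comparisons performed."""
    A = list(items)
    comparisons = 0
    for i in range(1, len(A)):
        current = A[i]
        j = i - 1
        while j >= 0:
            comparisons += 1          # compare current against A[j]
            if A[j] <= current:
                break                 # current is already in place
            A[j + 1] = A[j]           # shift the larger entry right
            j -= 1
        A[j + 1] = current
    return comparisons


n = 8
print(insertion_sort_comparisons(range(1, n + 1)))   # already sorted input
print(insertion_sort_comparisons(range(n, 0, -1)))   # reverse-sorted input
```

On the sorted list the count grows linearly in n, while on the reverse-sorted list it grows like n^2. Worst-case analysis reports the second number.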

Big-O notation

• What do we mean when we measure runtime?

• We probably care about wall time: how long does it take to solve the problem, in seconds or minutes or hours?

• This is heavily dependent on the programming language, architecture, etc.

• These things are very important, but are not the point of this class.

• We want a way to talk about the running time of an algorithm, independent of these considerations.

How long does an operation take? Why are we being so sloppy about that “6”?

Remember this slide?

| n | n log(n) | n^2 |
|---|---|---|
| 8 | 24 | 64 |
| 16 | 64 | 256 |
| 32 | 160 | 1024 |
| 64 | 384 | 4096 |
| 128 | 896 | 16384 |
| 256 | 2048 | 65536 |
| 512 | 4608 | 262144 |
| 1024 | 10240 | 1048576 |

[Plot: n log(n) and n^2 for n from 0 to 1200; vertical axis from 0 to 1,000,000]

Change n log(n) to 5 n log(n)….

| n | 5 n log(n) | n^2 |
|---|---|---|
| 8 | 120 | 64 |
| 16 | 320 | 256 |
| 32 | 800 | 1024 |
| 64 | 1920 | 4096 |
| 128 | 4480 | 16384 |
| 256 | 10240 | 65536 |
| 512 | 23040 | 262144 |
| 1024 | 51200 | 1048576 |

[Plot: 5 n log(n) and n^2 for n from 0 to 1200; vertical axis from 0 to 1,000,000]
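The rows above can be regenerated with a few lines of code, which also makes it easy to see where n^2 overtakes 5 n log(n). This is a sketch that assumes the logarithm is base 2 (the table values match that choice); the function names are made up for this example.

```python
from math import log2


def table_row(n, constant=1):
    """Return (n, constant * n * log2(n), n^2); exact when n is a power of two."""
    return n, round(constant * n * log2(n)), n * n


for n in [8, 16, 32, 64, 128, 256, 512, 1024]:
    print(table_row(n, constant=5))


def last_n_where_quadratic_wins(constant, limit=10_000):
    """Largest n in [2, limit] with n^2 <= constant * n * log2(n), or None."""
    winners = [n for n in range(2, limit + 1)
               if n * n <= constant * n * log2(n)]
    return max(winners) if winners else None


print(last_n_where_quadratic_wins(5))
```

Changing the constant moves the crossover point, but past it n^2 is always the larger of the two.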

Asymptotic Analysis

How does the running time scale as n gets large?

One algorithm is “faster” than another if its runtime grows more “slowly” as n gets large.

Pros:

• Abstracts away from hardware- and language-specific issues.

• Makes algorithm analysis much more tractable.

Cons:

• Only makes sense if n is large (compared to the constant factors).

2100000000000000 n is “better” than n^2 ?!?!

This is especially relevant now, as data get bigger and bigger and bigger…

This will provide a formal way of saying that n^2 is “worse” than 100 n log(n).

• Quick reminders:

• ∃: “There exists”

• ∀: “For all”

• Example: ∀ students in CS161, ∃ an algorithms problem that really excites the student.

• Much stronger statement: ∃ an algorithms problem so that, ∀ students in CS161, the student is excited by the problem.

• We’re going to formally define an upper bound:

• “T(n) grows no faster than f(n)”

Now for some definitions…

O(…) means an upper bound

(pronounced “big-oh of …” or sometimes “oh of …”)

• Let T(n), f(n) be functions of positive integers.

• Think of T(n) as being a runtime: positive and increasing in n.

• We say “T(n) is O(f(n))” if f(n) grows at least as fast as T(n) as n gets large.

• Formally,

$$T(n) = O(f(n)) \iff \exists\, c, n_0 > 0 \ \text{s.t.}\ \forall n \ge n_0,\ 0 \le T(n) \le c \cdot f(n)$$

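Parsing that…

$$T(n) = O(f(n)) \iff \exists\, c, n_0 > 0 \ \text{s.t.}\ \forall n \ge n_0,\ 0 \le T(n) \le c \cdot f(n)$$

[Figure: T(n), f(n), and c·f(n) plotted against n, with n_0 marked on the n axis]

T(n) = O(f(n)) means: Eventually, (for large enough n) something that grows like f(n) is always bigger than T(n).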
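The definition can be turned into a small sanity check: given candidate witnesses c and n_0, test the inequality 0 ≤ T(n) ≤ c·f(n) on a finite range. This is only a sketch; the function name `holds_on_range` and the cutoff `n_max` are assumptions of this example, and passing the check is evidence, not a proof for all n.

```python
def holds_on_range(T, f, c, n0, n_max=10_000):
    """Check 0 <= T(n) <= c * f(n) for every integer n with n0 <= n <= n_max.

    A True result is only evidence on a finite range, not a proof for all n.
    """
    return all(0 <= T(n) <= c * f(n) for n in range(n0, n_max + 1))


# T(n) = 4n + 2 against f(n) = n: the witnesses c = 6, n0 = 1 pass the check.
print(holds_on_range(lambda n: 4 * n + 2, lambda n: n, c=6, n0=1))

# T(n) = n^2 against f(n) = n with c = 100: fails as soon as n exceeds 100.
print(holds_on_range(lambda n: n * n, lambda n: n, c=100, n0=1))
```

A failed check rules out that particular pair (c, n_0) only. Showing that no pair works needs an argument, not a search.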

Example 1

• T(n) = n, f(n) = n^2.

• T(n) = O(f(n))

$$T(n) = O(f(n)) \iff \exists\, c, n_0 > 0 \ \text{s.t.}\ \forall n \ge n_0,\ 0 \le T(n) \le c \cdot f(n)$$

c = 1

n_0 = 1

[Figure: T(n) and c·f(n) = f(n) plotted against n]

(formal proof on board)
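One possible write-up of the board proof, as a sketch using the witnesses c = 1 and n_0 = 1 given on the slide:

$$
\forall n \ge 1:\quad 0 \le T(n) = n = n \cdot 1 \le n \cdot n = 1 \cdot n^2 = c \cdot f(n).
$$

The only place where n ≥ n_0 is used is the step 1 ≤ n, so the definition is satisfied with c = 1 and n_0 = 1.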

Examples 2 and 3

• All degree k polynomials with positive leading coefficients are O(n^k).

• For any k ≥ 1, n^k is not O(n^(k-1)).

(On the board)
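A sketch of how both board arguments might go. The coefficient names α_i are notation introduced here, not taken from the slides. For Example 2, write the polynomial as p(n) = α_k n^k + … + α_1 n + α_0 with α_k > 0. Using n^i ≤ n^k for n ≥ 1:

$$
p(n) \le \sum_{i=0}^{k} |\alpha_i|\, n^i \le \Big(\sum_{i=0}^{k} |\alpha_i|\Big) n^k,
\qquad
p(n) \ge n^{k-1}\Big(\alpha_k\, n - \sum_{i<k} |\alpha_i|\Big) \ge 0
\quad \text{for } n \ge \max\Big(1, \frac{1}{\alpha_k}\sum_{i<k} |\alpha_i|\Big).
$$

So c = Σ|α_i| and any integer n_0 at least as large as that maximum satisfy the definition. For Example 3, suppose for contradiction that some c, n_0 > 0 work:

$$
n^k \le c \cdot n^{k-1} \ \ \forall n \ge n_0
\;\Longrightarrow\;
n \le c \ \ \forall n \ge n_0,
$$

which fails for n = max(n_0, ⌈c⌉ + 1). Hence no such c and n_0 exist.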

Take-away from examples

• To prove T(n) = O(f(n)), you have to come up with c and n_0 so that the definition is satisfied.

• To prove T(n) is NOT O(f(n)), one way is by contradiction:

• Suppose that someone gives you a c and an n_0 so that the definition is satisfied.

• Show that this someone must be lying to you by deriving a contradiction.

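O(…) means an upper bound, and Ω(…) means a lower bound

• We say “T(n) is Ω(f(n))” if f(n) grows at most as fast as T(n) as n gets large.

• Formally,

$$T(n) = \Omega(f(n)) \iff \exists\, c, n_0 > 0 \ \text{s.t.}\ \forall n \ge n_0,\ 0 \le c \cdot f(n) \le T(n)$$

Switched these!! (c·f(n) and T(n) trade places compared with the definition of O.)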

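Parsing that…

$$T(n) = \Omega(f(n)) \iff \exists\, c, n_0 > 0 \ \text{s.t.}\ \forall n \ge n_0,\ 0 \le c \cdot f(n) \le T(n)$$

[Figure: T(n), c·f(n), and f(n) plotted against n, with n_0 marked on the n axis]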

Θ(…) means both!

• We say “T(n) is Θ(f(n))” if:

T(n) = O(f(n))

-AND-

T(n) = Ω(f(n))

Yet more examples

• n^3 - n^2 + 3n = O(n^3)

• n^3 - n^2 + 3n = Ω(n^3)

• n^3 - n^2 + 3n = Θ(n^3)

• 3^n is not O(2^n)

• n log(n) = Ω(n)

• n log(n) is not Θ(n).

Fun exercise: check all of these carefully!!
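Before attempting the proofs, it can help to look at the ratio T(n)/f(n) numerically. The sketch below is only a heuristic (the helper `ratios` is made up for this example, and log is taken base 2): a ratio that stays between two positive constants is consistent with Θ, while a ratio that keeps growing suggests T(n) is not O(f(n)).

```python
from math import log2


def ratios(T, f, ns):
    """Return T(n) / f(n) for each n in ns."""
    return [T(n) / f(n) for n in ns]


ns = [2 ** k for k in range(1, 8)]

# Ratio stays bounded above and away from zero: consistent with Theta(n^3).
print(ratios(lambda n: n**3 - n**2 + 3 * n, lambda n: n**3, ns))

# Ratio (3/2)^n keeps growing: consistent with 3^n not being O(2^n).
print(ratios(lambda n: 3**n, lambda n: 2**n, ns))

# Ratio log2(n) keeps growing: consistent with n log(n) being Omega(n) but not O(n).
print(ratios(lambda n: n * log2(n), lambda n: n, ns))
```

None of this replaces finding explicit c and n_0, or deriving a contradiction, as the definitions require.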

We’ll be using lots of asymptotic notation from here on out

• This makes both Plucky and Lucky happy.

• Plucky the Pedantic Penguin is happy because there is a precise definition.

• Lucky the Lackadaisical Lemur is happy because we don’t have to pay close attention to all those pesky constant factors like “4” or “6”.

• But we should always be careful not to abuse it.

• In the course, (almost) every algorithm we see will be actually practical, without needing to take n ≥ n_0 = 2^(…).

This is my happy face!

Questions about asymptotic notation?

Back to recurrence relations

We’ve seen three recursive algorithms so far.

• Needlessly recursive integer multiplication

• T(n) = 4T(n/2) + O(n)

• T(n) = O(n^2)

• Karatsuba integer multiplication

• T(n) = 3T(n/2) + O(n)

• T(n) = O(n^(log_2(3))) ≈ O(n^1.6)

• MergeSort

• T(n) = 2T(n/2) + O(n)

• T(n) = O(n log(n))

T(n) = time to solve a problem of size n.

What’s the pattern?!?!?!?!

(Reminders on board)

The master theorem

• A formula that solves recurrences when all of the sub-problems are the same size.

• (We’ll see an example Wednesday when not all problems are the same size).

Jedi master Yoda: A useful formula it is. Know why it works you should.

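The master theorem

• Suppose $T(n) = a \cdot T\left(\frac{n}{b}\right) + O(n^d)$. Then

$$T(n) = \begin{cases} O(n^d \log n) & \text{if } a = b^d \\ O(n^d) & \text{if } a < b^d \\ O(n^{\log_b a}) & \text{if } a > b^d \end{cases}$$

Many symbols those are….

Three parameters:

a: number of subproblems

b: factor by which input size shrinks

d: need to do n^d work to create all the subproblems and combine their solutions.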
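Because the theorem is a case analysis on how a compares with b^d, it is easy to mechanize. The sketch below assumes the standard three-case statement (O(n^d log n) when a = b^d, O(n^d) when a < b^d, and O(n^(log_b a)) when a > b^d). The function name `master_theorem` and its string output format are choices made for this example.

```python
from math import isclose, log


def master_theorem(a, b, d):
    """Return a string describing the bound for T(n) = a*T(n/b) + O(n^d).

    Assumes a >= 1, b > 1 and d >= 0.
    """
    if a < 1 or b <= 1 or d < 0:
        raise ValueError("expected a >= 1, b > 1, d >= 0")
    threshold = b ** d
    if isclose(a, threshold):
        return f"O(n^{d} log n)"
    if a < threshold:
        return f"O(n^{d})"
    return f"O(n^{log(a, b):.3f})"   # exponent is log_b(a)


print(master_theorem(4, 2, 1))  # needlessly recursive integer multiplication
print(master_theorem(3, 2, 1))  # Karatsuba
print(master_theorem(2, 2, 1))  # MergeSort
```

The three calls correspond to the three recursive algorithms seen so far, with a, b, d read off from their recurrences.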

Examples (details on board)

$$T(n) = a \cdot T\left(\frac{n}{b}\right) + O(n^d)$$

• Needlessly recursive integer mult.

• T(n) = 4T(n/2) + O(n)

• T(n) = O(n^2)

• a = 4, b = 2, d = 1, so a > b^d ✓

• Karatsuba integer multiplication

• T(n) = 3T(n/2) + O(n)

• T(n) = O(n^(log_2(3))) ≈ O(n^1.6)

• a = 3, b = 2, d = 1, so a > b^d ✓

• MergeSort

• T(n) = 2T(n/2) + O(n)

• T(n) = O(n log(n))

• a = 2, b = 2, d = 1, so a = b^d ✓

Proof of the master theorem

• We’ll do the same recursion tree thing we did for MergeSort, but be more careful.

• Suppose that $T(n) = a \cdot T\left(\frac{n}{b}\right) + c \cdot n^d$.

Plucky the Pedantic Penguin: Hang on! The hypothesis of the Master Theorem was that the extra work at each level was O(n^d). That’s NOT the same as work <= c·n^d for some constant c.

Lucky the lackadaisical lemur: That’s true… we’ll actually prove a weaker statement that uses this hypothesis instead of the hypothesis that $T(n) = a \cdot T\left(\frac{n}{b}\right) + O(n^d)$. It’s a good exercise to try to make this proof work rigorously with the O() notation.

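Recursion tree

$$T(n) = a \cdot T\left(\frac{n}{b}\right) + c \cdot n^d$$

[Figure: recursion tree. One problem of size n at the top splits into a subproblems of size n/b, each of which splits into a subproblems of size n/b^2, and so on through problems of size n/b^t at level t, down to problems of size 1.]

| Level | # problems | Size of each problem | Amount of work at this level |
|---|---|---|---|
| 0 | 1 | n | $c \cdot n^d$ |
| 1 | a | n/b | $a \cdot c \left(\frac{n}{b}\right)^d$ |
| 2 | a^2 | n/b^2 | $a^2 \cdot c \left(\frac{n}{b^2}\right)^d$ |
| … | … | … | … |
| t | a^t | n/b^t | $a^t \cdot c \left(\frac{n}{b^t}\right)^d$ |
| … | … | … | … |
| log_b(n) | a^(log_b(n)) | 1 | $a^{\log_b(n)} \cdot c$ |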
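The claim behind the recursion tree is that adding up the work level by level gives the same total as running the recurrence. A minimal sketch that checks this numerically, assuming n is a power of b and a base case T(1) = c (both assumptions of this example, as are the function names):

```python
def recurrence(n, a, b, d, c=1):
    """T(n) = a*T(n/b) + c*n^d with T(1) = c, for n a power of b."""
    if n == 1:
        return c
    return a * recurrence(n // b, a, b, d, c) + c * n ** d


def level_sum(n, a, b, d, c=1):
    """Add up a^t * c * (n / b^t)^d over levels t = 0, ..., log_b(n)."""
    total, t, size = 0, 0, n
    while size >= 1:
        total += a ** t * c * size ** d   # a^t problems, each of this size
        size //= b
        t += 1
    return total


for a, b, d in [(4, 2, 1), (3, 2, 1), (2, 2, 1)]:
    n = 2 ** 10
    print((a, b, d), recurrence(n, a, b, d), level_sum(n, a, b, d))
```

For each of the three example recurrences the two numbers coincide, matching the row-by-row accounting of the tree.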

Total work (derivation on board) is at most:

$$c \cdot n^d \cdot \sum_{t=0}^{\log_b(n)} \left(\frac{a}{b^d}\right)^t$$

Now let’s check all the cases (on board)
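A sketch of how the case check might go, starting from the total-work bound c·n^d·Σ_{t=0}^{log_b(n)} (a/b^d)^t. The shorthand r = a/b^d is introduced here for convenience. The sum is geometric with ratio r, so:

$$
\begin{aligned}
r = 1 \ (a = b^d):&\quad c\, n^d \sum_{t=0}^{\log_b n} 1 = c\, n^d (\log_b n + 1) = O(n^d \log n) \\
r < 1 \ (a < b^d):&\quad c\, n^d \sum_{t=0}^{\log_b n} r^t \le c\, n^d \cdot \frac{1}{1-r} = O(n^d) \\
r > 1 \ (a > b^d):&\quad c\, n^d \sum_{t=0}^{\log_b n} r^t \le c\, n^d \cdot \frac{r}{r-1} \cdot r^{\log_b n} = O\big(n^{\log_b a}\big)
\end{aligned}
$$

The last step uses

$$
n^d \cdot r^{\log_b n} = n^d \cdot \frac{a^{\log_b n}}{\left(b^{\log_b n}\right)^d} = a^{\log_b n} = n^{\log_b a}.
$$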

Even more generally, for T(n) = aT(n/b) + f(n)…

Recap

• O() notation makes our lives easier.

• The “Master Method” also makes our lives easier.

Next time:

• What if the sub-problems are different sizes?

• And when might that happen?

Extra slides…

Some brain teasers

• Are there functions f, g so that NEITHER f = O(g) nor f = Ω(g)?

• Are there non-decreasing functions f, g so that the above is true?

• Define the n’th Fibonacci number by F(0) = 1, F(1) = 1, F(n) = F(n-1) + F(n-2) for n ≥ 2.

• 1, 1, 2, 3, 5, 8, 13, 21, 34, 55, …

True or false:

• F(n) = O(2^n)

• F(n) = Ω(2^n)
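For experimenting with the Fibonacci teaser, here is a short sketch that computes F(n) with the convention F(0) = F(1) = 1 and prints the ratio F(n)/2^n for a few values of n. The function name `fib` is a choice made for this example, and the printed ratios are a hint about what to try proving, not an answer.

```python
def fib(n):
    """n'th Fibonacci number with the convention F(0) = F(1) = 1."""
    a, b = 1, 1
    for _ in range(n):
        a, b = b, a + b
    return a


print([fib(n) for n in range(10)])
for n in [10, 20, 40, 80]:
    print(n, fib(n) / 2 ** n)
```

Watching how the ratio behaves as n grows suggests which of the two statements to try to prove and which to try to refute.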

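A few more O() examples

Example A

• g(n) = 2, f(n) = 1.

• g(n) = O(f(n)) (and also f(n) = O(g(n)))

$$T(n) = O(f(n)) \iff \exists\, c, n_0 > 0 \ \text{s.t.}\ \forall n \ge n_0,\ 0 \le T(n) \le c \cdot f(n)$$

[Figure: the constant functions f(n) = 1, g(n) = 2, and 2.1·f(n) = 2.1 plotted against n, with n_0 = 1 marked on the n axis]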

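Example B

• f(n) = 1, g(n) as in the figure.

• g(n) = O(f(n)) (and also f(n) = O(g(n)))

$$T(n) = O(f(n)) \iff \exists\, c, n_0 > 0 \ \text{s.t.}\ \forall n \ge n_0,\ 0 \le T(n) \le c \cdot f(n)$$

[Figure: f(n) = 1, g(n), and 2.1·f(n) = 2.1 plotted against n, with n_0 marked on the n axis; the vertical axis shows the values 1, 2, and 2.1]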