Leadership Academy “Data Analysis” June 2010. Some thoughts about data analysis… We have moved...

andrew-dean -

Leadership Academy “Data Analysis”

June 2010

Some thoughts about data analysis…

We have moved from a system where hunches and “cardiac data” were followed, to a system that is data driven.

We have moved from assuming good things are happening to insisting good things are happening and proving this is so.

Teaching is an art……and becoming a science.

Learning Outcomes

• Participants will identify:
– Key ideas about effective data-based decision making
– Four types of data
– Challenges within our district ITBS/ITED results
– Findings & results from last year’s Leadership Academy
– Effective strategies to help improve student achievement
– Ideas to include within our revised Strategic Plan

8 Key Points

1. Data provides insight.

2. Data are nothing without analysis.

3. Interpret graphs accurately.

4. Improvement means more success, less failure, and less unwanted variation!

5. Real-time, frequent data are necessary to monitor improvement.

6. Data must be accessible to users.

7. To improve results, work on both product and processes.

8. Take action!

8 Key Points (cont.)

Leaders Ask Good Questions

What does the graph look like?

What patterns or trends do the data show?

Special or common cause?

Over-reacting or under-reacting?

“Is this the complete picture?”

Are there other assessments we need to look at?

What other data might we need to confirm, support, and/or clarify what we are seeing now?
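One common way to approach the "special or common cause?" question is an individuals (XmR) control chart. The sketch below is only an illustration, not a method the district has adopted: it assumes the standard XmR scaling constant of 2.66 and uses the grades 3-5 reading percentages reported later in this deck. Six yearly points is a very short series, so the limits should be read as rough.

```python
from statistics import mean

def xmr_limits(values):
    """Return (center, lower, upper) natural process limits for an XmR chart."""
    # Moving range = absolute change from one year to the next.
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    center = mean(values)
    spread = 2.66 * mean(moving_ranges)  # standard XmR scaling constant
    return center, center - spread, center + spread

def special_cause_points(values):
    """Values outside the limits hint at special cause; inside suggests common cause."""
    _, lower, upper = xmr_limits(values)
    return [v for v in values if v < lower or v > upper]

# Grades 3-5 reading comprehension, % proficient, 2004-2009 (from this deck).
fay_reading_3_5 = [66.7, 67.5, 68.9, 64.9, 68.3, 70.3]

center, lower, upper = xmr_limits(fay_reading_3_5)
print(round(center, 1), round(lower, 1), round(upper, 1))  # 67.8 61.6 73.9
print(special_cause_points(fay_reading_3_5))               # []
```

Under these assumptions every year falls inside the limits, so reacting strongly to any single year's rise or dip would likely be over-reacting.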

How do I know if what I’m seeing is real? Try to use multiple data sources. Triangulation occurs if three different data sources all indicate the same thing.

ITBS scores

Unit test scores

District test score
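The triangulation check described above can be sketched in a few lines. This is a minimal illustration: the function name `triangulate` and the signal labels are hypothetical, and the readings are made up for the example rather than taken from district data.

```python
def triangulate(signals):
    """Return the shared signal if three or more sources all agree, else None."""
    distinct = set(signals.values())
    if len(signals) >= 3 and len(distinct) == 1:
        return distinct.pop()
    return None

# Hypothetical readings for one classroom; the labels are illustrative only.
signals = {
    "ITBS scores": "below target",
    "Unit test scores": "below target",
    "District test score": "below target",
}

print(triangulate(signals))  # below target

# If one source disagrees, the picture is not yet confirmed.
mixed = {**signals, "Unit test scores": "on target"}
print(triangulate(mixed))    # None
```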

Why do some people dislike (fear?) data analysis?

Data

Share your thoughts with someone at your table.

Why do some people dislike (fear?) data analysis?

Data analysis…

• unlocks the door to the traditionally private domain of the classroom,

• inevitably results in a mandate for change, and

• increases pressure for accountability, especially if results are poor.

Data

What did we learn from last year’s Leadership Academy?

Global Positioning System (GPS)

Mapping the Way

Few credits earned

Proficient math scores

Reading scores were low.

Did not attend

Estimate time of arrival…

Commencement Day!

12th, 11th, 10th, 9th, 8th, 7th, 6th, 5th, 4th, 3rd, 2nd, 1st, K, Pre-K

Write data in each arrow indicating your student's successes and/or challenges at each grade level.

Student GPS Timeline

Student dropped out

Start of poor attendance

Good attendance

Data Drills

Dropouts

Struggling Students

Walkthroughs

Perception Surveys

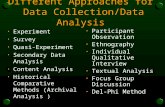

Four Types of Data

Classroom Data

______________

______________

______________

Findings & Results of Last Year’s Leadership Academy

1. Struggling students & dropouts are a K-12 concern.

-“Intervention Record” piloted in four elementary schools.

-Pinnacle Analytics set up to red flag struggling students & potential dropouts

2. Our data is inconsistent, poorly organized, and not effectively used to guide our instruction.

-Pinnacle Analytics has been designed to:

*Manage, organize & store our data,

*Visually help us correlate & analyze our data, and

*Help us work smarter, not harder.

Informing Our Strategic Plan

• Last year’s Leadership Academy findings were incorporated into our Strategic Plan.

• This year’s Leadership Academy findings will be as well.

Strategic Plan Goals

Achievement

Perception

Demographic

Classroom

NEEDS, GOAL(S), FOR STRATEGIC PLAN

Throughout the Leadership Academy, record relevant data along with corresponding needs and goals of our district that you believe should be included in our next revision of the Strategic Plan.

Student Achievement Data

1. What is our current reality?

2. Is it “good enough”?

3. What are we doing to cause this?

4. What will we do to maintain or improve?

Initial 2009-2010 ITBS/ITED Results

Reading Comprehension Grades 3-5 FAY Students (% Proficient)

| | 2004 | 2005 | 2006 | 2007 | 2008 | 2009 | 2010 | 2011 | 2012 | 2013 |
|---|---|---|---|---|---|---|---|---|---|---|
| FAY | 66.7 | 67.5 | 68.9 | 64.9 | 68.3 | 70.3 | | | | |
| Trajectory | 69.4 | 69.4 | 69.4 | 75.5 | 75.5 | 75.5 | 81.6 | 87.8 | 93.9 | 100 |

Legend: Trajectory, District FAY Score Trend line
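To make the distance between actual results and the trajectory concrete, the sketch below computes the percentage-point gap for each year using the grades 3-5 reading figures above. The "average gain needed" figure is simple arithmetic on those numbers, assuming the 2013 target of 100; it is an illustration, not an official projection.

```python
years = list(range(2004, 2014))
fay = [66.7, 67.5, 68.9, 64.9, 68.3, 70.3]  # 2004-2009 actual % proficient
trajectory = [69.4, 69.4, 69.4, 75.5, 75.5, 75.5, 81.6, 87.8, 93.9, 100]

def gaps(actual, target):
    """Percentage-point gap (actual minus target) for each year with an actual score."""
    return [round(a - t, 1) for a, t in zip(actual, target)]

gap_by_year = dict(zip(years, gaps(fay, trajectory)))
print(gap_by_year)
# {2004: -2.7, 2005: -1.9, 2006: -0.5, 2007: -10.6, 2008: -7.2, 2009: -5.2}

# Average yearly gain needed to move from the 2009 score to 100 by 2013.
needed_per_year = (trajectory[-1] - fay[-1]) / (years[-1] - 2009)
print(round(needed_per_year, 1))  # 7.4
```

For comparison, the largest single-year gain in the 2004-2009 series is 3.4 points (2007 to 2008).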

Reading Comprehension Grades 6-8 FAY Students (% Proficient)

| | 2004 | 2005 | 2006 | 2007 | 2008 | 2009 | 2010 | 2011 | 2012 | 2013 |
|---|---|---|---|---|---|---|---|---|---|---|
| FAY | 58 | 60.2 | 62 | 61 | 62 | 63.7 | | | | |
| Trajectory | 64.4 | 64.4 | 64.4 | 71.5 | 71.5 | 71.5 | 78.7 | 85.8 | 92.9 | 100 |

Reading Comprehension Grade 11 FAY Students (% Proficient)

| | 2003 | 2004 | 2005 | 2006 | 2007 | 2008 | 2009 | 2010 | 2011 | 2012 | 2013 |
|---|---|---|---|---|---|---|---|---|---|---|---|
| FAY | 61.8 | 61.7 | 68.0 | 67.5 | 68.4 | 68.5 | 74.6 | | | | |
| Trajectory | 69.0 | 74.2 | 74.2 | 74.2 | 79.3 | 79.3 | 79.3 | 84.5 | 89.7 | 94.8 | 100 |

Math Total Grades 3-5 FAY Students (% Proficient)

| | 2004 | 2005 | 2006 | 2007 | 2008 | 2009 | 2010 | 2011 | 2012 | 2013 |
|---|---|---|---|---|---|---|---|---|---|---|
| FAY | 66.3 | 66.9 | 69.1 | 66.4 | 68.3 | 70 | | | | |
| Trajectory | 68.8 | 68.8 | 68.8 | 75.1 | 75.1 | 75.1 | 81.3 | 87.5 | 93.8 | 100 |

Math Total Grades 6-8 FAY Students (% Proficient)

| | 2004 | 2005 | 2006 | 2007 | 2008 | 2009 | 2010 | 2011 | 2012 | 2013 |
|---|---|---|---|---|---|---|---|---|---|---|
| FAY | 61 | 62.4 | 64.3 | 63 | 63.7 | 65 | | | | |
| Trajectory | 65.3 | 65.3 | 65.3 | 72.3 | 72.3 | 72.3 | 79.2 | 86.1 | 93.1 | 100 |

Math Total Grade 11 FAY Students (% Proficient)

| | 2003 | 2004 | 2005 | 2006 | 2007 | 2008 | 2009 | 2010 | 2011 | 2012 | 2013 |
|---|---|---|---|---|---|---|---|---|---|---|---|
| FAY | 64.0 | 67.7 | 63.8 | 71.2 | 66.6 | 68.6 | 71.5 | | | | |
| Trajectory | 69.0 | 74.2 | 74.2 | 74.2 | 79.3 | 79.3 | 79.3 | 84.5 | 89.7 | 94.8 | 100 |

Science Grades 3-5 FAY Students (% Proficient)

| | 2004 | 2005 | 2006 | 2007 | 2008 | 2009 |
|---|---|---|---|---|---|---|
| FAY | 69.7 | 73.2 | 74 | 72.9 | 73.1 | 75.9 |

Science Grades 6-8 FAY Students (% Proficient)

| | 2004 | 2005 | 2006 | 2007 | 2008 | 2009 |
|---|---|---|---|---|---|---|
| FAY | 68.9 | 69.3 | 74.5 | 72.9 | 73 | 76.6 |

Science Grade 11 FAY Students (% Proficient)

| | 2003 | 2004 | 2005 | 2006 | 2007 | 2008 | 2009 |
|---|---|---|---|---|---|---|---|
| FAY | 61.8 | 66.8 | 66.4 | 73.8 | 73.6 | 77 | 77.8 |

Reading Comprehension Grades 3-5 FAY Students (% Proficient)

| | 2004 | 2005 | 2006 | 2007 | 2008 | 2009 | 2010 | 2011 | 2012 | 2013 |
|---|---|---|---|---|---|---|---|---|---|---|
| FAY | 66.7 | 67.5 | 68.9 | 64.9 | 68.3 | 70.3 | | | | |
| Trajectory | 69.4 | 69.4 | 69.4 | 75.5 | 75.5 | 75.5 | 81.6 | 87.8 | 93.9 | 100 |

Legend: Trajectory, District FAY Score Trend line

1. What is our current reality?

2. Is it “good enough”?

3. What are we doing to cause this?

4. What will we do to maintain or improve?

Math Total Grades 6-8 FAY Students (% Proficient)

| Year | White | Native Am. | Asian | African Am. | Hispanic |
|---|---|---|---|---|---|
| 2006 | 72.1 | 51.1 | 73.8 | 46.2 | 47.3 |
| 2007 | 71.1 | 50.4 | 64 | 40.9 | 48.3 |
| 2008 | 71.6 | 51.8 | 64.2 | 42.6 | 51.5 |
| 2009 | 73.3 | 58.2 | 65.9 | 47.6 | 53.1 |

Legend: % Proficient, Trajectory

Reading Comprehension Grades 3-5 FAY Students (% Proficient)

| Year | FAY Students | Low SES | IEP | ELL |
|---|---|---|---|---|
| 2006 | 68.9 | 58.9 | 33.8 | 50.1 |
| 2007 | 64.9 | 54.4 | 28.1 | 46.9 |
| 2008 | 68.3 | 60.6 | 33.8 | 51.7 |
| 2009 | 70.3 | 62.3 | 34.9 | 52.6 |

Legend: % Proficient, Trajectory

1. What is our current reality?

2. Is it “good enough”?

3. What are we doing to cause this?

4. What will we do to maintain or improve?

Full Academic Year (FAY) Students: Cohort Group from 3rd Grade to 11th Grade (% Proficient)

| | 3rd | 4th | 5th | 6th | 7th | 8th | 9th | 10th | 11th |
|---|---|---|---|---|---|---|---|---|---|
| Reading | 64 | 69.8 | 69.3 | 55.3 | 63.7 | 63.3 | 56 | 63.2 | 74.6 |
| Math | 54.7 | 66.2 | 62.4 | 56.8 | 63.1 | 64.8 | 66 | 59.4 | 71.5 |
| Science | 64.9 | 76.6 | 72.6 | 65.8 | 70.1 | 77.4 | 75.9 | 69.8 | 77.8 |

FINAL District AYP Results of 2008-09 Iowa Tests

| Group | Elementary Math | Elementary Reading | Middle Math | Middle Reading | High Math | High Reading |
|---|---|---|---|---|---|---|
| All | Missed | Met - Safe Harbor | Missed | Missed | Missed | Missed |
| Low SES | Missed | Missed | Missed | Missed | Missed | Missed |
| IEP | Missed | Met - Safe Harbor | Missed | Missed | Met - Safe Harbor | Missed |
| ELL | Missed | Met - Safe Harbor | Met - Safe Harbor | Missed | Met - Safe Harbor | Missed |
| African American | Met - GHS | Missed | Missed | Met - GSH | Met - Safe Harbor | Met - Safe Harbor |
| Asian | Missed | Met - Safe Harbor | Met - Triennium | Met - Triennium | Met | Met - Triennium |
| Hispanic | Missed | Met - Safe Harbor | Missed | Missed | Missed | Missed |
| Native American | Missed | Missed | Missed | Missed | Met - Safe Harbor | Missed |
| White | Met | Met | Met - Triennium | Met - Triennium | Met - Triennium | Missed |

“Final” District AYP Results of 2008-09 Iowa Tests

District's & Schools' AYP Status Based Upon Previous Year's ITBS/ITED Results

Columns: Reading, Math, Partic for each of 2007-08, 2008-09, 2009-10

District: delay 2, delay 2, DINA 3, DINA 3, DINA 4, DINA 4

East High: watch, SINA 1, SINA 1, SINA 2, SINA 2, SINA 3

North High: SINA 2, SINA 3, watch, SINA 3, SINA 4, SINA 1, SINA 4, SINA 5, delay 1

West High: SINA 3, SINA 1, SINA 4, SINA 2, watch, SINA 5, SINA 3

East Middle: delay 2, watch, SINA 3, SINA 1, SINA 4

North Middle: watch, watch, SINA 1, SINA 1

West Middle: SINA 3, delay 2, SINA 4, SINA 3, SINA 5, SINA 4

Central Campus: delay 2, delay 2, watch, SINA 3, SINA 3, SINA 1, SINA 4, SINA 4, SINA 2

Bryant: watch, watch, SINA 1

Clark:

Crescent Park: watch

Emerson: watch, watch

Everett: watch, watch, SINA 1, SINA 1

Hunt:

Irving: watch, watch, SINA 1

Joy:

Leeds: watch, watch

Lincoln:

Longfellow: watch, SINA 1

Riverside: watch, watch, SINA 1

Roosevelt: watch, watch, SINA 1

Smith: watch, watch, SINA 1, SINA 1

Sunnyside:

Unity: watch

Washington:

Whittier: watch, watch, SINA 1

# of watch: 1, 3, 2, 12, 10, 2

# of SINA: 3, 5, 6, 1, 11, 14, 1

# of delay: 1, 3, 1

1. What is our current reality?

2. Is it “good enough”?

3. What are we doing to cause this?

4. What will we do to maintain or improve?

We are moving to spring ITBS/ITED testing next year.

How might this change help our current reality?

Since we will continue to be evaluated by the ITBS/ITED annual “snapshot”, how can we use our transition to spring testing to help us better prepare for the tests?

How can we provide a better picture of learning over a particular school year using the spring ITBS/ITED?

How can we better address our dip in transition year scores?

How can we better address the concerns of summer learning loss?

Can we avoid having to estimate the effects of one grade level from another, since we would no longer take the tests only three months into the school year?

1. How might this change help our current reality?

2. How can we use our transition to spring testing to better prepare for the tests?

3. How can we provide a better picture of learning over a particular school year?

4. How can we better address our dip in transition year scores?

5. How can we better address the concerns of summer learning loss?

6. Can we avoid having to estimate the effects of one grade level from another?

Moving to Spring ITBS/ITED

Will simply changing to spring testing, with four additional months to prepare, automatically improve our scores?

What about norms?

Norms compare a student’s raw score, the number of problems the student got correct, with the scores from other students who took the same test at approximately the same time of the school year.

How much a student “knows” is inferred from their standing or rank within that comparison or norm group.
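The idea of a norm-referenced standing can be illustrated with a percentile rank. This is a sketch only: the norm-group scores below are hypothetical (real norms come from the test publisher), and counting ties as half is one common convention, not necessarily the one ITBS/ITED uses.

```python
def percentile_rank(raw_score, norm_group):
    """Percent of norm-group scores below raw_score, counting ties as half."""
    below = sum(s < raw_score for s in norm_group)
    ties = sum(s == raw_score for s in norm_group)
    return 100 * (below + 0.5 * ties) / len(norm_group)

# Hypothetical raw scores for two norm groups tested at different times of year.
fall_norm_group = [10, 12, 14, 15, 15, 17, 18, 20, 22, 25]
spring_norm_group = [14, 16, 18, 19, 19, 21, 22, 24, 26, 29]

# The same raw score of 17 ranks lower against students tested in spring.
print(percentile_rank(17, fall_norm_group))    # 55.0
print(percentile_rank(17, spring_norm_group))  # 20.0
```

The same raw score lands at a different standing depending on which norm group it is compared with, which is why the testing window matters.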

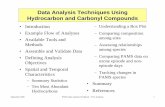

ITBS & ITED: Number of Correct Items Required for Proficiency

3rd Grade, Level 9

| Form | Code | Content | Proficiency: Fall | Proficiency: Midyear | Proficiency: Spring | # of items |
|---|---|---|---|---|---|---|
| A | RC | Reading Comprehension | 15 | 17 | 19 | 37 |
| A | M1 | Math Concepts & Estimation | 16 | 17 | 19 | 31 |
| A | M2 | Math P. S.* & Data Interpret. | 10 | 12 | 13 | 22 |
| A | SC | Science | 13 | 14 | 15 | 30 |
| B | RC | Reading Comprehension | 17 | 19 | 21 | 37 |
| B | M1 | Math Concepts & Estimation | 15 | 16 | 18 | 31 |
| B | M2 | Math P. S. & Data Interpret. | 11 | 12 | 14 | 22 |
| B | SC | Science | 13 | 14 | 16 | 30 |
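To see how much the bar rises between fall and spring, the sketch below converts the Form A cut scores from the table above into the percentage of items a 3rd grader must answer correctly. The function name `percent_required` is illustrative; the numbers are taken directly from the table.

```python
# (fall, midyear, spring, total items) for 3rd grade, Level 9, Form A.
form_a = {
    "Reading Comprehension": (15, 17, 19, 37),
    "Math Concepts & Estimation": (16, 17, 19, 31),
    "Math P. S. & Data Interpret.": (10, 12, 13, 22),
    "Science": (13, 14, 15, 30),
}

def percent_required(correct, total):
    """Share of items that must be correct to be proficient, as a percentage."""
    return round(100 * correct / total, 1)

for content, (fall, midyear, spring, total) in form_a.items():
    print(content, percent_required(fall, total), percent_required(spring, total))

# Reading Comprehension: 40.5 in fall versus 51.4 in spring.
```

In reading, for example, a student needs about 41% of items correct in fall but about 51% in spring to be counted proficient.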

Simply changing to spring testing, with four additional months to prepare, will not automatically improve our scores.

We may even experience a dip in scores.

Spring ITBS / ITED Testing

• We are confident

• Improved curriculum, instruction & assessments

• Increased ownership and motivation

• Improved student achievement and proficiency on the ITBS/ITED.

Spring ITBS / ITED Testing?

• Beginning with the 2010-11 school year, we will be moving to spring testing.

• Consensus from all stakeholder groups

• Board acknowledgement and support

Preparing for Spring Testing

• March 14 – April 1

• Results will arrive mid-May

• District Assessment Calendar Adjustments (ELDA in Feb, DRA-2 to 4th qrt.)

• Improve curriculum, instruction & assessment

• Increase formative assessment & differentiation

• Use Pinnacle to analyze and triangulate data throughout the year

• Increase ownership and motivation

• Learn from each other

What other achievement data should we be looking at?