
April 19, 2018

MDICx webinar series

From Stories to Evidence: Quantitative patient-preference information to inform product-development and regulatory reviews

F. Reed Johnson, Professor, Duke School of Medicine

Juan Marcos Gonzalez, Assistant Professor, Duke School of Medicine

MDIC Webinar III

Patients’ Tolerance for Treatment Risks

How much risk are people willing to bear in return for better efficacy?

• Important factor in regulatory reviews

• Implications for comparative effectiveness analysis

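Utility Weights: Standard Gamble

The respondent compares living with asthma for certain against a gamble that yields perfect health with probability p and instantaneous painless death with probability (1-p). At the probability p that makes the respondent indifferent between the two:

$$U(\text{Asthma}) = p\cdot U(\text{Perfect Health}) + (1-p)\cdot U(\text{Instantaneous Painless Death}) = p\cdot(1) + (1-p)\cdot(0) = p$$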

Why not standard gamble?

• Does not identify risk preferences

– Assumes risk neutrality, but EU < p·U(Outcome) (diminishing marginal utility)

– Assumes preferences are linear in probabilities, but EU = w(p)·U(Outcome) (prospect theory)

• Risk characteristics other than probability matter (decision science)

– Familiarity, avoidability, voluntariness

– Latency

– Quality of end of life

• Simplicity versus rigor: impose the fewest possible assumptions

Test of Standard-Gamble Assumptions: Crohn’s Disease

• Tysabri re-licensing for Crohn’s

• Discrete-choice experiment

• Number of subjects ~550

• Web-enabled survey instrument

• Treatment attributes

– Symptom severity

– Serious complications

– Time between flare-ups

– Need to take steroids

– 10-year mortality risks: serious infection, PML*, lymphoma

• No assumption about risk preferences

*PML: progressive multifocal leukoencephalopathy

Johnson et al. Crohn's disease patients' risk-benefit preferences: Serious adverse event risks versus treatment efficacy. Gastroenterology. 2007.

Categorical Risk Preferences

Figure: preference utility by PML risk (levels 0, 0.005, 0.02, 0.05), with each risk level estimated as a separate category.

Van Houtven et al. Eliciting benefit-risk preferences and probability-weighted utility using choice-format conjoint analysis. Med Decis Making. 2011.

Linear Continuous Versus Categorical Risk Preferences

Figure: preference utility by PML risk (0 to 0.05), comparing a linear continuous risk specification with the categorical estimates.

Van Houtven et al. Eliciting benefit-risk preferences and probability-weighted utility using choice-format conjoint analysis. Med Decis Making. 2011.
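The difference between the two specifications comes down to how risk enters the model. A minimal sketch, assuming dummy coding with 0 as the reference level (effects coding is another common choice) and using illustrative helper names that are not from the study: categorical coding gives one preference weight per non-reference PML level, while linear coding gives a single weight shared by all levels.

```python
# Hypothetical helpers; the risk levels are the PML levels shown on the slide.
PML_LEVELS = [0.0, 0.005, 0.02, 0.05]


def categorical_coding(risk, levels=PML_LEVELS):
    """Dummy-code a risk level, using the first level (0) as the omitted reference."""
    if risk not in levels:
        raise ValueError(f"risk {risk} is not one of the design levels {levels}")
    return [1 if risk == level else 0 for level in levels[1:]]


def linear_coding(risk):
    """Continuous coding: one column, so one weight applies to every level."""
    return [risk]


def utility(weights, coded):
    """Preference utility as the weighted sum of the coded columns."""
    return sum(w * x for w, x in zip(weights, coded))


if __name__ == "__main__":
    for level in PML_LEVELS:
        print(level, categorical_coding(level), linear_coding(level))
```

With categorical coding the estimated weights can trace any shape across the levels; with linear coding the utility change from 0.02 to 0.05 is forced to be exactly six times the change from 0 to 0.005.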

Tversky & Kahneman Weighted Risk Preferences

Figure: preference utility by PML risk (0 to 0.05) under Tversky and Kahneman probability weighting (γ = 0.55), shown against the linear QALY assumption.

Van Houtven et al. Eliciting benefit-risk preferences and probability-weighted utility using choice-format conjoint analysis. Med Decis Making. 2011.
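To see what γ = 0.55 implies, the sketch below assumes the slide refers to the standard one-parameter Tversky and Kahneman (1992) weighting function, w(p) = p^γ / (p^γ + (1-p)^γ)^(1/γ). It also contrasts the standard-gamble reading U = p with the reading U = w(p) that applies when U(best) = 1 and U(worst) = 0 and the respondent weights probabilities. Function names are illustrative.

```python
def tk_weight(p, gamma=0.55):
    """One-parameter Tversky-Kahneman (1992) probability-weighting function w(p)."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be a probability")
    num = p ** gamma
    return num / (num + (1.0 - p) ** gamma) ** (1.0 / gamma)


def standard_gamble_utility(p):
    """Standard-gamble reading: risk neutral and linear in p, so U = p."""
    return p


def weighted_gamble_utility(p, gamma=0.55):
    """Utility implied if the gamble's probability is weighted by w(p), with U(best)=1, U(worst)=0."""
    return tk_weight(p, gamma)


if __name__ == "__main__":
    for p in [0.005, 0.02, 0.05, 0.5, 0.9]:
        print(p, standard_gamble_utility(p), round(weighted_gamble_utility(p), 4))
```

With γ below 1 the curve is inverse-S shaped: a small probability such as a 0.005 PML risk receives a decision weight near 0.05, roughly ten times the stated probability, while large probabilities are underweighted.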

Comparative Effectiveness

Analysis Anti-TNF Prolonged corticosteroids

Difference(Months)

QALYs 0.81 0.80 0.01

Patient-Preference Weighted Durations 5.5 3.7 1.9

Johnson, Bewtra, Scott, Reed, Minding the Gaps: Combining Patient-Centric Valuation, Observational Data Analysis, and Decision Modeling to Deliver Patient-Centered Comparative Effectiveness Research . International Society for Pharmacoeconomics and Outcomes Research Meetings, Boston, 2017.

Inflammatory Bowel Disease Study

11

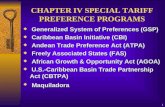

Approaches to Quantifying Preferences

Figure: two treatment profiles, A and B, positioned by benefit and risk.

There is a technical relationship between A and B.

Figure: the utilities U(A) and U(B) of profiles (B1, R1) and (B2, R2), plotted as heights above the benefit and risk axes.

There also is a preferential relationship between profiles A and B. How do we determine the relative height of these bundles?

Utility equivalence indicates a threshold

Utility equivalence is particularly interesting because it indicates that two outcomes have equal value.

• We use utility equivalence between treatment-related benefits and risks to measure risk tolerance

• Risk level that offsets treatment benefits (maximum acceptable risk)

• Benefit level that offsets treatment risks (minimum acceptable benefit)

• Unrelated to risk attitudes
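Both thresholds follow from setting the utility change to zero. A minimal sketch, assuming utility is linear in risk and benefit and using hypothetical preference weights (the function names are illustrative): maximum acceptable risk is the benefit gain in utility divided by the disutility per unit of risk, and minimum acceptable benefit is the reverse.

```python
def max_acceptable_risk(benefit_gain_utility, utility_per_unit_risk):
    """Risk increase whose disutility exactly offsets a given benefit gain.

    Assumes utility is linear in risk, with utility_per_unit_risk < 0.
    """
    if utility_per_unit_risk >= 0:
        raise ValueError("a risk weight must be negative")
    return benefit_gain_utility / -utility_per_unit_risk


def min_acceptable_benefit(risk_increase, utility_per_unit_risk, utility_per_unit_benefit):
    """Benefit increase whose utility exactly offsets a given risk increase (linear in both)."""
    if utility_per_unit_risk >= 0 or utility_per_unit_benefit <= 0:
        raise ValueError("expected a negative risk weight and a positive benefit weight")
    return -utility_per_unit_risk * risk_increase / utility_per_unit_benefit


if __name__ == "__main__":
    # Hypothetical weights: the benefit is worth 0.8 utils; risk costs 0.2 utils per point.
    mar = max_acceptable_risk(0.8, -0.2)
    print("MAR (percentage points):", mar)
    print("Net utility at the MAR:", 0.8 + (-0.2) * mar)  # utility equivalence: about zero
```

At the MAR the profile with the added benefit and added risk has the same utility as the profile without either, which is the utility equivalence described above.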

Example Question: Discrete-Choice Experiment

Figure: utilities U(A) and U(B) of two profiles over benefit and risk, with shares of 39% and 61% (Fairchild, et al. 2017, J Derm Treat.).

From: IMI PREFER, Methods
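If the 39% and 61% on the slide are read as logit choice probabilities (an assumption; the slide does not say which model produced them), they map directly onto a utility difference. The sketch below computes conditional-logit shares from utilities and recovers the utility difference U(B) - U(A) implied by two shares; function names are illustrative.

```python
import math


def logit_shares(utilities):
    """Conditional-logit choice probabilities for one set of alternatives."""
    top = max(utilities)  # shift for numerical stability; shares are unchanged
    exps = [math.exp(u - top) for u in utilities]
    total = sum(exps)
    return [e / total for e in exps]


def utility_difference(share_a, share_b):
    """Utility difference U(B) - U(A) implied by two-alternative logit shares."""
    return math.log(share_b / share_a)


if __name__ == "__main__":
    diff = utility_difference(0.39, 0.61)
    print(round(diff, 3), [round(s, 2) for s in logit_shares([0.0, diff])])
```

Only utility differences matter in the logit model, so fixing U(A) at 0 loses nothing.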

Example Questions: Threshold Method

• Start from the current scenario (which may consider a single aspect or a profile)

• Present an offsetting aspect (e.g., risk)

• Ask if the respondent accepts the scenario with Risk 1

• Depending on the answer (yes or no), ask if the respondent accepts the scenario with Risk 2 or with Risk 3

Figure: U(Current), U(Scenario with Risk 1), and U(Scenario with Risk 2) plotted over benefit and risk.
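One way to run the yes/no sequence is bisection: each answer halves the interval that must contain the respondent's threshold. This is a sketch under stated assumptions, not the slide's exact protocol: the respondent accepts the lowest starting risk, rejects the highest, and answers monotonically in risk. The function name and the simulated respondent are hypothetical.

```python
def elicit_threshold(accepts, accepted_risk, rejected_risk, rounds=5):
    """Bracket a respondent's maximum acceptable risk with yes/no questions.

    accepts(risk) -> bool answers "Do you accept the scenario with this risk?".
    Assumes accepted_risk is accepted, rejected_risk is rejected, and answers
    are monotone in risk. Each question halves the bracketing interval.
    """
    low, high = accepted_risk, rejected_risk
    asked = []
    for _ in range(rounds):
        risk = (low + high) / 2
        answer = accepts(risk)
        asked.append((risk, answer))
        if answer:
            low = risk   # accepted: the threshold is at or above this risk
        else:
            high = risk  # rejected: the threshold is below this risk
    return low, high, asked


if __name__ == "__main__":
    # Hypothetical respondent who accepts any risk up to 3.1%.
    low, high, asked = elicit_threshold(lambda r: r <= 0.031, 0.0, 0.1)
    print(asked)
    print("threshold lies in", (low, high))
```

Five questions narrow a 10-point risk range to about 0.3 points; real threshold surveys often use fewer, pre-set levels to keep the task short.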

Example Rating Question: Swing Weighting

Figure: a rating task comparing a swing in mortality risk from 5% to none with a change in weight loss, on a utility scale (axes: weight-loss change and change in mortality risk).
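Swing-weighting ratings are usually turned into weights by anchoring the most important swing at 100, rating the other swings relative to it, and normalizing. A minimal sketch of that normalization, with hypothetical ratings for the two swings named in the figure:

```python
def swing_weights(ratings):
    """Normalize swing ratings into weights that sum to 1.

    ratings maps each attribute swing to a 0-100 rating, with the most
    important swing anchored at 100.
    """
    if max(ratings.values()) != 100:
        raise ValueError("the most important swing should be rated 100")
    total = sum(ratings.values())
    return {swing: rating / total for swing, rating in ratings.items()}


if __name__ == "__main__":
    # Hypothetical ratings, for illustration only.
    example = {"mortality risk 5% to none": 100, "weight-loss change": 40}
    print(swing_weights(example))
```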

Ranking

• Provides a way to infer how many respondents would have chosen one alternative over the other

Figure: a ranking of Items 1 through 4 expanded into the pairwise comparisons it implies (e.g., Item 1 ranked above Item 2, Item 3, and Item 4).
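The inference works by treating each ranking as a set of implied pairwise choices. A minimal sketch, with illustrative function names: explode a ranking into the pairs it implies, then count the share of respondents who rank one item above another.

```python
from itertools import combinations


def implied_pairs(ranking):
    """Explode one ranking (best first) into the pairwise choices it implies."""
    # combinations() keeps input order, so the first item of each pair ranks higher.
    return list(combinations(ranking, 2))


def preference_share(rankings, item_a, item_b):
    """Share of respondents whose ranking places item_a above item_b."""
    above = sum(1 for r in rankings if r.index(item_a) < r.index(item_b))
    return above / len(rankings)


if __name__ == "__main__":
    print(implied_pairs(["Item 1", "Item 2", "Item 3", "Item 4"]))
```

A ranking of four items implies six pairwise choices, which is why ranking tasks yield more preference information per question than a single choice.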

Example Question: Graded Pairs

Which would you choose? What would you do?

○ Definitely choose A ○ Likely choose A ○ Choose either ○ Likely choose B ○ Definitely choose B

• You obtain a signal of both choice (or judgment) and its intensity, which facilitates individual-level preference estimates

• Difficult to answer strategically

• Can evaluate interaction effects

• Need experimental design

• Can face scenario rejection

• Can evaluate only a limited number of attributes

It is also possible to combine methods, for example a DCE with relative rating.

Evaluating Internal Validity of Preference Data

"In God we trust; all others must bring data." (W. Edwards Deming)

Validity

• Face validity

– Does our measure clash with widely accepted priors?

• Content validity

– Are we really measuring preferences?

• Predictive validity

– Can we predict actions that follow preferences?

• Convergent validity

– Does our measure correlate with measures produced by others?

• External validity

– Can we generalize our results?

Internal validity: in stated-preference (SP) methods, generally evaluated through consistency with theory.

External validity: gaining attention through literature reviews by disease area and meta-analyses.

Face Validity

• Elapsed time

• Straight-lining or patterning

• Comprehension issues

• Studied outcomes

• Elicitation task

Content Validity

• Monotonicity tests

• Transitivity tests

• Scope tests

• Dominance tests

Predictive Validity

• Repeated questions

• Internal consistency (variance) of an individual’s utilities


DCE Internal Validity Tests

• Stability (test/retest)

• Within-Set Monotonicity

• Cross-Set Monotonicity

• Transitivity

• Preference Domination (no tradeoffs)

• Scope


Stability Test Failure

Question #2 and Question #6 (the same choice task, asked twice):

| Attribute | Treatment A | Treatment B |
|---|---|---|
| Efficacy | Good | Excellent |
| Side effects | Moderate | Mild |
| Cost | $10 per month | $100 per month |

Which would you choose?

A respondent who chooses differently in the two questions fails the stability test.

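Within-Set Monotonicity

| Attribute | Treatment A | Treatment B |
|---|---|---|
| Efficacy | Good | Excellent |
| Side effects | Moderate | Mild |
| Cost | $100 per month | $10 per month |

Which would you choose?

Treatment B is better than Treatment A on every attribute, so choosing Treatment A fails the test.

Cross-Set Monotonicity

Question #2:

| Attribute | Treatment A | Treatment B |
|---|---|---|
| Efficacy | Good | Excellent |
| Side effects | Moderate | Mild |
| Cost | $10 per month | $100 per month |

Question #6:

| Attribute | Treatment A | Treatment B |
|---|---|---|
| Efficacy | Good | Excellent |
| Side effects | None | Mild |
| Cost | $10 per month | $100 per month |

Which would you choose?

Question #6 improves only Treatment A's side effects (from Moderate to None), so a respondent who chose Treatment A in Question #2 should not switch to Treatment B in Question #6.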
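Both monotonicity checks can be automated once each attribute level has an agreed direction. A minimal sketch, assuming hypothetical ordinal scores for the levels in the tables (higher is better for the patient); the dictionary and function names are illustrative, not from any survey package.

```python
# Hypothetical ordinal scores for the slide's levels: higher is better for the patient.
LEVEL_SCORE = {
    "Efficacy": {"Good": 1, "Excellent": 2},
    "Side effects": {"Moderate": 1, "Mild": 2, "None": 3},
    "Cost": {"$100 per month": 1, "$10 per month": 2},
}


def dominates(p, q):
    """True if profile p is at least as good as q on every attribute and better on one."""
    pairs = [(LEVEL_SCORE[k][p[k]], LEVEL_SCORE[k][q[k]]) for k in LEVEL_SCORE]
    return all(x >= y for x, y in pairs) and any(x > y for x, y in pairs)


def fails_within_set(a, b, choice):
    """Within-set monotonicity failure: the dominated treatment was chosen."""
    return (dominates(a, b) and choice == "B") or (dominates(b, a) and choice == "A")


def fails_cross_set(choice_before, improved, choice_after):
    """Cross-set failure: improving only the chosen treatment made the respondent switch away."""
    return choice_before == improved and choice_after != improved


if __name__ == "__main__":
    a = {"Efficacy": "Good", "Side effects": "Moderate", "Cost": "$100 per month"}
    b = {"Efficacy": "Excellent", "Side effects": "Mild", "Cost": "$10 per month"}
    print(fails_within_set(a, b, "A"))
```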

Transitivity Test Failure

Figure: choices across Questions #2, #4, and #7.
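A transitivity failure is a preference cycle: X chosen over Y, Y over Z, and Z over X. A minimal sketch, assuming three questions pit the same three profiles against each other pairwise (an assumption about how the slide's questions relate); profile names and the function name are hypothetical.

```python
from itertools import permutations


def intransitive_cycles(chosen_over):
    """Return 3-item cycles x > y > z > x in a respondent's pairwise choices.

    chosen_over: set of (winner, loser) pairs taken from the choice tasks.
    Each cycle is reported once, starting from its alphabetically first item.
    """
    items = sorted({item for pair in chosen_over for item in pair})
    cycles = []
    for x, y, z in permutations(items, 3):
        if x == min(x, y, z) and {(x, y), (y, z), (z, x)} <= chosen_over:
            cycles.append((x, y, z))
    return cycles


if __name__ == "__main__":
    # Hypothetical answers to three pairwise questions.
    print(intransitive_cycles({("P", "Q"), ("Q", "R"), ("R", "P")}))
```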

Preference Domination (No Tradeoffs)

Figure: choices across Questions #2, #4, and #7.
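Preference domination shows up as a respondent who always picks the alternative that is better on one attribute, never trading it off against anything else. A minimal sketch of that check, assuming attribute levels are scored numerically with higher meaning better; the function name and data are hypothetical. As the deck notes later, a positive result may reflect inattention or genuinely strong preferences.

```python
def always_follows(tasks, choices, attribute):
    """True if every choice went to the alternative better on `attribute`.

    tasks: list of (profile_a, profile_b) dicts with numeric attribute scores,
    higher meaning better. Tasks where the two profiles tie on the attribute
    are skipped; at least one informative task is required.
    """
    informative = 0
    for (a, b), choice in zip(tasks, choices):
        if a[attribute] == b[attribute]:
            continue
        informative += 1
        better = "A" if a[attribute] > b[attribute] else "B"
        if choice != better:
            return False
    return informative > 0


if __name__ == "__main__":
    tasks = [({"efficacy": 2, "safety": 0}, {"efficacy": 1, "safety": 2}),
             ({"efficacy": 0, "safety": 2}, {"efficacy": 2, "safety": 0})]
    print(always_follows(tasks, ["A", "B"], "efficacy"))
```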

Scope-Test Failure

Split-sample scope test:

• Risk levels for group 1: 0.3%, 0.8%, 1.0%

• Risk levels for group 2: 0.8%, 1.0%, 3.5%

Figure: preference weights by annual increase in risk of serious side effect (0.3% to 3.5%) for the two groups. Group 2's almost 3% change in risk and group 1's 0.7% change in risk produce similar differences in preference weights, consistent with respondents recoding the increase in risk of serious side effect as “low” and “high,” regardless of the risk range (Gonzalez et al. 2012).

Scope Test Failure

Figure: preference weights by annual increase in risk of serious side effect (0.3% to 3.5%) (Gonzalez et al. 2012).

After Accounting for Recoding Respondents

Figure: preference weights by annual increase in risk of serious side effect (0.3% to 3.5%) after accounting for respondents who recode risk.

Interpreting Internal-Validity Failures

• Avoid using failure counts as a quality score

• Apparent failures may be valid preference information

– Attribute domination: inattention or strong preferences?

– Always choosing or always rejecting a constant status-quo alternative: what are the reasons for scenario rejection?

• Respondent or researcher failure?

– Indication of survey-design problems: poor attribute definitions, excessive length

– Characteristics of respondents who fail: age, educational attainment, disease severity, unusually short or unusually long survey times

• Analysis of failure data

– Sensitivity of preference weights to inclusion or exclusion

– Latent-class analysis to identify and isolate groups with questionable response patterns

– Attribute non-attendance modeling

Validity Test Summary (N=55)

| Test type | Number of summaries with the test | % failures: mean (SD) | Median | 25th percentile | 75th percentile | Range |
|---|---|---|---|---|---|---|
| Stability (repeated question) | 16 | 30% (26%) | 24% | 10% | 44% | 0% to 81% |
| Within-set monotonicity | 21 | 18% (20%) | 7% | 3% | 27% | 0.2% to 58% |
| Across-set monotonicity | 28 | 6% (9%) | 2% | 1% | 7% | 0% to 29% |
| Transitivity | 3 | 0% (NA) | 0% | 0% | 0% | 0% to 0% |
| Attribute dominance | 42 | 22% (14%) | 20% | 11% | 35% | 0.3% to 50% |
| No variation in chosen alternative (straight-lining) | 55 | 7% (11%) | 2% | 1% | 8% | 0.2% to 43% |

Johnson, et al. 2018 (under review)

Estimating Preference Weights

Analysis options:

• Choice-based and ranking-based methods: advanced statistical analysis

• Threshold-based methods: basic statistics, regression

• Rating-based methods: basic statistics, regression

ISPOR Conjoint-Analysis Statistical Analysis Task Force

• Presents assumptions across analysis options for conjoint analysis—primarily discrete-choice experiments

• Explains how results can be interpreted

• Discusses options to characterize preference heterogeneity

• Provides guidance on the evaluation of model fit


Common Models for DCE and Ranking Data


• Conditional logit

• Random-parameters logit (RPL)

• Hierarchical Bayes (HB)

• Latent-class analysis

Conditional Logit Model

• Discrete-choice analog to ordinary least squares for continuous dependent variables

• Assumes each response is independent of all other responses

• Assumes preferences are identical across respondents
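The conditional-logit assumptions translate directly into the estimator: every choice task is pooled as an independent observation and one preference-weight vector is shared by everyone. A self-contained sketch using Newton-Raphson maximum likelihood in plain Python; the attributes (an efficacy level and a risk in percentage points), the true weights, and all function names are hypothetical, and real analyses would normally use an established statistics package.

```python
import math
import random


def choice_probabilities(beta, alternatives):
    """Conditional-logit probabilities for one choice task."""
    utilities = [sum(b * x for b, x in zip(beta, alt)) for alt in alternatives]
    top = max(utilities)  # subtract the maximum for numerical stability
    exps = [math.exp(u - top) for u in utilities]
    total = sum(exps)
    return [e / total for e in exps]


def solve(matrix, vector):
    """Solve matrix @ x = vector by Gaussian elimination with partial pivoting."""
    n = len(vector)
    a = [row[:] + [v] for row, v in zip(matrix, vector)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        for r in range(col + 1, n):
            factor = a[r][col] / a[col][col]
            for c in range(col, n + 1):
                a[r][c] -= factor * a[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (a[r][n] - sum(a[r][c] * x[c] for c in range(r + 1, n))) / a[r][r]
    return x


def fit_conditional_logit(tasks, choices, n_params, max_iter=20, tol=1e-8):
    """Maximum-likelihood preference weights by Newton-Raphson.

    Every task is pooled as an independent observation and one weight vector
    is shared by all respondents, mirroring the two assumptions above.
    """
    beta = [0.0] * n_params
    for _ in range(max_iter):
        grad = [0.0] * n_params
        info = [[0.0] * n_params for _ in range(n_params)]  # minus the Hessian
        for alts, chosen in zip(tasks, choices):
            probs = choice_probabilities(beta, alts)
            mean_x = [sum(p * alt[k] for p, alt in zip(probs, alts)) for k in range(n_params)]
            for k in range(n_params):
                grad[k] += alts[chosen][k] - mean_x[k]
            for p, alt in zip(probs, alts):
                dev = [alt[k] - mean_x[k] for k in range(n_params)]
                for i in range(n_params):
                    for j in range(n_params):
                        info[i][j] += p * dev[i] * dev[j]
        step = solve(info, grad)
        beta = [b + s for b, s in zip(beta, step)]
        if max(abs(s) for s in step) < tol:
            break
    return beta


def simulate_tasks(true_beta, n_tasks, seed=0):
    """Hypothetical paired-choice data: [efficacy level, risk in percentage points]."""
    rng = random.Random(seed)
    tasks, choices = [], []
    for _ in range(n_tasks):
        alts = [[rng.choice([0, 1, 2]), rng.choice([0, 1, 5])] for _ in range(2)]
        probs = choice_probabilities(true_beta, alts)
        choices.append(0 if rng.random() < probs[0] else 1)
        tasks.append(alts)
    return tasks, choices


if __name__ == "__main__":
    tasks, choices = simulate_tasks([1.0, -0.5], n_tasks=4000, seed=1)
    print(fit_conditional_logit(tasks, choices, n_params=2))
```

The log-likelihood is concave, so Newton-Raphson from zero typically converges in a handful of iterations; the estimates should land near the simulated weights.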


Random-Parameters (or Mixed) Logit

• Relaxes conditional-logit assumptions

• Accounts for multiple observations from same respondent

• Allows preferences to vary across respondents

• Requires specifying a distribution form for taste variation

• Estimates mean and standard deviation for distribution around each preference weight
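In random-parameters logit the choice probability is the logit probability averaged over the distribution of preference weights, which is usually approximated by simulation. A minimal sketch of that simulated probability, assuming independent normal distributions for each weight (other forms, such as lognormal, are also used); names and inputs are hypothetical, and estimation itself (maximizing the simulated likelihood) is not shown.

```python
import math
import random


def logit_probabilities(beta, alternatives):
    """Standard logit probabilities for fixed preference weights."""
    utilities = [sum(b * x for b, x in zip(beta, alt)) for alt in alternatives]
    top = max(utilities)
    exps = [math.exp(u - top) for u in utilities]
    total = sum(exps)
    return [e / total for e in exps]


def simulated_probabilities(means, sds, alternatives, draws=2000, seed=0):
    """Average logit probabilities over normal draws of each preference weight."""
    rng = random.Random(seed)
    totals = [0.0] * len(alternatives)
    for _ in range(draws):
        beta = [rng.gauss(m, s) for m, s in zip(means, sds)]
        for j, p in enumerate(logit_probabilities(beta, alternatives)):
            totals[j] += p
    return [t / draws for t in totals]


if __name__ == "__main__":
    alts = [[1.0], [0.0]]
    print(simulated_probabilities([2.0], [0.0], alts))  # no taste variation: plain logit
    print(simulated_probabilities([2.0], [3.0], alts))  # wide taste variation
```

With a zero standard deviation the result collapses to conditional logit; a large standard deviation pulls the average share toward 50% because some respondents hold the opposite preference.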

Standard Deviation Estimates

Figure: standard deviation estimates (ISPOR Task Force Report, ViH 2016).

Hierarchical Bayes Model

• Estimates individual-level preference weights

• Uses logit mean preference weights as priors for Bayesian update using individual choice data

• Means based on full experimental design; update based on fraction of the design seen by each respondent

Comparing Results

Figure: comparison of model results (ISPOR Task Force Report, ViH 2016).

Latent-Class Finite-Mixture Logit Model

• Estimates preference weights for groups of respondents with similar choice patterns

• Researcher must specify the number of classes for the estimation

• Covariates can be included to evaluate determinants of class membership
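Once class-specific weights and class shares are estimated, each respondent's class membership follows from Bayes' rule: the prior class share times the likelihood of that respondent's choices under the class's weights. A minimal sketch with hypothetical classes and data; in a model with covariates, the class shares would themselves vary with respondent characteristics, which is not shown here.

```python
import math


def logit_probabilities(beta, alternatives):
    """Standard logit probabilities for fixed preference weights."""
    utilities = [sum(b * x for b, x in zip(beta, alt)) for alt in alternatives]
    top = max(utilities)
    exps = [math.exp(u - top) for u in utilities]
    total = sum(exps)
    return [e / total for e in exps]


def class_posteriors(class_shares, class_betas, tasks, choices):
    """Posterior probability that one respondent belongs to each latent class.

    class_shares: prior class-membership probabilities (sum to 1).
    class_betas: one preference-weight vector per class.
    tasks/choices: the respondent's choice tasks and chosen alternative indices.
    """
    log_joint = []
    for share, beta in zip(class_shares, class_betas):
        ll = math.log(share)
        for alts, chosen in zip(tasks, choices):
            ll += math.log(logit_probabilities(beta, alts)[chosen])
        log_joint.append(ll)
    top = max(log_joint)  # work on the log scale to avoid underflow
    weights = [math.exp(v - top) for v in log_joint]
    total = sum(weights)
    return [w / total for w in weights]


if __name__ == "__main__":
    # Hypothetical classes: efficacy-focused versus risk-averse; attributes [efficacy, risk].
    tasks = [[[1, 1], [0, 0]]] * 5  # the more effective option is also riskier
    print(class_posteriors([0.5, 0.5], [[2.0, 0.0], [0.0, -2.0]], tasks, [0] * 5))
```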

ISPOR Task Force Report, ViH 2016

Example Applications

Where Can Patient Preferences Inform the Development Lifecycle?

Stages: Phase 2a/b, Phase 3, regulatory review, post-approval

• What endpoints do patients care most about?

• Maximum acceptable risk, minimum required benefit, choice share?

• What level/rate of endpoints are critical to patients?

• What is the relative importance of benefits, risks, and other treatment features to patients?

• How do patients vary in these properties (heterogeneity)? Are there distinct subgroups?

• Are there important differences between stakeholders?

Uses: trial design, target product profile (TPP), approval and reimbursement, effect size, defensible benefit-risk assessment, shared decision-making, commercial viability, patient needs

Courtesy of Bennett Levitan

Fragile-X Syndrome

• Rare genetic condition affecting development

– Learning and intellectual disabilities, cognitive impairment, behavioral challenges (ADHD, autism, social anxiety), physical features

– No cure; educational and therapeutic support

• Preference study conducted to prepare for a phase 3 study

– Intent was to identify which endpoints or components of existing instruments were most important to patients

– Survey administered to family members, given patients' cognitive limitations

Courtesy of Bennett Levitan

Clinician and Patient Caretaker Beliefs

Figure: preference weights (axis from -2 to 1) for three levels (very hard, somewhat hard, not hard) of each ability/outcome: learn and apply new skills, explain needs, control own behavior, take part in new social activities, care for self, and pay attention. The relative-importance values shown are 10, 6.0, 8.5, 9.9, 4.3, and 4.2. The figure contrasts the clinical and commercial perspective of the most important endpoints with the caretaker perspective of the most important endpoints (N = 614).

J. Cross, CNS Summit, Nov, 2012
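Relative importance is commonly computed from level-specific preference weights as the range of each attribute's weights (best level minus worst level), rescaled so the most important attribute scores 10. The slide's values peak at 10, which is consistent with this scaling, but that is an assumption; the weights and attribute names below are hypothetical.

```python
def relative_importance(level_weights):
    """Attribute importance as the range of its level weights, rescaled so the top attribute is 10."""
    ranges = {attr: max(w) - min(w) for attr, w in level_weights.items()}
    top = max(ranges.values())
    return {attr: 10 * r / top for attr, r in ranges.items()}


if __name__ == "__main__":
    # Hypothetical weights for "very hard", "somewhat hard", "not hard".
    example = {"attribute X": [-2.0, -1.0, 0.0], "attribute Y": [-0.5, 0.0, 0.5]}
    print(relative_importance(example))
```

Because the measure depends on the range of levels included in the survey, importance scores are comparable only within a study design, not across studies.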

Trade-off Task in Disease Interception

R. DiSantostefano, B. Levitan, F. R. Johnson, S. Reed, J. Streffer and J. Yang, “Statistical Opportunities in Disease Interception”. Joint Statistical Meetings, Baltimore, July 2017.

Alzheimer’s Disease Preference Study

Maximum Acceptable Risk

Figure: maximum acceptable risk for scenarios with 4 years and 8 years of normal memory.

Patients are willing to accept high risks of disabling stroke in exchange for 2 more years of normal memory.

Johnson, et al. Manuscript under review

Maximum Acceptable Risk

Figure: maximum acceptable risk for scenarios with 4 years and 8 years of normal memory.

MAR decreases as healthy lifespan increases.

Johnson, et al. Manuscript under review

Latent-Class Analysis

Figure: relative importance (0.0 to 0.5) of attributes in the trading class (40% of sample), risk-averse class (33%), and AD-averse class (27%).

Statistically significant participant-level covariates:

• Younger

• Older

• Health problems reported

• No health problems reported

• Less likely to have AD caregiving experience

• Least likely to have AD caregiving experience

• Most likely to have AD caregiving experience

• Assigned to 16-year version

• Assigned to 12-year version

Johnson, et al. Manuscript under review

Family of Tradeoffs in Target Product Profiles (TPPs)

• TPP is a point on a continuum of tradeoffs

Figure: efficacy and safety axes showing the ideal product, a single TPP, and the acceptable-tradeoff frontier that defines a family of TPPs.

• Can generate a family of viable TPPs

• Provides much greater flexibility for aligning the product with TPP goals: more “shots on goal”

• Lessens post hoc/ad hoc changes in differentiation strategy

Courtesy of Bennett Levitan
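A family of TPPs can be traced from the same utility-equivalence logic used for maximum acceptable risk: for each efficacy level, find the largest risk that keeps utility at or above a reference profile. A minimal sketch, assuming utility is linear in efficacy and risk; the weights, reference utility, and function name are hypothetical.

```python
def family_of_tpps(efficacy_levels, reference_utility, efficacy_weight, risk_weight):
    """For each efficacy level, the largest risk keeping utility at or above a reference.

    Utility is assumed linear: U = efficacy_weight * efficacy + risk_weight * risk,
    with risk_weight < 0. Each (efficacy, max_risk) pair is one point on the
    acceptable-tradeoff frontier, i.e. one candidate TPP.
    """
    if risk_weight >= 0:
        raise ValueError("risk_weight must be negative")
    family = []
    for eff in efficacy_levels:
        max_risk = (efficacy_weight * eff - reference_utility) / -risk_weight
        if max_risk >= 0:  # levels that cannot reach the reference even at zero risk are dropped
            family.append((eff, max_risk))
    return family


if __name__ == "__main__":
    print(family_of_tpps([1, 2, 3, 4], reference_utility=1.0, efficacy_weight=0.5, risk_weight=-0.25))
```

Any product whose (efficacy, risk) pair falls on or below this frontier is, under these assumptions, at least as acceptable to patients as the reference, which is what gives development teams several viable targets instead of one.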

Identifying Differences Between Key Stakeholders

Preferences for Anticoagulants in Atrial Fibrillation

Figure: preference weights for the outcomes death, disabling stroke, non-disabling stroke, major bleeding, heart attack, and blood clot, shown separately for US physicians and US patients.

Levitan, Yuan, González, et al., ISPOR 18th Ann Int Mtg, 2013

Contact Information

Reed [email protected]

919 668 1075

Shelby [email protected]

919 668 8991

Juan Marcos [email protected]

919 668 5157


Join MDIC at upcoming events

• May 18 MDIC workshop on patient preference in clinical trial design – In Arlington, VA and online – http://mdic.org/spi/pcor/workshop

• May 22 – Panel presentation at ISPOR: What is patient-centered and fit-for-purpose patient preference information?

• May 30, 2018 – MDICx webinar Update on CDRH Patient-Centered Initiatives – details soon at http://mdic.org/mdicx

• June 8, 2018 - Patient-Focused Medical Products Development in Sleep Apnea - http://www.awakesleepapnea.org/

• Recording and slides from today’s webinar will be available on http://mdic.org/mdicx