Date posted: 21-Dec-2015

Establishing the Reliability and Validity of Outcomes Assessment Measures

Anthony R. Napoli, PhD

Lanette A. Raymond, MA

Office of Institutional Research & Assessment

Suffolk County Community College

http://sccaix1.sunysuffolk.edu/Web/Central/IT/InstResearch/

Validity defined

The validity of a measure indicates to what extent items measure some aspect of what they are purported to measure

Content Validity

Incorporates quantitative estimates

Domain Sampling

The simple summing or averaging of dissimilar items is inappropriate

Construct Validity

Indicated by correspondence of scores to other known valid measures of the underlying theoretical trait

Discriminant Validity

Convergent Validity

Criterion-Related Validity

Represents performance in relation to particular tasks of discrete cognitive or behavioral objectives

Predictive Validity

Concurrent Validity

Reliability defined

The reliability of a measure indicates the degree to which an instrument consistently measures a particular skill, knowledge base, or construct

Reliability is a precondition for validity

Types of Reliability

Inter-rater (scorer) reliability

Inter-item reliability

Test-retest reliability

Split-half & alternate forms reliability

Validity & Reliability in Plain English

Assessment results must represent the institution, program, or course

Evaluating the validity and reliability of the assessment instrument and/or rubric provides the documentation that they do

Content Validity for Subjective Measures

The learning outcomes represent the program/course (domain sampling)

The instrument addresses the learning outcomes

There is a match between the instrument and the rubric

Rubric scores can be applied to the learning outcomes, and indicate the degree of student achievement within the program/course

Inter-Scorer Reliability

Rubric scores can be obtained and applied to the learning outcomes, and indicate the degree of student achievement within the program/course consistently

Content Validity for Objective Measures

The learning outcomes represent the program/course

The items on the instrument address specific learning outcomes

Instrument scores can be applied to the learning outcomes, and indicate the degree of student achievement within the program/course

Inter-Item Reliability

Items that measure the same learning outcomes should consistently exhibit similar scores

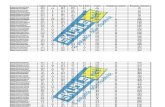

Content Validity (CH19)

| Objective | Description |
|---|---|
| I | Write and decipher chemical nomenclature |
| II | Solve both quantitative and qualitative problems |
| III | Balance equations and solve mathematical problems associated w/ balanced equations |
| IV | Demonstrate an understanding of intra-molecular forces |

A 12-item test measured students’ mastery of the objectives

Content Validity (CH19)

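| Item # | Rater percentages (in objective-column order) | Assigned objective |
|---|---|---|
| 1 | 100.00% | 2 |
| 2 | 100.00% | 1 |
| 3 | 100.00% | 4 |
| 4 | 25.00%, 75.00% | 4 |
| 5 | 25.00%, 75.00% | 4 |
| 6 | 100.00% | 1 |
| 7 | 100.00% | 3 |
| 8 | 100.00% | 4 |
| 9 | 12.50%, 87.50% | 4 |
| 10 | 100.00% | 3 |
| 11 | 100.00% | 1 |
| 12 | 12.50%, 12.50%, 75.00% | 3 |

| | Objective 1 | Objective 2 | Objective 3 | Objective 4 |
|---|---|---|---|---|
| # of items linked to each objective | 3 | 1 | 3 | 5 |
| Items assigned to objective | 2, 6, 11 | 1 | 7, 10, 12 | 3, 4, 5, 8, 9 |

Overall agreement rate = 91.67%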

Content Validity (SO11)

| Objective | Description |
|---|---|
| I | Identify the basic methods of data collection |
| II | Demonstrate an understanding of basic sociological concepts and social processes that shape human behavior |
| III | Apply sociological theories to current social issues |

A 30-item test measured students’ mastery of the objectives

Content Validity (SO11)

| Test Question Number (objective 1) | Rated OB1 | Item Agreement % |
|---|---|---|
| 1 | 0 | 0.0 |
| 2 | 8 | 100.0 |
| 3 | 7 | 87.5 |
| 4 | 1 | 12.5 |
| 5 | 0 | 0.0 |
| 6 | 0 | 0.0 |
| 7 | 0 | 0.0 |
| 8 | 8 | 100.0 |
| 9 | 8 | 100.0 |
| 10 | 8 | 100.0 |

Objective-wide agreement rate: 50.0

Questions effectively linked to objective 1: 5 (Items 2, 3, 8, 9, 10)
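The item agreement percentages and the objective-wide rate for objective 1 can be reproduced with a few lines of arithmetic. The sketch below assumes 8 raters (inferred from 8 ratings corresponding to 100.0%) and uses a hypothetical 75% cutoff for calling an item "linked"; the slides do not state the cutoff that was actually used.

```python
# Raters (out of an assumed 8) who matched each item to objective 1,
# copied from the SO11 objective 1 table.
RATERS = 8  # assumption: inferred from 8 ratings = 100.0%
rated_ob1 = {1: 0, 2: 8, 3: 7, 4: 1, 5: 0, 6: 0, 7: 0, 8: 8, 9: 8, 10: 8}

LINK_THRESHOLD = 75.0  # hypothetical cutoff; not stated in the slides


def item_agreement(counts, n_raters):
    """Percentage of raters who assigned each item to the objective."""
    return {item: 100.0 * c / n_raters for item, c in counts.items()}


agreement = item_agreement(rated_ob1, RATERS)

# Objective-wide rate: the mean of the item agreement percentages.
objective_rate = sum(agreement.values()) / len(agreement)

# Items whose agreement meets the (assumed) cutoff.
linked_items = [item for item, pct in agreement.items() if pct >= LINK_THRESHOLD]

print(agreement[3])     # 87.5
print(objective_rate)   # 50.0
print(linked_items)     # [2, 3, 8, 9, 10]
```

Under these assumptions the output matches the objective 1 figures reported on the slide.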

Content Validity (SO11)

| Test Question Number (objective 3) | Rated OB3 | Item Agreement % |
|---|---|---|
| 21 | 5 | 62.5 |
| 22 | 1 | 12.5 |
| 23 | 1 | 12.5 |
| 24 | 3 | 37.5 |
| 25 | 3 | 37.5 |
| 26 | 2 | 25.0 |
| 27 | 3 | 37.5 |
| 28 | 4 | 50.0 |
| 29 | 6 | 75.0 |
| 30 | 3 | 37.5 |

Objective-wide agreement rate: 37.4

Questions effectively linked to objective 3: 1 (Item 29)

Inter-Rater Reliability: Fine Arts Portfolio

Drawing

Design

Technique

Creativity

Artistic Process

Aesthetic Criteria

Growth

Portfolio Presentation

Scale:

5 = Excellent

4 = Very Good

3 = Satisfactory

2 = Unsatisfactory

1 = Unacceptable

Inter-Rater Reliability: Fine Arts Portfolio

| Point of Evaluation | Alpha | High Avg Score | Low Avg Score | Improved Alpha |
|---|---|---|---|---|
| Drawing | 0.857 | 4.7 | 4.3 | n/a |
| Design | 0.934 | 4.5 | 3.8 | 0.946 |
| Technique | 0.948 | 4.5 | 4.1 | 0.954 |
| Creativity | 0.934 | 4.2 | 3.7 | n/a |
| Artistic Process | 0.973 | 4.1 | 3.6 | n/a |
| Aesthetic Criteria | 0.931 | 4.3 | 3.6 | n/a |
| Growth | 0.91 | 4.3 | 3.8 | n/a |
| Portfolio Presentation | 0.909 | 4.1 | 3.3 | 0.919 |
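An alpha of this kind is commonly computed as Cronbach's alpha with the raters treated as "items". The sketch below is a minimal illustration under that assumption, using made-up ratings (5 portfolios, 3 raters), not the Fine Arts data. It also shows alpha after dropping one rater, which is one plausible reading of the "Improved Alpha" column; the slides do not say how that column was derived.

```python
from statistics import pvariance


def cronbach_alpha(ratings):
    """Cronbach's alpha; ratings has one row per portfolio, one column per rater."""
    k = len(ratings[0])
    # Variance of each rater's scores across portfolios.
    rater_vars = [pvariance([row[j] for row in ratings]) for j in range(k)]
    # Variance of the summed score each portfolio received.
    total_var = pvariance([sum(row) for row in ratings])
    return (k / (k - 1)) * (1 - sum(rater_vars) / total_var)


def alpha_if_rater_dropped(ratings, j):
    """Alpha recomputed without rater j (0-based column index)."""
    reduced = [row[:j] + row[j + 1:] for row in ratings]
    return cronbach_alpha(reduced)


# Hypothetical 1-5 rubric scores, for illustration only.
toy_ratings = [
    [5, 4, 5],
    [4, 4, 4],
    [3, 3, 2],
    [2, 3, 3],
    [1, 1, 1],
]

alpha_all = cronbach_alpha(toy_ratings)
alpha_without_second = alpha_if_rater_dropped(toy_ratings, 1)

print(round(alpha_all, 3))             # 0.958
print(round(alpha_without_second, 3))  # 0.947
```

Because alpha depends only on a ratio of variances, using sample variances instead of population variances throughout would give the same result.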

Inter-Item Reliability (PC11)

Objective Description

Demonstrate a satisfactory knowledge of:

1. the history, terminology, methods, & ethics in psychology

2. concepts associated with the 5 major schools of psychology

3. the basic aspects of human behavior including learning and memory, personality, physiology, emotion, etc…

4. an ability to obtain and critically analyze research in the field of modern psychology

A 20-item test measured students’ mastery of the objectives

Inter-Item Reliability (PC11)

Embedded-questions methodology

Inter-item or internal consistency reliability (KR-20): rtt = .71

Mean score = 12.478

Std Dev = 3.482

Std Error = 0.513

Mean grade = 62.4%
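For readers unfamiliar with KR-20, the following is a minimal sketch of the standard formula for right/wrong (0/1) items: k/(k-1) times one minus the ratio of the summed item variances (p times q) to the variance of total scores. The response matrix is a made-up example, not the PC11 data, and population variance is assumed for the total scores so that it matches the p*q item variances.

```python
from statistics import pvariance


def kr20(responses):
    """KR-20 for dichotomous items; one row per student, one 0/1 entry per item."""
    n_students = len(responses)
    k = len(responses[0])
    # Sum of p*q over items, where p is the proportion answering correctly.
    pq_sum = 0.0
    for j in range(k):
        p = sum(row[j] for row in responses) / n_students
        pq_sum += p * (1 - p)
    # Population variance of total scores (assumed convention, see lead-in).
    total_var = pvariance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - pq_sum / total_var)


# Hypothetical answers from 5 students on a 4-item test (1 = correct).
toy_responses = [
    [1, 1, 1, 1],
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [0, 0, 0, 0],
]

reliability = kr20(toy_responses)
print(round(reliability, 2))  # 0.8
```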

Inter-Item Reliability (PC11): Motivational Comparison

2 Groups

Graded Embedded Questions

Non-Graded Form & Motivational Speech

Mundane Realism

Inter-Item Reliability (PC11): Motivational Comparison

Graded condition produces higher scores (t(78) = 5.62, p < .001).

Large effect size (d = 1.27).

[Bar chart] Mean score: Non-Graded = 8.6 (43%), Graded = 12.5 (62%)
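The reported effect size can be related to the reported t statistic through the usual identity for an independent-samples t-test, d = t * sqrt(1/n1 + 1/n2). The sketch below assumes group sizes that the slides do not state directly: 46 graded students (the n shown in the correlation table) and 34 non-graded students (so that the degrees of freedom total 78).

```python
import math


def cohens_d_from_t(t, n1, n2):
    """Cohen's d recovered from an independent-samples (pooled-variance) t."""
    return t * math.sqrt(1.0 / n1 + 1.0 / n2)


t_reported = 5.62
n_graded = 46      # assumption: taken from the n column of the correlation table
n_non_graded = 34  # assumption: chosen so that n1 + n2 - 2 = 78

d = cohens_d_from_t(t_reported, n_graded, n_non_graded)
print(round(d, 2))  # 1.27
```

Under these assumed group sizes the result agrees with the reported d = 1.27.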

Inter-Item Reliability (PC11): Motivational Comparison

[Bar chart] Students reaching minimum competency: Non-Graded = 0%, Graded = 41%

Minimum competency 70% or better

Graded condition produces greater competency (Z = 5.69, p < .001).
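A Z statistic for the difference between two proportions can be sketched as follows. The counts are assumptions, since the slides report only percentages: 19 of 46 graded students (about 41%) and 0 of 34 non-graded students reaching competency. The unpooled standard error is also an assumption; the slides do not say which form of the test was used.

```python
import math


def two_proportion_z_unpooled(x1, n1, x2, n2):
    """Z for p1 - p2 using the unpooled standard error."""
    p1, p2 = x1 / n1, x2 / n2
    se = math.sqrt(p1 * (1 - p1) / n1 + p2 * (1 - p2) / n2)
    return (p1 - p2) / se


# Assumed counts (not stated in the slides): 19/46 graded, 0/34 non-graded.
z = two_proportion_z_unpooled(19, 46, 0, 34)
print(round(z, 2))  # 5.69
```

With these assumed counts the unpooled form gives a value in line with the reported Z = 5.69; a pooled standard error would give a smaller value.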

Inter-Item Reliability (PC11): Motivational Comparison

In the non-graded condition this measure is neither reliable nor valid

[Bar chart] KR-20: Non-graded = 0.29, Graded = 0.71

Criterion-Related Concurrent Validity (PC11)

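Correlations between assessment measure, course outcomes, and reading comprehension scores.

| Measure | Correlation with (2) | Correlation with (3) | Correlation with (4) | Mean | Std | n |
|---|---|---|---|---|---|---|
| Assessment grade (1) | .85*** | .79*** | .45* | 62.4 | 17.4 | 46 |
| Term Average (2) | | .95*** | .48** | 81.4 | 12.1 | 46 |
| Course GPA (3) | | | .45* | 2.9 | 0.9 | 46 |
| CPT-Reading Comprehension test (4) | | | | 80.6 | 18.0 | 30 |

Note: * p < .05, ** p < .01, *** p < .0001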
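The significance markers on the correlations with the reading comprehension test can be checked with the standard conversion of a Pearson r into a t statistic, t = r * sqrt(n - 2) / sqrt(1 - r^2). The sketch below assumes those correlations are based on the 30 students with CPT scores, and compares against two-tailed critical values of t for 28 degrees of freedom.

```python
import math


def t_from_r(r, n):
    """t statistic (df = n - 2) for testing a Pearson correlation against zero."""
    return r * math.sqrt(n - 2) / math.sqrt(1 - r * r)


N_PAIRS = 30       # assumption: the n reported for the CPT reading test
T_CRIT_05 = 2.048  # two-tailed critical t, df = 28, alpha = .05
T_CRIT_01 = 2.763  # two-tailed critical t, df = 28, alpha = .01

t_r45 = t_from_r(0.45, N_PAIRS)
t_r48 = t_from_r(0.48, N_PAIRS)

print(T_CRIT_05 < t_r45 < T_CRIT_01)  # True: significant at .05 but not .01
print(t_r48 > T_CRIT_01)              # True: significant at .01
```

Under that assumption, r = .45 clears the .05 threshold only and r = .48 clears the .01 threshold, which is consistent with the single and double asterisks in the table.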

“When you can measure what you are speaking about and express it in numbers, you know something about it; but when you cannot express it in numbers, your knowledge is of a meager and unsatisfactory kind.”

-- Lord Kelvin --

“There are three kinds of lies: lies, damned lies, and statistics.” -- Benjamin Disraeli --