Entropy-based Histograms for Selectivity Estimation

-

Upload

university-of-southern-california -

Category

Science

-

view

310 -

download

1

Transcript of Entropy-based Histograms for Selectivity Estimation

1H I E N T O , U N I V E R S I T Y O F S O U T H E R N C A L I F O R N I AK U O R O N G C H I A N G , T E R A D A T A

C Y R U S S H A H A B I , U N I V E R S I T Y O F S O U T H E R N C A L I F O R N I A

ENTROPY-BASED HISTOGRAMS FOR SELECTIVITY ESTIMATION

MOTIVATION

• Query optimization• Selectivity estimation of intermediate relations

significantly influences the choice of a query plan

• Selectivity estimation is estimating the size of the result set of a relational algebra operator

2

PROBLEM STATEMENT

• Histogram is the most popular technique used in commercial DBMS• Challenges of constructing histograms• Large error on skewed datasets• Fast construction time

3

Spike

Value

Freq

ZipfValue

Freq

RELATED WORK

• Classical Histograms• [Shapiro’84] Equi-Width and Equi-Height

• Variants of V-optimal Histograms:• [Ioannidis’93] V-Optimal• [Poosala’96] Iterative Improvement• [Jagadish’98] Dynamic programming – Efficiency

issue • Local Search Approaches• [Poosala’96] Max-Diff• [Jagadish’98] MHIST• [Terzi’06] DnS• [Guha’06] AHistL• [Halim’09] GDY_BDP 4

)( 2BNO

CONTRIBUTIONS

5

Created the FIRST entropy-based histogram (EBH) which outperforms existing approaches for range query

Developed two EBHs which are superior for equality query

Conducted extensive experiments to evaluate our proposed techniques with many other histogram algorithms in terms of accuracy and efficiency

Provided a general best cut framework for all EBHs and reduce the complexity of the algorithms with incremental and pruning techniques

OUTLINE

• Histogram for Selectivity Estimation• Maximum Entropy (Range Query)• Minimum Selectivity Error (Equality Query)• Experiments

6

HISTOGRAM FOR SELECTIVITY ESTIMATION

• Partition the original distribution (N attribute values) into B buckets (N>B)• #of tuples; : #distinct values within

• Selectivity factors• Equality• Range

7

32 2

1

1 2 3 4Original distribution Approximated distribution

3

2 21

1 2 3 4 1 2 3 4

ii dvsbvx /1)( )(/)()()( 1221 iii blenvvsbvxbv

idvit ibib

24

1

1

dvt

24

2

2

dvt

Bucketizing

OUTLINE

• Histogram for Selectivity Estimation• Maximum Entropy (Range Query)• Minimum Selectivity Error (Equality Query)• Experiments

8

MAXIMUM ENTROPY HISTOGRAM

• Entropy is a measure of information content• Entropy for selectivity estimation• Why ME for histogram?• Maximizing information content is the goal of any

histogram• Achieving standard uniformity is equivalent to

grouping similar frequencies in the same bucket in histogram

9B1 B2

32 2

1

1 2 3 4

1)( 1 bH

81.0)( 2 bH

ME HISTOGRAM CONSTRUCTION

• Entropy of a bucket (Shannon)• : the probability of a value in the bucket

• Entropy of a histogram• : the number of tuples in

• Best cut approach• Iteratively chooses B – 1 global best cuts• Minimize reduction in histogram entropy

• Local best cuts minimize entropy reduction within each bucket

• Complexity

10

idv

jjji ppbH

12 )(log)(

B

iii bHbWH

1

)()(

)( RHSLHSO HHHH

)( 2NO

jp

)( ibWib

INCREMENTAL AND PRUNNING TECHNIQUES

• Local cuts optimization• Update entropy of a bucket (paper)• Compute entropy reduction incrementally

• Global cuts optimization• Most of the local best cuts are already known• Min heap stores minimum entropy reductions of all buckets

• Complexity11

)log( BNO

)( RHSLHSO HHHH

OUTLINE

• Histogram for Selectivity Estimation• Maximum Entropy (Range Query)• Minimum Selectivity Error (Equality Query)• Experiments

12

13

ENTROPY-BASED SELECTIVITY FACTOR

Distribution

H Improvement

(0.5,0.5) 0 1 0 0%(0.6,0.4) 0.1 0.97 0.098 0.2%(0.8,0.2) 0.3 0.72 0.25 5%(0.9,0.1) 0.4 0.469 0.26 14%(0.99,0.01) 0.49 0.081 0.1 39%

ii dvs /1 )(2 ibHis

The more biased the data, the more the entropy model can improve upon the estimation error

Average error

Estimation error tends to occur as under-estimation!

14

• Total selectivity error

• : expected squared error of selectivity factor

• : the probability of a value in the bucket• : the entropy-based selectivity factor of

• MSE maximizes error reduction• Best cut approach• Incremental and prunning techniques

MINIMUM SELECTIVITY ERROR (MSE)

B

iii bEbW

1

)()(

idv

jijji sppbE

1

2)()(

)( ibE

jpis ib

OUTLINE

• Histogram for Selectivity Estimation• Maximum Entropy (Range Query)• Minimum Selectivity Error (Equality Query)• Experiments

15

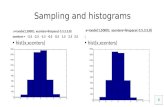

EXPERIMENTAL SETUP: DATA DISTRIBUTIONS

• Two real-world time series (Phone and Balloon)• 12 synthetic skewed datasets• The number of attribute values N is 1000

16

Value sets

Frequency setszipf zipf_ran

Uniform S=2 T=800K

S=0.2 T=800K

zipf_inc S=38 T=700K

S=16 T=500K

zipf_dec S=-1.5 T=800K

S=-23 T=400K

cusp_min S=2/8 T=800K

S=-0.1 T=600K

cusp_max S=-1.6 T=800K

S=-5 T=700K

zipf_ran S=2.9 T=700K

S=0.6 T=400K

Synthetic datasets [Poosala’96]

0

50000

100000

Value

Freq

0 46 93 1401872350

400

800

1200

Value

Freq

0 -0.252-0.1 0.006 10

100

200

Value

Freq

Phone Balloon

Spike

Real datasets Halim’09]

CONSTRUCTION COST

• B = 30 and N =1000 20,000• MB: Minimum Bias histogram

17

Algorithm TimeVODPDnSAHistLMHISTGDY_BDPVOIIME,MSE,MBMDEHEW

)( 2BNO

)( 3/53/4 BNO

)log)(log( 23 nnBnO )(NBO

)(NBO)(NBO

)log( BNO

)log( BNO)(NO

)(BO

Algorithm

Input size (N)1000 10,00

020,000

VODP 235 2,325 5,423MHIST 0.547 49 211VOII 0.047 2.376 8.885MSE 0.024 0.033 0.045MB 0.02 0.025 0.036ME 0.017 0.02 0.036MD 0.015 0.015 0.025EH 0 0.001 0.001EW 0 0.001 0.001

Construction time in seconds (uniform_zipf dataset)Complexity

RANGE QUERY- REAL• 10,000 random queries whose midpoints are in the value

domain and their lengths are bounded by an offset• Offset =1 means query length = domain size/B

• ME consistently performs well, and it outperforms most of the other techniques

18a) Balloon dataset (top 5 techniques)

1 2 4 6 8 100

300

600GDY_BDPMEVODPMD

Query size (offset)

RM

SE (x

100)

EQUALITY QUERY- REAL• 10,000 random queries (x = val), val is in the value domain• MSE, MD and MB are close to the optimal line produced by

VODP, in which MSE performs best

19

10 20 30 40 500

100

200

300VODP

GDY_BDP

MSE

MB

MD

B (number of buckets)

RM

SE

a) Phone dataset (top 5 techniques)

20

RANGE QUERY VS EQUALITY QUERY – 12 SYN

Range query• Avg errors of ME and MHIST

are only 8% larger than that of VODP while the complexity of ME is lower

Equality query• Avg error of MSE is 21%

smaller than that of MD while their complexities are the same

0100020003000400050006000

RM

SE (x

100

0)

VODP

MHISTVOII ME

MD0

100002000030000400005000060000

RM

SE (x

10,

000)

Average estimation error over 12 skewed synthetic datasets (top 5 techniques)

CONCLUSION

21

Provided a general framework to construct EBHs

Proposed a class of entropy-based histograms (EBHs) for both range queries (based on maximum entropy) and equality queries (based on entropy for estimating selectivity factor and bias factor)

Designed an incremental approach to efficiently construct the histograms based on the incremental property of entropyEBHs offer the best performance for both range and equality queries in terms of balancing efficiency and accuracy

QUALITY IMPROVEMENT(RANGE QUERY)

• Frequency approach• Ignore the spread of the values

• Area approach• Area = spread * frequency [Poosala’96] • Considers proximity of both the value and frequency

sets• Incoporate area approach into ME histogram• Improve range query estimation

22

21 1 1 1

0 4 5 6 9

23

REFERENCES

• Hien To, Kuorong Chiang, and Cyrus Shahabi. Entropy-based histograms for selectivity estimation. In Proceedings of the 22nd ACM International Conference on Conference on information & knowledge management (CIKM 2013). ACM, 2013. (Acceptance rate ~17%) (Paper) (PPT)