
EE30332 Ch7 DP .1

° Most of the slides are from Prof. Dave Patterson of University of California at Berkeley

° Part of the material is from Sun Microsystems

° Part of the material is from AF

° "Copyright 1997 UCB." Permission is granted to alter and distribute this material provided that the following credit line is included: "Adapted from (complete bibliographic citation). Copyright 1997 UCB."

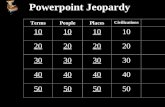

Ch 7 Memory Hierarchy

EE30332 Ch7 DP .2

Ch 7 Memory Hierarchy

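DRAM

| Year | Size | Cycle Time |
|------|------|------------|
| 1980 | 64 Kb | 250 ns |
| 1983 | 256 Kb | 220 ns |
| 1986 | 1 Mb | 190 ns |
| 1989 | 4 Mb | 165 ns |
| 1992 | 16 Mb | 145 ns |
| 1995 | 64 Mb | 120 ns |

1980 to 1995: 1000:1 in size, but only 2:1 in cycle time!

| | Capacity | Speed (latency) |
|-------|---------------|-----------------|
| Logic | 2x in 3 years | 2x in 3 years |
| DRAM | 4x in 3 years | 2x in 10 years |
| Disk | 4x in 3 years | 2x in 10 years |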

EE30332 Ch7 DP .3

Who Cares About the Memory Hierarchy?

[Figure: Processor-DRAM Memory Gap (latency). Performance (log scale, 1 to 1000) versus time (1980 to 2000). CPU (“Moore’s Law”): µProc 60%/yr. (2X/1.5 yr). DRAM: 9%/yr. (2X/10 yrs). Processor-Memory Performance Gap: grows 50% / year.]

EE30332 Ch7 DP .4

Impact on Performance

° Suppose a processor executes at

• Clock Rate = 200 MHz (5 ns per cycle)

• CPI = 1.1

• 50% arith/logic, 30% ld/st, 20% control

° Suppose that 10% of memory operations get a 50-cycle miss penalty

° CPI = ideal CPI + average stalls per instruction

= 1.1 (cycles) + (0.30 (data mops/ins) x 0.10 (miss/data mop) x 50 (cycles/miss))

= 1.1 cycles + 1.5 cycles = 2.6

° 58% of the time the processor is stalled waiting for memory!

° A 1% instruction miss rate would add an additional 0.5 cycles to the CPI!

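[Pie chart: CPI breakdown with both data and instruction misses (total 3.1): Data Miss (1.5) about 48%, Ideal CPI (1.1) about 35%, Inst Miss (0.5) about 16%.]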
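The arithmetic on this slide can be checked with a few lines of Python. This is only a sketch: the function name `cpi_with_stalls` and its parameter names are made up for illustration, and the numbers are the ones given on the slide.

```python
# Sketch of the slide's CPI calculation; all names here are illustrative.
def cpi_with_stalls(ideal_cpi, mem_ops_per_instr, data_miss_rate,
                    miss_penalty, instr_miss_rate=0.0):
    """Return (total CPI, data stall cycles, instruction stall cycles)."""
    data_stalls = mem_ops_per_instr * data_miss_rate * miss_penalty
    # Every instruction is fetched once, so instruction misses count per instruction.
    instr_stalls = instr_miss_rate * miss_penalty
    return ideal_cpi + data_stalls + instr_stalls, data_stalls, instr_stalls

cpi, data_stalls, _ = cpi_with_stalls(1.1, 0.30, 0.10, 50)
print(f"CPI = {cpi:.1f}, stalled {data_stalls / cpi:.0%} of the time")  # CPI = 2.6, stalled 58% of the time

cpi_i, _, instr_stalls = cpi_with_stalls(1.1, 0.30, 0.10, 50, instr_miss_rate=0.01)
print(f"with 1% instruction misses: CPI = {cpi_i:.1f}")  # CPI = 3.1
```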

EE30332 Ch7 DP .5

The Goal: illusion of large, fast, cheap memory

° Fact: Large memories are slow, fast memories are small

° How do we create a memory that is large, cheap and fast (most of the time)?

• Hierarchy

• Parallelism

EE30332 Ch7 DP .6

An Expanded View of the Memory System

[Figure: Processor (Control, Datapath) connected to a chain of memories of increasing size. From the memory nearest the processor to the farthest: Speed: fastest to slowest. Size: smallest to biggest. Cost: highest to lowest.]

EE30332 Ch7 DP .7

Why hierarchy works

° The Principle of Locality:

• Programs access a relatively small portion of the address space at any instant of time.

[Figure: probability of reference versus address space (0 to 2^n - 1).]

EE30332 Ch7 DP .8

Memory Hierarchy: How Does it Work?

° Temporal Locality (Locality in Time):

=> Keep the most recently accessed data items closer to the processor

° Spatial Locality (Locality in Space):

=> Move blocks consisting of contiguous words to the upper levels

[Figure: Upper Level Memory (holding Blk X) sits between the processor (To Processor / From Processor) and Lower Level Memory (holding Blk Y).]

EE30332 Ch7 DP .9

Memory Hierarchy: Terminology

° Hit: data appears in some block in the upper level (example: Block X)

• Hit Rate: the fraction of memory accesses found in the upper level

• Hit Time: RAM access time + time to determine hit/miss

° Miss: data needs to be retrieved from lower-level storage

• Miss Rate = 1 - (Hit Rate)

• Miss Penalty: time to replace a block in the upper level + time to deliver the block to the processor

° Hit Time << Miss Penalty

[Figure: Upper Level Memory (holding Blk X) sits between the processor (To Processor / From Processor) and Lower Level Memory (holding Blk Y).]

EE30332 Ch7 DP .10

How is the hierarchy managed?

° Registers <-> Memory (the cache is a faster copy of memory)

• by the compiler (programmer?)

° Cache <-> memory

• by the hardware

° Memory <-> disks

• by the hardware and operating system (virtual memory)

• by the programmer (files)

° The processor sees registers, cache, and memory, but not disk, when executing instructions

° Disks are handled as I/O, through page faults to/from memory

EE30332 Ch7 DP .11

Memory Hierarchy Technology

° Random Access:

• “Random” is good: access time is the same for all locations

• DRAM: Dynamic Random Access Memory

- High density, low power, cheap, slow

- Dynamic: needs to be “refreshed” regularly

• SRAM: Static Random Access Memory

- Low density, high power, expensive, fast

- Static: content will last “forever” (until power is lost)

° “Not-so-random” Access Technology:

• Access time varies from location to location and from time to time

• Examples: Disk, CDROM

° Sequential Access Technology: access time linear in location (e.g., Tape)

° Main Memory: DRAMs; Caches: SRAMs

EE30332 Ch7 DP .12

Main Memory Background

° Performance of Main Memory:

• Latency: Cache Miss Penalty

- Access Time: time between the request and the word's arrival

- Cycle Time: minimum time between requests

• Bandwidth: I/O & Large Block Miss Penalty (L2)

° Main Memory is DRAM: Dynamic Random Access Memory (except supercomputers)

• Dynamic since it needs to be refreshed periodically (8 ms)

• Addresses divided into 2 halves (Memory as a 2D matrix):

- RAS or Row Access Strobe

- CAS or Column Access Strobe

° Cache uses SRAM: Static Random Access Memory

• No refresh (6 transistors/bit vs. 1 transistor/bit)

• Address not divided

° Size: DRAM/SRAM ratio of 4-8

° Cost/Cycle time: SRAM/DRAM ratio of 8-16

EE30332 Ch7 DP .13

Random Access Memory (RAM) Technology

° Why do computer designers need to know about RAM technology?

• Processor performance is usually limited by memory bandwidth

• As IC densities increase, lots of memory will fit on processor chip

- Tailor on-chip memory to specific needs

- Instruction cache

- Data cache

- Write buffer

° What makes RAM different from a bunch of flip-flops?

• Density: RAM is much denser

EE30332 Ch7 DP .14

Classical DRAM Organization (square)

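[Figure: a square RAM Cell Array. The row decoder, driven by the row address, asserts one word (row) select line; the bit (data) lines feed the Column Selector & I/O Circuits, driven by the Column Address, which produce the data. Each intersection represents a 1-T DRAM Cell.]

° Row and Column Address together:

• Select 1 bit at a time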

EE30332 Ch7 DP .15

Increasing Bandwidth - Interleaving

Access Pattern without Interleaving:

[Figure: CPU and a single Memory. Timeline: start access for D1, D1 available, start access for D2.]

Access Pattern with 4-way Interleaving:

[Figure: CPU connected to Memory Bank 0, Memory Bank 1, Memory Bank 2, and Memory Bank 3. Timeline: access Bank 0, access Bank 1, access Bank 2, access Bank 3, then we can access Bank 0 again.]

EE30332 Ch7 DP .16

Main Memory Performance

° Timing model

• 1 cycle to send address,

• 6 cycles access time, 1 cycle to send data

• Cache Block is 4 words

° Simple miss penalty (M.P.) = 4 x (1 + 6 + 1) = 32

° Wide M.P. = 1 + 6 + 1 = 8

° Interleaved M.P. = 1 + 6 + 4 x 1 = 11
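The three miss penalties follow directly from the timing model, as the short Python sketch below shows. The function and parameter names are illustrative, and it assumes the wide memory is a full block wide and the interleaved memory has at least as many banks as words per block.

```python
# Sketch of the slide's timing model; names and defaults are illustrative.
def miss_penalties(words_per_block=4, send_addr=1, access=6, send_data=1):
    """Return (simple, wide, interleaved) miss penalties in cycles."""
    # Simple: one word at a time, each paying the full address/access/data cost.
    simple = words_per_block * (send_addr + access + send_data)
    # Wide: the whole block moves in a single access (assumes a block-wide bus).
    wide = send_addr + access + send_data
    # Interleaved: bank accesses overlap; only the data transfers are serialized.
    interleaved = send_addr + access + words_per_block * send_data
    return simple, wide, interleaved

print(miss_penalties())  # (32, 8, 11)
```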

EE30332 Ch7 DP .17

Independent Memory Banks

° How many banks?

number of banks >= number of clocks to access a word in a bank

• For sequential accesses; otherwise the processor will return to the original bank before it has the next word ready

° Increasing DRAM capacity per chip => fewer chips => harder to have banks

• Growth bits/chip DRAM: 50%-60%/yr

• Nathan Myhrvold (Microsoft): mature software growth (33%/yr for NT) is about equal to growth in MB/$ of DRAM (25%-30%/yr)

EE30332 Ch7 DP .18

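Fewer DRAMs/System over Time

Number of DRAM chips per system, by Minimum PC Memory Size (rows) and DRAM Generation (columns):

| Minimum PC Memory Size | ‘86 1 Mb | ‘89 4 Mb | ‘92 16 Mb | ‘96 64 Mb | ‘99 256 Mb | ‘02 1 Gb |
|--------|----|----|---|---|---|---|
| 4 MB | 32 | 8 | | | | |
| 8 MB | | 16 | 4 | | | |
| 16 MB | | | 8 | 2 | | |
| 32 MB | | | | 4 | 1 | |
| 64 MB | | | | 8 | 2 | |
| 128 MB | | | | | 4 | 1 |
| 256 MB | | | | | 8 | 2 |

° Memory per System growth @ 25%-30% / year

° Memory per DRAM growth @ 60% / year

(from Pete MacWilliams, Intel)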

EE30332 Ch7 DP .19

Today’s Situation: DRAM

° Commodity, second source industry => high volume, low profit, conservative

• Little organization innovation (vs. processors) in 20 years: page mode, EDO, Synch DRAM

° DRAM industry at a crossroads:

• Fewer DRAMs per computer over time

- Growth bits/chip DRAM: 50%-60%/yr

- Nathan Myhrvold (Microsoft): mature software growth (33%/yr for NT) is about equal to growth in MB/$ of DRAM (25%-30%/yr)

° DRAM is often chosen as the first major product for a new semiconductor technology because the cells are simple, the arrangement is regular, and the large volume gives an earlier return on investment

EE30332 Ch7 DP .20

Example: 1 KB Direct Mapped Cache with 32 B Blocks

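° For a 2 ** N byte cache:

• The uppermost (32 - N) bits are always the Cache Tag

• The lowest M bits are the Byte Select (Block Size = 2 ** M)

[Figure: 32-bit address split into Cache Tag (bits 31-10, example: 0x50), Cache Index (bits 9-5, ex: 0x01), and Byte Select (bits 4-0, ex: 0x00). The cache has entries at index 0 to 31, each with a Valid Bit, a Cache Tag (stored as part of the cache “state”; entry 1 holds 0x50), and 32 bytes of Cache Data: Byte 0 to Byte 31 in entry 0, Byte 32 to Byte 63 in entry 1, up to Byte 992 to Byte 1023 in entry 31.]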
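The address split for this example can be sketched in Python. The helper name `split_address` is hypothetical, the sizes are assumed to be powers of two, and the middle (N - M) bits are taken as the Cache Index, which is what the figure's bit positions imply.

```python
# Sketch: split a 32-bit address for a direct mapped cache (names are illustrative).
def split_address(addr, cache_bytes=1024, block_bytes=32):
    """Return (tag, index, byte_select); sizes must be powers of two."""
    offset_bits = block_bytes.bit_length() - 1    # M = 5 for 32 B blocks
    cache_bits = cache_bytes.bit_length() - 1     # N = 10 for a 1 KB cache
    index_bits = cache_bits - offset_bits         # middle N - M bits
    byte_select = addr & (block_bytes - 1)
    index = (addr >> offset_bits) & ((1 << index_bits) - 1)
    tag = addr >> cache_bits                      # uppermost 32 - N bits
    return tag, index, byte_select

# 0x14020 is the address built from the slide's example fields.
tag, index, byte_select = split_address(0x14020)
print(hex(tag), hex(index), hex(byte_select))  # 0x50 0x1 0x0
```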

EE30332 Ch7 DP .21

Block Size Tradeoff

° In general, larger block sizes take advantage of spatial locality BUT:

• Larger block size means larger miss penalty

- Takes longer to fill up the block

• If block size is too big relative to cache size, miss rate will go up

- Too few cache blocks

° In general, Average Access Time:

• = Hit Time x (1 - Miss Rate) + Miss Penalty x Miss Rate

[Figure: three plots against Block Size. Miss Penalty: increases with block size. Miss Rate: first falls (exploits spatial locality), then rises (fewer blocks: compromises temporal locality). Average Access Time: falls, then rises (increased Miss Penalty & Miss Rate).]
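As a quick numerical check of the Average Access Time formula on this slide, here is a minimal Python sketch. The function name and the sample values (1-cycle hit, 50-cycle miss penalty, 10% miss rate) are assumptions chosen for illustration, not measurements.

```python
# Sketch of the slide's formula; names and sample numbers are illustrative.
def average_access_time(hit_time, miss_rate, miss_penalty):
    """Hit Time x (1 - Miss Rate) + Miss Penalty x Miss Rate."""
    return hit_time * (1 - miss_rate) + miss_penalty * miss_rate

# Hypothetical values: 1-cycle hit, 50-cycle miss penalty, 10% miss rate.
aat = average_access_time(hit_time=1, miss_rate=0.10, miss_penalty=50)
print(f"{aat:.1f} cycles")  # 5.9 cycles
```

Even with a 90% hit rate, the average is dominated by the miss term, which is why both miss rate and miss penalty matter when choosing a block size.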

EE30332 Ch7 DP .22

Extreme Example: single big line

° Cache Size = 4 bytes, Block Size = 4 bytes

• Only ONE entry in the cache

° If an item is accessed, it is likely that it will be accessed again soon

• But it is unlikely that it will be accessed again immediately!!!

• The next access will likely be a miss again

- Continually loading data into the cache but discarding (forcing out) it before it is used again

- Worst nightmare of a cache designer: Ping Pong Effect

° Conflict Misses are misses caused by:

• Different memory locations mapped to the same cache index

- Solution 1: make the cache size bigger

- Solution 2: Multiple entries for the same Cache Index

[Figure: a single cache entry (index 0) with a Valid Bit, a Cache Tag, and Cache Data Byte 3 to Byte 0.]

EE30332 Ch7 DP .23

Another Extreme Example: Fully Associative

° Fully Associative Cache

• Forget about the Cache Index

• Compare the Cache Tags of all entries in parallel

° By definition: there is no Conflict Miss for a fully associative cache

[Figure: address split into Cache Tag (bits 31-5, 27 bits long) and Byte Select (bits 4-0, ex: 0x01). Every entry has a Valid Bit, a Cache Tag with its own comparator (X), and 32 bytes of Cache Data (Byte 0 to Byte 31, Byte 32 to Byte 63, ...).]

EE30332 Ch7 DP .24

A Two-way Set Associative Cache

° N-way set associative: N entries for each Cache Index

• N direct mapped caches operate in parallel

° Example: Two-way set associative cache

• Cache Index selects a “set” from the cache

• The two tags in the set are compared in parallel

• Data is selected based on the tag result

[Figure: two banks, each with Valid, Cache Tag, and Cache Data (Cache Block 0, ...) columns, both addressed by the Cache Index. Each bank's tag is compared with the address tag (Adr Tag); the two Compare outputs are ORed to form Hit and drive Sel1/Sel0 of the Mux that selects the Cache Block.]
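To see how a second entry per Cache Index removes the ping-pong conflict misses, here is a small tag-only simulator in Python. It is a sketch under stated assumptions: the class `SetAssociativeCache` is made up for illustration, and LRU replacement is an assumption, since the slides do not name a replacement policy.

```python
from collections import OrderedDict

class SetAssociativeCache:
    """Tiny tag-only cache model. LRU replacement is an assumption."""

    def __init__(self, num_sets, ways, block_bytes):
        self.num_sets, self.ways, self.block_bytes = num_sets, ways, block_bytes
        self.sets = [OrderedDict() for _ in range(num_sets)]
        self.hits = self.misses = 0

    def access(self, addr):
        block = addr // self.block_bytes
        index, tag = block % self.num_sets, block // self.num_sets
        entries = self.sets[index]
        if tag in entries:
            entries.move_to_end(tag)      # mark as most recently used
            self.hits += 1
            return True
        self.misses += 1
        if len(entries) >= self.ways:
            entries.popitem(last=False)   # evict the least recently used tag
        entries[tag] = True
        return False

# Two addresses 1 KB apart map to the same index in a 1 KB cache.
trace = [0x0000, 0x0400] * 4

direct = SetAssociativeCache(num_sets=32, ways=1, block_bytes=32)   # 1 KB, direct mapped
two_way = SetAssociativeCache(num_sets=16, ways=2, block_bytes=32)  # 1 KB, two-way
for addr in trace:
    direct.access(addr)
    two_way.access(addr)

print(direct.hits, direct.misses)    # 0 8
print(two_way.hits, two_way.misses)  # 6 2
```

Both caches hold 1 KB of data; only the organization differs. The direct mapped cache misses on every access, while the two-way cache misses only on the two first references.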

EE30332 Ch7 DP .25

Disadvantage of Set Associative Cache

° N-way Set Associative Cache versus Direct Mapped Cache:

• N comparators vs. 1

• Extra MUX delay for the data

• Data comes AFTER Hit/Miss decision and set selection

° In a direct mapped cache, Cache Block is available BEFORE Hit/Miss:

• Possible to assume a hit and continue. Recover later if miss.

[Figure: two-way set associative cache. The Cache Index selects one entry (Valid, Cache Tag, Cache Data) in each of two banks; two Compare units check the stored tags against the Adr Tag and feed an OR gate (Hit) and the Sel1/Sel0 inputs of the Mux that outputs the Cache Block.]

EE30332 Ch7 DP .26

A Summary on Sources of Cache Misses

° Compulsory (cold start or process migration, first reference): first access to a block

• “Cold” fact of life: not a whole lot you can do about it

• Note: If you are going to run “billions” of instructions, Compulsory Misses are insignificant

° Conflict (collision):

• Multiple memory locations mapped to the same cache location

• Solution 1: increase cache size

• Solution 2: increase associativity

° Capacity:

• Cache cannot contain all blocks accessed by the program

• Solution: increase cache size

° Invalidation: other process (e.g., I/O) updates memory

EE30332 Ch7 DP .27

Source of Cache Misses Quiz

Choices: Zero, Low, Medium, High, Same

| | Direct Mapped | N-way Set Associative | Fully Associative |
|---|---|---|---|
| Cache Size: Small, Medium, Big? | | | |
| Compulsory Miss | | | |
| Conflict Miss | | | |
| Capacity Miss | | | |
| Invalidation Miss | | | |

EE30332 Ch7 DP .29

Impact on Cycle Time

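Example: a direct mapped cache allows the miss signal to arrive after the data

[Figure: pipeline datapath with PC, I-Cache, IR, operand registers A and B, R, D Cache, T, and the pipelined instruction registers IRex, IRm, IRwb, with miss and invalid signals from the caches.]

° Cache Hit Time:

• directly tied to clock rate

• increases with cache size

• increases with associativity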

Average Memory Access time = Hit Time + Miss Rate x Miss Penalty

Time = IC x CT x (ideal CPI + memory stalls)
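The execution-time formula can be exercised with the numbers from the "Impact on Performance" slide (5 ns cycle, ideal CPI 1.1, 1.5 stall cycles per instruction). In this sketch the function name is illustrative and the instruction count of one billion is a hypothetical value.

```python
# Sketch of Time = IC x CT x (ideal CPI + memory stalls); names are illustrative.
def execution_time(instr_count, cycle_time_s, ideal_cpi, mem_stalls_per_instr):
    """Return execution time in seconds."""
    return instr_count * cycle_time_s * (ideal_cpi + mem_stalls_per_instr)

IC = 1_000_000_000   # hypothetical instruction count
CT = 5e-9            # 200 MHz clock: 5 ns per cycle

with_stalls = execution_time(IC, CT, 1.1, 1.5)
ideal = execution_time(IC, CT, 1.1, 0.0)
print(f"{with_stalls:.1f} s vs {ideal:.1f} s ideal")  # 13.0 s vs 5.5 s ideal
```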

EE30332 Ch7 DP .30

Improving Cache Performance: 3 general options

1. Reduce the miss rate: larger cache, higher associativity

2. Reduce the miss penalty: faster memory system

3. Reduce the time to hit in the cache: faster parts for cache

EE30332 Ch7 DP .31

Basic Cache Types (for write)

° Write through—The information is written to both the block in the cache and to the block in the lower-level memory.

° Write back—The information is written only to the block in the cache. The modified cache block is written to main memory only when it is replaced.

• is block clean or dirty?

° Pros and Cons of each?

• WT: read misses cannot result in writes

• WB: repeated writes to a block do not each cause a write to memory

° WT is always combined with write buffers so that the processor doesn't wait for lower level memory
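The difference in memory traffic between the two policies can be illustrated by counting the writes that reach memory for a sequence of stores. This is a deliberately simplified Python sketch: the function `memory_writes` is hypothetical, the write-back case models a cache holding a single block with write allocate, and the store addresses are made up.

```python
# Sketch: count writes that reach memory under each policy (names are illustrative).
def memory_writes(store_addrs, policy, block_bytes=32):
    """Count memory writes for a sequence of store addresses.

    Simplification: the write-back cache holds a single block, so a store
    to a different block replaces the current one. Real caches hold many.
    """
    if policy == "write-through":
        return len(store_addrs)           # every store also goes to memory
    writes, current, dirty = 0, None, False
    for addr in store_addrs:
        block = addr // block_bytes
        if block != current:
            if dirty:
                writes += 1               # dirty block written back on replacement
            current = block
        dirty = True                      # the cached block is now modified
    return writes + (1 if dirty else 0)   # final write-back of the last dirty block

stores = [0, 4, 8, 12, 64, 68]            # hypothetical store addresses
print(memory_writes(stores, "write-through"))  # 6
print(memory_writes(stores, "write-back"))     # 2
```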

EE30332 Ch7 DP .32

Write Buffer for Write Through

° A Write Buffer is needed between the Cache and Memory

• Processor: writes data into the cache and the write buffer

• Memory controller: write contents of the buffer to memory

° The write buffer can be a FIFO or a small associative cache:

• Typical number of entries: 4

• Works fine if: Store frequency (w.r.t. time) << 1 / DRAM write cycle

° Memory system designer’s nightmare:

• Store frequency (w.r.t. time) -> 1 / DRAM write cycle

• Write buffer saturation

[Figure: the Processor writes to the Cache and to the Write Buffer; the Write Buffer drains to DRAM.]
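Write buffer saturation can be illustrated with a small FIFO model in Python. This is a sketch under assumptions that are not in the slides: the function name is made up, DRAM performs one write at a time, and a buffer entry stays occupied until its write has completed.

```python
from collections import deque

def simulate_write_buffer(store_cycles, dram_write_cycles, entries=4):
    """Return the cycles the processor stalls because the buffer is full.

    store_cycles: increasing cycle numbers at which stores would issue
    if the processor never stalled. dram_write_cycles: cycles per DRAM write.
    """
    buffer = deque()   # completion times of writes still occupying an entry
    stall = 0
    last_done = 0      # time at which DRAM finishes its latest queued write
    for t in store_cycles:
        now = t + stall
        while buffer and buffer[0] <= now:
            buffer.popleft()              # retire writes that have completed
        if len(buffer) == entries:
            wait = buffer[0] - now        # stall until the oldest write drains
            stall += wait
            now += wait
            buffer.popleft()
        start = max(now, last_done)       # DRAM handles one write at a time
        last_done = start + dram_write_cycles
        buffer.append(last_done)
    return stall

# Stores far apart relative to the DRAM write cycle: no stalls.
print(simulate_write_buffer(range(0, 200, 20), dram_write_cycles=10))  # 0
# Stores arriving faster than DRAM can drain them: the buffer saturates.
print(simulate_write_buffer(range(0, 40, 2), dram_write_cycles=10))
```

The second call reports a nonzero stall count: once the four entries fill, every further store has to wait for DRAM, no matter how deep the buffer is.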

EE30332 Ch7 DP .33

Write Buffer Saturation

° Solution for write buffer saturation:

• Use a write back cache

• Install a second level (L2) cache:

[Figure: Processor, Cache, and Write Buffer in front of DRAM.]

[Figure: Processor, Cache, and Write Buffer in front of an L2 Cache, which sits in front of DRAM.]

EE30332 Ch7 DP .34

Write-miss Policy: Write Allocate versus Not Allocate

° Assume a 16-bit write to memory location 0x0 causes a miss

• Do we read in the block?

- Yes: Write Allocate

- No: Write Not Allocate

[Figure: the 1 KB direct mapped cache with 32 B blocks. Address split into Cache Tag (bits 31-10, example: 0x00), Cache Index (bits 9-5, ex: 0x00), and Byte Select (bits 4-0, ex: 0x00). Entries at index 0 to 31, each with a Valid Bit, a Cache Tag (0x00 in entry 0), and Cache Data from Byte 0 to Byte 1023.]

EE30332 Ch7 DP .35

Recall: Levels of the Memory Hierarchy

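| Level | Capacity | Access Time | Cost |
|-------|----------|-------------|------|
| CPU Registers | 100s Bytes | <10s ns | |
| Cache | K Bytes | 10-100 ns | $.01-.001/bit |
| Main Memory | M Bytes | 100 ns-1 us | $.01-.001 |
| Disk | G Bytes | ms | 10^-3 - 10^-4 cents |
| Tape | infinite | sec-min | 10^-6 |

| Between levels | Staging Xfer Unit | Managed by | Size |
|----------------|-------------------|------------|------|
| Registers <-> Cache | Instr. Operands | prog./compiler | 1-8 bytes |
| Cache <-> Memory | Blocks | cache cntl | 8-128 bytes |
| Memory <-> Disk | Pages | OS | 512-4K bytes |
| Disk <-> Tape | Files | user/operator | Mbytes |

Upper Level: faster. Lower Level: larger.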

EE30332 Ch7 DP .36

Basic Issues in Virtual Memory System Design

° size of information blocks that are transferred from secondary to main storage (M)

° if a block of information is brought into M and M is full, then some region of M must be released to make room for the new block --> replacement policy

° which region of M is to hold the new block --> placement policy

° missing item fetched from secondary memory only on the occurrence of a fault --> demand load policy

Paging Organization

° virtual and physical address space partitioned into blocks of equal size: pages (virtual) and page frames (physical)

[Figure: hierarchy of reg, cache, mem, and disk; pages on disk are loaded into page frames in memory.]
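Because pages and page frames have the same size, translating an address only replaces the page number and keeps the offset. The Python sketch below illustrates this with made-up details: the 4 KB page size is an assumption (the top of the 512-4K range listed earlier), and the dict-based `page_table`, the `translate` function, and the `PageFault` exception are hypothetical names.

```python
PAGE_SIZE = 4096  # assumed page size; the slides list 512-4K bytes

class PageFault(Exception):
    """Raised when the referenced page is not resident in main memory."""

def translate(vaddr, page_table, page_size=PAGE_SIZE):
    """Map a virtual address to a physical address via a dict page table."""
    page, offset = divmod(vaddr, page_size)
    if page not in page_table:
        # Under a demand load policy, the page would be fetched only now.
        raise PageFault(f"page {page} not resident")
    return page_table[page] * page_size + offset

page_table = {0: 5, 1: 2}   # hypothetical mapping: virtual page -> page frame
print(hex(translate(0x1234, page_table)))  # 0x2234
```

Virtual page 1 maps to frame 2, so 0x1234 becomes 0x2234: the 12-bit offset 0x234 is unchanged.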