Date posted: 20-Dec-2015

Dual Problem of Linear Program

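Primal LP

$$\min_{x \in R^n} \; p'x \quad \text{subject to} \quad Ax \ge b,\; x \ge 0$$

Dual LP

$$\max_{\alpha \in R^m} \; b'\alpha \quad \text{subject to} \quad A'\alpha \le p,\; \alpha \ge 0$$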

※ All duality theorems hold and work perfectly!

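| Primal: $\min p'x$ | Dual: $\max b'\alpha$ |
|---|---|
| Nonnegative variable: $x_j \ge 0$ | Inequality constraint: $A'_j \alpha \le p_j$ |
| Free variable: $x_j \in R$ | Equality constraint: $A'_j \alpha = p_j$ |
| Inequality constraint: $A_i x \ge b_i$ | Nonnegative variable: $\alpha_i \ge 0$ |
| Equality constraint: $A_i x = b_i$ | Free variable: $\alpha_i \in R$ |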

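Primal

$$\min \; x_1 + x_2 \quad \text{s.t.} \quad \begin{bmatrix} 2 & -1 \\ -1 & 1 \\ -2 & -3 \end{bmatrix} \begin{bmatrix} x_1 \\ x_2 \end{bmatrix} \ge \begin{bmatrix} 1 \\ -2 \\ -5 \end{bmatrix}, \quad x_1 \ge 0,\; x_2 : \text{free}$$

Dual

$$\max \; \alpha_1 - 2\alpha_2 - 5\alpha_3$$

$$\text{s.t.} \quad 2\alpha_1 - \alpha_2 - 2\alpha_3 \le 1$$

$$-\alpha_1 + \alpha_2 - 3\alpha_3 = 1$$

$$\alpha_1 \ge 0,\; \alpha_2 \ge 0,\; \alpha_3 \ge 0$$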
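The primal/dual pair above can be checked by hand with a duality certificate: a primal-feasible point and a dual-feasible point with equal objective values are both optimal. The sketch below does this in plain Python. The candidate points `x_hat` and `alpha_hat` are assumptions found by inspection (only the point (0, -2) appears in the slides, on the feasible-region plot), and the helper names are illustrative.

```python
# Data of the example: min p'x  s.t.  Ax >= b, x1 >= 0, x2 free.
A = [[2, -1],
     [-1, 1],
     [-2, -3]]
b = [1, -2, -5]
p = [1, 1]

def dot(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))

def primal_feasible(x):
    # Ax >= b row by row, plus the sign restriction on x1 only (x2 is free).
    return all(dot(row, x) >= bi for row, bi in zip(A, b)) and x[0] >= 0

def dual_feasible(alpha):
    cols = list(zip(*A))  # columns of A, i.e. rows of A'
    return (all(a >= 0 for a in alpha)
            and dot(cols[0], alpha) <= p[0]    # x1 >= 0  -> inequality constraint
            and dot(cols[1], alpha) == p[1])   # x2 free  -> equality constraint

# Candidate points (assumed, found by inspection).
x_hat = [0, -2]
alpha_hat = [0, 1, 0]

primal_value = dot(p, x_hat)       # x1 + x2
dual_value = dot(b, alpha_hat)     # alpha1 - 2*alpha2 - 5*alpha3

print(primal_feasible(x_hat), dual_feasible(alpha_hat), primal_value, dual_value)
```

Both points are feasible and both objectives equal -2, so by weak duality neither problem can do better.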

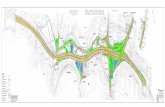

Primal Problem Feasible Region (axes $x_1$ and $x_2$; the point $(0, -2)$ is marked)

Dual Problem of Strictly Convex Quadratic Program

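Primal QP

$$\min_{x \in R^n} \; \tfrac{1}{2} x'Qx + p'x \quad \text{subject to} \quad Ax \le b$$

With strictly convex assumption, we have

Dual QP

$$\max \; -\tfrac{1}{2}(p' + \alpha'A)\,Q^{-1}(A'\alpha + p) - \alpha'b \quad \text{subject to} \quad \alpha \ge 0$$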
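The step from the primal QP to the dual QP is not spelled out on the slide. A sketch of it, assuming $Q$ is symmetric positive definite (which is how the strictly convex assumption is read here) and writing $L$ for the Lagrangian:

```latex
\begin{align*}
L(x,\alpha) &= \tfrac{1}{2}\,x'Qx + p'x + \alpha'(Ax - b), \qquad \alpha \ge 0 \\
\nabla_x L(x,\alpha) &= Qx + p + A'\alpha = 0
  \;\Longrightarrow\; x = -Q^{-1}(A'\alpha + p) \\
\min_x L(x,\alpha) &= \tfrac{1}{2}(A'\alpha + p)'Q^{-1}(A'\alpha + p)
  - (A'\alpha + p)'Q^{-1}(A'\alpha + p) - \alpha'b \\
 &= -\tfrac{1}{2}\,(p' + \alpha'A)\,Q^{-1}(A'\alpha + p) - \alpha'b
\end{align*}
```

Maximizing this expression over $\alpha \ge 0$ gives the dual objective stated above. The inverse $Q^{-1}$ exists because $Q$ is positive definite.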

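Classification Problem: 2-Category Linearly Separable Case

[Figure: point sets $A-$ and $A+$ separated by the plane $x'w + b = 0$, with bounding planes $x'w + b = -1$ and $x'w + b = +1$ and normal vector $w$. The plot also carries the labels Malignant and Benign.]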

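Support Vector Machines: Maximizing the Margin between Bounding Planes

[Figure: point sets $A+$ and $A-$ with bounding planes $x'w + b = +1$ and $x'w + b = -1$ and normal vector $w$.]

$$\text{Margin} = \frac{2}{\|w\|_2}$$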

Algebra of the Classification Problem

2-Category Linearly Separable Case

Given $m$ points in the $n$-dimensional real space $R^n$

Represented by an $m \times n$ matrix $A$

Membership of each point $A_i$ in the classes $A-$ or $A+$ is specified by an $m \times m$ diagonal matrix $D$:

$D_{ii} = -1$ if $A_i \in A-$ and $D_{ii} = 1$ if $A_i \in A+$

Separate $A-$ and $A+$ by two bounding planes such that:

$$A_i w + b \ge +1 \;\text{ for } D_{ii} = +1; \qquad A_i w + b \le -1 \;\text{ for } D_{ii} = -1$$

More succinctly:

$$D(Aw + eb) \ge e, \quad \text{where } e = [1, 1, \ldots, 1]' \in R^m$$
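The compact condition $D(Aw + eb) \ge e$ is the row-wise statement $D_{ii}(A_i w + b) \ge 1$. A minimal sketch of that check follows. The four points, the labels, and the candidate plane are hypothetical toy values chosen by hand, and `separates` is an illustrative helper name.

```python
# Toy data (hypothetical): rows of A are points in R^2.
A = [[2.0, 2.0], [3.0, 1.0], [-1.0, -1.0], [-2.0, 0.0]]
labels = [1, 1, -1, -1]          # diagonal entries D_ii of D

def separates(A, labels, w, b):
    # Row i of D(Aw + eb) >= e reads  D_ii * (A_i w + b) >= 1.
    return all(d * (sum(a * wj for a, wj in zip(row, w)) + b) >= 1
               for row, d in zip(A, labels))

good = separates(A, labels, [0.5, 0.5], 0.0)   # bounding planes fit the data
bad = separates(A, labels, [0.1, 0.1], 0.0)    # same direction, but w too short

print(good, bad)
```

The second candidate has the same separating plane but a smaller $\|w\|$, so its bounding planes lie too far out and the condition fails.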

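Support Vector Classification (Linearly Separable Case)

Let $S = \{(x^1, y_1), (x^2, y_2), \ldots, (x^\ell, y_\ell)\}$ be a linearly separable training sample, represented by the matrices

$$A = \begin{bmatrix} (x^1)' \\ (x^2)' \\ \vdots \\ (x^\ell)' \end{bmatrix} \in R^{\ell \times n}, \qquad D = \begin{bmatrix} y_1 & \cdots & 0 \\ \vdots & \ddots & \vdots \\ 0 & \cdots & y_\ell \end{bmatrix} \in R^{\ell \times \ell}$$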

Support Vector Classification (Linearly Separable Case, Primal)

The hyperplane $(w, b)$ that solves the minimization problem:

$$\min_{(w,b) \in R^{n+1}} \; \tfrac{1}{2}\|w\|_2^2 \quad \text{subject to} \quad D(Aw + eb) \ge e,$$

realizes the maximal margin hyperplane with geometric margin $\gamma = \dfrac{1}{\|w\|_2}$

Support Vector Classification (Linearly Separable Case, Dual Form)

The dual problem of previous MP:

$$\max_{\alpha \in R^\ell} \; e'\alpha - \tfrac{1}{2}\alpha' DAA'D\alpha \quad \text{subject to} \quad e'D\alpha = 0,\; \alpha \ge 0.$$

Applying the KKT optimality conditions, we have $w = A'D\alpha$. But where is $b$?

Don't forget $0 \le \alpha \perp D(Aw + eb) - e \ge 0$
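One way to read the hint: the complementarity condition forces $y_i(w'x^i + b) = 1$ whenever the multiplier of point $i$ is positive, so $b = y_i - w'x^i$ for any such point. The sketch below applies this to a hypothetical two-point sample whose dual solution was worked out by hand. The data and variable names are assumptions for illustration, not taken from the slides.

```python
X = [[2.0, 0.0], [0.0, 0.0]]   # rows of A (hypothetical toy sample)
y = [1.0, -1.0]                # diagonal of D
alpha = [0.5, 0.5]             # dual solution of this toy problem, found by hand

def dot(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))

# w = A' D alpha = sum_i y_i * alpha_i * x^i
w = [sum(y[i] * alpha[i] * X[i][j] for i in range(len(X)))
     for j in range(len(X[0]))]

# Complementarity: alpha_i > 0 forces y_i (w'x^i + b) = 1, i.e. b = y_i - w'x^i.
b_values = [y[i] - dot(w, X[i]) for i in range(len(X)) if alpha[i] > 0]
b = b_values[0]

print(w, b_values)
```

Every point with a positive multiplier yields the same $b$. In floating-point practice the values differ slightly, and averaging them is a common choice.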

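Dual Representation of SVM

(Key of Kernel Methods: $w = A'D\alpha^* = \sum_{i=1}^{\ell} y_i \alpha_i^* A_i'$)

The hypothesis is determined by $(\alpha^*, b^*)$

$$h(x) = \operatorname{sgn}\big(\langle x, A'D\alpha^* \rangle + b^*\big) = \operatorname{sgn}\Big(\sum_{i=1}^{\ell} y_i \alpha_i^* \langle x^i, x \rangle + b^*\Big) = \operatorname{sgn}\Big(\sum_{\alpha_i^* > 0} y_i \alpha_i^* \langle x^i, x \rangle + b^*\Big)$$

Remember: $A_i' = x^i$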
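The dual form of the hypothesis touches the training points only through inner products, which is what makes kernel methods possible. A minimal sketch on a hypothetical two-point sample: `alpha_star` and `b_star` are hand-computed for this toy data, and taking `sgn(0)` as +1 is a convention picked here, not something the slides specify.

```python
X = [[2.0, 0.0], [0.0, 0.0]]   # training points x^i (hypothetical)
y = [1.0, -1.0]                # labels y_i
alpha_star = [0.5, 0.5]        # dual solution for this toy sample, found by hand
b_star = -1.0

def inner(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))

def h(x):
    # Sum only over points with alpha_i > 0; the remaining terms vanish.
    score = sum(y[i] * alpha_star[i] * inner(X[i], x)
                for i in range(len(X)) if alpha_star[i] > 0) + b_star
    return 1 if score >= 0 else -1   # convention: sgn(0) taken as +1 here

print(h([3.0, 5.0]), h([0.5, -4.0]))
```

Replacing `inner` with another kernel function would change the classifier without changing the rest of the code.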

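Compute the Geometric Margin via Dual Solution

The geometric margin $\gamma = \dfrac{1}{\|w^*\|_2}$ and $\langle w^*, w^* \rangle = (\alpha^*)'DAA'D\alpha^*$, hence we can compute $\gamma$ by using $\alpha^*$. Use KKT again (in dual)!

$$0 \le \alpha^* \perp D(AA'D\alpha^* + b^*e) - e \ge 0$$

Don't forget $e'D\alpha^* = 0$

$$\gamma = (e'\alpha^*)^{-\frac{1}{2}} = \Big(\sum_{\alpha_i^* > 0} \alpha_i^*\Big)^{-\frac{1}{2}}$$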
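The identity can be sanity-checked numerically: the margin computed from the multipliers alone should match the one computed from $w^*$. The sketch below uses a hypothetical two-point sample with a hand-computed dual solution. The data and variable names are illustrative assumptions.

```python
import math

X = [[1.0, 1.0], [-1.0, -1.0]]   # training points (hypothetical)
y = [1.0, -1.0]                  # labels
alpha_star = [0.25, 0.25]        # dual solution for this toy sample, found by hand

# Primal route: w* = sum_i y_i alpha_i x^i, then gamma = 1 / ||w*||_2.
w_star = [sum(y[i] * alpha_star[i] * X[i][j] for i in range(len(X)))
          for j in range(len(X[0]))]
gamma_primal = 1.0 / math.sqrt(sum(wj * wj for wj in w_star))

# Dual route: gamma = (sum of the positive alpha_i) ** (-1/2), no w needed.
gamma_dual = sum(a for a in alpha_star if a > 0) ** -0.5

print(gamma_primal, gamma_dual)
```

Both routes give $\sqrt{2}$, the distance from either point to the separating line $x_1 + x_2 = 0$.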