
Developing Dependable Systems by Maximizing Component Diversity and Fault Tolerance

Jeff Tian, Suku Nair, LiGuo Huang, Nasser Alaeddine and Michael Siok

Southern Methodist University

US/UK Workshop on Network-Centric Operation and Network Enabled Capability, Washington, D.C., July 24-25, 2008

Outline
• Overall Framework
• External Environment Profiling
• Component Dependability:
    • Direct Measurement and Assessment
    • Indirect Assessment via Internal Contributor Mapping
    • Value Perspective
• Experimental Evaluation
    • Fault Injection for Reliability and Fault Tolerance
    • Security Threat Simulation
• Summary and Future Work

7/24/2008 2US/UK NCO/NEC Workshop

Overall Framework
• Systems made up of different components
• Many factors contribute to system dependability
• Our focus: Diversity of individual components
• Component strength/weakness/diversity:
    • Target: Different dependability attributes and sub-attributes
    • External reference: Operational profile (OP)
    • Internal assessment: Contributors to dependability
    • Value perspective: Relative importance and trade-off
• Maximize diversity => Maximize dependability
    • Combine strength
    • Avoid/complement/tolerate flaws/weaknesses


Overall Framework (2)
• Diversity: Four Perspectives
    • Environmental perspective: Operational profile (OP)
    • Target perspective: Goal, requirement
    • Internal contributor perspective: Internal characteristics
    • Value perspective: Customer
• Achieving diversity and fault tolerance:
    • Component evaluation matrix per target per OP
    • Multidimensional evaluation/composition via DEA (Data Envelopment Analysis)
    • Internal contributor to dependability mapping
    • Value-based evaluation using single objective function


Terminology
• Quality and dependability are typically defined in terms of conformance to customer’s expectations and requirements
• Key concepts: defect, failure, fault, and error
• Dependability: the focus in this presentation
    • Key attributes: reliability, security, etc.
• Defect = some problem with the software either with its external behavior or with its internal characteristics


Failure, Fault, Error
• IEEE STD 610.12 terms related to defect:
    • Failure: The inability of a system or component to perform its required functions within specified requirements
    • Fault: An incorrect step, process, or data definition in a computer program
    • Error: A human action that produces an incorrect result
• Errors may cause faults to be injected into the software
• Faults may cause failures when the software is executed


Reliability and Other Dependability Attributes
• Software reliability = the probability of failure-free operation of a program for a specified time under a specified set of operating conditions (Lyu, 1995; Musa et al., 1987)
• Estimated according to various models based on defect and time/input measurements
• Standard definitions for other dependability attributes, such as security, fault tolerance, availability, etc.
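One simple input-based estimate of the kind mentioned above is the Nelson input-domain model, which takes reliability as the fraction of failure-free runs among runs sampled according to the usage environment. A minimal sketch; the run counts are hypothetical and not taken from the presentation.

```python
def nelson_reliability(total_runs: int, failed_runs: int) -> float:
    """Nelson input-domain estimate: R = (n - f) / n for n sampled runs with f failures."""
    if total_runs <= 0 or not 0 <= failed_runs <= total_runs:
        raise ValueError("need total_runs > 0 and 0 <= failed_runs <= total_runs")
    return (total_runs - failed_runs) / total_runs


# Hypothetical counts, for illustration only.
estimate = nelson_reliability(total_runs=1000, failed_runs=25)
print(estimate)
```

The estimate is only meaningful for the environment the runs were sampled from, which is why the operational profile matters in what follows.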



Diversity: Environmental Perspective
• Dependability defined for a specific environment
• Stationary vs dynamic usage environments
    • Static, uniform, or stationary (reached an equilibrium)
    • Dynamic, changing, evolving, with possible unanticipated changes or disturbances
• Single/overall OP for former category
    • Musa or Markov variation
    • Single evaluation result possible per component per dependability attribute: e.g., component reliability R(i)
• Environment Profiling for Individual Components
    • Environmental snapshots captured in Musa or Markov OPs
    • Evaluation matrix (later)


Operational Profile (OP)
• An operational profile (OP) is a list of disjoint operations and their associated probabilities of occurrence (Musa 1998)
• OP describes how users use an application:
    • Helps guide the allocation of test cases in accordance with use
    • Ensures that the most frequent operations will receive more testing
    • Serves as the context for realistic reliability evaluation
    • Other usages, including diversity and internal-external mapping in this presentation
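The use of an OP to guide test allocation can be sketched as follows. This is a minimal illustration, assuming a flat OP stored as a dictionary; the operation names, probabilities, and test budget are hypothetical.

```python
def allocate_tests(op: dict[str, float], budget: int) -> dict[str, int]:
    """Split a test budget across operations in proportion to OP probabilities."""
    if abs(sum(op.values()) - 1.0) > 1e-9:
        raise ValueError("OP probabilities must sum to 1")
    raw = {name: p * budget for name, p in op.items()}
    alloc = {name: int(x) for name, x in raw.items()}
    # Largest-remainder rounding: hand out leftover tests so the total equals the budget.
    leftover = budget - sum(alloc.values())
    by_remainder = sorted(raw, key=lambda name: raw[name] - alloc[name], reverse=True)
    for name in by_remainder[:leftover]:
        alloc[name] += 1
    return alloc


# Hypothetical OP, for illustration only.
example_op = {"browse": 0.6, "search": 0.3, "checkout": 0.1}
allocation = allocate_tests(example_op, budget=50)
print(allocation)
```

The most frequent operation receives the most test cases, which is the point made in the bullets above.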


Markov Chain Usage Model
• A Markov chain usage model is a set of states, transitions, and transition probabilities
    • As an alternative to Musa (flat) OP
    • Each link has an associated probability of occurrence
    • Models complex and/or interactive systems better
• Unified Markov Models (Kallepalli and Tian, 2001; Tian et al., 2003):
    • Collection of Markov OPs in a hierarchy
    • Flexible application in testing and reliability improvement
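A Markov chain usage model can be represented as a nested mapping from each state to its outgoing links and their probabilities. The sketch below checks that each state's outgoing probabilities sum to 1 and computes the probability of one usage path. The states and probabilities are hypothetical.

```python
# Hypothetical usage model: state -> {next state: transition probability}.
TRANSITIONS = {
    "start": {"browse": 1.0},
    "browse": {"browse": 0.5, "order": 0.3, "exit": 0.2},
    "order": {"browse": 0.4, "exit": 0.6},
}


def check_model(transitions: dict[str, dict[str, float]]) -> None:
    """Raise if the outgoing probabilities of any state do not sum to 1."""
    for state, links in transitions.items():
        if abs(sum(links.values()) - 1.0) > 1e-9:
            raise ValueError(f"probabilities leaving {state!r} do not sum to 1")


def path_probability(transitions: dict[str, dict[str, float]], path: list[str]) -> float:
    """Probability of a usage path: the product of its link probabilities."""
    prob = 1.0
    for src, dst in zip(path, path[1:]):
        prob *= transitions.get(src, {}).get(dst, 0.0)  # missing link => probability 0
    return prob


check_model(TRANSITIONS)
p = path_probability(TRANSITIONS, ["start", "browse", "order", "exit"])
print(p)
```

Unlike a flat OP, the same operation can carry a different probability depending on the state it is reached from.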


Operational Profile Development: Standard Procedure
• Musa’s steps (1998) for OP construction:
    • Identify the initiators of operations
    • Choose a representation (tabular or graphical)
    • Create an operations “list”
    • Establish the occurrence rates of the individual operations
    • Establish the occurrence probabilities
• Other variations
    • Original Musa (1993): 5 top-down refinement steps
    • Markov OP (Tian et al): FSM then probabilities based on log files
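The last two of Musa's steps, going from occurrence rates to occurrence probabilities, amount to a normalization. A minimal sketch, assuming rates are counts per unit time; the rates below are hypothetical, chosen only so that the result matches the OP of product “A” shown later in this presentation.

```python
def rates_to_probabilities(rates: dict[str, float]) -> dict[str, float]:
    """Normalize per-operation occurrence rates into occurrence probabilities."""
    total = sum(rates.values())
    if total <= 0:
        raise ValueError("total occurrence rate must be positive")
    return {operation: rate / total for operation, rate in rates.items()}


# Hypothetical hourly occurrence rates, for illustration only.
hourly_rates = {"New order": 20, "Change order": 70, "Move order": 20, "Order status": 90}
op = rates_to_probabilities(hourly_rates)
print(op)
```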


OPs for Composite Systems
• Using standard procedure whenever possible
• For overall stationary environment
• For individual component usage => component OP
• For dynamic environment:
    • Snapshot identification
    • Sets of OPs for each snapshot
    • System OP from individual component OPs
• Special considerations:
    • Existing test data or operational logs can be used to develop component OPs
    • Union of component OPs => system OP
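One way to read "union of component OPs => system OP" is as a mixture: each component OP is scaled by the share of system usage that goes to that component. The presentation does not specify the combination rule, so the weighting below is an assumption, and the component names, usage shares, and probabilities are hypothetical.

```python
def combine_component_ops(
    component_ops: dict[str, dict[str, float]],
    usage_share: dict[str, float],
) -> dict[tuple[str, str], float]:
    """Build a system OP keyed by (component, operation).

    Assumption: each component OP is weighted by that component's share of
    overall system usage, so the result is again a probability distribution.
    """
    system_op = {}
    for component, op in component_ops.items():
        for operation, probability in op.items():
            system_op[(component, operation)] = usage_share[component] * probability
    return system_op


# Hypothetical components and shares, for illustration only.
component_ops = {
    "web_front_end": {"view": 0.8, "submit": 0.2},
    "billing": {"charge": 0.9, "refund": 0.1},
}
usage_share = {"web_front_end": 0.75, "billing": 0.25}
system_op = combine_component_ops(component_ops, usage_share)
print(system_op)
```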


OP and Dependability Evaluation
• Some dependability attributes defined with respect to a specific OP: e.g., reliability
• For overall stationary environment: direct measurement and assessment possible
• For dynamic environment: OP-reliability pairs
• Consequence of improper reuse due to different OPs (Weyuker 1998)
• From component to system dependability:
    • Customization/selection of best-fit OP for estimation
    • Compositional approach (Hamlet et al, 2001)



Diversity: Target Perspective
• Component Dependability:
    • Component reliability, security, etc. to be scored/evaluated
    • Direct Measurement and Assessment
    • Indirect Assessment (later)
• Under stationary environment:
    • Dependability vector for each component
    • Diversity maximization via DEA (data envelopment analysis)
• Under dynamic environment:
    • Dependability matrix for each component
    • Diversity maximization via extended DEA by flattening out the matrix
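Flattening the dependability matrix can be sketched as turning each (OP snapshot, attribute) cell into one dimension of a longer vector, which an ordinary DEA run could then consume. The data layout, snapshot names, and scores below are assumptions for illustration; the presentation does not specify them.

```python
def flatten_dependability_matrix(matrix: dict[str, dict[str, float]]) -> dict[str, float]:
    """Flatten matrix[snapshot][attribute] into a vector keyed by 'attribute@snapshot'."""
    return {
        f"{attribute}@{snapshot}": score
        for snapshot, row in matrix.items()
        for attribute, score in row.items()
    }


# Hypothetical scores for one component under two OP snapshots.
component_matrix = {
    "peak_load": {"reliability": 0.95, "security": 0.80},
    "off_peak": {"reliability": 0.99, "security": 0.85},
}
vector = flatten_dependability_matrix(component_matrix)
print(vector)
```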


Diversity Maximization via DEA
• DEA (data envelopment analysis):
    • Non-parametric analysis
    • Establishes a multivariate frontier in a dataset
    • Basis: linear programming
• Applying DEA
    • Dependability attribute frontier
    • Illustrative example (right)
    • N-dimensional: hyperplane
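The idea of a dependability attribute frontier can be illustrated with a plain Pareto-dominance filter. Note that this is a simplification swapped in for illustration, not DEA itself: DEA solves a linear program per unit and can be stricter, since it also compares each unit against combinations of other units. The component names and scores are hypothetical.

```python
def dominates(a: tuple[float, ...], b: tuple[float, ...]) -> bool:
    """True if a is at least as good as b on every attribute and better on at least one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def frontier(scores: dict[str, tuple[float, ...]]) -> list[str]:
    """Names of components that no other component dominates (higher scores are better)."""
    return sorted(
        name
        for name, score in scores.items()
        if not any(dominates(other, score) for o, other in scores.items() if o != name)
    )


# Hypothetical (reliability, security) scores per component.
scores = {
    "A": (0.99, 0.60),
    "B": (0.90, 0.90),
    "C": (0.70, 0.95),
    "D": (0.85, 0.80),  # dominated by B on both attributes
}
print(frontier(scores))
```

Components on the frontier are each strongest in some direction, which is what makes them candidates for a diverse combination.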


DEA Example
• Lockheed-Martin software project performance with regard to selected metrics and production efficiency model
• Measures efficiencies of decision making units (DMU) using weighted sums of inputs and weighted sums of outputs
• Compares DMUs to each other
• Sensitivity analysis affords study of non-efficient DMUs in comparison
• BCC VRS Model used in initial study

Inputs:
• Labor hours
• Software Change Size

Outputs:
• Software Reliability At Release
• Defect Density after test
• Software Productivity

Efficiency = Output/Input
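For reference, the BCC (variable returns to scale) model in its standard input-oriented envelopment form is given below. The input orientation is an assumption here; the notation ($x_{ij}$ for input $i$ of DMU $j$, $y_{rj}$ for output $r$ of DMU $j$, index $0$ for the DMU under evaluation) is the conventional one and does not come from the presentation.

```latex
\begin{aligned}
\min_{\theta,\,\lambda}\quad & \theta \\
\text{subject to}\quad
  & \sum_{j=1}^{n} \lambda_j x_{ij} \le \theta\, x_{i0}, && i = 1,\dots,m \\
  & \sum_{j=1}^{n} \lambda_j y_{rj} \ge y_{r0},          && r = 1,\dots,s \\
  & \sum_{j=1}^{n} \lambda_j = 1, \qquad \lambda_j \ge 0, && j = 1,\dots,n
\end{aligned}
```

A DMU with $\theta = 1$ lies on the frontier; $\theta < 1$ is the factor by which all of its inputs could be scaled down while still producing its outputs. The constraint $\sum_j \lambda_j = 1$ is what distinguishes the variable-returns-to-scale BCC model from the constant-returns CCR model.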


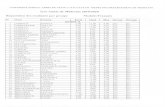

DEA Example (2)
• Using production efficiency model for Compute-Intensive dataset group
• Ranked set of projects
• Data showing distance and direction from efficiency frontier

Rank  DMU  Score
1     34   1
1     30   1
1     26   1
1     22   1
1     10   1
1     13   1
1     15   1
8     14   0.94409
9     4    0.831527
10    37   0.805333
11    1    0.405722
12    7    0.256705
13    18   0.210479

DMU 1 (Score 0.405722)
I/O                              Data       Projection  Difference  %
Chng_Size_code                   196493.5   79721.8     -116772     -59.43%
Total_Labor                      48800.03   19799.26    -29000.8    -59.43%
DD_After_test_MESLOC             59.96817   96.5992     36.63103    61.08%
ESLOC_per_labor_mo               4.026504   7.851672    3.825168    95.00%
Weighted_Reliability_at_Release  22.83505   46.10035    23.2653     101.88%

DMU 4 (Score 0.831527)
I/O                              Data       Projection  Difference  %
Chng_Size_code                   179734.6   149454.2    -30280.4    -16.85%
Total_Labor                      12400.21   10311.11    -2089.1     -16.85%
DD_After_test_MESLOC             47.08071   47.08071    0           0.00%
ESLOC_per_labor_mo               14.49448   15.63405    1.13957     7.86%
Weighted_Reliability_at_Release  27.33631   49.03719    21.70089    79.38%

DMU 7 (Score 0.256705)
I/O                              Data       Projection  Difference  %
Chng_Size_code                   416797.6   106994      -309804     -74.33%
Total_Labor                      66587.41   17093.33    -49494.1    -74.33%
DD_After_test_MESLOC             97.9607    97.9607     0           0.00%
ESLOC_per_labor_mo               6.259405   10.18545    3.926048    62.72%
Weighted_Reliability_at_Release  15.05019   49.30659    34.25639    227.61%

DMU 10 (Score 1)
I/O                              Data       Projection  Difference  %
Chng_Size_code                   330386.7   330386.7    0           0.00%
Total_Labor                      17136.34   17136.34    0           0.00%
DD_After_test_MESLOC             67.15824   67.15824    0           0.00%
ESLOC_per_labor_mo               19.27988   19.27988    0           0.00%
Weighted_Reliability_at_Release  12.08211   12.08211    0           0.00%

DMU 13 (Score 1)
I/O                              Data       Projection  Difference  %
Chng_Size_code                   132123.2   132123.2    0           0.00%
Total_Labor                      10384      10384       0           0.00%
DD_After_test_MESLOC             13.12492   13.12492    0           0.00%
ESLOC_per_labor_mo               12.72373   12.72373    0           0.00%
Weighted_Reliability_at_Release  109.6671   109.6671    0           0.00%
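In the data above, the two input rows of each inefficient DMU shrink by the same percentage, and that percentage equals the score minus 1. This is consistent with a radial, input-oriented projection (projected input = score × input), although the presentation does not state the orientation. A small check against the DMU 1 rows, under that assumption:

```python
def radial_input_projection(score: float, inputs: dict[str, float]) -> dict[str, float]:
    """Scale every input by the efficiency score (assumed input-oriented radial projection)."""
    return {name: score * value for name, value in inputs.items()}


# DMU 1 score and input values as listed in the table above.
dmu1_score = 0.405722
dmu1_inputs = {"Chng_Size_code": 196493.5, "Total_Labor": 48800.03}

dmu1_projection = radial_input_projection(dmu1_score, dmu1_inputs)
dmu1_change_pct = round((dmu1_score - 1.0) * 100, 2)

print(dmu1_projection)
print(dmu1_change_pct)
```

The computed projections agree with the listed ones (79721.8 and 19799.26) up to the rounding of the published score. The output rows do not follow a single factor, since their projected values also include slack.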


Diversity: Internal Perspective
• Component Dependability:
    • Direct Measurement and Assessment: might not be available, feasible, or cost-effective
    • Indirect Assessment via Internal Contributor Mapping
• Internal Contributors:
    • System design, architecture
    • Component internal characteristics: size, complexity, etc.
    • Process/people/other characteristics
    • Usually more readily available data/measurements
• Internal=>External mapping
    • Procedure with OP as input too (e.g., fault=>reliability)


Example: Fault-Failure Mapping for Dynamic Web Applications

[Figure: process flow for fault-failure mapping]

Inputs:
• Web server logs
• Defect data from defect tracking tool
• Application operational profile
• Defect impact scheme

Steps:
• Step 1: Classification of defect information
• Step 2: Classification of HTTP responses
• Step 3: Top HTTP faults
• Step 4: Number of hits with successful response code
• Step 5: Number of transactions
• Step 6: Top faults from defect data
• Step 7: Top list


Web Example: Fault-Failure Mapping
• Input to analysis (and fault-failure conversion):
    • Anomalies recorded in web server logs (failure view)
    • Faults recorded during development and maintenance
    • Defect impact scheme (weights)
    • Operational profile
• Product “A” is an ordering web application for telecom services
    • Consists of hundreds of thousands of lines of code
    • Runs on IIS 6.0 (Microsoft Internet Information Server)
    • Processes a couple of million requests per day


Web Example: Fault-Failure Mapping (Step 1)

[Figure: Pareto chart "Defect Data classes"; x-axis: Error class; y-axis: Error percentage, 0% to 30%]

• Pareto chart for the defect classification of product “A”
• The top three categories represent 66.26% of the total defect data

Web Example: Fault-Failure Mapping (Steps 4 & 5)

Number of hits with response code 200 and 300: 235142
Average number of hits per transaction: 40
Number of transactions: 5880

Operation     Operation Probability  Number of Transactions
New order     0.1                    588
Change order  0.35                   2058
Move order    0.1                    588
Order status  0.45                   2646

• OP for product “A” and the corresponding numbers of transactions.
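The per-operation transaction counts follow from multiplying the total number of transactions by each operation probability. A minimal sketch using the figures above; note that 235142 / 40 is about 5878.55, so the 5880 used on the slide appears to be a rounded value, and the code takes 5880 as given.

```python
# Figures from the slide; 5880 is used as given (235142 / 40 is about 5878.55).
TOTAL_TRANSACTIONS = 5880
OPERATIONAL_PROFILE = {
    "New order": 0.1,
    "Change order": 0.35,
    "Move order": 0.1,
    "Order status": 0.45,
}

# Transactions per operation = total transactions x operation probability.
transactions = {
    operation: round(probability * TOTAL_TRANSACTIONS)
    for operation, probability in OPERATIONAL_PROFILE.items()
}
print(transactions)
```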


Web Example: Fault-Failure Mapping (Step 6)

Application Aspect  Impact       Weight  Number of transactions  Failure Frequency
Order status        Showstopper  100%    2646                    2646
Order status        High         70%     2646                    1852
Order status        Medium       50%     2646                    1323
Order status        Low          20%     2646                    529
Order status        Exception    5%      2646                    132

• Using the number of transactions calculated from OP and the defined fault impact schema, we calculated the fault exposure or corresponding potential failure frequencies
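The failure frequencies in the table are the impact weight multiplied by the number of transactions for the affected operation, rounded to whole failures. A minimal sketch reproducing the "Order status" rows:

```python
# Impact weights from the defect impact scheme shown in the table above.
IMPACT_WEIGHTS = {
    "Showstopper": 1.00,
    "High": 0.70,
    "Medium": 0.50,
    "Low": 0.20,
    "Exception": 0.05,
}


def failure_frequency(weight: float, transactions: int) -> int:
    """Potential failure frequency = impact weight x transactions of the operation."""
    return round(weight * transactions)


order_status_transactions = 2646
order_status_failures = {
    impact: failure_frequency(weight, order_status_transactions)
    for impact, weight in IMPACT_WEIGHTS.items()
}
print(order_status_failures)
```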


Web Example: Fault-Failure Mapping (Step 7)

Rank  Response Code  Fault                                Failure Frequency
1     404            /images/dottedsep.gif                5805
2     404            /images/gnav_redbar_s_r.gif          3687
3     404            /images/gnav_redbar_s_l.gif          3537
4     200/300        Order status – showstopper           2646
5     404            /includes/css/images/background.gif  2593
6     200/300        Change order – showstopper           2058
7     200/300        Order status – high                  1852
8     200/300        Change order – high                  1441
9     200/300        Order status – medium                1323
10    200/300        Change order – medium                1029
11    404            /includes/css/nc2004style.css        721
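The top list merges the two views, faults seen as 404 responses in the server logs and faults from the defect data mapped through the OP, and sorts them by failure frequency. A minimal sketch using the entries from the table; the tuple layout and variable names are illustrative assumptions.

```python
# (response code, fault, failure frequency), values taken from the table above.
log_faults = [
    ("404", "/images/dottedsep.gif", 5805),
    ("404", "/images/gnav_redbar_s_r.gif", 3687),
    ("404", "/images/gnav_redbar_s_l.gif", 3537),
    ("404", "/includes/css/images/background.gif", 2593),
    ("404", "/includes/css/nc2004style.css", 721),
]
defect_faults = [
    ("200/300", "Order status - showstopper", 2646),
    ("200/300", "Change order - showstopper", 2058),
    ("200/300", "Order status - high", 1852),
    ("200/300", "Change order - high", 1441),
    ("200/300", "Order status - medium", 1323),
    ("200/300", "Change order - medium", 1029),
]

# Merge both sources and rank by failure frequency, highest first.
top_list = sorted(log_faults + defect_faults, key=lambda entry: entry[2], reverse=True)

for rank, (code, fault, frequency) in enumerate(top_list, start=1):
    print(rank, code, fault, frequency)
```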


Web Example: Fault-Failure Mapping (Result Analysis)
• A large number of failures were caused by a small number of faults with high usage frequencies
• Fixing faults with a high usage frequency and a high impact could achieve better efficiency in reliability improvement
• By fixing the top 6.8% of faults, the total failures were reduced by about 57%
• Similarly, fixing the top 10%, 15%, and 20% of faults reduced failures by about 66%, 71%, and 75%, respectively
• Defect data repository and web server log recorded failures have insignificant overlap => both are needed for effective reliability improvement
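The kind of computation behind these figures is a cumulative share: sort faults by failure frequency and accumulate the fraction of failures removed as the top faults are fixed. The sketch below uses only the 11 frequencies shown in the Step 7 top list, so it illustrates the calculation but does not reproduce the percentages above, which are based on the full fault set.

```python
def cumulative_failure_share(frequencies: list[int]) -> list[float]:
    """Fraction of all listed failures removed after fixing the top 1, 2, ... faults."""
    ordered = sorted(frequencies, reverse=True)
    total = sum(ordered)
    shares, running = [], 0
    for frequency in ordered:
        running += frequency
        shares.append(running / total)
    return shares


# Failure frequencies of the 11 entries in the Step 7 top list only.
top_list_frequencies = [5805, 3687, 3537, 2646, 2593, 2058, 1852, 1441, 1323, 1029, 721]
shares = cumulative_failure_share(top_list_frequencies)
print([round(s, 3) for s in shares])
```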


Diversity: Value Perspective
• Component Dependability Attribute:
    • Direct Measurement and Assessment: might not capture what customers truly care about
    • Different value attached to different dependability attributes
• Value-based software quality analysis:
    • Quantitative model for software dependability ROI analysis
    • Avoid one-size-fits-all
• Value-based process: experience at NASA/USC (Huang and Boehm) extend to dependability
• Mapping to value-based perspective more meaningful to target customers


Value Maximization
• Single objective function:
    • Relative importance
    • Trade-off possible
    • Quantification scheme
    • Gradient scale to select component(s)
    • Compare to DEA
• General cases
    • Combination with DEA
    • Diversity as a separate dimension possible
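A single objective function can be sketched as a weighted sum of dependability attribute scores, with the weights expressing the relative importance the customer attaches to each attribute. The presentation does not give the form of the function, so the weighted sum is an assumption, and the weights, component names, and scores are hypothetical.

```python
def value_score(attributes: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted sum of attribute scores (assumed form of the single objective function)."""
    return sum(weight * attributes[name] for name, weight in weights.items())


# Hypothetical customer weights and component scores, for illustration only.
weights = {"reliability": 0.7, "security": 0.3}
components = {
    "A": {"reliability": 0.99, "security": 0.60},
    "B": {"reliability": 0.90, "security": 0.90},
}

scores = {name: value_score(attrs, weights) for name, attrs in components.items()}
best = max(scores, key=scores.get)
print(scores, best)
```

Unlike a frontier-based analysis, which may keep both components, the objective function yields a single ranking, so the choice of weights carries the trade-off.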



Experimental Evaluation
• Testbed
    • Basis: OPs
    • Focus on problems and system behavior under injected or simulated problems
• Fault Injection for Reliability and Fault Tolerance
    • Reliability mapping for injected faults
    • Use of fault seeding models
    • Direct fault tolerance evaluation
• Security Threat Simulation
    • Focus 1: likely scenarios
    • Focus 2: coverage via diversity
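As one example of a fault seeding model, the classic Mills model estimates the number of indigenous faults from the proportion of seeded faults that testing recovers, assuming seeded and indigenous faults are equally likely to be found. The presentation does not say which seeding model is used, and the counts below are hypothetical.

```python
def estimate_indigenous_faults(seeded: int, seeded_found: int, indigenous_found: int) -> float:
    """Mills seeding estimate: N = indigenous_found * seeded / seeded_found."""
    if seeded_found <= 0 or seeded_found > seeded:
        raise ValueError("need 0 < seeded_found <= seeded")
    return indigenous_found * seeded / seeded_found


# Hypothetical counts, for illustration only.
estimated_total = estimate_indigenous_faults(seeded=20, seeded_found=15, indigenous_found=30)
estimated_remaining = estimated_total - 30
print(estimated_total, estimated_remaining)
```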


Summary and Future Work
• Overall Framework
• External Environment Profiling
• Component Dependability:
    • Direct Measurement and Assessment
    • Indirect Assessment via Internal Contributor Mapping
    • Value Perspective
• Experimental Evaluation
    • Fault Injection for Reliability and Fault Tolerance
    • Security Threat Simulation
• Summary and Future Work
