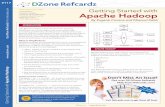

Data Governance in Apache Falcon - Hadoop Summit Brussels 2015

Uploaded by: venkatesh-seetharam

Category: Technology

Transcript of Data Governance in Apache Falcon - Hadoop Summit Brussels 2015

Page 1 © Hortonworks Inc. 2011 – 2014. All Rights Reserved

Apache Falcon: Hadoop Data Governance

Hortonworks. We do Hadoop.

Page 2

Venkatesh Seetharam

Architect, Data Management

Hortonworks Inc.

PMC, Apache Falcon

PMC, Apache Knox

Proposed Apache Atlas

Page 3

Agenda

Overview Components Features Governance

• Motivation

• High Level

Summary

• Entities:

• Clusters

• Feeds

• Process

• Monitoring

• Tracing

• Replication

• Retention

• Governance

• Replication to Cloud

• Recipes

• User Interface

Page 4

Motivation for Apache Falcon

Page 5

Simple Data Pipeline…

Page 6

Add Data Management Capability to the Pipeline

Page 7

Pipeline Becomes Considerably More Complex

Results in Many Complex Oozie Workflows

Data Management Requirements

Page 8

Introduction to Apache Falcon

Page 9

Falcon Overview

Centrally manage data lifecycle
– Centralized definition & management of pipelines for data ingest, processing & export

Business continuity & disaster recovery
– Out-of-the-box policies for data replication & retention
– End-to-end monitoring of data pipelines

Address audit & compliance requirements
– Visualize data pipeline lineage
– Track data pipeline audit logs
– Tag data with business metadata

The data traffic cop

Page 10

Complicated Pipeline Simplified with Apache Falcon

Falcon Generates and Instruments Oozie Workflows

Page 11

Falcon Architecture

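Diagram: the centralized Falcon orchestration framework. Data stewards + Hadoop admins define entity specs through the API & UI or Ambari; the Falcon server (with JMS messaging) turns them into scheduled jobs in Oozie and tracks process status. Oozie runs the Hadoop ecosystem tools (MapRed / Pig / Hive / Sqoop / Flume / DistCP) against HDFS / Hive.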

Page 12

Falcon Basic Concepts

• Cluster: Represents the “interfaces” to a Hadoop cluster
• Feed: Defines a “dataset”: a file, Hive table or stream
• Process: Consumes feeds, invokes processing logic & produces feeds

All these put together represent ‘Data Pipelines’ in Hadoop

Diagram: a PROCESS RUNS ON a CLUSTER; a FEED (aka DATASET) is STORED IN a CLUSTER and is INPUT TO a PROCESS; a PROCESS CREATES a FEED.
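The relationships in the diagram can be modelled as a small graph. The sketch below is a hypothetical Python model, not Falcon's own classes (Falcon entities are written as specs and handled by the Java server); `Cluster`, `Feed`, `Process` and `pipeline_edges` are names invented here, and the interface URLs in the example are placeholders.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class Cluster:
    """Hypothetical model: the named 'interfaces' to a Hadoop cluster."""
    name: str
    interfaces: Dict[str, str] = field(default_factory=dict)


@dataclass
class Feed:
    """A dataset (file, Hive table or stream) STORED IN one or more clusters."""
    name: str
    clusters: List[str] = field(default_factory=list)


@dataclass
class Process:
    """RUNS ON a cluster, takes feeds as INPUT and CREATES output feeds."""
    name: str
    cluster: str
    inputs: List[str] = field(default_factory=list)
    outputs: List[str] = field(default_factory=list)


def pipeline_edges(feeds: List[Feed], processes: List[Process]) -> List[Tuple[str, str, str]]:
    """Return (source, relation, target) triples matching the diagram's edges."""
    edges = []
    for feed in feeds:
        for cluster in feed.clusters:
            edges.append((feed.name, "STORED IN", cluster))
    for proc in processes:
        edges.append((proc.name, "RUNS ON", proc.cluster))
        for name in proc.inputs:
            edges.append((name, "INPUT TO", proc.name))
        for name in proc.outputs:
            edges.append((proc.name, "CREATES", name))
    return edges


if __name__ == "__main__":
    # Placeholder endpoints, for illustration only.
    primary = Cluster("primary", {"write": "hdfs://namenode:8020",
                                  "workflow": "http://oozie:11000/oozie"})
    raw = Feed("raw-clicks", [primary.name])
    clean = Feed("clean-clicks", [primary.name])
    cleanse = Process("cleanse-clicks", primary.name, [raw.name], [clean.name])
    for edge in pipeline_edges([raw, clean], [cleanse]):
        print(edge)
```

Entities reference each other only by name, which keeps each definition independent and re-usable.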

Page 13

Data Pipeline: Definition

• Flexible pipeline specification
– JAXB / JSON / Java / XML
– Modular: clusters, feeds & processes defined separately and then linked together
– Easy to re-use across multiple pipelines

• Out-of-the-box policies
– Predefined policies for replication, late data handling & eviction
– Easy customization of policies

• Extensible
– Plug in external solutions at any step of the pipeline
– E.g., invoke third-party data obfuscation components
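As an illustration of the modular, XML-based specification, the Python sketch below builds a minimal feed entity with the standard library's `xml.etree.ElementTree`. The element and attribute names (`frequency`, `late-arrival`, `clusters`/`cluster`, `validity`, `retention`, `locations`, `ACL`, `schema`) and the namespace are modelled on Falcon's feed specification as I understand it and should be checked against the feed XSD of your Falcon release; the function `feed_spec`, its defaults, the path and the ACL permission value are hypothetical. The feed refers to its cluster only by name, which is what lets the entities be defined separately and linked together.

```python
import xml.etree.ElementTree as ET

# Assumed namespace of Falcon feed specs; verify against your release's XSD.
FEED_NS = "uri:falcon:feed:0.1"
ET.register_namespace("", FEED_NS)


def _q(tag: str) -> str:
    """Qualify a tag name with the feed namespace."""
    return "{%s}%s" % (FEED_NS, tag)


def feed_spec(name, cluster, path, *, frequency="hours(1)", retention="days(90)",
              cutoff="hours(4)", owner="etl", group="users",
              start="2015-01-01T00:00Z", end="2016-01-01T00:00Z"):
    """Build a minimal (hypothetical) feed entity as an XML string."""
    feed = ET.Element(_q("feed"), {"name": name})
    ET.SubElement(feed, _q("frequency")).text = frequency
    # How long after an instance's nominal time data may still arrive.
    ET.SubElement(feed, _q("late-arrival"), {"cut-off": cutoff})
    clusters = ET.SubElement(feed, _q("clusters"))
    # The link to the separately defined cluster entity is just its name.
    entry = ET.SubElement(clusters, _q("cluster"), {"name": cluster, "type": "source"})
    ET.SubElement(entry, _q("validity"), {"start": start, "end": end})
    ET.SubElement(entry, _q("retention"), {"limit": retention, "action": "delete"})
    locations = ET.SubElement(feed, _q("locations"))
    ET.SubElement(locations, _q("location"), {"type": "data", "path": path})
    ET.SubElement(feed, _q("ACL"), {"owner": owner, "group": group, "permission": "0755"})
    ET.SubElement(feed, _q("schema"), {"location": "/none", "provider": "none"})
    return ET.tostring(feed, encoding="unicode")


if __name__ == "__main__":
    print(feed_spec("raw-clicks", "primary", "/data/clicks/${YEAR}/${MONTH}/${DAY}/${HOUR}"))
```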

Page 14

Flexibility in Processing

Common types of processing engines can be tied to Falcon processes

Oozie workflows, Pig scripts, HQL scripts

Page 15

Data Pipeline: Monitoring

Centralized monitoring of data pipelines with Falcon + Ambari:
• Pipeline run alerts
• Pipeline run history
• Pipeline scheduling

Diagram: data flowing through raw → clean → prep stages on Hadoop Cluster-1 (primary site) and on Hadoop Cluster-2 (DR site).
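Pipeline scheduling amounts to materializing one run (instance) per frequency period inside an entity's validity window. A minimal sketch, assuming Falcon-style frequency expressions such as `hours(6)`; only minutes, hours and days are handled, and `parse_frequency`/`instance_times` are hypothetical helpers rather than Falcon code.

```python
import re
from datetime import datetime, timedelta
from typing import List

# Fixed-length units only; months(n) is left out because it has no fixed timedelta.
_FREQ = re.compile(r"(minutes|hours|days)\((\d+)\)")


def parse_frequency(expr: str) -> timedelta:
    """Turn a frequency expression such as 'hours(6)' into a timedelta."""
    m = _FREQ.fullmatch(expr.strip())
    if m is None:
        raise ValueError("unsupported frequency: %r" % expr)
    step = timedelta(**{m.group(1): int(m.group(2))})
    if step <= timedelta(0):
        # A zero step would never advance the schedule.
        raise ValueError("frequency must be positive: %r" % expr)
    return step


def instance_times(start: datetime, end: datetime, frequency: str) -> List[datetime]:
    """Nominal instance times in [start, end), one per frequency period."""
    step = parse_frequency(frequency)
    times, t = [], start
    while t < end:
        times.append(t)
        t += step
    return times


if __name__ == "__main__":
    day = datetime(2015, 4, 15)
    for t in instance_times(day, day + timedelta(days=1), "hours(6)"):
        print(t.strftime("%Y-%m-%dT%H:%MZ"))
```

Each materialized instance is what then shows up in the run history and, on failure, in the run alerts.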

Page 16

Replication with Falcon

Diagram: on the primary Hadoop cluster, data moves through staged, cleansed, conformed and presented stages; Falcon replicates the staged and presented data to the failover Hadoop cluster. BI / analytics (BusinessObjects BI) is shown as the consumer of the data.

• Falcon manages workflow and replication
• Enables business continuity without requiring full data reprocessing
• Failover clusters can be smaller than primary clusters
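Avoiding full reprocessing on the failover side can be illustrated with a simple set difference: replicate only those instances of the selected stages that the target does not already have. This is a hypothetical sketch of the idea, not Falcon's replication mechanism (Falcon generates Oozie workflows that copy data with tools such as DistCP); the function name, stage names and instance IDs are made up.

```python
from typing import Dict, Iterable, List, Set, Tuple


def instances_to_replicate(
    source: Dict[str, Set[str]],
    target: Dict[str, Set[str]],
    stages: Iterable[str],
) -> List[Tuple[str, str]]:
    """(stage, instance) pairs present on the source but missing on the target.

    Only the listed stages are considered, so the failover cluster can hold
    a subset of the data (e.g. staged and presented data only) and can
    therefore be smaller than the primary.
    """
    missing = []
    for stage in stages:
        for instance in sorted(source.get(stage, set()) - target.get(stage, set())):
            missing.append((stage, instance))
    return missing


if __name__ == "__main__":
    primary = {"staged": {"2015-04-14", "2015-04-15"},
               "cleansed": {"2015-04-15"},
               "presented": {"2015-04-15"}}
    failover = {"staged": {"2015-04-14"}}
    print(instances_to_replicate(primary, failover, ["staged", "presented"]))
```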

Page 17

Data Retention with Falcon

Diagram: a retention policy attached to each data stage (staged, cleansed, conformed and presented data), with policies such as retain 5 years, retain 3 years, and retain last copy only.

• Sophisticated retention policies expressed in one place
• Simplify data retention for audit, compliance, or data reprocessing
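Evaluating a retention policy comes down to comparing each instance's time against a cut-off. The sketch below is a hypothetical helper, not Falcon's eviction code: `limit=None` stands in for "retain last copy only", and year-based limits are approximated in days.

```python
from datetime import datetime, timedelta
from typing import List, Optional


def instances_to_evict(instances: List[datetime], now: datetime,
                       limit: Optional[timedelta]) -> List[datetime]:
    """Return the instances a retention policy would delete, oldest first.

    limit=None models 'retain last copy only': everything except the newest
    instance is evicted. Otherwise, instances older than now - limit go.
    """
    if not instances:
        return []
    if limit is None:
        newest = max(instances)
        return sorted(t for t in instances if t != newest)
    cutoff = now - limit
    return sorted(t for t in instances if t < cutoff)


if __name__ == "__main__":
    now = datetime(2015, 4, 15)
    copies = [now - timedelta(days=d) for d in range(0, 2000, 400)]
    # "Retain 3 years", approximated here as 3 * 365 days.
    print(instances_to_evict(copies, now, timedelta(days=3 * 365)))
    # "Retain last copy only".
    print(instances_to_evict(copies, now, None))
```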

Page 18

Late Data Handling with Falcon

Diagram: online transaction data (via Sqoop) and web log data (via FTP) are staged and then combined; Falcon waits up to 4 hours for the FTP data to arrive.

• Processing waits until all required input data is available
• Checks for late data arrivals and re-triggers processing as necessary
• Eliminates writing complex data-handling rules within applications
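The waiting and re-triggering behaviour can be summarised as a small decision function for one process instance. This is a simplified, hypothetical model: the four-hour window comes from the slide, while the function name, the feed names and the "timed-out" outcome are assumptions for illustration. Falcon's actual late-data handling is configured per feed and per process and may differ in detail.

```python
from datetime import datetime, timedelta
from typing import Dict, Iterable, Optional


def next_action(now: datetime, nominal: datetime, arrivals: Dict[str, datetime],
                required: Iterable[str], cutoff: timedelta,
                last_run: Optional[datetime] = None) -> str:
    """Return 'run', 'wait', 'timed-out', 'rerun' or 'done' for one instance.

    arrivals maps feed name -> time its data for this instance landed;
    feeds whose data has not arrived yet are simply absent.
    """
    deadline = nominal + cutoff
    if last_run is None:
        # Not run yet: start only once every required input is available.
        if all(name in arrivals for name in required):
            return "run"
        return "wait" if now < deadline else "timed-out"
    # Already ran: re-trigger if any input landed after the run but within the cut-off.
    late = [name for name, landed in arrivals.items() if last_run < landed <= deadline]
    return "rerun" if late else "done"


if __name__ == "__main__":
    nominal = datetime(2015, 4, 15)
    arrived = {"transactions": nominal + timedelta(minutes=10)}
    print(next_action(nominal + timedelta(hours=1), nominal, arrived,
                      ["transactions", "weblogs"], timedelta(hours=4)))
```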

Page 19

Metadata Services with HCatalog

Apache Falcon provides metadata services via HCatalog.

Diagram: raw Hadoop data (inconsistent, unknown, tool-specific access) versus HCatalog (table access, aligned metadata, REST API).

• Consistency of metadata and data models across tools (MapReduce, Pig, HBase, and Hive)
• Accessibility: share data as tables in and out of HDFS
• Availability: enables flexible, thin-client access via REST API

Shared table and schema management opens the platform.

Page 20

Data Governance in Apache Falcon

Page 21

Data Pipeline: Tracing

Data pipeline dependencies: view dependencies between clusters, datasets and processes (example feeds: purchase, customer, product and store feeds).

Data pipeline tagging: add arbitrary tags to feeds & processes (example: a credit feed tagged “Sensitive” and “Encrypted”).

Data pipeline audits (coming soon): know who modified a dataset, when, and into what.

Data pipeline lineage: analyze how a dataset reached a particular state (example: File-1, File-2, File-3).
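Lineage questions such as "how did this dataset reach its current state?" are a backward walk over process input/output records. The sketch below uses a plain dictionary instead of a graph database; `upstream`, the `Lineage` alias and the feed/process names are hypothetical.

```python
from collections import deque
from typing import Dict, List, Set, Tuple

# Hypothetical lineage records: process name -> (input feeds, output feeds).
Lineage = Dict[str, Tuple[List[str], List[str]]]


def upstream(feed: str, lineage: Lineage) -> Tuple[Set[str], Set[str]]:
    """Return (feeds, processes) that the given feed was derived from."""
    # Index: output feed -> processes that produced it.
    producers: Dict[str, List[str]] = {}
    for proc, (_, outputs) in lineage.items():
        for out in outputs:
            producers.setdefault(out, []).append(proc)
    feeds: Set[str] = set()
    procs: Set[str] = set()
    queue = deque([feed])
    # Breadth-first walk from the feed back towards its sources.
    while queue:
        current = queue.popleft()
        for proc in producers.get(current, []):
            if proc in procs:
                continue
            procs.add(proc)
            for inp in lineage[proc][0]:
                if inp not in feeds:
                    feeds.add(inp)
                    queue.append(inp)
    return feeds, procs


if __name__ == "__main__":
    records = {
        "join-sales": (["purchase", "customer"], ["sales"]),
        "enrich": (["sales", "product"], ["report"]),
    }
    print(upstream("report", records))
```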

Page 22

Custom Metadata in Falcon

• Metadata on Ingest (Content)
– What format do I expect my data to be in?
– What source systems did the data come from, and who owns them?
– Answer: ingest descriptors + HCat schema versioning

• Metadata for Security (Access Controls)
– How is each column blinded or encrypted?
– Can I trust that I can join data across tables? What if email is encrypted differently?
– Answer: security descriptors

• Metadata for Lineage (Source, History)
– How do I chase down the sources of data leading to reports and data?
– Answer: lineage carried forward per workflow

• Metadata for Marts (Usage Constraints, Enrichment)
– How do I materialize views and drop views as needed?

Page 23

Entity Dependency in Falcon

• Dependencies between Falcon entity definitions: cluster, feed & process
– Lineage attributes: workflows, input/output feed windows, user, input and output paths, workflow engine, input/output size

Page 24

Lineage in Falcon

Page 25

Audit, Tagging and Access Control

• Tagging
– Allows custom tags in entities
– Can decorate process entities with pipeline names

• Access Control
– Support for ACLs in entities
– Authorization driven by the ACLs in entities

• Audit
– Each execution is controlled by Falcon and runs are audited
– Correlate the execution with lineage (design)

• Search
– Search based on tags, pipelines, etc.
– Full-text search
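Tag-based search can be sketched as parsing each entity's tags and filtering on key/value pairs. The sketch assumes tags are written as a comma-separated `key=value` list (the form used in Falcon entity specs, to my understanding); `parse_tags`, `search_by_tags` and the example entities are hypothetical, and full-text search would be delegated to a real search backend.

```python
from typing import Dict, List


def parse_tags(spec: str) -> Dict[str, str]:
    """Parse 'k1=v1,k2=v2' into a dict, ignoring blank entries."""
    tags = {}
    for item in spec.split(","):
        item = item.strip()
        if not item:
            continue
        key, sep, value = item.partition("=")
        if not sep:
            raise ValueError("tag without '=': %r" % item)
        tags[key.strip()] = value.strip()
    return tags


def search_by_tags(entities: Dict[str, str], wanted: Dict[str, str]) -> List[str]:
    """Names of entities whose tags contain every wanted key/value pair."""
    hits = []
    for name, spec in entities.items():
        tags = parse_tags(spec)
        if all(tags.get(k) == v for k, v in wanted.items()):
            hits.append(name)
    return sorted(hits)


if __name__ == "__main__":
    catalog = {
        "credit-feed": "classification=sensitive,encryption=encrypted,owner=finance",
        "clicks-feed": "classification=public,owner=marketing",
    }
    print(search_by_tags(catalog, {"classification": "sensitive"}))
```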

Page 26

Technology

• Metadata Repository
– Titan graph database
– Pluggable backing store: BerkeleyDB JE, HBase

• Entity Metadata
– Tags and entities are stored in the repository

• Execution Metadata
– Execution metadata is stored in the repository as well; this is unique to Falcon
– Optional inputs

• Search
– Pluggable backend: Solr or Elasticsearch

Page 27

New in Apache Falcon 0.6.0

What is coming soon?

Page 28

DR Mirroring of HDFS with Recipes

• Mirroring for disaster recovery and business continuity use cases
• Customizable for multiple targets and frequency of synchronization
• Recipes: template model for re-use of complex workflows

Diagram: multiple recipes, each built from a workflow template (reduce, cleanse, replicate steps) plus its own properties.
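The recipe idea, a reusable workflow template filled in with per-recipe properties, can be sketched with Python's `string.Template`. The `$name` placeholder syntax is Python's and stands in for whatever syntax Falcon recipes actually use; the template text, property names and `instantiate` are hypothetical.

```python
from string import Template
from typing import Dict

# Hypothetical workflow template: the steps come from the slide (reduce,
# cleanse, replicate); the placeholder syntax is Python's string.Template.
WORKFLOW_TEMPLATE = Template(
    "workflow $name\n"
    "  reduce    input=$source_dir\n"
    "  cleanse   output=$staging_dir\n"
    "  replicate to=$target_cluster every=$frequency\n"
)


def instantiate(properties: Dict[str, str]) -> str:
    """Fill the shared template with one recipe's properties.

    substitute() raises KeyError if a property is missing, so an incomplete
    recipe fails early instead of producing a half-filled workflow.
    """
    return WORKFLOW_TEMPLATE.substitute(properties)


if __name__ == "__main__":
    print(instantiate({
        "name": "dr-mirror-sales",
        "source_dir": "/data/sales",
        "staging_dir": "/tmp/sales",
        "target_cluster": "backup",
        "frequency": "days(1)",
    }))
```

One template can back many mirroring jobs; only the properties differ per target and synchronization frequency.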

Page 29

Replication to Cloud

• Seamlessly replicate to cloud targets
• Replicate from the cloud as a source
• Support for Amazon S3 and Microsoft Azure

Diagram: an on-prem cluster replicating to and from the cloud.

Page 36

Q & A

Page 37

Thank you!

Learn more at: hortonworks.com/hadoop/falcon/