CyberShake Study 2.3 Technical Readiness Review

Study re-versioning

SCEC software uses year.month versioning
Suggest renaming this study to 13.4

Study 13.4 Scientific Goals

Compare Los Angeles-area hazard maps
― RWG V3.0.3 vs AWP-ODC-SGT (CPU)
― CVM-S4 vs CVM-H
● Different version of CVM-H than previous runs
● Adds San Bernardino, Santa Maria basins

286 sites (10 km mesh + points of interest)

Proposed Study sites

Study 13.4 Data Products

2 CVM-S4 Los Angeles-area hazard maps
2 CVM-H 11.9 Los Angeles-area hazard maps
Hazard curves for 286 sites – 10s, 5s, 3s
― Calculated with OpenSHA v13.0.0
1144 sets of 2-component SGTs
Seismograms for all ruptures (about 470M)
Peak amplitudes in DB for 10, 5, 3s
Access via CyberShake Data Product Site (in development)
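The 1144 SGT sets are consistent with running every site through each SGT code and velocity model pairing named in the scientific goals. A minimal Python sketch of that count, assuming (the slides do not state this outright) one SGT set per site for each code/model combination:

```python
from itertools import product

N_SITES = 286
SGT_CODES = ["RWG V3.0.3", "AWP-ODC-SGT"]
VELOCITY_MODELS = ["CVM-S4", "CVM-H 11.9"]

# Every SGT code paired with every velocity model (assumed run matrix).
combos = list(product(SGT_CODES, VELOCITY_MODELS))

hazard_maps = len(combos)            # one map per code/model pairing
sgt_sets = N_SITES * len(combos)     # one SGT set per site per pairing

print(f"{hazard_maps} hazard maps, {sgt_sets} SGT sets")
```

Under that assumption the four pairings match the 2 + 2 hazard maps listed above.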

Study 13.4 Notables

First AWP-ODC-SGT hazard maps
First CVM-H 11.9 hazard maps
First CyberShake use of Blue Waters (SGTs)
First CyberShake use of Stampede (post-processing)
Largest CyberShake calculation by 4x

Study 13.4 Parameters

0.5 Hz, deterministic post-processing
― 200 m spacing
CVMs
― Vs min = 500 m/s
― GTLs for both velocity models
UCERF 2
Latest rupture variation generator

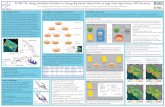

Verification work

4 sites (WNGC, USC, PAS, SBSM)
― RWG V3.0.3, CVM-S
― RWG V3.0.3, CVM-H
― AWP, CVM-S
― AWP, CVM-H
Plotted with previously calculated RWG V3
Expect RWG V3 slightly higher than the others

Verification plots: WNGC, USC, PAS, SBSM (CVM-S and CVM-H panels for each site)

Legend: RWG V3.0.3 - Green, AWP - Purple, RWG V3 - Orange

SBSM Velocity Profile

Study 13.4 SGT Software Stack

Pre AWP
― New production code
― Converts velocity mesh into AWP format
― Generates other AWP input files
SGTs
― AWP-ODC-SGT CPU v13.4 (from verification work)
― RWG V3.0.3 (from verification work)
Post AWP
― New production code
― Creates SGT header files for post-processing with AWP

Study 13.4 PP Software Stack

SGT Extraction
― Optimized MPI version with in-memory rupture variation generation
― Support for separate header files
― Same code as Study 2.2
Seismogram Synthesis / PSA calculation
― Single executable
― Same code as Study 2.2
Hazard Curve calculation
― OpenSHA v13.0

All codes tagged in SVN before study begins

Distributed Processing (SGTs)

Runs placed in pending file on Blue Waters (as scottcal)
Cron job calls build_workflow.py with run parameters
― build_workflow.py creates PBS scripts defining jobs with dependencies
Cron job calls run_workflow.py
― run_workflow.py submits PBS scripts using qsub dependencies
― Limited restart capability
Final workflow jobs ssh to shock, call handoff.py
― Performs BW->Stampede SGT file transfer (as scottcal)
― scottcal BW proxy must be resident on shock
― Registers SGTs in RLS
― Adds runs to pending file on shock for post-processing
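The qsub dependency chaining described above can be sketched roughly as follows. This is not the actual run_workflow.py, whose contents the slides do not show; the job names, script names, and the `submit_workflow` helper are all hypothetical. The only scheduler feature relied on is the PBS `-W depend=afterok:<jobid>` option, which holds a job until the listed jobs exit successfully.

```python
import subprocess


def qsub(cmd):
    """Run a qsub command and return the job ID it prints (needs PBS)."""
    result = subprocess.run(cmd, capture_output=True, text=True, check=True)
    return result.stdout.strip()


def submit_workflow(jobs, submit=qsub):
    """Submit jobs in order, making each wait for its parents to succeed.

    jobs: list of (name, script_path, [parent names]); parents come first.
    Returns a dict mapping job name to the ID returned by the scheduler.
    """
    job_ids = {}
    for name, script, parents in jobs:
        cmd = ["qsub"]
        if parents:
            dep = "afterok:" + ":".join(job_ids[p] for p in parents)
            cmd += ["-W", "depend=" + dep]
        cmd.append(script)
        job_ids[name] = submit(cmd)
    return job_ids


# Hypothetical stages for one SGT run; the names are illustrative only.
EXAMPLE_JOBS = [
    ("pre_awp", "pre_awp.pbs", []),
    ("awp_sgt", "awp_sgt.pbs", ["pre_awp"]),
    ("post_awp", "post_awp.pbs", ["awp_sgt"]),
    ("handoff", "handoff.pbs", ["post_awp"]),
]
```

Passing a stand-in for `submit` lets the chaining be checked on a machine without PBS. A sketch like this has no record of which jobs already finished, which is one way the limited restart capability noted above could arise.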

Distributed Processing (PP)

Cron job on shock submits post-processing runs
― Pegasus 4.3, from Git repository
― Condor 7.6.6
― Globus 5.0.3
Jobs submitted to Stampede (as tera3d)
― 8 sub-workflows
― Extract SGT jobs as standard jobs (128 cores)
― seis_psa jobs as PMC jobs (1024 cores)

Results staged back to shock, DB populated, curves generated

SGT Computational Requirements

SGTs on Blue Waters
Computational time: 8.4 M SUs
― RWG: 16k SUs/site x 286 sites = 4.6 M SUs
― AWP: 13.5k SUs/site x 286 sites = 3.8 M SUs
― 22.35 M SU allocation, 22 M SUs remaining
Storage: 44.7 TB
― 160 GB/site x 286 sites = 44.7 TB
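The SGT estimates above can be reproduced with a few lines of Python. This is only a check of the arithmetic using the per-site figures from the slide; the variable names are illustrative, and the storage conversion assumes 1 TB = 1024 GB, which is what makes the 44.7 TB figure come out.

```python
N_SITES = 286
RWG_SUS_PER_SITE = 16_000
AWP_SUS_PER_SITE = 13_500
SGT_GB_PER_SITE = 160
SUS_REMAINING = 22_000_000  # Blue Waters allocation remaining, per the slide

rwg_sus = RWG_SUS_PER_SITE * N_SITES      # 4,576,000 (about 4.6 M)
awp_sus = AWP_SUS_PER_SITE * N_SITES      # 3,861,000 (slide quotes 3.8 M)
total_sus = rwg_sus + awp_sus             # 8,437,000 (about 8.4 M)

# Assumes binary units (1 TB = 1024 GB).
storage_tb = SGT_GB_PER_SITE * N_SITES / 1024

print(f"SGT compute: {total_sus / 1e6:.2f} M SUs "
      f"({100 * total_sus / SUS_REMAINING:.0f}% of remaining allocation)")
print(f"SGT storage: {storage_tb:.1f} TB")
```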

PP computational requirements

Post-processing on Stampede
Computational time:
― 4000 SUs/site x 286 sites = 1.1 M SUs
― 4.1 M SU allocation, 3.9 M remaining
Storage: 44.7 TB input, 13 TB output
― 44.7 TB of SGT inputs; will need to rotate out
― Seismograms: 46 GB/site x 286 sites = 12.8 TB
― PSA files: 0.8 GB/site x 286 sites = 0.2 TB

Computational Analysis

Monitord for post-processing performance
― Will run after workflows have completed
― May need Python scripts for specific CS metrics
Scripts for SGT performance
― Cron job to monitor core usage on BW
― Does wrapping BW jobs in kickstart help?

Ideally, same high-level metrics as Studies 1.0 and 2.2

Long-term storage

44.7 TB SGTs
― To be archived to tape (NCSA? TACC? Somewhere else?)
13 TB Seismograms, PSA data
― Have been using SCEC storage - scec-04?
5.5 TB workflow logs
― Can compress after mining for stats
CyberShake database
― 1.4 B entries, 330 GB data (scaling issues?)

Estimated Duration

Limiting factors:
― Blue Waters queue time
● Uncertain how many sites in parallel
― Blue Waters → Stampede transfer
● 100 MB/sec seems sustainable from tests, but could get much worse
● 50 sites/day; unlikely to reach

Estimated completion by end of June
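A back-of-the-envelope look at the transfer limit, using the 160 GB/site SGT size and the 100 MB/sec rate quoted in the slides. The slides do not say how the 50 sites/day figure was derived, so this is only one plausible reading; it assumes decimal units (1 GB = 1000 MB) and a link that stays saturated around the clock.

```python
SGT_GB_PER_SITE = 160
N_SITES = 286
RATE_MB_PER_S = 100
SECONDS_PER_DAY = 86_400

# Data moved per day at the sustained rate (assumes 1 GB = 1000 MB).
gb_per_day = RATE_MB_PER_S * SECONDS_PER_DAY / 1000

# Upper bound on sites per day if transfer is the only bottleneck.
sites_per_day = gb_per_day / SGT_GB_PER_SITE

# Days of continuous transfer needed for all sites.
days_for_all = N_SITES / sites_per_day


def rate_needed_mb_per_s(target_sites_per_day):
    """Sustained rate required to move a given number of sites per day."""
    return target_sites_per_day * SGT_GB_PER_SITE * 1000 / SECONDS_PER_DAY


print(f"{sites_per_day:.0f} sites/day ceiling, {days_for_all:.1f} days total")
print(f"50 sites/day needs {rate_needed_mb_per_s(50):.1f} MB/s sustained")
```

On these assumptions, 50 sites/day sits just under the ceiling of a fully saturated link, which fits the note that it is unlikely to be reached in practice.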

Personnel Support

Scientists
― Tom Jordan, Kim Olsen, Rob Graves

Technical Lead
― Scott Callaghan

Job Submission / Run Monitoring
― Scott Callaghan, David Gill, Phil Maechling

Data Management
― David Gill

Data Users
― Feng Wang, Maren Boese, Jessica Donovan

Risks

Stampede becomes busier
― Post-processing still probably shorter than SGTs
CyberShake database unable to handle data
― Would need to create other DBs, distributed DB, change technologies
Stampede changes software stack
― Last time, necessitated change to MPI library
― Can use Kraken as backup PP site while resolving issues
New workflow system on Blue Waters
― May be as yet undetected bugs

Thanks for your time!