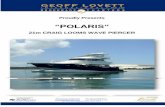

CSE 5526: Introduction to Neural Networks


Part I 1


Instructor: DeLiang Wang

Part I 2

What is this course about?

• AI (artificial intelligence) in the broad sense, in particular learning

• The human brain and its amazing ability, e.g. vision

Part I 3

Human brain

[Figure: the human brain, with the lateral fissure and central sulcus labeled.]

Part I 4

Brain versus computer

Brain

Computer

• Brain-like computation

  – Neural networks (NN or ANN)

  – Neural computation

• Discuss syllabus

Part I 5

A single neuron

Part I 6

A single neuron

Part I 7

Real neurons, real synapses

• Properties

  • Action potential (impulse) generation

  • Impulse propagation

  • Synaptic transmission & plasticity

  • Spatial summation

• Terminology

  • Neurons – units – nodes

  • Synapses – connections – architecture

  • Synaptic weight – connection strength (either positive or negative)

Part I 8

Model of a single neuron

Part I 9

Neuronal model

$$u_k = \sum_{j=1}^{m} w_{kj} x_j$$

Adder, weighted sum, linear combiner

$$v_k = u_k + b_k$$

Activation potential; $b_k$: bias

$$y_k = \varphi(v_k)$$

Output; $\varphi$: activation function
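
The three equations above can be traced step by step in a few lines of Python. This is only a sketch: the weights, inputs, and bias are made-up numbers, and `math.tanh` stands in for the activation function φ, which the slide leaves unspecified.

```python
import math

def neuron_output(weights, inputs, bias, activation):
    """Compute y_k = phi(v_k) for a single neuron k."""
    # u_k: weighted sum of the inputs (the linear combiner)
    u = sum(w * x for w, x in zip(weights, inputs))
    # v_k: activation potential, the weighted sum plus the bias b_k
    v = u + bias
    # y_k: output after the activation function
    return activation(v)

# Hypothetical values, chosen only for illustration
w_k = [0.5, -1.0, 2.0]
x = [1.0, 2.0, 0.5]
b_k = 0.25

# tanh is an assumed choice of phi, not one named on the slide
y_k = neuron_output(w_k, x, b_k, math.tanh)
```

Here u_k = -0.5 and v_k = -0.25, so the output is tanh(-0.25).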

Part I 10

Another way of including bias

• Set $x_0 = +1$ and $w_{k0} = b_k$

• So we have

$$v_k = \sum_{j=0}^{m} w_{kj} x_j$$
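
A quick numerical check can make the equivalence concrete. The sketch below uses made-up numbers and hypothetical helper names; it computes the activation potential once with an explicit bias and once with the bias folded in as weight w_k0 on a constant input x_0 = +1.

```python
def potential_with_bias(weights, inputs, bias):
    """v_k = sum_{j=1..m} w_kj * x_j + b_k."""
    return sum(w * x for w, x in zip(weights, inputs)) + bias

def potential_augmented(weights, inputs, bias):
    """v_k = sum_{j=0..m} w_kj * x_j with x_0 = +1 and w_k0 = b_k."""
    aug_weights = [bias] + list(weights)   # w_k0 = b_k
    aug_inputs = [1.0] + list(inputs)      # x_0 = +1
    return sum(w * x for w, x in zip(aug_weights, aug_inputs))

# Hypothetical values, chosen only for illustration
w_k = [0.5, -1.0, 2.0]
x = [1.0, 2.0, 0.5]
b_k = 0.25

v_explicit = potential_with_bias(w_k, x, b_k)
v_augmented = potential_augmented(w_k, x, b_k)
```

Both calls give the same potential, which is why later slides can speak of w "with b incorporated".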

Part I 11

McCulloch-Pitts model

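Bipolar input:

$$x_i \in \{-1, 1\}$$

$$y = \varphi\left(\sum_{i=1}^{m} w_i x_i + b\right)$$

$$\varphi(v) = \begin{cases} 1 & \text{if } v \ge 0 \\ -1 & \text{if } v < 0 \end{cases}$$

A form of signum (sign) function

Note difference from textbook; also number of neurons in the brain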

Part I 12

McCulloch-Pitts model (cont.)

• Example logic gates (see blackboard)

• McCulloch-Pitts networks (introduced in 1943) are the first class of abstract computing machines: finite-state automata

  • Finite-state automata can compute any logic (Boolean) function
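
The logic-gate examples were worked on the blackboard and are not in the transcript. As a stand-in, here is one possible set of weights and biases that makes a McCulloch-Pitts neuron with bipolar inputs behave as AND, OR, and NOT. The specific values and the function names are assumptions chosen for illustration; many other choices work.

```python
def sign(v):
    """Threshold from the slide: +1 if v >= 0, otherwise -1."""
    return 1 if v >= 0 else -1

def mp_neuron(weights, bias, inputs):
    """McCulloch-Pitts neuron with bipolar inputs and output."""
    return sign(sum(w * x for w, x in zip(weights, inputs)) + bias)

# Illustrative parameter choices (assumptions, not taken from the lecture)
def and_gate(x1, x2):
    return mp_neuron([1, 1], -1, [x1, x2])

def or_gate(x1, x2):
    return mp_neuron([1, 1], 1, [x1, x2])

def not_gate(x1):
    return mp_neuron([-1], 0, [x1])

# Print the truth tables, with -1 as "false" and +1 as "true"
for x1 in (-1, 1):
    for x2 in (-1, 1):
        print(x1, x2, "AND:", and_gate(x1, x2), "OR:", or_gate(x1, x2))
```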

Part I 13

Network architecture

• View an NN as a connected, directed graph, which defines its architecture

  • Feedforward nets: loop-free graph

  • Recurrent nets: with loops
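
Taking the graph view literally, telling a feedforward net from a recurrent one amounts to checking a directed graph for loops. The sketch below does this with Kahn's topological-sort algorithm; the edge-list representation and the function name `is_feedforward` are assumptions made for this example.

```python
from collections import deque

def is_feedforward(num_nodes, edges):
    """Return True if the directed graph has no loops (cycles)."""
    indegree = [0] * num_nodes
    successors = [[] for _ in range(num_nodes)]
    for src, dst in edges:
        successors[src].append(dst)
        indegree[dst] += 1

    # Kahn's algorithm: repeatedly remove nodes with no incoming edges
    queue = deque(n for n in range(num_nodes) if indegree[n] == 0)
    removed = 0
    while queue:
        node = queue.popleft()
        removed += 1
        for nxt in successors[node]:
            indegree[nxt] -= 1
            if indegree[nxt] == 0:
                queue.append(nxt)

    # Every node can be removed only when the graph is loop-free
    return removed == num_nodes

# Two hypothetical nets: nodes are integers, edges are (source, target) pairs
feedforward_edges = [(0, 2), (1, 2), (2, 3)]
recurrent_edges = [(0, 1), (1, 2), (2, 1)]   # 1 -> 2 -> 1 forms a loop
```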

Part I 14

Feedforward net

• Since the input layer consists of source nodes, it is typically not counted when we talk about the number of layers in a feedforward net

• For example, the architecture of 10-4-2 counts as a two-layer net
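
The layer-counting convention shows up directly in code: a 10-4-2 net has three groups of nodes but only two sets of weights, one per layer of computing neurons. The following sketch builds such a net with random weights. The helper names, the random initialization, and the tanh activation are all assumptions made for illustration.

```python
import math
import random

def make_layer(n_in, n_out, rng):
    """One weight row per neuron; the first weight in each row is the bias."""
    return [[rng.uniform(-1, 1) for _ in range(n_in + 1)] for _ in range(n_out)]

def forward(layers, x):
    """Propagate an input vector through each layer in turn."""
    for layer in layers:
        x_aug = [1.0] + list(x)   # x0 = +1 carries the bias
        x = [math.tanh(sum(w * xi for w, xi in zip(row, x_aug))) for row in layer]
    return x

rng = random.Random(0)
sizes = [10, 4, 2]   # 10 source nodes, 4 hidden neurons, 2 output neurons

# One weight matrix per pair of adjacent sizes: 10->4 and 4->2
layers = [make_layer(n_in, n_out, rng) for n_in, n_out in zip(sizes, sizes[1:])]
output = forward(layers, [0.0] * 10)
```

`len(layers)` is 2, matching the "two-layer" label, and `output` has one value per output neuron.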

Part I 15

A one-layer recurrent net

In this net, the input typically sets the initial condition of the output layer

Part I 16

Network components

• Three components characterize a neural net

  • Architecture

  • Activation function

  • Learning rule (algorithm)

Part I 17


Perceptrons

Part I 18

Perceptrons

• Architecture: one-layer feedforward net

  • Without loss of generality, consider a single-neuron perceptron

[Figure: a one-layer feedforward net with inputs x1, x2, …, xm and outputs y1, y2, ….]

Part I 19

Definition

$$y = \varphi(v)$$

$$v = \sum_{i=1}^{m} w_i x_i + b$$

$$\varphi(v) = \begin{cases} 1 & \text{if } v \ge 0 \\ -1 & \text{otherwise} \end{cases}$$

Hence a McCulloch-Pitts neuron, but with real-valued inputs

Part I 20

Pattern recognition

• With a bipolar output, the perceptron solves a two-class classification problem

  • Apples vs. oranges

• How do we learn to perform a classification task?

  • Task: The perceptron is given pairs of input $\mathbf{x}_p$ and desired output $d_p$. How do we find $\mathbf{w}$ (with $b$ incorporated) so that $y_p = d_p$ for all $p$?

Part I 21

Decision boundary

• The decision boundary for a given w:

$$g(\mathbf{x}) = \sum_{i=1}^{m} w_i x_i + b = 0$$

• g is also called the discriminant function for the perceptron, and it is a linear function of x. Hence it is a linear discriminant function
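
To see the discriminant function at work, the sketch below evaluates g(x) for a few points and classifies each by the sign of g. The weight vector and bias are made up for illustration, and so are the function names. With these values the boundary g(x) = 0 is the line x1 + x2 = 1.

```python
def discriminant(w, b, x):
    """g(x) = sum_i w_i * x_i + b; the decision boundary is g(x) = 0."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def classify(w, b, x):
    """Perceptron output: +1 if g(x) >= 0, otherwise -1."""
    return 1 if discriminant(w, b, x) >= 0 else -1

# Hypothetical parameters: the boundary is the line x1 + x2 = 1
w, b = [1.0, 1.0], -1.0

for point in ([2.0, 2.0], [0.0, 0.0], [0.5, 0.5]):
    print(point, discriminant(w, b, point), classify(w, b, point))
```

The point (0.5, 0.5) lies exactly on the boundary and is assigned to class +1, because the threshold function uses v ≥ 0.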

Part I 22

Example

• See blackboard

Part I 23

Decision boundary (cont.)

• For an m-dimensional input space, the decision boundary is an (m ‒ 1)-dimensional hyperplane perpendicular to w. The hyperplane separates the input space into two halves, with one half having y = 1, and the other half having y = -1

  • When b = 0, the hyperplane goes through the origin

[Figure: the (x1, x2) plane showing the boundary g = 0, the weight vector w, the regions y = +1 and y = -1, and patterns marked x and o.]

Part I 24

Linear separability

• For a set of input patterns xp, if there exists one w that separates d = 1 patterns from d = -1 patterns, then the classification problem is linearly separable

  • In other words, there exists a linear discriminant function that produces no classification error

  • Examples: AND, OR, XOR (see blackboard)

• A very important concept
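
The AND, OR, and XOR examples were done on the blackboard. As a rough substitute, the sketch below searches a small grid of integer weights and biases for a linear discriminant with zero classification error on each problem, using bipolar inputs. The grid range and helper names are assumptions. A failed grid search is not in general a proof of non-separability, although XOR is in fact not linearly separable.

```python
from itertools import product

def sign(v):
    return 1 if v >= 0 else -1

def find_separator(patterns, grid=range(-3, 4)):
    """Search small integer (w1, w2, b) for a zero-error linear discriminant."""
    for w1, w2, b in product(grid, repeat=3):
        if all(sign(w1 * x1 + w2 * x2 + b) == d for (x1, x2), d in patterns):
            return (w1, w2, b)
    return None   # nothing found within the grid

# All four bipolar input pairs, paired with the desired output d
inputs = list(product((-1, 1), repeat=2))
and_patterns = [(x, 1 if x == (1, 1) else -1) for x in inputs]
or_patterns = [(x, -1 if x == (-1, -1) else 1) for x in inputs]
xor_patterns = [(x, 1 if x[0] != x[1] else -1) for x in inputs]

for name, pats in (("AND", and_patterns), ("OR", or_patterns), ("XOR", xor_patterns)):
    print(name, find_separator(pats))
```

For XOR the two d = 1 patterns require b ≥ 0 while the two d = -1 patterns require b < 0, so no choice of weights can succeed.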

Part I 25

Linear separability: a more general illustration

Part I 26

Perceptron learning rule

• Strengthen an active synapse if the postsynaptic neuron fails to fire when it should have fired; weaken an active synapse if the neuron fires when it should not have fired

  • Formulated by Rosenblatt based on biological intuition

Part I 27

Quantitatively

$$w(n+1) = w(n) + \Delta w(n) = w(n) + \eta\,[d(n) - y(n)]\,x(n)$$

n: iteration number; η: step size or learning rate

In vector form

$$\Delta \mathbf{w} = \eta\,[d - y]\,\mathbf{x}$$
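
The update rule translates almost line for line into code. The following is a minimal sketch, not the course's reference implementation. It assumes zero initial weights, stores the bias as `w[0]` with a constant input x0 = +1, cycles through the patterns in a fixed order, and stops after the first error-free pass. The training set (bipolar AND) and the function name are illustrative choices.

```python
def sign(v):
    return 1 if v >= 0 else -1

def train_perceptron(patterns, eta=0.5, max_epochs=100):
    """Perceptron learning rule with the bias stored as w[0] (x0 = +1).

    Returns (weights, number_of_updates); stops after an error-free epoch
    or after max_epochs passes, whichever comes first.
    """
    n_inputs = len(patterns[0][0])
    w = [0.0] * (n_inputs + 1)
    updates = 0
    for _ in range(max_epochs):
        errors = 0
        for x, d in patterns:
            x_aug = [1.0] + list(x)
            y = sign(sum(wi * xi for wi, xi in zip(w, x_aug)))
            if y != d:
                # w(n+1) = w(n) + eta * [d(n) - y(n)] * x(n)
                w = [wi + eta * (d - y) * xi for wi, xi in zip(w, x_aug)]
                errors += 1
                updates += 1
        if errors == 0:
            break
    return w, updates

# Bipolar AND: a small, linearly separable training set (illustrative choice)
and_patterns = [((-1, -1), -1), ((-1, 1), -1), ((1, -1), -1), ((1, 1), 1)]
weights, n_updates = train_perceptron(and_patterns)
```

On this data a single update is enough: the run ends with bias -1 and weights (1, 1). When y already equals d the factor d - y is zero, so correctly classified patterns leave w unchanged.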

Part I 28

Geometric interpretation

• Assume η = 1/2

[Figure, built up over slides 28 to 33: the (x1, x2) plane with patterns marked x (d = 1) and o (d = -1); the weight vector starts at w(0) and is updated in turn to w(1), w(2), and w(3).]

Part I 34

Geometric interpretation

Each weight update moves w closer to d = 1 patterns, or away from d = -1 patterns. w(3) solves the classification problem

[Figure: the (x1, x2) plane with patterns marked x (d = 1) and o (d = -1) and the final weight vector w(3).]
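
A short calculation shows why η = 1/2 is a convenient choice for the picture. Substituting it into the learning rule Δw = η[d − y]x, and using the fact that d and y are both ±1, gives

$$\Delta\mathbf{w} = \tfrac{1}{2}\,(d - y)\,\mathbf{x} = \begin{cases} +\mathbf{x} & \text{if } d = 1,\ y = -1 \\ -\mathbf{x} & \text{if } d = -1,\ y = 1 \\ \mathbf{0} & \text{if } y = d \end{cases}$$

So each update simply adds a misclassified d = 1 pattern to w or subtracts a misclassified d = -1 pattern from it, which matches the geometric description of w moving toward or away from the pattern.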

Part I 35

Perceptron convergence theorem

• Theorem: If a classification problem is linearly separable, a perceptron will reach a solution in a finite number of iterations

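• [Proof] Given a finite number of training patterns, because of linear separability, there exists a weight vector $\mathbf{w}_o$ so that

$$d_p \mathbf{w}_o^T \mathbf{x}_p \ge \alpha > 0 \qquad (1)$$

where

$$\alpha = \min_p \left( d_p \mathbf{w}_o^T \mathbf{x}_p \right)$$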

Part I 36

Proof (cont.)

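• We assume that the initial weights are all zero. Let $N_p$ denote the number of times $\mathbf{x}_p$ has been used for actually updating the weight vector at some point in learning

At that time:

$$\mathbf{w} = \sum_p N_p\, \eta\, [d_p - y_p]\, \mathbf{x}_p = 2\eta \sum_p N_p\, d_p\, \mathbf{x}_p$$

(An update occurs only when $y_p = -d_p$, so $d_p - y_p = 2 d_p$.)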

Part I 37

Proof (cont.)

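• Consider first $\mathbf{w}_o^T \mathbf{w}$:

$$\mathbf{w}_o^T \mathbf{w} = 2\eta \sum_p N_p\, d_p\, \mathbf{w}_o^T \mathbf{x}_p \ge 2\eta\alpha \sum_p N_p = 2\eta\alpha P \qquad (2)$$

where the inequality is due to (1) and

$$P = \sum_p N_p$$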

Part I 38

Proof (cont.)

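• Now consider the change in square length $\|\mathbf{w}\|^2$ after a single update by $\mathbf{x}$:

$$\Delta \|\mathbf{w}\|^2 = \|\mathbf{w} + \Delta\mathbf{w}\|^2 - \|\mathbf{w}\|^2 = \|\mathbf{w} + 2\eta d\,\mathbf{x}\|^2 - \|\mathbf{w}\|^2 = 4\eta^2 \|\mathbf{x}\|^2 + 4\eta d\,\mathbf{w}^T \mathbf{x} \le 4\eta^2 \beta$$

since upon an update, $d(\mathbf{w}^T \mathbf{x}) \le 0$, where

$$\beta = \max_p \|\mathbf{x}_p\|^2$$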

Part I 39

Proof (cont.)

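• By summing for P steps we have the bound:

$$\|\mathbf{w}\|^2 \le 4\eta^2 \beta P \qquad (3)$$

• Now square the cosine of the angle between $\mathbf{w}_o$ and $\mathbf{w}$; by (2) and (3) we have

$$1 \ge \frac{(\mathbf{w}_o^T \mathbf{w})^2}{\|\mathbf{w}_o\|^2\, \|\mathbf{w}\|^2} \ge \frac{4\eta^2 \alpha^2 P^2}{\|\mathbf{w}_o\|^2\, 4\eta^2 \beta P} = \frac{\alpha^2 P}{\beta\, \|\mathbf{w}_o\|^2}$$

where the first inequality is the Cauchy-Schwarz inequality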

Part I 40

Proof (cont.)

• Thus, P must be finite to satisfy the above inequality: rearranging gives $P \le \beta\, \|\mathbf{w}_o\|^2 / \alpha^2$. This completes the proof

• Remarks

  • In the case of w(0) = 0, the learning rate has no effect on the proof. That is, the theorem holds no matter what η (η > 0) is

  • The solution weight vector is not unique
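
The proof can be checked numerically on a toy problem. Rearranging its final inequality gives P ≤ β‖w_o‖²/α², where P is the total number of weight updates. The sketch below trains from w(0) = 0 on bipolar OR, counts the updates, and compares the count with this bound for several learning rates. The data set, the hand-picked separating vector `w_o`, and the function names are assumptions made for illustration.

```python
def sign(v):
    return 1 if v >= 0 else -1

def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

def count_updates(patterns, eta, max_epochs=1000):
    """Train from w(0) = 0 and return P, the total number of weight updates."""
    w = [0.0] * len(patterns[0][0])
    total = 0
    for _ in range(max_epochs):
        changed = False
        for x, d in patterns:
            y = sign(dot(w, x))
            if y != d:
                w = [wi + eta * (d - y) * xi for wi, xi in zip(w, x)]
                total += 1
                changed = True
        if not changed:
            return total
    raise RuntimeError("no error-free epoch within max_epochs")

# Bipolar OR with x0 = +1 prepended, so the bias is part of w (illustrative data)
patterns = [((1, -1, -1), -1), ((1, -1, 1), 1), ((1, 1, -1), 1), ((1, 1, 1), 1)]
w_o = (1, 1, 1)   # one separating weight vector, picked by hand

alpha = min(d * dot(w_o, x) for x, d in patterns)   # margin from inequality (1)
beta = max(dot(x, x) for x, _ in patterns)          # largest squared pattern length
bound = beta * dot(w_o, w_o) / alpha ** 2

updates_by_eta = {eta: count_updates(patterns, eta) for eta in (0.1, 0.5, 2.0)}
```

For this data α = 1, β = 3, and ‖w_o‖² = 3, so the bound is 9 updates. Training needs 3 updates for each of the learning rates tried, consistent with the remark that η has no effect when w(0) = 0.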

Part I 41

Generalization

• Performance of a learning machine on test patterns not used during training

• Example: class 1: handwritten “m”; class 2: handwritten “n”

  • See blackboard

• Perceptrons generalize by deriving a decision boundary in the input space. Selection of training patterns is thus important for generalization