CS230: Lecture 2 Deep Learning Intuition (cs230.stanford.edu/fall2018/slides_week2.pdf)

Kian Katanforoosh, Andrew Ng, Younes Bensouda Mourri

CS230: Lecture 2 Deep Learning Intuition

Kian Katanforoosh

Recap: Learning Process

Model = Architecture + Parameters

Input → Model → Output (e.g. 0) → Loss → Gradients → update Parameters

Things that can change:
- Activation function
- Optimizer
- Hyperparameters
- …

Logistic Regression as a Neural Network

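[Figure: image2vector flattens the image into a vector of pixel values $(255, 231, \ldots, 94, 142)^T$. Each entry is divided by 255 to give the inputs $x_1^{(i)}, x_2^{(i)}, \ldots, x_{n-1}^{(i)}, x_n^{(i)}$. A single neuron computes $\sigma(w^T x^{(i)} + b) = 0.73$; since 0.73 > 0.5, the prediction is “it’s a cat”.]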
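The pipeline on this slide (image2vector, division by 255, then $\sigma(w^T x + b)$ and a 0.5 threshold) can be sketched in NumPy as follows. This is only an illustrative sketch: the random image, the all-zero weights `w` and the bias `b = 1.0` are assumptions chosen so that the output is $\sigma(1) \approx 0.73$, the value shown on the slide; they are not learned parameters.

```python
import numpy as np

def image2vector(image):
    """Flatten an (H, W, C) image into a column vector of shape (H*W*C, 1)."""
    return image.reshape(-1, 1)

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def predict_cat(image, w, b, threshold=0.5):
    """Return (probability, decision) for a single image."""
    x = image2vector(image) / 255.0      # normalize pixel values to [0, 1]
    a = sigmoid(w.T @ x + b).item()      # sigma(w^T x + b) as a Python float
    return a, a > threshold

# Hypothetical inputs: a random 64x64 RGB image and untrained parameters.
rng = np.random.default_rng(0)
image = rng.integers(0, 256, size=(64, 64, 3))
w = np.zeros((64 * 64 * 3, 1))           # assumption: all-zero weights
b = 1.0                                  # assumption: bias chosen so sigma(b) is about 0.73

prob, is_cat = predict_cat(image, w, b)
print(round(prob, 2), is_cat)
```

With trained parameters, `w` and `b` would come from minimizing a loss over labelled images rather than being set by hand.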

Multi-class

[Figure: the same normalized input vector $x_1^{(i)}, \ldots, x_n^{(i)}$ feeds three neurons, each computing its own $\sigma(w^T x^{(i)} + b)$, one per class. Cat? 0.73 > 0.5. Giraffe? 0.04 < 0.5. Dog? 0.12 < 0.5.]

Neural Network (Multi-class)

[Figure: the same diagram without output values: the inputs $x_1^{(i)}, \ldots, x_n^{(i)}$ (pixel values divided by 255) feed three neurons, each computing $\sigma(w^T x^{(i)} + b)$.]

Neural Network (1 hidden layer)

[Figure: the inputs $x_1^{(i)}, \ldots, x_n^{(i)}$ (pixel values divided by 255) feed a hidden layer with activations $a_1^{[1]}, a_2^{[1]}, a_3^{[1]}$, followed by an output layer with activation $a_1^{[2]} = 0.73$; since 0.73 > 0.5, the prediction is Cat.]
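A forward pass through a network with one hidden layer of three units and a single sigmoid output, as drawn on this slide, might look like the sketch below. The input size `n = 4`, the random initial weights and the use of sigmoid in the hidden layer are assumptions for illustration; the slide does not specify them.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def forward(x, W1, b1, W2, b2):
    """Forward pass: input -> hidden layer (3 units) -> output layer (1 unit)."""
    a1 = sigmoid(W1 @ x + b1)    # hidden activations a^[1], shape (3, 1)
    a2 = sigmoid(W2 @ a1 + b2)   # output activation a^[2], shape (1, 1)
    return a1, a2

# Hypothetical sizes and untrained parameters.
rng = np.random.default_rng(0)
n = 4                                        # assumption: 4 input features
x = rng.random((n, 1))                       # already-normalized input
W1 = rng.normal(scale=0.1, size=(3, n))
b1 = np.zeros((3, 1))
W2 = rng.normal(scale=0.1, size=(1, 3))
b2 = np.zeros((1, 1))

a1, a2 = forward(x, W1, b1, W2, b2)
is_cat = a2.item() > 0.5                     # same 0.5 threshold as on the slide
```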

Deeper network: Encoding

[Figure: the inputs $x_1^{(i)}, x_2^{(i)}, x_3^{(i)}, x_4^{(i)}$ feed a first hidden layer ($a_1^{[1]}, a_2^{[1]}, a_3^{[1]}, a_4^{[1]}$), a second hidden layer ($a_1^{[2]}, a_2^{[2]}, a_3^{[2]}$) and an output layer ($a_1^{[3]}$) that produces $\hat{y}^{(i)}$.]

Technique called “encoding”

Let’s build intuition on concrete applications

Today’s outline

I. Day’n’Night classification
II. Face verification and recognition
III. Neural style transfer (Art generation)
IV. Trigger-word detection
V. Shipping model

We will learn tips and tricks to:

- Analyze a problem from a deep learning approach
- Choose an architecture
- Choose a loss and a training strategy

Day’n’Night classification

Goal: Given an image, classify as taken “during the day” (0) or “during the night” (1)

1. Data? 10,000 images. Split? Bias?
2. Input? Resolution? (64, 64, 3)
3. Output? y = 0 or y = 1. Last activation? sigmoid
4. Architecture? Shallow network should do the job pretty well
5. Loss? $L = -[y \log(\hat{y}) + (1 - y) \log(1 - \hat{y})]$ (easy warm up)
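The binary cross-entropy loss above can be computed as in the following sketch, where `y` is the label (0 for day, 1 for night) and `y_hat` is the sigmoid output. The clipping constant `eps` is an assumption added here to avoid `log(0)`; it is not part of the formula on the slide.

```python
import numpy as np

def binary_cross_entropy(y, y_hat, eps=1e-12):
    """L = -[y log(y_hat) + (1 - y) log(1 - y_hat)] for one example or an array."""
    y_hat = np.clip(y_hat, eps, 1.0 - eps)   # assumption: clip to keep log finite
    return -(y * np.log(y_hat) + (1.0 - y) * np.log(1.0 - y_hat))

# A night image (y = 1) predicted with high and low confidence.
loss_confident = binary_cross_entropy(1.0, 0.9)   # small loss, equals -log(0.9)
loss_wrong = binary_cross_entropy(1.0, 0.1)       # large loss, equals -log(0.1)
print(loss_confident, loss_wrong)
```

The loss grows as the predicted probability moves away from the true label, which is what drives the gradients during training.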

Face Verification

Goal: A school wants to use Face Verification for validating student IDs in facilities (dining halls, gym, pool …)

1. Data? Picture of every student labelled with their name (e.g. Bertrand)
2. Input? Resolution? (412, 412, 3)
3. Output? y = 1 (it’s you) or y = 0 (it’s not you)

4. What architecture?

Simple solution: compare the database image and the input image by computing the distance pixel per pixel; if it is less than a threshold, then y = 1.

Issues:
- Background lighting differences
- A person can wear make-up, grow a beard…
- ID photo can be outdated

Our solution: encode information about a picture in a vector

[Figure: each of the two pictures passes through a deep network that outputs a 128-d encoding, $(0.931, 0.433, 0.331, \ldots, 0.942, 0.158, 0.039)^T$ and $(0.922, 0.343, 0.312, \ldots, 0.892, 0.142, 0.024)^T$. The distance between the encodings is 0.4; since 0.4 < threshold, y = 1.]

We gather all student face encodings in a database. Given a new picture, we compute its distance to the encoding of the card holder.
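Verification by comparing encodings can be sketched as below. The Euclidean (L2) distance and the threshold value of 0.5 are assumptions; the slide only says "distance" and "threshold". The two vectors contain just the six entries visible on the slide, not the full 128-d encodings, so the distance here does not reproduce the 0.4 shown in the figure.

```python
import numpy as np

def verify(encoding_db, encoding_new, threshold):
    """Return (distance, is_match) for two face encodings."""
    distance = float(np.linalg.norm(encoding_db - encoding_new))  # assumption: L2 distance
    return distance, distance < threshold

# Only the entries visible on the slide (the elided ones are unknown).
enc_card_holder = np.array([0.931, 0.433, 0.331, 0.942, 0.158, 0.039])
enc_new_picture = np.array([0.922, 0.343, 0.312, 0.892, 0.142, 0.024])

distance, is_match = verify(enc_card_holder, enc_new_picture, threshold=0.5)
print(distance, is_match)   # y = 1 when is_match is True
```

In practice the threshold would be tuned on a validation set to trade off false accepts against false rejects.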

Face Recognition

Goal: A school wants to use Face Verification for validating student IDs in facilities (dining hall, gym, pool …)

4. Loss? Training?

We need more data so that our model understands how to encode: use public face datasets.

What we really want:
- similar encoding for pictures of the same person
- different encoding for pictures of different people

So let’s generate triplets (anchor, positive, negative):
- minimize encoding distance between anchor and positive
- maximize encoding distance between anchor and negative

Recap: Learning Process

Model = Architecture + Parameters

Input (anchor, positive, negative) → Model → Output → Loss → Gradients → update Parameters

[Figure: the anchor, positive and negative images are fed to the model, which outputs the encodings Enc(A), Enc(P) and Enc(N). The slide shows three example vectors: $(0.13, 0.42, \ldots, 0.10, 0.31, 0.73, \ldots, 0.43, 0.33)^T$, $(0.95, 0.45, \ldots, 0.20, 0.41, 0.89, \ldots, 0.31, 0.34)^T$ and $(0.01, 0.54, \ldots, 0.45, 0.11, 0.49, \ldots, 0.12, 0.01)^T$.]

$L = \|Enc(A) - Enc(P)\|_2^2 - \|Enc(A) - Enc(N)\|_2^2 + \alpha$
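The triplet loss as written on this slide can be sketched as follows. The margin `alpha = 0.2` and the 2-d toy encodings are assumptions for illustration. The optional `clamp` argument applies `max(L, 0)`, a common variant in which triplets that already satisfy the margin contribute no loss; the slide shows the unclamped form.

```python
import numpy as np

def triplet_loss(enc_a, enc_p, enc_n, alpha=0.2, clamp=False):
    """L = ||Enc(A) - Enc(P)||^2 - ||Enc(A) - Enc(N)||^2 + alpha."""
    pos_dist = np.sum((enc_a - enc_p) ** 2)   # squared distance anchor-positive
    neg_dist = np.sum((enc_a - enc_n) ** 2)   # squared distance anchor-negative
    loss = pos_dist - neg_dist + alpha
    return max(loss, 0.0) if clamp else loss

# Hypothetical 2-d encodings: the positive is close to the anchor, the negative is far.
enc_a = np.array([0.0, 0.0])
enc_p = np.array([0.1, 0.0])
enc_n = np.array([1.0, 0.0])

loss = triplet_loss(enc_a, enc_p, enc_n)                  # 0.01 - 1.0 + 0.2
loss_clamped = triplet_loss(enc_a, enc_p, enc_n, clamp=True)
```

Minimizing this loss pulls Enc(P) toward Enc(A) and pushes Enc(N) away, which matches the "minimize / maximize encoding distance" goal stated for triplets.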

Face Recognition

Goal: A school wants to use Face Identification for recognize students in facilities (dinning hall, gym, pool …)

Goal: You want to use Face Clustering to group pictures of the same people on your smartphone

K-Nearest Neighbors

K-Means Algorithm

Maybe we need to detect the faces first?

Kian Katanforoosh, Andrew Ng, Younes Bensouda Mourri

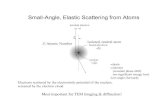
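Identification with K-Nearest Neighbors over stored encodings could look like the sketch below. The 2-d toy encodings, the name "Alice" and `k = 3` are assumptions for illustration; real encodings would come from the trained network.

```python
import numpy as np
from collections import Counter

def identify(query, db_encodings, db_names, k=3):
    """Return the most common name among the k encodings closest to the query."""
    distances = np.linalg.norm(db_encodings - query, axis=1)  # L2 distance to each entry
    nearest = np.argsort(distances)[:k]                       # indices of the k closest
    votes = Counter(db_names[i] for i in nearest)
    return votes.most_common(1)[0][0]

# Hypothetical database: two encodings per student.
db_encodings = np.array([[0.0, 0.0], [0.1, 0.0], [1.0, 1.0], [1.1, 1.0]])
db_names = ["Bertrand", "Bertrand", "Alice", "Alice"]

name = identify(np.array([0.05, 0.1]), db_encodings, db_names)
print(name)
```

For the clustering goal, the same encodings would instead be grouped without names, for example with K-Means.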

Art generation (Neural Style Transfer)

Goal: Given a picture, make it look beautiful

1. Data? Let’s say we have any data
2. Input? Content image, style image
3. Output? Generated image

Leon A. Gatys, Alexander S. Ecker, Matthias Bethge: A Neural Algorithm of Artistic Style, 2015

Art generation (Neural Style Transfer)

4. Architecture?

We want a model that understands images very well. We load an existing model trained on ImageNet, for example.

[Figure: image → Deep Network → classification]

When this image forward propagates, we can get information about its content & its style by inspecting the layers: $Content_C$, $Style_S$.

5. Loss?

Art generation (Neural Style Transfer)

Correct Approach

[Figure: the generated image goes through the pretrained deep network → compute loss → update pixels using gradients. The result is shown after 2000 iterations.]

$L = \|Content_C - Content_G\|_2^2 + \|Style_S - Style_G\|_2^2$

We are not learning parameters by minimizing L. We are learning an image!
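The idea of "learning an image" rather than parameters can be illustrated with a toy sketch. Everything here is a simplifying assumption: two frozen random matrices stand in for the content and style features of a pretrained network, the "images" are 16-pixel vectors, and the step size is picked by hand. It is not the method of Gatys et al., whose style term is built from feature correlations inside a deep network. Only the pixels of `generated` are updated.

```python
import numpy as np

rng = np.random.default_rng(0)
n_pixels, n_features = 16, 8

# Frozen, hypothetical stand-ins for the pretrained network's feature extractors.
W_content = rng.normal(size=(n_features, n_pixels)) / 4.0
W_style = rng.normal(size=(n_features, n_pixels)) / 4.0

content_image = rng.random(n_pixels)
style_image = rng.random(n_pixels)
generated = rng.random(n_pixels)            # start from a noise image

content_c = W_content @ content_image       # Content_C
style_s = W_style @ style_image             # Style_S

def loss_and_grad(g):
    """L = ||Content_C - Content_G||^2 + ||Style_S - Style_G||^2 and dL/dg."""
    d_content = W_content @ g - content_c
    d_style = W_style @ g - style_s
    loss = np.sum(d_content ** 2) + np.sum(d_style ** 2)
    grad = 2.0 * (W_content.T @ d_content + W_style.T @ d_style)
    return loss, grad

initial_loss, _ = loss_and_grad(generated)
for _ in range(2000):                       # 2000 iterations, as on the slide
    _, grad = loss_and_grad(generated)
    generated -= 0.02 * grad                # update pixels, never the weights
final_loss, _ = loss_and_grad(generated)
print(initial_loss, final_loss)
```

The matrices `W_content` and `W_style` are never modified, which mirrors the slide's point that the network's parameters stay fixed while the image changes.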

Trigger word detection

Goal: Given a 10sec audio speech, detect the word “activate”.

1. Data? A bunch of 10s audio clips. Distribution?
2. Input? x = A 10sec audio clip. Resolution? (sample rate)
3. Output? y = 0 or y = 1

Let’s have an experiment!

y = 1, y = 0, y = 1

y = 000000000000000000000000000000000000000010000000000

y = 000000000000000000000000000000000000000000000000000

y = 000000000001000000000000000000000000000000000000000


Trigger word detection

Goal: Given a 10sec audio speech, detect the word “activate”.

1. Data? A bunch of 10s audio clips. Distribution?
2. Input? x = A 10sec audio clip. Resolution? (sample rate)
3. Output? y = 0 or y = 1, then y = 00..0000100000..000 (one label per time step), then y = 00..00001..1000..000 (several 1s in a row). Last activation? sigmoid (sequential)
4. Architecture? Sounds like it should be an RNN
5. Loss? $L = -(y \log(\hat{y}) + (1 - y) \log(1 - \hat{y}))$ (sequential)
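A sequential label of the form 00..00001..1000..000 can be generated as in the sketch below. The number of time steps, the position where the trigger word ends and the number of 1s written after it are all hypothetical values chosen for illustration.

```python
import numpy as np

def make_labels(n_steps, trigger_end_steps, n_ones=3):
    """Build a 0/1 label per time step, with n_ones 1s right after each trigger word ends."""
    y = np.zeros(n_steps, dtype=int)
    for end in trigger_end_steps:
        y[end + 1 : end + 1 + n_ones] = 1    # slicing past the end is safely truncated
    return y

# Hypothetical clip: 20 time steps, the word "activate" ends at step 8.
y = make_labels(20, [8])
label_string = "".join(map(str, y))
print(label_string)   # 00000000011100000000
```

Writing several 1s instead of a single one makes the positive class less rare, which tends to make training easier on such imbalanced labels.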

Trigger word detection

What is critical to the success of this project?

1. Strategic data collection/labelling process
- Positive word, negative words, background noise
- Automated labelling + error analysis: 000000..000001..10000..000

2. Architecture search & Hyperparameter tuning
- [Figure: Fourier transform → LSTM … LSTM → σ … σ → 000000..000001..10000..000]
- [Figure: Fourier transform → CONV + BN → GRU + BN … GRU + BN → σ … σ → 00..00001..100..0]
- Never give up

Another way of solving the TWD problem?

Trigger word detection (other method)

Goal: Given an audio speech, detect the word “lion”.

4. What architecture?

[Figure: a deep network encodes two audio segments into vectors, $(0.931, 0.433, 0.331, \ldots, 0.942, 0.158, 0.039)^T$ and $(0.922, 0.343, 0.312, \ldots, 0.892, 0.142, 0.024)^T$. The distance between them is 0.4; since 0.4 < threshold, y = 1. Across the clip, the slide shows the values 0.12 0.01 0.27 … 0.21 0.92 0.43 … with Threshold: 0.6 and the label y = (0, 0, …, 0, 1, 0, 0, …, 0).]

$L = \|Enc(A) - Enc(P)\|_2^2 - \|Enc(A) - Enc(N)\|_2^2 + \alpha$

[For more on query-by-example trigger word detection, check: Guoguo Chen et al.: Query-by-example keyword spotting using long short-term memory networks (2015)]
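One way to read the row of values and the 0.6 threshold on this slide is that each audio window gets a score and a window is labelled 1 when its score exceeds the threshold. That reading is an assumption, as is treating the values as similarity scores; the sketch below only uses the six numbers visible on the slide and leaves the elided ones out.

```python
import numpy as np

# Only the values visible on the slide; the elided windows are unknown.
scores = np.array([0.12, 0.01, 0.27, 0.21, 0.92, 0.43])
threshold = 0.6

# Assumption: a window is a detection when its score is above the threshold.
y = (scores > threshold).astype(int)
print(y.tolist())   # [0, 0, 0, 0, 1, 0]
```

If the values were distances rather than similarities, the comparison would be reversed, as in the "distance < threshold" rule used for face verification.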

Featured in the Magazine “the Most Beautiful Loss functions 2015”

Joseph Redmon, Santosh Divvala, Ross Girshick, Ali Farhadi: You Only Look Once: Unified, Real-Time Object Detection

App implementation

Server-based or on-device?

On-device: Model Architecture + Learned Parameters run on the device, which outputs the prediction (y = 0)
- Faster predictions
- Works offline

Server-based: Model Architecture + Learned Parameters run on the server, which returns the prediction (y = 0)
- App is light-weight
- App is easy to update

Duties for next week

For Wednesday 10/09, 10am:

C1M3
• Quiz: Shallow Neural Networks
• Programming Assignment: Planar data classification with one-hidden layer

C1M4
• Quiz: Deep Neural Networks
• Programming Assignment: Building a deep neural network - Step by Step
• Programming Assignment: Deep Neural Network Application

Others:
• TA project mentorship (mandatory this week)
• Friday TA section (10/05): focus on git and neural style transfer.
• Fill in the AWS form to get GPU credits for your projects