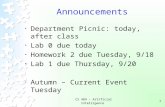

CS 484

Designing Parallel Algorithms

Designing a parallel algorithm is not easy. There is no recipe or magic ingredient, except creativity.

We can benefit from a methodical approach: a framework for algorithm design.

Most problems have several parallel solutions, which may be totally different from the best sequential algorithm.

PCAM Algorithm Design

There are four stages in designing a parallel algorithm: Partitioning, Communication, Agglomeration, and Mapping.

Partitioning and Communication focus on concurrency and scalability. Agglomeration and Mapping focus on locality and performance.

Partitioning: computation and data are decomposed.
Communication: task execution is coordinated.
Agglomeration: tasks are combined for performance.
Mapping: tasks are assigned to processors.

Partitioning

Ignore the number of processors and the target architecture. Expose opportunities for parallelism. Divide up both the computation and the data. Two approaches are possible: domain decomposition and functional decomposition.

Domain Decomposition

Start algorithm design by analyzing the data. Divide the data into small pieces of approximately equal size. Then partition the computation by associating it with the data. Communication issues may arise when one task needs data from another task.
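As a rough illustration of dividing data into pieces of approximately equal size, here is a minimal Python sketch. The function name `block_ranges` and the choice of contiguous index blocks are assumptions made for this example; the slides do not prescribe a particular scheme.

```python
def block_ranges(n_items, n_tasks):
    """Split range(n_items) into n_tasks contiguous blocks.

    Block sizes differ by at most one item, so the pieces are
    approximately equal in size (hypothetical helper, not from the slides).
    """
    if n_tasks <= 0:
        raise ValueError("n_tasks must be positive")
    base, extra = divmod(n_items, n_tasks)
    ranges = []
    start = 0
    for task in range(n_tasks):
        # The first `extra` tasks each take one additional item.
        size = base + (1 if task < extra else 0)
        ranges.append((start, start + size))
        start += size
    return ranges
```

For instance, `block_ranges(10, 4)` gives the half-open index ranges `[(0, 3), (3, 6), (6, 8), (8, 10)]`; each task would then own the computation associated with its range.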

Example: evaluate the definite integral

$$\int_0^1 \frac{4}{1+x^2}\,dx$$

[Figure: the domain [0, 1] is split in successive steps, ending with four subintervals with boundaries at 0, 0.25, 0.5, 0.75, and 1.]

Now each task simply evaluates the integral over its own range.

All that is left is to sum each task's answer to get the total.
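The split-then-sum idea can be sketched in Python as follows. This is an illustration under assumptions: the integrand is taken to be 4/(1+x^2) on [0, 1] (whose exact value is pi), the midpoint rule stands in for whatever quadrature each task would use, and the "tasks" are simulated sequentially by a list comprehension.

```python
import math


def integrate_midpoint(f, a, b, n_steps):
    """Approximate the integral of f over [a, b] with the midpoint rule."""
    h = (b - a) / n_steps
    return h * sum(f(a + (i + 0.5) * h) for i in range(n_steps))


def integrand(x):
    # Assumed integrand for this example: 4 / (1 + x^2).
    return 4.0 / (1.0 + x * x)


def decomposed_integral(n_tasks=4, steps_per_task=1000):
    """Each simulated task integrates over its own subinterval of [0, 1]."""
    width = 1.0 / n_tasks
    partial = [
        integrate_midpoint(integrand, k * width, (k + 1) * width, steps_per_task)
        for k in range(n_tasks)
    ]
    # The final step is a sum of the per-task answers.
    return partial, sum(partial)


if __name__ == "__main__":
    parts, total = decomposed_integral()
    print(parts, total, abs(total - math.pi))
```

The partial integrals are independent of one another, so the only communication needed is the final summation.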

Consider dividing up a 3-D grid. What issues arise?

Other issues: What if your problem has more than one data structure? Different problem phases? Replication?

Functional Decomposition

Focus on the computation. Divide the computation into disjoint tasks, avoiding data dependencies among tasks. After dividing the computation, examine the data requirements of each task.

Functional decomposition is not as natural as domain decomposition. Consider search problems. Functional decomposition is often very useful at a higher level, for example in climate modeling: ocean simulation, hydrology, atmosphere, etc.

Partitioning Checklist

Have you defined a LOT of tasks?
Have you avoided redundant computation and storage?
Are the tasks approximately equal in size?
Does the number of tasks scale with the problem size?
Have you identified several alternative partitioning schemes?

Communication

The information flow between tasks is specified in this stage of the design.

Remember: tasks execute concurrently, and data dependencies may limit concurrency.

Define channels: link the producers with the consumers, and consider the costs, both intellectual and physical.

Distribute the communication. Specify the messages that are sent.

Communication Patterns

Local vs. global
Structured vs. unstructured
Static vs. dynamic
Synchronous vs. asynchronous

Local Communication

Communication within a neighborhood.

Algorithm choice determines communication.

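Global Communication

Communication that is not localized. Examples: all-to-all, master-worker.

[Figure: global communication example with tasks holding the values 5, 3, 7, 2, and 1.]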

Avoiding Global Communication

Distribute the communication and computation

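[Figure: the values 5, 3, 7, 2, and 1 are summed with the communication and computation distributed across tasks; the partial sums 1, 3, 10, and 13 are shown.]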

Divide and Conquer

Partition the problem into two or more subproblems, then partition each subproblem, and so on.

[Figure: divide-and-conquer summation of the values 5, 3, 7, 2, and 1.]

This results in a structured, nearest-neighbor communication pattern.
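A minimal Python sketch of divide-and-conquer summation is shown below. The function name `tree_sum` and the returned depth (the number of combining levels, which is the number of steps if both halves are processed concurrently) are illustrative choices, not taken from the slides.

```python
def tree_sum(values):
    """Sum a non-empty list by recursive halving.

    Returns (total, depth), where depth is the number of combining
    levels; the two halves at each level could be summed concurrently.
    """
    if not values:
        raise ValueError("values must be non-empty")
    if len(values) == 1:
        return values[0], 0
    mid = len(values) // 2
    left_total, left_depth = tree_sum(values[:mid])
    right_total, right_depth = tree_sum(values[mid:])
    # Combining two partial sums costs one more level.
    return left_total + right_total, 1 + max(left_depth, right_depth)
```

For eight values the depth is 3, compared with seven sequential additions at a single central task.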

Structured Communication

Each task's communication resembles every other task's communication. Is there a pattern?

Unstructured Communication

There is no regular pattern that can be exploited. Examples: unstructured grids, resolution changes.

This complicates the next stages of design.

Synchronous Communication

Both consumers and producers are aware of when communication is required. This is explicit and simple.

[Figure: communication steps at t = 1, t = 2, and t = 3.]

Asynchronous Communication

The timing of sends and receives is unknown; there is no pattern.

Consider a very large data structure. Options: distribute it among the computational tasks (polling), define a set of read/write tasks, or use shared memory.

Problems to Avoid

A centralized algorithm: distribute the computation and distribute the communication.
A sequential algorithm: look for concurrency; use divide and conquer with small, equal-sized subproblems.

Communication Design Checklist

Is communication balanced? All tasks should communicate about the same amount.

Is communication limited to neighborhoods? Restructure global communication into local communication if possible.

Can communications proceed concurrently?

Can the algorithm proceed concurrently? Find the algorithm with the most concurrency.

Be careful!

Agglomeration

The partitioning and communication steps were abstract; agglomeration moves to the concrete. Combine tasks so that they execute efficiently on some parallel computer. Consider replication.

Agglomeration Goals

Reduce communication costs by increasing computation and by changing (decreasing or increasing) granularity. Retain flexibility for mapping and scaling. Reduce software engineering costs.

Changing Granularity

A large number of tasks does not necessarily produce an efficient algorithm. We must consider the communication costs. Reduce communication by having fewer tasks send fewer messages (batching).

Surface-to-Volume Effects

Communication is proportional to the surface of the subdomain. Computation is proportional to the volume of the subdomain. Increasing the computation per task will often decrease the communication.
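To make the surface-to-volume effect concrete, the sketch below counts messages and data for a square 2-D grid. Several things here are assumptions made for illustration: the function name `halo_exchange_cost`, a four-neighbor exchange per task, periodic boundaries (so every task has four neighbors), and one value sent per boundary grid point.

```python
def halo_exchange_cost(grid_size, block_size):
    """Count messages and values sent in one neighbor exchange.

    The grid has grid_size x grid_size points and each task owns a
    block_size x block_size block. Assumes periodic boundaries and
    one message per task to each of its four neighbors.
    """
    if grid_size % block_size != 0:
        raise ValueError("block_size must divide grid_size")
    tasks = (grid_size // block_size) ** 2
    messages = 4 * tasks
    # Each message carries one edge of the block (the "surface").
    values_sent = messages * block_size
    return {"tasks": tasks, "messages": messages, "values_sent": values_sent}
```

Under these assumptions an 8 x 8 grid with one point per task needs 256 messages carrying 256 values, while 4 x 4 blocks need 16 messages carrying 64 values. The total computation (64 grid points) is unchanged, so coarser tasks communicate less per unit of work.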

How many messages in total? How much data is sent?

Replicating Computation

Trade replicated computation for reduced communication. Replication will often reduce execution time as well.

Summation of N Integers

s = sum, b = broadcast

How many steps?

Using Replication (Butterfly)

Using Replication

Butterfly to Hypercube

Avoid Communication

Look for tasks that cannot execute concurrently because of communication requirements. Replication can help accomplish two tasks at the same time, such as summation and broadcast.
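The following Python sketch simulates how a butterfly exchange can perform the summation and the broadcast together: every task replicates the additions, and after log2(N) steps every task holds the full sum. The function name `butterfly_allreduce`, the power-of-two restriction, and the sequential simulation of the exchanges are assumptions made for this example.

```python
def butterfly_allreduce(values):
    """Simulate a butterfly sum over len(values) tasks.

    At each step, task i exchanges its partial sum with task
    i XOR distance and both add the two values (replicated computation).
    Returns (final_values, steps).
    """
    n = len(values)
    if n == 0 or n & (n - 1):
        raise ValueError("number of tasks must be a power of two")
    partial = list(values)
    steps = 0
    distance = 1
    while distance < n:
        # All exchanges within one step could happen concurrently.
        partial = [partial[i] + partial[i ^ distance] for i in range(n)]
        distance *= 2
        steps += 1
    return partial, steps
```

With eight tasks holding 1 through 8, all eight end with 36 after 3 steps, whereas a tree summation followed by a separate tree broadcast would take about twice as many steps.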

Preserve Flexibility

Create more tasks than processors. Overlap communication and computation. Don't incorporate unnecessary limits on the number of tasks.

Agglomeration Checklist

Have you reduced communication costs by increasing locality?
Do the benefits of replication outweigh its costs?
Does replication compromise scalability?
Does the number of tasks still scale with the problem size?
Is there still sufficient concurrency?

Mapping

Specify where each task is to execute. The mapping may need to change depending on the target architecture. The general mapping problem is NP-complete.

Goal: reduce execution time. Place concurrent tasks on different processors. Place tasks that communicate heavily on the same processor.

Mapping is a game of trade-offs.

Many domain-decomposition problems make mapping easy: grids, arrays, etc.

Algorithms based on unstructured or complex domain decompositions are difficult to map.

Other Mapping Problems

Variable amounts of work per task
Unstructured communication
Heterogeneous processors (different speeds, different architectures)

Solution: LOAD BALANCING

Load Balancing

Static: determined a priori, based on work, processor speed, etc.
Probabilistic: random.
Dynamic: restructure the load during execution.
Task scheduling (for functional decomposition).

Static Load Balancing

Based on a priori knowledge. Goal: equal WORK on all processors. Algorithms: basic, recursive bisection.

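Basic

Divide up the work based on the work required and the processor speed:

$$r_i = R \, \frac{p_i}{\sum_j p_j}$$

Reading the slide's symbols, $R$ is the total work required, $p_i$ is the speed of processor $i$, and $r_i$ is the work assigned to processor $i$.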
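A small Python sketch of speed-proportional static load balancing follows. It assumes the rule is "work share proportional to processor speed", with work measured in indivisible integer units and speeds given as positive integers; the function name `proportional_shares` and the largest-remainder rounding are choices made for this example.

```python
def proportional_shares(total_work, speeds):
    """Assign integer work units in proportion to processor speed.

    Assumes positive integer speeds. Each share is close to
    total_work * speed / sum(speeds), and the shares sum to total_work.
    """
    total_speed = sum(speeds)
    if total_speed <= 0:
        raise ValueError("speeds must be positive")
    # Start with the rounded-down proportional share.
    shares = [total_work * s // total_speed for s in speeds]
    leftover = total_work - sum(shares)
    # Hand the remaining units to the largest fractional remainders.
    order = sorted(
        range(len(speeds)),
        key=lambda i: (total_work * speeds[i]) % total_speed,
        reverse=True,
    )
    for i in order[:leftover]:
        shares[i] += 1
    return shares
```

For example, 100 units over processors with speeds 1, 1, and 2 gives shares of 25, 25, and 50, so all three would finish at about the same time.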

Recursive Bisection

Divide the work in half recursively, based on physical coordinates.
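One way to sketch recursive coordinate bisection in Python is shown below. It assumes 2-D points with equal work per point and cuts along whichever axis has the larger extent; the function name `recursive_bisection` and these choices are illustrative assumptions rather than details given in the slides.

```python
def recursive_bisection(points, levels):
    """Split 2-D points into up to 2**levels groups of near-equal size.

    At each level the points are sorted along the axis with the larger
    extent and cut at the median, so each half holds half of the work
    (assuming every point costs the same).
    """
    if levels == 0 or len(points) <= 1:
        return [points]
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    axis = 0 if (max(xs) - min(xs)) >= (max(ys) - min(ys)) else 1
    ordered = sorted(points, key=lambda p: p[axis])
    mid = len(ordered) // 2
    return (recursive_bisection(ordered[:mid], levels - 1)
            + recursive_bisection(ordered[mid:], levels - 1))
```

Applied with two levels to a 4 x 4 grid of points, this yields four groups of four points each, one group per processor.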

Dynamic Algorithms

Adjust the load when an imbalance is detected. Algorithms can be local or global.

Task Scheduling

Suited to many tasks with weak locality requirements. Uses the manager-worker model.

Task scheduling variants:

Manager-worker.
Hierarchical manager-worker: uses submanagers.
Decentralized: no central manager, a task pool on each processor, less of a bottleneck.
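The basic manager-worker scheme can be sketched with Python's standard `queue` and `threading` modules. Here threads stand in for processors, and the function name `run_manager_worker` and the `None` sentinel used to stop workers are assumptions made for this example.

```python
import queue
import threading


def run_manager_worker(tasks, work_fn, n_workers=4):
    """Manager puts tasks on a shared queue; workers pull until told to stop."""
    task_queue = queue.Queue()
    results = {}
    lock = threading.Lock()

    def worker():
        while True:
            item = task_queue.get()
            if item is None:  # sentinel: no more work for this worker
                break
            index, payload = item
            value = work_fn(payload)
            with lock:
                results[index] = value

    threads = [threading.Thread(target=worker) for _ in range(n_workers)]
    for t in threads:
        t.start()
    # The manager hands out tasks, then one stop sentinel per worker.
    for index, payload in enumerate(tasks):
        task_queue.put((index, payload))
    for _ in threads:
        task_queue.put(None)
    for t in threads:
        t.join()
    return [results[i] for i in range(len(tasks))]
```

Because workers take a new task whenever they become idle, uneven task costs balance out automatically; the single shared queue is also the potential bottleneck that the hierarchical and decentralized variants try to relieve.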

Mapping Checklist

Is the load balanced?
Are there communication bottlenecks?
Is it necessary to adjust the load dynamically?
Can you adjust the load if necessary?
Have you evaluated the costs?

PCAM Algorithm Design

Partition: domain or functional decomposition.
Communication: link producers and consumers.
Agglomeration: combine tasks for efficiency.
Mapping: divide up the tasks for balanced execution.

Example: Atmosphere Model

Simulate atmospheric processes: wind, clouds, etc.

The model solves a set of partial differential equations describing the fluid behavior.

Representation of Atmosphere

Data Dependencies

Partition & Communication

Agglomeration

Mapping
