CORRELATION AND SIMPLE LINEAR REGRESSION - Revisited Ref: Cohen, Cohen, West, & Aiken (2003), ch. 2.


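Pearson Correlation

$$r_{xy} = \frac{\sum_{i=1}^{n} (x_i - m_x)(y_i - m_y)/(n-1)}{s_x s_y} = \frac{s_{xy}}{s_x s_y}$$

$$= \frac{\sum_{i=1}^{n} z_{x_i} z_{y_i}}{n-1} = 1 - \frac{\sum_{i=1}^{n} (z_{x_i} - z_{y_i})^2}{2(n-1)}$$

$$= 1 - \frac{\sum_{i=1}^{n} d_{z_i}^2}{2(n-1)}$$

= COVARIANCE / (SDx SDy)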
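The three forms of the Pearson correlation above should agree numerically. The sketch below checks this on a small made-up data set; the function name and the data are illustrative assumptions, not part of the slides.

```python
import math

def pearson_r_three_ways(x, y):
    """Return r computed from the covariance, z-product, and z-difference forms."""
    n = len(x)
    mx = sum(x) / n
    my = sum(y) / n
    # Standard deviations and covariance use the n - 1 denominator, as on the slide.
    sx = math.sqrt(sum((xi - mx) ** 2 for xi in x) / (n - 1))
    sy = math.sqrt(sum((yi - my) ** 2 for yi in y) / (n - 1))
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / (n - 1)
    r_cov = sxy / (sx * sy)
    # z-scores of each variable
    zx = [(xi - mx) / sx for xi in x]
    zy = [(yi - my) / sy for yi in y]
    r_z = sum(a * b for a, b in zip(zx, zy)) / (n - 1)
    r_d = 1 - sum((a - b) ** 2 for a, b in zip(zx, zy)) / (2 * (n - 1))
    return r_cov, r_z, r_d

# Hypothetical data chosen only for illustration.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.0, 1.0, 4.0, 3.0, 5.0]
r_cov, r_z, r_d = pearson_r_three_ways(x, y)
print(r_cov, r_z, r_d)
```

The z-difference form works because the squared z-scores of each variable sum to n - 1, so the sum of squared differences equals 2(n - 1)(1 - r).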

[Fig. 3.6: Geometric representation of r² as the overlap of two squares, each with area 1 (Variance of X = 1, Variance of Y = 1); r² = percent overlap in the two squares. Panel a: nonzero correlation. Panel b: zero correlation.]

[Figure: overlapping areas SSx and SSy, with overlap Sxy. Sums of Squares and Cross Product (Covariance).]

[Figure 3.4: Path model representation of correlation between SAT Math scores and Calculus Grades. Path from SATMath to CalcGrade: .00364 (.40). Path from error to CalcGrade: .932 (.955).]

R² = .4² = .16

Path Models

• path coefficient: standardized coefficient next to the arrow, covariance in parentheses

• error coefficient: the correlation between the errors (the discrepancies between observed and predicted Calc Grade scores) and the observed Calc Grade scores

• Predicted(Calc Grade) = .00364 SAT-Math + 2.5

• errors are sometimes called disturbances

[Figure 3.2: Path model representations of correlation. Panels a, b, and c each show a path diagram linking X and Y.]

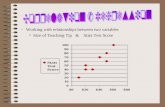

SUPPRESSED SCATTERPLOT

• NO APPARENT RELATIONSHIP

[Figure: scatterplot of Y against X with separate prediction lines for MALES and FEMALES]

IDEALIZED SCATTERPLOT

• POSITIVE CURVILINEAR RELATIONSHIP

[Figure: scatterplot of Y against X with a linear prediction line and a quadratic prediction line]

LINEAR REGRESSION- REVISITED

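Single predictor linear regression.

• Regression equations (the leading subscript names the predictor):

• $y = {}_{x}b_1 x + {}_{x}b_0$

• $x = {}_{y}b_1 y + {}_{y}b_0$

• Regression coefficients:

• ${}_{x}b_1 = r_{xy} s_y / s_x$

• ${}_{y}b_1 = r_{xy} s_x / s_y$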

Two variable linear regression

• Path model representation (unstandardized): x → y ← e, with b1 on the path from x to y

Linear regression

$y = b_1 x + b_0$

If the correlation coefficient is calculated, then b1 can be calculated from the equation above:

$b_1 = r_{xy} s_y / s_x$

The intercept, b0, follows by placing the means for x and y into the equation above and solving:

$b_0 = \bar{y} - [\, r_{xy} s_y / s_x \,]\, \bar{x}$
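As a small worked sketch of the two formulas above, the slope and intercept can be computed from summary statistics alone. The function name and the numbers are hypothetical and chosen only to make the arithmetic easy to follow.

```python
def regression_from_summary(r_xy, s_x, s_y, mean_x, mean_y):
    """Slope and intercept for predicting y from x, using only summary statistics."""
    b1 = r_xy * s_y / s_x          # slope: b1 = r_xy * s_y / s_x
    b0 = mean_y - b1 * mean_x      # intercept: the line passes through the two means
    return b1, b0

# Hypothetical summary statistics (not from the slides).
b1, b0 = regression_from_summary(r_xy=0.8, s_x=2.0, s_y=4.0, mean_x=10.0, mean_y=50.0)
print(b1, b0)
```

With these inputs the slope is 0.8 × 4 / 2 = 1.6 and the intercept is 50 - 1.6 × 10 = 34.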

Linear regression

• Path model representation (standardized): zx → zy ← e, with rxy on the path from zx to zy

Least squares estimation

Least squares picks the estimate for which the sum of squared differences between each score and its predicted value is the smallest. Under the Gauss-Markov assumptions, this estimate is also the best among all linear unbiased estimates (BLUE, or best linear unbiased estimate).

Least squares estimation

• Errors, or disturbances, represent in this case the part of the y score not predictable from x:

• $e_i = y_i - (b_1 x_i + b_0)$.

• The sum of squares for errors follows:

• $SS_e = \sum_{i=1}^{n} e_i^2$.

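[Figure: scatterplot of y against x with the fitted line; each e marks the vertical distance between a data point and the line.]

$SS_e = \sum e_i^2$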
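To make the least squares criterion concrete, the sketch below computes SSe for the fitted line and for two nearby lines, and shows that the fitted line gives the smallest value of the three. The data and function names are illustrative assumptions.

```python
def sum_squared_errors(x, y, b1, b0):
    """SSe for the line y = b1 * x + b0."""
    return sum((yi - (b1 * xi + b0)) ** 2 for xi, yi in zip(x, y))

def least_squares_line(x, y):
    """Slope and intercept that minimize SSe for a single predictor."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    ss_x = sum((xi - mean_x) ** 2 for xi in x)
    sp_xy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    b1 = sp_xy / ss_x
    b0 = mean_y - b1 * mean_x
    return b1, b0

# Hypothetical data chosen only for illustration.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.0, 1.0, 4.0, 3.0, 5.0]
b1_ls, b0_ls = least_squares_line(x, y)
sse_ls = sum_squared_errors(x, y, b1_ls, b0_ls)
sse_steeper = sum_squared_errors(x, y, b1_ls + 0.1, b0_ls)   # perturbed slope
sse_shifted = sum_squared_errors(x, y, b1_ls, b0_ls + 0.5)   # perturbed intercept
print(sse_ls, sse_steeper, sse_shifted)
```

Comparing against two perturbed lines is only a spot check, not a proof; the general result follows from setting the derivatives of SSe with respect to b1 and b0 to zero.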

Matrix representation of least squares estimation.

• We can represent the regression model in matrix form:

• $\mathbf{y} = \mathbf{X}\boldsymbol{\beta} + \mathbf{e}$

Matrix representation of least squares estimation

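• $\mathbf{y} = \mathbf{X}\boldsymbol{\beta} + \mathbf{e}$

$$\begin{bmatrix} y_1 \\ y_2 \\ y_3 \\ y_4 \\ \vdots \end{bmatrix} = \begin{bmatrix} 1 & x_1 \\ 1 & x_2 \\ 1 & x_3 \\ 1 & x_4 \\ \vdots & \vdots \end{bmatrix} \begin{bmatrix} \beta_0 \\ \beta_1 \end{bmatrix} + \begin{bmatrix} e_1 \\ e_2 \\ e_3 \\ e_4 \\ \vdots \end{bmatrix}$$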

Matrix representation of least squares estimation

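• $\mathbf{y} = \mathbf{X}\mathbf{b} + \mathbf{e}$

• The least squares criterion is satisfied by the following matrix equation:

• $\mathbf{b} = (\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{y}$.

• The term X' is called the transpose of the X matrix. It is the matrix turned on its side. When X' and X are multiplied together, the result is a 2 x 2 matrix:

$$\mathbf{X}'\mathbf{X} = \begin{bmatrix} n & \sum x_i \\ \sum x_i & \sum x_i^2 \end{bmatrix}$$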
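For a single predictor the matrix solution can be written out by hand, because X'X is only 2 x 2. The sketch below builds X'X and X'y from sums and inverts the 2 x 2 matrix directly, using only plain Python; the function name and data are illustrative assumptions.

```python
def ols_normal_equations(x, y):
    """Solve b = (X'X)^-1 X'y for a design matrix with a column of 1s and a column of x."""
    n = len(x)
    sum_x = sum(x)
    sum_x2 = sum(xi * xi for xi in x)
    sum_y = sum(y)
    sum_xy = sum(xi * yi for xi, yi in zip(x, y))
    # X'X = [[n, sum_x], [sum_x, sum_x2]] and X'y = [sum_y, sum_xy]
    det = n * sum_x2 - sum_x ** 2
    if det == 0:
        raise ValueError("X'X is singular: all x values are identical")
    # Inverse of a 2 x 2 matrix: (1/det) * [[sum_x2, -sum_x], [-sum_x, n]]
    b0 = (sum_x2 * sum_y - sum_x * sum_xy) / det
    b1 = (-sum_x * sum_y + n * sum_xy) / det
    return b0, b1

# Hypothetical data chosen only for illustration.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.0, 1.0, 4.0, 3.0, 5.0]
b0, b1 = ols_normal_equations(x, y)
print(b0, b1)
```

Here X'X = [[5, 15], [15, 55]] and X'y = [15, 53], which gives b0 = 0.6 and b1 = 0.8. With more than one predictor a linear algebra library would normally be used instead of a hand-written inverse.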

SUMS OF SQUARES

• $SS_e = (n - 2)\, s_e^2$

• $SS_{reg} = \sum (b_1 x_i + b_0 - \bar{y})^2$

• $SS_y = SS_{reg} + SS_e$
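The decomposition of SSy into SSreg and SSe can be checked numerically. The following is a minimal sketch on made-up data; the function name and data are assumptions for illustration.

```python
def sums_of_squares(x, y):
    """Return (SSreg, SSe, SSy) for the least squares line predicting y from x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    ss_x = sum((xi - mean_x) ** 2 for xi in x)
    sp_xy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    b1 = sp_xy / ss_x
    b0 = mean_y - b1 * mean_x
    predicted = [b1 * xi + b0 for xi in x]
    ss_reg = sum((p - mean_y) ** 2 for p in predicted)        # predicted scores around the mean
    ss_e = sum((yi - p) ** 2 for yi, p in zip(y, predicted))  # observed scores around the line
    ss_y = sum((yi - mean_y) ** 2 for yi in y)                # observed scores around the mean
    return ss_reg, ss_e, ss_y

# Hypothetical data chosen only for illustration.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.0, 1.0, 4.0, 3.0, 5.0]
ss_reg, ss_e, ss_y = sums_of_squares(x, y)
s2_e = ss_e / (len(x) - 2)   # error variance, so that SSe = (n - 2) * s2_e
print(ss_reg, ss_e, ss_y, s2_e)
```

For these data SSreg = 6.4 and SSe = 3.6, which sum to SSy = 10, and SSreg / SSy = .64 is the squared correlation (.8²).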

SUMS OF SQUARES-Venn Diagram

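[Fig. 8.3: Venn diagram for linear regression with one predictor and one outcome measure. The areas are labeled SSx, SSy, SSreg, and SSe; SSreg is the part of SSy shared with SSx, and SSe is the remainder of SSy.]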

STANDARD ERROR OF ESTIMATE

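$s_y^2 = s_{\hat{y}}^2 + s_e^2$

$s_{z_y}^2 = 1 = r_{y.x}^2 + s_{e_z}^2$

$s_e = s_y \sqrt{1 - r_{y.x}^2} \approx \sqrt{SS_e / (n-2)}$

(The two expressions for $s_e$ differ only by the factor $\sqrt{(n-1)/(n-2)}$, which approaches 1 as n grows.)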

Review slide 17: this is the standard deviation of the errors shown there

SUMS OF SQUARES- ANOVA Table

SOURCE   df     Sum of Squares   Mean Square     F
x        1      SSreg            SSreg / 1       (SSreg / 1) / (SSe / (n-2))
e        n-2    SSe              SSe / (n-2)
Total    n-1    SSy              SSy / (n-1)

Table 8.1: Regression table for Sums of Squares

Confidence Intervals Around b and Beta weights

$s_b = \dfrac{s_y}{s_x} \sqrt{\dfrac{1 - r_{y.x}^2}{n-2}}$

Standard deviation of sampling error of estimate of regression weight b

$s_\beta = \sqrt{\dfrac{1 - r_{y.x}^2}{n-2}}$

Note: this is formally correct only for a regression equation, not for the Pearson correlation

Distribution around parameter estimates: b-weight

[Figure: sampling distribution centered on the estimate of b, with standard deviation s_b]

$b_{estimate} \pm t\, s_b$
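A minimal sketch of the standard error formulas and the resulting interval follows. All input values are hypothetical, and the critical t value is passed in as an argument on the assumption that it has been looked up in a t table (about 2.060 for 25 degrees of freedom at the two-tailed .05 level).

```python
import math

def slope_standard_errors(r, s_x, s_y, n):
    """Standard errors of the standardized (beta) and unstandardized (b) weights."""
    s_beta = math.sqrt((1 - r ** 2) / (n - 2))
    s_b = (s_y / s_x) * s_beta
    return s_b, s_beta

def confidence_interval(b, s_b, t_crit):
    """Interval b +/- t * s_b; t_crit must come from a t table with n - 2 df."""
    return b - t_crit * s_b, b + t_crit * s_b

# Hypothetical summary statistics (not from the slides).
r, s_x, s_y, n = 0.5, 2.0, 4.0, 27
b = r * s_y / s_x                       # slope implied by r and the two SDs
s_b, s_beta = slope_standard_errors(r, s_x, s_y, n)
lower, upper = confidence_interval(b, s_b, t_crit=2.060)  # df = 25, assumed table value
print(s_b, s_beta, lower, upper)
```

Because the interval in this example excludes zero, the corresponding two-tailed test of b = 0 at the same level would also reject.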

Hypothesis testing for the regression weight

Null hypothesis: $b_{population} = 0$

Alternative hypothesis: $b_{population} \neq 0$

Test statistic: $t = b_{sample} / se_b$

Student’s t-distribution with degrees of freedom = n-2

Model Summary

Model   R       R Square   Adjusted R Square   Std. Error of the Estimate
1       .539a   .291       .268                3.121

a. Predictors: (Constant), LOCUS OF CONTROL

ANOVA(b)

Model 1      Sum of Squares   df   Mean Square   F        Sig.
Regression   123.867          1    123.867       12.714   .001a
Residual     302.012          31   9.742
Total        425.879          32

a. Predictors: (Constant), LOCUS OF CONTROL

b. Dependent Variable: SOCIAL STRESS

Coefficients(a)

                     Unstandardized Coefficients   Standardized Coefficients
Model 1              B        Std. Error           Beta                        t        Sig.
(Constant)           -4.836   2.645                                            -1.828   .077
LOCUS OF CONTROL     .190     .053                 .539                        3.566    .001

a. Dependent Variable: SOCIAL STRESS

Test of b=0 rejected at .05 level

SPSS Regression Analysis option predicting Social Stress from Locus of Control in a sample of 16 year olds
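The entries in the SPSS output can be cross-checked against the formulas from the earlier slides. The sketch below recomputes R Square, the F ratio, the standard error of the estimate, and the t for the slope from the reported values; variable names are my own, and small discrepancies are expected because the printed values are rounded.

```python
import math

# Values copied from the SPSS ANOVA and Coefficients tables.
ss_reg, df_reg = 123.867, 1
ss_res, df_res = 302.012, 31
ss_total = 425.879
b, se_b = 0.190, 0.053

r_squared = ss_reg / ss_total            # compare with R Square = .291
ms_res = ss_res / df_res                 # compare with Mean Square = 9.742
f_ratio = (ss_reg / df_reg) / ms_res     # compare with F = 12.714
se_estimate = math.sqrt(ms_res)          # compare with Std. Error of the Estimate = 3.121
t_b = b / se_b                           # compare with t = 3.566 (inputs are rounded)

print(round(r_squared, 3), round(ms_res, 3), round(f_ratio, 3),
      round(se_estimate, 3), round(t_b, 3))
```

With one predictor the squared t for the slope equals the F ratio, which is why 3.566² is approximately 12.71.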

[Figure 3.4: Path model representation of prediction of Social Stress from Locus of Control. Path from Locus of Control to Social Stress: b = .190 (β = .539). Path from error to Social Stress: se = 3.12 (.842).]

R² = .291

√(1 - R²) = .842

Difference between Independent b-weights

Compare two groups' regression weights to see if they differ (e.g., boys vs. girls)

Null hypothesis: $b_{boys} = b_{girls}$

Test statistic: $t = (b_{boys} - b_{girls}) / s_{b_{boys} - b_{girls}}$, where $s_{b_{boys} - b_{girls}} = \sqrt{s_{b_{boys}}^2 + s_{b_{girls}}^2}$

Student's t distribution with $n_1 + n_2 - 4$ degrees of freedom

boys n=22

Coefficients(a)

                     Unstandardized Coefficients   Standardized Coefficients
Model 1              B        Std. Error           Beta                        t        Sig.
(Constant)           -.416    3.936                                            -.106    .917
LOCUS OF CONTROL     .106     .081                 .289                        1.314    .205

a. Dependent Variable: SOCIAL STRESS

girls n=12

Coefficients(a)

                     Unstandardized Coefficients   Standardized Coefficients
Model 1              B        Std. Error           Beta                        t        Sig.
(Constant)           -9.963   2.970                                            -3.354   .007
LOCUS OF CONTROL     .281     .058                 .835                        4.807    .001

a. Dependent Variable: SOCIAL STRESS

$t = (.281 - .106) / \sqrt{.081^2 + .058^2}$

= 1.76
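The arithmetic for this test can be reproduced in a few lines. This is a minimal sketch using the slopes, standard errors, and group sizes reported in the slides; the function name is my own.

```python
import math

def slope_difference_t(b_1, se_1, n_1, b_2, se_2, n_2):
    """t statistic and degrees of freedom for the difference between two independent slopes."""
    se_diff = math.sqrt(se_1 ** 2 + se_2 ** 2)   # standard error of the difference
    t = (b_1 - b_2) / se_diff
    df = n_1 + n_2 - 4                           # two parameters estimated in each group
    return t, df

# Slopes and standard errors from the two Coefficients tables; group sizes 12 and 22.
# The df depends only on the sum of the two group sizes.
t, df = slope_difference_t(0.281, 0.058, 12, 0.106, 0.081, 22)
print(round(t, 2), df)
```

With 30 degrees of freedom, the two-tailed .05 critical value from a t table is about 2.04, so a t of 1.76 would not reach that threshold.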