Computing for Belle

description

Transcript of Computing for Belle

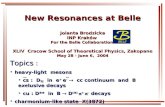

Computing for BelleComputing for Belle

CHEP2004, September 27, 2004

Nobu Katayama, KEK

2004/09/27 Nobu Katayama(KEK) 2

Outline

• Belle in general
• Software
• Computing
• Production
• Networking and collaboration issues
• Super KEKB/Belle/Belle computing upgrades

Belle detector

Integrated luminosity

• May 1999: first collision
• July 2001: 30 fb⁻¹
• Oct. 2002: 100 fb⁻¹
• July 2003: 150 fb⁻¹
• July 2004: 287 fb⁻¹
• July 2005: 550 fb⁻¹
• Super KEKB: 1~10 ab⁻¹/year!

>1 TB raw data/day; more than 20% is hadronic events

~1 fb⁻¹/day!

Continuous injection

• No need to stop the run
• Always at ~maximum currents and therefore maximum luminosity
• ~30% more ∫L dt at both KEKB and PEP-II [CERN Courier, Jan/Feb 2004]
• ~1 fb⁻¹/day! (~1×10^6 BBbar)

[Figure: HER current, LER current and luminosity versus time (axis ticks 0, 12, 24), comparing continuous injection (new) with normal injection (old)]

Summary of b → s q qbar CPV

• 2.4σ
• “sin2φ1”
• (A: consistent with 0)
• Something new in the loop??
• We need a lot more data

The Belle Collaboration

Aomori U.; BINP; Chiba U.; Chonnam Nat’l U.; Chuo U.; U. of Cincinnati; Ewha Womans U.; Frankfurt U.; Gyeongsang Nat’l U.; U. of Hawaii; Hiroshima Tech.; IHEP, Beijing; IHEP, Moscow; IHEP, Vienna; ITEP; Kanagawa U.; KEK; Korea U.; Krakow Inst. of Nucl. Phys.; Kyoto U.; Kyungpook Nat’l U.; U. of Lausanne; Jozef Stefan Inst.; U. of Melbourne; Nagoya U.; Nara Women’s U.; National Central U.; Nat’l Kaohsiung Normal U.; Nat’l Lien-Ho Inst. of Tech.; Nat’l Taiwan U.; Nihon Dental College; Niigata U.; Osaka U.; Osaka City U.; Panjab U.; Peking U.; Princeton U.; Riken-BNL; Saga U.; USTC; Seoul National U.; Shinshu U.; Sungkyunkwan U.; U. of Sydney; Tata Institute; Toho U.; Tohoku U.; Tohoku Gakuin U.; U. of Tokyo; Tokyo Inst. of Tech.; Tokyo Metropolitan U.; Tokyo U. of A and T.; Toyama Nat’l College; U. of Tsukuba; Utkal U.; VPI; Yonsei U.

13 countries, institutes, ~400 members

Collaborating institutions

• Collaborators
  • Major labs/universities from Russia, China, India
  • Major universities from Japan, Korea, Taiwan, Australia…
  • Universities from the US and Europe
• KEK dominates in one sense
  • 30~40 staff members work on Belle exclusively
  • Most of the construction and operating costs are paid by KEK
• Universities dominate in another sense
  • Young students stay at KEK, help operations and do physics analysis
• Human resource issue
  • Always lacking manpower

Software

Core software

• OS/C++
  • Solaris 7 on SPARC and RedHat 6/7/9 on PCs
  • gcc 2.95.3/3.0.4/3.2.2/3.3 (code also compiles with SunCC)
• No commercial software except for the batch queuing system and the hierarchical storage management system
• QQ, EvtGen, GEANT3, CERNLIB (2001/2003), CLHEP (~1.5), postgres 7
• Legacy FORTRAN code (GSIM/GEANT3 and old calibration/reconstruction code)
• I/O: home-grown stream IO package + zlib
  • The only data format for all stages (from DAQ to final user analysis skim files)
  • Index files (pointers to events in data files) are used for final physics analysis
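The index-file idea can be sketched in a few lines. This is only an illustration and not the Belle stream IO format: the length-prefixed record layout and the names `write_events` and `read_event` are assumptions made for the example. Only the use of zlib and of per-event pointers into a data file comes from the slide.

```python
import io
import struct
import zlib


def write_events(stream, events):
    """Write each event as a length-prefixed zlib blob; return the byte offsets."""
    index = []
    for payload in events:
        index.append(stream.tell())                  # pointer to this event
        blob = zlib.compress(payload)
        stream.write(struct.pack("<I", len(blob)))   # 4-byte record length
        stream.write(blob)
    return index


def read_event(stream, offset):
    """Jump to one event through its pointer and decompress it."""
    stream.seek(offset)
    (size,) = struct.unpack("<I", stream.read(4))
    return zlib.decompress(stream.read(size))


# Hypothetical data file held in memory, with five fake events.
data_file = io.BytesIO()
events = [(b"event-%d " % i) * 50 for i in range(5)]
index = write_events(data_file, events)

# A "skim" is then just a list of pointers; no event data is copied.
skim_index = [index[1], index[3]]
skim = [read_event(data_file, offset) for offset in skim_index]
```

The design point is that a physics skim can be stored as a small list of offsets instead of a second copy of the selected events.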

Framework (BASF)

• Event parallelism on SMP (1995~)
  • Using fork (for legacy Fortran common blocks)
• Event parallelism on multi-compute servers (dbasf, 2001~; V2, 2004)
• Users’ code/reconstruction code is dynamically loaded
• The only framework for all processing stages (from DAQ to final analysis)

Data access methods

• The original design of our framework, BASF, allowed an appropriate IO package to be loaded at run time
• Our (single) IO package grew to handle more and more kinds of IO (disk, tape, etc.), was extended to deal with special situations, and became spaghetti-like code
• This summer we again had to extend it, to handle the new HSM and for tests with new software
• We finally rewrote our big IO package into small pieces, making it possible to simply add one derived class for each IO method
• IO objects are dynamically loaded upon request

Basf_io class, with derived classes: Basf_io_disk, Basf_io_tape, Basf_io_zfserv, Basf_io_srb, …
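The "one derived class per IO method" design can be illustrated with a small sketch. It is written in Python rather than the C++ used by BASF, and everything in it is hypothetical: the `scheme://path` naming, the `read` method and the registry lookup merely stand in for the real interface and for loading a shared object on request.

```python
class BasfIO:
    """Base class; each IO method lives in its own small derived class."""

    registry = {}

    def __init_subclass__(cls, scheme=None, **kwargs):
        super().__init_subclass__(**kwargs)
        if scheme is not None:
            BasfIO.registry[scheme] = cls   # the subclass registers itself

    def read(self, path):
        raise NotImplementedError


class BasfIODisk(BasfIO, scheme="disk"):
    def read(self, path):
        return f"disk:{path}"               # placeholder for a real disk read


class BasfIOTape(BasfIO, scheme="tape"):
    def read(self, path):
        return f"tape:{path}"               # placeholder for a real tape read


def open_io(url):
    """Pick the IO object by name at run time (stand-in for dynamic loading)."""
    scheme, _, path = url.partition("://")
    try:
        io_class = BasfIO.registry[scheme]
    except KeyError:
        raise ValueError(f"no IO class registered for {scheme!r}")
    return io_class(), path


io_object, path = open_io("tape://run39.dat")
result = io_object.read(path)
```

Adding a new access method then means adding one class, without touching `open_io` or the existing classes.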

Reconstruction software

• 30~40 people have contributed in the last several years
• For many parts of the reconstruction software we have only one package; there is very little competition
  • Good and bad
• We identify weak points and ask someone to improve them
  • Mostly organized within the sub-detector groups
  • Physics motivated, though
• Systematic effort to improve the tracking software, but progress is very slow
  • For example, it took 1 year to bring the tracking systematic error down from 2% to less than 1%
  • Small Z bias for either forward/backward or positively/negatively charged tracks
• When the problem is solved we will reprocess all data again

Analysis software

• Several to tens of people have contributed
  • Kinematical and vertex fitter
  • Flavor tagging
  • Vertexing
  • Particle ID (likelihood)
  • Event shape
  • Likelihood/Fisher analysis
• People tend to use standard packages but…
  • The system is not well organized/documented
  • We have started a task force (consisting of young Belle members)

Postgresql database system

• The only database system Belle uses, other than simple UNIX files and directories
• A few years ago we were afraid that nobody used postgresql, but it now seems to be widely used and well maintained
• One master, several copies at KEK, many copies at institutions/on personal PCs
• ~120,000 records (4.3 GB on disk)
• The IP (interaction point) profile is the largest/most popular
• It is working quite well, although consistency among the many database copies is a problem

BelleG4 for (Super) Belle

• We have also (finally) started building a Geant4 version of the Belle detector simulation program
• Our plan is to build the Super Belle detector simulation code first, then write the reconstruction code
• We hope to increase the number of people in Belle who can write Geant4 code, so that in one year or so we can write the Belle G4 simulation code and compare G4, G3 and real data
• Some of the detector code is in F77 and we must rewrite it

Computing

Computing equipment budgets

• Rental system
  • Contract length went from four to five years (20% budget reduction!)
    • 1997-2000 (2.5 Byen; ~18M euro for 4 years)
    • 2001-2005 (2.5 Byen; ~18M euro for 5 years)
  • A new acquisition process is starting for 2006/1-
• Belle-purchased systems
  • KEK Belle operating budget: 2M euro/year
  • Of the 2M euro, 0.4~1M euro/year goes to computing
    • Tapes (0.2M euro), PCs (0.4M euro), etc.
  • Sometimes we get a bonus(!)
    • So far, in five years, we got about 2M euro in total
• Other institutions
  • 0~0.3M euro/year/institution
  • On average, very little money is allocated

Rental system (2001-2005)

[Pie chart: total cost in five years (M euro), split among DAQ interface, group servers, user terminals, tape system, disk systems, Sparc servers, PC servers, network and support. Slice values as extracted, in M euro: 0.5, 1.8, 0.7, 4.2, 3.9, 2.7, 1.2, 0.4, 3.9; the pairing of values with categories is not preserved in the transcript]

Sparc and Intel CPUs

• Belle’s reference platform
  • Solaris 2.7
  • Everyone has an account
  • 9 workgroup servers (500 MHz, 4 CPUs)
• 38 compute servers
  • 500 MHz, 4 CPUs
  • LSF batch system
  • 40 tape drives (2 each on 20 servers)
  • Fast access to disk servers
• 20 user workstations with DAT, DLT and AIT drives
• Maintained by Fujitsu SE/CEs under the big rental contract
• Compute servers (@KEK, Linux RH 6.2/7.2/9)
• User terminals (@KEK, to log onto the group servers)
  • 120 PCs (~50 Win2000 + X window software, ~70 Linux)
• User analysis PCs (@KEK, unmanaged)
• Compute/file servers at universities
  • A few to a few hundred at each institution
  • Used in generic MC production as well as physics analyses at each institution
  • Tau analysis center @ Nagoya U., for example

PC farm of several generations

• Dell: 36 PCs (Pentium-III ~0.5 GHz)
• Compaq: 60 PCs (Pentium-III 0.7 GHz), 168 GHz
• Fujitsu: 127 PCs (Pentium-III 1.26 GHz), 320 GHz
• Appro: 113 PCs (Athlon 1.67 GHz ×2), 377 GHz
• NEC: 84 PCs (Xeon 2.8 GHz ×2), 470 GHz
• Fujitsu: 120 PCs (Xeon 3.2 GHz ×2), 768 GHz
• Dell: 150 PCs (Xeon 3.4 GHz ×2), 1020 GHz (coming soon!)

[Figure: integrated luminosity and total number of GHz versus time, 1999/6 to 2004/6]

Disk servers @ KEK

• 8 TB NFS file servers
  • Mounted on all UltraSparc servers via GbE
• 4.5 TB staging disk for HSM
  • Mounted on all UltraSparc/Intel PC servers
• ~15 TB local data disks on PCs
  • Generic MC files are stored and used remotely
• Inexpensive IDE RAID disk servers
  • 160 GB × (7+1) × 16 = 18 TB @ 100K euro (12/2002)
  • 250 GB × (7+1) × 16 = 28 TB @ 110K euro (3/2003)
  • 320 GB × (6+1+S) × 32 = 56 TB @ 200K euro (11/2003)
  • 400 GB × (6+1+S) × 40 = 96 TB @ 250K euro (11/2004)
    • With a tape library in the back end
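The four IDE RAID purchases can be compared with a little arithmetic. In this sketch the trailing number of each entry is read as the number of RAID sets and the first number in the parentheses as the data disks per set; that reading, and the function names, are assumptions. The capacities and prices are the ones quoted on the slide.

```python
# (date, disk GB, data disks per set, RAID sets, quoted TB, cost in K euro)
purchases = [
    ("12/2002", 160, 7, 16, 18, 100),
    ("3/2003", 250, 7, 16, 28, 110),
    ("11/2003", 320, 6, 32, 56, 200),
    ("11/2004", 400, 6, 40, 96, 250),
]


def raw_data_tb(disk_gb, data_disks, raid_sets):
    """Capacity of the data disks only, in decimal TB (parity and spares excluded)."""
    return disk_gb * data_disks * raid_sets / 1000.0


def keuro_per_tb(cost_keuro, quoted_tb):
    """Price per TB, using the capacity quoted on the slide."""
    return cost_keuro / quoted_tb


summary = [
    (date, raw_data_tb(gb, disks, sets), round(keuro_per_tb(cost, tb), 2))
    for date, gb, disks, sets, tb, cost in purchases
]
for date, raw_tb, price in summary:
    print(f"{date}: {raw_tb:.1f} TB of data disks, {price} K euro/TB")
```

With the quoted figures the price per TB roughly halves over the two years. The 11/2003 entry gives 61.4 decimal TB of data disks against the quoted 56 TB, which suggests that the quoted number is a formatted capacity.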

Tape libraries

• Direct access DTF2 tape library
  • 40 drives (24 MB/s each) on 20 UltraSparc servers
  • 4 drives for data taking (one is used at a time)
  • The tape library can hold 2500 tapes (500 TB)
  • We store raw data and DST using
    • the Belle tape IO package (allocate, mount, unmount, free),
    • a perl script (files-to-tape database), and
    • LSF (exclusive use of tapes)
• HSM back end with three DTF2 tape libraries
  • Three 40 TB tape libraries are the back end of the 4.5 TB disks
  • Files are written/read as if they were all on disk
    • When the library becomes full, we move tapes out of the library, and human operators insert tapes when users request them by mail

Data size so far

• Raw data
  • 400 TB written since Jan. 2001 for 230 fb⁻¹ of data, on 2000 tapes
• DST data
  • 700 TB written since Jan. 2001 for 230 fb⁻¹ of data, on 4000 tapes, compressed with zlib
• MDST data (four-vectors, vertices and PID)
  • 15 TB for 287 fb⁻¹ of hadronic events (BBbar and continuum), compressed with zlib
  • Two-photon events: add 9 TB for 287 fb⁻¹
• Total ~1 PB on DTF(2) tapes, plus 200+ TB on SAIT

[Figure: DTF(2) tape usage, number of tapes (0 to 8000) versus year (2001 to 2004); DTF (40 GB) tapes from 1999-2000, DTF2 (200 GB) tapes from 2001-2004]

Mass storage strategy

• Development of the next-generation DTF drives was canceled
• SONY’s new mass storage system will use SAIT drive technology (metal tape, helical scan)
• We decided to test it
  • Purchased a 500 TB tape library and installed it as the HSM back end, using newly acquired inexpensive IDE-based RAID systems and PC file servers
• We are moving from direct tape access to a hierarchical storage system
  • We have learned that automatic file migration is quite convenient
  • But we need a lot of capacity so that we do not need operators to mount tapes

Compact, inexpensive HSM

• The front-end disk system consists of
  • 8 dual Xeon PC servers with two SCSI channels
  • each connecting one 16-disk (320 GB IDE) RAID system
    • Total capacity is 56 TB (1.75 TB (6+1+S) × 2 × 2 × 8)
• The back-end tape system consists of
  • a SONY SAIT PetaSite tape library system in a space three racks wide: main system (four drives) + two cassette consoles, with a total capacity of 500 TB (1000 tapes)
• They are connected by a 16-port 2 Gbit FC switch
• Installed in Nov. 2003~Feb. 2004 and working well
  • We keep all new data on this system
  • Lots of disk failures (even power failures), but the system is surviving
• Please see my poster

PetaServe: HSM software

• Commercial software from SONY
  • We have been using it since 1999 on Solaris
  • It now runs on Linux (RH7.3 based)
  • It uses the Data Management API of XFS, developed by SGI
• When the drive and the file server are connected via an FC switch, the data on disk can be written directly to tape
• The minimum file size for migration, and the size of the first part of the file that remains on disk, can be set by users
• The backup system, PetaBack, works intelligently with PetaServe; in particular, in the next version, a file that has been shadowed (one copy on tape, one copy on disk) will not be backed up, saving space and time
• So far it is working extremely well, but
  • We must distribute files among disk partitions ourselves (now 32; in three months many more, as there is a 2 TB limit…)
  • The disk is a file system and not a cache
  • The HSM disk cannot make an effective scratch disk
    • There is a mechanism to do nightly garbage collection if the users delete files by themselves

Extending the HSM system

• The front-end disk system will include
  • ten more dual Xeon PC servers with two SCSI channels
  • each connecting one 16-disk (400 GB) RAID system
    • Total capacity is 150 TB (1.7 TB (6+1+S) × 2 × 2 × 8 + 2.4 TB (6+1+S) × 2 × 2 × 10)
• The back-end tape system will include
  • four more cassette consoles and eight more drives
    • Total capacity is 1.29 PB (2500 tapes)
• A 32-port 2 Gbit FC switch will be added (32+16, interconnected with 4 ports)
• Belle hopes to migrate all DTF2 data into this library by the end of 2005
  • 20 TB/day! Data must be moved from the old tape library to the new tape library
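To get a feel for the 20 TB/day migration target, the following sketch converts it into a sustained transfer rate and into the number of DTF2 drives that would have to stream in parallel at the 24 MB/s quoted for them. Decimal units are assumed, mount and positioning overheads are ignored, and the function names are made up for the example.

```python
import math

SECONDS_PER_DAY = 86400


def sustained_mb_per_s(tb_per_day):
    """Average rate in MB/s needed to move tb_per_day (decimal units)."""
    return tb_per_day * 1e12 / SECONDS_PER_DAY / 1e6


def drives_needed(tb_per_day, drive_mb_per_s):
    """Drives that must read continuously to sustain the target rate."""
    return math.ceil(sustained_mb_per_s(tb_per_day) / drive_mb_per_s)


rate = sustained_mb_per_s(20)        # about 231 MB/s
n_drives = drives_needed(20, 24)     # DTF2 drives at 24 MB/s each
print(f"{rate:.0f} MB/s sustained, at least {n_drives} drives reading in parallel")
```

Under these assumptions roughly a quarter of the 40 DTF2 drives would have to read around the clock.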

Floor space required

• DTF2 library systems: capacity 663 TB, space required 130 m²
  • Lib (~500 TB) ~141 m²
  • BHSM (~150 TB) ~31 m²
  • Backup (~13 TB) ~16 m²
• SAIT library systems: capacity 1.29 PB, space required 20 m²

[Figure: floor plans of the DTF2 and SAIT library systems, with dimensions in mm]

Use of LSF

• We have been using LSF since 1999
• We have been using LSF on PCs since 2003/3
• Of ~1400 CPUs (as of 2004/11), ~1000 CPUs are under LSF and are used for DST production, generic MC generation, calibration, signal MC generation and user physics analyses
• DST production uses its own distributed computing (dbasf), and its child nodes do not run under LSF
• All other jobs share the CPUs and are dispatched by LSF
• For users we use fair-share scheduling
• We will evaluate new features in LSF 6.0, such as reporting and multi-cluster support, hoping to use it as a collaboration-wide job management tool

Rental system plan for Belle

• We have started the lengthy process of the computer acquisition for 2006 (-2010)
  • 100,000 SPEC CINT2000 rates (or whatever…) of compute servers
  • 10 PB storage system, with extensions possible
  • Network connection fast enough to read/write data at 2-10 GB/s (2 for DST, 10 for physics analysis)
  • User-friendly and efficient batch system that can be used collaboration wide
• We hope to make it a Grid-capable system
  • Interoperate with systems at remote institutions that are involved in LHC grid computing
• Careful balancing of lease and yearly purchases

Production

Online production and KEKB

• As we take data, we write raw data directly to tape (DTF2); at the same time we run the DST production code (event reconstruction) on 85 dual Athlon 2000+ PC servers
  • See Itoh-san’s talk on RFARM on the 29th in the Online computing session
• Using the results of event reconstruction, we send feedback to the KEKB accelerator group, such as the location and size of the collision region, so that they can tune the beams to maximize instantaneous luminosity and keep them colliding at the center
• We also monitor the BBbar cross section very precisely, and we can change the machine beam energies by 1 MeV or so to maximize the number of BBbar events produced
• The resulting DST are written to temporary disks and skimmed for detector calibration
• Once detector calibration is finished, we run the production again and make DST/mini-DST for final physics analysis

[Figure: vertex Z position and luminosity; changed “RF phase” corresponding to 0.25 mm]

Online DST production system

[Diagram of the online DST production system. Labels: Belle Event Builder, DTF2, E-hut, control room, server room, Computer Center; 1000base-SX and 100base-TX links; Dell CX600, 3com 4400 (two), Planex FMG-226SX; input distributor, output collector, control, and disk server/DNS/NIS nodes; dual PIII (1.3 GHz) and dual Xeon (2.8 GHz) machines; 85 dual Athlon 1.6 GHz nodes (170 CPUs); PCs run Linux]

Online data processing

Run 39-53 (Sept. 18, 2004)

• 211 pb⁻¹ accumulated
• Accepted 9,029,058 events
• Accept rate 360.30 Hz
• Run time 25,060 s
• Peak luminosity was 9×10^33; we started running on Sept. 9

Processing chain

• Level 3 software trigger: recorded 4,710,231 events (52%), used 186.6 GB (DTF2 tape library)
• RFARM (82 dual Athlon 2000+ servers): total CPU time 1,812,887 s; L4 outputs 3,980,994 events; 893,416 hadrons; DST 381 GB (95 KB/event) (new SAIT HSM)
• Skims: pair 115,569 (5,711 are written); Bhabha 475,680 (15,855); tight hadron 687,308 (45,819)

More than 2M BBbar events!
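The numbers quoted for this run can be cross-checked against each other. The sketch below uses only values from the slide; the variable names are made up, sizes are taken in decimal units, and dividing the DST size by the L4 output count is an assumption about what "95K/ev" refers to.

```python
accepted_events = 9_029_058
run_time_s = 25_060
recorded_events = 4_710_231
raw_size_gb = 186.6
l4_output_events = 3_980_994
dst_size_gb = 381.0
luminosity_pb = 211.0           # integrated luminosity in pb^-1

accept_rate_hz = accepted_events / run_time_s
recorded_fraction = recorded_events / accepted_events
raw_kb_per_event = raw_size_gb * 1e6 / recorded_events
dst_kb_per_event = dst_size_gb * 1e6 / l4_output_events
# 1 pb^-1 = 1e36 cm^-2, so this is the average luminosity in cm^-2 s^-1
average_luminosity = luminosity_pb * 1e36 / run_time_s

print(f"accept rate       {accept_rate_hz:.2f} Hz")
print(f"recorded fraction {recorded_fraction:.0%}")
print(f"raw size          {raw_kb_per_event:.1f} KB/event")
print(f"DST size          {dst_kb_per_event:.1f} KB/event")
print(f"average lumi      {average_luminosity:.2e} cm^-2 s^-1")
```

The accept rate, the 52% recorded fraction and the ~95 KB/event DST size all come out as quoted, and the average luminosity sits just below the quoted peak of 9×10^33.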

DST production

• Reprocessing strategy
  • Goal: 3 months to reprocess all data using all KEK compute servers
    • Often we have to wait for constants
    • Often we have to restart due to bad constants
    • Efficiency: 50~70%
• History
  • 2002: major software updates
    • 2002/7: reprocessed all data taken until then (78 fb⁻¹ in three months)
  • 2003: no major software updates
    • 2003/7: reprocessed data taken since 2002/10 (~60 fb⁻¹ in three months)
  • 2004: SVD2 was installed in summer 2003
    • Software was all new and was being tested until mid May
    • 2004/7: reprocessed data taken since 2003/10 (~130 fb⁻¹ in three months)

[Figure: elapsed time (actual hours) for setup, database update, ToF (RFARM) and dE/dx]

Comparison with previous system

[Figure: luminosity processed per day (pb⁻¹, 0 to 6000) versus day (1 to 61) for the new (2004) and old (2003) systems, with the Belle incoming data rate (1 fb⁻¹/day) marked]

• Using 1.12 THz total CPU we achieved >5 fb⁻¹/day
• See the Adachi/Ronga poster

Generic MC production

• Mainly used for physics background study
• 400 GHz of Pentium III: ~2.5 fb⁻¹/day
• 80~100 GB/fb⁻¹ of data in the compressed format
• No intermediate hits (GEANT3 hits/raw) are kept
• When a new release of the library comes, we try to produce a new generic MC sample
• For every real data-taking run, we try to generate 3 times as many events as in the real run, taking into account
  • run dependence
  • detector background, taken from random trigger events of the run being simulated
• At KEK, if we use all CPUs, we can keep up with the raw data taking (×3) in MC production
• We ask remote institutions to generate most of the MC events
• We have generated more than 2×10^9 events so far using qq
• 100 TB for 3×300 fb⁻¹ of real data; we would like to keep it on disk

[Figure: M events produced since Apr. 2004 (0 to 250)]
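The storage and CPU figures for generic MC can be tied together with a short estimate. The function name and the dictionary keys are hypothetical; the factor of 3, the 80~100 GB per fb⁻¹ and the ~2.5 fb⁻¹/day on 400 GHz of Pentium III are the numbers quoted on the slide, and the upper end of the size range is used here.

```python
def mc_plan(real_lumi_fb, mc_factor=3, gb_per_fb=100.0, fb_per_day=2.5):
    """Rough size and duration of a generic MC campaign.

    real_lumi_fb : integrated luminosity of the real data, in fb^-1
    mc_factor    : MC sample size relative to real data (3 on the slide)
    gb_per_fb    : compressed MC size per fb^-1 (80~100 GB on the slide)
    fb_per_day   : production rate on 400 GHz of Pentium III
    """
    mc_lumi_fb = real_lumi_fb * mc_factor
    return {
        "mc_lumi_fb": mc_lumi_fb,
        "storage_tb": mc_lumi_fb * gb_per_fb / 1000.0,
        "days": mc_lumi_fb / fb_per_day,
    }


plan = mc_plan(300)
print(plan)
```

For 300 fb⁻¹ of real data this gives 90 TB, close to the 100 TB quoted, and about a year of running on 400 GHz alone, which is consistent with asking remote institutions to generate most of the events.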

Random number generator

• Pseudo-random numbers can be difficult to manage when we must generate billions of events in many hundreds of thousands of jobs (each event consumes about one thousand random numbers in one millisecond)
• Encoding the date and time as the seed may be one solution, but there is always a danger that one sequence is the same as another
• We tested a random number generator board that uses thermal noise
  • The board can generate up to 8M true random numbers per second
• We will have random number servers on several PC servers so that we can run many event generator jobs in parallel
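The danger of two jobs sharing a seed can be quantified with the standard birthday approximation, P ≈ 1 − exp(−k(k−1)/(2N)) for k jobs drawing uniformly from N possible seeds. The sketch below is an illustration only: the figure of 300,000 jobs stands in for "many hundred thousands", and uniformly distributed seeds are an assumption (real job start times cluster, which makes matters worse).

```python
import math


def collision_probability(n_jobs, n_seeds):
    """Birthday approximation: chance that at least two jobs share a seed."""
    pairs = n_jobs * (n_jobs - 1) / 2.0
    return -math.expm1(-pairs / n_seeds)


n_jobs = 300_000                               # illustrative job count
seconds_per_year = 365 * 24 * 3600             # seed = start time in seconds

p_time_seed = collision_probability(n_jobs, seconds_per_year)
p_32bit_seed = collision_probability(n_jobs, 2**32)

print(f"time-based seed, one year of seconds: {p_time_seed:.6f}")
print(f"uniform 32-bit seed:                  {p_32bit_seed:.6f}")
```

Under these assumptions a repeated seed is practically certain in both cases, which is the motivation for drawing numbers from a hardware source instead of managing seeds.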

Network/data transfer

Networks and data transfer

• The KEKB computer system has a fast internal network for NFS
  • We have added Belle-bought PCs; there are now more than 500 PCs and file servers
  • We have connected dedicated 1 Gbps Super-SINET lines to four universities
  • We have also requested that this network be connected to outside KEK for tests of Grid computing
• Finally, a new Cisco 6509 has been added to separate the above three networks
• A firewall and login servers make data transfer miserable (100 Mbps max.)
• DAT tapes are used to copy compressed hadron files and MC generated by outside institutions
• Dedicated GbE networks to a few institutions are now being added
• A total of 10 Gbit to/from KEK is being added
• The network to most collaborators is still slow

Data transfer to universities

[Diagram: data flow from Tsukuba Hall (Belle, e+e- → B0 B0bar) to the KEK Computing Research Center (10 Gbps) and on to Osaka U., Nagoya U., Tohoku U., U. of Tokyo, TIT, Australia, U.S., Korea, Taiwan, etc. Rates shown: 1 TB/day (~100 Mbps), ~1 TB/day, 400 GB/day (~45 Mbps), 170 GB/day; NFS]

We use Super-SINET, APAN and other international academic networks as the backbone of the experiment

Grid plan for Belle

• Belle hasn’t made a commitment to Grid technology (we hope to learn more here)
  • Belle@KEK has not, but Belle@remote institutions may have been involved, in particular when they are also involved in one of the LHC experiments
• Parallel/distributed computing aspect
  • Nice, but we do have our own solution
  • Event (trivial) parallelism works
  • However, as we accumulate more data, we hope to have more parallelism (several tens to hundreds to thousands of CPUs)
  • Thus we may adopt a standard solution
• Parallel/distributed file system
  • Yes, we need something better than many UNIX files on many nodes
  • For each “run” we create ~1000 files; we have had O(10,000) runs so far
• Collaboration-wide issues
  • WAN aspect
    • Network connections are quite different from one institution to another
  • Authentication
    • We use DCE to log in
    • We are testing VPN with OTP
    • A CA-based system would be nice
  • Resource management issues
    • We want to submit jobs to remote institutions if CPU is available

Belle’s attempts

• We have separated the stream IO package so that we can connect to any (Grid) file management package
• We have started working with remote institutions (Australia, Tohoku, Taiwan, Korea)
  • See our presentation by G. Molony on the 30th in the Distributed Computing session
• SRB (Storage Resource Broker, by San Diego Univ.)
  • We constructed test beds connecting Australian institutes and Tohoku University using GSI authentication
  • See our presentation by Y. Iida on the 29th in the Fabric session
• gfarm (AIST)
  • We are using gfarm (cluster) at AIST to generate generic MC events
  • See the presentation by O. Tatebe on the 27th in the Fabric session

Human resources

• KEKB computer system + network
  • Supported by the computer center (1 researcher, 3~4 system engineers + 1 hardware engineer, 2~3 operators)
• PC farms and tape handling
  • 1 KEK/Belle researcher, 2 Belle support staff (they help with production as well)
• DST/MC production management
  • 2 KEK/Belle researchers, 1 postdoc or student at a time from collaborating institutions
• Library/constants database
  • 2 KEK/Belle researchers + sub-detector groups

We are barely surviving and are proud of what we have been able to do: make the data usable for analyses

Super KEKB, S-Belle and Belle computing upgrades

More questions in flavor physics

• The Standard Model of particle physics cannot really explain the origin of the universe
  • It does not have enough CP violation
  • Models beyond the SM have many CP-violating phases
• There is no principle to explain the flavor structure in models beyond the Standard Model
  • Why Vcb >> Vub?
  • What about the lepton sector?
  • Is there a relationship between the quark and neutrino mixing matrices?
• We know that
  • the Standard Model (incl. the Kobayashi-Maskawa mechanism) is the effective low-energy description of Nature
  • However, most likely New Physics lies in the O(1) TeV region
    • LHC will start within a few years and (hopefully) discover new elementary particles
  • Flavor-Changing Neutral Currents (FCNC) are suppressed
    • New Physics without a suppression mechanism is excluded up to 10^3 TeV: the New Physics Flavor Problem
    • Different mechanism → different flavor structure in B decays (tau, charm as well)
• A luminosity upgrade will be quite effective and essential

Roadmap of B physics

Stages along time or integrated luminosity:

• Discovery of CPV in B decays
• Precise test of the SM and search for NP
• Study of NP effects in B and τ decays
• Identification of the SUSY breaking mechanism

Annotations on the figure:

• Yes!! 2001
• sin2φ1, CPV in B, φ3, Vub, Vcb, b→s, b→sll, new states, etc.
• Anomalous CPV in b→sss
• Now: 280 fb⁻¹
• NP discovered at LHC (2010?)
• If NP = SUSY
• Concurrent program: Tevatron (m~100 GeV), LHC (m~1 TeV); KEKB (10^34), SuperKEKB (10^35)

Projected luminosity

[Figure: projected integrated luminosity for SuperKEKB (“Oide scenario”), marked at 50 ab⁻¹]

UT measurements

[Figure: unitarity triangle measurements at 5 ab⁻¹ for two cases, “SM only” and “New Physics!”. Values estimated from current results: sin2φ1 = 0.013, φ2 = 2.9°, φ3 = 4°, |Vub| = 5.8%]

With more and more data

We now have more than 250M B0 on tape. We can not only observe very rare decays but also measure the time-dependent asymmetry of the rare decay modes and determine the CP phases.

[Figure: two event samples, 2716 events and 68 events (enlarged)]

New CPV phase in b → s

[Figure: projection at 5 ab⁻¹ with labels J/ψ Ks and Ks; ΔS = Sf − S(J/ψ Ks); ΔS = 0.24 (summer 2003 b→s WA) gives 6.2σ]

SuperKEKB: schematics

[Figure: SuperKEKB schematic with Super Belle and the Super Quad. Beams: 8 GeV e+, 4.1 A; 3.5 GeV e−, 9.6 A. L ∝ I ξy / βy*; 4 × 3 / 0.5 ~ 24]

Detector upgrade: baseline design

• μ / KL detection: scintillator strip/tile
• Tracking + dE/dx: small cell/fast gas + larger radius
• CsI(Tl) 16 X0; pure CsI (endcap)
• Si vertex detector: 2 pixel layers + 3-layer DSSD
• SC solenoid: 1.5 T (lower?)
• PID: “TOP” + RICH
• R&D in progress

Computing for super KEKB

• For (even) 10^35 luminosity:
  • DAQ: 5 kHz, 100 KB/event → 500 MB/s
  • Physics rate: BBbar @ 100 Hz
  • 10^15 bytes/year: 1 PB/year
  • 800 4 GHz CPUs to catch up with data taking
  • 2000 4 GHz 4-CPU PC servers
  • 10+ PB storage system (what media?)
  • 300 TB-1 PB MDST/year online data disk
• Costing >50M euro?

Conclusions

• Belle has accumulated 274M BBbar events so far (July 2004); data are fully processed; everyone is enjoying doing physics (tight competition with BaBar)
• A lot of computing resources have been added to the KEKB computer system to handle the flood of data
• The management team remains small (fewer than 10 KEK staff members, not full time, two of whom are group leaders of sub-detectors, + fewer than 5 SE/CEs); in particular, the PC farms and the new HSM are managed by a few people
• We are looking for a Grid solution for us at KEK and for the rest of the Belle collaborators

Belle jargon

• DST: output files of good reconstructed events
• MDST: mini-DST, summarized events for physics analysis
• (Re)-production/reprocess
  • Event reconstruction process
  • (Re)-run all reconstruction code on all raw data
  • Reprocess: process ALL events using a new version of the software
• Skim (calibration and physics)
  • pairs, gamma-gamma, Bhabha, Hadron, Hadron, J/ψ, single/double/end-point lepton, b→s, hh…
• Generic MC (production)
  • A lot of jetset c,u,d,s pairs/QQ/EvtGen generic b→c decay MC events for background study

Belle software library

• CVS (no remote check in/out)
  • Check-ins are done by authorized persons
• A few releases per year
  • It usually takes a few weeks to settle down after a major release, as we have no strict verification, confirmation or regression procedure so far. It has been left to the developers to check the “new” version of the code. We are now trying to establish a procedure to compare against old versions
  • All data are reprocessed and all generic MC is regenerated with a new major release of the software (at most once per year, though)
• Reprocess all data before the summer conferences
  • In April, we have a version with improved reconstruction software
  • Do reconstruction of all data in three months
  • Tune for physics analysis and MC production
  • Final version before October, for physics publications using this version of the data
  • It takes about 6 months to generate generic MC samples
• Library cycle (2002~2004)
  • 20020405, 0416, 0424, 0703, 1003, 20030807 (SVD1), (+), 20040727 (SVD2)

Overview: main changes

• Based on the RFARM
• Adapted to off-line production
• Dedicated database server
• Input from tape
• Output to HSM
• ⇒ Stable, light, fast

[Diagram: typical DBASF cluster, with an input node (bcs03) reading tape, a master, several basf processes, and an output node (bcs202) running basf and zfserv]

Overview: CPU power

Farm    Nodes            Number  CPU [GHz]   Power [GHz]
Farm 1  bcs101-bcs130    30      4 x 0.766   92
        bcs131-bcs160    30      4 x 0.766   92
Farm 2  bcs201-bcs242    42      2 x 1.7     143
        bcs243-bcs284    42      2 x 1.7     143
        bcs285-bcs326    42      2 x 1.7     143
Farm 3  bcs801-bcs840    40      2 x 3.2     256
        bcs841-bcs880    40      2 x 3.2     256
        bcs881-bcs900    20      2 x 3.2     128
Total                    286                 1252

Share of total power: Farm 1 15%, Farm 2 34%, Farm 3 51%
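The CPU power table can be recomputed from its own columns, taking power as nodes × CPUs per node × clock. The sketch below is only a consistency check on the numbers from the table; the data layout and function name are made up for the example.

```python
# (farm, nodes, CPUs per node, clock in GHz), one entry per row of the table
rows = [
    ("Farm 1", 30, 4, 0.766),
    ("Farm 1", 30, 4, 0.766),
    ("Farm 2", 42, 2, 1.7),
    ("Farm 2", 42, 2, 1.7),
    ("Farm 2", 42, 2, 1.7),
    ("Farm 3", 40, 2, 3.2),
    ("Farm 3", 40, 2, 3.2),
    ("Farm 3", 20, 2, 3.2),
]


def power_ghz(nodes, cpus, clock_ghz):
    """Aggregate clock of one group of nodes, in GHz."""
    return nodes * cpus * clock_ghz


total_nodes = sum(nodes for _, nodes, _, _ in rows)
total_power = sum(power_ghz(n, c, g) for _, n, c, g in rows)

power_by_farm = {}
for farm, n, c, g in rows:
    power_by_farm[farm] = power_by_farm.get(farm, 0.0) + power_ghz(n, c, g)

percent_by_farm = {
    farm: round(100 * power / total_power) for farm, power in power_by_farm.items()
}
print(total_nodes, round(total_power), percent_by_farm)
```

The totals reproduce the 286 nodes and 1252 GHz of the table, and the shares come out as 15%, 34% and 51% for Farms 1, 2 and 3.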

Belle PC farms

We have added PCs as we take data:

• ’99/06: 16 × 4 CPU 500 MHz Pen III
• ’00/04: 20 × 4 CPU 550 MHz Pen III
• ’00/10: 20 × 2 CPU 800 MHz Pen III
• ’00/10: 20 × 2 CPU 933 MHz Pen III
• ’01/03: 60 × 4 CPU 700 MHz Pen III
• ’02/01: 127 × 2 CPU 1.26 GHz Pen III
• ’02/04: 40 × 700 MHz mobile Pen III
• ’02/12: 113 × 2 CPU Athlon 2000+
• ’03/03: 84 × 2 CPU 2.8 GHz Xeon
• ’04/03: 120 × 2 CPU 3.2 GHz Xeon
• ’04/11: 150 × 2 CPU 3.4 GHz Xeon (800)
• …

We are barely catching up… We must get a few to 20 TFLOPS in the coming years as we take more data

[Figure: computing resources and integrated luminosity versus time, 1999/6 to 2004/6]

Summary and prospects

Major challenges

Database access (very heavy load at job start)

Input rate (tape servers are getting old…)

Network stability (shared disks, output,…)

Exp   Runs   Time [hour]   Time online [hour]   Luminosity [1/fb]   Rate [1/fb/day]   Rate online [1/fb/day]
33    565    413.5         321.2                20.5                1.19              1.53
35    489    254.4         137.3                18.8                1.78              3.29
31    1078   140.0         136.8                20.0                3.43              3.51
37    1276   507.4         349.1                66.4                3.14              4.57
All   3408   1349.0        944.3                125.8               2.24              3.20

Old system: 1.12 1/fb/day (online)
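The rate columns of the production table follow from the luminosity and time columns as rate = luminosity / hours × 24. The sketch below recomputes them and compares the result with the tabulated values. It uses only numbers from the table; because the inputs are rounded, agreement within 0.01 is all that can be expected.

```python
# exp: (time [h], time online [h], luminosity [1/fb], rate, rate online)
table = {
    "33": (413.5, 321.2, 20.5, 1.19, 1.53),
    "35": (254.4, 137.3, 18.8, 1.78, 3.29),
    "31": (140.0, 136.8, 20.0, 3.43, 3.51),
    "37": (507.4, 349.1, 66.4, 3.14, 4.57),
    "All": (1349.0, 944.3, 125.8, 2.24, 3.20),
}
OLD_SYSTEM_ONLINE_RATE = 1.12   # 1/fb/day, quoted for the old system


def rate_per_day(luminosity_fb, hours):
    """Processed luminosity per day, in 1/fb/day."""
    return luminosity_fb / hours * 24.0


max_difference = 0.0
for exp, (hours, hours_online, lumi, rate, rate_online) in table.items():
    max_difference = max(
        max_difference,
        abs(rate_per_day(lumi, hours) - rate),
        abs(rate_per_day(lumi, hours_online) - rate_online),
    )

speedup = table["All"][4] / OLD_SYSTEM_ONLINE_RATE
print(f"largest deviation from the table: {max_difference:.4f}")
print(f"online rate relative to the old system: {speedup:.2f}x")
```

On these numbers the new system processes data a little under three times as fast as the old one while jobs are running.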

[Figure: production performance (CPU hours); Farm 8 operational! > 5.2 fb⁻¹/day]