Montclair State University PeopleSoft Financials Implementation

Test Plan

Version No. 1.10

Reviewed By Approved By

Name Doug Gosnell, Anil Joshi, Rohit Srivastava

Role Engagement Director, Project Manager, Solution Architect

Signature DG, AJ, RS

Date 12/12/2014

Cognizant Technology Solutions - Confidential and Proprietary Page 1

Table of Contents

1.0 Overview

1.1 Purpose

1.2 System Description

1.3 Test Requirements

1.3.1 Testing Requirements

1.3.2 Types of Testing

1.3.3 Rounds of Testing

1.3.4 Testing Tools

1.3.5 Test Duration

1.4 Testing Scope

1.5 Schedule

1.6 Role Assignments

1.7 Test Environment

1.8 Test Data

1.9 Special Testing Considerations

2.0 Resources Planning

2.1.1 Hardware/Software Environment

2.1.2 Other Resource Requirements

3.0 Test Report

4.0 Assumptions, Dependencies and Constraints

5.0 Glossary & References

5.1 Glossary

5.2 Reference

6.0 Change Log

1.0 Overview

1.1 Purpose

The purpose of this document is to describe the testing approach and provide an overview of the testing activities to be performed during the System & Integration Testing (SIT) phase of the MSU PeopleSoft Financials Implementation Project (v9.2), Phase 1. This document does not include the detailed test steps to be performed.

The scope of this test plan includes:

1) Test Requirements (Requirement Traceability Matrix)

2) Plan resources (human, software, hardware)

3) Schedule for Testing

4) Plan for environment, Test Data

1.2 System Description

The primary objective of testing the PeopleSoft Financials (v9.2) application is to ensure that the system meets the approved business requirements and that the functionality works according to the design and development.

The secondary objective of testing will be to identify defects and associated risks, communicate to the development team, and ensure that the defects are addressed before the release.

Testing will encompass execution of the test cases associated with each system requirement to validate the functional accuracy of standard (vanilla) and custom functionality, data integrity within the PeopleSoft Financials modules (refer to Section 1.4), and integration with legacy applications.

1.3 Test Requirements

1.3.1 Testing Requirements

The following table lists the number of System Requirements identified from the Business Requirements in the RTM. Business Requirements are the high-level needs or problems of an organization, while System Requirements address the software solution to the problem stated in the Business Requirements.

Each Business Requirement might have one or more system requirements. These System Requirements were defined such that each requirement can be considered as a Testable Requirement. Similarly, each system requirement might have one or more test cases identified.

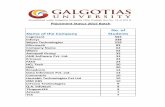

| Financial Module | Business Requirements | System Requirements |
|---|---|---|
| Accounts Payable | 82 | 84 |
| Accounts Receivables | 65 | 75 |
| Billing | 70 | 80 |
| Cash Management | 13 | 13 |
| Commitment Control | 25 | 28 |
| Contracts | 1 | 2 |
| Customer Contracts | 9 | 9 |
| eProcurement | 40 | 48 |
| General Ledger | 62 | 78 |
| Grants | 65 | 66 |
| Multiple | 18 | 19 |
| Project Costing | 46 | 47 |
| Purchasing | 74 | 82 |
| Grand Total | 570 | 631 |
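The grand totals in the requirements table can be cross-checked with a few lines of Python. This is only an illustrative sketch: the names `requirements` and `column_totals` are hypothetical and not part of any project tool, and the counts are copied from the table above.

```python
# Requirement counts per module, copied from the requirements table:
# module -> (business requirements, system requirements)
requirements = {
    "Accounts Payable": (82, 84),
    "Accounts Receivables": (65, 75),
    "Billing": (70, 80),
    "Cash Management": (13, 13),
    "Commitment Control": (25, 28),
    "Contracts": (1, 2),
    "Customer Contracts": (9, 9),
    "eProcurement": (40, 48),
    "General Ledger": (62, 78),
    "Grants": (65, 66),
    "Multiple": (18, 19),
    "Project Costing": (46, 47),
    "Purchasing": (74, 82),
}


def column_totals(counts):
    """Return (business total, system total) summed over all modules."""
    business = sum(b for b, _ in counts.values())
    system = sum(s for _, s in counts.values())
    return business, system


business_total, system_total = column_totals(requirements)
print(business_total, system_total)  # 570 631
```

The printed values match the Grand Total row, which confirms that the per-module counts and the totals are consistent.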

1.3.2 Types of Testing

The Cognizant Testing Team shall perform the following types of testing during the SIT1 and SIT2 phases. Performance Testing will be conducted immediately after the SIT2 phase.

System & Integration testing: Performed after completion of Build and Unit testing and prior to UAT. Test case scenarios are strung together by combining select system test cases, with appropriate test data, to evolve end-to-end integration test cycles. The objective of integration testing is to test a business process end-to-end.

Performance Testing: Performance testing will be conducted to validate the effective system performance of key, pre-identified batch and online processes. For the identified batch test scenarios, an appropriate benchmark will be established to measure acceptable full-process “wall clock” processing times.

Online performance test scenarios will comprise up to two online processes per PeopleSoft module. As with the batch performance testing, individual benchmarks will be established for each online test scenario, and the actual “wall clock” execution times will be discussed to confirm that they fit within a reasonable/acceptable time window.

Both batch and online performance testing will be conducted with an appropriate level of concurrent users required to adequately test the robustness of the processes. Five hundred “signed on” users will be utilized as the concurrent test load for each of the online performance scenarios and for those batch process test scenarios that are appropriately tested with an active online system.

If needed, the system will be performance tuned within the limits of the hardware environment and, if appropriate, performance tests will be re-executed and the results evaluated.
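The comparison of measured “wall clock” times against agreed benchmarks could be tracked with a small helper such as the one below. This is a minimal sketch under stated assumptions: the class `ScenarioResult`, the function `needs_tuning`, and all timing figures are hypothetical and used only for illustration; the plan does not prescribe a tool or any benchmark values.

```python
from dataclasses import dataclass


@dataclass
class ScenarioResult:
    name: str
    benchmark_seconds: float  # agreed acceptable wall-clock time (assumed unit)
    actual_seconds: float     # measured wall-clock time (assumed unit)


def needs_tuning(results):
    """Return the names of scenarios whose measured time exceeds the benchmark."""
    return [r.name for r in results if r.actual_seconds > r.benchmark_seconds]


# Hypothetical figures for illustration only; these are not measured results.
sample = [
    ScenarioResult("Journal Edit and Post", 600.0, 540.0),
    ScenarioResult("Paycycle", 900.0, 1020.0),
]
print(needs_tuning(sample))  # ['Paycycle']
```

Scenarios returned by the helper would be candidates for tuning and re-execution as described above.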

User Acceptance Testing: Cognizant will provide SIT test scenarios to MSU, which can be leveraged for UAT to permit end-to-end testing of business processes by the MSU users. Cognizant will resolve all defects pursuant to Section 10 of this SOW and will also provide adequate onsite support during UAT. All user roles with their associated permission lists shall be configured and system tested before UAT.

1.3.3 Rounds of Testing

System & Integration Testing will be conducted in cycles. The next cycle will not proceed until the prior cycle has passed.

SIT1 and SIT2 – The objective of testing is to identify any fatal or critical defects in the system. This phase includes Configuration, Setup, Functional, and Transactional Data Validation. The following table describes the logical grouping of test cases during each test cycle.

| Test Cycle | Test Type | Focus Area |
|---|---|---|
| SIT 1, Config | Config/Setup and Security Roles Testing | Test a set of config values based on the config workbook and security matrix for all the modules to make sure basic system readiness is there. |
| SIT 1, Functional | Delivered/Custom functionality Testing (conversion, negative) | Test all the custom functionality as a business process to ensure that custom functions are working along with delivered functions and RICEW. |
| SIT 2, Functional, Regression | Delivered/Custom functionality Testing (conversion, negative); Retesting of some key processes | Test all the custom functionality as a business process to ensure that custom functions are working along with delivered functions and RICEW. Retest some of the key business processes to make sure they work correctly. |
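The rule that a test cycle does not begin until the prior cycle has passed can be expressed as a simple gate. The sketch below is illustrative only: the function `next_cycle` and the pass flags are hypothetical, and the criteria for declaring a cycle passed are assumed to be decided outside this code.

```python
# Test cycles in execution order, named as in the table above.
CYCLES = [
    "SIT 1, Config",
    "SIT 1, Functional",
    "SIT 2, Functional, Regression",
]


def next_cycle(cycle_passed):
    """Return the first cycle that has not yet passed, or None if all passed.

    cycle_passed maps a cycle name to True once that cycle has passed.
    A later cycle is never returned while an earlier one is still open.
    """
    for cycle in CYCLES:
        if not cycle_passed.get(cycle, False):
            return cycle
    return None


print(next_cycle({"SIT 1, Config": True}))  # SIT 1, Functional
```

Because the cycles are checked in order, marking a later cycle as passed has no effect while an earlier cycle remains open.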

1.3.4 Testing Tools

Testing will use MS Excel for test management and defect management. Cognizant’s test management tool, TEMEX (Test Management Using Excel as Tool), will be used to record and track information throughout the testing lifecycle. Each spreadsheet is an outcome of a specific test activity.

The TEMEX tool contains the following tabs:

Test Case Description: This tab contains the system requirements and the test cases associated with them. It also includes the test case designer and approver information.

Test Case Design: This tab describes the test case design progress.

Test Suite: The Test Suite is the outcome of Test Case Design. Each test case will have multiple test steps describing the detailed procedure to be followed when executing the test case. It also contains the expected result for each test step.

RTM: This tab may be used to track the coverage of test cases against the requirements.

Test Execution Log: This tab contains the actual test execution logs. In addition to the expected result, the actual result will also be captured. In case of deviations, defects should be logged.

Note: Test observation documents/logs should be stored in SharePoint, and the file name and folder location should be provided in this tab.

Test Case Exec Status Log: This tab contains the test execution status log for each round or cycle of testing.

Test Execution Overall Status: This tab captures the status of each test case during execution.

Temex Report: This tab provides a dashboard with charts derived for each test activity.

Defect Management: Defects: The Defects tab captures all defect-related information along with the test case ID and requirement ID.

Note: Defect observation documents/logs should be stored in SharePoint, and the file name and folder location should be provided in the defect.

Refer to Section 6.5 of the Test Strategy Document.

Report Summary: Several tabs in the tool provide different types and levels of reporting, such as Test Case Exec Status Log, Test Execution Overall Status, and Temex Report.

The TEMEX Excel file is attached.
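The coverage tracking that the RTM tab supports can be illustrated outside Excel with a short script. This is a hedged sketch, not a description of how TEMEX works internally: the function `coverage`, the dictionary `rtm`, and the requirement and test case IDs are all hypothetical.

```python
def coverage(requirement_to_tests):
    """Return (covered, total, uncovered_ids) for a requirement -> test cases map."""
    uncovered = sorted(
        req for req, tests in requirement_to_tests.items() if not tests
    )
    total = len(requirement_to_tests)
    return total - len(uncovered), total, uncovered


# Hypothetical requirement and test case IDs, for illustration only.
rtm = {
    "SR-001": ["TC-001", "TC-002"],
    "SR-002": ["TC-003"],
    "SR-003": [],
}
covered, total, uncovered = coverage(rtm)
print(f"{covered}/{total} covered; uncovered: {uncovered}")
# 2/3 covered; uncovered: ['SR-003']
```

A requirement with an empty test case list is reported as uncovered, which is the gap the RTM is meant to expose before execution starts.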

Test Execution Process:

The following is the high-level test execution process. Each test activity can be associated with an Excel template, and these templates can be used for tracking and managing the various test activities.

Daily Meetings:

The Daily Meetings will occur in the morning. During the morning session, the Cognizant test lead for each “in flight” test scenario will present a brief summary of goals for the day and review any new defects that have been raised since the last meeting. The test lead (Cognizant) will also indicate the priority order of their respective defects. The fix lead (development lead) will provide a status of the builds that are underway or available, identify any defects corrected since the last meeting, and highlight any items of interest discovered during testing.

The goal of the Daily Meetings is to make sure that the development team is aware of the daily priorities within the different test scenarios and that the highest priority activities across the project are receiving the most attention.

These meetings will be attended by the functional leads and the test manager from MSU and CTS and will be limited to a maximum of 60 minutes.

Weekly Strategy Meetings:

The Weekly Strategy Meetings will occur once per week. A suitable day and time for these meetings will be determined when the meeting schedule commences. During these sessions, the test management information and reports that are identified will be presented. Where relevant, the reports will be recalibrated. Mitigating actions will be proposed for any areas where progress is deficient.

The goal of the Weekly Strategy Meetings is to make sure that the Test Strategy for MSU is successful and that the Project Team frequently considers and verifies deliverability of test activities. The meeting should maintain a high-level, strategic view to focus efforts on high-priority and high-value activities.

These meetings will be attended by key Team Leads within the CTS and MSU Core Teams.

1.3.5 Test Duration

Test Execution Timelines:

Execution dates (table columns): 2/16/2015, 2/17/2015, 2/18/2015, 2/19/2015, 2/20/2015, 2/23/2015, 2/24/2015, 2/25/2015, 2/26/2015, 2/27/2015, 3/2/2015, 3/3/2015, 3/4/2015, 3/5/2015, 3/6/2015, 3/16/2015, 3/17/2015, 3/18/2015, 3/19/2015, 3/20/2015, 3/23/2015, 3/24/2015, 3/25/2015, 3/26/2015, 3/27/2015. The date columns are grouped under SIT 1 and SIT 2.

| Functional Category | Daily test case counts in date order (empty cells omitted) | Grand Total |
|---|---|---|
| Approval Workflow | 1, 2, 1, 1 | 5 |
| Attachments | 1, 1, 1, 1, 1 | 5 |
| Audit | 1, 3 | 4 |
| Conversion | 7, 3, 8, 3, 2, 1, 1, 3, 1, 5 | 34 |
| Format | 15 | 15 |
| Grants | 5, 3, 2 | 10 |
| Interface | 5, 1, 8, 3, 6, 2, 1, 3, 1, 1, 4 | 35 |
| Invoicing | 1, 1, 1, 2 | 5 |
| Job Scheduler | 1, 1, 1 | 3 |
| Online | 8, 13, 16, 8, 25, 16, 21, 15, 18, 9, 23, 18, 14, 13, 3, 14, 4, 20, 19, 11, 9, 15, 3, 6 | 321 |
| Process | 2, 5, 1, 1, 5, 3, 2 | 19 |
| Reporting | 3, 3, 10, 1, 2, 3, 1, 1, 4, 25, 11, 1, 8, 12, 14, 14 | 113 |
| Security | 1, 2 | 3 |
| Setup | 19, 2, 3, 1, 1, 4, 2, 2 | 34 |
| Sub System transactions | 2, 1 | 3 |
| Validation | 1, 1, 1, 1 | 4 |
| Workflow | 1, 14, 2, 1 | 18 |
| Grand Total (one value per date) | 28, 27, 27, 25, 28, 30, 24, 26, 22, 25, 25, 26, 22, 24, 27, 25, 24, 26, 25, 27, 27, 23, 21, 27, 20 | 631 |

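Execution dates (table columns): 2/16/2015, 2/17/2015, 2/18/2015, 2/19/2015, 2/20/2015, 2/23/2015, 2/24/2015, 2/25/2015, 2/26/2015, 2/27/2015, 3/2/2015, 3/3/2015, 3/4/2015, 3/5/2015, 3/6/2015, 3/16/2015, 3/17/2015, 3/18/2015, 3/19/2015, 3/20/2015, 3/23/2015, 3/24/2015, 3/25/2015, 3/26/2015, 3/27/2015, 3/31/2015. The date columns are grouped under SIT1 and SIT2/Regression.

| Financial Module | Daily test case counts in date order (empty cells omitted) | Grand Total |
|---|---|---|
| Approval Workflow | 1, 2, 1, 1 | 5 |
| Attachments | 1, 1, 1, 1, 1 | 5 |
| Audit | 1, 3 | 4 |
| Conversion | 3, 10, 11, 2, 1, 1, 1, 5 | 34 |
| Format | 15 | 15 |
| Grants | 8, 1, 1 | 10 |
| Interface | 5, 4, 7, 1, 1, 3, 4, 2, 1, 1, 1, 5 | 35 |
| Invoicing | 1, 1, 1, 2 | 5 |
| Job Scheduler | 1, 1, 1 | 3 |
| Online (one value per date) | 2, 8, 28, 48, 37, 17, 25, 12, 3, 11, 6, 12, 2, 6, 2, 1, 18, 8, 12, 16, 6, 8, 9, 17, 6, 1 | 321 |
| Process | 7, 1, 1, 3, 1, 1, 3, 2 | 19 |
| Reporting | 3, 13, 5, 1, 1, 1, 4, 32, 4, 1, 2, 14, 4, 15, 13 | 113 |
| Security | 2, 1 | 3 |
| Setup | 14, 5, 5, 1, 5, 4 | 34 |
| Sub System transactions | 2, 1 | 3 |
| Validation | 1, 1, 1, 1 | 4 |
| Workflow | 1, 12, 3, 1, 1 | 18 |
| Grand Total (one value per date) | 17, 21, 53, 81, 53, 31, 30, 14, 6, 17, 10, 19, 7, 7, 36, 9, 50, 12, 13, 19, 19, 24, 15, 33, 21, 14 | 631 |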

1.4 Testing Scope

Scope Inclusions:

Functional Testing

1. The business requirements were broadly classified into Functional and Non-Functional Requirements. The following table represents the number of business requirements identified as Functional and Non-Functional requirements.

| Financial Module | Functional | Non Functional | Total |
|---|---|---|---|
| Accounts Payable | 65 | 17 | 82 |
| Accounts Receivables | 54 | 11 | 65 |
| Billing | 58 | 12 | 70 |
| Cash Management | 12 | 1 | 13 |
| Commitment Control | 17 | 8 | 25 |
| Contracts | 1 | | 1 |
| Customer Contracts | 9 | | 9 |
| eProcurement | 39 | 1 | 40 |
| General Ledger | 45 | 17 | 62 |
| Grants | 46 | 19 | 65 |
| Multiple | 8 | 10 | 18 |
| Project Costing | 37 | 9 | 46 |
| Purchasing | 70 | 4 | 74 |
| Grand Total | 461 | 109 | 570 |

Functional Requirements: The following table represents the functional requirements for each module, classified further by functional category:

Functional category columns, in order: Approval Workflow, Attachments, Audit, Conversion, Format, Grants, Interface, Invoicing, Job Scheduler, Online, Process, Reporting, Security, Setup, Sub System transactions, Validation, Workflow.

| Financial Modules | Counts in category-column order (empty cells omitted) | Grand Total |
|---|---|---|
| Accounts Payable | 5, 12, 35, 6, 26 | 84 |
| Accounts Receivables | 1, 2, 1, 1, 4, 46, 3, 10, 1, 4, 1, 1 | 75 |
| Billing | 2, 1, 1, 15, 9, 1, 28, 11, 1, 10, 1 | 80 |
| Cash Management | 1, 1, 10, 1 | 13 |
| Commitment Control | 1, 8, 1, 13, 4, 1 | 28 |
| Contracts | 2 | 2 |
| Customer Contracts | 9 | 9 |
| eProcurement | 1, 31, 1, 1, 14 | 48 |
| General Ledger | 1, 1, 4, 13, 2, 22, 2, 17, 15, 1 | 78 |
| Grants | 3, 1, 42, 19, 1 | 66 |
| Multiple | 4, 3, 3, 9 | 19 |
| Project Costing | 6, 4, 25, 7, 3, 1, 1 | 47 |
| Purchasing | 3, 58, 14, 1, 3, 3 | 82 |
| Grand Total (one value per category) | 5, 5, 4, 34, 15, 10, 35, 5, 3, 321, 19, 113, 3, 34, 3, 4, 18 | 631 |

Non-Functional Testing:

Performance testing will be conducted to validate the effective system performance of key, pre-identified batch and online processes. For the identified batch test scenarios, an appropriate benchmark will be established to measure acceptable full-process “wall clock” processing times.

Online performance test scenarios will comprise up to two online processes per PeopleSoft module. As with the batch performance testing, individual benchmarks will be established for each online test scenario, and the actual “wall clock” execution times will be discussed to confirm that they fit within a reasonable/acceptable time window.

Both batch and online performance testing will be conducted with an appropriate level of concurrent users required to adequately test the robustness of the processes. Five hundred “signed on” users will be utilized as the concurrent test load for each of the online performance scenarios and for those batch process test scenarios that are appropriately tested with an active online system.

If needed, the system will be performance tuned within the limits of the hardware environment and, if appropriate, performance tests will be re-executed and the results evaluated.

Performance testing is planned for 10 business days (4/13/2015 – 4/24/2015).

1. Performance Testing: Online testing will focus on key business processes across the functional modules.

On-line Test

PO:

1. Create Requisitions

2. Requisition Approval

AP:

1. Enter Voucher Information

2. Paycycle

Grants:

1. Proposal Creation

2. Entering Cost Share

Project Costing:

1. Cost Collection Process

Contracts:

1. Award Modification

2. Assigning Bill Plan

Billing:

1. Entering online Billing

2. Billing Worksheet

AR:

1. Collection Workbench

2. Payment Application

GL:

1. Journal Entry

2. Allocation Setup

KK:

1. Budget Journal

2. Budget Definition

Batch Objective:

o AR Update

o Paycycle

o Journal Generator

o Journal Edit and Post

o PO Dispatch

o Invoice Process and Finalization

o Award Generation

o Finalize Budget

o Automatic Bank Reconciliation

o Payment Predictor

o Voucher and Payment Posting

o Voucher Build

o Cost Collection Process

o Single Action Invoice

Documentation Testing

Scope Exclusions:

1) Functional Testing – System Integration & User Acceptance Testing

a) Resolution of defects in 3rd party systems or software other than the PeopleSoft application

b) Any testing outside of the PeopleSoft application and other than the RICEW components developed as part of the engagement

2) Non-Functional – Performance & Conversion Testing

a) Performance benchmarking of the PeopleSoft vanilla version

b) Other non-functional tests such as failover, disaster recovery, and compatibility

c) Test data setup for Performance Testing

d) Performance Testing of 3rd party applications/interfaces

e) Testing & fixing of any bad data identified in the legacy systems during conversions

f) Veracity and validity of any migrated data – both master and transaction data

3) Unit Testing – Unit testing is not part of the SIT phase. Unit testing will be conducted by Cognizant’s Development Team prior to the SIT phase.

4) User Acceptance Testing – User Acceptance Testing should be performed by MSU during the UAT phase with the support of CTS.

5) Phase 2 Modules – The modules (eSupplier Connection, eSettlement, Strategic Sourcing, Asset Management, Travel & Expenses (including Mobile Integration)) are not in scope for Phase 1 of the project.

6) Requirements Not Applicable: The system requirements that are not applicable or not in scope will not be part of SIT testing. Refer to the requirements marked Not Applicable in the RTM.

Test Planning

1.5 Schedule

| Milestone Task | Completion Date | Responsibility |
|---|---|---|
| Submit Test Plan Document | 12/5/2014 | Cognizant Testing Team |
| Review Test Plan | Appendix G (SOW) | MSU |
| Submit Test Cases including Test Steps and Data Sheets | 2/13/2015 | Cognizant Testing Team |
| Submit Test Summary Report Template | 2/13/2015 | Cognizant Testing Team |
| Review Test Cases and Test Summary Report | Appendix G (SOW) | MSU |
| SIT1 Test log with Defect & resolution details | 3/13/2015 | Cognizant Testing Team |
| Review the SIT1 Test Log | Appendix G (SOW) | MSU |
| SIT2 Test log with Defect & resolution details | 4/10/2015 | Cognizant Testing Team |
| Review the SIT2 Test Log | Appendix G (SOW) | MSU |
| UAT Test log with Defect & resolution details | 6/12/2015 | MSU |

1.6 Role Assignments

Refer to the Roles and Responsibilities section in the Test Strategy Document.

1.7 Test Environment

System & Integration tests will be performed in a separate Test environment. Testing in this environment is more tightly managed and controlled. Objects and configurations being introduced or migrated to the Test environment are under strict configuration and change control. Configurations and custom code versions should be documented and tracked.

SIT Environment preparation

DB Refresh from Gold Config Environment

Migrate Code Components from Development Instance

Convert Data from Source Systems (Mock Conversion)

Create User Profiles as Required

Take a DB Backup of SIT Instance

1.8 Test Data

Configuration Data: All configuration values should be configured and carried over to the SIT environment. This task is carried out by the Cognizant Development/Functional Team. All the configuration data values should be recorded in the configuration workbook for each module. The MSU/Cognizant Conversion Team should provide critical data sets for testing purposes during the SIT phase. The data values should be captured in the test data sheets provided by Cognizant’s Testing Team.

Transactional data: The transactional data should be converted and tested as part of the conversion strategy. However, identified data sets will be tested against the conversion requirements by the Cognizant Testing Team. The data sets should be identified by MSU.

1.9 Special Testing Considerations

Not known at this time

2.0 Resources Planning

2.1.1 Hardware/Software Environment

Attached is the test environment system that will be used for System & Integration Testing.

2.1.2 Other Resource Requirements

Not known at this time

3.0 Test Report

Refer to Section 1.3.4 for the Test Report.

4.0 Assumptions, Dependencies and Constraints

Assumptions:

1) Unit testing will be successfully completed and the test results will be recorded before the start of System Integration Testing.

2) SMEs are available to clarify the questions raised by testing team.

3) SIT will be performed in a dedicated test environment

4) MSU will review and provide feedback on the test cases (including the steps and data sheets) document before the SIT phase starts

5) Cognizant Testing Team will be responsible for System & Integration testing (SIT). Cognizant will share testing results, as well as a report showing all defects fixed to confirm readiness for UAT

6) Cognizant will log any delivered bugs/defects in the PeopleSoft Financials 9.2/Tools 8.53 product with Oracle Support and will provide the required information. However, the turnaround time by Oracle will be governed by the support agreement between MSU and Oracle.

7) MSU will be responsible for preparation of the test plan, test cases, and scenarios for User Acceptance Testing (UAT). Cognizant will provide the necessary support during the UAT phase.

8) Cognizant’s Excel based tool called TEMEX will be used for Test management and Defect Management purposes.

9) Accuracy and completeness of any existing data that will be migrated into PeopleSoft system will not be Cognizant Testing Team’s responsibility.

10) The test observation documents and defect logs will be stored in SharePoint.

Dependencies:

1) Config/Master Test Data:

a. MSU/ Cognizant Conversion Team should provide critical data sets for testing the Master Data values during the SIT phase.

b. The Cognizant Functional Team should provide the config workbook for each module to support testing of the Config/Setup values during the SIT phase.

c. The data values should be captured in the test data sheets provided by Cognizant’s Testing Team.

d. Without this data the configuration and master data requirements will not be tested.

2) Transactional Data:

a. The MSU/Conversion Team should provide data sets for the critical features that need to be tested during the functional testing cycle.

b. The conversion data values should be captured in the test data sheets provided by Cognizant’s Testing Team. The configuration and master data requirements require this data to execute related testing.

3) Security: The Security Matrix should be complete and roles and permission lists should be defined in the SIT environment.

5.0 Glossary & References

5.1 Glossary

N/A

5.2 Reference

| Sr. No | Documents | Version Number |
|---|---|---|
| 1 | Requirement Specification Document | MSU-OM PSFT FSCM Requirements Traceability_v1.22.xlsx |
| 2 | Test Cases mapping to Requirements | MSU-OM PSFT FSCM Initial Requirements Traceability_TestCases_Mapping_V1.1.xls; MSU-OM PSFT FSCM Initial Requirements Traceability_TestCases_Mapping_V1.0.xlsx |

6.0 Change Log

| Version Number | Changes made |
|---|---|
| V1.0 | 10/29/2014: Initial version prepared by Venkatesh Pacheneela |
| V1.1 | 12/22/2014: Responded to feedback from MSU |
| V1.2 | |

| Page no | Changed by | Effective date | Changes effected |
|---|---|---|---|