CloudShare: Guaranteed Application Performance on Idle Data Center Resources

D. Anderson*, D. Kondo†, A. Legrand†

* SSL, UC Berkeley † CNRS - Inria - Grenoble

Berkeley – Inria – Stanford Workshop 2013

A. Legrand (CNRS) INRIA-MESCAL CloudShare Associated Team 1 / 23

Outline

1 CloudShare Associated Team
    Cloud Computing
    Volunteer Computing
    Associated Team

2 Non-Cooperative Scheduling Considered Harmful in Collaborative Volunteer Computing Environments
    BOINC in a Nutshell
    Impact of Server Configuration
    Game Theoretic Point of View
    Conclusion

1 CloudShare Associated Team

Why Would Business Need Distributed Computing Systems?

Originally, little need for performance

- Business computations seldom extend beyond ordinary rational arithmetic (except when science is involved in the business). Mostly desktop usage
- Computer systems are distributed iff the company is (interconnect business units)

And then came the Internet

- Some companies relying on the Internet emerged (eBay, Amazon, Google)
- Computers naturally play a central role in their business plan
- Cannot afford to lose clients ⇒ High Availability Computing
- But load varies a lot ⇒ Servers dimensioned for flash crowds

[Figure: user requests over time, following a day/night cycle; when requests exceed server capacity, clients are lost]

Amazon idea

- Data centers often 85% idle
- Rent unused power to others!
- Computers better amortized. Buy bigger ones, lose no client
- Infrastructure as a Service (IaaS)

Here Comes the Cloud

Client Incentives

- Complexity of infrastructure management is hidden from users; the IT maintenance burden is assumed by external specialists
- Pay only for the power used: rent a server for 1h, send computations to the cloud, enjoy. This is called Elastic Computing
- The need thus created proved very deep: everyone wants it now
- Clients even want to rent OS+apps (PaaS) or software (SaaS)

The Data Centers Growth

- Scale allows cost cutting, as always (the motivation for big DCs already existed)
- Clouds remove the waste due to over-dimensioning

⇒ Corporate data centers become as big as scientific supercomputers!

[Figure: Google data center]

It’s not that sunny: Cloud infrastructures are not that easy and transparent to use (virtualization and co-location overhead, unexpected preemption of spot instances, unavailability) and can quickly prove expensive

Volunteer Computing in a Nutshell

Most of the world’s computing power is distributed across the hundreds of millions of Internet hosts on residential broadband networks

Volunteer computing: harnessing the free collective computational and storage resources of desktop PCs throughout the Internet

- Cooperation ⇒ one of the largest and most powerful distributed computing systems on the planet
- Volunteers donate their unused CPU cycles to scientific/geeky/humanitarian projects
- Complex client and server scheduling mechanisms to handle practical considerations (e.g., heterogeneity, volatility, volunteer satisfaction)
- Understanding the behavior of such architectures is non-trivial

BOINC: the most popular VC infrastructure

The Berkeley Open Infrastructure for Network Computing is the most popular VC infrastructure today:

- 50 projects: SETI@home, WCG, Einstein@home, ClimatePrediction.net, ...
- 596,000 hosts, 9.2 PetaFlops (March 2013)
- Since 2000, generated 100+ scientific publications (Science, Nature)

BOINC has proved to scale to millions of unreliable resources

VC limitations: Unfortunately, the types of applications and services that can run over VC platforms are largely limited to trivially parallel ones

Fair and autonomous scheduling of billions of CPU-bound independent tasks (i.e., optimize throughput)

Extending to a wider context requires smart modeling and scheduling techniques

Associated Team Backgrounds

The cloud and VC contexts actually have a lot in common, but proposing good solutions requires the right blend of practice and theory.

Berkeley/Palo Alto: Lead development of the BOINC middleware for volunteer computing. Google data-center management. Invaluable knowledge of production systems.

- David Anderson, Walfredo Cirne

Inria MESCAL: Statistical performance and failure modeling, simulation, scheduling, game theory and resource management.

- Derrick Kondo, Arnaud Legrand, Bruno Gaujal, Jean-Marc Vincent, Olivier Richard
- Sheng Di, Bahman Javadi, B. Donnassolo, Lucas Schnorr

Inria associated team (2009-2014)

CloudComputing@Home: Create a virtually dedicated cloud from unreliable Internet resources

CloudShare: Guaranteed Application Performance on Idle Data Center Resources

Expected outcomes

Models and Algorithms

- Models of bursty workloads and resource usage
- Statistical and machine learning algorithms for predicting idleness in data centers
- Fair scheduling algorithms for guaranteed performance across unreliable resources

Traces and Software Tools

- Failure and Application Trace Archive
- Cloud and VC Simulator
- BOINC software adapted to data centers

In the following, I will present joint work (CCGrid’11) with B. Donnassolo and C. Geyer, from UFRGS, Porto Alegre, Brazil.

2 Non-Cooperative Scheduling Considered Harmful in Collaborative Volunteer Computing Environments

BOINC: the most popular VC infrastructure

The Berkeley Open Infrastructure for Network Computing is the most popular VC infrastructure today:

- 50 projects: SETI@home, WCG, Einstein@home, ClimatePrediction.net, ...
- 596,000 hosts, 9.2 PetaFlops (March 2013)
- Since 2000, generated 100+ scientific publications (Science, Nature)

BOINC has proved to scale to millions of unreliable resources

Unfortunately, the types of applications and services that can run over VC platforms are largely limited to trivially parallel ones

Fair and autonomous scheduling of billions of CPU-bound independent tasks (i.e., optimize throughput)

Possible research direction: Fair optimization of the response time of BoTs (bags of tasks)

The BOINC Server in a Nutshell

Each project sets up its own server, which uses pragmatic scheduling mechanisms to handle:

Volatility and Heterogeneity

- The server waits for clients to contact it
- Upon a work request, the server selects a subset of tasks and assigns them a soft deadline
- If a task is not returned before its deadline, it is considered lost and may be resubmitted to another client

Rewarding Volunteers

- Clients claim credits based on a benchmark
- Servers reward the minimum of the claimed credits for correct results
- Volunteers are ranked based on their contribution

Correctness (over-clocking, unstable numerical applications, malicious participants)

- Majority voting
- Limited replication, homogeneous redundancy, black hole
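
The server-side rules above (soft deadlines, majority voting, minimum claimed credit) can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not BOINC's actual code: the `Result` structure, the function names `is_lost` and `validate`, and the `quorum` parameter are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Result:
    """One returned result for a task (hypothetical structure)."""
    host: str
    output: str            # canonical form of the computed result
    claimed_credit: float  # credit claimed by the client from its benchmark


def is_lost(sent_at: float, deadline: float, now: float) -> bool:
    """A task not returned before its soft deadline is considered lost."""
    return now > sent_at + deadline


def validate(results, quorum=2):
    """Majority voting over the replicas of one task.

    The most frequent output is accepted if it is held by a strict majority
    of at least `quorum` results. Every agreeing host is then granted the
    minimum credit claimed among the agreeing results.
    Returns (output, {host: credit}), or None if there is no agreement yet.
    """
    if not results:
        return None
    output, votes = Counter(r.output for r in results).most_common(1)[0]
    if votes < quorum or 2 * votes <= len(results):
        return None  # the server may send another replica to a new client
    agreeing = [r for r in results if r.output == output]
    granted = min(r.claimed_credit for r in agreeing)
    return output, {r.host: granted for r in agreeing}
```

Granting the minimum claim removes the incentive to inflate benchmark scores, since an exaggerated claim is simply ignored whenever an honest replica agrees on the result.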

The BOINC Client in a Nutshell

Each volunteer may register to many projects and define resource shares

Fairness: Respect resource shares and variety ⇒ Fair Sharing...

Satisfy deadlines: Rough simulation and switch to Earliest Deadline First if needed

Avoid waste: Work as much as possible and do not start working on tasks whose deadline can obviously not be met

Consequence

Once a task has been downloaded, the client will try to complete it before its deadline

A project with shorter deadlines could thus obtain more resources than the volunteer wishes

Long-term fairness inhibits requesting tasks from overworked projects
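
The client policy described above can be approximated by the following Python sketch. It is a simplification written for illustration, not the BOINC client's real scheduler: the `Task` fields, the `used` accounting of CPU time already granted per project, and the constant-rate simulation are assumptions, and resource shares are assumed to be strictly positive.

```python
from dataclasses import dataclass


@dataclass
class Task:
    project: str
    remaining: float  # CPU time still needed, in seconds
    deadline: float   # absolute deadline, in seconds


def misses_deadline(tasks, shares, now=0.0):
    """Rough simulation: each project with pending tasks gets a constant CPU
    fraction proportional to its share and runs its tasks in deadline order."""
    projects = {t.project for t in tasks}
    total = sum(shares[p] for p in projects)
    for p in projects:
        clock = now
        own = sorted((t for t in tasks if t.project == p), key=lambda t: t.deadline)
        for t in own:
            clock += t.remaining * total / shares[p]
            if clock > t.deadline:
                return True
    return False


def pick_next(tasks, shares, used, now=0.0):
    """Choose the task to run next, or None if nothing is worth running."""
    # Avoid waste: skip tasks that cannot finish in time even with the full CPU.
    feasible = [t for t in tasks if now + t.remaining <= t.deadline]
    if not feasible:
        return None
    if misses_deadline(feasible, shares, now):
        # Deadline pressure: switch to Earliest Deadline First.
        return min(feasible, key=lambda t: t.deadline)
    # Otherwise serve the project that is furthest behind its resource share.
    behind = min({t.project for t in feasible}, key=lambda p: used[p] / shares[p])
    return min((t for t in feasible if t.project == behind), key=lambda t: t.deadline)
```

With equal shares and a project B that has already received far more CPU time than project A, fair sharing alone would pick A. As soon as B holds a task with a tight deadline, the simulation predicts a miss and the client runs B first, which is exactly how a short-deadline project can obtain more than its share.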

The Deadline Effect

- The slack is the ratio between the deadline and the actual running time of tasks [KAM07] (has to be > 7; the current median is about 60)

[Figure: waste (0 to 1) as a function of slack (0 to 60)]

BOINC is perfectly tailored for throughput optimization

But with such a slack, response time is really large
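
To make the effect of the slack concrete, here is a small worked example. The 2-hour running time is an assumed value chosen for illustration; only the median slack of about 60 comes from the slide.

```latex
\[
  \text{slack} = \frac{\text{deadline}}{\text{running time}}
  \quad\Longrightarrow\quad
  \text{deadline} = 60 \times 2\,\text{h} = 120\,\text{h} = 5\ \text{days}
\]
```

Under these assumptions a result may legitimately come back five days after the task was sent. This is harmless for throughput, but the last returning task then dominates the response time of a batch.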

GridBot

GridBot [SSGS09] (Technion - Israel Institute of Technology)

- Focus on response time of BoTs
- Uses both community resources (BOINC) and grid resources (Condor). Has also been connected with Amazon EC2
- Better than BOINC and than Condor for this kind of workload
- Tighter deadlines for reliable resources
- Replicate on reliable resources toward the end

[Figure: GridBot architecture. A work-dispatch server (system state DB, job queue, communication frontend, work dispatch logic, grid overlay constructor) fetches or generates jobs and updates results; execution clients on a community grid, a collaborative grid and a dedicated cluster fetch jobs from it, and grid submitters submit execution clients in response to resource requests]

FCFS scheduling on a desktop grid [KTB+04], a.k.a. the last finishing task issue

[Figure: completed jobs (thousands, 0 to 30) versus time (hours, 0 to 250) for unmodified BOINC and GridBot, with markers where the BOINC and GridBot executions end]

The deadline/boomerang effect

“Since a single client is connected to many such projects, those with shorter deadlines (less than three days) effectively require their jobs to be executed immediately, thus postponing the jobs of the other projects. This is considered selfish and leads to contributor migration and a bad project reputation, which together result in a significant decrease in throughput.”

Some (guru) volunteers noticed that tight-deadline jobs were causing significant delays in other projects and even deadline misses

- Are current mechanisms sufficient to isolate projects from each other?
- Response-time optimizing strategies (deadline, replication) need to be accepted by volunteers and other projects

A Game Theoretic Model of BOINC

Although every client tries to fairly and efficiently share its resources, the configuration decisions of each project may impact the performance of other projects

A Non-Cooperative Game: This can be modeled as a game between the projects

- Each project should choose its own scheduling strategy (deadline, replication, resource selection, ...) to optimize its own metric
- This is a long-term game
- The volunteers' opinions and feelings really matter

Methodology

- Really hard to study by deploying a real system
- Really hard to study from a purely theoretical point of view

We used SimGrid, a simplified but realistic modeling of BOINC, real traces from the FTA, and realistic application characteristics

Modeling the Whole System

A complex problem

A multi-parametric, multi-player, multi-objective setting

Volunteer $V_j$:

I peak performance (in MFLOP·s$^{-1}$)
I an availability trace
I project shares

Project $P_i$:

I $Obj_i$: objective function, either the throughput $\varrho_i$ or the average completion time of a batch $\alpha_i$
I $w_i$ [MFLOP·task$^{-1}$]: size of a task
I $b_i$ [task·batch$^{-1}$]: number of tasks within each batch
I $r_i$ [batch·day$^{-1}$]: input rate, i.e., the number of batches per day
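As a rough sketch of how these parameters fit together, the volunteers and projects can be written as plain records. The class and field names below are illustrative assumptions, not SimGrid or BOINC APIs. Multiplying the units gives a project's daily demand, $w_i \cdot b_i \cdot r_i$ in MFLOP·day$^{-1}$, which can be compared with what the volunteers can deliver.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Volunteer:
    peak_mflops: float                        # peak performance, MFLOP/s
    availability: List[Tuple[float, float]]   # (start, end) up-time intervals, in seconds
    shares: Dict[str, float]                  # project name -> resource share

    def available_seconds(self) -> float:
        return sum(end - start for start, end in self.availability)


@dataclass
class Project:
    name: str
    objective: str   # "throughput" or "batch_completion_time"
    w: float         # size of a task, MFLOP per task
    b: int           # number of tasks per batch
    r: float         # input rate, batches per day

    def daily_demand_mflop(self) -> float:
        # MFLOP/task * task/batch * batch/day = MFLOP/day
        return self.w * self.b * self.r


def offered_load(projects: List[Project], volunteers: List[Volunteer], days: float) -> float:
    """Ratio of requested work to deliverable work over `days` days.

    Assumes each availability trace covers exactly that period; values
    close to 1 correspond to an almost saturated system.
    """
    capacity = sum(v.peak_mflops * v.available_seconds() for v in volunteers)
    if capacity <= 0:
        raise ValueError("volunteers offer no capacity")
    demand = sum(p.daily_demand_mflop() for p in projects) * days
    return demand / capacity
```

For instance, one 1000 MFLOP·s$^{-1}$ volunteer that is up half of a day, serving a project with $w = 1.08 \times 10^6$, $b = 10$ and $r = 2$, gives an offered load of 0.5 (made-up numbers, for illustration only).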

Modeling the Whole System

Strategies $(\pi, \sigma, \tau, \gamma)_A$, $(\pi, \sigma, \tau, \gamma)_B$, $(\pi, \sigma, \tau, \gamma)_C$ ⇒ outcomes $(CE_A, W_A)$, $(CE_B, W_B)$, $(CE_C, W_C)$

Strategy $S_i$

I $\pi_i$: work send policy [KAM07] ($\pi_{cste=c}$ / saturation / EDF)
I $\sigma_i$: slack [KAM07] ($s \in [1, 10]$)
I $\tau_i$: connection interval [HAH09] (12 min to 30 h)
I $\gamma_i$: replication strategy [KCC07] ($r \in \{1, \dots, 8\}$)

The strategy $S_i$ of a project $P_i$ is thus a tuple $(\pi, \sigma, \tau, \gamma)$

Outcome

I Waste $W_i$
I Throughput $\varrho_i$ or response time $\alpha_i$, both expressed as a Cluster Equivalence $CE_i$ [KTB+04]
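A minimal sketch of the strategy tuple as a validated record, using the parameter ranges listed above. The class name, field names, and the policy labels (`"fixed"` standing for $\pi_{cste=c}$) are assumptions made for illustration.

```python
from dataclasses import dataclass
from itertools import product
from typing import List, Sequence

# Labels are hypothetical; "fixed" stands for the constant policy pi_{cste=c}.
SEND_POLICIES = ("fixed", "saturation", "edf")


@dataclass(frozen=True)
class Strategy:
    send_policy: str        # pi
    slack: float            # sigma, in [1, 10]
    conn_interval_h: float  # tau, from 0.2 h (12 min) to 30 h
    replication: int        # gamma, in {1, ..., 8}

    def __post_init__(self) -> None:
        if self.send_policy not in SEND_POLICIES:
            raise ValueError(f"unknown send policy: {self.send_policy}")
        if not 1.0 <= self.slack <= 10.0:
            raise ValueError("slack must lie in [1, 10]")
        if not 0.2 <= self.conn_interval_h <= 30.0:
            raise ValueError("connection interval must lie in [0.2 h, 30 h]")
        if not 1 <= self.replication <= 8:
            raise ValueError("replication must lie in {1, ..., 8}")


def strategy_grid(slacks: Sequence[float],
                  intervals_h: Sequence[float],
                  replications: Sequence[int]) -> List[Strategy]:
    """Enumerate every combination of the sampled parameter values."""
    return [Strategy(p, s, t, g)
            for p, s, t, g in product(SEND_POLICIES, slacks, intervals_h, replications)]
```

Sampling the strategy space on such a grid is one way to explore it when, as here, each evaluation requires a simulation run.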

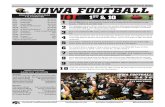

Sensitivity Analysis

I 1000 clients over 5 months
I 4 identical throughput projects with standard configuration
I 1 burst project adjusting its slack and connection interval parameters (fixed send policy and no replication)

We observe the impact on cluster equivalence and waste.

[Diagram: 1 burst project $(\pi, \sigma, \tau, \gamma)$ tuning its slack and connection interval; 4 continuous projects, each with the standard $(\pi, \sigma, \tau, \gamma)$]

[Figure: Cluster Equivalence (two panels) and Waste (two panels) plotted against Slack (0 to 10) and Connection Interval (h, 0 to 40)]

Similar studies at finer granularity and for other parameters show that:

I $\sigma$: Slack has a dramatic effect on the CE of all projects, but a reasonable trade-off can be found (around 1.1). Burst projects need to tune their slack carefully
I $\tau$: Connection interval has almost no influence and can be arbitrarily set to 1 h
I $\gamma$: Allowing a few replicas (around 2-3) improves CE and waste
I $\pi$: Among the different work send policies we tried, one leads to unacceptably high waste (around 50%) for a minor CE improvement and should thus be disregarded, as it wastes resources and could upset volunteers

Best Response Strategy and Nash Equilibrium

Definition: Nash Equilibrium.

$S$ is a Nash equilibrium for $(V, P)$ iff, for all $i$ and for any $S'_i$,

$$CE_i(V, P, S|_{S_i = S'_i}) \leq CE_i(V, P, S),$$

where $S|_{S_i = S'_i}$ denotes the strategy set in which $P_i$ uses strategy $S'_i$ and every other player keeps the same strategy as in $S$.

I A Nash equilibrium is a stable point for a best-response strategy
I A best-response strategy does not necessarily converge
I There may be no Nash equilibrium
I Nash equilibria are, in the general case, neither fair nor efficient
I Although they are not particularly desirable, they are well suited to modeling our situation
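The definition and the first three remarks can be checked on small finite games. The sketch below is a generic illustration, not the study's code: `payoff(i, profile)` plays the role of $CE_i$, strategies are plain integers, and the two payoff tables are textbook toy games (a prisoner's-dilemma-like game and matching pennies), not BOINC data.

```python
from itertools import product
from typing import Callable, Dict, List, Sequence, Tuple

Profile = Tuple[int, ...]                  # one strategy index per project
Payoff = Callable[[int, Profile], float]   # payoff(i, profile) stands for CE_i


def deviate(profile: Profile, i: int, s: int) -> Profile:
    """Profile in which player i plays s and everyone else is unchanged."""
    return profile[:i] + (s,) + profile[i + 1:]


def is_nash(profile: Profile, payoff: Payoff, strategies: Sequence[Sequence[int]]) -> bool:
    """True iff no player can gain by deviating unilaterally."""
    return all(payoff(i, deviate(profile, i, s)) <= payoff(i, profile)
               for i in range(len(profile))
               for s in strategies[i])


def pure_nash_equilibria(payoff: Payoff, strategies: Sequence[Sequence[int]]) -> List[Profile]:
    return [p for p in product(*strategies) if is_nash(p, payoff, strategies)]


def best_response_dynamics(start: Profile, payoff: Payoff,
                           strategies: Sequence[Sequence[int]],
                           max_rounds: int = 100) -> Tuple[Profile, bool]:
    """Round-robin best responses; returns (last profile, converged?)."""
    profile = start
    seen = {profile}
    for _ in range(max_rounds):
        for i in range(len(profile)):
            best = max(strategies[i], key=lambda s: payoff(i, deviate(profile, i, s)))
            if payoff(i, deviate(profile, i, best)) > payoff(i, profile):
                profile = deviate(profile, i, best)
        if is_nash(profile, payoff, strategies):
            return profile, True
        if profile in seen:       # deterministic updates: a repeat means a cycle
            return profile, False
        seen.add(profile)
    return profile, False


def table_payoff(table: Dict[Profile, Tuple[float, ...]]) -> Payoff:
    return lambda i, profile: table[profile][i]


STRATEGIES = [[0, 1], [0, 1]]
# Strategy 1 is the "selfish" choice: the only equilibrium (1, 1) is worse for both than (0, 0).
dilemma = table_payoff({(0, 0): (3, 3), (0, 1): (0, 4), (1, 0): (4, 0), (1, 1): (1, 1)})
# Matching pennies: no pure Nash equilibrium, so best responses cycle.
pennies = table_payoff({(0, 0): (1, -1), (0, 1): (-1, 1), (1, 0): (-1, 1), (1, 1): (1, -1)})
```

On the first table, best responses started from `(0, 0)` converge to `(1, 1)`, an equilibrium that is inefficient for both players. On the second table there is no pure equilibrium, and the dynamics are reported as cyclic.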

Utility Set Sampling and Nash Equilibrium

I 2 identical throughput projects with standard configuration
I 4 identical burst projects adjusting their slack (EDF send policy, replication=2)
I Almost saturated system

[Figure: CE as a function of Deadline (h), from 1 to 3.5, with curves for Consensus, Dissident Burst and Non-Dissident Bursts; the Nash Equilibrium is marked]

[Figure: Deadline - Tendency versus Deadline - Base (both from 1 to 3.5), showing a converging best-response trajectory and a cyclic best-response trajectory from arbitrary starting points; the Nash Equilibrium is marked]

[Figure: CE - Continuous versus CE - Burst, with points labeled by the burst deadline (h), from 1 to 3.5; the Nash Equilibrium (NE) and a 10% better configuration are marked]

Utility Set Sampling and Nash Equilibrium

I 1 throughput project with standard configuration
I 7 identical burst projects adjusting their slack (EDF send policy, replication=2)
I Almost saturated system

[Figure: CE - Continuous versus CE - Burst, with points labeled by the burst deadline (h), from 1 to 3.5; the Nash Equilibrium (NE) and a better configuration (10%, 7%) are marked]

Conclusion

Harmful non-cooperative optimization. Under high load and high pressure from burst projects, the current BOINC scheduling mechanism is unable to enforce fairness and project isolation. We found inefficient Nash equilibria:

I Efficient configurations seem rather unstable.
I Can we find worse than 10% inefficiency?
I Could there be Braess paradoxes?

Game theory provides nice tools to address such issues (correlated equilibria, pricing mechanisms, coalitions)

Fair Optimization of Bags of Tasks. A profound need: umbrella projects. Need to leverage volunteer, grid and cloud resources. Currently designing a fair multi-user scheduler for this context.

Evolution of the Associated Team

I Collaboration with Google on cloud load characterization is on hold (Derrick Kondo is on sabbatical in the Bay Area)
I Upcoming collaboration between B. Gaujal (Inria), R. Righter (UCB) and D. Anderson on reliable data storage in BOINC

BOINC workshop in September in Grenoble.

References

[HAH09] Eric Heien, David Anderson, and Kenichi Hagihara. Computing low latency batches with unreliable workers in volunteer computing environments. Journal of Grid Computing, 7:501–518, 2009.

[KAM07] Derrick Kondo, David P. Anderson, and John McLeod VII. Performance evaluation of scheduling policies for volunteer computing. In Proceedings of the 3rd IEEE International Conference on e-Science and Grid Computing (e-Science'07), Bangalore, India, Dec. 2007.

[KCC07] Derrick Kondo, Andrew A. Chien, and Henri Casanova. Scheduling task parallel applications for rapid application turnaround on enterprise desktop grids. Journal of Grid Computing, 5(4), 2007.

[KTB+04] Derrick Kondo, M. Taufer, C. Brooks, Henri Casanova, and Andrew A. Chien. Characterizing and Evaluating Desktop Grids: An Empirical Study. In Proc. of the Intl. Parallel and Distributed Processing Symp. (IPDPS), 2004.

[SSGS09] Mark Silberstein, Artyom Sharov, Dan Geiger, and Assaf Schuster. GridBot: execution of bags of tasks in multiple grids. In Proceedings of the Conference on High Performance Computing Networking, Storage and Analysis (SC '09), New York, NY, USA, 2009. ACM.