Chapter 7. Exercise 1 Mean X*:7.6,8.1,9.6,10.2,10.7,12.3,13.4,13.9,14.6,15.2 Here the percentile BS...


quentin-dalton -

Category

Documents

-

view

217 -

download

2

Transcript of Chapter 7. Exercise 1 Mean X*:7.6,8.1,9.6,10.2,10.7,12.3,13.4,13.9,14.6,15.2 Here the percentile BS...

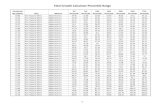

Chapter 7

Exercise 1

Mean X*: 7.6, 8.1, 9.6, 10.2, 10.7, 12.3, 13.4, 13.9, 14.6, 15.2

Here the percentile bootstrap method is applied, testing H0: μ = μ0 with μ0 = 8.5.

P is given by 2 × min(p*, 1 − p*), where p* is the proportion of bootstrap sample means greater than μ0, in this case 8.5. Eight of the ten bootstrap sample means (80%) are greater than 8.5, so p* = 0.8 and P is estimated at 2 × (1 − 0.8) = 0.4.

The middle 80% of the observed bootstrap sample means range from 8.1 to 14.6, so this is the 0.8 CI for the population mean.
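The arithmetic for Exercise 1 can be reproduced in a few lines of base R. This is a minimal sketch using the ten bootstrap means listed for the exercise; the variable names (`xstar`, `mu0`, `p_star`, `ci80`) are illustrative and not part of the original solution. With only ten bootstrap samples, the middle 80% is obtained by dropping the single smallest and single largest value.

```r
# Ten bootstrap sample means from Exercise 1
xstar <- c(7.6, 8.1, 9.6, 10.2, 10.7, 12.3, 13.4, 13.9, 14.6, 15.2)
mu0 <- 8.5                       # hypothesized value of the population mean

# Proportion of bootstrap means above the null value
p_star <- mean(xstar > mu0)

# Percentile bootstrap p-value: 2 * min(p*, 1 - p*)
p_value <- 2 * min(p_star, 1 - p_star)

# 0.8 CI: drop 10% of the bootstrap means from each tail
B <- length(xstar)
k <- round(0.10 * B)             # number of values dropped per tail
ci80 <- sort(xstar)[c(k + 1, B - k)]

print(p_value)
print(ci80)
```

In practice far more than ten bootstrap samples would be used; the tiny sample here only keeps the calculation easy to follow by hand.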

Exercise 2

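x=c(5,12,23,24,6,58,9,18,11,66,15,8)

out(x)
$out.val
[1] 58 66

trimci(x)
[1] 7.157829 22.842171

trimci(x, tr=0)
[1] 8.504757 33.995243

The distribution is skewed with outliers (58 and 66). These inflate the variance of the mean but not of the trimmed mean, so the CI for the mean (tr=0) is much longer than the CI for the 20% trimmed mean.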
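To see where the two intervals in Exercise 2 come from, the following base R sketch computes a Tukey-McLaughlin confidence interval for a trimmed mean from the Winsorized standard deviation. It is not the trimci source code; the function name `trim_ci` is hypothetical, and it assumes that floor(tr × n) observations are trimmed from each tail.

```r
# Tukey-McLaughlin confidence interval for a trimmed mean (base R sketch).
trim_ci <- function(x, tr = 0.2, alpha = 0.05) {
  n  <- length(x)
  g  <- floor(tr * n)              # observations trimmed from each tail
  xs <- sort(x)
  # Winsorize: pull the g smallest and g largest values in to the
  # nearest value that is not trimmed
  xw <- pmin(pmax(xs, xs[g + 1]), xs[n - g])
  # Standard error of the trimmed mean based on the Winsorized sd
  se <- sd(xw) / ((1 - 2 * tr) * sqrt(n))
  df <- n - 2 * g - 1
  mean(x, trim = tr) + c(-1, 1) * qt(1 - alpha / 2, df) * se
}

x <- c(5, 12, 23, 24, 6, 58, 9, 18, 11, 66, 15, 8)
print(trim_ci(x))            # 20% trimmed mean
print(trim_ci(x, tr = 0))    # ordinary mean (Student's t interval)
```

With tr = 0 nothing is Winsorized, so the two outliers enter the standard deviation in full, which is why that interval is so much wider. The two results should agree with the trimci intervals reported in the exercise.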

Exercise 3

x=c(5,12,23,24,6,58,9,18,11,66,15,8)

trimcibt(x,tr=0,side=F)
[1] "Taking bootstrap samples. Please wait."
[1] "NOTE: p.value is computed only when side=T"
$estimate
[1] 21.25

$ci
[1] 12.40284 52.46772

$test.stat
[1] 3.669678

trimci(x)
[1] 7.157829 22.842171

trimci(x, tr=0)
[1] 8.504757 33.995243

Exercise 4

The CI for the trimmed mean will be more accurate because skewness and outliers shift the actual distribution of T away from Student's t in a manner that changes its cumulative probabilities (and quantiles). Trimmed means combined with the bootstrap-t method (trimcibt) perform much better under skewness, and trimming removes outliers. The bootstrap method gives a good estimate of the actual sampling distribution of T.
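The idea of estimating the actual distribution of T by resampling can be sketched in base R. The function below is an illustrative equal-tailed bootstrap-t interval for the ordinary mean (no trimming); it is not the trimcibt implementation, the name `boot_t_ci` and the defaults (`B = 599`, `seed = 1`) are assumptions made for this sketch, and its quantile rule may differ from the one trimcibt uses, so the limits will not match the trimcibt output exactly.

```r
# Equal-tailed bootstrap-t confidence interval for the mean (base R sketch).
boot_t_ci <- function(x, B = 599, alpha = 0.05, seed = 1) {
  set.seed(seed)                   # fixed seed so the sketch is reproducible
  n    <- length(x)
  xbar <- mean(x)
  se   <- sd(x) / sqrt(n)
  # T* for each bootstrap sample, centred at the observed mean
  tstar <- replicate(B, {
    xb <- sample(x, n, replace = TRUE)
    (mean(xb) - xbar) / (sd(xb) / sqrt(n))
  })
  q <- quantile(tstar, c(alpha / 2, 1 - alpha / 2), names = FALSE)
  # Note the reversal: the upper quantile of T* gives the lower limit
  c(xbar - q[2] * se, xbar - q[1] * se)
}

x  <- c(5, 12, 23, 24, 6, 58, 9, 18, 11, 66, 15, 8)
ci <- boot_t_ci(x)
print(ci)
```

Because the quantiles of T* are estimated from the data rather than taken from Student's t, the interval is allowed to be asymmetric about the sample mean, which is what happens with skewed data such as these.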

Exercise 5

y=c(7.6,8.1,9.6,10.2,10.7,12.3,13.4,13.9,14.6,15.2)

trimcibt(y, side=F)
$estimate
[1] 11.68333

$ci
[1] 8.171324 15.754730

Exercise 6

x=c(5,12,23,24,6,58,9,18,11,66,15,8)

trimpb(x)
[1] "The p-value returned by the this function is based on the"
[1] "null value specified by the argument null.value, which defaults to 0"
[1] "Taking bootstrap samples. Please wait."
$ci
[1] 9.75 31.50

$p.value
[1] 0

Exercise 7

The percentile-t bootstrap will be more accurate in this case, based on simulation studies.

Exercise 8

a=c(2,4,6,7,8,9,7,10,12,15,8,9,13,19,5,2,100,200,300,400)

onesampb(a)
$ci
[1] 7.425758 19.806385

Exercise 9

a=c(2,4,6,7,8,9,7,10,12,15,8,9,13,19,5,2,100,200,300,400)

onesampb(a)
$ci
[1] 7.425758 19.806385

trimpb(a)
$ci
[1] 7.25000 63.33333

M-estimators remove outliers by design. In contrast, with n = 20 and 20% trimming, only the 4 largest values of each bootstrap sample are trimmed. Because sampling is with replacement, some bootstrap samples contain more than 4 outliers, which exceeds what 20% trimming can remove. For this reason, the CI is longer for trimmed means.

Exercise 10

trimpb(a,tr=.30)
$ci
[1] 7.125 35.500

The CI is shorter relative to 20% trimming. The amount of trimming is higher, so it can withstand a higher number of outliers sampled in the bootstrap procedure.

Exercise 11

trimpb(a,tr=.40)
[1] 7.0 14.5

CI is now even shorter because the trimming is high enough to handle almost all outliers that are sampled in the bootstrap procedure.
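The reasoning in Exercises 9 to 11 can be checked with a short calculation. Treating the four values 100, 200, 300 and 400 as the outliers (as the solutions do), the number of outliers in a bootstrap sample of size 20 follows a binomial distribution with success probability 4/20. The sketch below computes the probability that a bootstrap sample contains more outliers than are trimmed from the upper tail, assuming floor(tr × n) values are trimmed per tail; the variable names are illustrative.

```r
n     <- 20                      # sample size of the data set a
n_out <- 4                       # outliers: 100, 200, 300, 400
trims <- c(0.2, 0.3, 0.4)

# Number of observations trimmed from each tail for each amount of trimming
g <- floor(trims * n)

# Probability that a bootstrap sample holds more than g outliers, so that
# at least one outlier survives trimming and inflates the trimmed mean
p_exceed <- 1 - pbinom(g, size = n, prob = n_out / n)

result <- data.frame(tr = trims, trimmed_per_tail = g,
                     p_exceed = round(p_exceed, 3))
print(result)
```

The probabilities come out at roughly 0.37, 0.09 and 0.01 for 20%, 30% and 40% trimming, which is consistent with the confidence intervals getting shorter as the amount of trimming increases.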

Exercise 12

The bootstrap method for estimating standard errors can be highly inaccurate with respect to probability coverage in the case of the median when there are tied values in the data set.

Tied values can significantly alter the estimate of the median across different bootstrap samples.

Exercise 13

sint(a)
[1] 7.00000 14.52953

onesampb(a)
$ci
[1] 7.425758 19.806385

There are situations where the CI for the median is shorter than the CI for M-estimators, even though M-estimators remove outliers.

Exercise 14

Data from table 6.7, not 6.6.

x=c(34,49,49,44,66,48,49,39,54,57,39,65,43,43,44,42,71,40,41,38,42,77,40,38,43,42,36,55,57,57,41,66,69,38,49,51,45,141,133,76,44,40,56,50,75,44,181,45,61,15,23,42,61,146,144,89,71,83,49,43,68,57,60,56,63,136,49,57,64,43,71,38,74,84,75,64,48)

y=c(129,107,91,110,104,101,105,125,82,92,104,134,105,95,101,104,105,122,98,104,95,93,105,132,98,112,95,102,72,103,102,102,80,125,93,105,79,125,102,91,58,104,58,129,58,90,108,95,85,84,77,85,82,82,111,58,99,77,102,82,95,95,82,72,93,114,108,95,72,95,68,119,84,75,75,122,127)

lsfitci(x,y)
$intercept.ci
[1] 89.80599 110.16031

$slope.ci
           [,1]      [,2]
[1,] -0.3293914 0.1169401

Exercise 15

corb(x,y)
$r
[1] -0.03474317

$ci
[1] -0.3326596 0.2138606

The CI contains 0, so do not reject the hypothesis of a zero correlation.

Exercise 16

indt(x,y)
[1] "Taking bootstrap sample, please wait."
$dstat
[1] 26.20945

$p.value.d
[1] 0.016

The hypothesis of independence is rejected (p = 0.016), so the variables are dependent.

Exercise 17

A correlation of 0 does not imply independence. There are many situations in which there is an association between variables while r = 0; some pertain to curvature, heteroscedasticity, and bad leverage points.
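A small constructed example illustrates the curvature case. The data below are made up for illustration and are not from the exercise: y is completely determined by x, yet Pearson's correlation is zero because the relationship is symmetric about x = 0.

```r
# y depends on x exactly (a parabola), but the linear association is zero
x <- -3:3
y <- x^2

r <- cor(x, y)
print(r)
```

Knowing x tells us y exactly, so the variables are as dependent as they can be, yet r does not detect it because it measures only linear association.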

Exercises 18 and 19

lsfitci(x,y,xout=T)
$intercept.ci
[1] 104.4706 140.0141

$slope.ci
           [,1]        [,2]
[1,] -0.8733226 -0.07469801

After manual removal:

lsfitci(x,y)
$intercept.ci
[1] 104.3009 137.8797

$slope.ci
           [,1]       [,2]
[1,] -0.7979083 -0.1467203

pcorb(x,y)
$r
[1] -0.3868361

$ci
[1] -0.6403710 -0.1176394

Now we reject, whereas previously we did not.

Data from table 6.7, not 6.6.

Exercise 20

c=c(300,280,305,340,348,357,380,397,453,456,510,535,275,270,335,342,354,394,383,450,446,513,520,520)

d=c(32.75,28,30.75,29,27,31.20,27,27,23.50,21,21.5,22.8,30.75,27.25,31,26.50,23.50,22.70,25.80,27.80,21.50,22.50,20.60,21)

lsfitci(c,d)
$intercept.ci
[1] 36.11869 44.19982

$slope.ci
            [,1]        [,2]
[1,] -0.04761471 -0.02518437

Conventional methods can have accurate probability coverage in certain situations. There isn’t always a difference.
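For a side-by-side comparison, the conventional least-squares intervals can be computed with base R's `lm` and `confint`. This is a sketch that assumes, as in the call lsfitci(c,d), that the first vector is the predictor and the second the outcome; the names `c_x` and `d_y` are used here only to avoid masking R's `c()` function.

```r
# Data from Exercise 20 (c is the predictor, d the outcome)
c_x <- c(300, 280, 305, 340, 348, 357, 380, 397, 453, 456, 510, 535,
         275, 270, 335, 342, 354, 394, 383, 450, 446, 513, 520, 520)
d_y <- c(32.75, 28, 30.75, 29, 27, 31.20, 27, 27, 23.50, 21, 21.5, 22.8,
         30.75, 27.25, 31, 26.50, 23.50, 22.70, 25.80, 27.80, 21.50,
         22.50, 20.60, 21)

# Ordinary least squares fit and conventional 0.95 confidence intervals
fit <- lm(d_y ~ c_x)
conventional_ci <- confint(fit, level = 0.95)
print(conventional_ci)
```

The first row of the result is the interval for the intercept and the second is the interval for the slope, which can be set against the bootstrap intervals returned by lsfitci.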

Exercise 21

x=c(0.032,0.034,0.214,0.263,0.275,0.275,0.450,0.500,0.500,0.630,0.800,0.900,0.900,0.900,0.900,1.000,1.100,1.100,1.400,1.700,2.000,2.000,2.000,2.000)

y=c(170,290,-130,-70,-185,-220,200,290,270,200,300,-30,650,150,500,920,450,500,500,960,500,850,800,1090)

lsfitci(x,y)
$intercept.ci
[1] -191.9351 108.0508

$slope.ci
         [,1]    [,2]
[1,] 305.8974 642.884