CGAR: Strong Consistency without Synchronous Replication › Seminar Talks › retreat-2017 › Seo...

CGAR: Strong Consistency without Synchronous Replication

Seo Jin Park Advised by: John Ousterhout

● Improves update performance of storage systems with master-backup replication
§ Fast: updates complete before replication to backups
§ Safe: save RPC requests and retry them if the master crashes

● Two variants:
• CGAR-C: save RPC requests in the client library
• CGAR-W: save RPC requests in a different server (Witness)

● Performance Results
• RAMCloud: 0.5x latency, 4x throughput
• Redis: strongly consistent (cost: 12% latency increase)

Slide 2

Overview

Slide 3

CGAR’s Role in Platform Lab

[Diagram labels: Granular Computing Platform; Cluster Scheduling; Low-Latency RPC; Scalable Notifications; Thread/App Mgmt; Low-Latency Storage; Hardware Accelerators; CGAR]

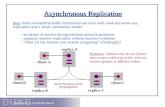

● Master-backup replication: clients send updates to a master, and the master replicates its state to backups.

● Consistency after crash
§ Responses for update operations must wait for backup replications (synchronous replication)
§ Must not reveal non-replicated values

Slide 4

Consistency in Master-Backup

[Diagram labels: client, Master, Backup; messages "write x = 1" and "ok"; Master and Backup each hold X: 1]

● Synchronous replication increases latency of updates

● Alternative: asynchronous replication
§ Non-replicated data can be lost
§ Sacrifice consistency if master crashes
§ Enables batched replication (more efficient)

Slide 5

Waiting for Replication is Not Cheap

[Figure: processing time for a RAMCloud WRITE operation across Client, Master, and three Backups; timing labels 4 µs, 8 µs, 3 µs; "Asynchronous update: 7 µs"]

RAMCloud uses synchronous replication

● Consistent even after crash

● Write: 14.3 µs vs. Read: 5 µs

● Focused on minimizing latency while staying consistent

● Polling wait for replication → write throughput is only 18% of read throughput

Slide 6

Consistency over Performance: RAMCloud

[Diagram labels: Client, Master(s), Backups; messages "Write", "Durable Log Write", "Ok"]

Redis uses asynchronous replication

● Backup to a file on disk

● Default: fsync every second
§ Loses data if the master crashes

● Option for strong consistency: fsync-always
§ On SSDs, 1~2 ms delay
§ Without fsync, SET takes 25 µs.

Slide 7

Performance over Consistency: Redis

[Diagram labels: Client, Server (Memory), Disk (Log File); messages "SET", "Fsync", "Ok"]

Can we have both consistency and performance?

● Asynchronous replication → performance

● For consistency
§ Save RPC requests in a 3rd-party server (Witness)
§ Replay the RPCs saved in the Witness if the master crashes

Slide 8

Consistency Guaranteed Asynchronous Replication

[Diagram labels: Client, Master, three Backups, Witness; arrow label "recover RPC"]

● Client multicasts RPC request to master and witness

● Witness vouches that the RPC will be retried if the master crashes
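The record operation can be sketched roughly as follows. This is a minimal illustration, not the talk's implementation: the `Witness`, `Master`, and `client_write` names are hypothetical, two sequential calls stand in for the multicast, and the slow path (waiting for replication when the witness declines) follows the "Must wait for replication" annotation shown on a later slide.

```python
from dataclasses import dataclass, field


@dataclass
class Witness:
    """Hypothetical witness: keeps saved RPC requests, at most one per key."""
    saved: dict = field(default_factory=dict)  # key -> (rpc_id, value)

    def record(self, rpc_id, key, value):
        # Decline if a request for this key is already being held.
        if key in self.saved:
            return False
        self.saved[key] = (rpc_id, value)
        return True


@dataclass
class Master:
    """Hypothetical master that replicates to backups asynchronously."""
    store: dict = field(default_factory=dict)
    unreplicated: list = field(default_factory=list)

    def write(self, rpc_id, key, value):
        self.store[key] = value
        self.unreplicated.append((rpc_id, key, value))
        return "ok"

    def sync(self):
        # Stand-in for flushing pending updates to the backups.
        self.unreplicated.clear()


def client_write(master, witness, rpc_id, key, value):
    """Send the request to both master and witness; report which path was taken."""
    accepted = witness.record(rpc_id, key, value)
    master.write(rpc_id, key, value)
    if accepted:
        return "fast"  # witness vouches for a retry, so no replication wait
    master.sync()      # otherwise fall back to waiting for replication
    return "slow"
```

With one master and one witness, a first write to `x` returns `"fast"`, while a second write to `x` issued before the first record is dropped returns `"slow"`.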

Slide 9

Witness Record Operation

[Diagram labels: client, Master (X: 1), Witness, Backup (X: 0); request "write x = 1"; "async" replication arrow; "(8MB)"]

● Step 1: recover from backups

● Step 2: retry update RPCs in witness
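A rough sketch of these two steps, under stated assumptions: `recover` and its parameters are hypothetical names, and the set of completed RPC ids is a simplified stand-in for the RIFL completion records that the talk relies on to avoid re-executing an RPC.

```python
def recover(backup_state, completed_rpc_ids, witness_records):
    """Rebuild a new master's state after a crash.

    backup_state:      dict of key -> value as held by a backup
    completed_rpc_ids: ids of RPCs whose effects the backup already reflects
                       (simplified stand-in for RIFL completion records)
    witness_records:   list of (rpc_id, key, value) saved by the witness
    """
    # Step 1: recover from backups.
    state = dict(backup_state)
    done = set(completed_rpc_ids)

    # Step 2: retry the update RPCs held by the witness.
    for rpc_id, key, value in witness_records:
        if rpc_id in done:
            continue  # already applied before the crash; do not re-execute
        state[key] = value
        done.add(rpc_id)
    return state


# Mirrors the slide: the backup holds X: 0, Y: 7 and the witness holds "write x = 1".
new_master = recover({"x": 0, "y": 7}, completed_rpc_ids=[], witness_records=[(1, "x", 1)])
print(new_master)  # {'x': 1, 'y': 7}
```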

Slide 10

Recovery Steps of CGAR-W

[Diagram labels: client, Master (X: 1, Y: 7), Backup (X: 0, Y: 7), New Master (X: 0, Y: 7, then X: 1 after retry), Witness holding "write x = 1"; arrows "replicate", "recover", "retry"]

● Witness may receive RPCs in a different order than the master
§ Solution: witness saves only 1 record per key
§ Concurrent operations on the same key? Witness rejects all but the first

● Retry may re-execute an RPC
§ Solution: use RIFL to ignore already-completed RPCs

● Update may depend on an unreplicated value in the master
§ Master cannot assume the witness saved the RPC request
§ Solution: delay the update if the current value is not yet replicated
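The third solution (delaying an update whose current value is not yet replicated) might look like the following on the master side. This is only a sketch: the `Master` class, its `dirty` set, and the `sync_count` counter are hypothetical, and an in-memory dict stands in for the backups.

```python
class Master:
    """Hypothetical master that tracks which keys hold unreplicated values."""

    def __init__(self):
        self.store = {}
        self.dirty = set()   # keys whose current value is not on backups yet
        self.backup = {}     # stand-in for backup state
        self.sync_count = 0  # how many updates had to wait for replication

    def replicate(self):
        # Stand-in for one batched replication to the backups.
        for key in self.dirty:
            self.backup[key] = self.store[key]
        self.dirty.clear()

    def update(self, key, value):
        if key in self.dirty:
            # The current value exists only on this master, and the master
            # cannot assume a witness saved the request that produced it.
            # Replicate first so the new update never builds on a value
            # that could be lost in a crash.
            self.replicate()
            self.sync_count += 1
        self.store[key] = value
        self.dirty.add(key)
        return "ok"
```

Updates to distinct keys proceed without waiting, while back-to-back updates to the same key force one replication in between.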

Slide 11

Challenges in Using Witness for Recovery

Slide 12

Example: RPCs in a Different Order

[Diagram labels: Client Red, Client Blue, Master, Backup, Witness; requests "write x = 1" and "write x = 2"; stored value 1]

Slide 13

Example: RPCs in a Different Order

[Diagram labels: Client Red, Client Blue, Master, Backup, Witness; requests "write x = 1" and "write x = 2"; reply "ok"; stored values 1 and 2; annotations "Must wait for replication" and "Can complete as soon as master returns"]

● Witness must drop a record before accepting a new one with the same key
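A minimal sketch of this garbage-collection rule, assuming a hypothetical `Witness` class with `record` and `drop` methods; the integer `rpc_id` stands in for the RPC ids assigned by RIFL that the slide mentions.

```python
class Witness:
    """Hypothetical witness holding at most one saved request per key."""

    def __init__(self):
        self.saved = {}  # key -> (rpc_id, value)

    def record(self, rpc_id, key, value):
        if key in self.saved:
            return False  # an older request for this key is still held
        self.saved[key] = (rpc_id, value)
        return True

    def drop(self, rpc_id, key):
        # Called once the update is replicated to backups. Drop the record
        # only if it is the one named by rpc_id, so that a stale drop
        # cannot remove a newer request for the same key.
        held = self.saved.get(key)
        if held is not None and held[0] == rpc_id:
            del self.saved[key]


w = Witness()
print(w.record(1, "x", 1))  # True: first request for x is saved
print(w.record(2, "x", 2))  # False: "write x = 1" is still held
w.drop(1, "x")              # master has replicated "write x = 1"
print(w.record(2, "x", 2))  # True: "write x = 2" is now accepted
```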

Slide 14

Garbage Collection

[Diagram labels: client, Master (X: 1), Backup (X: 1), Witness holding "write x = 1"; "async" replication; Drop "write x = 1" [use RPC ids assigned by RIFL]; client "write x = 2" is then accepted]

● A system can use multiple witnesses per master
§ Higher availability (recovery can use any witness)
§ To use async update, all witnesses must accept
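The two rules can be sketched as below. The function names are hypothetical. Because a fast update requires every witness to accept, each single witness holds every request that completed on the fast path, which is why recovery can use any one of them.

```python
def complete_update(witness_accepts):
    """witness_accepts: list of booleans, one reply per witness."""
    # Fast path only when there is at least one witness and every witness
    # saved the request; otherwise the client waits for replication.
    if len(witness_accepts) > 0 and all(witness_accepts):
        return "fast"
    return "wait_for_replication"


def choose_recovery_witness(witness_alive):
    """Return the index of any reachable witness, or None if none is reachable."""
    for index, alive in enumerate(witness_alive):
        if alive:
            return index
    return None
```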

Slide 15

Using Multiple Witnesses

[Diagram labels: client, Master (X: 1), Witnesses; request "write x = 1" sent to each witness]

Evaluation of CGAR

● RAMCloud implementation
• Performance improvement
• Latency reduction

● Redis implementation
• Supports a wide range of operations

Slide 16

● Writes are issued sequentially by a client to a master

Slide 17

RAMCloud’s Latency after CGAR

[Figure: RAMCloud write latency; curve labels "Median 6.6 μs, 7.1 μs" and "Median 14.3 μs"]

● Batching replication improved throughput

Slide 18

RAMCloud’s Throughput after CGAR

[Figure: RAMCloud write throughput; data labels 184, 702, and 725]

● SET: write to key-value store

● HMSET: write to a member of a hashmap

● INCR: increment an integer counter

Slide 19

Making Redis Consistent with Small Cost

● Fast: updates don’t wait for replication

● Consistent: CGAR saves RPC requests in a witness; if the server crashes, the saved RPCs are retried to recover

● High throughput: replication can be batched

Slide 20

Conclusion

Slide 21

Questions

● YCSB-A: Zipfian distribution (1M objects, p = 0.99)

Slide 25

Latency under Skewed Workloads

● Delay replication RPC’s completion time

Slide 26

CGAR Decoupled Replication from Update