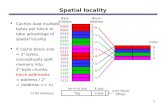

Caches Where is a block placed in a cache? –Three possible answers three different types...


Caches
• Where is a block placed in a cache?
  – Three possible answers, three different types
    • Anywhere: fully associative
    • Only into one block: direct mapped
    • Into a subset of blocks: set associative

How is a block found?
• Cache has an address tag for each block
• Tags are checked in parallel for a match
• Also has a valid bit
• Processor address: Block Address (Tag, Index) followed by Block Offset
  – Tag: identifies block
  – Index: identifies set
  – Block Offset: identifies data in block
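The address split above can be sketched in a few lines. This is a minimal illustration, not a description of any particular hardware: the function name `split_address` and the sample address are made up, and the geometry (64kB, 64-byte blocks, 2-way) is borrowed from the Alpha 21264 data cache slide later in this deck. It assumes block size and set count are powers of two.

```python
def split_address(addr, cache_bytes, block_bytes, ways):
    """Split a byte address into (tag, index, offset) fields."""
    num_sets = cache_bytes // (block_bytes * ways)
    offset_bits = block_bytes.bit_length() - 1   # log2 of the block size
    index_bits = num_sets.bit_length() - 1       # log2 of the number of sets
    offset = addr & (block_bytes - 1)            # identifies data in block
    index = (addr >> offset_bits) & (num_sets - 1)   # identifies set
    tag = addr >> (offset_bits + index_bits)     # identifies block
    return tag, index, offset

# 64kB, 64-byte blocks, 2-way: 512 sets, 6 offset bits, 9 index bits
tag, index, offset = split_address(0x12345678, 64 * 1024, 64, 2)
print(hex(tag), hex(index), hex(offset))
```

A direct-mapped cache is the special case `ways=1`; a fully associative cache has a single set, so the index field disappears and the tag covers the whole block address.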

Which block should be replaced on a miss?
• Direct mapped:
  – Simple (there can only be one!)
• Associative caches:
  – Choice involved
  – Three techniques
    • Random
    • Least-recently used (LRU)
      – Often only approximated
    • FIFO (approximates LRU)
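To make the three techniques concrete, here is a small sketch that counts misses in a single cache set under each policy. The function `count_misses`, the policy names and the reference string are hypothetical and chosen only for illustration. Real hardware usually approximates LRU rather than tracking exact order.

```python
import random
from collections import OrderedDict

def count_misses(refs, ways, policy, seed=0):
    """Count misses for block references that all map to one cache set."""
    rng = random.Random(seed)
    resident = OrderedDict()              # front entry is the next LRU/FIFO victim
    misses = 0
    for block in refs:
        if block in resident:
            if policy == "lru":
                resident.move_to_end(block)   # a hit refreshes recency
            continue                          # FIFO and random ignore hits
        misses += 1
        if len(resident) == ways:
            if policy == "random":
                del resident[rng.choice(list(resident))]
            else:
                resident.popitem(last=False)  # evict the front entry
        resident[block] = True
    return misses

refs = [1, 2, 1, 3, 1, 4, 1, 5]
for policy in ("lru", "fifo", "random"):
    print(policy, count_misses(refs, ways=2, policy=policy))
```

On this reference string LRU keeps the frequently reused block 1 resident, while FIFO evicts it once because it was loaded first.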

Random vs LRU (16kB cache)
[Figure: miss rate (%) for LRU and random replacement in 2-way, 4-way and 8-way set-associative caches; y-axis from 0 to 6]

Random vs LRU (256kB cache)
[Figure: miss rate (%) for LRU and random replacement in 2-way, 4-way and 8-way set-associative caches; y-axis from 0 to 1.2]

What happens on a write?
• Reads predominate
  – Instruction fetches, more loads than stores
  – MIPS instruction mix:
    • 10% stores
    • 37% loads
• Writes: 7% of memory traffic, 21% of data traffic
• Amdahl’s Law: We can’t ignore them!
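The 7% and 21% figures follow from the instruction mix if each instruction is assumed to cause exactly one instruction fetch. That assumption is not stated on the slide. A quick check:

```python
stores, loads = 10, 37     # per 100 instructions, from the slide
fetches = 100              # assumption: one instruction fetch per instruction

write_share_all = stores / (fetches + loads + stores)   # share of all memory traffic
write_share_data = stores / (loads + stores)            # share of data traffic only

print(f"{write_share_all:.1%} of all traffic, {write_share_data:.1%} of data traffic")
```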

Write Strategy
• Must complete checking tags before starting to write
  – Read can sometimes proceed safely while tags are checked
• Must modify only part of the block
  – Reads can read more than is required

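Write Strategy
• Two main approaches
• Write through
  – [Diagram: CPU writes to the cache and to main memory]
• Write back
  – [Diagram: CPU writes to the cache only; main memory is not updated at the time of the write]
  – Dirty bit marks blocks that have been modified in the cache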

Advantages
• Write back
  – Writes occur at cache speeds
  – Only one memory access after multiple writes
    • Lower memory bandwidth
• Write through
  – Efficient read misses
  – Simple implementation
  – Memory and cache are consistent
Good for multiprocessors!
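The bandwidth difference between the two strategies can be seen in a toy model. The sketch below assumes a cache that holds a single block and allocates on a write miss. Both are simplifications made only for this example, and `memory_writes` is a hypothetical helper.

```python
def memory_writes(stores, policy):
    """Count main-memory writes for a stream of stores to block numbers."""
    cached, dirty, writes = None, False, 0
    for block in stores:
        if block != cached:                   # write miss: replace the cached block
            if policy == "write_back" and dirty:
                writes += 1                   # evicted dirty block is written back
            cached, dirty = block, False
        if policy == "write_through":
            writes += 1                       # every store also updates memory
        else:
            dirty = True                      # only the cached copy is updated
    if policy == "write_back" and dirty:
        writes += 1                           # final flush of the last dirty block
    return writes

stores = [7, 7, 7, 7, 9, 9]
print(memory_writes(stores, "write_through"), memory_writes(stores, "write_back"))
```

Repeated stores to the same block cost one memory write each under write through, but only one write per eviction under write back.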

Optimising Write Through
• Reduce write stalls
  – Write buffer
    • Processor continues while write buffer updates memory

Handling Write Misses
• Write allocate
  – Fetch block into cache on miss
  – Good with write back
• No-write allocate
  – Memory is updated without loading block into cache
  – Good with write through

Alpha 21264 Data Cache
• Data cache
  – 64kB
  – 64-byte blocks
  – 2-way set associative
  – Write back
    • Write allocate
  – Victim Buffer (similar to Write Buffer)
    • 8 blocks

Alpha Data Cache Hit

Data Cache

• Uses FIFO (one bit per set)

• If victim buffer is full, CPU must stall

• Write miss:
  – Write allocate
  – Similar to read miss

Performance
• Hit
  – 3 cycles
    • Three cycle load delay
• Miss
  – 9ns to transfer data from next level (6 cycles @ 667MHz)

Alpha 21264 Instruction Cache
• Instruction cache
  – Separate from data cache
  – 64kB

Separate Caches
• Doubles available bandwidth
  – Prevents fetch unit stalling on data accesses
• Caches can be optimised separately
  – UltraSPARC
    • Data cache: 16kB, direct mapped, 2 × 16-byte sub-blocks
    • Instruction cache: 16kB, 2-way set associative, 32-byte blocks

Unified Caches
• Hold both data and instructions
• Miss rates for instructions are much lower than for data (an order of magnitude)
• Unified cache may have slightly better overall miss rate
  – 16kB data cache: 11.4%
  – 16kB instruction cache: 0.4%
  – Split caches combined: 3.24%
  – 32kB unified cache: 3.18%
• BUT: extra cycle stall for unified cache: average memory access time is slower (4.44 rather than 4.24 cycles)

5.3. Cache Performance
• Miss rate can be misleading
  – See last example!
• Better measure is average memory access time (AMAT)
AMAT = Hit time + Miss rate × Miss penalty
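As a rough check, the formula can be applied to the split versus unified comparison from the previous slide. Several inputs below are assumptions that do not appear in the slides: 74% of accesses are instruction fetches, the hit time is 1 cycle, the miss penalty is 100 cycles, and a data access to the unified cache costs one extra cycle. The function name `amat` is likewise just a label for this sketch.

```python
def amat(hit_time, miss_rate, miss_penalty):
    """Average memory access time, in the same units as hit_time."""
    return hit_time + miss_rate * miss_penalty

# Assumed values, not from the slides (see the paragraph above).
INSTR, DATA, PENALTY = 0.74, 0.26, 100

# Split caches: each access type sees its own miss rate.
split = INSTR * amat(1, 0.004, PENALTY) + DATA * amat(1, 0.114, PENALTY)

# Unified cache: one miss rate, but data accesses stall one extra cycle.
unified = INSTR * amat(1, 0.0318, PENALTY) + DATA * amat(2, 0.0318, PENALTY)

print(f"split: {split:.2f} cycles, unified: {unified:.2f} cycles")
```

Under these assumptions the unified figure matches the 4.44 cycles quoted on the slide. The split figure comes out slightly above the quoted 4.24, which is presumably because the miss rates used here are rounded. Either way the unified cache is slower despite its lower miss rate.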

Performance Issues
• Cache is a very significant factor
  – Example: CPU time increased by a factor of 4
• Particularly for:
  – Low CPI machines
  – Fast clock speeds
• Simplicity of direct mapped cache may give faster clock rate

Miss Penalty and Out of Order Execution

• Processor may be able to do useful work during cache miss

• Makes analysis of cache performance very difficult!

• Can have a significant impact

Improving Cache Performance
• Very important topic
  – 1600 papers in 6 years! (2nd Edition)
  – 5000 papers in 13 years! (3rd Edition)

Improving Cache Performance
• Four categories of optimisation:
  – Reduce miss rate
  – Reduce miss penalty
  – Reduce miss rate or miss penalty using parallelism
  – Reduce hit time
AMAT = Hit time + Miss rate × Miss penalty

5.4. Reducing Miss Penalty

• Traditionally, focus on miss rate

• But, cost of miss penalties is increasing dramatically

Multi-level Caches
• Two caches
  – A small, fast one close to the CPU
  – A big, slower one between the first cache and memory
[Diagram: CPU, L1 cache, L2 cache, main memory]

Second-level caches
• Complicates analysis
AMAT = Hit time_L1 + Miss rate_L1 × Miss penalty_L1
Miss penalty_L1 = Hit time_L2 + Miss rate_L2 × Miss penalty_L2

Analysis of two-level caches
• Local miss rate
  – Number of misses / number of accesses to this cache
  – Artificially high for L2 cache
• Global miss rate
  – Number of misses / number of accesses by CPU
  – Miss rate_L1 × Miss rate_L2 for the L2 cache
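A short worked example may help separate the local and global figures. The access counts and cycle times below are hypothetical, and the function names `miss_rates` and `two_level_amat` are made up for this sketch. The L1 miss penalty is taken to be the time to access L2, plus the L2 miss penalty for the fraction of accesses that also miss there.

```python
def miss_rates(cpu_accesses, l1_misses, l2_misses):
    """Return (L1 miss rate, L2 local miss rate, L2 global miss rate)."""
    l1 = l1_misses / cpu_accesses
    l2_local = l2_misses / l1_misses       # L2 only sees the L1 misses
    l2_global = l2_misses / cpu_accesses   # equals l1 * l2_local
    return l1, l2_local, l2_global

def two_level_amat(hit_l1, miss_rate_l1, hit_l2, local_miss_rate_l2, penalty_l2):
    """AMAT where the L1 miss penalty is itself an AMAT for the L2 cache."""
    miss_penalty_l1 = hit_l2 + local_miss_rate_l2 * penalty_l2
    return hit_l1 + miss_rate_l1 * miss_penalty_l1

# Hypothetical counts: 1000 CPU accesses, 40 miss in L1, 20 of those miss in L2.
l1, l2_local, l2_global = miss_rates(1000, 40, 20)
print(l1, l2_local, l2_global)
print(two_level_amat(hit_l1=1, miss_rate_l1=l1, hit_l2=10,
                     local_miss_rate_l2=l2_local, penalty_l2=100))
```

The L2 local miss rate (50%) looks alarming only because L1 has already filtered out the easy hits. The global rate (2%) is the figure that matters to the CPU.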

Design of two-level caches
• Second level cache should be large
  – Minimises local miss rate
  – Big blocks are more feasible (reducing miss rate)
• Multilevel inclusion property
  – All data in L1 is also in L2
  – Useful for multiprocessor consistency
    • Can be enforced at L2

Early restart & critical word first
• Minimise CPU waiting time
• Early restart
  – As soon as requested word arrives send it to CPU
• Critical word first
  – Request required word from memory first then fill rest of cache block
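Critical word first can be pictured as a reordering of the block fill. The sketch below assumes a wrap-around order, where the fill continues from the requested word and wraps to the start of the block. That is one plausible ordering, not something the slide specifies, and `critical_word_first_order` is a hypothetical name.

```python
def critical_word_first_order(words_per_block, requested_word):
    """Order in which the words of a block are fetched, requested word first."""
    return [(requested_word + i) % words_per_block
            for i in range(words_per_block)]

# The CPU asked for word 5 of an 8-word block.
print(critical_word_first_order(8, 5))
```

With early restart alone the fetch order would stay 0, 1, 2, and so on, and the CPU would resume once word 5 arrived. Critical word first moves that word to the front so the wait is as short as possible.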

Prioritising read misses
• Write-through caches normally make use of a write buffer
• Problem: may lead to RAW hazards
• Solution: stall read miss until write buffer empties
  – May be as much as a 50% increase in read miss penalty
• Better solution: check write buffer for conflict
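The conflict check can be sketched as follows. The `WriteBuffer` class and `read_miss` function are hypothetical and model memory as a plain dictionary. The point is only that a read miss consults the buffer before going to memory, so it never sees a stale value and never has to wait for the buffer to drain.

```python
class WriteBuffer:
    """Holds writes that have not yet reached main memory."""

    def __init__(self):
        self.pending = {}                 # address -> buffered value, oldest first

    def write(self, addr, value):
        self.pending[addr] = value

    def drain_one(self, memory):
        """Retire the oldest buffered write to memory, if there is one."""
        if self.pending:
            addr = next(iter(self.pending))
            memory[addr] = self.pending.pop(addr)

def read_miss(addr, memory, buffer):
    """Serve a read miss without waiting for the buffer to drain."""
    if addr in buffer.pending:            # conflict: newest value is still buffered
        return buffer.pending[addr]
    return memory[addr]                   # no conflict: read memory straight away

memory = {0x10: 1, 0x20: 2}
buf = WriteBuffer()
buf.write(0x10, 99)                       # store is buffered; memory still holds 1
print(read_miss(0x10, memory, buf), read_miss(0x20, memory, buf))
```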

Prioritising read misses
• Write-back caches
  – Long read misses due to writing back dirty block
• Solution:
  – Write buffer
  – Handle read miss then write back the dirty block
  – Need to do the same conflict checking (or stall for the write buffer to drain)

Merging Write Buffer
• Write buffers merge data being written to the same area of memory
• Benefits:
  – More efficient use of buffer
  – Reduces stalls due to write buffer being full
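A minimal sketch of write merging, assuming each buffer entry covers one aligned region of memory. The class name `MergingWriteBuffer`, the four-entry capacity and the 32-byte region size are illustrative choices, not values from the slides.

```python
class MergingWriteBuffer:
    """Write buffer whose entries each cover one aligned region of memory."""

    def __init__(self, entries=4, block_bytes=32):
        self.entries = entries
        self.block_bytes = block_bytes
        self.slots = {}                   # region base address -> {offset: value}

    def write(self, addr, value):
        """Buffer a write; return False if the CPU would have to stall."""
        base = addr - addr % self.block_bytes
        if base not in self.slots:
            if len(self.slots) == self.entries:
                return False              # buffer full and nothing to merge with
            self.slots[base] = {}
        self.slots[base][addr - base] = value   # merge into the matching entry
        return True

buf = MergingWriteBuffer(entries=2, block_bytes=32)
for addr in (100, 108, 116, 124):         # four writes to the same 32-byte region
    buf.write(addr, addr)
print(len(buf.slots))                     # entries in use after merging
```

Without merging, the four sequential writes would each take an entry and a two-entry buffer would already have stalled the CPU.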

Victim Caches
• Small ( 5 entries), fully associative cache on the refill path
  – Holds recently discarded blocks
    • Temporal locality
  – Experiment (4kB, direct-mapped cache):
    • 4-entry victim cache
    • Removed 20% to 95% of conflict misses
• AMD Athlon: 8 entry victim cache
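The effect on conflict misses can be illustrated with a toy simulation of a direct-mapped cache backed by a small victim cache. The function `simulate` and the reference pattern are hypothetical. The pattern is deliberately a worst case for direct mapping, with two blocks fighting over one line.

```python
from collections import OrderedDict

def simulate(refs, num_lines, victim_entries):
    """Return (misses to next level, victim-cache hits) for block references."""
    lines = [None] * num_lines            # direct mapped: one block per line
    victims = OrderedDict()               # fully associative, oldest entry first
    misses = victim_hits = 0
    for block in refs:
        idx = block % num_lines
        if lines[idx] == block:
            continue                      # ordinary cache hit
        if block in victims:
            del victims[block]            # swap the block back into the cache
            victim_hits += 1
        else:
            misses += 1                   # must be fetched from the next level
        evicted = lines[idx]
        lines[idx] = block
        if evicted is not None and victim_entries > 0:
            victims[evicted] = True       # keep the discarded block close by
            if len(victims) > victim_entries:
                victims.popitem(last=False)
    return misses, victim_hits

refs = [0, 8, 0, 8, 0, 8]                 # blocks 0 and 8 share line 0 of 8
print(simulate(refs, num_lines=8, victim_entries=0))
print(simulate(refs, num_lines=8, victim_entries=4))
```

Only the two compulsory misses reach the next level once the victim cache is present. The remaining conflict misses are served from the recently discarded blocks.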