Blue Gene: A Next Generation Supercomputer (BlueGene/P)


© 2007 IBM Corporation


Presented by Alan Gara (chief architect) representing the Blue Gene team.

Outline of Talk

• A brief sampling of applications on BlueGene/L.
• A detailed look at the next generation BlueGene (BlueGene/P)
• Future challenges motivated by a look at computing ~10 to 15 years out.
– Insight into future generation BlueGene/Q machine.

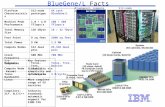

Blue Gene Roadmap (figure: performance versus year, 2004 to 2010)

• Blue Gene/L (PPC 440, 700 MHz): scalable to 360 TFlops. LA: 12/04, GA: 6/05. 1GB version available 1Q06.
• Blue Gene/P (PPC 450, 0.85 GHz): scalable to 1 PFlops. SoC design, Cu-08 (9SF) tech.
• Blue Gene/Q (Power architecture): scales to 10s of PFlops.

Providing unmatched sustained $$/perf and Watts/perf for scalable applications

The primary IBM system research vehicle which influences our more traditional PowerPC product line

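Top500 list (June 2007)

| # | Vendor | Installation | Rmax TFlops |
| --- | --- | --- | --- |
| 1 | IBM | BlueGene/L DOE/NNSA/LLNL | 280.6 |
| 2 | Cray | ORNL | 101.7 |
| 3 | Cray/Sandia | Sandia (Red Storm) | 101.4 |
| 4 | IBM | BlueGene/L at Watson | 91.2 |
| 5 | IBM | BlueGene/L at Stony Brook/BNL | 82.1 |
| 6 | IBM | ASC Purple LLNL | 75.76 |
| 7 | IBM | BlueGene/L at RPI | 73.0 |
| 8 | Dell | NCSA | 62.6 |
| 9 | IBM | Barcelona PowerPC blades | 62.6 |
| 10 | SGI | Leibniz Rechenzentrum | 56.5 |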


Formation of a Non-abrupt SiO2/Si interface correctly predicted “from scratch”

Car-Parrinello Molecular Dynamics (CPMD): Studying the effect of dopants on SiO2/Si boundaries

When nitrogen and hafnium are introduced during the simulation process, detrimental defects are unraveled

Simulations from first principles to understand the physics and chemistry of current technology and guide the design of next-generation materials

Characterization of materials currently under experimental test


Blue Brain

EPFL to simulate the neocortical column

Our understanding of the brain is limited by insufficient information and complexity

► Overcome limitations of neuroscientific experimentation

Inform experimental design and theory

Enable scientific discovery for understanding brain function and diseases

Finally feasible!!! (although by no means finished)

8096 processors (BG/L)

► 100,000 morphologically complex neurons in real-time

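Figure: the neocortex and a neocortical column. Neocortex labels: total area 1570 cm2, thickness ~3 mm, columns ~1 million, dimensions 50 cm and 35 cm. Column labels: 300 µm, 3 mm, ~10,000 neurons.

Courtesy of Henry Markram, EPFL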

POP2 0.1° benchmark

Courtesy of M. Taylor, John Dennis

20% of time in solver

71% of time in solver

Projected BlueGene/P

Comparison point for same system (node) size.


BlueGene/P in Focus

BlueGene/P Architectural Highlights

• Scaled performance through density and frequency bump
– 2x performance through doubling the processors/node
– 1.2x from frequency bump due to technology
• Enhanced function
– 4 way SMP
– DMA, remote put-get, user programmable memory prefetch
– Greatly enhanced 64 bit performance counters (including 450 core)
• Hold BlueGene/L packaging as much as possible:
– Improve networks through higher speed signaling on same wires
– Improve power efficiency through aggressive power management
• Higher signaling rate
– 2.4x higher bandwidth and improved latency for Torus and Tree networks
– 10x higher bandwidth for Ethernet IO
• 72Ki nodes in 72 racks should hit 1.00 PF peak.
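The scaling factors in the highlights above can be checked with a few lines of arithmetic. This is a minimal sketch, not IBM's own derivation: the value of 4 flops per cycle per core is an assumption (a dual-pipeline FPU with fused multiply-add), chosen because it reproduces the 5.6 and 13.6 GF/node figures quoted elsewhere in the talk.

```python
# Sketch: per-node and system peak performance for BG/L and BG/P.
# ASSUMPTION: each core retires 4 floating-point operations per cycle.
FLOPS_PER_CYCLE = 4

def node_peak_gflops(cores, ghz, flops_per_cycle=FLOPS_PER_CYCLE):
    """Peak GFlops of one node: cores x clock (GHz) x flops per cycle."""
    return cores * ghz * flops_per_cycle

bgl_node = node_peak_gflops(cores=2, ghz=0.70)  # BlueGene/L node
bgp_node = node_peak_gflops(cores=4, ghz=0.85)  # BlueGene/P node

speedup = bgp_node / bgl_node        # about 2 x 1.2 (density x frequency)
nodes = 72 * 1024                    # 72Ki nodes in 72 racks
system_peak_pf = nodes * bgp_node / 1e6  # GFlops -> PFlops

print(f"BG/L node: {bgl_node:.1f} GF, BG/P node: {bgp_node:.1f} GF")
print(f"node speedup: {speedup:.2f}x, system peak: {system_peak_pf:.2f} PF")
```

The product of the two factors (2x from cores, about 1.2x from frequency) gives roughly the 2.4x per-node improvement.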

BGP comparison with BGL

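| Group | Property | BG/L | BG/P |
| --- | --- | --- | --- |
| Node Properties | Node Processors | 2* 440 PowerPC | 4* 450 PowerPC |
| Node Properties | Processor Frequency | 0.7GHz | 0.85GHz (target) |
| Node Properties | Coherency | Software managed | SMP |
| Node Properties | L1 Cache (private) | 32KB/processor | 32KB/processor |
| Node Properties | L2 Cache (private) | 14 stream prefetching | 14 stream prefetching |
| Node Properties | L3 Cache size (shared) | 4MB | 8MB |
| Node Properties | Main Store/node | 512MB/1GB | 2GB |
| Node Properties | Main Store Bandwidth | 5.6GB/s (16B wide) | 13.6 GB/s (2*16B wide) |
| Node Properties | Peak Performance | 5.6GF/node | 13.6 GF/node |
| Torus Network | Bandwidth | 6*2*175MB/s=2.1GB/s | 6*2*425MB/s=5.1GB/s |
| Torus Network | Hardware Latency (Nearest Neighbor) | 200ns (32B packet), 1.6us (256B packet) | 160ns (32B packet), 500ns (256B packet) |
| Torus Network | Hardware Latency (Worst Case) | 6.4us (64 hops) | 5us (64 hops) |
| Collective Network | Bandwidth | 2*350MB/s=700MB/s | 2*0.85GB/s=1.7GB/s |
| Collective Network | Hardware Latency (round trip worst case) | 5.0us | 4us |
| System Properties | Peak Performance (72k nodes) | 410TF | 1PF |
| System Properties | Total Power | 1.7MW | 2.7 MW |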
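The network bandwidth rows in the comparison follow one pattern: links per node, times two directions, times the per-direction link rate. The sketch below recomputes them; the function name is illustrative, and treating the collective network as a single bidirectional link is an assumption read off the "2*" factor in the table.

```python
# Sketch: per-node network bandwidth from link count and link rate.
def node_bandwidth_mb_s(links, mb_s_per_direction, directions=2):
    """Aggregate per-node bandwidth in MB/s."""
    return links * directions * mb_s_per_direction

torus_bgl = node_bandwidth_mb_s(links=6, mb_s_per_direction=175)
torus_bgp = node_bandwidth_mb_s(links=6, mb_s_per_direction=425)
# ASSUMPTION: the "2*" in the collective rows means one link, two directions.
coll_bgl = node_bandwidth_mb_s(links=1, mb_s_per_direction=350)
coll_bgp = node_bandwidth_mb_s(links=1, mb_s_per_direction=850)

torus_ratio = torus_bgp / torus_bgl
coll_ratio = coll_bgp / coll_bgl

print(f"torus: {torus_bgl} -> {torus_bgp} MB/s ({torus_ratio:.2f}x)")
print(f"collective: {coll_bgl} -> {coll_bgp} MB/s ({coll_ratio:.2f}x)")
```

Both ratios come out at about 2.4x, which matches the "2.4x higher bandwidth" claim for the Torus and Tree networks.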

Figure: BlueGene/P node (block diagram). Labels recoverable from the diagram:

• Cores: four PPC 450, each with FPU, L1, and prefetching L2
• Memory: two 4MB eDRAM L3 banks, two DDR-2 controllers, 13.6GB/s external DDR2 DRAM bus (2*16B bus @425Mb/s)
• Internal paths: two multiplexing switches; 14GB/s read (each), 14GB/s write (each); data read @ 7GB/s, data write @ 7GB/s, instruction @ 7GB/s
• Network interfaces: Torus, Collective, Barrier, JTAG (control network), 10Gb Ethernet (to 10Gb physical layer)
• Link labels: 6*3.4Gb/s bidirectional, 3*6.8Gb/s bidirectional, 6*3.5Gb/s bidirectional, "(Shares I/O with Torus)"
• DMA and Arb: 4 symmetric ports for collective, torus and global barriers
• DMA module allows remote direct “put”/“get”


IBM® System Blue Gene®/P Solution: Expanding the Limits of Breakthrough Science

Packaging hierarchy (figure):

• Chip: 4 processors; 13.6 GF/s, 8 MB EDRAM
• Compute Card: 1 chip, 20 DRAMs; 13.6 GF/s, 2.0 GB DDR; supports 4-way SMP
• Node Card: 32 chips (4x4x2); 32 compute, 0-2 IO cards; 435 GF/s, 64 GB
• Rack: 32 Node Cards, 1024 chips, 4096 procs; cabled 8x8x16; 14 TF/s, 2 TB
• System: 1 to 72 or more Racks; 1 PF/s +, 144 TB +

Blue Gene/P

Front End Node / Service Node: System p Servers, Linux SLES10

Blue Gene/P continues Blue Gene’s leadership performance in a space-saving, power-efficient package for the most demanding and scalable high-performance computing applications

HPC SW: Compilers, GPFS, ESSL, Loadleveler
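The per-level figures in the packaging hierarchy can be reproduced by multiplying up from one compute card. The following is a rough sketch; the dictionary layout and the `scale` helper are illustrative names, and memory is converted with 1 TB = 1024 GB, which is an assumption that happens to match the quoted 2 TB and 144 TB.

```python
# Sketch: roll up peak performance and memory through the packaging levels.
compute_card = {"gflops": 13.6, "memory_gb": 2}

def scale(level, count):
    """Aggregate `count` copies of a packaging level."""
    return {key: value * count for key, value in level.items()}

node_card = scale(compute_card, 32)  # 32 compute cards per node card
rack = scale(node_card, 32)          # 32 node cards per rack
system = scale(rack, 72)             # 72 racks

for name, level in [("node card", node_card), ("rack", rack), ("system", system)]:
    tf = level["gflops"] / 1e3
    tb = level["memory_gb"] / 1024
    print(f"{name}: {tf:.2f} TF/s, {tb:.2f} TB")
```

The results (about 0.435 TF/s and 64 GB per node card, about 14 TF/s and 2 TB per rack, about 1 PF/s and 144 TB for 72 racks) agree with the figure to rounding.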


Blue Gene/P Interconnection Networks

3 Dimensional Torus
– Interconnects all compute nodes
• Communications backbone for computations
– Adaptive cut-through hardware routing
– 3.4 Gb/s on all 12 node links (5.1 GB/s per node)
– 0.5 µs latency between nearest neighbors, 5 µs to the farthest
• MPI: 3 µs latency for one hop, 10 µs to the farthest
– 1.7/2.6 TB/s bisection bandwidth, 188 TB/s total bandwidth (72k machine)

Collective Network
– Interconnects all compute and I/O nodes (1152)
– One-to-all broadcast functionality
– Reduction operations functionality
– 6.8 Gb/s of bandwidth per link
– Latency of one way tree traversal 2 µs, MPI 5 µs
– ~62 TB/s total binary tree bandwidth (72k machine)

Low Latency Global Barrier and Interrupt
– Latency of one way to reach all 72K nodes 0.65 µs, MPI 1.6 µs

Other networks
– 10Gb Functional Ethernet
• I/O nodes only
– 1Gb Private Control Ethernet
• Provides JTAG access to hardware. Accessible only from Service Node system
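The bisection and total torus bandwidth figures can be approximated from the link rate alone. This is a hedged sketch: the two torus shapes (72x32x32 and 48x48x32) are assumptions, picked because they are plausible arrangements of 72 racks of 8x8x16 nodes and because they reproduce the quoted 1.7 and 2.6 TB/s; the slides do not state the shapes.

```python
from math import prod

LINK_MB_S = 425  # per direction, per torus link (3.4 Gb/s)

def bisection_gb_s(shape, link_mb_s=LINK_MB_S):
    """Bisection bandwidth of a 3-D torus cut across its longest dimension."""
    cross_section = prod(shape) // max(shape)  # nodes in the cutting plane
    links_cut = 2 * cross_section              # wraparound: the cut crosses twice
    return links_cut * 2 * link_mb_s / 1000    # two directions per link

def total_tb_s(nodes, link_mb_s=LINK_MB_S):
    """Sum over all unidirectional links: 6 outgoing links per node."""
    return nodes * 6 * link_mb_s / 1e6

# ASSUMPTION: two possible 72-rack torus geometries.
narrow = bisection_gb_s((72, 32, 32))
wide = bisection_gb_s((48, 48, 32))
total = total_tb_s(72 * 1024)

print(f"bisection: {narrow / 1000:.1f} or {wide / 1000:.1f} TB/s")
print(f"total: {total:.0f} TB/s")
```

A more cubic arrangement exposes a larger cross-section to the cut, which is why the same machine can quote two bisection numbers.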

BlueGene/P Software

BG/L applications port easily to 4-way virtual-node BG/P. Performance may increase through new BG/P features:

• Programming model changes:
– Mixed OpenMP + MPI supported (OpenMP across the 4-way node).
– Virtual Node mode supported as in BG/L. On BG/P this means 4 MPI tasks/node.
– pthreads supported.
– Number of threads limited to number of cores (4).
• DMA engine enables effective offloading of messaging and increases the value of overlapping computation with communication.
• Messaging library utilizes DMA and is built around put/get functionality.
• HPC toolkit will enable access to performance counters. (BG/P has processor counters.)
• BG/L model of a high performance kernel on compute nodes and Linux on I/O nodes.
• Working on supporting dynamic linking on the high performance kernel.

The above also enables new applications for BG/P.
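The programming-model options above amount to different ways of dividing the four cores of a node between MPI tasks and threads. The sketch below only illustrates that bookkeeping; the `job_layout` function is hypothetical and is not part of any Blue Gene tool or API.

```python
# Sketch: how MPI tasks and threads map onto 4-core BG/P nodes.
CORES_PER_NODE = 4

def job_layout(nodes, tasks_per_node, threads_per_task):
    """Return task and thread totals, enforcing one thread per core at most."""
    if tasks_per_node * threads_per_task > CORES_PER_NODE:
        raise ValueError("threads are limited to the number of cores (4)")
    return {
        "mpi_tasks": nodes * tasks_per_node,
        "threads_per_task": threads_per_task,
        "cores_used": nodes * tasks_per_node * threads_per_task,
    }

# Virtual Node mode: 4 single-threaded MPI tasks per node.
virtual_node = job_layout(nodes=1024, tasks_per_node=4, threads_per_task=1)
# Mixed OpenMP + MPI: 1 MPI task per node with 4 threads.
hybrid = job_layout(nodes=1024, tasks_per_node=1, threads_per_task=4)

print(virtual_node)
print(hybrid)
```

Both layouts use every core of a 1024-node rack; they differ in whether parallelism inside the node is expressed as MPI ranks or as threads.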


Future Challenges Insights for BlueGene/Q

© 2005 IBM Corporation

June 15, 2005

Challenges for the future

We can understand the challenges by projecting to the issues of 2023 (the Exaflop era):

• Power is a fundamental problem that is pervasive at many system levels (compute, memory, disk)
• Memory cost and performance are not keeping pace with compute potential
• Network performance (bandwidth and latency) will be costly (bandwidth) and will not scale well to Exaflops
• Ease of use in extracting the promised performance from the compute hardware will be the main focus. Big peak Flops is mainly a power problem.
• Reliability at the Exaflop scale will require a holistic approach at the architecture level. This results both from declining reliability of the underlying silicon technology and from the sheer number of logic elements.

Supercomputer Peak Performance (figure: peak speed in flops, 1E+2 to 1E+17, versus year introduced, 1940 to 2020)

Doubling time = 1.5 yr.

Machines plotted: ENIAC (vacuum tubes), UNIVAC, IBM 701, IBM 704, IBM 7090 (transistors), IBM Stretch, CDC 6600 (ICs), CDC 7600, CDC STAR-100 (vectors), ILLIAC IV, CRAY-1, Cyber 205, X-MP2 (parallel vectors), SX-2, S-810/20, CRAY-2, X-MP4, Y-MP8, i860 (MPPs), VP2600/10, SX-3/44, Delta, CM-5, Paragon, NWT, T3D, T3E, SX-4, SX-5, CP-PACS, ASCI Red, ASCI Red Option, Blue Pacific, ASCI White, Earth, ASCI Purple, Red Storm, Blue Gene/L.

• Current/Past: performance growth through exponential processor performance growth
• Near Term: performance through exponential growth in parallelism
• Long Term: power cost = system cost
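The 1.5-year doubling time on the chart gives a quick way to estimate when a given peak speed would be reached. The sketch below assumes the trend continues unchanged and takes Blue Gene/L (scalable to 360 TFlops, generally available in 2005) as the starting point; both the starting point and the function name are choices made for this illustration.

```python
import math

def years_to_reach(target_flops, start_flops, doubling_years=1.5):
    """Years needed to grow from start to target at a fixed doubling time."""
    return doubling_years * math.log2(target_flops / start_flops)

# ASSUMPTION: start from Blue Gene/L at 360 TFlops in 2005.
start_year = 2005
exaflop_year = start_year + years_to_reach(1e18, 360e12)

print(f"Exaflop reached around {exaflop_year:.1f}")
```

Under these assumptions the estimate lands within about a year of the 2023 date used for the Exaflop extrapolation in the talk.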


Extrapolating an Exaflop in 2023

| Property | BlueGene/L (2005) | Exaflop, directly scaled | Exaflop, educated guess | Assumption for “educated guess” |
| --- | --- | --- | --- | --- |
| Node peak perf | 5.6GF | 20TF | 20TF | Same node count (64k) |
| Number of hardware threads/node | 2 | 8000 | 1600 | Assume 3.5GHz, 3-D packaging |
| System power in compute chip | 1 MW | 4 GW | 50 MW | 80x improvement (very optimistic) |
| Link bandwidth (each unidirectional 3-D link) | 1.4Gbps | 5 Tbps | 1 Tbps | Not possible to maintain bandwidth ratio |
| Wires per unidirectional 3-D link | 2 | 500 wires | 100 wires | Large wire count will eliminate high density and drive links onto cables where they are 100x more expensive |
| Pins in network on node | 24 pins | 6,000 pins | 1,200 pins | 20 Gbps differential assumed |
| Power in network | 100 KW | 38 MW | 8 MW | 10 mW/Gbps assumed |
| Memory bandwidth/node | 5.6GB/s | 20TB/s | 2 TB/s | Not possible to maintain external bandwidth/Flop |
| L2 cache/node | 4 MB | 16 GB | 500 MB | About 6-7 technology generations |
| Data pins associated with memory/node | 128 pins | 32,000 pins | 4000 pins | 5 Gbps per pin |
| Power in memory I/O (not DRAM) | 12.8 KW | 50 MW | 6 MW | 5 mW/Gbps assumed |
| Total problem size (QCD example) | 64^3x128 | 128^3x256 | 128^3x256 | Approx equal time to science |
| QCD CG single iteration time | 2.3 msec | 9.4 usec | 15 usec | Requires: 1) fast global sum, 2) hardware offload for messaging (driverless messaging) |
| Memory footprint/node | 2.7 MB | 42 MB | 42 MB | Memory footprint is no problem |

• Power associated with external memory will force high efficiency computing to reside inside chip. (or chip stack)

• Network scaling will be both a latency and bandwidth problem. Bandwidth is a cost problem and latency will require hardware offload to avoid nearly all software layers.

• Processing in a node will be done via thousand(s) of hardware units, each of which is only somewhat faster than today’s.
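The I/O power and wire-count entries in the Exaflop extrapolation follow from the stated per-Gbps energy costs and pin rates. The sketch below recomputes them to within the slide's rounding. It assumes 64Ki nodes and 12 unidirectional torus links per node (6 neighbors, 2 directions); the function names are illustrative.

```python
import math

NODES = 64 * 1024  # "same node count (64k)"

def io_power_megawatts(gbps_per_node, milliwatt_per_gbps, nodes=NODES):
    """System-wide I/O power given per-node bandwidth and energy cost."""
    watts_per_node = gbps_per_node * milliwatt_per_gbps / 1000
    return watts_per_node * nodes / 1e6

def wires_per_link(link_gbps, lane_gbps=20):
    """Differential signaling: two wires per lane."""
    return 2 * math.ceil(link_gbps / lane_gbps)

# Memory I/O: pins x 5 Gbps per pin, at 5 mW/Gbps.
mem_guess = io_power_megawatts(4000 * 5, 5)     # educated guess column
mem_scaled = io_power_megawatts(32000 * 5, 5)   # directly scaled column

# Network: ASSUMPTION of 12 unidirectional links per node, at 10 mW/Gbps.
net_guess = io_power_megawatts(12 * 1000, 10)   # 1 Tbps links
net_scaled = io_power_megawatts(12 * 5000, 10)  # 5 Tbps links

print(f"memory I/O: {mem_guess:.1f} MW (guess), {mem_scaled:.1f} MW (scaled)")
print(f"network: {net_guess:.1f} MW (guess), {net_scaled:.1f} MW (scaled)")
print(f"wires per link: {wires_per_link(1000)} (guess), {wires_per_link(5000)} (scaled)")
```

The computed values (roughly 6.6 and 52 MW for memory I/O, 7.9 and 39 MW for the network, 100 and 500 wires per link) line up with the rounded slide entries.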

IBM Research


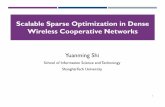

System Power Efficiency (bar chart, GFLOP/s per Watt)

| System | GFLOP/s per Watt |
| --- | --- |
| BG/L | 0.23 |
| BG/P | 0.35 |
| Red Storm | 0.02 |
| Thunderbird | 0.02 |
| Purple | 0.02 |

Figure: GFLOP/s per Watt (log scale, 0.001 to 1) versus year (1997 to 2007).

Systems plotted: QCDSP (Columbia), QCDOC (Columbia/IBM), Blue Gene/L, Blue Gene/P, ASCI White (Power 3), Earth Simulator, ASCI Q, NCSA (Xeon), LLNL (Itanium 2), ECMWF (p690, Power 4+), NASA (SGI), SX-8, Cray XT3, Fujitsu Bioserver.

Annotations: “Power-efficient design focus”, “Single thread focus” design, “Commodity driven”, “?”.

• Large peak power efficiency advantage
• Still need dramatic improvement to enable computing in the future.


The Power Problem

Figure: field effect transistor gate, “thick” gate oxide versus scaled gate oxide (thickness t, 1.2 nm oxynitride).

Oxide thickness is near the limit.
• Traditional CMOS scaling has ended.
• Density improvements will continue, but power efficiency from technology will only improve very slowly.

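| Performance | Projected Year | BlueGene/L | Earth Simulator | MareNostrum |
| --- | --- | --- | --- | --- |
| 250 TF | 2005 | 1.0 MWatt | 100 MWatt | 5 MWatt |
| 1 PF | 2008 | 2.5 MWatt | 200 MWatt | 15 MWatt |
| 10 PF | 2012 | 25 MWatt | 2 GWatt | 150 MWatt |
| 100 PF | 2019 | 250 MWatt | 20 GWatt | 1.5 GWatt |
| 1000 PF | 2025 | 2.5 GWatt | 200 GWatt | 15 GWatt |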

If power efficiency does not improve…

CMOS alone will no longer enable faster computers with similar power

• Solution is not known!
• Architecture can help (to some extent): witness the better power efficiency of commodity processors from “simplification”
• New circuits can also help
• This problem needs to be addressed now.
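The "if power efficiency does not improve" projection is a straight linear scaling: at fixed GFLOP/s per Watt, power grows in proportion to performance. The sketch below applies that rule starting from the 1 PF figures quoted in the projection (2.5, 200 and 15 MWatt); the dictionary and function names are illustrative.

```python
# Sketch: power needed at higher performance if efficiency stays fixed.
# Baseline: projected power at 1 PF for each design, in megawatts.
BASELINE_1PF_MW = {
    "BlueGene/L": 2.5,
    "Earth Simulator": 200,
    "MareNostrum": 15,
}

def projected_power_mw(target_pf, baseline=BASELINE_1PF_MW):
    """Scale each 1 PF power figure linearly to target_pf petaflops."""
    return {name: mw * target_pf for name, mw in baseline.items()}

for pf in (10, 100, 1000):
    row = projected_power_mw(pf)
    cells = ", ".join(f"{name}: {mw / 1000:g} GW" for name, mw in row.items())
    print(f"{pf} PF -> {cells}")
```

Even the most efficient design in the comparison needs gigawatts at 1000 PF under this assumption, which is the point of the slide.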

Summary/Conclusion

• BlueGene/L has achieved an application reach far broader than expected (or targeted in the design)

– Partnership and collaboration have been critical to exploiting BlueGene/L

• BlueGene/P is an architectural evolution from BlueGene/L
– Enhancements from BlueGene/L such as a hardware DMA engine promise same or better per node scaling on BlueGene/P.
– BlueGene/P offers a fully coherent 4-way node with a software stack designed to exploit parallelism.
– BlueGene/P will offer approximately 2-3x speed up with respect to BlueGene/L for same node count.

• Future Trends
– Power will be a severe constraint in the future (and now)
– Large systems will have millions of threads, each similar in performance to today.
– Challenges of power will apply to all systems (commercial and HPC).
• Market forces in the commodity commercial world could result in a different direction, potentially not well aligned with HPC.
– Reliability of systems in the future will require a holistic approach to reach the extreme levels of scalability.
– Latency in networks will become a pinch point for capability computing.