An alternating variable metric inexact linesearch based...

Transcript of An alternating variable metric inexact linesearch based...

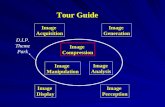

Motivation | The proposed algorithm | Convergence of the algorithm | Numerical experience

An alternating variable metric inexact linesearch based algorithm for nonconvex nonsmooth optimization

Simone Rebegoldi

(Joint work with Silvia Bonettini and Marco Prato)

Workshop “Computational Methods for Inverse Problems in Imaging”

July 16-18 2018, Como, Italy

Simone Rebegoldi An alternating variable metric inexact linesearch based algorithm CMIPI 2018 1 / 28


Outline

1 Motivation

2 The proposed algorithm

3 Convergence of the algorithm

4 Numerical experience


Problem setting

Optimization problem:

$$\operatorname*{argmin}_{x_i \in \mathbb{R}^{n_i},\ i=1,\dots,p} \; f(x_1,\dots,x_p) \equiv f_0(x_1,\dots,x_p) + \sum_{i=1}^{p} f_i(x_i)$$

- $f_i : \mathbb{R}^{n_i} \to \mathbb{R} \cup \{+\infty\}$, $i = 1,\dots,p$, with $n_1 + \dots + n_p = n$, are proper, convex, lower semicontinuous functions;
- $f_0 : \mathbb{R}^n \to \mathbb{R}$ is continuously differentiable on an open set $\Omega_0$, with $\Omega_0 \supseteq \prod_{i=1}^{p} \mathrm{dom}(f_i)$;
- $f$ is bounded from below.

Applications:

- image processing (image deblurring and denoising, image inpainting, image segmentation, image blind deconvolution, ...)
- signal processing (non-negative matrix factorization, non-negative tensor factorization, ...)
- machine learning (SVMs, deep neural networks, ...)


Block–coordinate proximal–gradient methods

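Proximal–gradient methods ($p = 1$)

$$
\begin{aligned}
x^{(k+1)} &= \mathrm{prox}_{\alpha_k f_1}\big(x^{(k)} - \alpha_k \nabla f_0(x^{(k)})\big) \\
&= \operatorname*{argmin}_{z \in \mathbb{R}^n} \; f_0(x^{(k)}) + \nabla f_0(x^{(k)})^T (z - x^{(k)}) + \frac{1}{2\alpha_k}\|z - x^{(k)}\|^2 + f_1(z)
\end{aligned}
$$

where $\alpha_k > 0$ and

$$\mathrm{prox}_{f_1}(x) = \operatorname*{argmin}_{z \in \mathbb{R}^n} \; \frac{1}{2}\|z - x\|^2 + f_1(z), \quad x \in \mathbb{R}^n,$$

is the proximity operator associated with a convex function $f_1 : \mathbb{R}^n \to \mathbb{R} \cup \{+\infty\}$.

Block–coordinate proximal–gradient methods ($p > 1$)

$x^{(k+1)} = (x_1^{(k+1)}, \dots, x_p^{(k+1)})$, where $x_i^{(k+1)}$, $i = 1,\dots,p$, is given by

$$x_i^{(k+1)} = \mathrm{prox}_{\alpha_i^{(k)} f_i}\Big(x_i^{(k)} - \alpha_i^{(k)} \nabla_i f_0\big(x_1^{(k+1)},\dots,x_{i-1}^{(k+1)},x_i^{(k)},x_{i+1}^{(k)},\dots,x_p^{(k)}\big)\Big),$$

where $\nabla_i f_0(x_1,\dots,x_p)$ denotes the partial gradient of $f_0$ with respect to $x_i$.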
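As an illustration of the $p = 1$ update, the sketch below performs one proximal-gradient step for a hypothetical choice of data: $f_0(x) = \tfrac12\|x - c\|^2$ and $f_1 = \mu\|\cdot\|_1$, whose proximity operator is componentwise soft-thresholding. The names `soft_threshold`, `prox_grad_step`, `c` and `mu` are assumptions made for this example and do not come from the talk.

```python
def soft_threshold(v, t):
    """Proximity operator of t * ||.||_1, applied componentwise."""
    return [max(vi - t, 0.0) - max(-vi - t, 0.0) for vi in v]


def prox_grad_step(x, grad_f0, alpha, mu):
    """One step x+ = prox_{alpha * mu * ||.||_1}(x - alpha * grad f0(x))."""
    g = grad_f0(x)
    forward = [xi - alpha * gi for xi, gi in zip(x, g)]  # explicit gradient step
    return soft_threshold(forward, alpha * mu)           # implicit proximal step


# Illustrative data (assumed): f0(x) = 0.5 * ||x - c||^2, so grad f0(x) = x - c.
c = [3.0, -0.5, 1.0]
grad_f0 = lambda x: [xi - ci for xi, ci in zip(x, c)]

x_next = prox_grad_step([0.0, 0.0, 0.0], grad_f0, alpha=1.0, mu=1.0)
print(x_next)
```

For this particular quadratic, a unit steplength sends the gradient step directly to $c$, so a single iteration returns the soft-thresholded vector $c$, which is the minimizer of $f_0 + f_1$.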


Recent advances

Theorem (Bolte et al., Math. Program., 2014)

Suppose that the sequence $\{x^{(k)}\}_{k\in\mathbb{N}}$ is bounded and

- $f$ satisfies the Kurdyka–Łojasiewicz (KL) inequality at each point of its domain;
- $\nabla f_0$ is Lipschitz continuous on bounded subsets of $\mathbb{R}^n$;
- $\nabla_i f_0(x_1^{(k+1)},\dots,x_{i-1}^{(k+1)},\,\cdot\,,x_{i+1}^{(k)},\dots,x_p^{(k)})$ is $\beta_i^{(k)}$-Lipschitz continuous on $\mathbb{R}^{n_i}$, $i = 1,\dots,p$;
- $0 < \inf\{\beta_i^{(k)} : k \in \mathbb{N}\} \le \sup\{\beta_i^{(k)} : k \in \mathbb{N}\} < \infty$, $i = 1,\dots,p$;
- $\alpha_i^{(k)} = (\gamma_i \beta_i^{(k)})^{-1}$, with $\gamma_i > 1$, $i = 1,\dots,p$.

Then $\{x^{(k)}\}_{k\in\mathbb{N}}$ has finite length and converges to a critical point $x^*$ of $f$.

Other advances under the KL property

- Majorization–Minimization techniques [Chouzenoux et al., J. Glob. Optim., 2016]
- Extrapolation techniques [Xu et al., SIAM J. Imaging Sci., 2013]
- Convergence under proximal errors [Frankel et al., J. Optim. Theory Appl., 2015]


Main idea

In our proposed approach, each block of variables is updated by applying $L_i^{(k)}$ steps of the Variable Metric Inexact Linesearch based Algorithm (VMILA) [1]:

$$x_i^{(k,\ell+1)} = x_i^{(k,\ell)} + \lambda_i^{(k,\ell)}\big(u_i^{(k,\ell)} - x_i^{(k,\ell)}\big), \quad \ell = 0, 1, \dots, L_i^{(k)} - 1, \qquad x_i^{(k,0)} = x_i^{(k)}$$

- $u_i^{(k,\ell)}$ is a suitable approximation of the proximal-gradient step given by

  $$u_i^{(k,\ell)} \approx_{\epsilon_i^{(k,\ell)}} \mathrm{prox}^{D_i^{(k,\ell)}}_{\alpha_i^{(k,\ell)} f_i}\Big(x_i^{(k,\ell)} - \alpha_i^{(k,\ell)} \big(D_i^{(k,\ell)}\big)^{-1} \nabla_i f_0(x^{(k,\ell)})\Big),$$

  where $x^{(k,\ell)} = \big(x_1^{(k,L_1^{(k)})}, \dots, x_{i-1}^{(k,L_{i-1}^{(k)})}, x_i^{(k,\ell)}, x_{i+1}^{(k)}, \dots, x_p^{(k)}\big)$, $\alpha_i^{(k,\ell)} > 0$ is the steplength parameter, $D_i^{(k,\ell)} \in \mathbb{R}^{n_i \times n_i}$ is a scaling matrix, and $\epsilon_i^{(k,\ell)}$ is the accuracy of the approximation;
- $\lambda_i^{(k,\ell)}$ is a linesearch parameter ensuring a certain sufficient decrease condition on the function $f$.

[1] S. Bonettini, I. Loris, F. Porta, M. Prato, S. Rebegoldi, Inverse Probl., 2017


Ingredient (1): Variable metric strategy

Let $\alpha_i^{(k,\ell)} \in [\alpha_{\min}, \alpha_{\max}]$ and let $D_i^{(k,\ell)} \in \mathbb{R}^{n_i \times n_i}$ be a symmetric positive definite (s.p.d.) matrix with $\frac{1}{\mu} I \preceq D_i^{(k,\ell)} \preceq \mu I$.

$$
\begin{aligned}
u_i^{(k,\ell)} &= \mathrm{prox}^{D_i^{(k,\ell)}}_{\alpha_i^{(k,\ell)} f_i}\Big(x_i^{(k,\ell)} - \alpha_i^{(k,\ell)} \big(D_i^{(k,\ell)}\big)^{-1} \nabla_i f_0(x^{(k,\ell)})\Big) \\
&= \operatorname*{argmin}_{u \in \mathbb{R}^{n_i}} \; \underbrace{\nabla_i f_0(x^{(k,\ell)})^T (u - x_i^{(k,\ell)}) + \frac{1}{2\alpha_i^{(k,\ell)}} \|u - x_i^{(k,\ell)}\|^2_{D_i^{(k,\ell)}} + f_i(u) - f_i(x_i^{(k,\ell)})}_{:= h_i^{(k,\ell)}(u)}
\end{aligned}
$$

Observe that

- any s.p.d. matrix $D_i^{(k,\ell)}$ is allowed, including those suggested by the split gradient strategy and the majorization-minimization technique;
- any positive steplength $\alpha_i^{(k,\ell)}$ is allowed, which makes it possible to exploit thirty years of literature in numerical optimization to improve the actual convergence rate (Barzilai-Borwein rules [1], adaptive alternating strategies [2], Ritz values [3], ...).

[1] J. Barzilai, J. M. Borwein, IMA Journal of Numerical Analysis, 8(1), 141–148, 1988.

[2] G. Frassoldati, G. Zanghirati, L. Zanni, Journal of Industrial and Management Optimization, 4(2), 299–312, 2008.

[3] R. Fletcher, Mathematical Programming, 135(1–2), 413–436, 2012.


Ingredient (2): sufficient decrease condition

Theorem (Bonettini et al., SIAM J. Optim., 2016)

If $h_i^{(k,\ell)}(u) < 0$, then the one-sided directional derivative of $f$ at $x^{(k,\ell)}$ with respect to $d^{(k,\ell)} = (0, \dots, u - x_i^{(k,\ell)}, 0, \dots, 0)$ is negative:

$$f'(x^{(k,\ell)}; d^{(k,\ell)}) = \lim_{\lambda \to 0^+} \frac{f(x^{(k,\ell)} + \lambda d^{(k,\ell)}) - f(x^{(k,\ell)})}{\lambda} < 0.$$

The negative sign of $h_i^{(k,\ell)}$ detects a descent direction, since

$$h_i^{(k,\ell)}(u) < 0 \;\Rightarrow\; f(x^{(k,\ell)} + \lambda d^{(k,\ell)}) - f(x^{(k,\ell)}) < 0 \quad \text{for } \lambda \text{ sufficiently small.}$$


Ingredient (2): sufficient decrease condition

Definition (Armijo-like linesearch)

Fix $\delta, \beta \in (0, 1)$. Let $u_i^{(k,\ell)}$ be a point such that $h_i^{(k,\ell)}(u_i^{(k,\ell)}) < 0$ and set $d^{(k,\ell)} = (0, \dots, u_i^{(k,\ell)} - x_i^{(k,\ell)}, 0, \dots, 0)$.

Compute the smallest nonnegative integer $m_{k,\ell}$ such that $\lambda_i^{(k,\ell)} = \delta^{m_{k,\ell}}$ satisfies

$$f(x^{(k,\ell)} + \lambda_i^{(k,\ell)} d^{(k,\ell)}) \le f(x^{(k,\ell)}) + \beta \lambda_i^{(k,\ell)} h_i^{(k,\ell)}(u_i^{(k,\ell)}).$$

When $f_i = \iota_{\Omega_i}$, where $\Omega_i \subseteq \mathbb{R}^{n_i}$ is a closed and convex set, and the quadratic term in $h_i^{(k,\ell)}(u_i^{(k,\ell)})$ is neglected, one recovers the classical Armijo condition for smooth optimization.

Theorem (Bonettini et al., SIAM J. Optim., 2016)

The linesearch is well-defined, i.e. $m_{k,\ell} < +\infty$ for all $k$.

- No Lipschitz continuity of $\nabla_i f_0$ is needed.
- The result is independent of the choice of the parameters $\alpha_i^{(k,\ell)}$ and $D_i^{(k,\ell)}$ (which are free to be used to improve convergence speed).
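The backtracking rule in the definition can be sketched in a few lines. The example below is a minimal illustration under assumed data: a one-dimensional problem with $f_0(x) = \tfrac12 x^2$, $f_1 = 0$, identity scaling and a deliberately large steplength $\alpha = 4$, so that the full step overshoots and backtracking is triggered. The function name `armijo_like_step` and the cap `max_backtracks` are hypothetical and not part of the talk.

```python
def armijo_like_step(f, x, d, h_u, delta=0.5, beta=1e-4, max_backtracks=60):
    """Return lam = delta**m for the smallest m >= 0 such that
    f(x + lam * d) <= f(x) + beta * lam * h_u, where h_u = h(u) < 0."""
    if h_u >= 0.0:
        raise ValueError("h(u) must be negative to certify descent")
    f_x, lam = f(x), 1.0
    for _ in range(max_backtracks):
        trial = [xi + lam * di for xi, di in zip(x, d)]
        if f(trial) <= f_x + beta * lam * h_u:
            return lam
        lam *= delta  # shrink the step and try again
    raise RuntimeError("no admissible steplength within max_backtracks")


# Illustrative 1-D data (assumed): f0(x) = 0.5 * x^2, f1 = 0, D = I, alpha = 4.
f = lambda x: 0.5 * x[0] ** 2
x, alpha = [1.0], 4.0
grad = [x[0]]                                   # gradient of f0 at x
u = [x[0] - alpha * grad[0]]                    # exact prox-gradient point (f1 = 0)
d = [u[0] - x[0]]
h_u = grad[0] * d[0] + d[0] ** 2 / (2 * alpha)  # linear + quadratic terms of h
lam = armijo_like_step(f, x, d, h_u)
print(lam)
```

Here the trial steps $\lambda = 1$ and $\lambda = 0.5$ fail the sufficient decrease test and $\lambda = 0.25$ is accepted, even though $\alpha$ exceeds the usual $1/L$ bound. No Lipschitz constant enters the procedure.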


Ingredient (3): Inexact computation of the proximal point

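Definition

Given $\epsilon \ge 0$, the $\epsilon$-subdifferential $\partial_\epsilon h(\bar u)$ of a convex function $h$ at the point $\bar u$ is defined as:

$$\partial_\epsilon h(\bar u) = \big\{ w \in \mathbb{R}^n : h(u) \ge h(\bar u) + w^T (u - \bar u) - \epsilon, \ \forall u \in \mathbb{R}^n \big\}.$$

Relax the optimality condition $\hat u = \mathrm{prox}^{D}_{\alpha f_1}(x) = \operatorname*{argmin}_u h(u) \Leftrightarrow 0 \in \partial h(\hat u)$.

Idea: replace the subdifferential with the $\epsilon$-subdifferential.

Definition

Given $\epsilon \ge 0$, a point $\tilde u \in \mathbb{R}^{n_i}$ is an $\epsilon$-approximation of the proximal point $\hat u$ if

$$0 \in \partial_\epsilon h(\tilde u),$$

or equivalently $h(\tilde u) - h(\hat u) \le \epsilon$.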
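The equivalence stated in the last definition follows directly from the definition of the $\epsilon$-subdifferential with $w = 0$. A short derivation, writing $\tilde u$ for the approximate point and $\hat u$ for the exact proximal point, i.e. the minimizer of $h$ (the accents are notation chosen for this note):

```latex
\begin{aligned}
0 \in \partial_\epsilon h(\tilde u)
  &\iff h(u) \ge h(\tilde u) - \epsilon \quad \forall u \in \mathbb{R}^{n_i} \\
  &\iff \min_{u} h(u) \ge h(\tilde u) - \epsilon \\
  &\iff h(\tilde u) - h(\hat u) \le \epsilon .
\end{aligned}
```

For $\epsilon = 0$ this reduces to $h(\tilde u) = h(\hat u)$, so by uniqueness of the minimizer of the strongly convex function $h$ the exact proximal point is recovered.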


Ingredient (3): Inexact computation of the proximal point

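Special case:

$f_1(x) = g(Ax)$, with $g$ a proper, convex, continuous function and $A \in \mathbb{R}^{m \times n}$.

Theorem (Bonettini et al., SIAM J. Optim., 2016)

Let $\Psi$ be the dual function of $h$ and define the primal–dual gap function

$$G(u, v) = h(u) - \Psi(v).$$

If $\tilde u \in \mathbb{R}^n$, $v \in \mathbb{R}^m$ are such that

$$G(\tilde u, v) \le \epsilon \qquad (1)$$

where $\tilde u = x - \alpha D^{-1} A^T v$, then $\tilde u$ is an $\epsilon$-approximation of $\mathrm{prox}^{D}_{\alpha f_1}(x)$.

Practical procedure:

- Generate a sequence $\{v^{(t)}\}_{t \in \mathbb{N}} \subseteq \mathrm{dom}(g^*)$ such that $\lim_{t \to +\infty} v^{(t)} = \operatorname*{arg\,max}_{v \in \mathbb{R}^m} \Psi(v)$.
- Compute $u^{(t)} = P_{\mathrm{dom}(f_1)}\big(x - \alpha D^{-1} A^T v^{(t)}\big)$ and stop the iterations when (1) is met.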


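The proposed algorithm

Algorithm 1 Variable metric inexact line-search based algorithm - block version

Choose $x^{(0)} \in \mathrm{dom}(f)$, $0 < \alpha_{\min} \le \alpha_{\max}$, $\mu \ge 1$, $\delta, \beta \in (0, 1)$, $L_i \in \mathbb{Z}_+$ for $i = 1, \dots, p$.

FOR $k = 0, 1, 2, \dots$

- FOR $i = 1, \dots, p$
  - Set $x_i^{(k,0)} = x_i^{(k)}$
  - Choose the inner iterations number $L_i^{(k)} \le L_i$ and $\ell_i^{(k)} < L_i^{(k)}$
  - FOR $\ell = 0, \dots, L_i^{(k)} - 1$
    - Set $x^{(k,\ell)} = \big(x_1^{(k,L_1^{(k)})}, \dots, x_{i-1}^{(k,L_{i-1}^{(k)})}, x_i^{(k,\ell)}, x_{i+1}^{(k)}, \dots, x_p^{(k)}\big)$
    - Choose the parameters $\alpha_i^{(k,\ell)} \in [\alpha_{\min}, \alpha_{\max}]$ and $D_i^{(k,\ell)} \in \mathcal{D}_\mu$
    - Compute $u_i^{(k,\ell)}$ such that $0 \in \partial_{\epsilon_i^{(k,\ell)}} h_i^{(k,\ell)}(u_i^{(k,\ell)})$ and $h_i^{(k,\ell)}(u_i^{(k,\ell)}) < 0$
    - Set $d_i^{(k,\ell)} = u_i^{(k,\ell)} - x_i^{(k,\ell)}$ and $d^{(k,\ell)} = (0, \dots, d_i^{(k,\ell)}, 0, \dots, 0)$
    - Compute the smallest non-negative integer $m_{k,\ell}$ such that $\lambda_i^{(k,\ell)} = \delta^{m_{k,\ell}}$ satisfies $f(x^{(k,\ell)} + \lambda_i^{(k,\ell)} d^{(k,\ell)}) \le f(x^{(k,\ell)}) + \beta \lambda_i^{(k,\ell)} h_i^{(k,\ell)}(u_i^{(k,\ell)})$
    - Update the inner iterate: $x_i^{(k,\ell+1)} = x_i^{(k,\ell)} + \lambda_i^{(k,\ell)} d_i^{(k,\ell)}$
  - END
- END
- Set

  $$x^{(k+1)} = \begin{cases} \big(x_1^{(k,L_1^{(k)})}, \dots, x_p^{(k,L_p^{(k)})}\big) & \text{if } f\big(x_1^{(k,L_1^{(k)})}, \dots, x_p^{(k,L_p^{(k)})}\big) \le f\big(u_1^{(k,\ell_1^{(k)})}, \dots, u_p^{(k,\ell_p^{(k)})}\big) \\ \big(u_1^{(k,\ell_1^{(k)})}, \dots, u_p^{(k,\ell_p^{(k)})}\big) & \text{otherwise} \end{cases}$$

END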
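To make the loop structure of Algorithm 1 concrete, here is a minimal sketch on a toy two-block problem. Everything specific in it is an assumption made for illustration: the objective $f_0(x, w) = \tfrac12 (xw - 1)^2$ with scalar blocks, $f_1, f_2$ taken as indicators of $[0, +\infty)$, identity scaling, a fixed steplength, a fixed number of inner iterations, and exact proximal points ($\epsilon = 0$). The final safeguard that compares the new iterate with the point built from the $u_i^{(k,\ell_i^{(k)})}$ is omitted for brevity, so this is not the full method.

```python
import math


def f0(v):
    """Smooth nonconvex coupling term: 0.5 * (x * w - 1)^2."""
    return 0.5 * (v[0] * v[1] - 1.0) ** 2


def partial_grad(v, i):
    """Partial derivative of f0 with respect to block i (0 or 1)."""
    return (v[0] * v[1] - 1.0) * v[1 - i]


def f(v):
    """f = f0 + indicator of the nonnegative orthant."""
    return f0(v) if min(v) >= 0.0 else math.inf


def block_linesearch_sketch(v0, n_outer=20, n_inner=2, alpha=1.0,
                            delta=0.5, beta=1e-4, max_backtracks=60):
    v = list(v0)                          # must be feasible: f(v0) finite
    for _ in range(n_outer):              # outer iterations k
        for i in range(len(v)):           # blocks i = 1, ..., p
            for _ in range(n_inner):      # inner iterations l
                g = partial_grad(v, i)
                u = max(v[i] - alpha * g, 0.0)      # exact prox = projection
                d = u - v[i]
                h = g * d + d * d / (2.0 * alpha)   # h(u); indicator terms are 0
                if h >= 0.0:                        # u == v[i]: block is stationary
                    break
                f_old, lam = f(v), 1.0
                for _ in range(max_backtracks):     # Armijo-like backtracking
                    trial = v[:i] + [v[i] + lam * d] + v[i + 1:]
                    if f(trial) <= f_old + beta * lam * h:
                        v = trial
                        break
                    lam *= delta
    return v


x_w = block_linesearch_sketch([2.0, 2.0])
print(x_w, f(x_w))
```

Starting from $(2, 2)$, the first block alone reaches a point with $xw = 1$ after two inner steps (the second one needs two backtracking reductions), after which every block is stationary and the loops exit immediately.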


Convergence in the inexact case

Theorem (Bonettini, Prato, Rebegoldi, COAP, 2018)

Assume that the sequence $\{x^{(k)}\}_{k \in \mathbb{N}}$ admits a limit point $\bar x$. If the error sequences satisfy

$$\lim_{k \to \infty} \sum_{\ell=0}^{L_i^{(k)} - 1} \epsilon_i^{(k,\ell)} = 0, \quad i = 1, \dots, p,$$

then we have

- $\bar x$ is a stationary point for the function $f$;
- $\lim_{k \to \infty} f(x^{(k)}) = \bar f = f(\bar x)$.

The result follows by combining the linesearch procedure with the definition of $\epsilon_i^{(k,\ell)}$-approximation.

- No Lipschitz assumption on $f$.
- The last step of the Algorithm, where we impose

  $$f(x^{(k+1)}) \le f\big(u_1^{(k,\ell_1)}, \dots, u_p^{(k,\ell_p)}\big) \qquad (2)$$

  is needed here only to prove the convergence of the function values. ⇒ If $f_0, f_1, \dots, f_p$ are all continuous, the result holds even if we neglect step (2).


Convergence in the exact case

Assumption 1 (Bolte et al., SIAM J. Optim., 2007)

For any limit point $\bar x$ of the sequence $\{x^{(k)}\}_{k \in \mathbb{N}}$, the function $f$ has the Kurdyka-Łojasiewicz (KL) property at $\bar x$, i.e. there exist $\upsilon \in (0, +\infty]$, a neighborhood $U$ of $\bar x$ and a continuous concave function $\phi : [0, \upsilon) \to [0, +\infty)$ such that:

- $\phi(0) = 0$;
- $\phi$ is $C^1$ on $(0, \upsilon)$;
- $\phi'(s) > 0$ for all $s \in (0, \upsilon)$;
- the inequality

  $$\phi'(f(x) - f(\bar x)) \, \mathrm{dist}(0, \partial f(x)) \ge 1 \qquad (3)$$

  holds for all $x \in U \cap [f(\bar x) < f < f(\bar x) + \upsilon]$.

If $f$ satisfies the KL property at each point of $\mathrm{dom}(\partial f)$, then $f$ is called a KL function.

When $f$ is smooth, finite-valued, and $f(\bar x) = 0$, inequality (3) can be rewritten as

$$\|\nabla(\phi \circ f)(x)\| \ge 1 \qquad (4)$$

for each convenient $x \in \mathbb{R}^n$.

This inequality may be interpreted as follows: KL functions are sharp up to a reparametrization around their critical points.
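A one-dimensional worked example may help to read inequality (4). Take $f(x) = x^2$ with critical point $\bar x = 0$ and the desingularizing function $\phi(s) = \sqrt{s}$ (both chosen here purely for illustration); $\phi$ is concave, continuous, $C^1$ with positive derivative on $(0, +\infty)$, and $\phi(0) = 0$. Then, for every $x \neq 0$:

```latex
\begin{aligned}
\phi'(s) &= \frac{1}{2\sqrt{s}}, \\
\phi'\big(f(x) - f(\bar x)\big)\,|f'(x)| &= \frac{1}{2\sqrt{x^2}} \cdot 2|x| = 1 \ \ge\ 1, \\
(\phi \circ f)(x) &= |x| .
\end{aligned}
```

The reparametrized function $\phi \circ f = |\cdot|$ has slope of modulus one away from the origin, which is the sense in which $f$ is "sharp up to a reparametrization" around its critical point.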


Convergence in the exact case

Figure: Example of the KL property for a smooth function. (Image source: Ochs, arXiv:1602.07283, 2016)

Examples

- Real analytic functions with $\phi(t) = \frac{C}{\theta} t^{\theta}$, where $C > 0$ and $\theta \in (0, 1]$
- Semialgebraic functions: e.g., the indicator function of a semialgebraic set
- Sum of real analytic and semialgebraic functions

⇒ Kullback-Leibler or p-norm + box constraint + inequality constraint is a KL function


Convergence in the exact case

Assumption 2

$\nabla f_0$ is locally Lipschitz continuous, i.e. for each bounded set $B \subseteq \Omega_0$, there exists $M_B > 0$ such that

$$\|\nabla f_0(x) - \nabla f_0(y)\| \le M_B \|x - y\|, \quad \forall x, y \in B.$$

- Unlike in other works, we do not require that the partial gradients $\nabla_i f_0$, $i = 1, \dots, p$, are globally Lipschitz continuous. ⇒ The method is applicable to problems where global Lipschitz properties are not ensured (Poisson blind deconvolution, non-negative matrix factorization).
- Although we require local Lipschitz continuity of $\nabla f_0$, none of the parameters involved in the algorithm depend on its Lipschitz constant.

Assumption 3

For all $k \in \mathbb{N}$, $\epsilon_i^{(k,\ell)} = 0$, $\ell = 0, \dots, L_i - 1$, $i = 1, \dots, p$.

- The proximal-gradient points must be computed exactly.


Convergence in the exact case

Theorem (Bonettini, Prato, Rebegoldi, COAP, 2018)

Let Assumptions 1-2-3 hold. Suppose that the sequence $\{x^{(k)}\}_{k \in \mathbb{N}}$ admits a limit point $\bar x$. Then the sequence has finite length, i.e.

$$\sum_{k=0}^{+\infty} \|x^{(k+1)} - x^{(k)}\| < +\infty$$

and therefore the sequence $\{x^{(k)}\}_{k \in \mathbb{N}}$ converges to $\bar x$.

Main idea of the proof:

- define a suitable neighborhood $B_\rho$ of the limit point $\bar x$ such that the KL inequality can be applied at the point $\big(u_1^{(k,\ell_1)}, \dots, u_p^{(k,\ell_p)}\big)$ whenever it belongs to $B_\rho$;
- prove that the following basic inequality holds:

  $$2\|t^{(k)}\| \le \|t^{(k-1)}\| + \phi_k, \qquad (5)$$

  whenever the subiterates generated by the algorithm belong to $B_\rho$, where $\phi_k$ is a quantity depending on the objects of the KL definition, and $t^{(k)}$ is a column vector in which the vectorial differences $x_i^{(k,\ell+1)} - x_i^{(k,\ell)}$, $i = 1, \dots, p$, $\ell = 0, \dots, L_i - 1$, are stacked;
- show by induction that, for all $k \ge k_0$, all the subiterates of the algorithm belong to $B_\rho$;
- use (5) to prove the thesis.


Poisson blind deconvolution

Given an observed image $g \sim \mathrm{Poisson}(\omega \otimes x + b e)$, $g \in \mathbb{R}^{n^2}$, where:

- $x \in \mathbb{R}^{n^2}$ is the original object;
- $\omega \in \mathbb{R}^{n^2}$ is the PSF of the acquisition system;
- $\otimes$ denotes the convolution operator (with periodic BCs);
- $e \in \mathbb{R}^{n^2}$ is the vector of all ones;
- $b > 0$ is the (constant and known) background term;

the objective is to recover both $x$ and $\omega$.


Poisson blind deconvolution

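$$\operatorname*{argmin}_{x \in \Omega_x,\ \omega \in \Omega_\omega} \; F(x, \omega) \equiv KL(x, \omega) + \rho_x TV(x) + \rho_\omega TV(\omega),$$

- $KL$ is the generalized Kullback–Leibler divergence

  $$KL(x, \omega) = \sum_{i=1}^{n^2} g_i \log\left(\frac{g_i}{(\omega \otimes x)_i + b}\right) + (\omega \otimes x)_i + b - g_i$$

- $TV$ is the standard total variation functional

  $$TV(x) = \sum_{i=1}^{n^2} \|\nabla_i x\|_2$$

- $\rho_x, \rho_\omega$ are positive regularization parameters
- $\Omega_x = \{x \in \mathbb{R}^{n^2} \mid x \ge 0\}$
- $\Omega_\omega = \{\omega \in \mathbb{R}^{n^2} \mid \omega \ge 0, \ \sum_{i=1}^{n^2} \omega_i = 1\}$.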
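The two building blocks of the objective can be evaluated with a few lines of plain Python. The sketch below is only illustrative: the function names are hypothetical, the model values $y_i = (\omega \otimes x)_i + b$ are assumed to be precomputed and positive, the convention $0 \log 0 = 0$ is used, and the discrete gradient $\nabla_i$ is assumed to be built from forward differences with periodic boundaries (the talk does not specify the discretization).

```python
import math


def generalized_kl(g, y):
    """sum_i g_i * log(g_i / y_i) + y_i - g_i, with 0 * log(0) := 0 and y_i > 0."""
    total = 0.0
    for gi, yi in zip(g, y):
        if gi > 0.0:
            total += gi * math.log(gi / yi)
        total += yi - gi
    return total


def total_variation(img):
    """Isotropic TV of a 2-D image (list of rows), forward differences,
    periodic boundary conditions (an assumption of this sketch)."""
    n_rows, n_cols = len(img), len(img[0])
    tv = 0.0
    for r in range(n_rows):
        for c in range(n_cols):
            dx = img[r][(c + 1) % n_cols] - img[r][c]
            dy = img[(r + 1) % n_rows][c] - img[r][c]
            tv += math.hypot(dx, dy)  # Euclidean norm of the discrete gradient
    return tv


print(generalized_kl([1.0, 2.0], [1.0, 2.0]))      # data equal to the model
print(total_variation([[0.0, 1.0], [0.0, 1.0]]))   # two vertical stripes
```

The divergence vanishes when the data coincide with the model values and is positive otherwise, while the total variation of a constant image is zero.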


Test problem

Figure: From left to right: true image, PSF and blurred and noisy data.


Parameters setting

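Let $w \in \{x, \omega\}$ be one of the two blocks of variables.

Scaling matrix

- SG: Split Gradient matrix

  $$\big(D_w^{(k,\ell)}\big)^{-1} = \max\left\{ \min\left\{ \frac{w^{(k,\ell)}}{V(w^{(k,\ell)})}, \mu \right\}, \frac{1}{\mu} \right\}$$

  where $V(w^{(k,\ell)})$ comes from the gradient decomposition $\nabla_w KL(w) = V(w) - U(w)$, with $V(w) > 0$, $U(w) \ge 0$.
- I: Identity matrix.

Steplength

$\alpha_w^{(k,\ell)}$ is computed by alternating the two Barzilai-Borwein rules

$$\alpha_w^{BB1} = \frac{s^{(k,\ell)T} D_w^{(k,\ell)} D_w^{(k,\ell)} s^{(k,\ell)}}{s^{(k,\ell)T} D_w^{(k,\ell)} z^{(k,\ell)}}, \qquad \alpha_w^{BB2} = \frac{s^{(k,\ell)T} \big(D_w^{(k,\ell)}\big)^{-1} z^{(k,\ell)}}{z^{(k,\ell)T} \big(D_w^{(k,\ell)}\big)^{-1} \big(D_w^{(k,\ell)}\big)^{-1} z^{(k,\ell)}}$$

where $s^{(k,\ell)} = w^{(k,\ell)} - w^{(k,\ell-1)}$ and $z^{(k,\ell)} = \nabla_w KL(w^{(k,\ell)}) - \nabla_w KL(w^{(k,\ell-1)})$.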
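For a diagonal scaling matrix, both the split-gradient scaling and the two Barzilai-Borwein quotients reduce to elementwise operations. The following sketch assumes that $D_w^{(k,\ell)}$ is stored through its diagonal `d_diag`; the function names, the clipping of the steplengths to `[alpha_min, alpha_max]`, and the fallback to `alpha_max` when a quotient is not positive are assumptions of this example (a common safeguard), not details taken from the talk.

```python
def sg_inverse_scaling(w, v, mu):
    """Diagonal of D^{-1}: the ratio w / V(w) clipped to [1/mu, mu] elementwise."""
    return [max(min(wi / vi, mu), 1.0 / mu) for wi, vi in zip(w, v)]


def bb_steplengths(s, z, d_diag, alpha_min=1e-5, alpha_max=1e5):
    """Scaled Barzilai-Borwein steplengths (BB1, BB2) for diagonal D."""
    s_dd_s = sum(di * di * si * si for si, di in zip(s, d_diag))
    s_d_z = sum(si * di * zi for si, zi, di in zip(s, z, d_diag))
    s_dinv_z = sum(si * zi / di for si, zi, di in zip(s, z, d_diag))
    z_dinv2_z = sum(zi * zi / (di * di) for zi, di in zip(z, d_diag))
    clip = lambda a: min(max(a, alpha_min), alpha_max)
    # Fall back to alpha_max when the curvature estimate is not positive.
    bb1 = clip(s_dd_s / s_d_z) if s_d_z > 0.0 else alpha_max
    bb2 = clip(s_dinv_z / z_dinv2_z) if s_dinv_z > 0.0 else alpha_max
    return bb1, bb2


# Illustrative data (assumed): gradient differences of a quadratic with Hessian 2*I.
s, z = [1.0, 2.0], [2.0, 4.0]
print(bb_steplengths(s, z, [1.0, 1.0]))
print(sg_inverse_scaling([1.0, 100.0, 0.001], [2.0, 1.0, 1.0], mu=10.0))
```

With the identity scaling both rules return the inverse curvature $0.5$; with $D = 2I$ they return $1$, since the scaled step $\alpha D^{-1}\nabla$ then already absorbs the curvature.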


Parameters setting

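Automatic choice of the error sequence

Choose $\tau > 0$. If we find $u_w^{(k,\ell)}$, $v_w^{(k,\ell)}$ such that

$$h_w^{(k,\ell)}(u_w^{(k,\ell)}) \le \left(\frac{1}{1 + \tau}\right) \Psi_w^{(k,\ell)}(v_w^{(k,\ell)}),$$

then it follows

$$G_w^{(k,\ell)}(u_w^{(k,\ell)}, v_w^{(k,\ell)}) \le -\tau h_w^{(k,\ell)}(u_w^{(k,\ell)})$$

which implies that $u_w^{(k,\ell)}$ is an $\epsilon_w^{(k,\ell)}$-approximation with $\epsilon_w^{(k,\ell)} = -\tau h_w^{(k,\ell)}(u_w^{(k,\ell)})$.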
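The implication used in the automatic choice of the error sequence takes one line to verify. Dropping indices and writing $h = h(u)$, $\Psi = \Psi(v)$ and $G = h - \Psi$ for the primal–dual gap:

```latex
\begin{aligned}
h \le \frac{1}{1+\tau}\,\Psi
  &\iff (1+\tau)\,h \le \Psi \qquad (\text{since } 1+\tau > 0) \\
  &\implies G = h - \Psi \le h - (1+\tau)\,h = -\tau h .
\end{aligned}
```

Since the algorithm only accepts points with $h < 0$, the resulting accuracy $\epsilon = -\tau h$ is positive and shrinks automatically as $h$ approaches zero, i.e. as the inner iterate approaches stationarity.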

Stopping criterion for the inner iterates

Stop the inner iterate $w^{(k,\ell)}$ when $|h_w^{(k,\ell)}(u_w^{(k,\ell)})|$ is sufficiently small, i.e. when

$$\eta_w^{(k)} \le h_w^{(k,\ell)}(u_w^{(k,\ell)}) < 0$$

where the adaptive parameter $\eta_w^{(k)} < 0$ is initialized as $\eta_w^{(0)} = \epsilon \cdot h_w^{(0,0)}(u_w^{(0,0)})$, with $\epsilon > 0$ a prefixed tolerance, and it is updated as

$$\eta_w^{(k)} = \begin{cases} 0.5 \cdot \eta_w^{(k-1)} & \text{if } h_w^{(k,1)}(u_w^{(k,1)}) \ge \eta_w^{(k-1)} \\ \eta_w^{(k-1)} & \text{otherwise.} \end{cases}$$
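The stopping test and the update of the adaptive tolerance amount to two small functions. This is a sketch with hypothetical names (`should_stop_inner`, `update_eta`); `h_val` stands for the current value $h_w^{(k,\ell)}(u_w^{(k,\ell)})$ and `h_first` for the value $h_w^{(k,1)}(u_w^{(k,1)})$ used in the update rule.

```python
def should_stop_inner(h_val, eta):
    """Stop the inner loop when eta <= h_val < 0, i.e. |h_val| is small enough."""
    return eta <= h_val < 0.0


def update_eta(eta_prev, h_first):
    """Halve the (negative) tolerance when h_first already satisfies it,
    otherwise keep the previous tolerance."""
    return 0.5 * eta_prev if h_first >= eta_prev else eta_prev


eta = -1.0                       # illustrative initial tolerance (assumed value)
print(should_stop_inner(-0.3, eta), should_stop_inner(-2.0, eta))
print(update_eta(eta, -0.5), update_eta(eta, -2.0))
```

Halving $\eta_w^{(k)}$ makes the criterion stricter exactly when the early inner iterate already meets the current tolerance, so the number of inner iterations adapts as the outer iterations progress.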


Results

[Three plots, one per test problem: $f(x^{(k)}, \omega^{(k)}) - f_{\lim}$ (logarithmic scale) versus time (s), from 0 to 60 s. Curves: $D_w^{(k,\ell)} = I$, $D_w^{(k,\ell)} = SG$, BC-VMFB-1, BC-VMFB-2.]

Figure: Test phantom (left), satellite (center) and crab (right). Decrease of the objective function versus time. Comparison made with the Block coordinate VMFB (green lines) [1].

[1] E. Chouzenoux, J.-C. Pesquet, A. Repetti, J. Glob. Optim. 66(3), 457–485, 2016.


Results

[Four plots: RMSE$(x^{(k)})$ and RMSE$(\omega^{(k)})$ versus time (s), from 0 to 60 s. Curves: $D_w^{(k,\ell)} = I$, $D_w^{(k,\ell)} = SG$, BC-VMFB-1, BC-VMFB-2.]

Figure: Test phantom (above) and satellite (below). RMSE versus time on the image (left) and the PSF (right). Comparison made with the Block coordinate VMFB (green lines) [1].

[1] E. Chouzenoux, J.-C. Pesquet, A. Repetti, J. Glob. Optim. 66(3), 457–485, 2016.


Results

[Two plots: RMSE$(x^{(k)})$ and RMSE$(\omega^{(k)})$ versus time (s), from 0 to 60 s. Curves: $D_w^{(k,\ell)} = I$, $D_w^{(k,\ell)} = SG$, BC-VMFB-1, BC-VMFB-2.]

Figure: Test crab. RMSE versus time on the image (left) and the PSF (right). Comparison made with the Block coordinate VMFB (green lines) [1].

[1] E. Chouzenoux, J.-C. Pesquet, A. Repetti, J. Glob. Optim. 66(3), 457–485, 2016.


Results

RMSE$(x^{(k)})$

| $L_x^{(k)} \backslash L_\omega^{(k)}$ | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | $\min\{L_\omega^{(k)}, 10\}$ |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | 0.542 | 0.438 | 0.386 | 0.357 | 0.359 | 0.384 | 0.407 | 0.406 | 0.417 | 0.423 | – |
| 2 | 0.522 | 0.422 | 0.371 | 0.346 | 0.343 | 0.351 | 0.363 | 0.375 | 0.386 | 0.396 | – |
| 3 | 0.62 | 0.472 | 0.397 | 0.348 | 0.333 | 0.336 | 0.345 | 0.358 | 0.37 | 0.38 | – |
| 4 | 0.643 | 0.48 | 0.399 | 0.352 | 0.33 | 0.328 | 0.335 | 0.345 | 0.356 | 0.367 | – |
| 5 | 0.706 | 0.538 | 0.438 | 0.377 | 0.338 | 0.325 | 0.32 | 0.324 | 0.331 | 0.34 | – |
| 6 | 0.708 | 0.544 | 0.443 | 0.384 | 0.345 | 0.325 | 0.348 | 0.33 | 0.319 | 0.322 | – |
| 7 | 0.76 | 0.604 | 0.495 | 0.419 | 0.368 | 0.337 | 0.319 | 0.317 | 0.318 | 0.324 | – |
| 8 | 0.784 | 0.622 | 0.505 | 0.422 | 0.366 | 0.332 | 0.317 | 0.314 | 0.32 | 0.327 | – |
| 9 | 0.811 | 0.65 | 0.539 | 0.46 | 0.393 | 0.356 | 0.324 | 0.313 | 0.313 | 0.318 | – |
| 10 | 0.814 | 0.655 | 0.545 | 0.465 | 0.404 | 0.356 | 0.328 | 0.315 | 0.312 | 0.314 | – |
| $\min\{L_x^{(k)}, 10\}$ | – | – | – | – | – | – | – | – | – | – | 0.321 |

RMSE$(\omega^{(k)})$

| $L_x^{(k)} \backslash L_\omega^{(k)}$ | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | $\min\{L_\omega^{(k)}, 10\}$ |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | 0.279 | 0.193 | 0.132 | 0.072 | 0.05 | 0.122 | 0.197 | 0.179 | 0.227 | 0.262 | – |
| 2 | 0.236 | 0.165 | 0.115 | 0.071 | 0.042 | 0.049 | 0.075 | 0.104 | 0.132 | 0.161 | – |
| 3 | 0.26 | 0.181 | 0.133 | 0.087 | 0.052 | 0.039 | 0.053 | 0.076 | 0.1 | 0.121 | – |
| 4 | 0.254 | 0.174 | 0.129 | 0.093 | 0.061 | 0.042 | 0.043 | 0.058 | 0.078 | 0.098 | – |
| 5 | 0.264 | 0.188 | 0.143 | 0.11 | 0.079 | 0.063 | 0.046 | 0.04 | 0.046 | 0.058 | – |
| 6 | 0.265 | 0.191 | 0.145 | 0.114 | 0.086 | 0.062 | 0.086 | 0.068 | 0.049 | 0.04 | – |
| 7 | 0.27 | 0.202 | 0.158 | 0.127 | 0.101 | 0.079 | 0.056 | 0.05 | 0.041 | 0.043 | – |
| 8 | 0.268 | 0.201 | 0.158 | 0.126 | 0.098 | 0.075 | 0.057 | 0.043 | 0.04 | 0.045 | – |
| 9 | 0.27 | 0.205 | 0.163 | 0.135 | 0.109 | 0.09 | 0.066 | 0.05 | 0.041 | 0.041 | – |
| 10 | 0.271 | 0.203 | 0.163 | 0.136 | 0.113 | 0.09 | 0.07 | 0.056 | 0.045 | 0.041 | – |
| $\min\{L_x^{(k)}, 10\}$ | – | – | – | – | – | – | – | – | – | – | 0.041 |

Table: Relative mean squared error versus the number of inner iterations (phantom).


Conclusions and future work

Conclusions:

- a new block-coordinate proximal-gradient method for nonconvex nonsmooth optimization
- possibility to perform a variable, bounded number of proximal-gradient steps to update each block
- variable metric + Armijo-like rule
- convergence under KL property + local Lipschitz continuity
- numerical results show the improvements in adopting a variable number of inner iterations combined with a variable metric of the proximal operator

Future work:

- convergence under KL property + proximal errors
- generalization to nonconvex regularizers


VMILA Software

Main reference:

S. Bonettini, M. Prato, and S. Rebegoldi (2018). A block coordinate variable metric linesearch based proximal gradient method. Computational Optimization and Applications, 1–48.

http://www.oasis.unimore.it/site/home/software.html
