Alu design-project

ENEL 619.76

EMBEDDED SYSTEM

FINAL PROJECT

ARM ALU

Group Members:

Mohammad Ahmadi

Michael Chen

Yifeng Qiu

Roghoyeh Salmeh

Contents

1. Arithmetic Logic Unit (ALU)

2. Project Specification

3. Logic Units

4. Carry Look Ahead Adder

5. Wallace Tree Multiplier

6. Verilog Program

7. Simulation Results

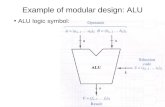

1. Arithmetic Logic Unit (ALU)

An arithmetic-logic unit (ALU) is the part of a computer processor (CPU) that carries out

arithmetic and logic operations on the operands in computer instruction words. The ALU

performs operations such as addition, subtraction and multiplication of integers, and

bitwise AND, OR, NOT, XOR and other Boolean operations. The CPU's instruction decode

logic determines which particular operation the ALU should perform, the source of the

operands and the destination of the result.

The width in bits of the words which the ALU handles is usually the same as that quoted

for the processor as a whole whereas its external buses may be narrower. Floating-point

operations are usually done by a separate "floating-point unit". Some processors use the

ALU for address calculations (e.g. incrementing the program counter), others have

separate logic for this.

In some processors, the ALU is divided into two units, an arithmetic unit (AU) and a

logic unit (LU). Some processors contain more than one AU - for example, one for fixed-

point operations and another for floating-point operations. (In personal computers

floating point operations are sometimes done by a floating point unit on a separate chip

called a numeric coprocessor.)

Typically, the ALU has direct input and output access to the processor controller, main

memory (random access memory or RAM in a personal computer), and input/output

devices. Inputs and outputs flow along an electronic path that is called a bus. The input

consists of an instruction word (sometimes called a machine instruction word) that

contains an operation code (sometimes called an "op code"), one or more operands, and

sometimes a format code. The operation code tells the ALU what operation to perform

and the operands are used in the operation. (For example, two operands might be added

together or compared logically.) The format may be combined with the op code and tells,

for example, whether this is a fixed-point or a floating-point instruction. The output

consists of a result that is placed in a storage register and settings that indicate whether

the operation was performed successfully. (If it was not, some sort of status will be stored in

a permanent place that is sometimes called the machine status word.)

In general, the ALU includes storage places for input operands, operands that are being

added, the accumulated result (stored in an accumulator), and shifted results. The flow of

bits and the operations performed on them in the subunits of the ALU is controlled by

gated circuits. The gates in these circuits are controlled by a sequence logic unit that uses

a particular algorithm or sequence for each operation code. In the arithmetic unit,

multiplication and division are done by a series of adding or subtracting and shifting

operations. There are several ways to represent negative numbers. In the logic unit, one

of 16 possible logic operations can be performed - such as comparing two operands and

identifying where bits don't match.

The design of the ALU is obviously a critical part of the processor and new approaches to

speeding up instruction handling are continually being developed.

2. Project Specification

The ALU is designed around a 5-stage pipeline. One instruction cycle consists of 5 system clocks: the first is for Fetch Instruction, the second for Instruction Decoding, the third for Read Operands, the fourth for Execution and the fifth for Write Back. Because of the 5-stage pipeline, the opcode and the data/address (if any) must be fed into the ALU module on every system clock. In each Write Back cycle, any output data and address are driven onto the output bus. A detailed specification of the ALU is presented in the following section:

2.1. Specification of the ALU

1. 16-bit register based RISC ALU architecture with 8 general registers

2. 5-stage pipeline operation

3. Instructions including signed and unsigned data operations

4. Internal accumulators

5. Condition registers (ZF, CF, SF, OF)

6. Instructions with 1 or 2 operands, with an internal stack

7. Carry look-ahead algorithm to implement ADD operation

8. Wallace Tree algorithm to implement MUL operation

2.2. Set of the functions

The input signals of the ALU module are rst, clk, opcode and data.

rst: reset signal

clk: system clock

opcode: the instruction operation code; bits [13:6] hold the instruction code, [5:3] the register 1 address and [2:0] the register 2 address

data: data or addresses required by the instruction

The output signals of the ALU module are output_address, output_data and pc_counter_address.

output_address: for external memory store instructions, outputs the external memory address

output_data: for external memory store instructions, outputs the data to be stored in the external memory

pc_counter_address: for jump instructions, outputs the jump target address to the external PC
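The opcode field layout described above can be illustrated with a short Python sketch. The function name `decode_opcode` and the sample instruction code 0x2A are made up for illustration; only the bit positions [13:6], [5:3] and [2:0] come from the specification.

```python
def decode_opcode(opcode):
    """Split a 14-bit opcode word into (instruction code, reg1, reg2)."""
    if not 0 <= opcode < (1 << 14):
        raise ValueError("opcode must fit in 14 bits")
    instruction = (opcode >> 6) & 0xFF  # bits [13:6]
    reg1 = (opcode >> 3) & 0x7          # bits [5:3], one of 8 general registers
    reg2 = opcode & 0x7                 # bits [2:0]
    return instruction, reg1, reg2


# Hypothetical word: instruction code 0x2A, register 1 = 3, register 2 = 5.
word = (0x2A << 6) | (3 << 3) | 5
print(decode_opcode(word))
```

Three address bits per register field match the 8 general registers listed in the specification.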

The internal registers are as follows.

system_stack: ALU internal system stack, used for PUSH and POP operations

ZF, CF, SF, OF: four flags for the results of arithmetic instructions

accum: ALU accumulator A

bccum: ALU accumulator B

accum_for_out: internal register introduced to resolve the read-after-write pipeline hazard

General_Register: general purpose registers (AX, BX, CX, DX, EX, FX, GX, HX)

temp_opcode: internal register that temporarily stores the instruction code

temp_register1_address: internal register that temporarily stores the register 1 address

temp_register2_address: internal register that temporarily stores the register 2 address

temp_data: internal register that temporarily stores the data/address

temp_operand1_top: internal register that temporarily stores the top bit (D16) of an arithmetic operation result

operand_number: records the number of operands of the instruction

state_number: the current system clock state (which of the five pipeline cycles is active)

stack_point: internal stack pointer

jumping_tag: indicates whether the program needs to execute a jump

first_round: flag that marks the start-up of the 5-stage pipeline, i.e. the first pass through the system clock states

HLT_FLAG: set to 1 when a HLT instruction is encountered

Carry, G, P: internal registers for the carry look-ahead ADD algorithm

i, td, shift: internal registers for control flow and arithmetic operations

The ALU operates on each positive edge of the system clock. When the rst signal is asserted, all internal signals are cleared and the output signals are set to the high-impedance (High Z) state.

The overall structure of the program:

always @(posedge rst or posedge clk)

{

if (rst is active) initialize the system internal registers and output buses

else

{

if (there is the first round pipeline operation) set the status number register

else status number register=(status + 1) mod 5

implement the first system cycle operation (Fetch Instruction)

implement the second system cycle operation (determine the operand number)

implement the third system cycle operation (Read Operands)

implement the fourth system cycle operation (Execution)

implement the fifth system cycle operation (Write Back)

}

}

3. Logic Units

3.1. AND

(1) Instruction Format: AND Operand1 Operand2

(2) Function: Operand1 and Operand2 are two 16-bit register inputs. This instruction performs the bitwise AND operation and puts the result in Operand1.

The truth table in figure 1-(a) defines the behavior of each bit operation. The circuit symbol is shown in figure 1-(b).

(3) Implementing AND with Verilog HDL:

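    module ALU_AND(Clk, Operand0, Operand1, Result);
        input         Clk;
        input  [15:0] Operand0;
        input  [15:0] Operand1;
        // Result is a port added here: an input port cannot be declared reg
        // or assigned to, so the AND value is registered on an output and the
        // enclosing ALU writes it back to the first operand's register.
        output reg [15:0] Result;
        always @(posedge Clk) Result <= Operand0 & Operand1;
    endmodule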

3.2. OR

(1) Instruction Format: OR Operand1 Operand2

(2) Function: Operand1 and Operand2 are two 16-bit register inputs. This instruction performs the bitwise OR operation and puts the result in Operand1.

The truth table in figure 2-(a) defines the behavior of each bit operation. The circuit symbol is shown in figure 2-(b).

(3) Implementing OR with Verilog HDL:

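    module ALU_OR(Clk, Operand0, Operand1, Result);
        input         Clk;
        input  [15:0] Operand0;
        input  [15:0] Operand1;
        // Result is a port added here: an input port cannot be declared reg
        // or assigned to, so the OR value is registered on an output.
        output reg [15:0] Result;
        always @(posedge Clk) Result <= Operand0 | Operand1;
    endmodule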

3.3. XOR

(1) Instruction Format: XOR Operand1 Operand2

(2) Function: Operand1 and Operand2 are two 16-bit register inputs. This instruction performs the bitwise XOR operation and puts the result in Operand1.

The truth table in figure 3-(a) defines the behavior of each bit operation. The circuit symbol is shown in figure 3-(b).

(3) Implementing XOR with Verilog HDL:

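    module ALU_XOR(Clk, Operand0, Operand1, Result);
        input         Clk;
        input  [15:0] Operand0;
        input  [15:0] Operand1;
        // Result is a port added here: an input port cannot be declared reg
        // or assigned to, so the XOR value is registered on an output.
        output reg [15:0] Result;
        always @(posedge Clk) Result <= Operand0 ^ Operand1;
    endmodule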

3.4. NOT

(1) Instruction Format: NOT Operand1

(2) Function: Operand1 is a 16-bit register input. This instruction performs the bitwise NOT operation on Operand1.

The truth table in figure 4-(a) defines the behavior of each bit operation. The circuit symbol is shown in figure 4-(b).

(3) Implementing NOT with Verilog HDL:

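    module ALU_NOT(Clk, Operand0, Result);
        input         Clk;
        input  [15:0] Operand0;
        // Result is a port added here: an input port cannot be declared reg
        // or assigned to, so the complemented value is registered on an output.
        output reg [15:0] Result;
        always @(posedge Clk) Result <= ~Operand0;
    endmodule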

3.5. NEG

(1) Three different ways of representing signed numbers:

a) Signed magnitude representation: a number is negated by setting an extra sign bit at the front of the number. By convention:

– A 0 sign bit represents a positive number.

– A 1 sign bit represents a negative number.

For example: 01101B = +13D (a positive number in 5-bit signed magnitude)

11101B = -13D (a negative number in 5-bit signed magnitude)

b) One's complement representation: a number is negated by complementing each bit of the number.

For example: 01101B = +13D (a positive number in 5-bit one's complement)

10010B = -13D (a negative number in 5-bit one's complement)

c) Two's complement representation: a number is negated by complementing each bit (just as for one's complement) and then adding 1.

For example: 01101B = +13D (a positive number in 5-bit two's complement)

10011B = -13D (a negative number in 5-bit two's complement)

In this project, we use the third representation, two's complement, to represent signed numbers.
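The three encodings of -13 in 5 bits can be checked with a small Python sketch. The function names are illustrative and not part of the project code; the sketch assumes the value fits in the chosen width.

```python
def sign_magnitude(value, bits):
    """Sign bit in the top position, magnitude in the remaining bits."""
    sign = 1 if value < 0 else 0
    return (sign << (bits - 1)) | abs(value)


def ones_complement(value, bits):
    """Negative values are the bitwise complement of the magnitude."""
    mask = (1 << bits) - 1
    return value if value >= 0 else ~(-value) & mask


def twos_complement(value, bits):
    """Negative values are the complement of the magnitude plus one."""
    mask = (1 << bits) - 1
    return value & mask


for encode in (sign_magnitude, ones_complement, twos_complement):
    print(encode.__name__, format(encode(-13, 5), "05b"))
```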

(2) Instruction Format: NEG Operand1

(3) Function: Operand1 is a 16-bit register input. This instruction negates Operand1 and puts the result in Operand1.

(4) Implementing NEG with Verilog HDL:

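    module ALU_NEG(Clk, Operand0, Result);
        input         Clk;
        input  [15:0] Operand0;
        // Result is a port added here: an input port cannot be declared reg
        // or assigned to, so the negated value is registered on an output.
        output reg [15:0] Result;
        // Two's complement negation: invert every bit, then add 1.
        always @(posedge Clk) Result <= ~Operand0 + 16'd1;
    endmodule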
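As a cross-check of the five logic-unit operations, the following Python sketch models them on 16-bit words. It is a behavioral model only, with illustrative function names; Python integers are unbounded, so each result is masked to 16 bits to mimic the register width.

```python
MASK16 = 0xFFFF  # 16-bit register width


def alu_and(a, b):
    return a & b & MASK16


def alu_or(a, b):
    return (a | b) & MASK16


def alu_xor(a, b):
    return (a ^ b) & MASK16


def alu_not(a):
    return ~a & MASK16


def alu_neg(a):
    # Two's complement negation: invert every bit, add 1, keep 16 bits.
    return (~a + 1) & MASK16


print(hex(alu_not(0x00FF)), hex(alu_neg(13)))
```

Note that negating 0x8000 (the most negative 16-bit value) yields 0x8000 again, since +32768 is not representable in 16-bit two's complement.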

4. Carry Look Ahead Adder

The sum of two single-bit binary numbers can be formed using the logic gates. If both

bits are zero the sum is zero; the sum of a one and a zero is one, but the sum of two ones

is two, which is represented in binary notation by the two bits '10'. An adder for two

single-bit inputs must therefore have two output bits: a SUM bit with the same weight as

the input bits and a CARRY bit which has twice that weight. An adder for N-bit binary

numbers can be constructed from single-bit adders, but all bits except the first may have

to accept a carry input from the next lower stage. Each bit of the adder produces a sum

and a carry-out from the inputs and the carry-in.

The 4-bit ripple-carry adder is so called because the result of an addition of two bits depends on the carry generated by the addition of the previous two bits. Thus, the Sum of the most significant bit is only available after the carry signal has rippled through the adder from the least significant stage to the most significant stage. This can be easily understood if one considers the addition of the two 4-bit words $1111_2 + 0001_2$, as shown in Fig. 5.

Figure 5. Addition of two 4-bit numbers illustrating the generation of the carry-out bit.

In this case, the addition $1 + 1 = 10_2$ in the least significant stage causes a carry bit to

be generated. This carry bit will consequently generate another carry bit in the next stage,

and so on, until the final carry-out bit appears at the output. This requires the signal to

travel (ripple) through all the stages of the adder as shown in Fig. 5. As a result, the final

Sum and Carry bits will be valid after a considerable delay. For the schematic of Fig. 5,

the Sum of the most significant stage will be valid after 2(N-1) + 1 gate delays, in which

N is the number of bits. The carry-out bit will be valid after 2N gate delays. This delay

may be in addition to any delays associated with interconnections. It should be mentioned

that in case one implements the circuit in an FPGA, the delays may be different from the

above expression depending on how the logic has been placed in the look up tables and

how it has been divided among different CLBs.
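The rippling behavior and the delay expressions above can be sketched in Python. This is a bit-level model with illustrative function names, not the project's Verilog; `ripple_delays` simply evaluates the two gate-delay formulas from the text.

```python
def full_adder(a, b, carry_in):
    """One-bit full adder: returns (sum, carry_out)."""
    s = a ^ b ^ carry_in
    carry_out = (a & b) | ((a ^ b) & carry_in)
    return s, carry_out


def ripple_add(x, y, n_bits, carry_in=0):
    """Add two n-bit words by passing the carry from stage to stage."""
    carry = carry_in
    total = 0
    for i in range(n_bits):  # least significant stage first
        s, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        total |= s << i
    return total, carry


def ripple_delays(n_bits):
    """Gate delays for (most significant Sum, carry-out) per the text."""
    return 2 * (n_bits - 1) + 1, 2 * n_bits


print(ripple_add(0b1111, 0b0001, 4))
print(ripple_delays(4), ripple_delays(32))
```

For the 1111 + 0001 case of Fig. 5, every stage produces a carry, so the carry-out is only settled after the carry has passed through all four stages.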

The disadvantage of the ripple-carry adder is that it can get very slow when one needs to add many bits. Fig. 6 shows the delay of the carry bit for a 4-bit ripple-carry adder. For instance, for a 32-bit adder, the carry-out delay would be about 64 ns (2N gate delays) if one assumes a gate delay of 1 ns. That would imply that the maximum frequency at which one can operate this adder would be only about 15 MHz! For fast applications, a better design is required. The

carry-look-ahead adder solves this problem by calculating the carry signals in advance,

based on the input signals. It is based on the fact that a carry signal will be generated in

two cases: (1) when both bits Ai and Bi are 1, or (2) when one of the two bits is 1 and the

carry-in (carry of the previous stage) is 1.

Figure 6. 4-bit ripple-carry adder, showing the delay of the carry bit.

Thus, one can write,

$$C_{OUT} = C_{i+1} = A_i \cdot B_i + (A_i \oplus B_i) \cdot C_i \tag{1}$$

where $\oplus$ stands for exclusive OR (XOR). One can also write this expression as

$$C_{i+1} = G_i + P_i \cdot C_i \tag{2}$$

in which

$$G_i = A_i \cdot B_i \tag{3}$$

$$P_i = A_i \oplus B_i \tag{4}$$

are called the Generate and Propagate terms, respectively.

From (2) – (4) it is clear that both the Propagate and Generate terms only depend on the

input bits and thus will be valid after one gate delay. If we use the above expression to

calculate the carry signals, we do not need to wait for the carry to ripple through all the

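previous stages to find its proper value. Applying this to a 4-bit adder gives:

$$C_1 = G_0 + P_0 \cdot C_0 \tag{5}$$

$$C_2 = G_1 + P_1 \cdot C_1 = G_1 + P_1 \cdot G_0 + P_1 \cdot P_0 \cdot C_0 \tag{6}$$

$$C_3 = G_2 + P_2 \cdot G_1 + P_2 \cdot P_1 \cdot G_0 + P_2 \cdot P_1 \cdot P_0 \cdot C_0 \tag{7}$$

$$C_4 = G_3 + P_3 \cdot G_2 + P_3 \cdot P_2 \cdot G_1 + P_3 \cdot P_2 \cdot P_1 \cdot G_0 + P_3 \cdot P_2 \cdot P_1 \cdot P_0 \cdot C_0 \tag{8}$$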

Now it is clear that the carry-out bit, $C_{i+1}$, of the last stage will be available after three gate delays (one delay to calculate the Propagate signal and two delays as a result of the AND and OR gates). The Sum signal can be calculated as follows,

$$S_i = A_i \oplus B_i \oplus C_i = P_i \oplus C_i \tag{9}$$

The Sum bit will thus be available after one additional gate delay. The advantage is that these delays will be the same independent of the number of bits one needs to add, in contrast to the ripple-carry adder.
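Equations (3) to (9) can be exercised directly in a short Python sketch of a 4-bit carry look-ahead adder. The function name `cla4` is illustrative; each carry is written in its expanded form, so no carry depends on a previously computed carry other than C0.

```python
def cla4(a, b, c0=0):
    """4-bit carry look-ahead add: returns (4-bit sum, carry-out C4)."""
    g = [((a >> i) & 1) & ((b >> i) & 1) for i in range(4)]  # eq. (3)
    p = [((a >> i) & 1) ^ ((b >> i) & 1) for i in range(4)]  # eq. (4)
    # Equations (5) to (8), each expanded down to C0.
    c1 = g[0] | (p[0] & c0)
    c2 = g[1] | (p[1] & g[0]) | (p[1] & p[0] & c0)
    c3 = g[2] | (p[2] & g[1]) | (p[2] & p[1] & g[0]) | (p[2] & p[1] & p[0] & c0)
    c4 = (g[3] | (p[3] & g[2]) | (p[3] & p[2] & g[1])
          | (p[3] & p[2] & p[1] & g[0]) | (p[3] & p[2] & p[1] & p[0] & c0))
    carries = [c0, c1, c2, c3]
    total = 0
    for i in range(4):
        total |= (p[i] ^ carries[i]) << i  # eq. (9)
    return total, c4


print(cla4(0b1111, 0b0001))
```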

The carry-lookahead adder can be broken up into two modules: (A) the Partial Full Adder,

PFA, which generates Si, Pi and Gi as defined by equations 3, 4 and 9 above; and (B) the

Carry Look-ahead Logic, which generates the carry-out bits according to equations 5 to

8. The 4-bit adder can then be built by using 4 PFAs and the Carry Look-ahead logic

block as shown in Fig. 7.

The disadvantage of the carry-lookahead adder is that the carry logic becomes quite

complicated for more than 4 bits. For that reason, carry-look-ahead adders are usually

implemented as 4-bit modules and are used in a hierarchical structure to realize adders

that have multiples of 4 bits. Fig. 8 shows the block diagram for a 16-bit CLA adder. The

circuit makes use of the same CLA Logic block as the one used in the 4-bit adder. Notice

that each 4-bit adder provides a group Propagate and Generate Signal, which is used by

the CLA Logic block.

Figure 7. The block diagram of a 4-bit Carry Look Ahead ADDER.

The group Propagate PG and group Generate GG of a 4-bit adder have the following expressions,

$$PG = P_3 \cdot P_2 \cdot P_1 \cdot P_0 \tag{10}$$

$$GG = G_3 + P_3 \cdot G_2 + P_3 \cdot P_2 \cdot G_1 + P_3 \cdot P_2 \cdot P_1 \cdot G_0 \tag{11}$$

The group Propagate PG and Generate GG will be available after 2 and 3 gate delays, respectively (one and two delays more than the $P_i$ and $G_i$ signals, respectively).
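The hierarchical 16-bit structure can be sketched in Python as follows. This is a behavioral model with illustrative names (`lookahead`, `cla16`), assuming the same look-ahead equations (5) to (8) are reused at the group level with PG and GG taking the place of the bit-level P and G.

```python
def lookahead(p, g, c0):
    """Equations (5)-(8): carries C1..C4 from four P/G pairs and C0."""
    c1 = g[0] | (p[0] & c0)
    c2 = g[1] | (p[1] & g[0]) | (p[1] & p[0] & c0)
    c3 = g[2] | (p[2] & g[1]) | (p[2] & p[1] & g[0]) | (p[2] & p[1] & p[0] & c0)
    c4 = (g[3] | (p[3] & g[2]) | (p[3] & p[2] & g[1])
          | (p[3] & p[2] & p[1] & g[0]) | (p[3] & p[2] & p[1] & p[0] & c0))
    return [c1, c2, c3, c4]


def cla16(a, b, c0=0):
    """16-bit add built from four 4-bit blocks plus one group-level block."""
    p = [((a >> i) & 1) ^ ((b >> i) & 1) for i in range(16)]
    g = [((a >> i) & 1) & ((b >> i) & 1) for i in range(16)]
    pg, gg = [], []
    for k in range(4):
        pk, gk = p[4 * k:4 * k + 4], g[4 * k:4 * k + 4]
        pg.append(pk[3] & pk[2] & pk[1] & pk[0])                   # eq. (10)
        gg.append(gk[3] | (pk[3] & gk[2]) | (pk[3] & pk[2] & gk[1])
                  | (pk[3] & pk[2] & pk[1] & gk[0]))               # eq. (11)
    # Carry into blocks 0..3, followed by the final carry-out.
    group_carry = [c0] + lookahead(pg, gg, c0)
    total = 0
    for k in range(4):
        cin = group_carry[k]
        carries = [cin] + lookahead(p[4 * k:4 * k + 4], g[4 * k:4 * k + 4], cin)[:3]
        for i in range(4):
            total |= (p[4 * k + i] ^ carries[i]) << (4 * k + i)
    return total, group_carry[4]


print(cla16(0xFFFF, 0x0001), cla16(0x1234, 0x4321))
```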

Figure 8. The block diagram of a 16-bit CLA Adder

5. Wallace Tree Multiplier

At the most basic level, digital multiplication can be seen as a series of bit shifts and bit

additions, where two numbers, the multiplier and the multiplicand are combined into the

final result. Consider the multiplication of two numbers: the multiplier P and the multiplicand C, where P is an n-bit number with bit representation $\{p_{n-1}, p_{n-2}, \ldots, p_0\}$, the most significant bit being $p_{n-1}$ and the least significant bit being $p_0$; C has a similar bit representation $\{c_{n-1}, c_{n-2}, \ldots, c_0\}$. For unsigned multiplication, up to n shifted copies of the

multiplicand are added to form the result. The entire procedure is divided into three steps:

partial product (PP) generation, partial product reduction, and final addition. This is

illustrated conceptually in Figure 9. In order to find a convenient and fast structure for

our multiplier, we should consider various multiplier structures.

Figure 9. Digital multiplication flow.

5.1. Partial Product Generation

The initial step in digital multiplication is to generate n shifted copies of the multiplicand

which may then be added in the next stage. Whether a given shifted copy of the

multiplicand is added depends on the value of the multiplier bit corresponding to this

multiplicand copy. If the i-th bit (i = 0 to n-1) of the multiplier is ‘1’, then the

multiplicand is added. If the bit is ‘0’, the multiplicand is not added.

The bit representation of this function can be implemented using a logical AND gate,

which performs AND($c_i$, $p_j$), i = 0 to n-1, j = 0 to n-1. The resulting values are called

partial product bits or simply, partial products; if we line up these partial products by bit

position (bp = i + j), we arrive at a structure shown in Figure 10. In this design, the partial

product bits are arranged in columns, which are to be added together to form the final

value. The resulting trapezoidal structure is called a partial product array or simply PPA.

This step is called partial product array generation.
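Partial product array generation can be sketched in Python as below. The function name and the sample 6-bit operands are illustrative; each partial product bit AND(c_i, p_j) is placed in the column for bit position bp = i + j.

```python
def partial_product_columns(multiplicand, multiplier, n):
    """Return the partial product bits grouped by bit position bp = i + j."""
    columns = [[] for _ in range(2 * n - 1)]
    for j in range(n):      # multiplier bit p_j selects one shifted copy
        for i in range(n):  # multiplicand bit c_i
            bit = ((multiplicand >> i) & 1) & ((multiplier >> j) & 1)
            columns[i + j].append(bit)
    return columns


cols = partial_product_columns(0b101101, 0b110011, 6)
print([len(col) for col in cols])  # column heights of the array
# Weighting each column by 2**bp and summing recovers the product.
print(sum(sum(col) << bp for bp, col in enumerate(cols)) == 0b101101 * 0b110011)
```

The column heights rise from 1 to n and fall back to 1, which is why the middle bit positions need the most additions.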

Various forms of partial product arrays exist, depending on the number representation.

For example, in Section 5.5 we describe a common variant called Booth

recoding, which allows a signed multi-bit partial product representation of the design.

Figure 10. Partial product generation for 6-bit by 6-bit multiplication.

There are several important points to notice about the partial product array. First, in the

most basic formulation (PPA bits generated via logical AND), all the bits are created in

parallel; that is, the static delay of each of the bits is equal. Second, the dimensions of the

array are functions of the size of the multiplier and multiplicand: the height of the array is

proportional to the size of the multiplier, and the width is proportional to the size of the

multiplicand.

Finally, all the bits in a particular column are to be added together, and some columns

have fewer bits than others. Specifically, low-order and high-order bit positions will

require fewer additions than the middle bit positions; slightly more additions will be

required at the high-order positions than at the low-order ones, as carries from low order

positions result in a larger number of bits to be added at the high order bit positions.

5.2. Partial Product Reduction

The heart of an efficient digital multiplier implementation is in the manner in which the

PPA bits are added. Were conventional carry adders used to implement these add

operations, the delay of all the adders would consume a large amount of time, as each

shifted version of the multiplicand would contribute a delay which is proportional to the

width of the multiplicand. Instead, the partial product is reduced using a technique called

carry-save addition. This procedure allows successive additions to be incorporated into

one global addition step.

Consider a numerical bit vector representation of the following form: $(b_{n-1}, b_{n-2}, \ldots, b_1, b_0)$,

$b_i \in \{0, 1\}$. If we wish to add two bits from two bit vectors, say $a_0$ and $b_0$, from bit vectors

a and b, we can use the full adder (Figure 11a); it takes in three bits and produces a sum

bit, and a carry bit. When adding two vectors together, this block can be used to add two

bits at a given bit position with the carry-in from the previous bit position. Consider the

case where two bit vectors are to be added. At the lowest bit position, two bits are added,

and the carry is propagated to the next bit position. From then on the carry-in and the next

two bits at the higher bit position are combined, and a carry-out is generated. Using this

rippling technique, we see that adding two n-bit numbers takes O(n) sequential bit

additions, resulting in a delay of O(n).

If we have to add three bit vectors, A, B, and C, each of size n, we can use this method to

add first A and B, and then to add C to the result of A+B. The number of bit additions is

about 2n. We see that if we were to use this technique in the most simple-minded fashion to

add n shifted copies of an n-bit multiplicand, the delay will be $O(n^2)$. This occurs because

we assume that the add operations are dependent on previous add operations (the output

of earlier operations are inputs to later operations). See Figure 11.

Although the final result comes about from combining all add operations, a certain

amount of independence exists between each operation. We can consider the add

operation on a column by column basis; all the bits in a column must be added together,

along with the carry-in bits of the previous column. The delay in calculating the output of

a given column is a function of when the carry-in bits (from the previous column) are

available, as well as the number of bits which are to be added (Figure 11). Carry-save

addition leverages the fact that the add operations in separate columns can be performed

independently.

Figure 11. Partial product addition. (a) Full adder cell. (b) Basic ripple adder. (c) We can use ripple addition to add all the shifted copies of the multiplicand. (d) Since there are n-1 ripple adders, each of width n, this basic method takes $O(n^2)$ time.

If we are to add three vectors of bits, we can use full adders to add the three bits in each

column. The result is a carry and a sum bit at each bit position, except at the lowest and

highest bit positions, which have one bit each. We see that three bit vectors have been

“reduced” to two bit vectors, in the delay necessary for a full adder. Using this technique

called carry-save addition, we can ’reduce’ a set of vectors to be added together down to

two bit vectors. Since the full adders are the basic addition element, full adders used in

this context are often called carry-save adders or CSA’s. Using carry-save addition is the

prime reason why multiplication is much faster than would be predicted by the number of

additions necessary.
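A word-level Python sketch of one carry-save step is given below. The function name and sample operands are illustrative. Each bit position acts as an independent full adder, so no carry travels between columns during the step; the carry vector is simply shifted one position to the left.

```python
def carry_save_add(a, b, c):
    """Reduce three bit vectors to a (sum vector, carry vector) pair."""
    sum_vector = a ^ b ^ c                       # per-column sum bits
    carry_vector = ((a & b) | (a & c) | (b & c)) << 1  # per-column carries
    return sum_vector, carry_vector


s, c = carry_save_add(0b1011, 0b0110, 0b1101)
print(bin(s), bin(c), s + c == 0b1011 + 0b0110 + 0b1101)
```

Only the final addition of the two remaining vectors needs a carry-propagating adder.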

5.3. Array Style Reduction

Given the trapezoidal array of partial product bits which must be added using carry-save

addition, there exist several ways to implement a partial product reduction adder. In this

section, we describe the most basic method, called array partial product reduction.

For example, in Figure 12a, we see the trapezoidal PPA generated for a 6-bit by 6-bit

multiplication. We can take the first three bit vectors, and add them using full adders, as

in Figure 12b. Combining the results of the addition with the remaining bits of the PPA,

we get the result shown in Figure 12c. As we can see, three vectors have been

reduced to two vectors.

Figure 12. Array partial product reduction: (a) the initial partial product array (b) using a row of carry-save full adders to reduce 3 bit vectors down to two (c) resulting PPA (d) full array structure.

Note that the outputs of this first set of full adders can now serve as inputs to another row

of full adders, along with another bit vector. This structure repeats until the full array is

instantiated as in Figure 12d.

The notable characteristic of the array architecture is its regular structure. This has the advantage that it is very easy to lay out, as a single adder block and associated connections are replicated across the width and depth of the array. The delay of this block is a function of the number of rows, O(n), which is a big improvement over the simple-minded scheme of using conventional adders for each row. However, it is possible to do better.

5.4. Wallace Tree Partial Product Reduction

In 1964, C.S. Wallace observed that the later stages of the array structure must always wait for all the earlier stages to complete before their final values will be established. When performing a series of independent add operations, it is possible to create a structure that has less delay by performing the addition operations in parallel, where possible. For example, in the partial product array for 6-bit x 6-bit multiplication, two

carry-save reductions can be done in parallel, resulting in a smaller PPA after just one

step (Figure 12a-c.) This can be repeated, yielding the structure shown in Figure 12d.

(The final set of connections is somewhat complicated to draw here).

Obviously, parallelizing the carry-save operations will yield a delay which is much

shorter than the array’s sequential series of operations. When using carry-save addition,

we take three input bit vectors and reduce these to two output bit vectors. A sequential

carry-save procedure will reduce the number of bit vectors by one at each stage, whereas

the parallel method can take sets of 3 vectors and reduce them to sets of 2 vectors. The

number of stages, and hence the delay, of the sequential operation will be O(n), whereas

the parallel method will be $O(\log_{3/2} n)$. Such parallel arrangements of CSA blocks are

called Wallace trees and allow for a large reduction in the delay of the partial product

reduction stage. The disadvantage of Wallace trees lies in their irregular layout

(especially with respect to array structures), resulting in potentially greater wire loads.
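The parallel reduction can be sketched in Python at word level. This is a simplification with illustrative names: it groups whole shifted partial product vectors in threes, whereas a real Wallace tree layout works column by column, but the stage count follows the same 3-to-2 pattern.

```python
def carry_save_add(a, b, c):
    """3:2 reduction of three bit vectors into (sum, carry) vectors."""
    return a ^ b ^ c, ((a & b) | (a & c) | (b & c)) << 1


def wallace_reduce(vectors):
    """Apply parallel carry-save stages until at most two vectors remain."""
    stages = 0
    while len(vectors) > 2:
        reduced = []
        full_groups = len(vectors) // 3
        for k in range(full_groups):  # these groups are independent
            reduced.extend(carry_save_add(*vectors[3 * k:3 * k + 3]))
        reduced.extend(vectors[3 * full_groups:])  # leftovers pass through
        vectors = reduced
        stages += 1
    return vectors, stages


def wallace_multiply(multiplicand, multiplier, n):
    """Unsigned n-bit multiply: returns (product, number of CSA stages)."""
    partials = [(multiplicand << j) if (multiplier >> j) & 1 else 0
                for j in range(n)]
    vectors, stages = wallace_reduce(partials)
    return sum(vectors), stages  # the final sum stands in for the final adder


print(wallace_multiply(45, 51, 6))
print(wallace_multiply(0x7FFF, 0x7FFF, 15))
```

In this model, 6 partial products need 3 stages and 15 need 6, compared with 4 and 13 stages for a sequential chain that removes one vector per stage.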

Figure 12. Wallace tree partial product reduction. (a) partial product array (b) parallel

carry-save addition (c) resulting PPA (d) complete Wallace tree structure.

Furthermore, note that array structures result in a final add operation of width n, whereas

the final adder in Wallace trees has a width of approximately $2n - \log_{3/2} n$.

5.5. Partial Product Reduction/Generation Using Booth Recoding

The technique of Booth recoding is based on the observation that under certain

conditions, namely when a bit in the multiplier is ‘0’, a bank of carry-save adders does

not perform a useful function; that is, a ’0’ is added to the accumulated carry-save result,

and the input bits are simply propagated to the output bits. In this case, these carry-save

adders could be removed from the multiplier structure, resulting in delay and power

savings. Unfortunately, it is not generally possible to know a priori what bits of the

multiplier will be ‘0’. To maintain generality, we must provide for the case when all bits

of the multiplier are ’1’. It may be possible to reduce circuitry, however, if one considers

the largest delay case. In a 4-bit x 4-bit multiplication, circuitry must be provided for the

case where the multiplier is ‘1111’---resulting in a delay of 4 stages. An important

observation is that multiplying by ‘1111’ is the same as multiplying by ‘10000’, and

subtracting the multiplicand from the result--- multiplying by a power of two is simply a

shift, so this costs two stages. Therefore, we have cut down our worst case from 4 stages

of delay to 2 stages of delay. This type of stage reduction can be generalized into the

technique known as Booth recoding. Three bits of the multiplier are used to determine

whether a shifted and/or complemented copy of the multiplicand is to be used. Two bits

of the multiplicand are multiplexed to create the actual value. The encoding

scheme is shown in Table 1.

The connections of the Booth multiplexors are shown in Figure 13. The net result is that

the size of the PPA array is reduced, since fewer shifted copies of the multiplicand are

necessary in the partial product array. The number of these multiplicand ‘copies’ needed

in the partial product array depends on the degree to which Booth recoding is applied.

Generally, each level of recoding cuts the number of partial product bits in half. There is

additional circuitry involved in performing the recoding however, so this optimization

entails inserting complicated logic which itself adds delay and power consumption. In

practice, Booth recoding is applied to one level (called radix-4) or two levels, but hardly

ever more than two levels.
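Radix-4 recoding can be sketched in Python as follows. Table 1 is not reproduced in this transcript, so the sketch assumes the standard radix-4 Booth rule, in which each overlapping group of three multiplier bits gives the digit y(i-1) + y(i) - 2·y(i+1), with values from -2 to +2. The function names are illustrative, and the multiplier is treated as unsigned.

```python
def booth_radix4_digits(multiplier, n_bits):
    """Recode an unsigned n-bit multiplier into radix-4 digits in -2..2."""
    padded = multiplier << 1  # appends the implicit bit y(-1) = 0
    digits = []
    for i in range(0, n_bits + 1, 2):
        y_low = (padded >> i) & 1         # y(i-1)
        y_mid = (padded >> (i + 1)) & 1   # y(i)
        y_high = (padded >> (i + 2)) & 1  # y(i+1), zero above the top bit
        digits.append(y_low + y_mid - 2 * y_high)
    return digits  # least significant digit first


def booth_multiply(multiplicand, multiplier, n_bits):
    """Sum the selected multiples (0, +/-M, +/-2M), each weighted by 4**k."""
    digits = booth_radix4_digits(multiplier, n_bits)
    return sum(d * (multiplicand << (2 * k)) for k, d in enumerate(digits))


print(booth_radix4_digits(0b1111, 4))
print(booth_multiply(13, 0b1111, 4))
```

For the multiplier 1111 the digits are -1, 0 and +1, which is the "multiply by 10000 and subtract the multiplicand" case described above: only two nonzero partial products remain.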

Table 1. Booth Encoding

Figure 13. Booth recoding (radix 4).

Booth recoding was initially applied to array multipliers, where a reduction in the size of

the PPA by a factor of two means reducing the delay of the partial product reduction stage by a

factor of two. Note, however, that cutting the size of the PPA in half has less of an impact

on the Wallace tree scheme, since Wallace trees reduce the size of the PPA at a

logarithmic rate; the savings from Booth recoding yield a reduction of 1-2 levels of logic.

Furthermore, there is logic involved in the actual Booth recoding process. Therefore, it is unclear whether there is an advantage in applying Booth recoding to Wallace trees. Doubts have been expressed about the validity of Booth recoding for Wallace trees even for 64-bit x 64-bit multipliers. We therefore chose the Wallace tree partial product reduction method without Booth recoding, because its implementation appears easier. Besides, Wallace's method speeds up the multiplication sufficiently.

5.6. Final Adder

A common method for achieving low delay in multipliers is to speed up the final addition

stage. Several optimizations exist for performing high-speed addition. The

straightforward application of these designs to multipliers has resulted in various designs

with high speed or low power.

We do not discuss the various adder structures in this section; they are covered in the adder section of this report (Section 4). However, it should be noted that we have used the carry look-ahead adder structure for the implementation of our final adder, because this type of adder is faster than the simple ripple-carry adder structure.

Finally, the overall architecture of our multiplier is depicted in Figure 14. It should be noted that the 16th bits of the multiplier and multiplicand (A[15] and B[15]) are sign bits. We split them off from the multiplier and multiplicand and compute the sign of the result with an XOR whose inputs are the 16th bits of the two inputs. So bit 31 of the result is A[15] XOR B[15], and bit 30 of the result is zero. The other 30 bits are computed by multiplying the two 15-bit unsigned multiplier and multiplicand values, as shown in Figure 14. Each of the F blocks is an array of half adder and full adder cells that has three inputs and two outputs.
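The assembly of the 32-bit result from the sign bits and the 15-bit magnitudes can be sketched in Python. The function name is illustrative, and Python's built-in multiplication stands in for the Wallace tree and final adder.

```python
def signed_magnitude_multiply(a, b):
    """Combine sign and magnitude of two 16-bit words into a 32-bit result."""
    sign = ((a >> 15) ^ (b >> 15)) & 1       # result bit 31 = A[15] XOR B[15]
    magnitude = (a & 0x7FFF) * (b & 0x7FFF)  # 15-bit x 15-bit unsigned product
    return (sign << 31) | magnitude          # bit 30 is never set


print(hex(signed_magnitude_multiply(0x8003, 0x0005)))
print(hex(signed_magnitude_multiply(0x8003, 0x8005)))
```

Bit 30 stays zero because the largest magnitude product, 0x7FFF x 0x7FFF, is still smaller than 2 to the power 30.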

Figure 14. The overall structure of our multiplier.
