AI Lecture Notes - CAU (ai.cau.ac.kr/teaching/ai-2011/12a-feature-selection.pdf)

Feature Selection

School of Computer Science & Engineering Chung-Ang University

Artificial Intelligence

Dae-Won Kim

What is feature selection?

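Must all of the features be used?

We predict the grade of ‘AI’ using the following training data.

| Name | Age | Sex | Alg. | C++ | Military | Height | Weight | C | Smoke | Class label |
|---|---|---|---|---|---|---|---|---|---|---|
| Student-1 | 21 | M | A | B | Y | 178 | 80 | A | Yes | A |
| Student-2 | 23 | F | A | A | N | 165 | 50 | A | No | B |
| Student-3 | 22 | F | B | C | N | 160 | 45 | C | Yes | A |
| Student-4 | 21 | M | B | A | N | 180 | 70 | B | No | C |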

Definition: Given a set of features, select a subset that performs best.

Q: Advantages?

Benefit-1: simpler and faster

It reduces the number of features.

Benefit-2: cost-effective

It lowers costs such as computational cost and clinical cost.

Benefit-3: better accuracy

It may improve accuracy by removing useless features.

Benefit-4: deeper insight

Knowledge of good features gives insights into the underlying structure.

Caution: Feature Selection vs. Feature Transformation

Feature transformation is related to scaling and normalization.

Certain transformations of features may reveal structures that were not obvious on the original scale.

For scaling, we usually take square roots, reciprocals, and logarithms.
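As a small illustration of these scaling transforms, the sketch below applies a square root, a reciprocal, and a base-10 logarithm to one feature. The values are hypothetical and chosen only to show how a logarithm evens out a feature that spans several orders of magnitude. All three transforms assume strictly positive inputs.

```python
import math

# Hypothetical feature values spanning several orders of magnitude
# (illustrative only, not taken from the lecture data).
values = [1.0, 10.0, 100.0, 1000.0]

sqrt_scaled = [math.sqrt(v) for v in values]        # compresses large values mildly
reciprocal_scaled = [1.0 / v for v in values]       # reverses the ordering
log_scaled = [math.log10(v) for v in values]        # turns ratios into differences

print(sqrt_scaled)
print(reciprocal_scaled)
print(log_scaled)
```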

We also handle the question of normalization.

Features are often normalized to lie in a fixed range, typically from zero to one.

1. By dividing all values by the maximum value encountered.

2. By subtracting the minimum value and dividing by the range between the maximum and the minimum.

3. By calculating the mean and standard deviation of the feature, subtracting the mean from each value, and dividing the result by the standard deviation.

This is also called standardization (zero mean and unit standard deviation). Unlike the first two methods, it does not confine the values to a fixed range.

However, normalization may distort the way the feature represents the underlying data.
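The three normalization methods above can be sketched as follows. This is a minimal sketch, not a reference implementation: the function names are illustrative, the input is the Height column of the training data, and the use of the population standard deviation in `standardize` is an assumption (the sample standard deviation is an equally common choice).

```python
import statistics

def scale_by_max(values):
    """Method 1: divide every value by the maximum value encountered."""
    max_value = max(values)
    return [v / max_value for v in values]

def min_max(values):
    """Method 2: subtract the minimum and divide by the range (max - min)."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]

def standardize(values):
    """Method 3: subtract the mean and divide by the standard deviation."""
    mean = statistics.mean(values)
    sd = statistics.pstdev(values)  # population s.d. (an assumption of this sketch)
    return [(v - mean) / sd for v in values]

heights = [178, 165, 160, 180]  # Height column of Student-1 to Student-4

print(scale_by_max(heights))
print(min_max(heights))
print(standardize(heights))
```

Method 1 maps values into [0, 1] only when all values are non-negative, and method 2 requires that the maximum and minimum differ.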

Caution: Feature Selection vs. Feature Extraction

Definition: Feature extraction creates new, better features using combinations or transformations of the original features.

1. By using linear combinations, which are simple to compute and tractable.

2. By projecting high-dimensional data onto a lower-dimensional space.

PCA (principal component analysis), SVD (singular value decomposition), LDA (linear discriminant analysis), MDA (multiple discriminant analysis), ICA (independent component analysis), MDS (multi-dimensional scaling), NMF (nonnegative matrix factorization), …

PCA extracts principal components (PCs) computed from the covariance matrix of the data.

New features are obtained as linear combinations of the original features, with the PCs as weights.

Have you heard about eigenvectors and eigenvalues in linear algebra?
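As a reminder, an eigenvector v of a matrix A satisfies A v = λ v, where λ is the corresponding eigenvalue. The sketch below approximates the dominant eigenpair with power iteration, which is one simple method among many and not necessarily the one used in the lecture. The 2 × 2 symmetric matrix is hypothetical (it plays the role of a small covariance matrix), and the function name is illustrative.

```python
import math

def power_iteration(matrix, iterations=100):
    """Approximate the largest eigenvalue and its eigenvector of a square matrix."""
    n = len(matrix)
    # Arbitrary start vector; it must not be orthogonal to the dominant eigenvector.
    vector = [1.0] + [0.0] * (n - 1)
    for _ in range(iterations):
        product = [sum(matrix[i][j] * vector[j] for j in range(n)) for i in range(n)]
        norm = math.sqrt(sum(x * x for x in product))
        vector = [x / norm for x in product]
    # Rayleigh quotient v^T A v, valid because v has unit length.
    av = [sum(matrix[i][j] * vector[j] for j in range(n)) for i in range(n)]
    eigenvalue = sum(vector[i] * av[i] for i in range(n))
    return eigenvalue, vector

# Hypothetical symmetric matrix with eigenvalues 3 and 1.
cov = [[2.0, 1.0], [1.0, 2.0]]
eigenvalue, eigenvector = power_iteration(cov)
print(eigenvalue, eigenvector)
```

For this matrix the dominant eigenvector points along the diagonal direction [1, 1], so both components of the returned unit vector are equal.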

PCA (Principal Component Analysis)

| | A1 | A2 | A3 |
|---|---|---|---|
| X1 | 1 | 2 | 1 |
| X2 | 4 | 2 | 13 |
| X3 | 7 | 8 | 1 |
| X4 | 8 | 4 | 5 |

Given four data points, each with three attributes, reduce the number of attributes to two.

Step 1. Find the eigenvectors ($e$) and corresponding eigenvalues ($\lambda$) through the covariance matrix.

Step 2. Sort the eigenvectors according to their corresponding eigenvalues in descending order.

$\lambda_1 = 17.79$, $e_1 = [0.59\ \ {-0.43}\ \ 0.68]^T$ (referred to as the first principal component)

$\lambda_2 = 9.58$, $e_2 = [0.42\ \ {-0.56}\ \ {-0.72}]^T$ (referred to as the second principal component)

$\lambda_3 = 2.42$, $e_3 = [0.69\ \ 0.71\ \ {-0.15}]^T$ (referred to as the third principal component)

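Step 3. Create new attributes using the top k eigenvectors, where k = 2.

First new attribute of X1 = original attributes × first principal component

$= [1\ \ 2\ \ 1] \times [0.59\ \ {-0.43}\ \ 0.68]^T = 0.59 - 0.86 + 0.68 = 0.41$

Second new attribute of X1 = original attributes × second principal component

$= [1\ \ 2\ \ 1] \times [0.42\ \ {-0.56}\ \ {-0.72}]^T = 0.42 - 1.12 - 0.72 = -1.42$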

Repeating the calculation for every data point gives the new attributes:

| | A1 (new) | A2 (new) |
|---|---|---|
| X1 | 0.41 | -1.42 |
| X2 | 10.34 | -8.80 |
| X3 | 1.37 | -2.26 |
| X4 | 6.40 | -2.48 |

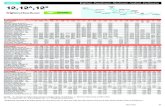
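Step 3 of the PCA example can be checked with a few lines of code. The sketch below projects each data point onto the first two principal components listed in the example. The helper name `project` is illustrative, and the attribute values of X1 to X4 are taken from the example's data table.

```python
# Data points from the PCA example (three attributes each).
points = {
    "X1": [1, 2, 1],
    "X2": [4, 2, 13],
    "X3": [7, 8, 1],
    "X4": [8, 4, 5],
}

# First and second principal components as listed in the example.
components = [
    [0.59, -0.43, 0.68],
    [0.42, -0.56, -0.72],
]

def project(point, components):
    """Dot product of one data point with each principal component."""
    return [sum(a * w for a, w in zip(point, comp)) for comp in components]

projected = {
    name: [round(value, 2) for value in project(point, components)]
    for name, point in points.items()
}

for name, coords in projected.items():
    print(name, coords)
# First line printed: X1 [0.41, -1.42]
```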

SVD (Singular Value Decomposition)

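Given the term-document relation matrix, recompute the matrix using only the top 2 singular values.

Step 1. Decompose the original matrix A into three matrices (e.g., using MATLAB).

$A = U \Sigma V^T$, where $U = A V \Sigma^{-1}$ (1/singular values × A × eigenvectors, for the non-zero singular values), $\Sigma$ is the diagonal matrix of singular values, and the columns of $V$ are the eigenvectors of $A^T A$. Each singular value is the square root of an eigenvalue: $\sigma_i = \sqrt{\lambda_i}$.

Step 2. Create the new matrix $A_{new}$ using the top k entries of the decomposed matrices, where k = 2.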

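The original matrix A (terms × documents):

| | d1 | d2 | d3 | d4 | d5 | d6 |
|---|---|---|---|---|---|---|
| cosmonaut | 1 | 0 | 1 | 0 | 0 | 0 |
| astronaut | 0 | 1 | 0 | 0 | 0 | 0 |
| moon | 1 | 1 | 0 | 0 | 0 | 0 |
| car | 1 | 0 | 0 | 1 | 1 | 0 |
| truck | 0 | 0 | 0 | 1 | 0 | 1 |

The decomposition $A = U \Sigma V^T$:

U (one row per term):

| | 1 | 2 | 3 | 4 | 5 |
|---|---|---|---|---|---|
| cosmonaut | -0.44 | -0.30 | 0.57 | 0.58 | 0.25 |
| astronaut | -0.13 | -0.33 | -0.59 | 0.00 | 0.73 |
| moon | -0.48 | -0.51 | -0.37 | 0.00 | -0.61 |
| car | -0.70 | 0.35 | 0.15 | -0.58 | 0.16 |
| truck | -0.26 | 0.65 | -0.41 | 0.58 | -0.09 |

$\Sigma$ (singular values on the diagonal):

| | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|
| 1 | 2.16 | 0 | 0 | 0 | 0 | 0 |
| 2 | 0 | 1.59 | 0 | 0 | 0 | 0 |
| 3 | 0 | 0 | 1.28 | 0 | 0 | 0 |
| 4 | 0 | 0 | 0 | 1.00 | 0 | 0 |
| 5 | 0 | 0 | 0 | 0 | 0.39 | 0 |

$V^T$ (one column per document, eigenvectors as rows):

| | d1 | d2 | d3 | d4 | d5 | d6 |
|---|---|---|---|---|---|---|
| 1 | -0.75 | -0.28 | -0.20 | -0.45 | -0.33 | -0.12 |
| 2 | -0.29 | -0.53 | -0.19 | 0.63 | 0.22 | 0.41 |
| 3 | 0.28 | -0.75 | 0.45 | -0.20 | 0.12 | -0.33 |
| 4 | 0.00 | 0.00 | 0.58 | 0.00 | -0.58 | 0.58 |
| 5 | -0.53 | 0.29 | 0.63 | 0.19 | 0.41 | -0.22 |
| 6 | 0.00 | 0.00 | 0.00 | 0.57 | -0.58 | -0.58 |

Keep only the 1st and 2nd singular values and the corresponding 1st and 2nd eigenvectors.

First two columns of U:

| | 1 | 2 |
|---|---|---|
| cosmonaut | -0.44 | -0.30 |
| astronaut | -0.13 | -0.33 |
| moon | -0.48 | -0.51 |
| car | -0.70 | 0.35 |
| truck | -0.26 | 0.65 |

Top-left 2 × 2 block of $\Sigma$:

| | 1 | 2 |
|---|---|---|
| 1 | 2.16 | 0 |
| 2 | 0 | 1.59 |

First two rows of $V^T$:

| | d1 | d2 | d3 | d4 | d5 | d6 |
|---|---|---|---|---|---|---|
| 1 | -0.75 | -0.28 | -0.20 | -0.45 | -0.33 | -0.12 |
| 2 | -0.29 | -0.53 | -0.19 | 0.63 | 0.22 | 0.41 |

Multiplying the three truncated matrices gives $A_{new}$:

| | d1 | d2 | d3 | d4 | d5 | d6 |
|---|---|---|---|---|---|---|
| cosmonaut | 0.85 | 0.52 | 0.28 | 0.13 | 0.21 | -0.08 |
| astronaut | 0.36 | 0.36 | 0.16 | -0.21 | -0.03 | -0.18 |
| moon | 1.00 | 0.72 | 0.36 | -0.05 | 0.16 | -0.21 |
| car | 0.98 | 0.13 | 0.21 | 1.03 | 0.62 | 0.41 |
| truck | 0.13 | -0.39 | -0.08 | 0.90 | 0.41 | 0.49 |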
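Step 2 of the SVD example amounts to multiplying the three truncated matrices. The sketch below rebuilds the rank-2 matrix from the first two singular values and the corresponding vectors of the example. The signs of the vector entries follow one common convention for this matrix and are an assumption here, since SVD routines may flip the sign of a singular-vector pair without changing the product. The function name `reconstruct` is illustrative.

```python
terms = ["cosmonaut", "astronaut", "moon", "car", "truck"]

# First two columns of U (one row per term).
u2 = [
    [-0.44, -0.30],
    [-0.13, -0.33],
    [-0.48, -0.51],
    [-0.70, 0.35],
    [-0.26, 0.65],
]

# Top two singular values.
s2 = [2.16, 1.59]

# First two rows of V^T (one column per document d1..d6).
vt2 = [
    [-0.75, -0.28, -0.20, -0.45, -0.33, -0.12],
    [-0.29, -0.53, -0.19, 0.63, 0.22, 0.41],
]

def reconstruct(u, s, vt):
    """Compute U_k * diag(s) * V_k^T for the truncated factors."""
    rows, k, cols = len(u), len(s), len(vt[0])
    return [
        [sum(u[i][r] * s[r] * vt[r][j] for r in range(k)) for j in range(cols)]
        for i in range(rows)
    ]

a_new = reconstruct(u2, s2, vt2)
for term, row in zip(terms, a_new):
    print(f"{term:10s}", " ".join(f"{x:6.2f}" for x in row))
```

Because the inputs are rounded to two decimals, individual entries of the result can differ from a full-precision computation by about 0.01.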

PCA is useful for representing data.

LDA is useful for discriminating data.

PCA seeks orthogonal directions.

ICA seeks independent directions.

ICA is useful for blind source separation.

Let us go back to feature selection.

How can we select good features?

Quiz-11: propose your idea on feature selection using examples

We can find a set of good features.

We can find a set of bad features.

We need definitions of good and bad features.

redundant, relevant, inconsistent,…

Feature selection is a special case of a search problem (an optimization problem).

We can apply any search technique.

Among many approaches, we learn two families of techniques: Filter vs. Wrapper.

The difference: whether a learning algorithm is used.

A wrapper uses a learning algorithm; a filter does not.

Filter: univariate ranking

1. Score each feature.

2. Sort the features by score.

3. Select the top-ranked features.
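The three steps of univariate ranking can be sketched as below. The scoring function is an assumption: the absolute Pearson correlation between a feature and the target is used here, but any per-feature score would fit the same scheme. The data set and all names are hypothetical.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sd_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    sd_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (sd_x * sd_y)

def rank_features(features, target, top_k):
    # 1. Score each feature independently of the others.
    scores = {name: abs(pearson(column, target)) for name, column in features.items()}
    # 2. Sort the features by score, best first.
    ranked = sorted(scores, key=scores.get, reverse=True)
    # 3. Select the top-ranked features.
    return ranked[:top_k], scores

# Hypothetical data: three features and a numeric target (exam score).
features = {
    "hours_studied": [2, 4, 6, 8, 10],
    "shoe_size": [270, 240, 265, 250, 260],
    "attendance": [60, 70, 65, 90, 95],
}
target = [55, 65, 70, 85, 95]

selected, scores = rank_features(features, target, top_k=2)
print(selected)
```

Because each feature is scored on its own, two highly correlated features can both be selected even though the second adds little new information.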

Q: pros and cons of univariate filter

Filter: multivariate correlation

Wrapper: Search + Learning + Evaluation

1. Form subsets.

2. Evaluate them using classifiers.

3. Expand or modify the subsets.

Wrapper: Search

1. Greedy algorithm

2. Branch and bound

3. Hill-climbing algorithm

4. Genetic algorithm

5. …

Wrapper: Learning (Bayesian, K-NN) + Evaluation (accuracy)
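The wrapper scheme can be illustrated with one concrete combination: a greedy forward search, a K-NN learner with K = 1, and leave-one-out accuracy as the evaluation. These choices are assumptions made for this sketch; other search strategies, learners, or evaluation measures fit the same loop. The data set is hypothetical, with feature 0 informative and feature 1 acting as noise.

```python
def loo_accuracy(rows, labels, subset):
    """Leave-one-out accuracy of a 1-NN classifier using only the features in subset."""
    correct = 0
    for i, row in enumerate(rows):
        best_dist, best_label = None, None
        for j, other in enumerate(rows):
            if i == j:
                continue  # leave the query point out of the training set
            dist = sum((row[f] - other[f]) ** 2 for f in subset)
            if best_dist is None or dist < best_dist:
                best_dist, best_label = dist, labels[j]
        if best_label == labels[i]:
            correct += 1
    return correct / len(rows)

def forward_selection(rows, labels):
    """Greedy search: add the feature that improves accuracy most, stop when none does."""
    n_features = len(rows[0])
    selected, best_score = [], 0.0
    while len(selected) < n_features:
        candidates = [f for f in range(n_features) if f not in selected]
        scored = [(loo_accuracy(rows, labels, selected + [f]), f) for f in candidates]
        score, feature = max(scored, key=lambda pair: pair[0])
        if score <= best_score:
            break  # no candidate improves the current subset
        selected.append(feature)
        best_score = score
    return selected, best_score

# Hypothetical data: feature 0 separates the classes, feature 1 does not.
rows = [[1.0, 5.0], [1.2, 1.0], [0.9, 9.0], [3.0, 5.2], [3.1, 0.8], [2.9, 9.1]]
labels = ["A", "A", "A", "B", "B", "B"]

selected, best_score = forward_selection(rows, labels)
print(selected, best_score)
```

Every candidate subset requires training and evaluating the classifier, which is why a wrapper is typically much more expensive than a filter.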

Q: pros and cons of wrapper

Q: which feature-handling technique is appropriate for your project?