Affine-invariant Principal Components Charlie Brubaker and Santosh Vempala Georgia Tech School of...


Affine-invariant Principal Components

Charlie Brubaker and Santosh Vempala

Georgia Tech School of Computer Science

Algorithms and Randomness Center

What is PCA?

“PCA is a mathematical tool for finding directions in which a distribution is stretched out.”

• Widely used in practice

• Gives best-known results for some problems

History

• First discussed by Euler in a work on inertia of rigid bodies (1730).

• Principal Axes identified as eigenvectors by Lagrange.

• Power method for finding eigenvectors published in 1929, before computers.

• Ubiquitous in practice today: Bioinformatics, Econometrics, Data Mining, Computer Vision, ...

Principal Components Analysis

For points a1…am in Rn, the principal components are orthogonal vectors v1…vn such that Vk = span{v1…vk} minimizes the sum of squared distances $\sum_{i=1}^{m} d(a_i, V_k)^2$ among all k-subspaces.

Like regression.

Computed via SVD.
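
The definition above can be sketched in NumPy. This is a minimal illustration, assuming the points are the rows of a matrix `A` and that subspaces pass through the origin, as in the definition (center the data first if an affine subspace is wanted). The function names and the synthetic data are hypothetical, not from the talk.

```python
import numpy as np

def principal_components(A, k):
    """Return the top-k right singular vectors of A as the rows of a k x n array."""
    # Rows of Vt are orthonormal directions sorted by decreasing singular value.
    _, _, Vt = np.linalg.svd(A, full_matrices=False)
    return Vt[:k]

def sum_sq_dist(A, basis):
    """Sum of squared distances from the rows of A to span(rows of basis).

    `basis` must have orthonormal rows.
    """
    residual = A - (A @ basis.T) @ basis
    return float(np.sum(residual ** 2))

rng = np.random.default_rng(0)
m, n, k = 500, 10, 2
# Synthetic points stretched along the first two coordinate axes.
A = rng.standard_normal((m, n)) * np.array([10.0, 5.0] + [1.0] * (n - 2))

V_k = principal_components(A, k)
pca_cost = sum_sq_dist(A, V_k)

# Compare against a random k-dimensional subspace (orthonormalized via QR).
Q, _ = np.linalg.qr(rng.standard_normal((n, k)))
random_cost = sum_sq_dist(A, Q.T)
print(pca_cost <= random_cost)
```

The comparison with a random subspace is only a sanity check of the minimization property; it does not prove optimality.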

Singular Value Decomposition (SVD)

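Any real m x n matrix A can be decomposed as

$$A = U \Sigma V^T = \sum_{i} \sigma_i u_i v_i^T,$$

where the singular values satisfy $\sigma_1 \ge \sigma_2 \ge \dots \ge 0$, and the left singular vectors $u_i$ and right singular vectors $v_i$ each form an orthonormal set.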

PCA (continued)

• Example: for a Gaussian the principal components are the axes of the ellipsoidal level sets.

[Figure: level sets of a Gaussian with principal components v1 and v2]

• “top” principal components = where the data is “stretched out.”

Why Use PCA?

1. Reduces computation or space. Space goes from O(mn) to O(mk+nk).

(Random projection and random sampling also reduce the space requirement.)

2. Reveals interesting structure that is hidden in high dimension.
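
To make the space bound in item 1 concrete, here is a hedged sketch that stores a rank-k approximation as two factors. It assumes an m x n data matrix with points as rows; the sizes, the synthetic data, and the variable names are illustrative only.

```python
import numpy as np

rng = np.random.default_rng(1)
m, n, k = 1000, 50, 3

# Synthetic data that is close to rank k: low-rank signal plus small noise.
A = rng.standard_normal((m, k)) @ rng.standard_normal((k, n))
A += 0.01 * rng.standard_normal((m, n))

U, s, Vt = np.linalg.svd(A, full_matrices=False)
coords = U[:, :k] * s[:k]   # m x k: coordinates of each point in the PCA subspace
basis = Vt[:k]              # k x n: orthonormal basis of the PCA subspace

full_storage = A.size                        # m * n numbers
reduced_storage = coords.size + basis.size   # m*k + n*k numbers
rel_error = np.linalg.norm(A - coords @ basis) / np.linalg.norm(A)
print(full_storage, reduced_storage, rel_error)
```

The saving is only worthwhile when the data really is close to a k-dimensional subspace, which is why the relative error is printed alongside the storage counts.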

Problem

• Learn a mixture of Gaussians

Classify unlabeled samples

Each component is a logconcave distribution (e.g., Gaussian).

Means, variances and mixing weights are unknown

Distance-based Classification

“Points from the same component should be closer to each other than those from different components.”

Mixture models

• Easy to unravel if components are far enough apart

• Impossible if components are too close

Distance-based Classification

How far apart?

Thus, suffices to have

[Dasgupta ‘99][Dasgupta, Schulman ‘00][Arora, Kannan ‘01] (more general)

PCA

• Project to span of top k principal components of the data

Replace A with its rank-k approximation $A_k = \sum_{i=1}^{k} \sigma_i u_i v_i^T$, the top k terms of its SVD.

• Apply distance-based classification in this subspace

Main idea

Subspace of top k principal components spans the means of all k Gaussians

SVD in geometric terms

Rank 1 approximation is the projection to the line through the origin that minimizes the sum of squared distances.

Rank k approximation is the projection to the k-dimensional subspace that minimizes the sum of squared distances.
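
The geometric statement can be checked numerically. The sketch below, on hypothetical random data with points as rows, compares the truncated SVD with the orthogonal projection of every row onto the span of the top k right singular vectors.

```python
import numpy as np

rng = np.random.default_rng(2)
A = rng.standard_normal((200, 8)) * np.arange(8, 0, -1)  # rows are points
k = 3

U, s, Vt = np.linalg.svd(A, full_matrices=False)

# Rank-k approximation from the truncated SVD.
A_k = (U[:, :k] * s[:k]) @ Vt[:k]

# Orthogonal projection of every row onto span(v_1, ..., v_k).
V_k = Vt[:k].T                    # n x k, orthonormal columns
projected = A @ V_k @ V_k.T

# The two agree, and the squared error is the sum of the discarded sigma_i^2.
same = np.allclose(A_k, projected)
sq_error = np.sum((A - A_k) ** 2)
print(same, np.isclose(sq_error, np.sum(s[k:] ** 2)))
```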

Why?

• Best line for 1 Gaussian?

- Line through the mean

• Best k-subspace for 1 Gaussian?

- Any k-subspace through the mean

• Best k-subspace for k Gaussians?

- The k-subspace through all k means!

How general is this?

Theorem [V-Wang’02]. For any mixture of weakly isotropic distributions, the best k-subspace is the span of the means of the k components.

“weakly isotropic”: Covariance matrix = multiple of identity
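
A small simulation can illustrate the theorem on samples. It is only a sketch under assumed parameters: three spherical Gaussians in 20 dimensions with hypothetical means, radii, and sample size. It measures how far each true mean lies from the top-k SVD subspace of the sample.

```python
import numpy as np

rng = np.random.default_rng(3)
n, k, m = 20, 3, 20000

# Hypothetical mixture: k spherical Gaussians with different radii,
# so each component covariance is a multiple of the identity.
means = np.zeros((k, n))
means[0, 0], means[1, 1], means[2, 2] = 5.0, 5.0, 5.0
sigmas = np.array([1.0, 0.5, 1.5])
labels = rng.integers(0, k, size=m)
X = means[labels] + sigmas[labels][:, None] * rng.standard_normal((m, n))

# Best k-subspace through the origin = span of the top-k right singular vectors.
_, _, Vt = np.linalg.svd(X, full_matrices=False)
V_k = Vt[:k]

# Relative distance of each true mean from the sample SVD subspace.
residuals = means - (means @ V_k.T) @ V_k
rel_dist = np.linalg.norm(residuals, axis=1) / np.linalg.norm(means, axis=1)
print(rel_dist)
```

With finitely many samples the subspace only approximately contains the means, so the printed distances are small but not zero.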

PCA

Projection to span of means gives

For spherical Gaussians,

Span(means) = PCA subspace of dim k.

Sample SVD

• Sample SVD subspace is “close” to mixture’s SVD subspace.

• Doesn’t span means but is close to them.

2 Gaussians in 20 Dimensions

4 Gaussians in 49 Dimensions

Mixtures of Logconcave Distributions

Theorem [Kannan-Salmasian-V ’04].

For any mixture of k distributions with SVD subspace V,

Mixtures of Nonisotropic, Logconcave Distributions

Theorem [Kannan, Salmasian, V, ‘04].

The PCA subspace V is “close” to the span of the means, provided that means are well-separated.

where is the maximum directional variance.

Polynomial was improved by Achlioptas-McSherry.

Required separation:

However,…

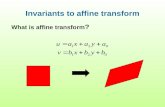

PCA collapses separable “pancakes”

Limits of PCA

• Algorithm is not affine invariant.

• Any instance can be made bad by an affine transformation.

• Spherical Gaussians become parallel pancakes but remain separable.

Parallel Pancakes

• Still separable, but previous algorithms don’t work.
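
The pancake failure mode is easy to reproduce. The following sketch builds two hypothetical parallel pancakes (thin along the first coordinate, wide elsewhere; all sizes are assumptions) and compares how well the classes separate along the top principal component versus along the true separating direction. The `separation` score is an ad hoc measure chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(4)
n, m = 5, 5000

# Two parallel "pancakes": thin along e_1 (where the means differ), wide elsewhere.
labels = rng.integers(0, 2, size=m)
X = 10.0 * rng.standard_normal((m, n))
X[:, 0] = np.where(labels == 0, -1.0, 1.0) + 0.05 * rng.standard_normal(m)

def separation(X, labels, direction):
    """|difference of projected class means| / (sum of projected class std devs)."""
    p = X @ direction
    a, b = p[labels == 0], p[labels == 1]
    return abs(a.mean() - b.mean()) / (a.std() + b.std())

# Top principal component of the centered data.
Xc = X - X.mean(axis=0)
_, _, Vt = np.linalg.svd(Xc, full_matrices=False)
top_pc = Vt[0]

e1 = np.zeros(n)
e1[0] = 1.0
alignment = abs(top_pc @ e1)
sep_pc = separation(X, labels, top_pc)
sep_e1 = separation(X, labels, e1)
print("alignment of top PC with separating direction:", alignment)
print("separation along top PC:", sep_pc)
print("separation along e_1:   ", sep_e1)
```

PCA follows the wide directions of the pancakes, so the projection onto its top component mixes the two classes even though a separating hyperplane exists.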

Separability

Hyperplane Separability

• PCA is not affine-invariant.

• Is hyperplane separability sufficient to learn a mixture?

Affine-invariant principal components?

• What is an affine-invariant property that distinguishes 1 Gaussian from 2 pancakes?

• Or a ball from a cylinder?

Isotropic PCA

1. Make point set isotropic via an affine transformation.

2. Reweight points according to a spherically symmetric function f(|x|).

3. Return the 1st and 2nd moments of reweighted points.

Isotropic PCA [BV’08]

• Goal: Go beyond 1st and 2nd moments to find “interesting” directions.

• Why? What if all 2nd moments are equal?

[Figure: an isotropic point set with candidate directions marked “v?”]

• This isotropy can always be achieved by an affine transformation.

Ball vs Cylinder

Algorithm

1. Make distribution isotropic.

2. Reweight points.

3. If mean shifts, partition along this direction. Recurse.

4. Otherwise, partition along top principal component. Recurse.

Step 1: Enforcing Isotropy

• Isotropy:
  a. Mean = 0
  b. Variance = 1 in every direction

• Step 1a: move the origin to the mean (translation).

• Step 1b: apply linear transformation
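
Steps 1a and 1b can be sketched as follows. The slide does not spell out the linear transformation; the sketch assumes the standard choice of multiplying by the inverse square root of the sample covariance, and the function name `make_isotropic` and the test matrix are hypothetical.

```python
import numpy as np

def make_isotropic(X):
    """Affinely map the rows of X to sample mean 0 and sample covariance I.

    Assumes the sample covariance is nonsingular (full-rank data).
    Returns the transformed points, the mean, and the linear map W.
    """
    mu = X.mean(axis=0)                      # Step 1a: move the origin to the mean
    Xc = X - mu
    cov = Xc.T @ Xc / len(X)
    evals, evecs = np.linalg.eigh(cov)
    W = (evecs / np.sqrt(evals)) @ evecs.T   # Step 1b (assumed): W = cov^{-1/2}
    return Xc @ W, mu, W

rng = np.random.default_rng(5)
# Illustrative skewed data: a fixed linear image of standard Gaussian points, shifted.
B = np.array([[3.0, 1.0, 0.0, 0.0],
              [0.0, 2.0, 1.0, 0.0],
              [0.0, 0.0, 1.0, 0.5],
              [0.0, 0.0, 0.0, 0.2]])
X = rng.standard_normal((2000, 4)) @ B + 3.0
Z, mu, W = make_isotropic(X)
print(np.round(Z.mean(axis=0), 6))
print(np.round(Z.T @ Z / len(Z), 6))
```

Because `W` is symmetric, `Xc @ W` applies the same map to every row; any rotation of `Z` is equally isotropic, so the result is unique only up to an orthogonal transformation.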

[Figures: two pairs of scatter plots illustrating Step 1]


Step 1: Enforcing Isotropy

• Turns every well-separated mixture into (almost) parallel pancakes, separable along the intermean direction.

• PCA no longer helps us!

[Figure: two scatter plots of a mixture illustrating Step 1]

Algorithm

1. Make distribution isotropic.

2. Reweight points (using a Gaussian).

3. If mean shifts, partition along this direction. Recurse.

4. Otherwise, partition along top principal component. Recurse.

Two parallel pancakes

• Isotropy pulls apart the components

• If one is heavier, then overall mean shifts along the separating direction

• If not, principal component is along the separating direction
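
The two cases above can be simulated. This is a rough sketch of one round of the algorithm for two pancakes, not the authors' implementation: the Gaussian width `alpha`, the threshold `shift_tol`, the helper names, and the synthetic data are all assumptions made for illustration.

```python
import numpy as np

def make_isotropic(X):
    """Map rows of X to sample mean 0 and sample covariance I (full rank assumed)."""
    Xc = X - X.mean(axis=0)
    evals, evecs = np.linalg.eigh(Xc.T @ Xc / len(X))
    return Xc @ (evecs / np.sqrt(evals)) @ evecs.T

def isotropic_pca_direction(X, alpha=2.0, shift_tol=0.1):
    """Pick a candidate separating direction in isotropic coordinates.

    alpha (Gaussian width) and shift_tol are illustrative, not values from the talk.
    """
    Z = make_isotropic(X)
    w = np.exp(-np.sum(Z ** 2, axis=1) / (2.0 * alpha))  # spherically symmetric weights
    w /= w.sum()
    shifted_mean = w @ Z                                  # reweighted 1st moment
    if np.linalg.norm(shifted_mean) > shift_tol:
        return Z, shifted_mean / np.linalg.norm(shifted_mean), "mean shift"
    M = (Z * w[:, None]).T @ Z                            # reweighted 2nd moment
    _, evecs = np.linalg.eigh(M)                          # eigenvalues ascending
    return Z, evecs[:, -1], "top principal component"

def pancakes(rng, m, n, heavy_weight):
    """Two parallel pancakes: thin along e_1, wide in the other n-1 directions."""
    labels = (rng.random(m) >= heavy_weight).astype(int)
    X = 10.0 * rng.standard_normal((m, n))
    X[:, 0] = np.where(labels == 0, -1.0, 1.0) + 0.05 * rng.standard_normal(m)
    return X, labels

def separation(Z, labels, d):
    """|difference of projected class means| / (sum of projected class std devs)."""
    p = Z @ d
    a, b = p[labels == 0], p[labels == 1]
    return abs(a.mean() - b.mean()) / (a.std() + b.std())

rng = np.random.default_rng(6)
results = {}
for name, heavy in [("balanced", 0.5), ("imbalanced", 0.8)]:
    X, labels = pancakes(rng, 20000, 6, heavy)
    Z, d, rule = isotropic_pca_direction(X)
    results[name] = (rule, separation(Z, labels, d))
    print(name, "->", rule, "| separation:", round(results[name][1], 2))
```

In this setup the imbalanced mixture should trigger the mean-shift rule and the balanced one should fall through to the top principal component of the reweighted second moment; a fixed threshold like `shift_tol` is a simplification that a careful implementation would tie to the sample size.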

Steps 3 & 4: Illustrative Examples

• Imbalanced Pancakes: [figure]

• Balanced Pancakes: [figure, mean marked]

Step 3: Imbalanced Case

• Mean shifts toward heavier component

Step 4: Balanced Case

• Mean doesn’t move by symmetry.

• Top principal component is inter-mean direction.

Unraveling Gaussian Mixtures

Theorem [Brubaker-V. ’08]

The algorithm correctly classifies samples from two arbitrary Gaussians “separable by a hyperplane” with high probability.

Original Data

• 40 dimensions, 8000 samples (subsampled for visualization)

• Means of (0,0) and (1,1).

[Figure: scatter plot of the original data]

Random Projection

[Figure: scatter plot of the data after random projection]

PCA

[Figure: scatter plot of the data after PCA projection]

Isotropic PCA

[Figure: scatter plot of the data after Isotropic PCA]

Results: k=2

• Theorem: For k=2, algorithm succeeds if there is some direction v such that:

(i.e., hyperplane separability.)

Fisher Criterion

• For a direction p,

$$J(p) = \frac{\text{intra-component variance along } p}{\text{total variance along } p}$$

• Overlap: min J(p) over all directions p (small overlap => well-separated).
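
For labeled samples, the overlap can be computed as a smallest eigenvalue. The sketch below makes two assumptions that the slide does not state: "intra-component variance" is taken as the weighted average of the per-component covariances, and the total covariance is nonsingular. The function name, the pancake data, and the affine map are hypothetical.

```python
import numpy as np

def fisher_overlap(X, labels):
    """Return min over directions p of J(p) = (p^T W p) / (p^T T p).

    W is the weighted average of the per-component covariances (assumed meaning
    of intra-component variance); T is the covariance of the whole sample.
    """
    Xc = X - X.mean(axis=0)
    T = Xc.T @ Xc / len(X)
    W = np.zeros_like(T)
    for c in np.unique(labels):
        Xi = X[labels == c]
        Xi = Xi - Xi.mean(axis=0)
        W += Xi.T @ Xi / len(X)
    # In isotropic coordinates T becomes I, so J(p) is a Rayleigh quotient of
    # T^{-1/2} W T^{-1/2}; its smallest eigenvalue is the minimum of J.
    evals, evecs = np.linalg.eigh(T)
    T_inv_sqrt = (evecs / np.sqrt(evals)) @ evecs.T
    return float(np.linalg.eigvalsh(T_inv_sqrt @ W @ T_inv_sqrt)[0])

rng = np.random.default_rng(7)
m, n = 5000, 4
labels = rng.integers(0, 2, size=m)
X = 10.0 * rng.standard_normal((m, n))
X[:, 0] = np.where(labels == 0, -1.0, 1.0) + 0.05 * rng.standard_normal(m)

# A fixed invertible affine map (illustrative): shear, scale, and shift.
A = np.array([[2.0, 1.0, 0.0, 0.0],
              [0.0, 1.0, 3.0, 0.0],
              [0.0, 0.0, 0.5, 1.0],
              [0.0, 0.0, 0.0, 4.0]])
Y = X @ A.T + np.array([1.0, -2.0, 0.0, 5.0])

overlap_X = fisher_overlap(X, labels)
overlap_Y = fisher_overlap(Y, labels)
print(overlap_X, overlap_Y)
```

The two printed values should agree up to rounding, since an invertible affine map changes both covariances by the same congruence; the true labels are used here only to evaluate the criterion, not to learn anything.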

• Theorem: For k=2, algorithm succeeds if overlap is

Results: k>2

• For k > 2, we need k-1 orthogonal directions with small overlap

Fisher Criterion

• Make F isotropic. For a subspace S, J(S) = max intra-component variance within S.

• Overlap is affine-invariant.

• Overlap = min J(S) over (k-1)-dimensional subspaces S.

• Theorem [BV ’08]: For k>2, the algorithm succeeds if the overlap is at most

Original Data (k=3)

• 40 dimensions, 15000 samples (subsampled for visualization)

[Figure: scatter plot of the original data]

Random Projection

[Figure: scatter plot of the data after random projection]

PCA

[Figure: scatter plot of the data after PCA projection]

Isotropic PCA

[Figure: scatter plot of the data after Isotropic PCA]

Conclusion

• Most of this in a new book: “Spectral Algorithms,” with Ravi Kannan

• IsoPCA gives an affine-invariant clustering (independent of a model)

• What do Iso-PCA directions mean?

• Robust PCA (Brubaker 08; robust to small changes in point set); applied to noisy/best-fit mixtures.

• PCA for tensors?