AdPredictor - Large Scale Bayesian Click-Through Rate Prediction in Microsoft's Bing Search Engine


-

Click Prediction with adPredictor

at Microsoft Advertising

Joaquin Quiñonero Candela

Thore Graepel

Ralf Herbrich

Thomas Borchert

Microsoft Research & Microsoft adCenter

-

Microsoft + Yahoo! = 1/3 US search market

adPredictor predicts probability of click on ads for Microsoft Bing and Yahoo! search engines

-

flowers

-

| Bid | pClick | Bid × pClick | (unlabelled) |
|---|---|---|---|
| $1.00 | 10% | $0.10 | $0.80 |
| $2.00 | 4% | $0.08 | $1.25 |
| $0.10 | 50% | $0.05 | $0.05 |

Efficient use of ad space

Increased user satisfaction by better targeting

Increased revenue by showing ads with high click-thru rate

Importance of accurate probability estimates

Over-simplified ranking function: this is not what is used in practice
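The ranking rule on this slide can be sketched in a few lines. This is only an illustration of the over-simplified rule (sort by bid × pClick), not the production ranking function; the `Ad` class and the names A, B, C are hypothetical labels added for the example.

```python
from dataclasses import dataclass

@dataclass
class Ad:
    name: str       # hypothetical label, not on the slide
    bid: float      # bid per click, in dollars
    p_click: float  # predicted probability of click

def rank_by_expected_value(ads):
    """Sort ads by bid * pClick, highest first (the over-simplified rule)."""
    return sorted(ads, key=lambda ad: ad.bid * ad.p_click, reverse=True)

# The three ads from the slide.
ads = [
    Ad("A", 1.00, 0.10),
    Ad("B", 2.00, 0.04),
    Ad("C", 0.10, 0.50),
]
ranking = rank_by_expected_value(ads)
for ad in ranking:
    print(f"{ad.name}: ${ad.bid * ad.p_click:.2f} expected per impression")
```

The highest bid (B) does not win the top slot, which is why accurate pClick estimates matter. The slide's remaining values $0.80 and $1.25 equal the next ad's score divided by the ad's own pClick (0.08 / 0.10 and 0.05 / 0.04), which would be consistent with a second-price style charge, though the transcript does not label them.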

-

Impression Level Predictions

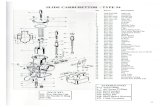

Sparse binary input features (many 10s of them)

Some high cardinality (~100M), some low (

-

Sparse Linear Probit Regression

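[Figure: one weight per feature value. Ad ID: 1341201, 1570165, 2213187, 9215433. Match Type: Exact Match, Broad Match. Position: ML-1, SB-1, SB-2. The weights of the active values are summed (+) to give pClick.]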

-

Uncertainty: A Bayesian Treatment

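[Figure: the same feature values as before. Ad ID: 1341201, 1570165, 2213187, 9215433. Match Type: Exact Match, Broad Match. Position: ML-1, SB-1, SB-2. The sum (+) now yields a distribution p(pClick) rather than a single pClick.]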

-

A Linear Probit Model

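Notation:

$y = 1$ if click, $y = -1$ if non-click

$\mathbf{w}$ is the vector of all weights

$\mathbf{x}$ is a sparse binary input vector

Generalised linear model with weights vector $\mathbf{w}$:

$$p(y \mid \mathbf{x}, \mathbf{w}) := \Phi\left(\frac{y \cdot \mathbf{w}^T \mathbf{x}}{\beta}\right)$$

Inverse link function is the probit function:

$$\Phi(t) := \int_{-\infty}^{t} \mathcal{N}(s; 0, 1)\, ds$$

$\beta$ controls the steepness: it corresponds to the standard deviation of additive zero mean noise.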
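To make the role of the steepness parameter concrete, here is a minimal sketch of the probit likelihood using only the standard library. The function names and the score value 0.5 are assumptions made for the example; the probit function is computed from `math.erf`.

```python
import math

def probit(t: float) -> float:
    """Standard normal CDF, Phi(t), written in terms of the error function."""
    return 0.5 * (1.0 + math.erf(t / math.sqrt(2.0)))

def click_likelihood(y: int, score: float, beta: float) -> float:
    """p(y | x, w) = Phi(y * score / beta), with score = w^T x and y in {+1, -1}."""
    return probit(y * score / beta)

score = 0.5  # hypothetical value of w^T x
for beta in (0.25, 1.0, 4.0):
    # Smaller beta gives a steeper curve, so the same score is more decisive.
    print(f"beta={beta}: p(click)={click_likelihood(+1, score, beta):.4f}")
```

For any score, the click and non-click probabilities sum to one, because $\Phi(t) + \Phi(-t) = 1$.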


-

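Observation Noise (Assume Known Noiseless Weights)

$$p(y \mid \mathbf{x}, \mathbf{w}) := \Phi\left(\frac{y \cdot \mathbf{w}^T \mathbf{x}}{\beta}\right)$$

[Figure: axis centred at 0 with the click and no-click regions marked]

Think of $\mathbf{x}$ as indicator variables that select weights: we will soon remove $\mathbf{x}$ from the notation

Example: $\mathbf{x} = [1; 0; 0; 0; 1; 0; \ldots; 0; 1]$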
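The idea that the binary input only selects weights can be sketched as follows. The weight values and the dictionary layout are hypothetical; the feature names and values are borrowed from the earlier slides. The dot product with the binary vector and the plain sum of the selected weights give the same number.

```python
# Hypothetical weight table: one weight per (feature, value) pair.
weights = {
    ("ad_id", "1341201"): 0.30,
    ("ad_id", "9215433"): -0.10,
    ("match_type", "exact"): 0.20,
    ("match_type", "broad"): -0.25,
    ("position", "ML-1"): 0.40,
    ("position", "SB-1"): -0.50,
}
index = {key: i for i, key in enumerate(weights)}  # (feature, value) -> slot in w
w = list(weights.values())

def encode(impression):
    """Return the binary vector x with a 1 for each active (feature, value) pair."""
    x = [0] * len(w)
    for key in impression.items():
        x[index[key]] = 1
    return x

impression = {"ad_id": "1341201", "match_type": "broad", "position": "ML-1"}
x = encode(impression)

dot = sum(wi * xi for wi, xi in zip(w, x))                   # w^T x
selected = sum(weights[key] for key in impression.items())   # sum of active weights
print(x, dot, selected)
```

With roughly 100M possible values for some features, only the second form is practical: the active weights are looked up directly and the vector is never materialised.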

-

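Uncertainty About the Weights: A Bayesian Treatment

Factorizing Gaussian prior over the weights:

$$p(\mathbf{w}) = \prod_{i=1}^{N} \mathcal{N}(w_i; \mu_i, \sigma_i^2)$$

Given $p(y \mid \mathbf{x}, \mathbf{w})$ the posterior is given by:

$$p(\mathbf{w} \mid \mathbf{x}, y) = \frac{p(y \mid \mathbf{x}, \mathbf{w})\, p(\mathbf{w})}{\int p(y \mid \mathbf{x}, \mathbf{w})\, p(\mathbf{w})\, d\mathbf{w}}$$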

Problem: This posterior cannot be represented compactly nor calculated in closed form


-

Desiderata and Approximations

We want

The posterior to remain a factorized Gaussian

Incremental online learning rather than batch

This is how it is done

Approximate inference with latent variables

Single pass approximate (online) schedule


-

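Predicting Average Probability of Click

Now that our posterior over the weights is a factorizing Gaussian, the predictive distribution is:

$$p(y) = \Phi\left(\frac{y \sum_{i=1}^{N} \mu_i}{\sqrt{\beta^2 + \sum_{i=1}^{N} \sigma_i^2}}\right)$$

where the sums run over the active weights.

[Figure: predicted probability of click (up to 100%) against the sum of posterior weight means, centred at 0]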
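A minimal sketch of this prediction, assuming the active weights are given as lists of posterior means and variances (the function names and numbers are illustrative only):

```python
import math

def probit(t: float) -> float:
    """Standard normal CDF."""
    return 0.5 * (1.0 + math.erf(t / math.sqrt(2.0)))

def predict_click(mus, sigma2s, beta):
    """Phi(sum of means / sqrt(beta^2 + sum of variances)) over the active weights."""
    total_mean = sum(mus)
    total_var = beta ** 2 + sum(sigma2s)
    return probit(total_mean / math.sqrt(total_var))

mus = [0.3, 0.2]  # hypothetical posterior means of the active weights
confident = predict_click(mus, [0.01, 0.01], beta=1.0)  # small posterior variances
uncertain = predict_click(mus, [2.0, 2.0], beta=1.0)    # large posterior variances
print(f"confident: {confident:.3f}, uncertain: {uncertain:.3f}")
```

With the same means, larger weight variances pull the prediction toward 50%: uncertainty about the weights acts like extra observation noise.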

-

Principled Exploration

[Figure: density p(pClick) (vertical axis, 0 to 10) against pClick (horizontal axis, 0% to 100%) for two ads]

average: 25% (3 clicks out of 12 impressions)

average: 30% (30 clicks out of 100 impressions)
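The two curves can be approximated with a much simpler stand-in model. The sketch below assumes a Beta posterior over pClick under a uniform Beta(1, 1) prior; this is not adPredictor's Gaussian posterior over weights, only a way to show how the spread shrinks with more impressions. The "optimistic score" (mean plus two standard deviations) is a hypothetical exploration rule chosen for the example.

```python
import math

def beta_posterior(clicks, impressions):
    """Mean and standard deviation of pClick under a uniform Beta(1, 1) prior."""
    a = 1 + clicks
    b = 1 + (impressions - clicks)
    mean = a / (a + b)
    var = a * b / ((a + b) ** 2 * (a + b + 1))
    return mean, math.sqrt(var)

few = beta_posterior(3, 12)      # 3 clicks out of 12 impressions
many = beta_posterior(30, 100)   # 30 clicks out of 100 impressions

for label, (mean, sd) in (("3/12", few), ("30/100", many)):
    optimistic = mean + 2 * sd   # hypothetical optimistic score
    print(f"{label}: mean={mean:.3f} sd={sd:.3f} optimistic={optimistic:.3f}")
```

The ad with fewer impressions has the lower average but the wider distribution, so a rule that rewards uncertainty would still show it occasionally.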

-

Approximate Inference with Latent Variables

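Prior: $p(w_i) = \mathcal{N}(w_i; \mu_i, \sigma_i^2)$

Sum of active weights: $p(s \mid \mathbf{w}) = \delta\left(s = \sum_{i=1}^{N} w_i\right)$

Noisy version thereof: $p(t \mid s) = \mathcal{N}(t; s, \beta^2)$

The sign of $t$ determines click: $p(y \mid t) = \delta(y = \operatorname{sign}(t))$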
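The latent-variable chain above can be simulated directly: draw the weights, sum them, add noise, and take the sign. The sketch below uses hypothetical means, standard deviations and noise level, and compares the simulated click rate with the closed-form probit expression $\Phi\left(\sum_i \mu_i / \sqrt{\beta^2 + \sum_i \sigma_i^2}\right)$.

```python
import math
import random

def probit(t: float) -> float:
    """Standard normal CDF."""
    return 0.5 * (1.0 + math.erf(t / math.sqrt(2.0)))

def sample_click(mus, sigmas, beta, rng):
    """One draw from the generative model: weights -> s -> t -> y."""
    w = [rng.gauss(m, sd) for m, sd in zip(mus, sigmas)]  # w_i ~ N(mu_i, sigma_i^2)
    s = sum(w)                                            # sum of active weights
    t = rng.gauss(s, beta)                                # noisy version of s
    return 1 if t > 0 else -1                             # y = sign(t)

rng = random.Random(0)
mus, sigmas, beta = [0.4, -0.1, 0.2], [0.5, 1.0, 0.3], 1.0  # hypothetical values
n = 100_000
clicks = sum(sample_click(mus, sigmas, beta, rng) == 1 for _ in range(n))
empirical = clicks / n
closed_form = probit(sum(mus) / math.sqrt(beta ** 2 + sum(sd * sd for sd in sigmas)))
print(f"simulated: {empirical:.3f}, closed form: {closed_form:.3f}")
```

The two numbers should agree up to Monte Carlo error, because the sum of independent Gaussians plus Gaussian noise is again Gaussian.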

-

Approximating () and ()

[Figure: two rows of density plots on axes from -5 to 5; in each row one density is multiplied (*) by another to give (=) a third]

-

Updating the Posterior

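[Figure: factor graph in which the weights w1 and w2 are summed (+) into s, which determines the outcome y (Click / No Click); the graph is labelled with the Prediction and Training/Update directions]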

-

Posterior Updates for the Click Event
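The equations for this slide did not survive in the transcript. As a hedged sketch, the update below follows the form given in the ICML 2010 paper cited at the end of the talk, as best recalled: with $\Sigma^2 = \beta^2 + \sum_i \sigma_i^2$ and $t = y \sum_i \mu_i / \Sigma$, each active weight moves by $y\,(\sigma_i^2/\Sigma)\,v(t)$ and its variance shrinks by the factor $1 - (\sigma_i^2/\Sigma^2)\,w(t)$, where $v(t) = \mathcal{N}(t; 0, 1)/\Phi(t)$ and $w(t) = v(t)\,(v(t) + t)$. Function names and example numbers are assumptions.

```python
import math

def pdf(t):
    """Standard normal density."""
    return math.exp(-0.5 * t * t) / math.sqrt(2.0 * math.pi)

def cdf(t):
    """Standard normal CDF."""
    return 0.5 * (1.0 + math.erf(t / math.sqrt(2.0)))

def v(t):
    # Note: cdf(t) underflows for very negative t; a real system would guard this.
    return pdf(t) / cdf(t)

def w(t):
    return v(t) * (v(t) + t)

def update(mus, sigma2s, y, beta):
    """One online update of the active weights; y = +1 for click, -1 for no click."""
    total_var = beta ** 2 + sum(sigma2s)
    total_sd = math.sqrt(total_var)
    t = y * sum(mus) / total_sd
    new_mus = [m + y * (s2 / total_sd) * v(t) for m, s2 in zip(mus, sigma2s)]
    new_sigma2s = [s2 * (1.0 - (s2 / total_var) * w(t)) for s2 in sigma2s]
    return new_mus, new_sigma2s

mus, sigma2s = [0.0, 0.0], [1.0, 0.1]   # hypothetical prior: second weight is well known
click_mus, click_s2 = update(mus, sigma2s, +1, beta=1.0)
noclick_mus, _ = update(mus, sigma2s, -1, beta=1.0)
print(click_mus, click_s2, noclick_mus)
```

The step size for each weight is proportional to its own variance, so uncertain weights move more than well-established ones and every variance shrinks after an observation.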

-

The importance of joint updates

[Figure: two panels, adPredictor and Naive Bayes, each plotting Actual CTR against Predicted CTR on log axes from 0.01% to 100%]

-

Calibration by Isotonic Regression

[Figure: two panels, Calibrated adPredictor and Calibrated Naive Bayes, each plotting Actual CTR against Predicted CTR on log axes from 0.01% to 100%]
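Isotonic regression fits the best non-decreasing map from predicted to actual click rate. A minimal sketch of the standard Pool Adjacent Violators algorithm is shown below; the click outcomes are hypothetical and are assumed to be already sorted by predicted score. The slides do not say which implementation was used.

```python
def isotonic_fit(values):
    """Pool Adjacent Violators: least-squares non-decreasing fit to `values`."""
    blocks = []  # each block is [sum, count]; block means end up non-decreasing
    for value in values:
        blocks.append([value, 1])
        # Merge while the previous block's mean exceeds the last block's mean
        # (compared by cross-multiplication to avoid division).
        while (len(blocks) > 1
               and blocks[-2][0] * blocks[-1][1] > blocks[-1][0] * blocks[-2][1]):
            total, count = blocks.pop()
            blocks[-1][0] += total
            blocks[-1][1] += count
    fitted = []
    for total, count in blocks:
        fitted.extend([total / count] * count)
    return fitted

# Hypothetical click outcomes (1 = click), ordered by increasing predicted score.
outcomes = [0, 0, 1, 0, 1, 0, 1, 1]
calibrated = isotonic_fit(outcomes)
print(calibrated)
```

Each run of equal values in the result is a bin whose calibrated probability is the observed click rate in that bin.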

-

Calibration Can't Improve the ROC

[Figure: ROC curves for Naive Bayes and adPredictor, True Positives (0% to 100%) against False Positives (0% to 100%)]
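The reason is that the ROC curve depends only on the ordering of the scores. A small sketch with hypothetical scores and labels: the area under the ROC curve is computed by pair counting, and a strictly increasing recalibration (here a square root, chosen only for illustration) leaves it unchanged.

```python
def auc(scores, labels):
    """Probability that a random positive outranks a random negative (ties count half)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

scores = [0.02, 0.10, 0.05, 0.30, 0.01, 0.20]  # hypothetical predicted CTRs
labels = [0, 1, 0, 1, 0, 0]                    # 1 = click
recalibrated = [s ** 0.5 for s in scores]      # any strictly increasing map

print(auc(scores, labels), auc(recalibrated, labels))
```

A non-decreasing map such as an isotonic fit can merge neighbouring scores into ties, but it never reverses the order of two ads.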

-

adPredictor Wrap Up

Automatic learning rate

Calibrated: 2% prediction means 2% clicks

Use of very many features, even if correlated

Modelling the uncertainty explicitly

Natural exploration mode

-

Discussion (For Later)

Sample selection bias and exploration

Dynamics: forgetting with time

Pruning uninformative weights

Approximate parallel inference

Hierarchical priors

Input features: the secret sauce

Some of this is detailed in the ICML 2010 paper: Web-Scale Bayesian Click-Through Rate Prediction for Sponsored Search Advertising in Microsoft's Bing Search Engine

We are hiring! Please contact me if you are interested.

-

Thank you!
