Actionable and Political Text Classification using Word Embeddings and LSTM


Adithya Rao, Nemanja Spasojevic

Lithium Technologies | Klout

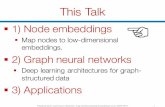

Main Contributions

● Explore contextual classification problems beyond sentiment:

○ Actionability for customer service

○ Political Leaning on social media

● Deep Learning Models are built with Word Embeddings + LSTM and analyzed for several languages.

● 85-91% accuracy for predicting actionability and political leaning.

● Significant improvement over traditional methods.

● Actionability models deployed in production

● Political Leaning model open to download

Online Customer Service

● Sentiment is largely negative in customer complaints.

● Just knowing sentiment by itself is not very useful.

● Prioritizing which messages to respond to can lead to huge cost savings.

Actionable vs Non-Actionable

Actionable

Non-Actionable

Political Leaning

● Mixed sentiments on various issues.

● Sentiment towards candidates is not always indicative of party lines, e.g. Primaries, #NeverTrump

Political Leaning Examples

Republican

Democrat

Actionability Data

● Lithium Response is a platform for customer service.

● Labels:

○ If an agent provided a response, then Actionable.

○ If ignored, then Non-Actionable.

● 6 months of data, from Nov 2014 to May 2015

● 12 million training, 3 million test samples across different languages
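The labeling rule above can be sketched in a few lines. This is only an illustration: the `Message` type, its `agent_responded` field, and the two example messages are hypothetical and are not taken from the Lithium Response data.

```python
from dataclasses import dataclass


@dataclass
class Message:
    text: str
    agent_responded: bool  # hypothetical field: True if an agent replied


def label_actionable(message: Message) -> int:
    """Return 1 (Actionable) if an agent responded, else 0 (Non-Actionable)."""
    return 1 if message.agent_responded else 0


# Made-up messages, for illustration only.
messages = [
    Message("My router has been down since yesterday, please help", True),
    Message("Just switched plans, loving it so far", False),
]
labels = [label_actionable(m) for m in messages]
```

Labels derived this way depend on agent behavior rather than on manual annotation, which is what makes it feasible to label millions of samples.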

Political Leaning Dataset

● Twitter Lists: Use crowdsourced topical lists to find people with known Republican or Democrat leaning.

● Sample messages that they posted over a period of 3 months, from Oct 12th, 2015 to Jan 12th, 2016

● ~330k Training, ~84k Test samples

● List of users available here: https://github.com/klout/opendata
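A minimal sketch of how such a dataset could be assembled, assuming each post carries an author, a date, and a text. The `USER_LEANING` lookup, the `build_samples` helper, the placeholder posts, and the choice to treat both window endpoints as inclusive are all assumptions made for illustration, not the authors' pipeline.

```python
from datetime import date

# Sampling window stated on the slide (endpoints assumed inclusive).
WINDOW_START = date(2015, 10, 12)
WINDOW_END = date(2016, 1, 12)

# Hypothetical user -> leaning lookup, standing in for the list-derived users.
USER_LEANING = {"user_a": "Republican", "user_b": "Democrat"}


def build_samples(posts):
    """Keep posts by known users inside the window, labeled by author leaning."""
    samples = []
    for user, posted_on, text in posts:
        leaning = USER_LEANING.get(user)
        if leaning is not None and WINDOW_START <= posted_on <= WINDOW_END:
            samples.append((text, leaning))
    return samples


# Placeholder posts, for illustration only.
posts = [
    ("user_a", date(2015, 11, 3), "placeholder message 1"),
    ("user_b", date(2016, 2, 1), "placeholder message 2"),   # outside the window
    ("user_c", date(2015, 12, 1), "placeholder message 3"),  # user not on any list
    ("user_b", date(2015, 12, 25), "placeholder message 4"),
]
samples = build_samples(posts)
```

Every message inherits the label of its author, so the label reflects the user's known leaning rather than the content of any single message.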

Deep Network Schematic

● Embedding layer: Maps words to a smaller n-dimensional vector space.

● LSTM layer: Multiple memory units with gates, behaving like a deep network across timesteps.

● Dropout layer: For regularization

● Fully Connected layer: Learns non-linear transformations of higher level features.

● Loss Layer: Binary cross-entropy loss

● Learning: Back-propagation of gradients to train and learn weights.
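The layer stack above can be traced with a small forward pass in plain Python. This is a toy sketch under stated assumptions, not the authors' implementation: the vocabulary, layer sizes, random weights, the tanh activation in the fully connected layer, and the omission of bias terms are all illustrative choices, and back-propagation is not shown.

```python
import math
import random

random.seed(0)

# Toy vocabulary and layer sizes (assumptions, not the authors' settings).
VOCAB = {"<unk>": 0, "please": 1, "help": 2, "my": 3, "order": 4, "thanks": 5}
EMBED_DIM, HIDDEN_DIM, DENSE_DIM = 4, 3, 2


def rand_matrix(rows, cols):
    return [[random.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


def matvec(matrix, vector):
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


embedding = rand_matrix(len(VOCAB), EMBED_DIM)  # one row per word
# One weight matrix per gate (input, forget, output, candidate); biases omitted.
gates = {g: rand_matrix(HIDDEN_DIM, EMBED_DIM + HIDDEN_DIM) for g in "ifoc"}
dense_w = rand_matrix(DENSE_DIM, HIDDEN_DIM)
out_w = rand_matrix(1, DENSE_DIM)


def lstm_step(x, h, c):
    """One LSTM timestep on the concatenation of input and previous hidden state."""
    z = x + h
    i = [sigmoid(a) for a in matvec(gates["i"], z)]
    f = [sigmoid(a) for a in matvec(gates["f"], z)]
    o = [sigmoid(a) for a in matvec(gates["o"], z)]
    g = [math.tanh(a) for a in matvec(gates["c"], z)]
    c_new = [fj * cj + ij * gj for fj, cj, ij, gj in zip(f, c, i, g)]
    h_new = [oj * math.tanh(cj) for oj, cj in zip(o, c_new)]
    return h_new, c_new


def predict(tokens, dropout_rate=0.0):
    """Embedding -> LSTM -> dropout -> fully connected -> sigmoid probability."""
    h, c = [0.0] * HIDDEN_DIM, [0.0] * HIDDEN_DIM
    for tok in tokens:
        h, c = lstm_step(embedding[VOCAB.get(tok, 0)], h, c)
    if 0.0 < dropout_rate < 1.0:  # inverted dropout, used only during training
        keep = 1.0 - dropout_rate
        h = [hj / keep if random.random() < keep else 0.0 for hj in h]
    hidden = [math.tanh(a) for a in matvec(dense_w, h)]
    return sigmoid(matvec(out_w, hidden)[0])


def binary_cross_entropy(p, y):
    eps = 1e-12  # guards against log(0)
    return -(y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps))


p = predict(["please", "help", "my", "order"])
loss = binary_cross_entropy(p, 1)
```

With untrained random weights the predicted probability carries no meaning; training would adjust `embedding`, `gates`, `dense_w`, and `out_w` by back-propagating the gradient of `loss`.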

Language-based Performance

Deep Learning vs Traditional Techniques

LSTM and Embedding Units

Actionability Predictions

Political Leaning Predictions

Political Leaning Predictions (cont.)

Future Work and Improvements

● Analysis of word embeddings, mapping embeddings to word clusters.

● Exploring other architectures with LSTMs and RNNs for training.

● Choosing optimal hyperparameters.

● Time sensitivity of training models for Political Leaning.

Questions?

Thank you!

Contact info:

Adithya Rao [email protected]

Nemanja Spasojevic [email protected]

Github link: https://github.com/klout/opendata
