
Data Mining: Concepts and Techniques Chapter 6

Data Mining: Concepts and Techniques

-

Chapter 6: Mining Association Rules in Large Databases
- Association rule mining
- Mining single-dimensional Boolean association rules from transactional databases
- Mining multilevel association rules from transactional databases
- Mining multidimensional association rules from transactional databases and data warehouse
- From association mining to correlation analysis
- Constraint-based association mining
- Summary

-

What Is Association Mining?
- Association rule mining: finding frequent patterns, associations, correlations, or causal structures among sets of items or objects in transaction databases, relational databases, and other information repositories
- Applications: basket data analysis, cross-marketing, catalog design, loss-leader analysis, clustering, classification, etc.
- Examples
  - Rule form: Body => Head [support, confidence]
  - buys(x, diapers) => buys(x, beers) [0.5%, 60%]
  - major(x, CS) ^ takes(x, DB) => grade(x, A) [1%, 75%]

-

Association Rule: Basic Concepts
- Given: (1) a database of transactions, (2) each transaction is a list of items (purchased by a customer in a visit)
- Find: all rules that correlate the presence of one set of items with that of another set of items
  - E.g., 98% of people who purchase tires and auto accessories also get automotive services done
- Applications
  - * => Maintenance Agreement (what should the store do to boost Maintenance Agreement sales?)
  - Home Electronics => * (what other products should the store stock up on?)
  - Attached mailing in direct marketing
  - Detecting ping-ponging of patients, faulty collisions

-

Rule Measures: Support and Confidence
- Find all the rules X & Y => Z with minimum confidence and support
  - support, s: probability that a transaction contains {X, Y, Z}
  - confidence, c: conditional probability that a transaction having {X, Y} also contains Z
- With minimum support 50% and minimum confidence 50%, we have
  - A => C (50%, 66.6%)
  - C => A (50%, 100%)

[Figure: Venn diagram of customers who buy diapers, customers who buy beer, and customers who buy both]

| Transaction ID | Items Bought |
|---|---|
| 2000 | A, B, C |
| 1000 | A, C |
| 4000 | A, D |
| 5000 | B, E, F |
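The support and confidence figures quoted for A => C and C => A can be checked directly against the four transactions in the table. The following is a minimal sketch; the helper names `support` and `confidence` are illustrative and not part of the slides.

```python
# Transactions from the table above (transaction ID -> items bought).
transactions = {
    2000: {"A", "B", "C"},
    1000: {"A", "C"},
    4000: {"A", "D"},
    5000: {"B", "E", "F"},
}

def support(itemset, db):
    """Fraction of transactions that contain every item in `itemset`."""
    itemset = set(itemset)
    return sum(itemset <= items for items in db.values()) / len(db)

def confidence(body, head, db):
    """support(body together with head) divided by support(body)."""
    return support(set(body) | set(head), db) / support(body, db)

print(support({"A", "C"}, transactions))       # support of A => C
print(confidence({"A"}, {"C"}, transactions))  # confidence of A => C
print(confidence({"C"}, {"A"}, transactions))  # confidence of C => A
```

Support is symmetric in the two sides of a rule, while confidence depends on which side is the body, which is why the two rules share 50% support but differ in confidence.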

-

Association Rule Mining: A Road Map
- Boolean vs. quantitative associations (based on the types of values handled)
  - buys(x, SQLServer) ^ buys(x, DMBook) => buys(x, DBMiner) [0.2%, 60%]
  - age(x, 30..39) ^ income(x, 42..48K) => buys(x, PC) [1%, 75%]
- Single-dimensional vs. multidimensional associations (see the examples above)
- Single-level vs. multiple-level analysis
  - What brands of beers are associated with what brands of diapers?
- Various extensions
  - Correlation, causality analysis
    - Association does not necessarily imply correlation or causality
  - Maxpatterns and closed itemsets
  - Constraints enforced
    - E.g., small sales (sum < 100) trigger big buys (sum > 1,000)?

-

Mining Association Rules: An Example
- Min. support 50%, min. confidence 50%

| Transaction ID | Items Bought |
|---|---|
| 2000 | A, B, C |
| 1000 | A, C |
| 4000 | A, D |
| 5000 | B, E, F |

| Frequent Itemset | Support |
|---|---|
| {A} | 75% |
| {B} | 50% |
| {C} | 50% |
| {A, C} | 50% |

- For rule A => C:
  - support = support({A, C}) = 50%
  - confidence = support({A, C}) / support({A}) = 66.6%
- The Apriori principle: any subset of a frequent itemset must be frequent

-

Mining Frequent Itemsets: the Key Step
- Find the frequent itemsets: the sets of items that have minimum support
  - A subset of a frequent itemset must also be a frequent itemset
    - i.e., if {A, B} is a frequent itemset, both {A} and {B} must be frequent itemsets
  - Iteratively find frequent itemsets with cardinality from 1 to k (k-itemsets)
- Use the frequent itemsets to generate association rules

-

The Apriori Algorithm
- Join step: Ck is generated by joining Lk-1 with itself
- Prune step: any (k-1)-itemset that is not frequent cannot be a subset of a frequent k-itemset
- Pseudo-code:

    Ck: candidate itemsets of size k
    Lk: frequent itemsets of size k

    L1 = {frequent items};
    for (k = 1; Lk != ∅; k++) do begin
        Ck+1 = candidates generated from Lk;
        for each transaction t in database do
            increment the count of all candidates in Ck+1 that are contained in t
        Lk+1 = candidates in Ck+1 with min_support
    end
    return ∪k Lk;

-

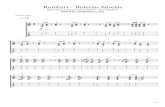

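The Apriori Algorithm: Example

Database D:

| TID | Items |
|---|---|
| 100 | 1 3 4 |
| 200 | 2 3 5 |
| 300 | 1 2 3 5 |
| 400 | 2 5 |

Scan D to count C1:

| itemset | sup. |
|---|---|
| {1} | 2 |
| {2} | 3 |
| {3} | 3 |
| {4} | 1 |
| {5} | 3 |

L1:

| itemset | sup. |
|---|---|
| {1} | 2 |
| {2} | 3 |
| {3} | 3 |
| {5} | 3 |

C2 (generated from L1): {1 2}, {1 3}, {1 5}, {2 3}, {2 5}, {3 5}

Scan D to count C2:

| itemset | sup |
|---|---|
| {1 2} | 1 |
| {1 3} | 2 |
| {1 5} | 1 |
| {2 3} | 2 |
| {2 5} | 3 |
| {3 5} | 2 |

L2:

| itemset | sup |
|---|---|
| {1 3} | 2 |
| {2 3} | 2 |
| {2 5} | 3 |
| {3 5} | 2 |

C3 (generated from L2): {2 3 5}

Scan D to obtain L3:

| itemset | sup |
|---|---|
| {2 3 5} | 2 |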
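The level-wise loop can be traced on database D with a short program. This is a sketch rather than the slides' implementation: it assumes a minimum support count of 2 (inferred from the tables, where {4} with count 1 is dropped), and its join step simply unions pairs of frequent k-itemsets instead of using the ordered prefix join, which yields the same candidates once pruning is applied.

```python
from itertools import combinations

def apriori(transactions, min_count):
    """Return {frozenset: support count} for all frequent itemsets."""
    transactions = [frozenset(t) for t in transactions]
    # L1: count single items and keep the frequent ones.
    counts = {}
    for t in transactions:
        for item in t:
            key = frozenset([item])
            counts[key] = counts.get(key, 0) + 1
    level = {s: c for s, c in counts.items() if c >= min_count}
    frequent = dict(level)
    k = 1
    while level:
        # Join step: union pairs of frequent k-itemsets into (k+1)-itemsets.
        candidates = {a | b for a in level for b in level if len(a | b) == k + 1}
        # Prune step: every k-subset of a candidate must itself be frequent.
        candidates = {
            c for c in candidates
            if all(frozenset(s) in level for s in combinations(c, k))
        }
        # Scan the database once to count the surviving candidates.
        counts = {c: sum(c <= t for t in transactions) for c in candidates}
        level = {s: c for s, c in counts.items() if c >= min_count}
        frequent.update(level)
        k += 1
    return frequent

database = [{1, 3, 4}, {2, 3, 5}, {1, 2, 3, 5}, {2, 5}]
result = apriori(database, min_count=2)
for itemset, count in sorted(result.items(), key=lambda kv: (len(kv[0]), sorted(kv[0]))):
    print(sorted(itemset), count)
```

The run ends when the join over L3 produces no 4-itemsets, so the loop stops with an empty level.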

-

How to Generate Candidates?
- Suppose the items in Lk-1 are listed in an order
- Step 1: self-joining Lk-1

    insert into Ck
    select p.item1, p.item2, ..., p.itemk-1, q.itemk-1
    from Lk-1 p, Lk-1 q
    where p.item1 = q.item1, ..., p.itemk-2 = q.itemk-2, p.itemk-1 < q.itemk-1

- Step 2: pruning

    forall itemsets c in Ck do
        forall (k-1)-subsets s of c do
            if (s is not in Lk-1) then delete c from Ck

-

How to Count Supports of Candidates?
- Why is counting supports of candidates a problem?
  - The total number of candidates can be huge
  - One transaction may contain many candidates
- Method:
  - Candidate itemsets are stored in a hash-tree
  - A leaf node of the hash-tree contains a list of itemsets and counts
  - An interior node contains a hash table
  - Subset function: finds all the candidates contained in a transaction

-

Example of Generating Candidates
- L3 = {abc, abd, acd, ace, bcd}
- Self-joining: L3 * L3
  - abcd from abc and abd
  - acde from acd and ace
- Pruning:
  - acde is removed because ade is not in L3
- C4 = {abcd}
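The join and prune steps can be reproduced on this example. The sketch below represents each itemset as a sorted tuple of items, which is an assumption made for illustration; `generate_candidates` is a hypothetical helper name and expects a non-empty input level.

```python
from itertools import combinations

def generate_candidates(prev_level):
    """Build C_k from L_(k-1); itemsets are sorted tuples of items."""
    prev = sorted(prev_level)
    prev_set = set(prev)
    k_minus_1 = len(prev[0])
    candidates = []
    # Join step: pair itemsets that agree on their first k-2 items.
    for p, q in combinations(prev, 2):
        if p[:-1] == q[:-1] and p[-1] < q[-1]:
            candidates.append(p + (q[-1],))
    # Prune step: drop candidates that have an infrequent (k-1)-subset.
    return [
        c for c in candidates
        if all(s in prev_set for s in combinations(c, k_minus_1))
    ]

L3 = [tuple(s) for s in ("abc", "abd", "acd", "ace", "bcd")]
C4 = generate_candidates(L3)
print(["".join(c) for c in C4])
```

The join produces abcd and acde; the prune step then discards acde because its subset ade is missing from L3.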

-

Methods to Improve Apriori's Efficiency
- Hash-based itemset counting: a k-itemset whose corresponding hashing bucket count is below the threshold cannot be frequent
- Transaction reduction: a transaction that does not contain any frequent k-itemset is useless in subsequent scans
- Partitioning: any itemset that is potentially frequent in DB must be frequent in at least one of the partitions of DB
- Sampling: mining on a subset of the given data, with a lower support threshold plus a method to determine the completeness
- Dynamic itemset counting: add new candidate itemsets only when all of their subsets are estimated to be frequent

-

Is Apriori Fast Enough? Performance Bottlenecks
- The core of the Apriori algorithm:
  - Use frequent (k - 1)-itemsets to generate candidate frequent k-itemsets
  - Use database scan and pattern matching to collect counts for the candidate itemsets
- The bottleneck of Apriori: candidate generation
  - Huge candidate sets:
    - 10^4 frequent 1-itemsets will generate 10^7 candidate 2-itemsets
    - To discover a frequent pattern of size 100, e.g., {a1, a2, ..., a100}, one needs to generate 2^100 ≈ 10^30 candidates
  - Multiple scans of the database:
    - Needs (n + 1) scans, where n is the length of the longest pattern

-

Mining Frequent Patterns Without Candidate Generation
- Compress a large database into a compact Frequent-Pattern tree (FP-tree) structure
  - Highly condensed, but complete for frequent pattern mining
  - Avoids costly database scans
- Develop an efficient, FP-tree-based frequent pattern mining method
  - A divide-and-conquer methodology: decompose mining tasks into smaller ones
  - Avoid candidate generation: sub-database test only!

-

Construct FP-tree from a Transaction DB
- min_support = 0.5

| TID | Items bought | (Ordered) frequent items |
|---|---|---|
| 100 | {f, a, c, d, g, i, m, p} | {f, c, a, m, p} |
| 200 | {a, b, c, f, l, m, o} | {f, c, a, b, m} |
| 300 | {b, f, h, j, o} | {f, b} |
| 400 | {b, c, k, s, p} | {c, b, p} |
| 500 | {a, f, c, e, l, p, m, n} | {f, c, a, m, p} |

- Steps:
  1. Scan DB once, find frequent 1-itemsets (single item patterns)
  2. Order frequent items in frequency descending order
  3. Scan DB again, construct FP-tree
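The two-scan construction can be sketched as follows. This is an illustrative implementation, not the authors' code: `FPNode` and `build_fp_tree` are hypothetical names, node-links and the header table are omitted, and min_support = 0.5 over five transactions is read as a minimum count of 3. Items with equal counts can be ordered in more than one way, so the example passes the order f, c, a, b, m, p used on the slides explicitly.

```python
from collections import Counter

class FPNode:
    def __init__(self, item, parent=None):
        self.item, self.parent = item, parent
        self.count = 0
        self.children = {}

def build_fp_tree(transactions, min_count, order=None):
    """Two scans: count the items, then insert the ordered frequent items."""
    counts = Counter(item for t in transactions for item in t)
    frequent = {i for i, c in counts.items() if c >= min_count}
    if order is None:
        # Descending frequency; ties broken alphabetically (an assumption).
        order = sorted(frequent, key=lambda i: (-counts[i], i))
    rank = {item: r for r, item in enumerate(order)}
    root = FPNode(None)
    for t in transactions:
        node = root
        for item in sorted((i for i in t if i in frequent), key=rank.get):
            # Reuse the child for a shared prefix, or start a new branch.
            node = node.children.setdefault(item, FPNode(item, node))
            node.count += 1
    return root, counts

# Each string is one transaction; each character is one item.
db = ["facdgimp", "abcflmo", "bfhjo", "bcksp", "afcelpmn"]
root, counts = build_fp_tree(db, min_count=3, order=list("fcabmp"))

def show(node, depth=0):
    for child in node.children.values():
        print("  " * depth + f"{child.item}:{child.count}")
        show(child, depth + 1)

show(root)
```

Transactions that share a prefix of ordered frequent items share a path in the tree, which is where the compression comes from.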

-

Benefits of the FP-tree Structure
- Completeness:
  - Never breaks a long pattern of any transaction
  - Preserves complete information for frequent pattern mining
- Compactness:
  - Reduces irrelevant information: infrequent items are gone
  - Frequency descending ordering: more frequent items are more likely to be shared
  - Never larger than the original database (not counting node-links and counts)
  - Example: for the Connect-4 DB, the compression ratio could be over 100

-

Mining Frequent Patterns Using FP-tree
- General idea (divide-and-conquer)
  - Recursively grow frequent pattern paths using the FP-tree
- Method
  - For each item, construct its conditional pattern base, and then its conditional FP-tree
  - Repeat the process on each newly created conditional FP-tree
  - Until the resulting FP-tree is empty, or it contains only one path (a single path will generate all the combinations of its sub-paths, each of which is a frequent pattern)

-

Major Steps to Mine FP-tree
1. Construct the conditional pattern base for each node in the FP-tree
2. Construct the conditional FP-tree from each conditional pattern base
3. Recursively mine conditional FP-trees and grow the frequent patterns obtained so far
   - If the conditional FP-tree contains a single path, simply enumerate all the patterns

-

Step 1: From FP-tree to Conditional Pattern Base
- Start at the frequent header table in the FP-tree
- Traverse the FP-tree by following the link of each frequent item
- Accumulate all of the transformed prefix paths of that item to form a conditional pattern base

Conditional pattern bases:

| item | cond. pattern base |
|---|---|
| c | f:3 |
| a | fc:3 |
| b | fca:1, f:1, c:1 |
| m | fca:2, fcab:1 |
| p | fcam:2, cb:1 |
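The conditional pattern bases in the table can be checked without building the tree. The sketch below is a shortcut, not the node-link traversal described on the slide: it groups the ordered frequent-item lists by the prefix that precedes each item, which gives the same result because transactions share an FP-tree node for an item exactly when they share the prefix leading to it. The function name is hypothetical.

```python
from collections import Counter

# Ordered frequent items of the five example transactions (TID 100 to 500).
ordered = ["fcamp", "fcabm", "fb", "cbp", "fcamp"]

def conditional_pattern_base(item, ordered_transactions):
    """Count each non-empty prefix that precedes `item`."""
    base = Counter()
    for t in ordered_transactions:
        if item in t:
            prefix = t[: t.index(item)]
            if prefix:
                base[prefix] += 1
    return dict(base)

for item in "cabmp":
    print(item, conditional_pattern_base(item, ordered))
```

Item f has an empty conditional pattern base because it always comes first in the ordering.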

-

Properties of FP-tree for Conditional Pattern Base Construction
- Node-link property
  - For any frequent item ai, all the possible frequent patterns that contain ai can be obtained by following ai's node-links, starting from ai's head in the FP-tree header
- Prefix path property
  - To calculate the frequent patterns for a node ai in a path P, only the prefix sub-path of ai in P needs to be accumulated, and its frequency count should carry the same count as node ai

-

Step 2: Construct Conditional FP-tree
- For each pattern base
  - Accumulate the count for each item in the base
  - Construct the FP-tree for the frequent items of the pattern base

Header table:

| Item | Frequency |
|---|---|
| f | 4 |
| c | 4 |
| a | 3 |
| b | 3 |
| m | 3 |
| p | 3 |

FP-tree (item:count):
- {}
  - f:4
    - c:3
      - a:3
        - m:2
          - p:2
        - b:1
          - m:1
    - b:1
  - c:1
    - b:1
      - p:1

- m-conditional pattern base: fca:2, fcab:1
- All frequent patterns concerning m: m, fm, cm, am, fcm, fam, cam, fcam

-

Mining Frequent Patterns by Creating Conditional Pattern-Bases

-

Step 3: Recursively Mine the Conditional FP-tree
- Cond. pattern base of "am": (fc:3)
- Cond. pattern base of "cm": (f:3)
  - cm-conditional FP-tree: {} -> f:3
- Cond. pattern base of "cam": (f:3)
  - cam-conditional FP-tree: {} -> f:3

-

Single FP-tree Path Generation
- Suppose an FP-tree T has a single path P
- The complete set of frequent patterns of T can be generated by enumerating all the combinations of the sub-paths of P
- m-conditional FP-tree: {} -> f:3 -> c:3 -> a:3
- All frequent patterns concerning m: m, fm, cm, am, fcm, fam, cam, fcam
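The enumeration for a single-path tree is small enough to write out. The sketch below takes the path f, c, a of the m-conditional FP-tree and appends the suffix m to every combination of path items; the function name is illustrative, and support counts are left out because every node on this path has the same count of 3.

```python
from itertools import combinations

def patterns_from_single_path(path, suffix):
    """Combine every subset of the path's items with the suffix pattern."""
    patterns = []
    for r in range(len(path) + 1):
        for combo in combinations(path, r):
            patterns.append("".join(combo) + suffix)
    return patterns

print(patterns_from_single_path("fca", "m"))
```

A path of n items yields 2^n patterns, so here the three-item path gives the eight patterns listed on the slide.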

-

Principles of Frequent Pattern Growth
- Pattern growth property
  - Let α be a frequent itemset in DB, B be α's conditional pattern base, and β be an itemset in B. Then α ∪ β is a frequent itemset in DB iff β is frequent in B.
- "abcdef" is a frequent pattern if and only if
  - "abcde" is a frequent pattern, and
  - "f" is frequent in the set of transactions containing "abcde"

-

Why Is Frequent Pattern Growth Fast?
- Our performance study shows
  - FP-growth is an order of magnitude faster than Apriori, and is also faster than tree-projection
- Reasoning
  - No candidate generation, no candidate test
  - Uses a compact data structure
  - Eliminates repeated database scans
  - Basic operations are counting and FP-tree building

-

FP-growth vs. Apriori: Scalability With the Support Threshold
- Data set T25I20D10K

[Chart: run time (sec.) against support threshold (%) for D1 FP-growth and D1 Apriori]

| Support threshold (%) | D1 FP-growth run time (sec.) | D1 Apriori run time (sec.) |
|---|---|---|
| 3 | 2 | 2 |
| 2 | 4 | 3 |
| 1.5 | 4 | 6 |
| 1 | 5 | 24 |
| 0.8 | 5 | 66 |
| 0.5 | 7 | 500 |
| 0.3 | 11 | |
| 0.2 | 18 | |
| 0.1 | 44 | |

-

FP-growth vs. Tree-Projection: Scalability with Support Threshold
- Data set T25I20D100K

[Chart: run time (sec.) against support threshold (%) for D2 FP-growth and D2 TreeProjection]

| Support threshold (%) | D2 FP-growth run time (sec.) | D2 TreeProjection run time (sec.) |
|---|---|---|
| 3 | 10 | 11 |
| 2 | 11 | 11 |
| 1.5 | 15 | 21 |
| 1 | 26 | 57 |
| 0.8 | 35 | 123 |
| 0.5 | 58 | 300 |
| 0.3 | 91 | |
| 0.2 | 107 | |
| 0.1 | 137 | |

-

Presentation of Association Rules (Table Form)

-

Visualization of Association Rule Using Plane Graph

-

Visualization of Association Rule Using Rule Graph

-

Iceberg Queries
- Iceberg query: compute aggregates over one attribute or a set of attributes, only for those groups whose aggregate value is above a certain threshold
- Example:

    select P.custID, P.itemID, sum(P.qty)
    from purchase P
    group by P.custID, P.itemID
    having sum(P.qty) >= 10

- Compute iceberg queries efficiently by Apriori:
  - First compute lower dimensions
  - Then compute higher dimensions only when all the lower ones are above the threshold
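The Apriori-style evaluation can be sketched in a few lines. The example below assumes non-negative quantities, so that a (custID, itemID) pair can only reach the threshold if the customer's total and the item's total both reach it. The function name and the sample rows are hypothetical.

```python
from collections import defaultdict

def iceberg(purchases, threshold):
    """Return {(custID, itemID): total qty} for totals >= threshold."""
    # Lower dimensions first: total quantity per customer and per item.
    by_cust, by_item = defaultdict(int), defaultdict(int)
    for cust, item, qty in purchases:
        by_cust[cust] += qty
        by_item[item] += qty
    # Higher dimension: aggregate only pairs whose lower-dimension totals
    # both pass the threshold (valid because quantities are non-negative).
    totals = defaultdict(int)
    for cust, item, qty in purchases:
        if by_cust[cust] >= threshold and by_item[item] >= threshold:
            totals[(cust, item)] += qty
    return {pair: total for pair, total in totals.items() if total >= threshold}

# Hypothetical rows of the purchase table: (custID, itemID, qty).
rows = [("c1", "i1", 6), ("c1", "i1", 5), ("c1", "i2", 3),
        ("c2", "i1", 2), ("c2", "i3", 9)]
print(iceberg(rows, threshold=10))
```

Pairs involving items i2 and i3 are never aggregated because those items fall below the threshold on their own.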

-

Multiple-Level Association Rules
- Items often form a hierarchy
- Items at the lower level are expected to have lower support
- Rules regarding itemsets at appropriate levels could be quite useful
- The transaction database can be encoded based on dimensions and levels
- We can explore shared multi-level mining

-

Mining Multi-Level Associations
- A top-down, progressive deepening approach:
  - First find high-level strong rules: milk => bread [20%, 60%]
  - Then find their lower-level "weaker" rules: 2% milk => wheat bread [6%, 50%]
- Variations at mining multiple-level association rules:
  - Level-crossed association rules: 2% milk => Wonder wheat bread
  - Association rules with multiple, alternative hierarchies: 2% milk => Wonder bread

-

Multi-level Association: Uniform Support vs. Reduced Support
- Uniform support: the same minimum support for all levels
  - Pro (+): one minimum support threshold; no need to examine itemsets containing any item whose ancestors do not have minimum support
  - Con (-): lower-level items do not occur as frequently. If the support threshold is
    - too high => miss low-level associations
    - too low => generate too many high-level associations
- Reduced support: reduced minimum support at lower levels
  - There are 4 search strategies:
    - Level-by-level independent
    - Level-cross filtering by k-itemset
    - Level-cross filtering by single item
    - Controlled level-cross filtering by single item

-

Uniform Support
- Multi-level mining with uniform support
  - Level 1 (min_sup = 5%): Milk [support = 10%]
  - Level 2 (min_sup = 5%): 2% Milk [support = 6%], Skim Milk [support = 4%]

-

Reduced Support
- Multi-level mining with reduced support
  - Level 1 (min_sup = 5%): Milk [support = 10%]
  - Level 2 (min_sup = 3%): 2% Milk [support = 6%], Skim Milk [support = 4%]

-

Multi-level Association: Redundancy Filtering
- Some rules may be redundant due to "ancestor" relationships between items
- Example
  - milk => wheat bread [support = 8%, confidence = 70%]
  - 2% milk => wheat bread [support = 2%, confidence = 72%]
- We say the first rule is an ancestor of the second rule
- A rule is redundant if its support is close to the "expected" value, based on the rule's ancestor
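One way to make the "expected" value concrete is to scale the ancestor rule's support by the share of the ancestor item that the descendant item accounts for. The sketch below rests on two assumptions that are not stated on the slide: that about one quarter of milk sales are 2% milk (`share=0.25`), and that "close" means within a relative tolerance of 10%. The function name is hypothetical.

```python
def is_redundant(support, ancestor_support, share, tolerance=0.1):
    """True if `support` is within `tolerance` of the expected support.

    share: assumed fraction of the ancestor item's occurrences that
           belong to the descendant item (hypothetical input).
    """
    expected = ancestor_support * share
    return abs(support - expected) <= tolerance * expected

# milk => wheat bread has support 8%; 2% milk => wheat bread has support 2%.
print(is_redundant(0.02, 0.08, share=0.25))
```

Under these assumptions the expected support is 8% x 0.25 = 2%, which matches the observed 2%, so the more specific rule adds no information beyond its ancestor.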

-

Multi-Level Mining: Progressive Deepening
- A top-down, progressive deepening approach:
  - First mine high-level frequent items: milk (15%), bread (10%)
  - Then mine their lower-level "weaker" frequent itemsets: 2% milk (5%), wheat bread (4%)
- Different min_support thresholds across multiple levels lead to different algorithms:
  - If adopting the same min_support across multiple levels, then toss t if any of t's ancestors is infrequent
  - If adopting reduced min_support at lower levels, then examine only those descendants whose ancestor's support is frequent/non-negligible

-

Progressive Refinement of Data Mining Quality
- Why progressive refinement?
  - A mining operator can be expensive or cheap, fine or rough
  - Trade speed for quality: step-by-step refinement
- Superset coverage property:
  - Preserve all the positive answers: allow a false positive test but not a false negative test
- Two- or multi-step mining:
  - First apply a rough/cheap operator (superset coverage)
  - Then apply an expensive algorithm on a substantially reduced candidate set (Koperski & Han, SSD95)

-

Progressive Refinement Mining of Spatial Association Rules
- Hierarchy of spatial relationships:
  - g_close_to: near_by, touch, intersect, contain, etc.
  - First search for a rough relationship and then refine it
- Two-step mining of spatial association:
  - Step 1: rough spatial computation (as a filter)
    - Using MBR or R-tree for rough estimation
  - Step 2: detailed spatial algorithm (as refinement)
    - Apply only to those objects which have passed the rough spatial association test (no less than min_support)

-

Multi-Dimensional Association: Concepts
- Single-dimensional rules:
  - buys(X, milk) => buys(X, bread)
- Multi-dimensional rules: >= 2 dimensions or predicates
  - Inter-dimension association rules (no repeated predicates)
    - age(X, 19-25) ^ occupation(X, student) => buys(X, coke)
  - Hybrid-dimension association rules (repeated predicates)
    - age(X, 19-25) ^ buys(X, popcorn) => buys(X, coke)
- Categorical attributes
  - Finite number of possible values, no ordering among values
- Quantitative attributes
  - Numeric, implicit ordering among values

-

Techniques for Mining MD Associations
- Search for frequent k-predicate sets:
  - Example: {age, occupation, buys} is a 3-predicate set
  - Techniques can be categorized by how quantitative attributes such as age are treated
1. Using static discretization of quantitative attributes
   - Quantitative attributes are statically discretized by using predefined concept hierarchies
2. Quantitative association rules
   - Quantitative attributes are dynamically discretized into "bins" based on the distribution of the data
3. Distance-based association rules
   - This is a dynamic discretization process that considers the distance between data points

-

Static Discretization of Quantitative Attributes
- Discretized prior to mining using a concept hierarchy
- Numeric values are replaced by ranges
- In a relational database, finding all frequent k-predicate sets will require k or k+1 table scans
- A data cube is well suited for mining
  - The cells of an n-dimensional cuboid correspond to the predicate sets
  - Mining from data cubes can be much faster

-

Quantitative Association Rules
- age(X, 30-34) ^ income(X, 24K - 48K) => buys(X, high resolution TV)
- Numeric attributes are dynamically discretized
  - Such that the confidence or compactness of the rules mined is maximized
- 2-D quantitative association rules: Aquan1 ^ Aquan2 => Acat
- Cluster "adjacent" association rules to form general rules using a 2-D grid
- Example: [figure]

-

ARCS (Association Rule Clustering System)
How does ARCS work?

1. Binning

2. Find frequent predicate sets

3. Clustering

4. Optimize

-

Limitations of ARCS
- Only quantitative attributes on the LHS of rules
- Only 2 attributes on the LHS (2-D limitation)
- An alternative to ARCS
  - Non-grid-based
  - Equi-depth binning
  - Clustering based on a measure of partial completeness
  - "Mining Quantitative Association Rules in Large Relational Tables" by R. Srikant and R. Agrawal

-

Mining Distance-based Association Rules
- Binning methods do not capture the semantics of interval data
- Distance-based partitioning gives a more meaningful discretization, considering:
  - density/number of points in an interval
  - "closeness" of points in an interval

| Price ($) | Equi-width (width $10) | Equi-depth (depth 2) | Distance-based |
|---|---|---|---|
| 7 | [0,10] | [7,20] | [7,7] |
| 20 | [11,20] | [22,50] | [20,22] |
| 22 | [21,30] | [51,53] | [50,53] |
| 50 | [31,40] | | |
| 51 | [41,50] | | |
| 53 | [51,60] | | |
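The equi-depth and distance-based columns can be reproduced on the six prices. The sketch below is illustrative only: `equi_depth` chunks the sorted values, while `gap_intervals` is a simple stand-in for distance-based partitioning that starts a new interval whenever the gap to the previous value exceeds `max_gap`. The gap rule and the value `max_gap=5` are assumptions, not the clustering method used in the slides.

```python
prices = [7, 20, 22, 50, 51, 53]

def equi_depth(values, depth):
    """Intervals that each cover `depth` consecutive sorted values."""
    values = sorted(values)
    chunks = (values[i:i + depth] for i in range(0, len(values), depth))
    return [(chunk[0], chunk[-1]) for chunk in chunks]

def gap_intervals(values, max_gap):
    """Group sorted values; a gap larger than `max_gap` starts a new interval."""
    values = sorted(values)
    intervals = [[values[0], values[0]]]
    for v in values[1:]:
        if v - intervals[-1][1] <= max_gap:
            intervals[-1][1] = v        # extend the current interval
        else:
            intervals.append([v, v])    # start a new interval
    return [tuple(i) for i in intervals]

print(equi_depth(prices, depth=2))
print(gap_intervals(prices, max_gap=5))
```

Equi-depth binning puts 22 and 50 in the same interval even though they are far apart, whereas the gap-based grouping keeps nearby prices together.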

-

Clusters and Distance Measurements
- S[X] is a set of N tuples t1, t2, ..., tN, projected on the attribute set X
- The diameter of S[X] is the average pairwise distance between the tuples projected on X
- dist_X: distance metric, e.g., Euclidean distance or Manhattan distance
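Written out, an average pairwise distance over the N projected tuples takes the form below. This is a reconstruction under the assumption that the slide uses the usual definition; the notation d(S[X]) and t_i[X] is chosen here for illustration.

```latex
d(S[X]) = \frac{\sum_{i=1}^{N} \sum_{j=1}^{N} \mathrm{dist}_X\bigl(t_i[X],\, t_j[X]\bigr)}{N(N-1)}
```

For example, for the two prices 20 and 22 with absolute difference as the metric, the double sum is 2 + 2 = 4 and N(N-1) = 2, so the diameter is 2.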

-

Clusters and Distance Measurements (Cont.)
- The diameter, d, assesses the density of a cluster C_X
- Finding clusters and distance-based rules
  - The density threshold, d0, replaces the notion of support
  - Uses a modified version of the BIRCH clustering algorithm

-

Interestingness Measurements
Objective measures
  Two popular measurements: support and confidence

Subjective measures (Silberschatz & Tuzhilin, KDD95)
  A rule (pattern) is interesting if
    it is unexpected (surprising to the user); and/or
    actionable (the user can do something with it)

-

Criticism to Support and Confidence
Example 1: (Aggarwal & Yu, PODS98)
Among 5000 students:
  3000 play basketball
  3750 eat cereal
  2000 both play basketball and eat cereal
play basketball ⇒ eat cereal [40%, 66.7%] is misleading because the overall percentage of students eating cereal is 75%, which is higher than 66.7%.
play basketball ⇒ not eat cereal [20%, 33.3%] is far more accurate, although with lower support and confidence.

             basketball   not basketball   sum(row)
cereal       2000         1750             3750
not cereal   1000         250              1250
sum(col.)    3000         2000             5000
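
The numbers in this example can be checked with a few lines of code. The sketch below computes support and confidence from the counts in the contingency table, plus the ratio P(B|A)/P(B) (lift); the function name is illustrative.

```python
def rule_measures(n_total, n_a, n_b, n_ab):
    """Support, confidence and lift of the rule A => B from raw counts."""
    support = n_ab / n_total          # P(A and B)
    confidence = n_ab / n_a           # P(B | A)
    lift = confidence / (n_b / n_total)  # P(B | A) / P(B)
    return support, confidence, lift


N = 5000
BASKETBALL = 3000
CEREAL = 3750
BOTH = 2000

# play basketball => eat cereal
print(rule_measures(N, BASKETBALL, CEREAL, BOTH))
# play basketball => not eat cereal
print(rule_measures(N, BASKETBALL, N - CEREAL, BASKETBALL - BOTH))
```

The first rule has a ratio below 1 (basketball players are less likely than average to eat cereal) and the second has a ratio above 1, which is why the second rule is the more informative one despite its lower support and confidence.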

-

Criticism to Support and Confidence (Cont.)
Example 2:
  X and Y: positively correlated
  X and Z: negatively related
  support and confidence of X=>Z dominates

X   1 1 1 1 0 0 0 0
Y   1 1 0 0 0 0 0 0
Z   0 1 1 1 1 1 1 1

Rule    Support   Confidence
X=>Y    25%       50%
X=>Z    37.50%    75%

We need a measure of dependent or correlated events:

  corr_{A,B} = \frac{P(A \wedge B)}{P(A) P(B)}

P(B|A)/P(B) is also called the lift of rule A => B

-

Other Interestingness Measures: Interest
Interest (correlation, lift):

  \frac{P(A \wedge B)}{P(A) P(B)}

  takes both P(A) and P(B) into consideration
  P(A ∧ B) = P(B) * P(A), if A and B are independent events
  A and B are negatively correlated if the value is less than 1, and positively correlated if it is greater than 1

X   1 1 1 1 0 0 0 0
Y   1 1 0 0 0 0 0 0
Z   0 1 1 1 1 1 1 1

Itemset   Support   Interest
X,Y       25%       2
X,Z       37.50%    0.9
Y,Z       12.50%    0.57
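
The support and interest values in the table can be reproduced from the three bit vectors. This is a minimal sketch that treats each column position as one transaction; the helper names are illustrative.

```python
X = [1, 1, 1, 1, 0, 0, 0, 0]
Y = [1, 1, 0, 0, 0, 0, 0, 0]
Z = [0, 1, 1, 1, 1, 1, 1, 1]


def prob(*cols):
    """Fraction of transactions in which all the given items occur."""
    n = len(cols[0])
    return sum(all(bits) for bits in zip(*cols)) / n


def interest(a, b):
    """P(A and B) / (P(A) * P(B)); equals 1 when A and B are independent."""
    return prob(a, b) / (prob(a) * prob(b))


for name, (a, b) in {"X,Y": (X, Y), "X,Z": (X, Z), "Y,Z": (Y, Z)}.items():
    print(name, f"support={prob(a, b):.2%}", f"interest={interest(a, b):.2f}")
```

X,Z has the higher support and confidence, yet its interest is below 1, while X,Y has an interest of 2: the measure separates the positively and negatively correlated pairs that support and confidence alone confuse.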

-

Chapter 6: Mining Association Rules in Large Databases
Association rule mining
Mining single-dimensional Boolean association rules from transactional databases
Mining multilevel association rules from transactional databases
Mining multidimensional association rules from transactional databases and data warehouse
From association mining to correlation analysis
Constraint-based association mining
Summary

-

Constraint-Based Mining
Interactive, exploratory mining of gigabytes of data?
  Could it be real? Making good use of constraints!
What kinds of constraints can be used in mining?
  Knowledge type constraint: classification, association, etc.
  Data constraint: SQL-like queries
    Find product pairs sold together in Vancouver in Dec. 98.
  Dimension/level constraints:
    in relevance to region, price, brand, customer category.
  Rule constraints:
    small sales (price < $10) triggers big sales (sum > $200).
  Interestingness constraints:
    strong rules (min_support ≥ 3%, min_confidence ≥ 60%).

-

Rule Constraints in Association Mining
Two kinds of rule constraints:
  Rule form constraints: meta-rule guided mining.
    P(x, y) ^ Q(x, w) ⇒ takes(x, database systems).
  Rule (content) constraints: constraint-based query optimization (Ng, et al., SIGMOD98).
    sum(LHS) < 100 ^ min(LHS) > 20 ^ count(LHS) > 3 ^ sum(RHS) > 1000
1-variable vs. 2-variable constraints (Lakshmanan, et al. SIGMOD99):
  1-var: A constraint confining only one side (L/R) of the rule, e.g., as shown above.
  2-var: A constraint confining both sides (L and R).
    sum(LHS) < min(RHS) ^ max(RHS) < 5 * sum(LHS)

-

Constraint-Based Association Query
Database: (1) trans (TID, Itemset), (2) itemInfo (Item, Type, Price)
A constrained association query (CAQ) is in the form of {(S1, S2) | C},
  where C is a set of constraints on S1, S2, including the frequency constraint
A classification of (single-variable) constraints:
  Class constraint: S ⊂ A. e.g. S ⊂ Item
  Domain constraint:
    S θ v, θ ∈ {=, ≠, <, ≤, >, ≥}. e.g. S.Price < 100
    v θ S, θ is ∈ or ∉. e.g. snacks ∉ S.Type
    V θ S, or S θ V, θ ∈ {⊆, ⊂, ⊄, =, ≠}. e.g. {snacks, sodas} ⊆ S.Type
  Aggregation constraint: agg(S) θ v, where agg is in {min, max, sum, count, avg}, and θ ∈ {=, ≠, <, ≤, >, ≥}.
    e.g. count(S1.Type) = 1, avg(S2.Price) < 100

-

Constrained Association Query Optimization Problem
Given a CAQ = {(S1, S2) | C}, the algorithm should be:
  sound: it only finds frequent sets that satisfy the given constraints C
  complete: all frequent sets that satisfy the given constraints C are found
A naïve solution:
  Apply Apriori to find all frequent sets, and then test them for constraint satisfaction one by one.
Our approach:
  Comprehensively analyze the properties of constraints and try to push them as deeply as possible inside the frequent set computation.

-

Anti-monotone and Monotone Constraints
A constraint Ca is anti-monotone iff for any pattern S not satisfying Ca, none of the super-patterns of S can satisfy Ca.
A constraint Cm is monotone iff for any pattern S satisfying Cm, every super-pattern of S also satisfies it.
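
Both definitions can be tested by brute force on a small item universe. The sketch below is only a sanity check over one finite example, not a proof; the item prices and the two sum constraints are made-up illustrations, and prices are assumed to be non-negative.

```python
from itertools import combinations


def powerset(items):
    """All non-empty subsets of items, as frozensets."""
    items = list(items)
    for r in range(1, len(items) + 1):
        for combo in combinations(items, r):
            yield frozenset(combo)


def is_anti_monotone(constraint, items):
    """No proper superset of a violating set may satisfy the constraint."""
    sets = list(powerset(items))
    return all(not constraint(t)
               for s in sets if not constraint(s)
               for t in sets if s < t)


def is_monotone(constraint, items):
    """Every proper superset of a satisfying set must satisfy the constraint."""
    sets = list(powerset(items))
    return all(constraint(t)
               for s in sets if constraint(s)
               for t in sets if s < t)


# Hypothetical prices, used only for this check.
price = {"a": 10, "b": 20, "c": 40}


def sum_at_most_30(s):
    return sum(price[i] for i in s) <= 30


def sum_at_least_30(s):
    return sum(price[i] for i in s) >= 30


print(is_anti_monotone(sum_at_most_30, price), is_monotone(sum_at_most_30, price))
print(is_anti_monotone(sum_at_least_30, price), is_monotone(sum_at_least_30, price))
```

On this universe the upper bound on the sum behaves as anti-monotone and the lower bound as monotone, which is the pattern the definitions describe.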

-

Succinct Constraint
A subset of items Is is a succinct set if it can be expressed as σp(I) for some selection predicate p, where σ is a selection operator.
SP ⊆ 2^I is a succinct power set if there is a fixed number of succinct sets I1, …, Ik ⊆ I, s.t. SP can be expressed in terms of the strict power sets of I1, …, Ik using union and minus.
A constraint Cs is succinct provided SATCs(I) is a succinct power set.

-

Convertible Constraint
Suppose all items in patterns are listed in a total order R.
A constraint C is convertible anti-monotone iff a pattern S satisfying the constraint implies that each suffix of S w.r.t. R also satisfies C.
A constraint C is convertible monotone iff a pattern S satisfying the constraint implies that each pattern of which S is a suffix w.r.t. R also satisfies C.

-

Relationships Among Categories of Constraints
[Figure: diagram relating succinctness, anti-monotonicity, monotonicity, convertible constraints, and inconvertible constraints]

-

Property of Constraints: Anti-Monotone
Anti-monotonicity: If a set S violates the constraint, any superset of S violates the constraint.
Examples:
  sum(S.Price) ≤ v is anti-monotone
  sum(S.Price) ≥ v is not anti-monotone
  sum(S.Price) = v is partly anti-monotone
Application:
  Push sum(S.price) ≤ 1000 deeply into iterative frequent set computation.
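
As an illustration of pushing an anti-monotone constraint into the level-wise computation, the sketch below checks an upper bound on sum(S.price) at the same point where Apriori checks support, so a violating set is never extended. It assumes the constraint is sum(S.price) ≤ 1000 with non-negative prices; the function name, item names, prices and transactions are invented for the example, and this is a simplified Apriori rather than the algorithm from the cited papers.

```python
from itertools import combinations


def constrained_apriori(transactions, price, min_count, max_sum):
    """Level-wise frequent-set search that also prunes on sum(price) <= max_sum."""
    def within_budget(itemset):
        return sum(price[i] for i in itemset) <= max_sum

    def count(itemset):
        return sum(1 for t in transactions if itemset <= t)

    items = sorted({i for t in transactions for i in t})
    level = [frozenset([i]) for i in items]
    level = [s for s in level if within_budget(s) and count(s) >= min_count]
    result = list(level)
    k = 2
    while level:
        prev = set(level)
        # Join step: unions of surviving (k-1)-sets that have exactly k items.
        candidates = {a | b for a in level for b in level if len(a | b) == k}
        # Prune step: all (k-1)-subsets must have survived, then apply the
        # cheap constraint test before the expensive support count.
        level = [c for c in candidates
                 if all(frozenset(sub) in prev for sub in combinations(c, k - 1))
                 and within_budget(c) and count(c) >= min_count]
        result.extend(level)
        k += 1
    return result


# Hypothetical data for the example.
price = {"tv": 800, "dvd": 300, "cable": 20, "battery": 5}
transactions = [
    frozenset({"tv", "dvd", "cable"}),
    frozenset({"tv", "cable", "battery"}),
    frozenset({"dvd", "cable", "battery"}),
    frozenset({"tv", "dvd", "cable", "battery"}),
]
found = constrained_apriori(transactions, price, min_count=2, max_sum=1000)
for s in sorted(found, key=lambda s: (len(s), sorted(s))):
    print(sorted(s))
```

Because {tv, dvd} already exceeds the bound, it is dropped at level 2 and no larger set containing both items is ever generated or counted, which is the saving over the generate-then-filter approach.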

-

Characterization of Anti-Monotonicity Constraints

Constraint                   Anti-monotone?
S θ v, θ ∈ {=, ≤, ≥}         yes
v ∈ S                        no
S ⊇ V                        no
S ⊆ V                        yes
S = V                        partly
min(S) ≤ v                   no
min(S) ≥ v                   yes
min(S) = v                   partly
max(S) ≤ v                   yes
max(S) ≥ v                   no
max(S) = v                   partly
count(S) ≤ v                 yes
count(S) ≥ v                 no
count(S) = v                 partly
sum(S) ≤ v                   yes
sum(S) ≥ v                   no
sum(S) = v                   partly
avg(S) θ v, θ ∈ {=, ≤, ≥}    convertible
(frequent constraint)        (yes)

-

Example of Convertible Constraints: avg(S) θ v
Let R be the value-descending order over the set of items
  E.g. I = {9, 8, 6, 4, 3, 1}
avg(S) ≥ v is convertible monotone w.r.t. R
  If S is a suffix of S1, avg(S1) ≥ avg(S)
    {8, 4, 3} is a suffix of {9, 8, 4, 3}
    avg({9, 8, 4, 3}) = 6 ≥ avg({8, 4, 3}) = 5
  If S satisfies avg(S) ≥ v, so does S1
    {8, 4, 3} satisfies the constraint avg(S) ≥ 4, so does {9, 8, 4, 3}
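
The suffix property behind this example can be checked exhaustively for the six items. The sketch assumes the constraint direction is avg(S) ≥ v and that patterns are written in value-descending order; helper names are illustrative.

```python
from itertools import combinations


def avg(values):
    return sum(values) / len(values)


def suffixes(pattern):
    """All non-empty suffixes of a pattern listed in the order R."""
    return [pattern[i:] for i in range(len(pattern))]


ITEMS_DESC = [9, 8, 6, 4, 3, 1]  # the order R: value descending

# The example from the slide.
s1 = [9, 8, 4, 3]
for s in suffixes(s1):
    print(s, avg(s))


def suffix_property_holds(items_desc):
    """Check avg(S1) >= avg(S) for every pattern S1 and every suffix S of S1."""
    for r in range(1, len(items_desc) + 1):
        # combinations() keeps the input order, so each pattern stays descending.
        for pattern in combinations(items_desc, r):
            if any(avg(pattern) < avg(suf) for suf in suffixes(pattern)):
                return False
    return True


print(suffix_property_holds(ITEMS_DESC))
```

Prepending a larger item can only raise the average, so once a suffix meets a lower bound on avg, every pattern that ends with it meets the bound as well.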

-

Property of Constraints: Succinctness
Succinctness:
  For any sets S1 and S2 satisfying C, S1 ∪ S2 satisfies C
  Given A1, the set of size-1 sets satisfying C, any set S satisfying C is based on A1, i.e., it contains a subset belonging to A1
Example:
  sum(S.Price) ≥ v is not succinct
  min(S.Price) ≤ v is succinct
Optimization:
  If C is succinct, then C is pre-counting prunable. The satisfaction of the constraint alone is not affected by the iterative support counting.
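
A sketch of what pre-counting prunable means for a constraint of the form min(S.Price) ≤ v (the direction of the inequality is an assumption here): the satisfying sets can be written down directly from A1, the items that satisfy the bound on their own, before any support counting. The prices and function names are hypothetical.

```python
from itertools import combinations


def subsets(items, min_size=0):
    """All subsets of items with at least min_size elements, as frozensets."""
    items = sorted(items)
    for r in range(min_size, len(items) + 1):
        for combo in combinations(items, r):
            yield frozenset(combo)


def generate_min_le(price, v):
    """Build every set with min(price) <= v directly from A1, with no testing."""
    a1 = {i for i, p in price.items() if p <= v}
    rest = set(price) - a1
    # A satisfying set is a non-empty part of A1 plus any part of the rest.
    return {core | extra for core in subsets(a1, 1) for extra in subsets(rest)}


def brute_force_min_le(price, v):
    """Reference answer: enumerate all non-empty sets and test each one."""
    return {s for s in subsets(price, 1) if min(price[i] for i in s) <= v}


# Hypothetical prices for the example.
price = {"a": 5, "b": 8, "c": 30, "d": 50}
direct = generate_min_le(price, 10)
print(len(direct), direct == brute_force_min_le(price, 10))
```

The direct construction and the generate-and-test enumeration agree, but only the second one has to look at sets such as {c, d} that can never satisfy the constraint.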

-

Characterization of Constraints by Succinctness

Constraint                   Succinct?
S θ v, θ ∈ {=, ≤, ≥}         yes
v ∈ S                        yes
S ⊇ V                        yes
S ⊆ V                        yes
S = V                        yes
min(S) ≤ v                   yes
min(S) ≥ v                   yes
min(S) = v                   yes
max(S) ≤ v                   yes
max(S) ≥ v                   yes
max(S) = v                   yes
count(S) ≤ v                 weakly
count(S) ≥ v                 weakly
count(S) = v                 weakly
sum(S) ≤ v                   no
sum(S) ≥ v                   no
sum(S) = v                   no
avg(S) θ v, θ ∈ {=, ≤, ≥}    no
(frequent constraint)        (no)

-

Why Is the Big Pie Still There?
More on constraint-based mining of associations
  Boolean vs. quantitative associations
  Association on discrete vs. continuous data
From association to correlation and causal structure analysis
  Association does not necessarily imply correlation or causal relationships
From intra-transaction association to inter-transaction associations
  E.g., break the barriers of transactions (Lu, et al. TOIS99)
From association analysis to classification and clustering analysis
  E.g., clustering association rules

-

Summary
Association rule mining
  Probably the most significant contribution from the database community in KDD
  A large number of papers have been published
Many interesting issues have been explored
An interesting research direction
  Association analysis in other types of data: spatial data, multimedia data, time series data, etc.
