1 Experimental Statistics - week 10 Chapter 11: Linear Regression and Correlation Note: Homework Due...


1

Experimental Statistics - week 10

Chapter 11:

Linear Regression and Correlation

Note:

Homework Due Thursday

2

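2-Factor with Repeated Measure -- Model

$$y_{ijk} = \mu + \alpha_i + \delta_{j(i)} + \beta_k + (\alpha\beta)_{ik} + \varepsilon_{ijk}$$

where
- $\alpha_i$ is the effect of type
- $\delta_{j(i)}$ is the effect of subject within type
- $\beta_k$ is the effect of time
- $(\alpha\beta)_{ik}$ is the type by time interaction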

NOTES: type and time are both fixed effects in the current example

- we say “subject is nested within type”

- Expected Mean Squares given on page 1032

3

The GLM Procedure
Dependent Variable: conc

Source            DF   Sum of Squares   Mean Square   F Value   Pr > F
Model             17      57720.50000    3395.32353    110.87   <.0001
Error             32        980.00000      30.62500
Corrected Total   49      58700.50000

R-Square   Coeff Var   Root MSE   conc Mean
0.983305    6.978545   5.533986    79.30000

Source          DF   Type III SS   Mean Square   F Value   Pr > F
type             1      40.50000      40.50000      1.32   0.2587
subject(type)    8    3920.00000     490.00000     16.00   <.0001
time             4   34288.00000    8572.00000    279.90   <.0001
type*time        4   19472.00000    4868.00000    158.96   <.0001

2-Factor Repeated Measures – ANOVA Output

4

2-factor Repeated Measures

Source          Type III Expected Mean Square
type            Var(Error) + 5 Var(subject(type)) + Q(type,type*time)
subject(type)   Var(Error) + 5 Var(subject(type))
time            Var(Error) + Q(time,type*time)
type*time       Var(Error) + Q(type*time)

The GLM Procedure
Tests of Hypotheses for Mixed Model Analysis of Variance
Dependent Variable: conc

Source          DF   Type III SS   Mean Square   F Value   Pr > F
* type           1     40.500000     40.500000      0.08   0.7810
Error            8   3920.000000    490.000000
Error: MS(subject(type))
* This test assumes one or more other fixed effects are zero.

Source              DF   Type III SS   Mean Square   F Value   Pr > F
subject(type)        8   3920.000000    490.000000     16.00   <.0001
* time               4         34288   8572.000000    279.90   <.0001
type*time            4         19472   4868.000000    158.96   <.0001
Error: MS(Error)    32    980.000000     30.625000
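The choice of error term for each F test follows from the expected mean squares: type shares the 5 Var(subject(type)) term with subject(type), so it is tested against MS(subject(type)), while the other sources are tested against MS(Error). The following is a minimal Python sketch of that bookkeeping, using only the mean squares from the output above; the dictionary names are illustrative and are not part of the SAS output.

```python
# Mean squares copied from the Type III table in the GLM output.
ms = {
    "type": 40.5,
    "subject(type)": 490.0,
    "time": 8572.0,
    "type*time": 4868.0,
    "error": 30.625,
}

# Denominator for each F ratio, read off the expected mean squares:
# type is tested against subject(type); everything else against error.
denominator = {
    "type": "subject(type)",
    "subject(type)": "error",
    "time": "error",
    "type*time": "error",
}

f_ratios = {src: ms[src] / ms[den] for src, den in denominator.items()}

for src, f in f_ratios.items():
    print(f"{src:15s} F = {f:7.2f}   (error term: MS({denominator[src]}))")
```

With these error terms the F value for type drops from the 1.32 of the default table to 0.08, which matches the mixed-model test in the output.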

5

NOTE: Since time x type interaction is significant, and since these are fixed effects we DO NOT test main effects

– we compare cell means (using MSE)

$$\mathrm{LSD} = t_{.025}(32)\sqrt{\frac{2\,\mathrm{MSE}}{5}} = 2.042\sqrt{\frac{2(30.6250)}{5}} = 7.147$$

Cell Means

type \ time    .5     1     2     3     4
C              37    63    85   140    76
T              55    81   134    80    42
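The LSD and one set of cell-mean comparisons (C versus T at each time) can be checked numerically. This is a minimal Python sketch, assuming 5 subjects per cell and the tabled t value 2.042 used on the slide; the variable names are illustrative.

```python
import math

mse = 30.625       # MS(Error) from the ANOVA table
n_per_cell = 5     # observations behind each cell mean (assumed: 5 subjects per type)
t_crit = 2.042     # tabled t value used on the slide for alpha/2 = .025

# Least significant difference between two cell means
lsd = t_crit * math.sqrt(2 * mse / n_per_cell)

times = [0.5, 1, 2, 3, 4]
cell_means = {
    "C": [37, 63, 85, 140, 76],
    "T": [55, 81, 134, 80, 42],
}

# Compare the two types at each time point
differs = {}
for t, c_mean, t_mean in zip(times, cell_means["C"], cell_means["T"]):
    diff = abs(c_mean - t_mean)
    differs[t] = diff > lsd
    print(f"time {t}: |C - T| = {diff}, exceeds LSD: {differs[t]}")

print(f"LSD = {lsd:.3f}")
```

Other pairs of cell means (for example, two time points within the same type) can be compared against the same LSD in the same way.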

6

The write-up related to the SAS output should be something like the following. Note that even though we get a significant variance component due to subject (within type), I did not estimate the variance component itself. (I did not give the estimation formula for this particular variance component.)

Note also that since there is a significant interaction between the fixed effects type and time, we do not test the main effects.

7

Dealing with Normality/Equal Variance Issues

Normalizing Transformations:
- log
- square root
- Box-Cox transformations

$$w = \frac{x^{\lambda} - 1}{\lambda}, \quad \lambda \neq 0$$

Note: the normalizing transformations sometimes also produce variance stabilization
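As an illustration of the Box-Cox family, here is a small Python sketch. The function name `box_cox` is hypothetical, and the use of the natural log when lambda equals 0 is the usual convention for this family, assumed here rather than taken from the slide.

```python
import math

def box_cox(x, lam):
    """Box-Cox transform of a single positive value x with parameter lam."""
    if x <= 0:
        raise ValueError("Box-Cox requires x > 0")
    if lam == 0:
        # Limiting case as lam -> 0 (assumed convention): the log transform
        return math.log(x)
    return (x ** lam - 1) / lam

# lam = 0.5 behaves like a (shifted, rescaled) square root transform
print(box_cox(4, 0.5))
# lam = 0 is the log transform
print(box_cox(math.e, 0))
```

In this parameterization the log and square root transformations listed above are, up to shifting and scaling, members of the same family.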

8

Nonparametric “ANOVA”

Mann-Whitney U – for comparing 2 samples

Kruskal-Wallis Test – for comparing >2 samples

Friedman’s Test – nonparametric alternative to randomized complete block/ 1-factor repeated measures design

Histogram

• displays distribution of 1 variable

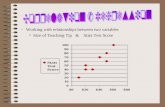

Scatter Diagram (Scatterplot)

• displays joint distribution of 2 variables

• plots data as “points” in the “x-y plane.”

10

11

Association Between Two Variables

– indicates that knowing one helps in predicting the other

Linear Association
– our interest in this course
– points “swarm” about a line

Correlation Analysis
– measures the strength of linear association

13

14

(association)

Regression Analysis

We want to predict the dependent variable

- response variable

using the independent variable

- explanatory variable

- predictor variable

Dependent Variable (Y)

Independent Variable (X)

More than one independent variable – Multiple Regression

16

11.7 Correlation Analysis

Correlation Coefficient

- measures linear association

-1: perfect negative relationship
 0: no linear relationship
+1: perfect positive relationship

Denoted $r$ or $r_{yx}$

Positive Correlation
- high values of one variable are associated with high values of the other

Examples:
- father’s height, son’s height
- daily grade, final grade

r = 0.93 for plot on the left

[Scatterplot: horizontal axis 1 to 8, vertical axis 0 to 3]

19

EXAMS I and II

Negative Correlation
- high with low, low with high

Examples:
- car age, selling price
- days absent, final grade

r = -0.89 for plot shown here

[Scatterplot: horizontal axis 1 to 7, vertical axis 0 to 4]

21

Zero Correlation
- no linear relationship

Examples:
- height, IQ score

r = 0.0 for plot here

[Scatterplot: horizontal axis 1 to 7, vertical axis 0 to 5]

23

24

-.75, 0, .5, .99

25

26

Calculating the Correlation Coefficient

$$r_{yx} = \frac{\sum_{i=1}^{n}(x_i - \bar{x})(y_i - \bar{y})}{\sqrt{\sum_{i=1}^{n}(x_i - \bar{x})^2 \, \sum_{i=1}^{n}(y_i - \bar{y})^2}}$$

27

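Notation:

$$S_{xx} = \sum_{i=1}^{n}(x_i - \bar{x})^2 = \sum_{i=1}^{n} x_i^2 - \Big(\sum_{i=1}^{n} x_i\Big)^2 / n$$

$$S_{yy} = \sum_{i=1}^{n}(y_i - \bar{y})^2 = \sum_{i=1}^{n} y_i^2 - \Big(\sum_{i=1}^{n} y_i\Big)^2 / n$$

$$S_{xy} = \sum_{i=1}^{n}(x_i - \bar{x})(y_i - \bar{y}) = \sum_{i=1}^{n} x_i y_i - \Big(\sum_{i=1}^{n} x_i\Big)\Big(\sum_{i=1}^{n} y_i\Big) / n$$

So --

$$r_{yx} = \frac{S_{xy}}{\sqrt{S_{xx}\, S_{yy}}}$$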

28

The data below are the study times and the test scores on an exam given over the material covered during the two weeks.

Study Time (hours)   Exam Score
(X)                  (Y)
10                   92
15                   81
12                   84
20                   74
 8                   85
16                   80
14                   84
22                   80

Find r

$$\sum_{i=1}^{n} x_i = 117 \qquad \sum_{i=1}^{n} x_i^2 = 1{,}869$$

$$\sum_{i=1}^{n} y_i = 660 \qquad \sum_{i=1}^{n} y_i^2 = 54{,}638$$

$$\sum_{i=1}^{n} x_i y_i = 9{,}519$$
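The sums above are all that is needed to compute r with the computational formulas for S_xx, S_yy and S_xy. A minimal Python sketch of the arithmetic follows; the variable names are illustrative, and the slides themselves use SAS for this step.

```python
import math

# Study time (hours) and exam score from the table
x = [10, 15, 12, 20, 8, 16, 14, 22]
y = [92, 81, 84, 74, 85, 80, 84, 80]
n = len(x)

# Raw sums used by the computational formulas
sum_x = sum(x)
sum_x2 = sum(xi ** 2 for xi in x)
sum_y = sum(y)
sum_y2 = sum(yi ** 2 for yi in y)
sum_xy = sum(xi * yi for xi, yi in zip(x, y))

# Corrected sums of squares and cross products
s_xx = sum_x2 - sum_x ** 2 / n
s_yy = sum_y2 - sum_y ** 2 / n
s_xy = sum_xy - sum_x * sum_y / n

r = s_xy / math.sqrt(s_xx * s_yy)
print(f"S_xx = {s_xx}, S_yy = {s_yy}, S_xy = {s_xy}")
print(f"r = {r:.5f}")
```

The negative S_xy already shows the direction of the association before r is computed: longer study times go with lower scores in this sample.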

29

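DATA one;
  INPUT time score;
  DATALINES;
10 92
15 81
12 84
20 74
8 85
16 80
14 84
22 80
;
/* Pearson correlation between score and time */
PROC CORR;
  VAR score time;
  TITLE 'Study Time by Score';
RUN;
/* PLOT vertical*horizontal: score on the vertical axis, as in the output */
PROC PLOT;
  PLOT score*time;
RUN;
PROC GPLOT;
  PLOT score*time;
RUN;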

30

The CORR Procedure
2 Variables: score time

Simple Statistics
Variable   N       Mean   Std Dev         Sum    Minimum    Maximum
score      8   82.50000   5.18239   660.00000   74.00000   92.00000
time       8   14.62500   4.74906   117.00000    8.00000   22.00000

Pearson Correlation Coefficients, N = 8
Prob > |r| under H0: Rho=0

            score       time
score     1.00000   -0.77490
                      0.0239
time     -0.77490    1.00000
           0.0239

Study Time by Score

31

Plot of score*time. Legend: A = 1 obs, B = 2 obs, etc.

[Character plot: score on the vertical axis (74 to 92) against time on the horizontal axis (8 to 22), one point per student]

32

33

Testing Statistical Significance of Correlation Coefficient

$$H_0: \rho = 0 \qquad H_a: \rho \neq 0$$

Test Statistic

$$t = r\sqrt{\frac{n-2}{1-r^2}}$$

Rejection Region

$$t > t_{\alpha/2} \ \text{ or } \ t < -t_{\alpha/2}, \qquad df = n - 2$$

34

Correlation Between Study Time and Score

H0: There is No Correlation Between Study Time and Score
Ha: There is a Correlation Between Study Time and Score

Rejection Region

Test Statistic

Conclusion

P-value
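One way to work through the blanks above is sketched below in Python. It assumes a two-sided test at alpha = .05 (the slide does not state the level) and uses the standard t-table value 2.447 for 6 degrees of freedom; r and n come from the PROC CORR output. The variable names are illustrative.

```python
import math

r = -0.77490   # sample correlation from PROC CORR
n = 8          # number of students

# Test statistic for H0: rho = 0
t_stat = r * math.sqrt((n - 2) / (1 - r ** 2))

df = n - 2
t_crit = 2.447  # t_{.025} with 6 df from a t table (assumes alpha = .05)

# Rejection region: t > t_crit or t < -t_crit
reject_h0 = abs(t_stat) > t_crit

print(f"t = {t_stat:.2f} with {df} df, reject H0: {reject_h0}")
```

Under that assumed level the conclusion is to reject H0, which agrees with the P-value of 0.0239 that PROC CORR reports under each correlation.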

35

36

• Correlation measures the strength of the linear relationship between two variables.

• Correlation requires that both variables be quantitative.

• r does not change when we change the units of measurement of x, y, or both.

• Correlation makes no distinction between explanatory and response variables.

• The correlation coefficient is not resistant to outliers.

Properties of Correlations
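Two of these properties, invariance to units and symmetry in x and y, can be checked directly on the study-time data. This is a small Python sketch; `pearson_r` is a hypothetical helper written from the definition of r, not a library function.

```python
import math

def pearson_r(a, b):
    """Pearson correlation of two equal-length lists, from the definition."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    s_ab = sum((ai - mean_a) * (bi - mean_b) for ai, bi in zip(a, b))
    s_aa = sum((ai - mean_a) ** 2 for ai in a)
    s_bb = sum((bi - mean_b) ** 2 for bi in b)
    return s_ab / math.sqrt(s_aa * s_bb)

hours = [10, 15, 12, 20, 8, 16, 14, 22]
score = [92, 81, 84, 74, 85, 80, 84, 80]

# Change of units: hours -> minutes
minutes = [60 * h for h in hours]

r_hours = pearson_r(hours, score)
r_minutes = pearson_r(minutes, score)   # same r after changing units
r_swapped = pearson_r(score, hours)     # same r with the roles of x and y swapped

print(r_hours, r_minutes, r_swapped)
```

The three values agree up to floating-point rounding, because rescaling x multiplies the numerator and denominator of r by the same factor, and the formula treats x and y symmetrically.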

37

The CORR Procedure

Pearson Correlation Coefficients, N = 20
Prob > |r| under H0: Rho=0

              math    reading
math       1.00000    0.87207
                       <.0001
reading    0.87207    1.00000
            <.0001

Math vs Reading Scores

38

The CORR Procedure

Pearson Correlation Coefficients, N = 20
Prob > |r| under H0: Rho=0

              math    reading
math       1.00000    0.27198
                       0.2460
reading    0.27198    1.00000
            0.2460

Math vs Reading Scores with Outlier

39

Pearson Correlation Coefficients, N = 14
Prob > |r| under H0: Rho=0

              math    reading
math       1.00000    -0.1973
                       0.5194
reading    -0.1973    1.00000
            0.5194

40

Pearson Correlation Coefficients, N = 14
Prob > |r| under H0: Rho=0

              math    reading
math       1.00000    0.53211
                       0.0502
reading    0.53211    1.00000
            0.0502

41

[Scatterplot: Divorce Rate (per 1000) and % in prison on Drug Offenses]

IMPORTANT NOTE:

Correlation DOES NOT Imply Causation

• strong association between 2 variables is not enough to justify conclusions about cause and effect

• best way to get evidence that X causes Y is through a controlled experiment

43

11.1-5 Regression Analysis

44

45

Goal of Regression Analysis:

Predict Y from knowledge of X

For data such as the Father-Son data, it seems reasonable to assume a model of the form

$$\mu_{Y|x} = \beta_0 + \beta_1 x$$

i.e. the conditional means of Y given x follow a straight line

46

Alternative mathematical expression for the “regression model”:

$$y = \beta_0 + \beta_1 x + \varepsilon$$

where the $\varepsilon$'s (errors) are distributed $N(0, \sigma^2)$

- i.e. all the errors have the same variance $\sigma^2$

In practice, we want to estimate this line from the data.

Which line is “closest” to the points?

Criterion for measuring “closeness” --- the sum of squared vertical distances from the points to the line

Regression (Least Squares) Line --- the line for which this sum-of-squared distance is a minimum

55

Notation

Theoretical Model

$$y = \beta_0 + \beta_1 x + \varepsilon$$

Regression line

$$\hat{y} = \hat{\beta}_0 + \hat{\beta}_1 x$$

$\hat{\beta}_0$ and $\hat{\beta}_1$ are called "least-squares estimates"

56

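Data

$$(x_1, y_1),\ (x_2, y_2),\ \ldots,\ (x_n, y_n)$$

$$\hat{y}_i = \hat{\beta}_0 + \hat{\beta}_1 x_i$$

we write

$$y_i = \hat{\beta}_0 + \hat{\beta}_1 x_i + e_i$$

where $e_i$ is called the $i$th residual, i.e. $e_i = y_i - \hat{y}_i$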

57

$\hat{\beta}_0$ and $\hat{\beta}_1$ are chosen to minimize

$$\sum_{i=1}^{n}(y_i - \hat{y}_i)^2 = \sum_{i=1}^{n}\left[y_i - (\hat{\beta}_0 + \hat{\beta}_1 x_i)\right]^2$$

NOTE:

- this is a calculus problem
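For readers who want the calculus spelled out, here is a sketch of the standard derivation. The symbols $b_0$, $b_1$ and $Q$ are introduced here for illustration; $S_{xy}$ and $S_{xx}$ are the sums of cross products and squares defined in the correlation notation.

```latex
\begin{align*}
Q(b_0, b_1) &= \sum_{i=1}^{n} \left[ y_i - (b_0 + b_1 x_i) \right]^2 \\[4pt]
\frac{\partial Q}{\partial b_0} &= -2 \sum_{i=1}^{n} \left( y_i - b_0 - b_1 x_i \right) = 0
  \quad\Longrightarrow\quad b_0 = \bar{y} - b_1 \bar{x} \\[4pt]
\frac{\partial Q}{\partial b_1} &= -2 \sum_{i=1}^{n} x_i \left( y_i - b_0 - b_1 x_i \right) = 0 \\[4pt]
\text{Substituting } b_0: \quad
0 &= \sum_{i=1}^{n} x_i (y_i - \bar{y}) - b_1 \sum_{i=1}^{n} x_i (x_i - \bar{x})
   = S_{xy} - b_1 S_{xx} \\[4pt]
\hat{\beta}_1 &= \frac{S_{xy}}{S_{xx}}, \qquad
\hat{\beta}_0 = \bar{y} - \hat{\beta}_1 \bar{x}
\end{align*}
```

The substitution step uses $\sum x_i (y_i - \bar{y}) = \sum (x_i - \bar{x})(y_i - \bar{y})$ and $\sum x_i (x_i - \bar{x}) = \sum (x_i - \bar{x})^2$, which hold because deviations from a mean sum to zero.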

58

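Least Squares Estimates

$$\hat{\beta}_1 = \frac{S_{xy}}{S_{xx}} = \frac{\sum_{i=1}^{n}(x_i - \bar{x})(y_i - \bar{y})}{\sum_{i=1}^{n}(x_i - \bar{x})^2}$$

$$\hat{\beta}_0 = \bar{y} - \hat{\beta}_1 \bar{x}$$

Computation Formula

$$\hat{\beta}_1 = \frac{\sum_{i=1}^{n} x_i y_i - \Big(\sum_{i=1}^{n} x_i\Big)\Big(\sum_{i=1}^{n} y_i\Big) / n}{\sum_{i=1}^{n} x_i^2 - \Big(\sum_{i=1}^{n} x_i\Big)^2 / n}$$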

59

The data below are the study times and the test scores on an exam given over the material covered during the two weeks.

Study Time (hours)   Exam Score
(X)                  (Y)
10                   92
15                   81
12                   84
20                   74
 8                   85
16                   80
14                   84
22                   80

Find the equation of the regression line for predicting exam score from study time.

$$\sum_{i=1}^{n} x_i = 117 \qquad \sum_{i=1}^{n} x_i^2 = 1{,}869$$

$$\sum_{i=1}^{n} y_i = 660 \qquad \sum_{i=1}^{n} y_i^2 = 54{,}638$$

$$\sum_{i=1}^{n} x_i y_i = 9{,}519$$
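The regression line follows from these sums and the computation formula for the slope. A minimal Python sketch of the arithmetic is given here; the names `b0`, `b1` and `predict` are illustrative.

```python
# Sums taken from the slide
n = 8
sum_x, sum_x2 = 117, 1869
sum_y = 660
sum_xy = 9519

# Corrected sum of cross products and sum of squares for x
s_xy = sum_xy - sum_x * sum_y / n
s_xx = sum_x2 - sum_x ** 2 / n

# Least-squares estimates
b1 = s_xy / s_xx                       # slope
b0 = sum_y / n - b1 * (sum_x / n)      # intercept: ybar - b1 * xbar

def predict(hours):
    """Predicted exam score for a given study time in hours."""
    return b0 + b1 * hours

print(f"score-hat = {b0:.4f} + ({b1:.4f}) * time")
```

The fitted line passes through the point of means, so `predict(14.625)` returns the mean score of 82.5 up to rounding.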

60

The GLM Procedure
Dependent Variable: score

Source            DF   Sum of Squares   Mean Square   F Value   Pr > F
Model              1      112.8883610   112.8883610      9.02   0.0239
Error              6       75.1116390    12.5186065
Corrected Total    7      188.0000000

R-Square   Coeff Var   Root MSE   score Mean
0.600470    4.288684   3.538164     82.50000

Source   DF     Type I SS   Mean Square   F Value   Pr > F
time      1   112.8883610   112.8883610      9.02   0.0239

Source   DF   Type III SS   Mean Square   F Value   Pr > F
time      1   112.8883610   112.8883610      9.02   0.0239

Parameter      Estimate   Standard Error   t Value   Pr > |t|
Intercept   94.86698337       4.30408629     22.04     <.0001
time        -0.84560570       0.28159265     -3.00     0.0239

PROC GLM;
  MODEL score=time;
RUN;
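Most entries in the PROC GLM output can be rebuilt by hand from S_xx, S_yy and S_xy. The Python sketch below does so, assuming the usual simple-linear-regression identities (model SS = S_xy^2 / S_xx, error df = n - 2); the variable names are illustrative and are not SAS names.

```python
import math

# Sums from the study-time data
n = 8
sum_x, sum_x2 = 117, 1869
sum_y, sum_y2 = 660, 54638
sum_xy = 9519

s_xx = sum_x2 - sum_x ** 2 / n
s_yy = sum_y2 - sum_y ** 2 / n      # corrected total sum of squares
s_xy = sum_xy - sum_x * sum_y / n

b1 = s_xy / s_xx                    # slope estimate

ss_model = s_xy ** 2 / s_xx         # sum of squares explained by time
ss_error = s_yy - ss_model
ms_error = ss_error / (n - 2)
root_mse = math.sqrt(ms_error)

f_value = ss_model / ms_error       # model has 1 df, so MS(model) = SS(model)
r_square = ss_model / s_yy

se_b1 = root_mse / math.sqrt(s_xx)  # standard error of the slope
t_b1 = b1 / se_b1

print(f"SS model = {ss_model:.4f}, SS error = {ss_error:.4f}")
print(f"F = {f_value:.2f}, R-square = {r_square:.4f}")
print(f"slope SE = {se_b1:.5f}, t = {t_b1:.2f}")
```

Note that R-Square equals the square of the correlation from PROC CORR, and the t value for the slope equals the t statistic for testing that the correlation is zero, which is why both analyses report the same P-value of 0.0239.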