Capacity of the Arbitrarily Varying Channel Under List Decoding

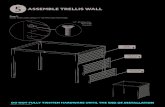

Channel Coding (III)

Channel Decoding

ECED4504

Topics today

Viterbi decoding

– trellis diagram

– surviving path

– ending the decoding

Soft and hard decoding

State diagram of a convolutional code

Each new block of k bits causes a transition into a new state. Hence there are $2^k$ branches leaving each state. Assuming the encoder starts from the zero state, the encoded word for any sequence of input bits can thus be obtained. For instance, below for u=(1 1 1 0 1) the encoded word v=(1 1, 1 0, 0 1, 0 1, 1 1, 1 0, 1 1, 1 1) is produced:

Encoder state diagram for an (n,k,L)=(2,1,2) coder

Verify that you have the same result!
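The verification can be sketched in a few lines of Python. The encoder diagram did not survive in this transcript, so the tap vectors below are an assumption: g0 = 1011 and g1 = 1111 (a memory-3 pair) were picked only because, with three flushing zeros appended, they reproduce the eight output pairs quoted above. The coder drawn in the figure may use different taps. The names `conv_encode` and `ASSUMED_TAPS` are hypothetical.

```python
def conv_encode(bits, taps, memory):
    """Rate-1/n feedforward convolutional encoder starting from the zero state."""
    reg = [0] * memory                      # reg[0] holds the most recent past input
    out = []
    for u in list(bits) + [0] * memory:     # append zeros to flush the register
        window = [u] + reg                  # current input followed by past inputs
        out.append(tuple(sum(t * w for t, w in zip(g, window)) % 2 for g in taps))
        reg = window[:-1]                   # shift the register by one position
    return out

# Assumed taps (not given in the transcript): g0 = 1011, g1 = 1111
ASSUMED_TAPS = [(1, 0, 1, 1), (1, 1, 1, 1)]

v = conv_encode([1, 1, 1, 0, 1], ASSUMED_TAPS, memory=3)
print(v)
```

Each tuple in `v` is one output pair, so the printed list can be compared pair by pair with the encoded word given on the slide.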

[Figure labels: input state, output state]

Decoding convolutional codes

Maximum likelihood decoding of convolutional codes means finding the path in the code trellis that was most likely transmitted.

Therefore maximum likelihood decoding is based on calculating the Hamming distance for each branch forming the encoded word.

Assume that the information symbols applied into an AWGN channel are equally likely and independent.

Let's denote by $\mathbf{x}_m$ the encoded bits of the m:th path (no errors; in the sender; unknown to the receiver) and by $\mathbf{y}$ the received bits:

$$\mathbf{x}_m = (x_{m0}, x_{m1}, x_{m2}, \ldots, x_{mj}, \ldots)$$

$$\mathbf{y} = (y_0, y_1, \ldots, y_j, \ldots)$$

The probability to receive the sequence $\mathbf{y}$ provided $\mathbf{x}_m$ was transmitted is then

$$p(\mathbf{y}, \mathbf{x}_m) = \prod_{j \ge 0} p(y_j \mid x_{mj})$$

The most likely path through the trellis will maximize this metric. Also, the following metric is maximized (prob.<1), which can alleviate computations:

$$\ln p(\mathbf{y}, \mathbf{x}_m) = \sum_{j \ge 0} \ln p(y_j \mid x_{mj})$$

[Figure: decoder block. Input $\mathbf{y}$: received bits; $\mathbf{x}_m$: non-erroneous bits; output: bit decisions]

Example of exhaustive maximal likelihood detection

Assume a 3-bit message is to be transmitted. To clear the encoder, 2 zero-bits are appended after the message. Thus 5 bits are inserted into the encoder and 10 bits are produced (each input bit causes a state transition and emits 2 output bits). Assume the channel error probability is p=0.1. After the communication, 10,01,10,11,00 is received. What is the decoder's decision, i.e. what was most likely the transmitted sequence (from the sender)?

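$$p(\mathbf{y}, \mathbf{x}_m) = \prod_{j \ge 0} p(y_j \mid x_{mj})$$

$$\ln p(\mathbf{y}, \mathbf{x}_m) = \sum_{j \ge 0} \ln p(y_j \mid x_{mj})$$

[Figure: trellis annotated with the number of errors and correct bits per branch, and with the weight for the probability to receive a bit in error; the channel error probability is p=0.1]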

Note also the Hamming distances!

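correct: 1+1+2+2+2 = 8; 8 × (−0.11) = −0.88

false: 1+1+0+0+0 = 2; 2 × (−2.30) = −4.6

total path metric: −5.48

(The per-bit weights are ln(0.9) ≈ −0.11 for a correctly received bit and ln(0.1) ≈ −2.30 for a bit received in error.)

This is the largest metric; verify that you get the same result!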
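The exhaustive search can be sketched as follows. The encoder figure is missing from this transcript, so the taps are an assumption: outputs u⊕m1⊕m2 and u⊕m2 were chosen because they give the per-branch counts listed above (8 correct bits, 2 errors) for the best path. The names `encode`, `path_metric` and `ASSUMED_TAPS` are hypothetical.

```python
from itertools import product
from math import log

P_ERR = 0.1                                   # channel error probability from the slide
RECEIVED = [(1, 0), (0, 1), (1, 0), (1, 1), (0, 0)]
ASSUMED_TAPS = [(1, 1, 1), (1, 0, 1)]         # assumption: not given in the transcript

def encode(msg, taps=ASSUMED_TAPS):
    """Encode a 3-bit message followed by two zero bits that clear the encoder."""
    m1 = m2 = 0
    out = []
    for u in list(msg) + [0, 0]:
        window = (u, m1, m2)
        out.append(tuple(sum(t * w for t, w in zip(g, window)) % 2 for g in taps))
        m1, m2 = u, m1
    return out

def path_metric(codeword, received, p=P_ERR):
    """Log-likelihood of the received bits given the codeword, plus the error count."""
    errors = sum(c != r for cb, rb in zip(codeword, received) for c, r in zip(cb, rb))
    correct = 2 * len(received) - errors
    return correct * log(1 - p) + errors * log(p), errors

# Try all 2^3 messages and keep the one with the largest metric
results = {msg: path_metric(encode(msg), RECEIVED) for msg in product((0, 1), repeat=3)}
best = max(results, key=lambda m: results[m][0])
print(best, results[best])
```

With unrounded logarithms the best metric comes out near −5.45; the slide's −5.48 follows from rounding ln(0.9) to −0.11 before multiplying.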

Soft and hard decoding

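Regardless of whether the channel outputs hard or soft decisions, the decoding rule remains the same: maximize the probability

$$\ln p(\mathbf{y}, \mathbf{x}_m) = \sum_{j \ge 0} \ln p(y_j \mid x_{mj})$$

However, in soft decoding the decision region energies must be accounted for, and hence the Euclidean metric $d_E$, rather than the Hamming metric $d_{\text{free}}$, is used.

[Equation relating $d_E$ to $d_{\text{free}}$, $E_b$ and $R_C$; its exact form is not recoverable from this transcript]

[Figure: decision regions; the transition for Pr[3|0] is indicated by the arrow]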

Decision regions

Decoding can be realized by the soft-decoding or the hard-decoding principle.

For soft decoding, the reliability (measured by bit energy) of the decision region must be known.

Example: decoding a BPSK signal. The matched filter output is a continuous number. In AWGN the matched filter output is Gaussian.

For soft decoding, several decision region partitions are used.

[Figure: transition probability Pr[3|0], i.e. the probability that a transmitted '0' falls into region no. 3]
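A transition probability such as Pr[3|0] can be computed by integrating the Gaussian matched-filter output over the decision region. The sketch below is illustrative only: the four regions numbered 0 to 3, the thresholds, the noise standard deviation and the mapping of '0' to amplitude −1 are all assumptions, since the figure with the actual partition is not part of this transcript.

```python
from math import erfc, sqrt, inf

def gaussian_cdf(t, mean, sigma):
    """P(Y <= t) for Y ~ N(mean, sigma^2); infinite edges are handled explicitly."""
    if t == inf:
        return 1.0
    if t == -inf:
        return 0.0
    return 0.5 * erfc(-(t - mean) / (sigma * sqrt(2)))

def region_probs(mean, sigma, thresholds):
    """Probability that the matched filter output lands in each decision region."""
    edges = [-inf] + sorted(thresholds) + [inf]
    return [gaussian_cdf(hi, mean, sigma) - gaussian_cdf(lo, mean, sigma)
            for lo, hi in zip(edges, edges[1:])]

# All values below are assumptions made for illustration
THRESHOLDS = [-0.5, 0.0, 0.5]       # three thresholds give regions 0, 1, 2, 3
SIGMA = 0.6                         # noise standard deviation at the filter output
AMPLITUDE = {0: -1.0, 1: +1.0}      # BPSK mapping of the transmitted bit

transition = {bit: region_probs(a, SIGMA, THRESHOLDS) for bit, a in AMPLITUDE.items()}
print("Pr[3|0] =", transition[0][3])
```

Here `transition[b][r]` plays the role of Pr[r|b]. A hard decision is the special case with a single threshold at zero.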

The Viterbi algorithm

The exhaustive maximum likelihood method must search all the paths in the code trellis, which branches $2^k$ ways at every stage for an (n,k,L) code.

With the Viterbi algorithm the search can be reduced to comparing

$$2^k \cdot 2^L$$

surviving paths, where $2^L$ is the number of nodes and $2^k$ is the number of branches coming to each node (see the next slide!).

The problem of optimum decoding is to find the minimum distance path from the initial state back to the initial state (below from S0 to S0). The path metric is the sum of all the branch metrics

$$\ln p(\mathbf{y}, \mathbf{x}_m) = \sum_{j \ge 0} \ln p(y_j \mid x_{mj})$$

that is maximized by the correct path. Here $\mathbf{y}$ is the channel output sequence at the RX and $\mathbf{x}_m$ is the TX encoder output sequence for the m:th path.

The Viterbi algorithm gets its efficiency by concentrating on the survivor paths of the trellis.

The survivor path

Assume for simplicity a convolutional code with k=1, so that up to $2^k = 2$ branches can enter each node in the trellis diagram.

Assume the optimal path passes S. Metric comparison is done by adding the metric of S into S1 and S2. At the survivor path the accumulated metric is naturally smaller (otherwise it could not be the optimum path).

For this reason the non-surviving path can be discarded, so not all path alternatives need to be considered.

Note that in principle the whole transmitted sequence must be received before a decision. However, in practice storing the states for an input length of 5L is quite adequate.

[Figure: trellis section; $2^k$ branches enter each node, $2^L$ nodes]

Example of using the Viterbi algorithm

Assume the received sequence is

$$\mathbf{y} = 01\ 10\ 11\ 11\ 01\ 00\ 01$$

and the (n,k,L)=(2,1,2) encoder shown below. Determine the Viterbi decoded output sequence!

(Note that for this encoder the code rate is 1/2 and the memory depth L = 2)

[Figure: encoder and its states]

The maximum likelihood path

The decoded ML code sequence is 11 10 10 11 00 00 00, whose Hamming distance to the received sequence is 4, and the respective decoded sequence is 1 1 0 0 0 0 0 (why?). Note that this is the minimum distance path. (Black circles denote the deleted branches; dashed lines: '1' was applied)

[Figure: decoding trellis with the branch Hamming distances in parentheses. Annotations: first depth with two entries to the node; the smaller accumulated metric is selected; after register length L+1=3 the branch pattern begins to repeat]
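The example can be checked with a minimal hard-decision Viterbi sketch. The encoder figure is not part of this transcript, so the taps are an assumption: outputs u⊕m2 and u⊕m1⊕m2 were chosen because they map the decoded bits 1 1 0 0 0 0 0 to the code sequence 11 10 10 11 00 00 00 stated above. The decoder also assumes that the trellis starts and ends in the zero state. The names `branch`, `viterbi` and `ASSUMED_TAPS` are hypothetical.

```python
RECEIVED = [(0, 1), (1, 0), (1, 1), (1, 1), (0, 1), (0, 0), (0, 1)]
ASSUMED_TAPS = [(1, 0, 1), (1, 1, 1)]   # assumption: not given in the transcript

def branch(state, u, taps=ASSUMED_TAPS):
    """Return (output pair, next state) for input bit u from state (m1, m2)."""
    window = (u,) + state
    out = tuple(sum(t * w for t, w in zip(g, window)) % 2 for g in taps)
    return out, (u, state[0])

def viterbi(received):
    """Hard-decision Viterbi decoding; the trellis starts and ends in state (0, 0)."""
    survivors = {(0, 0): (0, [])}        # state -> (accumulated distance, input bits)
    for r in received:
        nxt = {}
        for state, (dist, bits) in survivors.items():
            for u in (0, 1):
                out, new_state = branch(state, u)
                cand = (dist + sum(a != b for a, b in zip(out, r)), bits + [u])
                # Keep only the smaller accumulated metric entering each node
                if new_state not in nxt or cand[0] < nxt[new_state][0]:
                    nxt[new_state] = cand
        survivors = nxt
    return survivors[(0, 0)]

distance, decoded = viterbi(RECEIVED)
print(decoded, distance)
```

Storing the full input history with every survivor keeps the sketch short; a practical decoder would store back-pointers and trace back instead.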

How to end the decoding?

In the previous example it was assumed that the register was finally filled with zeros, thus finding the minimum distance path.

In practice, with long code words, zeroing requires feeding a long sequence of zeros to the end of the message bits: this wastes channel capacity and introduces delay.

To avoid this, path memory truncation is applied:

– Trace all the surviving paths to the depth where they merge

– The figure on the right shows a common point at a memory depth J

– J is a random variable whose magnitude shown in the figure (5L) has been experimentally tested for negligible error rate increase

– Note that this also introduces a delay of 5L!

[Figure label: J, 5L stages of the trellis]

Error rate of convolutional codes: Weight spectrum and error-event probability

Error rate depends on

– channel SNR

– input sequence length; the number of errors is scaled to the sequence length

– code trellis topology

These determine which path in the trellis was followed while decoding.

An error event happens when an erroneous path is followed by the decoder.

All the paths producing errors must have a distance that is at least that of the path having distance $d_{\text{free}}$, i.e. there exists an upper bound for following all the erroneous paths (error-event probability):

$$p_e \le \sum_{d=d_{\text{free}}}^{\infty} a_d \, p_2(d)$$

Here $a_d$ is the number of paths (the weight spectrum) at the Hamming distance d, and $p_2(d)$ is the probability of the path at the Hamming distance d.
